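The Hive version I installed earlier was too old and caused many problems while I was learning, so today I installed a newer one. I ran into a few problems when starting it after installation and am recording them here.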

Step 1: Install hive-1.2

Use WinSCP to upload apache-hive-1.2.1-bin.tar.gz to the /usr/hive/ directory.

[root@spark1 hive]# chmod u+x apache-hive-1.2.1-bin.tar.gz #add execute permission (tar does not need it to extract)

[root@spark1 hive]# tar -zxvf apache-hive-1.2.1-bin.tar.gz #extract

[root@spark1 hive]# mv apache-hive-1.2.1-bin hive-1.2

[root@spark1 hive]# vi /etc/profile #configure environment variables

export HIVE_HOME=/usr/hive/hive-1.2

export PATH=$PATH:$HIVE_HOME/bin

[root@spark1 hive]# source /etc/profile #make the environment variables take effect

[root@spark1 hive]# which hive #check the install path
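Since /etc/profile is sourced on every login, it can help to append to PATH only when the entry is missing. The following is a minimal sketch of that idea; `append_path` is a hypothetical helper name of my own, not something the shell or Hive provides.

```bash
# Hypothetical helper: add a directory to PATH only if it is missing,
# so sourcing the file repeatedly does not keep growing PATH.
append_path() {
  case ":$PATH:" in
    *":$1:"*) ;;                # already present, leave PATH unchanged
    *) PATH="$PATH:$1" ;;       # append, keeping the existing entries
  esac
}

export HIVE_HOME=/usr/hive/hive-1.2   # install location used in this post
append_path "$HIVE_HOME/bin"
export PATH
```

Appending rather than assigning matters here: setting PATH to only `$HIVE_HOME/bin` would hide system commands such as `ls` and `vi`.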

Step 2: Install MySQL

[root@spark1 hive]# yum install -y mysql-server #download and install

[root@spark1 hive]# service mysqld start #start mysql

[root@spark1 hive]# chkconfig mysqld on #start automatically at boot

[root@spark1 hive]# yum install -y mysql-connector-java #install the mysql connector with yum

[root@spark1 hive]# cp /usr/share/java/mysql-connector-java-5.1.17.jar /usr/hive/hive-1.2/lib #copy it into Hive's lib directory

Step 3: Create the Hive metastore database in MySQL and grant privileges to the hive user

mysql> create database if not exists hive_metadata;

mysql> grant all privileges on hive_metadata.* to 'hive'@'%' identified by 'hive';

mysql> grant all privileges on hive_metadata.* to 'hive'@'localhost' identified by 'hive';

mysql> grant all privileges on hive_metadata.* to 'hive'@'spark1' identified by 'hive';

mysql> flush privileges;

mysql> use hive_metadata;

mysql> exit
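The three grant statements above differ only in the host part, so they can be generated instead of typed by hand. This is a rough sketch under that assumption; `gen_grants` is a hypothetical helper name, and it only prints SQL text without connecting to MySQL.

```bash
# Hypothetical helper: print one GRANT per host, then FLUSH PRIVILEGES.
# Usage: gen_grants DB USER PASSWORD HOST...
gen_grants() {
  db=$1; user=$2; pass=$3
  shift 3
  for host in "$@"; do
    printf "grant all privileges on %s.* to '%s'@'%s' identified by '%s';\n" \
      "$db" "$user" "$host" "$pass"
  done
  printf 'flush privileges;\n'
}

# Same database, user, password, and hosts as in the statements above.
gen_grants hive_metadata hive hive '%' localhost spark1
```

The output could then be piped into the mysql client, for example `gen_grants hive_metadata hive hive '%' localhost spark1 | mysql -u root -p`. Note that `identified by` inside `grant` is accepted by MySQL 5.x, which is what this post installs, but was removed in MySQL 8.0.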

Step 4: Configuration files

[root@spark1 hive]# cd /usr/hive/hive-1.2/conf #go to the conf directory

[root@spark1 conf]# mv hive-default.xml.template hive-site.xml #rename

[root@spark1 conf]# vi hive-site.xml

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://spark1:3306/hive_metadata?createDatabaseIfNotExist=true</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hive</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>hive</value>

<description>password to use against metastore database</description>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

<description>location of default database for the warehouse</description>

</property>
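Because hive-site.xml here is a renamed copy of the template, it is long, and it is easy to lose track of whether an edit actually landed. Below is a small sketch for printing the value currently set for one property. `get_prop` is a hypothetical helper name; it assumes `<name>` and `<value>` each sit on their own line, as they do in this file, and it is not a general XML parser.

```bash
# Hypothetical helper: print the <value> that follows a given <name>.
# Assumes <name> and <value> each sit on their own line, as in hive-site.xml.
# Usage: get_prop FILE PROPERTY_NAME
get_prop() {
  awk -v want="$2" '
    /<name>/ {
      n = $0
      gsub(/.*<name>|<\/name>.*/, "", n)    # strip the tags, keep the name
      found = (n == want)
    }
    found && /<value>/ {
      v = $0
      gsub(/.*<value>|<\/value>.*/, "", v)  # strip the tags, keep the value
      print v
      exit
    }
  ' "$1"
}

# Example (path from this post):
# get_prop /usr/hive/hive-1.2/conf/hive-site.xml javax.jdo.option.ConnectionURL
```

It prints only the first match, so if a property appears twice in the file the second occurrence goes unnoticed.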

[root@spark1 conf]# mv hive-env.sh.template hive-env.sh #rename

[root@spark1 ~]# vi /usr/hive/hive-1.2/bin/hive-config.sh #add the java, hive, and hadoop paths

export JAVA_HOME=/usr/java/jdk1.8

export HIVE_HOME=/usr/hive/hive-1.2

export HADOOP_HOME=/usr/hadoop/hadoop-2.6.0

[hadoop@spark1 hive]$ cd hive-1.2

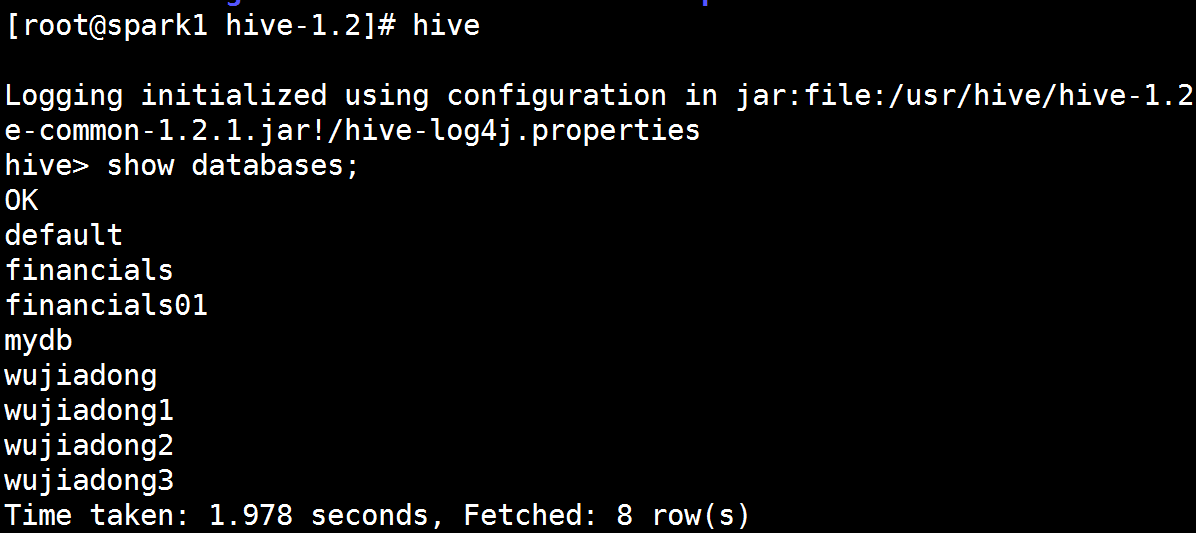

[hadoop@spark1 hive-1.2]$ hive #start hive and begin using it

Test hive

hive> hive;

Startup error 1 with hive-1.2

Exception in thread "main" java.lang.RuntimeException: java.lang.IllegalArgumentException: java.net.URISyntaxException: Relative path in absolute URI: ${system:java.io.tmpdir%7D/$%7Bsystem:user.name%7D

Solution

In hive-site.xml, change the values of the three properties that contain system:java.io.tmpdir to $HIVE_HOME/iotmp, that is:

/usr/hive/hive-1.2/iotmp

<property>

<name>hive.exec.local.scratchdir</name>

<value>/usr/hive/hive-1.2/iotmp</value>

<description>Local scratch space for Hive jobs</description>

</property>

<property>

<name>hive.downloaded.resources.dir</name>

<value>/usr/hive/hive-1.2/iotmp</value>

<description>Temporary local directory for added resources in the remote file system.</description>

</property>

<property>

<name>hive.querylog.location</name>

<value>/usr/hive/hive-1.2/iotmp</value>

<description>Location of Hive run time structured log file</description>

</property>
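The same change can be made without opening the editor. The sketch below rewrites every `<value>` that mentions system:java.io.tmpdir to a fixed directory, which may touch more properties than the ones listed above. `set_tmpdir_values` is a hypothetical helper name, and it assumes each `<value>...</value>` is on a single line.

```bash
# Hypothetical helper: rewrite every <value> that mentions
# system:java.io.tmpdir so that it holds a fixed local directory.
# Usage: set_tmpdir_values FILE DIR   (DIR must not contain '|' or '&')
set_tmpdir_values() {
  # create the directory up front (harmless if it already exists)
  mkdir -p "$2" || return 1
  # -i.bak edits in place and keeps the original as FILE.bak
  sed -i.bak "s|<value>[^<]*system:java\.io\.tmpdir[^<]*</value>|<value>$2</value>|" "$1"
}

# Example (paths from this post):
# set_tmpdir_values /usr/hive/hive-1.2/conf/hive-site.xml /usr/hive/hive-1.2/iotmp
```

The whole value is replaced, not just the `${system:java.io.tmpdir}` part, because the error message also complains about `${system:user.name}` in the same value.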

After fixing error 1, error 2 appeared

[ERROR] Terminal initialization failed; falling back to unsupported

The cause is an old version of jline in the Hadoop directory:

/usr/hadoop/hadoop-2.6.0/share/hadoop/yarn/lib/jline-0.9.94.jar

Solution:

Copy the newer jline JAR from the Hive directory /usr/hive/hive-1.2/lib into Hadoop (run this from that lib directory):

cp jline-2.12.jar /usr/hadoop/hadoop-2.6.0/share/hadoop/yarn/lib
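After the copy, both jline versions sit in the same Hadoop directory. The sketch below goes one step further than this post and renames the old jar before copying the new one. That extra step is my assumption, not something I tested as part of the fix above, and `swap_jline` is a hypothetical helper name.

```bash
# Hypothetical helper: set aside old jline 0.x jars in a Hadoop lib
# directory, then copy in the jline 2.x jar that ships with Hive.
# Usage: swap_jline HIVE_LIB_DIR HADOOP_LIB_DIR
swap_jline() {
  for old in "$2"/jline-0.*.jar; do
    # the glob stays literal when nothing matches, so test for existence
    if [ -e "$old" ]; then
      mv "$old" "$old.bak"      # renamed, not deleted, so it can be restored
    fi
  done
  cp "$1"/jline-2.*.jar "$2"/
}

# Example (paths from this post):
# swap_jline /usr/hive/hive-1.2/lib /usr/hadoop/hadoop-2.6.0/share/hadoop/yarn/lib
```

Renaming instead of deleting keeps the old jar available in case something else in Hadoop turns out to need it.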

The result is shown in the figure below.