1. Choose a topic or website that interests you. (No two students may pick the same one.)

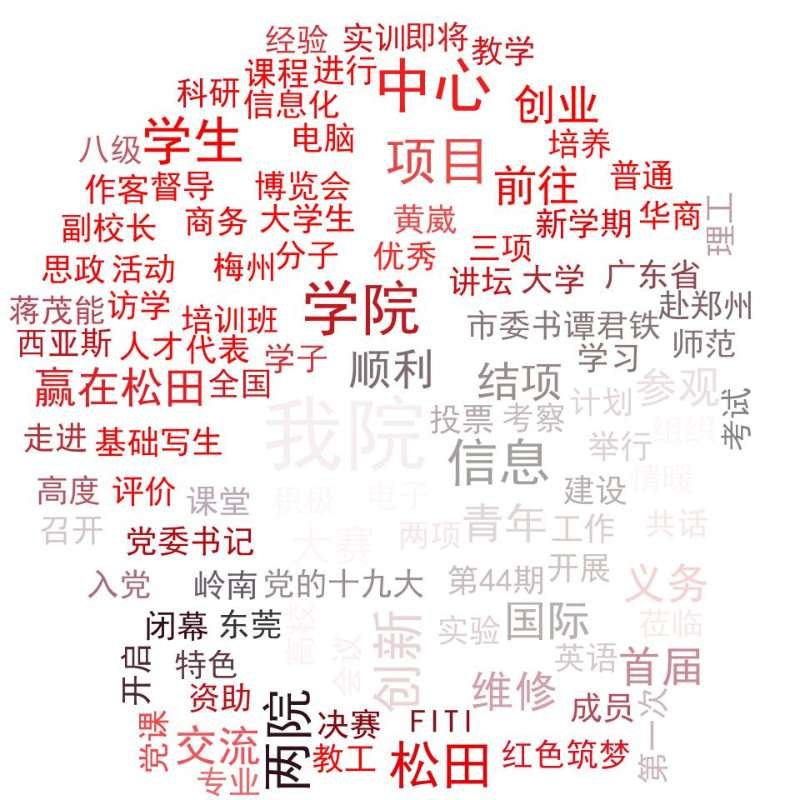

I chose to crawl the campus website of Songtian School (松田学校), a school near where I live.

2. Write a crawler in Python that scrapes data on the chosen topic from the web.

```python
# -*- coding: utf-8 -*-
import requests
from bs4 import BeautifulSoup as bs


def gettext(url):
    """Fetch the news-list page and return the text of every link in it."""
    header = {
        'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
                      '(KHTML, like Gecko) Chrome/66.0.3359.117 Safari/537.36'
    }
    resp = requests.get(url, headers=header, timeout=10)
    resp.raise_for_status()  # fail loudly on 403/404/5xx instead of parsing an error page
    soup = bs(resp.content, 'html.parser')
    # Each news title is an <a> inside <div class="newList black01">
    return [a.text.strip() for a in soup.select('div.newList.black01 a')]


if __name__ == '__main__':
    url = "http://www.sontan.net/newsCenter.do"
    info = gettext(url)
    # Open the file once instead of once per title; 'a' appends,
    # so rerunning the script adds the same titles again
    with open('i.txt', 'a', encoding='utf-8') as f:
        for title in info:
            print(title)
            f.write(title + ' ')
```
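Because the crawler opens `i.txt` in append mode, every rerun writes the same titles again, which inflates their counts in the word cloud. Below is a minimal sketch of appending only titles that are not already saved. It assumes one title per line in `i.txt` (the original script writes them space-separated), and `save_new_titles` is a hypothetical helper name; it uses only the standard library.

```python
from pathlib import Path


def save_new_titles(titles, path="i.txt"):
    """Append titles that are not yet in `path` (one per line); return how many were added."""
    file = Path(path)
    # Titles already on disk from earlier runs
    seen = set(file.read_text(encoding="utf-8").splitlines()) if file.exists() else set()
    new_titles = []
    for title in titles:
        title = title.strip()
        if title and title not in seen:  # skip blanks and duplicates (within this batch too)
            seen.add(title)
            new_titles.append(title)
    if new_titles:
        with file.open("a", encoding="utf-8") as f:
            f.write("\n".join(new_titles) + "\n")
    return len(new_titles)
```

It could replace the write loop as `save_new_titles(gettext(url))`. Start from an empty `i.txt`, since a file written by the original space-separated loop is read back as a single line and will not be deduplicated against.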

3. Run a text analysis on the crawled data and generate a word cloud.

```python
import jieba
import matplotlib.pyplot as plt
from wordcloud import WordCloud

# Read the titles saved by the crawler
with open('i.txt', 'r', encoding='utf-8') as f:
    info = f.read()

# Chinese has no spaces between words, so segment with jieba and join with spaces
text = ' '.join(jieba.lcut(info))

# A font with Chinese glyphs is required, otherwise the words render as empty boxes
wc = WordCloud(font_path=r'C:\Windows\Fonts\STZHONGS.TTF',
               background_color='white', max_words=50)
wc.generate_from_text(text)

plt.imshow(wc, interpolation='bilinear')
plt.axis('off')
plt.show()
wc.to_file('dream.jpg')
```
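The `' '.join(jieba.lcut(info))` step matters because WordCloud finds words with a regular expression over runs of word characters, and Chinese characters count as word characters. The sketch below assumes the pattern `\w[\w']*`, which is what recent WordCloud versions use by default when `min_word_length <= 1` (check your installed version); `tokenize` is a hypothetical helper that only imitates that splitting with the standard `re` module. The segmented sample was split by hand, not by jieba.

```python
import re

# Assumption: mirrors the default token pattern of recent WordCloud versions
TOKEN_PATTERN = r"\w[\w']*"


def tokenize(text):
    """Split text into runs of word characters, as a regex-based word cloud would."""
    return re.findall(TOKEN_PATTERN, text)


unsegmented = "学校召开教学工作会议"
segmented = "学校 召开 教学 工作 会议"  # hand-segmented, space-joined like the script's output

print(tokenize(unsegmented))  # ['学校召开教学工作会议']  (the whole title is one "word")
print(tokenize(segmented))    # ['学校', '召开', '教学', '工作', '会议']
```

Without segmentation, each title would become a single "word" and the cloud would just show whole headlines.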

4. Explain and interpret the results of the text analysis.
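A word cloud only shows relative size, so a frequency table makes it easier to say which terms dominate the school's news titles. This is a minimal sketch that assumes the tokens already come from `jieba.lcut`. `top_words` and the small `STOPWORDS` set are hypothetical, illustrative names, and a real stop-word list would need to be much longer.

```python
from collections import Counter

# Hypothetical, deliberately tiny stop-word list; extend it for real data
STOPWORDS = {"的", "了", "和", "在", "是"}


def top_words(tokens, n=10, stopwords=STOPWORDS, min_len=2):
    """Return the n most common tokens, skipping stop words, blanks and short tokens."""
    counts = Counter(
        tok for tok in (t.strip() for t in tokens)
        if len(tok) >= min_len and tok not in stopwords
    )
    return counts.most_common(n)
```

For example, `top_words(jieba.lcut(open('i.txt', encoding='utf-8').read()))` would list the most frequent multi-character terms, which can then be discussed alongside the word cloud image.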

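5. Write a complete blog post describing the implementation above, the problems encountered and how they were solved, and the data-analysis approach and conclusions.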

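I ran into many problems at first. While writing the functions I realized that my fundamentals were weak, and I knew very little even about importing libraries. Fortunately, with help from my classmates, I completed the task. I found crawling data quite interesting, although when I tried other websites I often got no data back, probably because those sites restricted access. I will look into that problem later.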
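When a crawler "gets nothing back", two quick checks are whether the site's robots.txt disallows the path and what HTTP status the server returned. The sketch below uses the standard-library `urllib.robotparser`; `robots_allows`, `explain_status` and the hint texts are hypothetical helpers written for illustration, and the hints are common causes, not a diagnosis of any particular site.

```python
from urllib import robotparser


def robots_allows(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    rp = robotparser.RobotFileParser()
    rp.parse(robots_txt.splitlines())
    return rp.can_fetch(user_agent, url)


# Common status codes behind an empty crawl result
STATUS_HINTS = {
    403: "Forbidden: the server refuses the request (often bot or header filtering)",
    404: "Not Found: the URL is wrong or the page has moved",
    429: "Too Many Requests: slow down and add delays between requests",
    503: "Service Unavailable: the server is overloaded or temporarily blocking",
}


def explain_status(code):
    """Map an HTTP status code to a likely reason the crawl returned no data."""
    if 200 <= code < 300:
        # The page loaded, so the problem is in parsing, not access
        return "OK: check the CSS selector, or whether the content is rendered by JavaScript"
    return STATUS_HINTS.get(code, f"HTTP {code}: see the response body for details")
```

In practice the robots.txt text would be fetched from the site first, for example with `requests.get(...).text`, or by calling `RobotFileParser.set_url()` and `read()` directly; the status code is `resp.status_code` on a `requests` response.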

6. Finally, submit all of the crawled data together with the crawler and data-analysis source code.
