1. A closer look at kafka-connect startup

To begin with, let's take a look at how Kafka Connect starts.

1.1 start command

    # background running mode
    cd /home/lenmom/workspace/software/confluent-community-5.1.0-2.11/ && ./bin/connect-distributed -daemon ./etc/schema-registry/connect-avro-distributed.properties

    # or console running mode
    cd /home/lenmom/workspace/software/confluent-community-5.1.0-2.11/ && ./bin/connect-distributed ./etc/schema-registry/connect-avro-distributed.properties

The start command is connect-distributed, so let's take a look at the contents of that file:

    #!/bin/sh
    # Licensed to the Apache Software Foundation (ASF) under one or more
    # contributor license agreements. See the NOTICE file distributed with
    # this work for additional information regarding copyright ownership.
    # The ASF licenses this file to You under the Apache License, Version 2.0
    # (the "License"); you may not use this file except in compliance with
    # the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.

    if [ $# -lt 1 ]; then
      echo "USAGE: $0 [-daemon] connect-distributed.properties"
      exit 1
    fi

    base_dir=$(dirname $0)

    ###
    ### Classpath additions for Confluent Platform releases (LSB-style layout)
    ###
    #cd -P deals with symlink from /bin to /usr/bin
    java_base_dir=$( cd -P "$base_dir/../share/java" && pwd )

    # confluent-common: required by kafka-serde-tools
    # kafka-serde-tools (e.g. Avro serializer): bundled with confluent-schema-registry package
    for library in "kafka" "confluent-common" "kafka-serde-tools" "monitoring-interceptors"; do
      dir="$java_base_dir/$library"
      if [ -d "$dir" ]; then
        classpath_prefix="$CLASSPATH:"
        if [ "x$CLASSPATH" = "x" ]; then
          classpath_prefix=""
        fi
        CLASSPATH="$classpath_prefix$dir/*"
      fi
    done

    if [ "x$KAFKA_LOG4J_OPTS" = "x" ]; then
      LOG4J_CONFIG_NORMAL_INSTALL="/etc/kafka/connect-log4j.properties"
      LOG4J_CONFIG_ZIP_INSTALL="$base_dir/../etc/kafka/connect-log4j.properties"
      if [ -e "$LOG4J_CONFIG_NORMAL_INSTALL" ]; then # Normal install layout
        KAFKA_LOG4J_OPTS="-Dlog4j.configuration=file:${LOG4J_CONFIG_NORMAL_INSTALL}"
      elif [ -e "${LOG4J_CONFIG_ZIP_INSTALL}" ]; then # Simple zip file layout
        KAFKA_LOG4J_OPTS="-Dlog4j.configuration=file:${LOG4J_CONFIG_ZIP_INSTALL}"
      else # Fallback to normal default
        KAFKA_LOG4J_OPTS="-Dlog4j.configuration=file:$base_dir/../config/connect-log4j.properties"
      fi
    fi
    export KAFKA_LOG4J_OPTS

    if [ "x$KAFKA_HEAP_OPTS" = "x" ]; then
      export KAFKA_HEAP_OPTS="-Xms256M -Xmx2G"
    fi

    EXTRA_ARGS=${EXTRA_ARGS-'-name connectDistributed'}

    COMMAND=$1
    case $COMMAND in
      -daemon)
        EXTRA_ARGS="-daemon "$EXTRA_ARGS
        shift
        ;;
      *)
        ;;
    esac

    export CLASSPATH
    exec $(dirname $0)/kafka-run-class $EXTRA_ARGS org.apache.kafka.connect.cli.ConnectDistributed "$@"

To start the Kafka Connect process, this script calls another file, kafka-run-class, so let's go to kafka-run-class.

1.2 kafka-run-class

    . . . .

    # Launch mode
    if [ "x$DAEMON_MODE" = "xtrue" ]; then
      nohup $JAVA $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@" > "$CONSOLE_OUTPUT_FILE" 2>&1 < /dev/null &
    else
      exec $JAVA $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@"
    fi

At the end of this file, the Connect process is launched by invoking the java command. This is the place where we can add the options for remote debugging.
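As an aside, the java command line above already expands $KAFKA_OPTS, so one lighter-weight possibility is to pass the debug agent flag through that variable without editing any script. The following is only a sketch under that assumption; the function name append_jdwp is made up for this example.

```shell
#!/bin/sh
# Sketch: add the JDWP agent flag to KAFKA_OPTS, which the java command line
# in kafka-run-class expands. No script is modified by this approach.
JDWP_FLAG="-agentlib:jdwp=transport=dt_socket,address=8888,server=y,suspend=y"

# append_jdwp is a hypothetical helper name used only in this example.
append_jdwp() {
  # $1: existing KAFKA_OPTS value (may be empty)
  if [ -z "$1" ]; then
    printf '%s' "$JDWP_FLAG"
  else
    printf '%s %s' "$1" "$JDWP_FLAG"
  fi
}

KAFKA_OPTS="$(append_jdwp "$KAFKA_OPTS")"
export KAFKA_OPTS
echo "KAFKA_OPTS=$KAFKA_OPTS"

# Then launch as usual from the same shell, e.g.:
#   ./bin/connect-distributed ./etc/schema-registry/connect-avro-distributed.properties
```

The rest of this post takes the other route and modifies a copy of the script, which keeps the debug settings in one file instead of in the calling shell's environment.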

2. copy kafka-run-class and rename the copy to kafka-connect-debugging

cp bin/kafka-run-class bin/kafka-connect-debugging

Modify the java invocation in kafka-connect-debugging to add Java remote debugging support.

vim bin/kafka-connect-debugging

The modified invocation looks as follows:

    . . .

    export JPDA_OPTS="-agentlib:jdwp=transport=dt_socket,address=8888,server=y,suspend=y"
    #export JPDA_OPTS=""

    # Launch mode
    if [ "x$DAEMON_MODE" = "xtrue" ]; then
      nohup $JAVA $JPDA_OPTS $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@" > "$CONSOLE_OUTPUT_FILE" 2>&1 < /dev/null &
    else
      exec $JAVA $JPDA_OPTS $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@"
    fi

The added JPDA_OPTS option starts the kafka-connect JVM as a debug server (server=y) listening on port 8888 (address=8888), and pauses the JVM at startup (suspend=y) until a debugging client connects.
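To make the individual parts of that option easier to see, here is a minimal sketch that assembles the same string from two settings. DEBUG_PORT, DEBUG_SUSPEND and build_jpda_opts are hypothetical names invented for this example; they are not defined by Kafka or Confluent.

```shell
#!/bin/sh
# DEBUG_PORT and DEBUG_SUSPEND are hypothetical variable names for this sketch;
# they are not settings that Kafka or Confluent define.
build_jpda_opts() {
  port="${DEBUG_PORT:-8888}"      # TCP port the JVM listens on for a debugger
  suspend="${DEBUG_SUSPEND:-y}"   # y: wait for a debugger before running main
  # transport=dt_socket: talk to the debugger over a TCP socket
  # server=y:            the JVM listens and the debugger connects to it
  printf '%s' "-agentlib:jdwp=transport=dt_socket,address=${port},server=y,suspend=${suspend}"
}

JPDA_OPTS="$(build_jpda_opts)"
export JPDA_OPTS
echo "$JPDA_OPTS"
```

With suspend=n the process would start normally and a debugger could attach later, which may be preferable when the startup code itself is not what you want to step through. Note also that on JDK 9 and later, address=8888 listens on localhost only, so attaching from another machine needs address=*:8888.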

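If we don't want to run in debug mode, just uncomment the line

    #export JPDA_OPTS=""

that is, remove the # symbol at the start of this line. Because this assignment comes after the first one, it resets JPDA_OPTS to an empty value and the JVM starts without the debug agent.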
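Editing the script each time can get tedious, so one possible variation is to switch the agent on and off with an environment variable. This is only a sketch: CONNECT_DEBUG and select_jpda_opts are hypothetical names chosen for this example, not something the Kafka scripts know about.

```shell
#!/bin/sh
# CONNECT_DEBUG is a hypothetical variable name chosen for this sketch.
# select_jpda_opts prints the JDWP flag when its argument is "true",
# and prints nothing otherwise.
select_jpda_opts() {
  if [ "x$1" = "xtrue" ]; then
    printf '%s' "-agentlib:jdwp=transport=dt_socket,address=8888,server=y,suspend=y"
  fi
}

JPDA_OPTS="$(select_jpda_opts "$CONNECT_DEBUG")"
export JPDA_OPTS
echo "JPDA_OPTS=$JPDA_OPTS"
```

Placed in kafka-connect-debugging instead of the two export lines, this would let `CONNECT_DEBUG=true bin/connect-distributed ...` start in debug mode while a plain invocation starts normally.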

3. edit connect-distributed file

    cd /home/lenmom/workspace/software/confluent-community-5.1.0-2.11/
    vim ./bin/connect-distributed

Replace the last line, changing it from

exec $(dirname $0)/kafka-run-class $EXTRA_ARGS org.apache.kafka.connect.cli.ConnectDistributed "$@"

to

exec $(dirname $0)/kafka-connect-debugging $EXTRA_ARGS org.apache.kafka.connect.cli.ConnectDistributed "$@"
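The same replacement can also be scripted instead of done by hand in vim. The sketch below uses sed with an in-place backup; the function name use_debug_launcher is made up for this example, and it assumes the script refers to kafka-run-class only on that last line, as in the listing shown earlier.

```shell
#!/bin/sh
# Sketch: point the launcher script at the debugging copy of kafka-run-class.
# use_debug_launcher is a hypothetical helper name used only in this example.
use_debug_launcher() {
  # $1: path to the connect-distributed script
  # -i.bak edits the file in place and keeps the original as <file>.bak
  sed -i.bak 's#/kafka-run-class #/kafka-connect-debugging #' "$1"
}

# Usage, from the Confluent installation directory:
#   use_debug_launcher ./bin/connect-distributed
```

To go back to the normal launcher, restore the .bak file that sed leaves next to the script.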

4. debugging

4.1 start kafka-connect

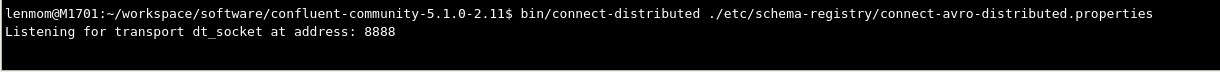

    lenmom@M1701:~/workspace/software/confluent-community-5.1.0-2.11$ bin/connect-distributed ./etc/schema-registry/connect-avro-distributed.properties
    Listening for transport dt_socket at address: 8888

The process is now paused and listening on port 8888 until a debugging client attaches.
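If the process output is captured to a file (for example when launching from another script), the "Listening for transport" line can serve as a readiness signal. The sketch below polls a file for that line; the function names and the output file path are assumptions made for this example.

```shell
#!/bin/sh
# Sketch: wait until the JDWP "listening" banner appears in a captured
# output file. Both function names are made up for this example.
debug_listener_ready() {
  # $1: file holding the process output, $2: expected debug port
  grep -q "Listening for transport dt_socket at address: $2" "$1" 2>/dev/null
}

wait_for_debug_listener() {
  # $1: output file, $2: port, $3: maximum number of seconds to wait
  waited=0
  while :; do
    if debug_listener_ready "$1" "$2"; then
      return 0
    fi
    [ "$waited" -ge "$3" ] && return 1
    sleep 1
    waited=$((waited + 1))
  done
}

# Usage (the output path is an assumption):
#   bin/connect-distributed ./etc/schema-registry/connect-avro-distributed.properties > /tmp/connect.out 2>&1 &
#   wait_for_debug_listener /tmp/connect.out 8888 30 && echo "ready to attach"
```

Checking the output rather than probing the port avoids opening a stray connection to the debug socket before the real debugger attaches.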

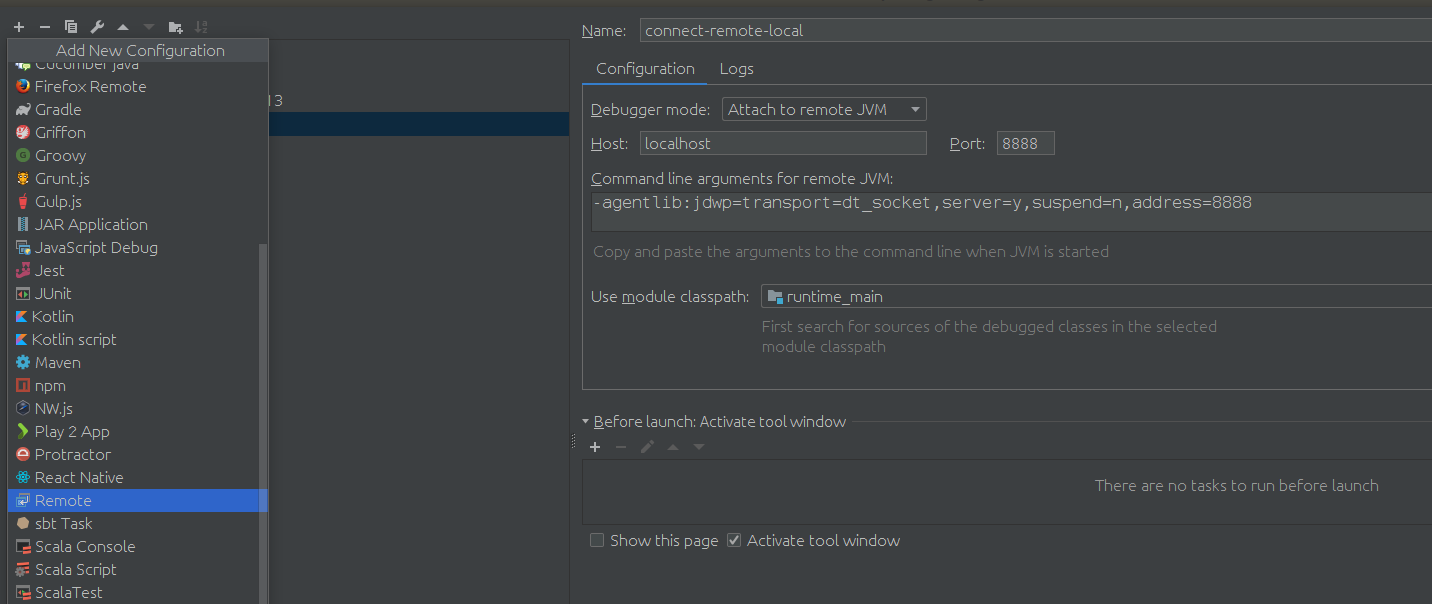

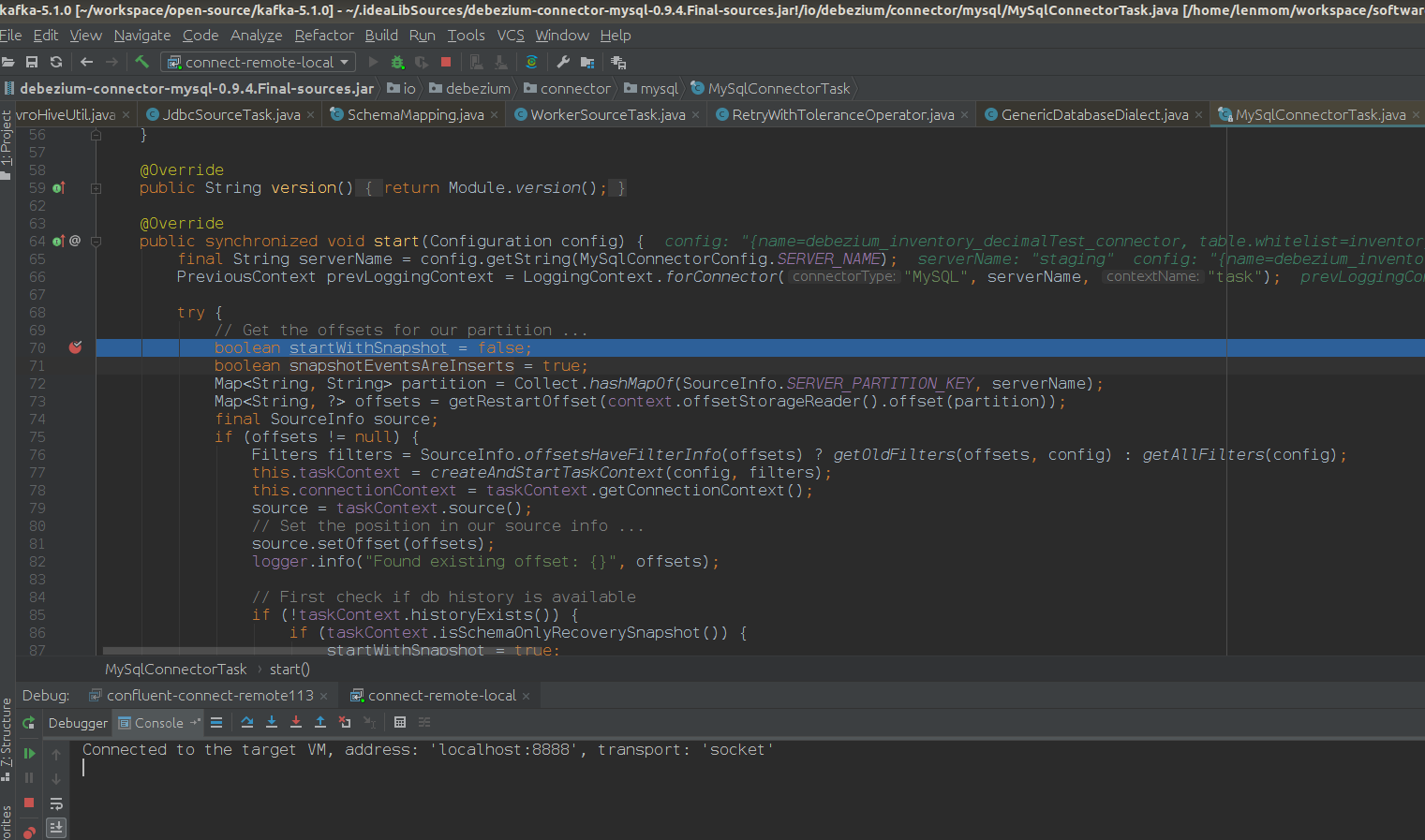

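4.2 attach to kafka-connect using IDEA

In IDEA, set up a remote debug configuration that points to the host running kafka-connect and port 8888, then start the debug session. Once the debugger attaches, the paused process continues and breakpoints can be hit. The screenshots show my setup.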

Have fun!