I. Word Frequency Count

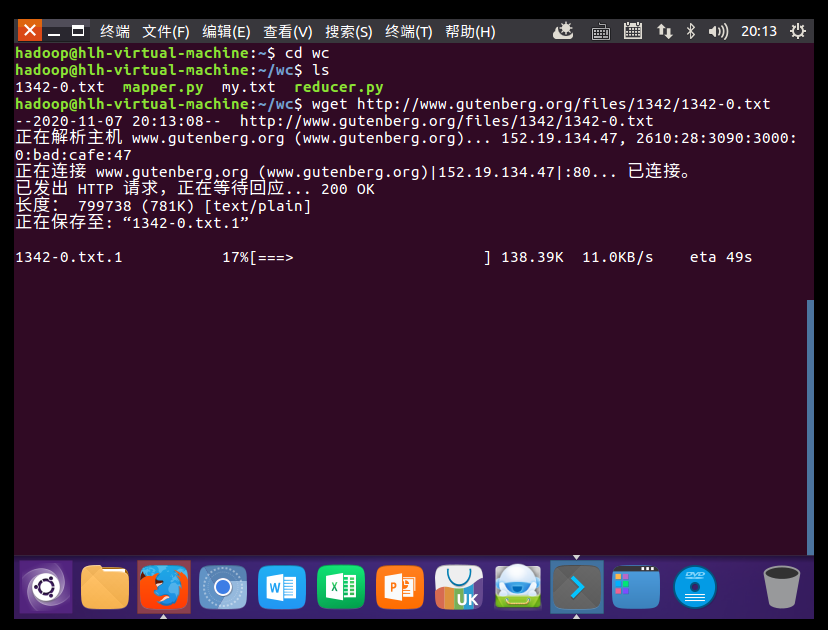

1. Download the e-book

wget http://www.gutenberg.org/files/1342/1342-0.txt

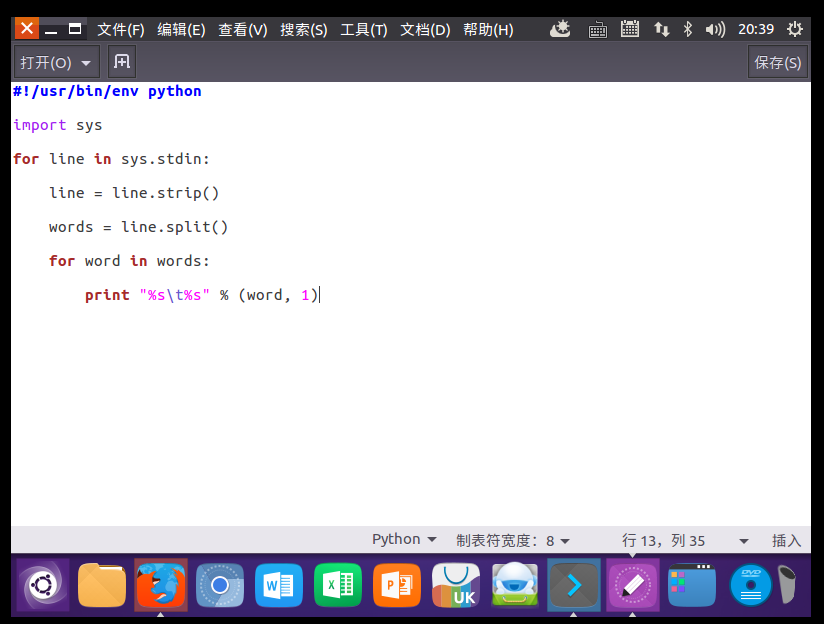

2. Write the mapper and reducer scripts

mapper.py

    #!/usr/bin/env python
    import sys

    # Read lines from standard input and emit one "word 1" record per word.
    for line in sys.stdin:
        line = line.strip()
        words = line.split()
        for word in words:
            print("%s %s" % (word, 1))

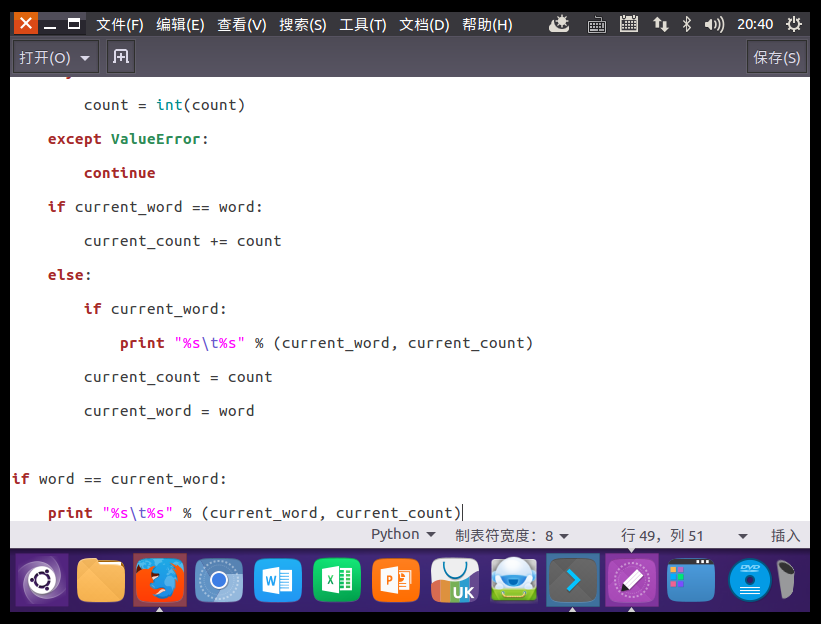

reducer.py

    #!/usr/bin/env python
    import sys

    current_word = None
    current_count = 0

    # Input must be sorted by word so that identical words are adjacent.
    for line in sys.stdin:
        line = line.strip()
        try:
            word, count = line.split(' ', 1)
            count = int(count)
        except ValueError:
            # Skip records that are not of the form "word count".
            continue
        if current_word == word:
            current_count += count
        else:
            # A new word starts: emit the total for the previous one.
            if current_word:
                print("%s %s" % (current_word, current_count))
            current_count = count
            current_word = word

    # Emit the total for the last word, if any input was read.
    if current_word:
        print("%s %s" % (current_word, current_count))

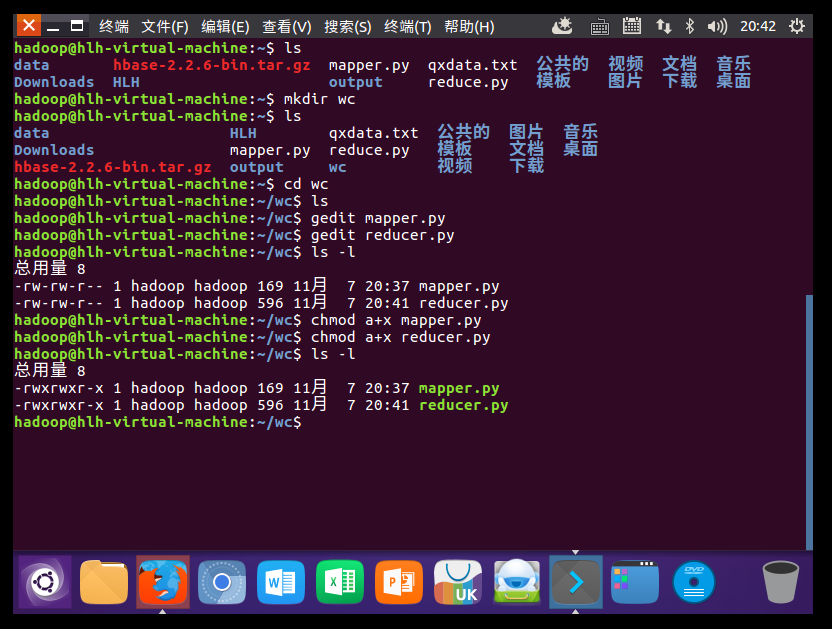

Make both scripts executable

ls -l

chmod a+x mapper.py

chmod a+x reducer.py

ls -l

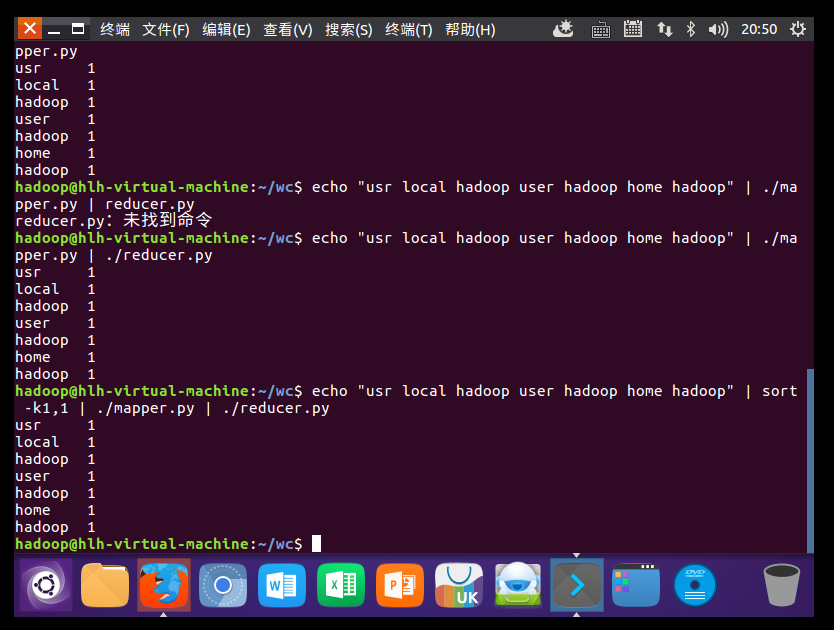

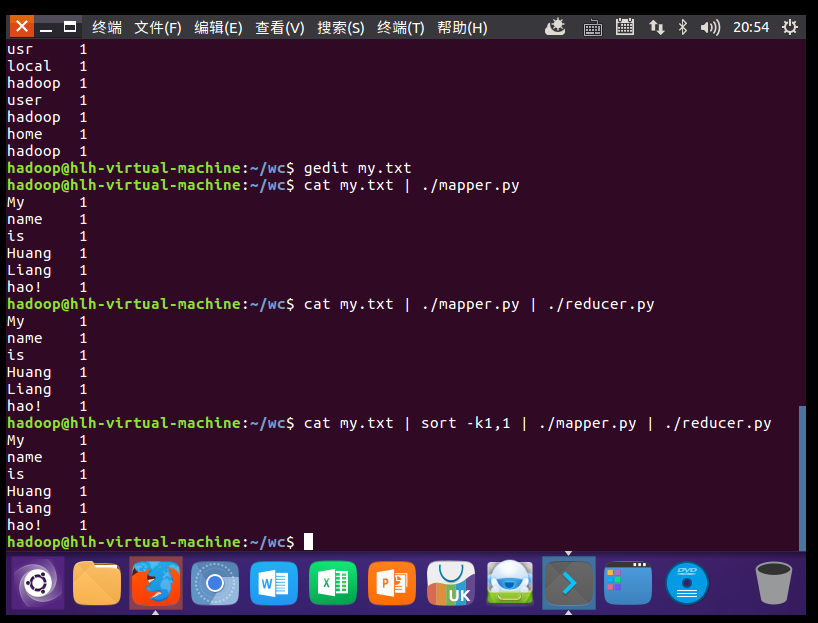

3. Test the mapper and reducer locally

echo "usr local hadoop user hadoop home hadoop" | ./mapper.py

echo "usr local hadoop user hadoop home hadoop" | ./mapper.py | ./reducer.py

echo "usr local hadoop user hadoop home hadoop" | ./mapper.py | sort -k1,1 | ./reducer.py

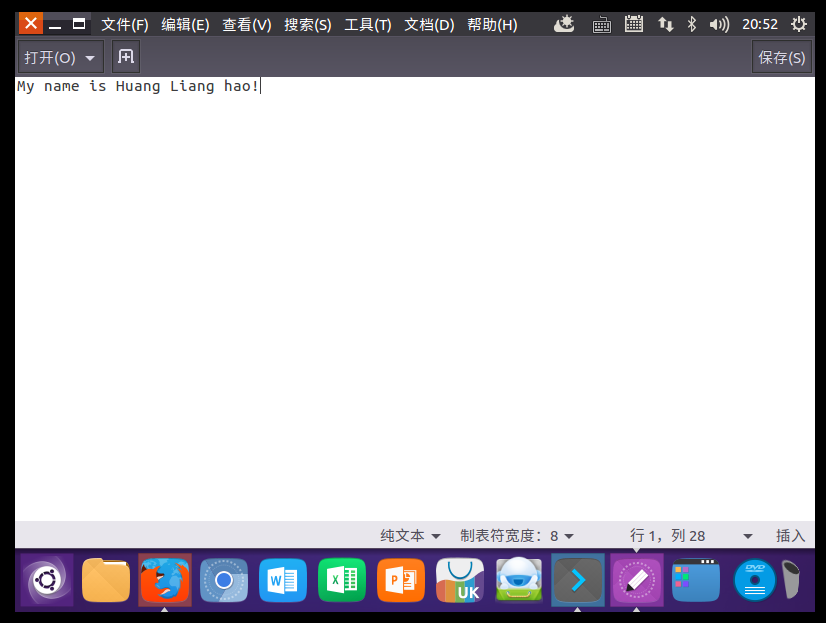

gedit my.txt

cat my.txt | ./mapper.py

cat my.txt | ./mapper.py | ./reducer.py

cat my.txt | ./mapper.py | sort -k1,1 | ./reducer.py

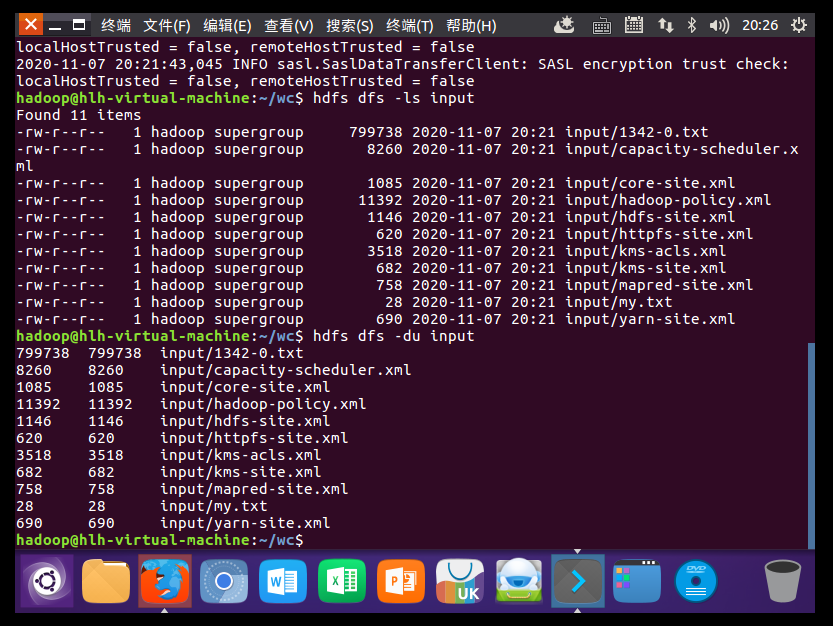
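The difference between the second and third commands comes from the reducer only merging adjacent identical words, so unsorted input yields several partial counts for the same word. The following is a minimal in-process sketch of that behaviour, assuming the same space-separated "word count" records as the scripts above. The function names map_words and reduce_counts are hypothetical and are not part of mapper.py or reducer.py.

```python
def map_words(lines):
    """Emit one "word 1" record per whitespace-separated token, like mapper.py."""
    for line in lines:
        for word in line.strip().split():
            yield "%s %s" % (word, 1)


def reduce_counts(records):
    """Sum the counts of adjacent records that share a word, like reducer.py."""
    current_word, current_count = None, 0
    for record in records:
        word, count = record.split(" ", 1)
        count = int(count)
        if word == current_word:
            current_count += count
        else:
            if current_word is not None:
                yield (current_word, current_count)
            current_word, current_count = word, count
    if current_word is not None:
        yield (current_word, current_count)


text = ["usr local hadoop user hadoop home hadoop"]

# Without sorting, "hadoop" is never adjacent to itself, so it is emitted three times.
unsorted_result = list(reduce_counts(map_words(text)))

# sorted() stands in for `sort -k1,1`; it groups identical records together.
sorted_result = list(reduce_counts(sorted(map_words(text))))

print(unsorted_result)
print(sorted_result)
```

Hadoop Streaming performs the sort between the map and reduce phases itself, which is why the local test needs the explicit sort step to mimic it.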

4. Upload the text data to HDFS

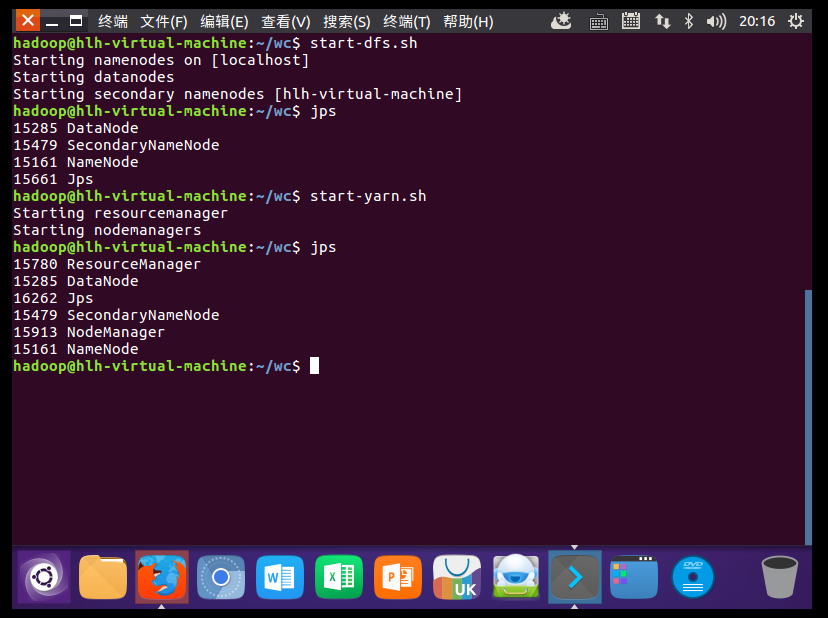

start-dfs.sh

start-yarn.sh

jps

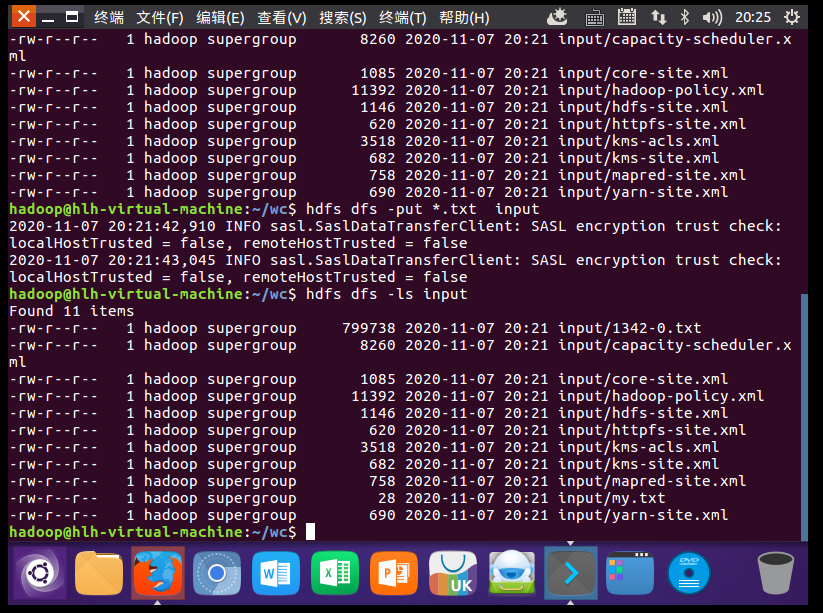

hdfs dfs -put *.txt input

hdfs dfs -ls input

hdfs dfs -du input

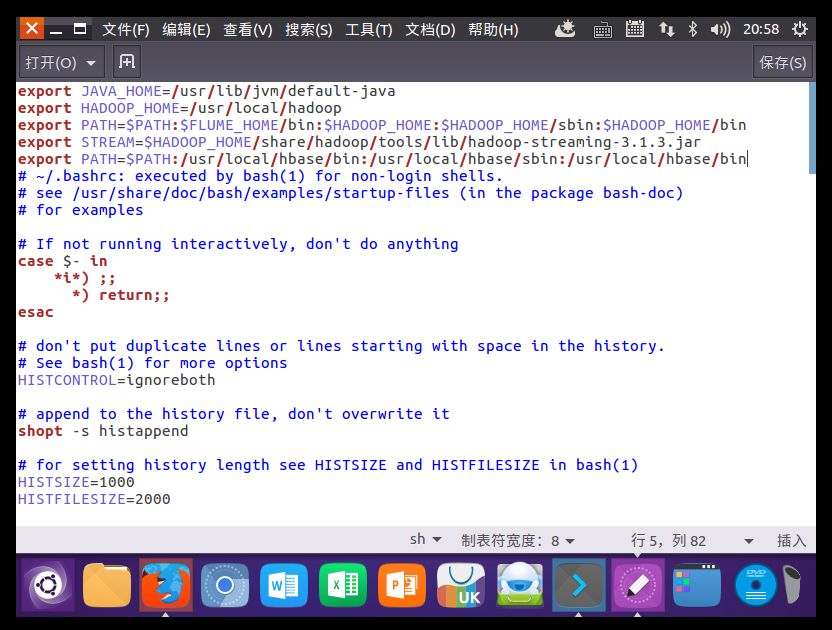

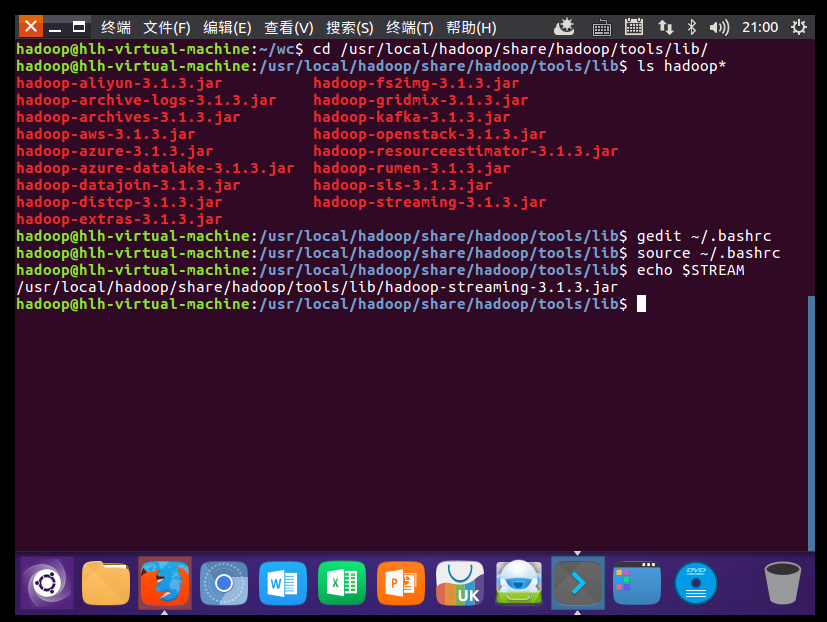

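5. Prepare Hadoop Streaming

Add the following line to ~/.bashrc:

export STREAM=$HADOOP_HOME/share/hadoop/tools/lib/hadoop-streaming-3.1.3.jar

Reload ~/.bashrc (for example with source ~/.bashrc) and verify that the configuration took effect: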

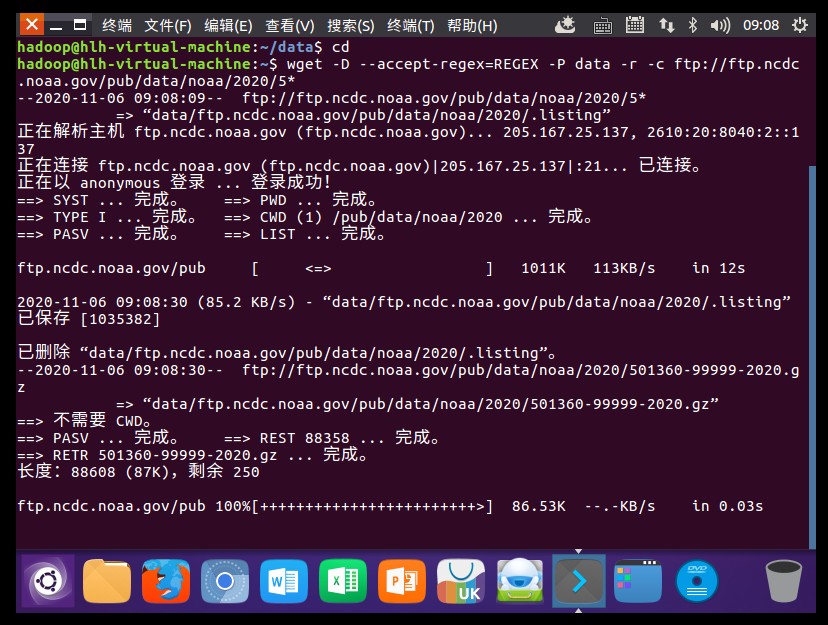
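One way to double-check the setting is to confirm that STREAM is set and names an existing file. The sketch below is only an illustration of such a check, not the command shown in the screenshot. The helper name check_stream_jar is hypothetical.

```python
import os


def check_stream_jar(env=None):
    """Return (ok, message) describing whether STREAM names an existing file."""
    env = os.environ if env is None else env
    path = env.get("STREAM", "")
    if not path:
        return False, "STREAM is not set; reload ~/.bashrc or open a new shell"
    if not os.path.isfile(path):
        return False, "STREAM is set but no file exists at: " + path
    return True, "STREAM points to: " + path


ok, message = check_stream_jar()
print(message)
```

A stray space inside the export line would show up here as a path that does not exist.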

II. Weather Data Analysis

1. Download the weather data

wget -P data -r -c "ftp://ftp.ncdc.noaa.gov/pub/data/noaa/2020/5*"

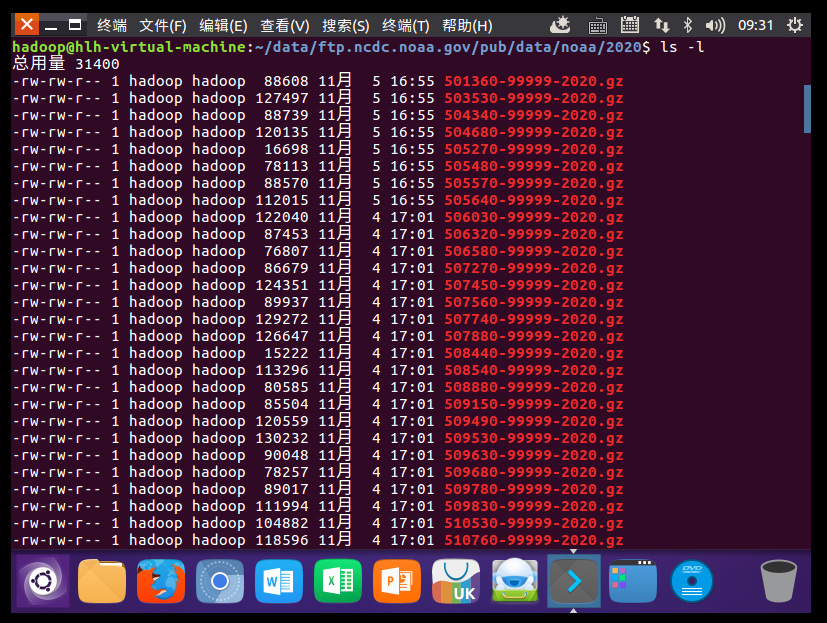

2. Inspect the downloaded weather data files

cd data/ftp.ncdc.noaa.gov/pub/data/noaa/2020

ls -l

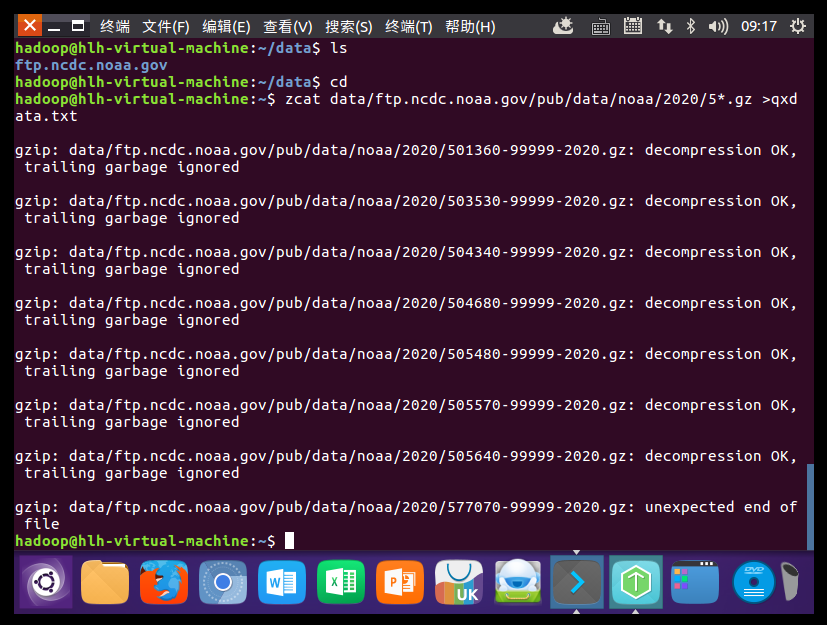

3. Decompress the weather data files (run from the directory where wget was started)

zcat data/ftp.ncdc.noaa.gov/pub/data/noaa/2020/5*.gz >qxdata.txt

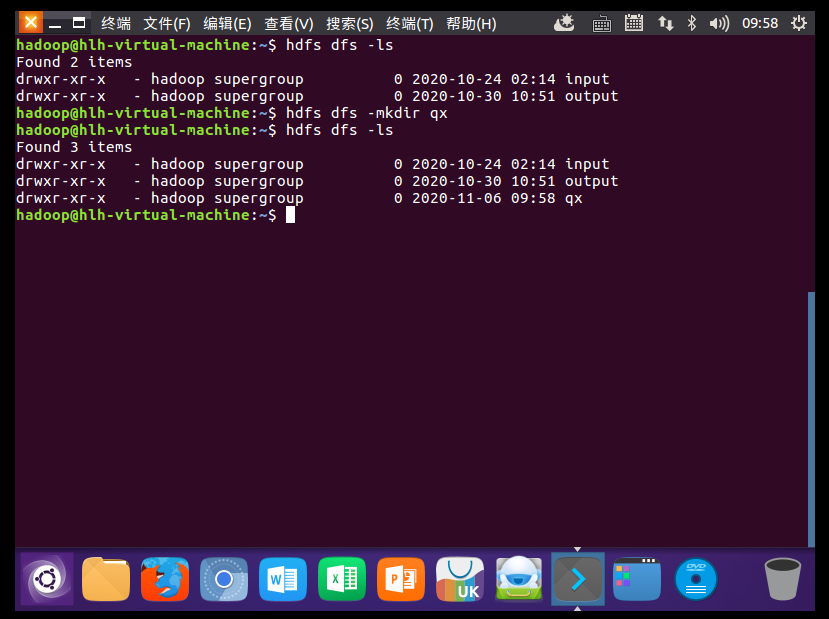

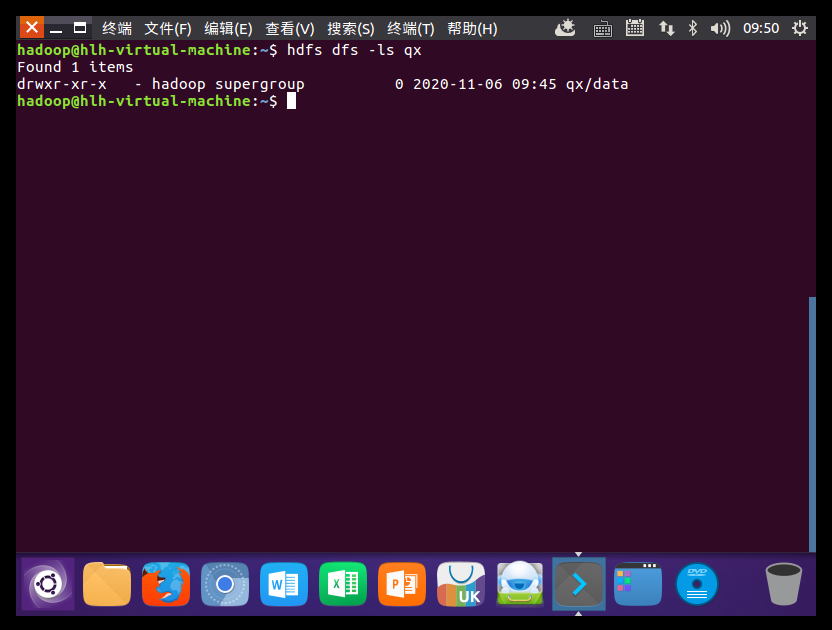

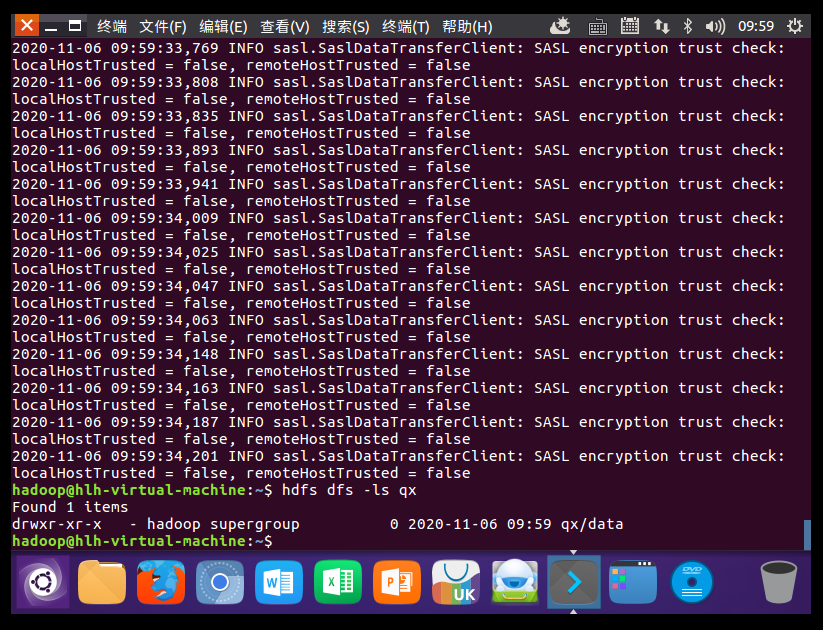
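The zcat step can also be sketched in Python, which may help on systems without zcat. This is a minimal sketch and not part of the original workflow. The helper name concat_gzip is hypothetical, and files are merged in sorted name order, which is assumed to match the order the shell glob produces.

```python
import glob
import gzip
import shutil


def concat_gzip(pattern, out_path):
    """Decompress every gzip file matching pattern and append it to out_path.

    Returns the sorted list of input files that were merged.
    """
    paths = sorted(glob.glob(pattern))
    with open(out_path, "wb") as out:
        for path in paths:
            # Stream each archive so large files are never fully held in memory.
            with gzip.open(path, "rb") as src:
                shutil.copyfileobj(src, out)
    return paths
```

Calling concat_gzip("data/ftp.ncdc.noaa.gov/pub/data/noaa/2020/5*.gz", "qxdata.txt") from the download directory would correspond to the command above.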

4. Upload the weather data files

hdfs dfs -mkdir qx

hdfs dfs -put data qx