1 //////////////////////////////////////////////////////////////////////////// 2 // 3 // Copyright 1993-2015 NVIDIA Corporation. All rights reserved. 4 // 5 // Please refer to the NVIDIA end user license agreement (EULA) associated 6 // with this source code for terms and conditions that govern your use of 7 // this software. Any use, reproduction, disclosure, or distribution of 8 // this software and related documentation outside the terms of the EULA 9 // is strictly prohibited. 10 // 11 //////////////////////////////////////////////////////////////////////////// 12 13 // 14 // This sample illustrates the usage of CUDA events for both GPU timing and 15 // overlapping CPU and GPU execution. Events are inserted into a stream 16 // of CUDA calls. Since CUDA stream calls are asynchronous, the CPU can 17 // perform computations while GPU is executing (including DMA memcopies 18 // between the host and device). CPU can query CUDA events to determine 19 // whether GPU has completed tasks. 20 // 21 22 // includes, system 23 #include <stdio.h> 24 25 // includes CUDA Runtime 26 #include <cuda_runtime.h> 27 28 // includes, project 29 #include <helper_cuda.h> 30 #include <helper_functions.h> // helper utility functions 31 32 __global__ void increment_kernel(int *g_data, int inc_value) 33 { 34 int idx = blockIdx.x * blockDim.x + threadIdx.x;// thread id 计算分三级:thread, block .grid . 35 g_data[idx] = g_data[idx] + inc_value; //每一个线程,把对应的操作数增加一个常数 36 } 37 38 bool correct_output(int *data, const int n, const int x) 39 { 40 for (int i = 0; i < n; i++) 41 if (data[i] != x) 42 { 43 printf("Error! data[%d] = %d, ref = %d ", i, data[i], x); 44 return false; 45 } 46 47 return true; 48 } 49 50 int main(int argc, char *argv[]) 51 { 52 int devID; 53 cudaDeviceProp deviceProps; 54 55 printf("[%s] - Starting... ", argv[0]); 56 57 // This will pick the best possible CUDA capable device 58 devID = findCudaDevice(argc, (const char **)argv); 59 60 // get device name 61 checkCudaErrors(cudaGetDeviceProperties(&deviceProps, devID)); 62 printf("CUDA device [%s] ", deviceProps.name); 63 64 int n = 16 * 1024 * 1024; 65 int nbytes = n * sizeof(int); 66 int value = 26; 67 68 // allocate host memory 69 int *a = 0; 70 checkCudaErrors(cudaMallocHost((void **)&a, nbytes)); 71 memset(a, 0, nbytes); 72 73 // allocate device memory 74 int *d_a=0; 75 checkCudaErrors(cudaMalloc((void **)&d_a, nbytes)); 76 checkCudaErrors(cudaMemset(d_a, 255, nbytes)); 77 78 // set kernel launch configuration 79 dim3 threads = dim3(1024, 1);//每个block1024个threads,一维 80 dim3 blocks = dim3(n / threads.x, 1);//block数量, 81 82 // create cuda event handles 83 cudaEvent_t start, stop;//运算计时 84 checkCudaErrors(cudaEventCreate(&start)); 85 checkCudaErrors(cudaEventCreate(&stop)); 86 87 StopWatchInterface *timer = NULL; 88 sdkCreateTimer(&timer); 89 sdkResetTimer(&timer); 90 91 checkCudaErrors(cudaDeviceSynchronize()); 92 float gpu_time = 0.0f; 93 printf("a=%d %d %d %d %d %d %d %d %d ",a[n-1-0],a[n-1-1],a[n-1-2],a[n-1-3],a[n-1-4],a[n-1-5],a[n-1-6],a[n-1-7],a[n-1-8]); 94 // asynchronously issue work to the GPU (all to stream 0) 95 sdkStartTimer(&timer); 96 cudaEventRecord(start, 0); 97 cudaMemcpyAsync(d_a, a, nbytes, cudaMemcpyHostToDevice, 0);//把host中变量a复制到device中的变量d_a 98 increment_kernel<<<blocks, threads, 0, 0>>>(d_a, value);//device执行 99 cudaMemcpyAsync(a, d_a, nbytes, cudaMemcpyDeviceToHost, 0);//device结果复制到host 100 cudaEventRecord(stop, 0); 101 sdkStopTimer(&timer); 102 103 // have CPU do some work while waiting for stage 1 to finish 104 unsigned long int counter=0; 105 106 while (cudaEventQuery(stop) == cudaErrorNotReady) 107 { 108 counter++; 109 } 110 111 checkCudaErrors(cudaEventElapsedTime(&gpu_time, start, stop)); 112 113 // print the cpu and gpu times 114 printf("time spent executing by the GPU: %.2f ", gpu_time); 115 printf("time spent by CPU in CUDA calls: %.2f ", sdkGetTimerValue(&timer)); 116 printf("CPU executed %lu iterations while waiting for GPU to finish ", counter); 117 printf("a=%d %d %d %d %d %d %d %d %d ",a[n-1-0],a[n-1-1],a[n-1-2],a[n-1-3],a[n-1-4],a[n-1-5],a[n-1-6],a[7],a[8]); 118 119 // check the output for correctness 120 bool bFinalResults = correct_output(a, n, value); 121 122 // release resources 123 checkCudaErrors(cudaEventDestroy(start)); 124 checkCudaErrors(cudaEventDestroy(stop)); 125 checkCudaErrors(cudaFreeHost(a)); 126 checkCudaErrors(cudaFree(d_a)); 127 128 exit(bFinalResults ? EXIT_SUCCESS : EXIT_FAILURE); 129 }

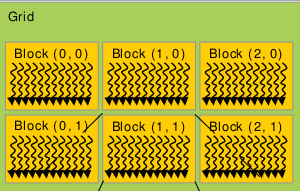

一个grid包含多个blocks,这些blocks的组织方式可以是一维,二维或者三维。任何一个block包含有多个Threads,这些Threads的组织方式也可以是一维,二维或者三维。举例来讲:比如上图中,任何一个block中有10个Thread,那么,Block(0,0)的第一个Thread的ThreadIdx是0,Block(1,0)的第一个Thread的ThreadIdx是11;Block(2,0)的第一个Thread的ThreadIdx是21,......,依此类推,