结构化感知器(Structured Perceptron, SP)是由Collins [1]在EMNLP'02上提出来的,用于解决序列标注的问题。中文分词工具THULAC、LTP所采用的分词模型便是基于此。

1. 结构化感知器

模型

CRF全局化地以最大熵准则建模概率(P(Y|X));其中,(X)为输入序列(x_1^n),(Y)为标注序列(y_1^n)。不同于CRF建模概率函数,SP则是以最大熵准则建模score函数:

其中,(Phi_s(Y,X))为本地特征函数(phi_s(h_i,y_i))的全局化表示:

那么,SP解决序列标注问题,可视作为:给定(X)序列,求解score函数最大值对应的(Y)序列:

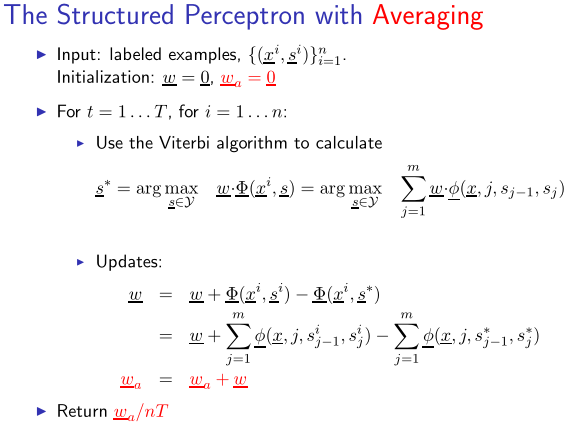

为了避免模型过拟合,保留每一次更新的权重,然后对其求平均。具体流程如下所示:

因此,结构化感知器也被称为平均感知器(Average Perceptron)。

解码

在将SP应用于中文分词时,除了事先定义的特征模板外,还用用到一个状态转移特征((y_{t-1}, y_t))。记在时刻(t)的状态为(y)的路径(y_1^{t})所对应的score函数最大值为

则有,在时刻(t+1)

其中,(w_{y',y})为转移特征((y',y))所对应的权值,(F(y_{t+1}=y,X))为(y_{t+1}=y)所对应的特征模板的特征值的加权之和。

2. 开源实现

张开旭的minitools/cws(THULAC的雏形)给出了SP中文分词的简单实现。首先,来看看定义的特征模板:

def gen_features(self, x): # 枚举得到每个字的特征向量

for i in range(len(x)):

left2 = x[i - 2] if i - 2 >= 0 else '#'

left1 = x[i - 1] if i - 1 >= 0 else '#'

mid = x[i]

right1 = x[i + 1] if i + 1 < len(x) else '#'

right2 = x[i + 2] if i + 2 < len(x) else '#'

features = ['1' + mid, '2' + left1, '3' + right1,

'4' + left2 + left1, '5' + left1 + mid, '6' + mid + right1, '7' + right1 + right2]

yield features

共定义了7个特征:

- (x_iy_i)

- (x_{i-1}y_i)

- (x_{i+1}y_i)

- (x_{i-2}x_{i-1}y_i)

- (x_{i-1}x_{i}y_i)

- (x_{i}x_{i+1}y_i)

- (x_{i+1}x_{i+2}y_i)

将状态B、M、E、S分别对应于数字0、1、2、3:

def load_example(words): # 词数组,得到x,y

y = []

for word in words:

if len(word) == 1:

y.append(3)

else:

y.extend([0] + [1] * (len(word) - 2) + [2])

return ''.join(words), y

训练语料则采取的更新权重:

for i in range(args.iteration):

print('第 %i 次迭代' % (i + 1), end=' '), sys.stdout.flush()

evaluator = Evaluator()

for l in open(args.train, 'r', 'utf-8'):

x, y = load_example(l.split())

z = cws.decode(x)

evaluator(dump_example(x, y), dump_example(x, z))

cws.weights._step += 1

if z != y:

cws.update(x, y, 1)

cws.update(x, z, -1)

evaluator.report()

cws.weights.update_all()

cws.weights.average()

Viterbi算法用于解码,与HMM相类似:

def decode(self, x): # 类似隐马模型的动态规划解码算法

# 类似隐马模型中的转移概率

transitions = [[self.weights.get_value(str(i) + ':' + str(j), 0) for j in range(4)]

for i in range(4)]

# 类似隐马模型中的发射概率

emissions = [[sum(self.weights.get_value(str(tag) + feature, 0) for feature in features)

for tag in range(4)] for features in self.gen_features(x)]

# 类似隐马模型中的前向概率

alphas = [[[e, None] for e in emissions[0]]]

for i in range(len(x) - 1):

alphas.append([max([alphas[i][j][0] + transitions[j][k] + emissions[i + 1][k], j]

for j in range(4))

for k in range(4)])

# 根据alphas中的“指针”得到最优序列

alpha = max([alphas[-1][j], j] for j in range(4))

i = len(x)

tags = []

while i:

tags.append(alpha[1])

i -= 1

alpha = alphas[i][alpha[1]]

return list(reversed(tags))

3. 参考资料

[1] Collins, Michael. "Discriminative training methods for hidden markov models: Theory and experiments with perceptron algorithms." Proceedings of the ACL-02 conference on Empirical methods in natural language processing-Volume 10. Association for Computational Linguistics, 2002.

[2] Zhang, Yue, and Stephen Clark. "Chinese segmentation with a word-based perceptron algorithm." Annual Meeting-Association for Computational Linguistics. Vol. 45. No. 1. 2007.

[3] Kai Zhao and Liang Huang, Structured Prediction with Perceptron: Theory and Algorithms.

[4] Michael Collins, Lecture 4, COMS E6998-3: The Structured Perceptron.