Run the code:

# This listing uses the TensorFlow 1.x graph API (tf.placeholder, tf.Session)
from __future__ import print_function
import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt

# Helper that builds one fully connected layer
def add_layer(inputs, in_size, out_size, activation_function=None):
    # Weights start from a normal distribution; biases start at 0.1
    Weights = tf.Variable(tf.random_normal([in_size, out_size]))
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1)
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    # Without an activation function the layer stays linear
    if activation_function is None:
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b)
    return outputs

# Build the training data: y = x^2 - 0.5 plus Gaussian noise
x_data = np.linspace(-1, 1, 300)[:, np.newaxis]
noise = np.random.normal(0, 0.05, x_data.shape)
y_data = np.square(x_data) - 0.5 + noise

# Use placeholders to define the network inputs
xs = tf.placeholder(tf.float32, [None, 1])
ys = tf.placeholder(tf.float32, [None, 1])

# Define the hidden layer (1 input, 10 units, ReLU)
l1 = add_layer(xs, 1, 10, activation_function=tf.nn.relu)
# Define the output layer (10 inputs, 1 linear output)
prediction = add_layer(l1, 10, 1, activation_function=None)

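# Define the loss (mean squared error) and the training step
# (reduction_indices is the older name for the axis argument)
loss = tf.reduce_mean(tf.reduce_sum(tf.square(ys - prediction), reduction_indices=[1]))
train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)
init = tf.global_variables_initializer()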

# Create the session and initialize the variables
sess = tf.Session()
sess.run(init)

# Visualize with matplotlib: scatter the raw data, then switch to interactive mode
fig = plt.figure()
ax = fig.add_subplot(1, 1, 1)
ax.scatter(x_data, y_data)
plt.ion()
plt.show()

# Train for 1000 steps
for i in range(1000):
    sess.run(train_step, feed_dict={xs: x_data, ys: y_data})
    # Every 50 steps, redraw the fitted curve
    if i % 50 == 0:
        # Remove the previously drawn line; on the first pass `lines` does not exist yet
        try:
            lines[0].remove()
        except NameError:
            pass
        prediction_value = sess.run(prediction, feed_dict={xs: x_data})
        # Draw the prediction as a red line of width 5, then pause for 1 second
        lines = ax.plot(x_data, prediction_value, 'r-', lw=5)
        plt.pause(1)
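To make explicit what the TensorFlow graph above computes, here is a minimal NumPy sketch of the same 1-10-1 ReLU network trained with plain gradient descent. It is not how TensorFlow does it internally: the hand-written backward pass, the fixed seed, and the names `rng`, `forward`, `mse`, and `losses` are assumptions introduced only for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed: an assumption, for reproducibility only

# Same synthetic data as the listing: y = x^2 - 0.5 plus Gaussian noise
x_data = np.linspace(-1, 1, 300)[:, np.newaxis]
noise = rng.normal(0, 0.05, x_data.shape)
y_data = np.square(x_data) - 0.5 + noise

# Parameters shaped like those in add_layer: normal weights, biases at 0.1
W1 = rng.normal(size=(1, 10))
b1 = np.full((1, 10), 0.1)
W2 = rng.normal(size=(10, 1))
b2 = np.full((1, 1), 0.1)


def forward(x):
    """Hidden ReLU layer followed by a linear output layer."""
    z1 = x @ W1 + b1
    h1 = np.maximum(z1, 0.0)
    pred = h1 @ W2 + b2
    return z1, h1, pred


def mse(pred, y):
    """Sum squared errors per sample, then average over samples."""
    return np.mean(np.sum(np.square(y - pred), axis=1))


learning_rate = 0.1
n = x_data.shape[0]
losses = []
for step in range(1000):
    z1, h1, pred = forward(x_data)
    losses.append(mse(pred, y_data))

    # Backward pass written out by hand (TensorFlow derives this automatically)
    d_pred = 2.0 * (pred - y_data) / n
    d_W2 = h1.T @ d_pred
    d_b2 = d_pred.sum(axis=0, keepdims=True)
    d_h1 = d_pred @ W2.T
    d_z1 = d_h1 * (z1 > 0)  # ReLU passes gradient only where it was active
    d_W1 = x_data.T @ d_z1
    d_b1 = d_z1.sum(axis=0, keepdims=True)

    # Plain gradient descent update, same learning rate as the listing
    W1 -= learning_rate * d_W1
    b1 -= learning_rate * d_b1
    W2 -= learning_rate * d_W2
    b2 -= learning_rate * d_b2

print("first loss:", losses[0], "last loss:", losses[-1])
```

If the sketch behaves as intended, the last recorded loss should be clearly smaller than the first, mirroring how the red curve in the TensorFlow version moves toward the scattered points.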

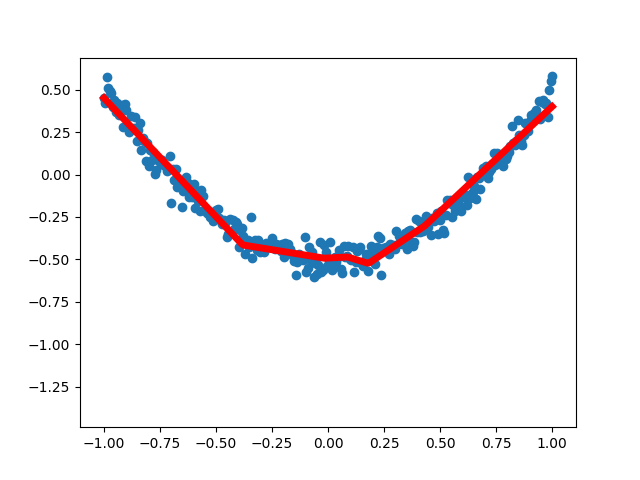

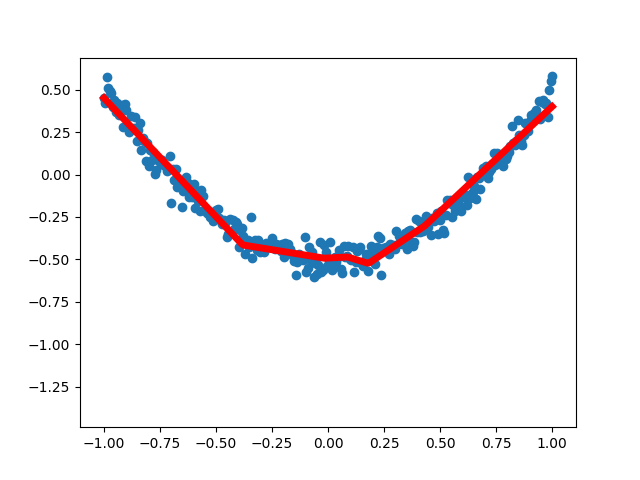

Run results: