下载一长篇中文文章。

从文件读取待分析文本。

news = open('gzccnews.txt','r',encoding = 'utf-8')

安装与使用jieba进行中文分词。

pip install jieba

import jieba

list(jieba.lcut(news))

生成词频统计

排序

排除语法型词汇,代词、冠词、连词

输出词频最大TOP20

import jieba article = open('test.txt','r').read() dele = {'。','!','?','的','“','”','(',')',' ','》','《',','} jieba.add_word('大数据') words = list(jieba.cut(article)) articleDict = {} articleSet = set(words)-dele for w in articleSet: if len(w)>1: articleDict[w] = words.count(w) articlelist = sorted(articleDict.items(),key = lambda x:x[1], reverse = True) for i in range(10): print(articlelist[i])

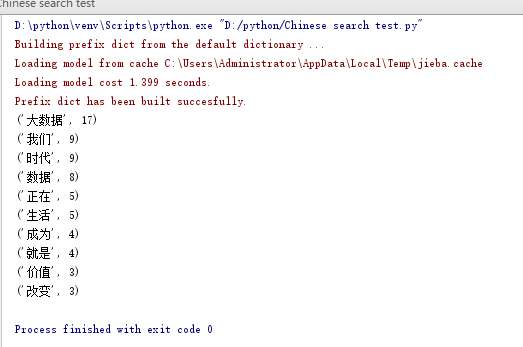

运行截图: