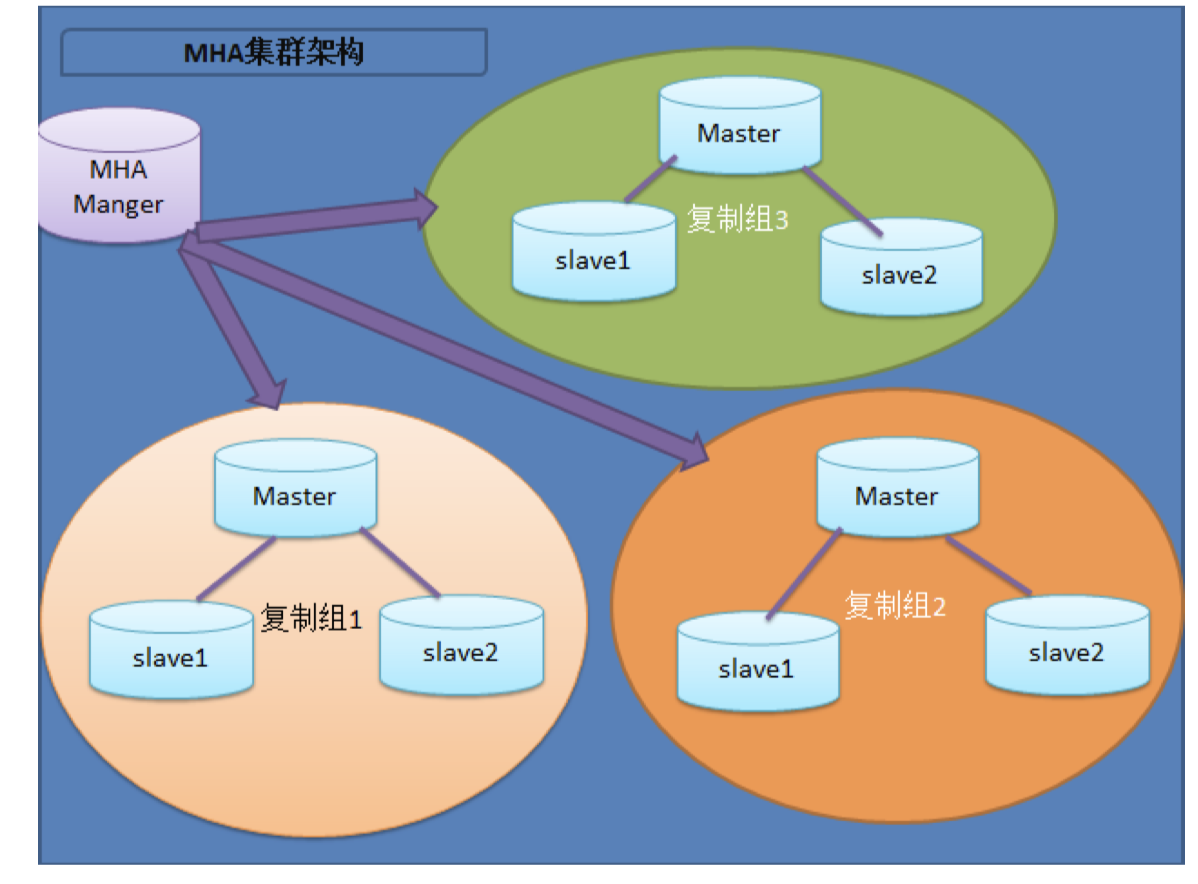

架构图:

1.Mysql高可用(MHA)

介绍:mysql的高可用主要是解决主库宕机,从库可以直接接替主库工作,可以实现故障自动转移,也是解决单点故障问题

前提:假如我们有三台服务器,架构是一主两从,设想一下如何设计当主库宕机时,从库可以直接接替主从继续工作?

常用的主从复制基本架构:

(1)一主一从

(2)一主多从

(3)多级主从

(4)双主

(5)循环复制

1.1 高性能架构:

读写分离架构(读的性能较高)

Msql proxy(Atlas,mysql router,proxySQL(percona),maxscale)、amebba(taobao)

分布式架构(读写性能都提高)

分库分表 -------> cobal --------> TDDL(头都大了),DRDS

mycat --->DBLE自主研发等

NewSQL-----》TiDB

1.2 高可用架构

(1)单活:MMM架构——mysql-mmm(google) (2)单活:MHA架构——mysql-master-ha(日本DeNa),T-MHA (3)多活:MGR ——5.7 新特性 MySQL Group replication(5.7.17) --->Innodb Cluster (4)多活:MariaDB Galera Cluster架构,(PXC)Percona XtraDB Cluster、MySQL Cluster(Oracle rac)架构

1.3 高可用MHA

架构工作原理

主库宕机处理过程 1. 监控节点 (通过配置文件获取所有节点信息) 系统,网络,SSH连接性 主从状态,重点是主库 2. 选主 (1) 如果判断从库(position或者GTID),数据有差异,最接近于Master的slave,成为备选主 (2) 如果判断从库(position或者GTID),数据一致,按照配置文件顺序,选主. (3) 如果设定有权重(candidate_master=1),按照权重强制指定备选主. 1. 默认情况下如果一个slave落后master 100M的relay logs的话,即使有权重,也会失效. 2. 如果check_repl_delay=0的化,即使落后很多日志,也强制选择其为备选主 3. 数据补偿 (1) 当SSH能连接,从库对比主库GTID 或者position号,立即将二进制日志保存至各个从节点并且应用(save_binary_logs ) (2) 当SSH不能连接, 对比从库之间的relaylog的差异(apply_diff_relay_logs) 4. Failover 将备选主进行身份切换,对外提供服务 其余从库和新主库确认新的主从关系 5. 应用透明(VIP) 6. 故障切换通知(send_reprt) 7. 二次数据补偿(binlog_server) 8. 自愈自治(待开发...)

MHA工作原理:

MHA的目的在于维持MySQL Replication中Master库的高可用性,其最大特点是可以修复多个Slave之间的差异日志,最终使所有Slave保持数据一致,然后从中选择一个充当新的Master,并将其它Slave指向它。 当master出现故障时,可以通过对比slave之间I/O thread 读取主库binlog的position号,选取最接近的slave做为备选主库(备胎)。其它的从库可以通过与备选主库对比生成差异的中继日志。在备选主库上应用从原来master保存的binlog,同时将备选主库提升为master。最后在其它slave上应用相应的差异中继日志并开始从新的master开始复制。

架构设计:

node01: 主库 172.17.94.206:3307 安装MHA node节点

node02:从库 172.17.94.207:3307 安装 MHA node 节点

node02 从库 172.17.94.207:3308 安装 MHA node 节点

node03 安装MHA manage 节点

2. 环境搭建

2.1 配置关键程序软连接

ln -s /application/mysql/bin/mysqlbinlog /usr/bin/mysqlbinlog

ln -s /application/mysql/bin/mysql /usr/bin/mysql

2.2 配置各个节点互信(免交互)

### 172.17.94.206:3307 主库

### 172.17.94.207:3307 从库1

### 172.17.94.207:3308 从库2

在主库中操作:

rm -rf /root/.ssh ssh-keygen cd /root/.ssh mv id_rsa.pub authorized_keys scp -r /root/.ssh 172.17.94.207:/root ###操作是将主库中的/root/.ssh 文件拷贝到其他从库的根目录下

验证一下各个节点是否是免密连接的

3.安装软件和搭建MHA(所有节点)

(1)#下载mha软件 mha官网:https://code.google.com/archive/p/mysql-master-ha/ github下载地址:https://github.com/yoshinorim/mha4mysql-manager/wiki/Downloads #软件包 mha4mysql-manager-0.56-0.el6.noarch.rpm mha4mysql-node-0.56-0.el6.noarch.rpm (2)#所有节点安装Node软件依赖包 yum install perl-DBD-MySQL -y rpm -ivh mha4mysql-node-0.56-0.el6.noarch.rpm (3)在主库中创建mha需要的用户(注意:应该此时主从复制已经存在,所以当主库建立用户mha时,从库中也相应的生成mha用户) grant all privileges on *.* to mha@'172.17.94.%' identified by '123'; (4)manager软件安装(这个软件可以选择一个台节点压力不是很大的作为管理节点,这里一般不要在主库上安装)

##安装依赖包 ############yum install -y perl-Config-Tiny epel-release perl-Log-Dispatch perl-Parallel-ForkManager perl-Time-HiRes(这个依赖包不全,需要用下面的一个)

#yum install perl-DBI perl-DBD-MySQL perl-Config-Tiny perl-Log-Dispatch perl-Parallel-ForkManager perl-Time-HiRes perl-Params-Validate perl-DateTime -y

#yum install epel-release -y

rpm -ivh mha4mysql-manager-0.56-0.el6.noarch.rpm

3.1 MHA软件构成

Manager工具包主要包括以下几个工具:

masterha_manger 启动MHA

masterha_check_ssh 检查MHA的SSH配置状况

masterha_check_repl 检查MySQL复制状况

masterha_master_monitor 检测master是否宕机

masterha_check_status 检测当前MHA运行状态

masterha_master_switch 控制故障转移(自动或者手动)

masterha_conf_host 添加或删除配置的server信息

Node工具包主要包括以下几个工具:

这些工具通常由MHA Manager的脚本触发,无需人为操作

save_binary_logs 保存和复制master的二进制日志(脚本)

apply_diff_relay_logs 识别差异的中继日志事件并将其差异的事件应用于其他的(脚本)

purge_relay_logs 清除中继日志(不会阻塞SQL线程)(脚本)

4.配置文件

(5)准备配置文件(这里的配置文件时安装MHA管理节点)

创建配置文件目录

mkdir -p /etc/mha

创建日志目录

mkdir -p /var/log/mha/app1

编辑mha配置文件

vim /etc/mha/app1.cnf

[server default]

manager_log=/var/log/mha/app1/manager ###这个自己定义就可以了,这个是mha比较重要的日志

manager_workdir=/var/log/mha/app1 ###这个自己定义就可以了

master_binlog_dir=/data/binlog ###这个是主库的binlog日志的位置,全局的binlog日志位置

master_ip_failover_script=/usr/local/bin/master_ip_failover #添加脚本位置,这个是应用透明代理的(vip)管理的地方

user=mha

password=123

ping_interval=2

repl_password=123

repl_user=repl

ssh_user=root

[server1]

hostname=172.17.94.206

port=3307

[server2]

hostname=172.17.94.207

port=3307

[server3]

hostname=172.17.94.207

port=3308

5.如果按照上述配置完成后

5.1 节点状态检查(node03 管理节点)

masterha_check_ssh --conf=/etc/mha/app1.cnf #互信检查 masterha_check_repl --conf=/etc/mha/app1.cnf #主从状态检查

5.2 启动MHA

nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null> /var/log/mha/app1/manager.log 2>&1 &

5.3 查看MHA状态

masterha_check_status --conf=/etc/mha/app1.cnf

####通过以下命令来验证

mysql -umha -p123 -h 172.17.94.206 -e "show variables like 'server_id'" mysql -umha -p123 -h 172.17.94.207 -e "show variables like 'server_id'" mysql -umha -p123 -h 172.17.94.208 -e "show variables like 'server_id'"

6.故障模拟及处理:

### 停主库: /etc/init.d/mysqld stop 或者systemctl stop mysqld3307 观察manager 日志 tail -f /var/log/mha/app1/manager 末尾必须显示successfully,才算正常切换成功。

####修复主库

systemctl start mysqld3307

恢复主从架构:

change master to

master_host='目前主库ip',

master_port='目前主库端口号',

master_auto_position=1,

master_user='rep',

master_password='123',

###启动复制线程

start slave;

###修改配置文件

[server1]

hostname=

port=

#####最后在启动MHA

nohup masterha_manager --conf=/etc/mha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null> /var/log/mha/app1/manager.log 2>&1 &

补充说明:

1. MHA启动程序会在其中的一个数据库(主库or从库?)挂掉后先会开始选取一个新的主库并且让其他的从库可以复制然后会在自动从后台退出(也就说这个程序只要数据库一挂掉,就退出来了)

2. 当主库挂掉后,MHA会在配置文件中选取一个节点接替主从工作,并且会将挂掉的主库从配置文件中删除

7. MHA的Failover过程详解

7.1 什么是Failover? 故障转移 主库宕机一直到业务恢复正常的处理过程 7.2Failover让你实现怎么做? (1)快速监控主库宕机 (2)选择新的主库 (3)数据 补偿 (4)解除从库身份 (5)剩余从库和新主库构建主从关系 (6)应用透明 (7)故障节点自愈(待开发) (8)故障提醒 4.3 MHA的Failover如何实现? 从启动 --> 故障 -->转移-->业务恢复 (1)MHA通过masterha_manager脚本自动启动MHA的功能 (2)在manager启动之前,会自动检查ssh互信(masterha_check_ssh)和主从状态(master_check_repl) (3)MHA-manager 通过masterha_master_monitor脚本(每隔ping_interval秒) (4)masterha_master_monitor探测主库3次无心跳之后,就认为主库宕机了。 (5)进行选主过程 算法一: 读取配置文件中是否有强制选主的参数? candidate_master=1 ###设置为候选master,如果设置该参数以后,发生主从切换以后会将此从库提升为主库,即使这个主库不是集群中事件最新的slave check_repl_delay=0 ### 默认情况下如果一个slave落后master 100M的relay logs 的话,MHA将不会选择该slave作为一个新的master,因为对于这个slave的恢复需要花费很长时间,

通过设置check_repl_delay=0,MHA触发切换在选择一个新的master的时候将会忽略复制延时,这个参数对于设置了candidate_master=1的主机非常有用,因为这个候选主在切换的过程中一定是新的master 算法二: 自动判读所有从库的日志量,将最接近的主库数据的从库作为新主 算法三: 按照配置文件先后顺序的进行选新主 (6)数据补偿 1.判断主库ssh的连通性 情况一:能连 调用save_binary_logs脚本,立即保存缺失部分的binlog到各个节点 情况二:ssh无法连接 调用apply_diff_relay_logs脚本,计算从库的relaylog的差异,恢复到2号从库 (7)解除从库身份 (8)剩余从库和新主库构建主从关系 (9)应用透明

8 应用透明(VIP) 不能在ECS云服务器上应用。

前提:需要我们要准备一个master_ip_failover脚本,这个脚本来源于mha4mysql-manager-0.56.tar.gz 软件包,cd samples/scripts/ 这个目录下,我们需要将它复制到/usr/local/bin目录下,这个等会会和配置文件是一一对应的,

看看这个脚本(修改后的部分)

my $vip = '172.17.94.100/20'; ###这个ip地址一定是可以访问的,在虚拟机中可以在相应的网段中找出一个没有使用的即可,但是如果是ECS服务器则不能使用VIP,就不能搭建透明代理 my $key = '0'; my $ssh_start_vip = "/sbin/ifconfig eth0:$key $vip"; my $ssh_stop_vip = "/sbin/ifconfig eth0:$key down";

9.额外的补偿:

9.1 配置binlog-server备份服务器

作用:主机宕机,也许会造成主库binlog复制不及时而导致数据丢失的情况出现,因此配置binlog-server进行时时同步备份,是一种不要的安全手段

修改配置文件:

vi /etc/mha/app1.cnf

和上面配置一样,但是需要在末尾添加上如下几行

[binlog1]

no_master=1

hostname=主库上面的ip地址

master_binlog_dir=/data/3307/binlog/ ###binlog存放位置优先级比全局的高

9.2 拉取主库上面的binlog日志到一个数据库中存放

1.mkidr -p /data/mysql/binlog ###创建存放目录

2.cd /data/mysql/binlog ###进入目录

3. mysqlbinlog -R --host=主库的ip地址 -P端口号 --user=mha --password=123 --raw --stop-never mysql-bin.000001 & #拉取主库binlog

4.启动MHA进程 nohup masterha_manager --conf=/etc/mha/mha1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/mha/mha1/manager.log 2>&1 &

附录:

[server default] manager_log=/etc/mha/log/app1.log manager_workdir=/etc/mha/app1/ master_binlog_dir=/data/mysql3306 master_ip_failover_script=/etc/mha/master_ip_failover master_ip_online_change_script=/etc/mha/master_ip_online_change ping_interval=1 remote_workdir=/tmp secondary_check_script=/bin/masterha_secondary_check -s ip235 -s ip236 --user=root --master_host=ip230 --master_ip=192.168.0.230 --master_port=3306 ssh_user=root user=mha password=mha repl_password=repl repl_user=repl

#report_script=/usr/local/send_report ##告警脚本,可自行修改,这里没有使用

#设置故障发生后关闭故障主机的脚本(主要作用是关闭主机防止发生脑裂,这里没有使用,类似Fence功能)

#shutdown_script="/usr/local/bin/power_manager --command=stopssh2 --host=test-1 --ssh_user=root" [server1] hostname=192.168.0.230 port=3306 [server2] candidate_master=1 check_repl_delay=0 hostname=192.168.0.235 port=3306 [server3] hostname=192.168.0.236 port=3306

配置解释:

manager_log=/etc/mha/log/app1.log #日志位置 manager_workdir=/etc/mha/app1/ # MHA工作目录 master_binlog_dir=/data/mysql3306 #设置Master保存binlog位置的目录 master_ip_failover_script=/etc/mha/master_ip_failover #自动Failover脚本 master_ip_online_change_script=/etc/mha/master_ip_online_change #设置手动切换脚本 ping_interval=1 #设置监控主库的间隔 remote_workdir=/tmp #binlog临时保存目录 secondary_check_script=/bin/masterha_secondary_check -s ip235 -s ip236 --user=root --master_host=ip230 --master_ip=192.168.0.230 --master_port=3306 #检查存活脚本 ssh_user=root #ssh登录名 user=mha #MHA监控所有MySQL节点的用户名 password=mha #MHA监控所有MySQL节点的密码 repl_user=repl #MySQL复制的用户名 repl_password=repl #MySQL复制的密码

# 告警脚本,可自行修改,这里没有使用 #report_script=/usr/local/send_report [server2] candidate_master=1 #候选主库 check_repl_delay=0 #忽略延迟大小 hostname=192.168.0.235

master_ip_failover

#!/usr/bin/env perl use strict; use warnings FATAL => 'all'; use Getopt::Long; my ( $command, $ssh_user, $orig_master_host, $orig_master_ip, $orig_master_port, $new_master_host, $new_master_ip, $new_master_port ); my $vip = '192.168.0.20/24'; my $key = 'wvip'; my $ssh_start_vip = "/sbin/ifconfig eth0:$key $vip"; my $ssh_stop_vip = "/sbin/ifconfig eth0:$key down"; GetOptions( 'command=s' => $command, 'ssh_user=s' => $ssh_user, 'orig_master_host=s' => $orig_master_host, 'orig_master_ip=s' => $orig_master_ip, 'orig_master_port=i' => $orig_master_port, 'new_master_host=s' => $new_master_host, 'new_master_ip=s' => $new_master_ip, 'new_master_port=i' => $new_master_port, ); exit &main(); sub main { print " IN SCRIPT TEST====$ssh_stop_vip==$ssh_start_vip=== "; if ( $command eq "stop" || $command eq "stopssh" ) { my $exit_code = 1; eval { print "Disabling the VIP on old master: $orig_master_host "; &stop_vip(); $exit_code = 0; }; if ($@) { warn "Got Error: $@ "; exit $exit_code; } exit $exit_code; } elsif ( $command eq "start" ) { my $exit_code = 10; eval { print "Enabling the VIP - $vip on the new master - $new_master_host "; &start_vip(); $exit_code = 0; }; if ($@) { warn $@; exit $exit_code; } exit $exit_code; } elsif ( $command eq "status" ) { print "Checking the Status of the script.. OK "; exit 0; } else { &usage(); exit 1; } } sub start_vip() { `ssh $ssh_user@$new_master_host " $ssh_start_vip "`; } sub stop_vip() { return 0 unless ($ssh_user); `ssh $ssh_user@$orig_master_host " $ssh_stop_vip "`; } sub usage { print "Usage: master_ip_failover --command=start|stop|stopssh|status --orig_master_host=host --orig_master_ip=ip --orig_master_port=port --new_master_host=host --new_master_ip=ip --new_master_port=port "; }

master_ip_online_change

#!/usr/bin/env perl use strict; use warnings FATAL =>'all'; use Getopt::Long; my $vip = '192.168.0.20/24'; # Virtual IP my $key = "wvip"; my $ssh_start_vip = "/sbin/ifconfig eth0:$key $vip"; my $ssh_stop_vip = "/sbin/ifconfig eth0:$key down"; my $exit_code = 0; my $orig_master_ssh_user='root'; my $new_master_ssh_user='root'; my ( $command, $orig_master_is_new_slave, $orig_master_host, $orig_master_ip, $orig_master_port, $orig_master_user, $orig_master_password, $new_master_host, $new_master_ip, $new_master_port, $new_master_user, $new_master_password, ); GetOptions( 'command=s' => $command, 'orig_master_is_new_slave' => $orig_master_is_new_slave, 'orig_master_host=s' => $orig_master_host, 'orig_master_ip=s' => $orig_master_ip, 'orig_master_port=i' => $orig_master_port, 'orig_master_user=s' => $orig_master_user, 'orig_master_password=s' => $orig_master_password, 'orig_master_ssh_user=s' => $orig_master_ssh_user, 'new_master_host=s' => $new_master_host, 'new_master_ip=s' => $new_master_ip, 'new_master_port=i' => $new_master_port, 'new_master_user=s' => $new_master_user, 'new_master_password=s' => $new_master_password, 'new_master_ssh_user=s' => $new_master_ssh_user, ); exit &main(); sub main { #print " IN SCRIPT TEST====$ssh_stop_vip==$ssh_start_vip=== "; if ( $command eq "stop" || $command eq "stopssh" ) { # $orig_master_host, $orig_master_ip, $orig_master_port are passed. # If you manage master ip address at global catalog database, # invalidate orig_master_ip here. my $exit_code = 1; eval { print " *************************************************************** "; print "Disabling the VIP - $vip on old master: $orig_master_host "; print "*************************************************************** "; &stop_vip(); $exit_code = 0; }; if ($@) { warn "Got Error: $@ "; exit $exit_code; } exit $exit_code; } elsif ( $command eq "start" ) { # all arguments are passed. # If you manage master ip address at global catalog database, # activate new_master_ip here. # You can also grant write access (create user, set read_only=0, etc) here. my $exit_code = 10; eval { print " *************************************************************** "; print "Enabling the VIP - $vip on new master: $new_master_host "; print "*************************************************************** "; &start_vip(); $exit_code = 0; }; if ($@) { warn $@; exit $exit_code; } exit $exit_code; } elsif ( $command eq "status" ) { print "Checking the Status of the script.. OK "; `ssh $orig_master_ssh_user@$orig_master_host " $ssh_start_vip "`; exit 0; } else { &usage(); exit 1; } } # A simple system call that enable the VIP on the new master sub start_vip() { `ssh $new_master_ssh_user@$new_master_host " $ssh_start_vip "`; } # A simple system call that disable the VIP on the old_master sub stop_vip() { `ssh $orig_master_ssh_user@$orig_master_host " $ssh_stop_vip "`; } sub usage { print "Usage: master_ip_failover –command=start|stop|stopssh|status –orig_master_host=host –orig_master_ip=ip –orig_master_port=po rt –new_master_host=host –new_master_ip=ip –new_master_port=port "; }

附录2:

当主节点点挂掉后所产生的日志

[root@node04 app1]# ls app1.master_status.health manager manager.log [root@node04 app1]# tail -f manager 192.168.11.4(192.168.11.4:3307) (current master) +--192.168.11.5(192.168.11.5:3307) +--192.168.11.6(192.168.11.6:3307) Sat Feb 27 08:03:21 2021 - [warning] master_ip_failover_script is not defined. Sat Feb 27 08:03:21 2021 - [warning] shutdown_script is not defined. Sat Feb 27 08:03:21 2021 - [info] Set master ping interval 2 seconds. Sat Feb 27 08:03:21 2021 - [warning] secondary_check_script is not defined. It is highly recommended setting it to check master reachability from two or more routes. Sat Feb 27 08:03:21 2021 - [info] Starting ping health check on 192.168.11.4(192.168.11.4:3307).. Sat Feb 27 08:03:21 2021 - [info] Ping(SELECT) succeeded, waiting until MySQL doesn't respond.. #####这里是当主节点挂掉后所产生的日志 Sat Feb 27 09:57:45 2021 - [warning] Got error on MySQL select ping: 2006 (MySQL server has gone away) Sat Feb 27 09:57:45 2021 - [info] Executing SSH check script: exit 0 Sat Feb 27 09:57:45 2021 - [info] HealthCheck: SSH to 192.168.11.4 is reachable. Sat Feb 27 09:57:47 2021 - [warning] Got error on MySQL connect: 2003 (Can't connect to MySQL server on '192.168.11.4' (111)) Sat Feb 27 09:57:47 2021 - [warning] Connection failed 2 time(s).. Sat Feb 27 09:57:49 2021 - [warning] Got error on MySQL connect: 2003 (Can't connect to MySQL server on '192.168.11.4' (111)) Sat Feb 27 09:57:49 2021 - [warning] Connection failed 3 time(s).. Sat Feb 27 09:57:51 2021 - [warning] Got error on MySQL connect: 2003 (Can't connect to MySQL server on '192.168.11.4' (111)) Sat Feb 27 09:57:51 2021 - [warning] Connection failed 4 time(s).. Sat Feb 27 09:57:51 2021 - [warning] Master is not reachable from health checker! Sat Feb 27 09:57:51 2021 - [warning] Master 192.168.11.4(192.168.11.4:3307) is not reachable! Sat Feb 27 09:57:51 2021 - [warning] SSH is reachable. Sat Feb 27 09:57:51 2021 - [info] Connecting to a master server failed. Reading configuration file /etc/masterha_default.cnf and /etc/mha/app1.cnf again, and trying to connect to all servers to check server status.. Sat Feb 27 09:57:51 2021 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping. Sat Feb 27 09:57:51 2021 - [info] Reading application default configuration from /etc/mha/app1.cnf.. Sat Feb 27 09:57:51 2021 - [info] Reading server configuration from /etc/mha/app1.cnf.. Sat Feb 27 09:57:52 2021 - [info] GTID failover mode = 1 Sat Feb 27 09:57:52 2021 - [info] Dead Servers: Sat Feb 27 09:57:52 2021 - [info] 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:52 2021 - [info] Alive Servers: Sat Feb 27 09:57:52 2021 - [info] 192.168.11.5(192.168.11.5:3307) Sat Feb 27 09:57:52 2021 - [info] 192.168.11.6(192.168.11.6:3307) Sat Feb 27 09:57:52 2021 - [info] Alive Slaves: Sat Feb 27 09:57:52 2021 - [info] 192.168.11.5(192.168.11.5:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:52 2021 - [info] GTID ON Sat Feb 27 09:57:52 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:52 2021 - [info] 192.168.11.6(192.168.11.6:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:52 2021 - [info] GTID ON Sat Feb 27 09:57:52 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:52 2021 - [info] Checking slave configurations.. Sat Feb 27 09:57:52 2021 - [info] read_only=1 is not set on slave 192.168.11.5(192.168.11.5:3307). Sat Feb 27 09:57:52 2021 - [info] read_only=1 is not set on slave 192.168.11.6(192.168.11.6:3307). Sat Feb 27 09:57:52 2021 - [info] Checking replication filtering settings.. Sat Feb 27 09:57:52 2021 - [info] Replication filtering check ok. Sat Feb 27 09:57:52 2021 - [info] Master is down! Sat Feb 27 09:57:52 2021 - [info] Terminating monitoring script. Sat Feb 27 09:57:52 2021 - [info] Got exit code 20 (Master dead). Sat Feb 27 09:57:52 2021 - [info] MHA::MasterFailover version 0.56. Sat Feb 27 09:57:52 2021 - [info] Starting master failover. Sat Feb 27 09:57:52 2021 - [info] Sat Feb 27 09:57:52 2021 - [info] * Phase 1: Configuration Check Phase.. Sat Feb 27 09:57:52 2021 - [info] Sat Feb 27 09:57:53 2021 - [info] GTID failover mode = 1 Sat Feb 27 09:57:53 2021 - [info] Dead Servers: Sat Feb 27 09:57:53 2021 - [info] 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:53 2021 - [info] Checking master reachability via MySQL(double check)... Sat Feb 27 09:57:53 2021 - [info] ok. Sat Feb 27 09:57:53 2021 - [info] Alive Servers: Sat Feb 27 09:57:53 2021 - [info] 192.168.11.5(192.168.11.5:3307) Sat Feb 27 09:57:53 2021 - [info] 192.168.11.6(192.168.11.6:3307) Sat Feb 27 09:57:53 2021 - [info] Alive Slaves: Sat Feb 27 09:57:53 2021 - [info] 192.168.11.5(192.168.11.5:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:53 2021 - [info] GTID ON Sat Feb 27 09:57:53 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:53 2021 - [info] 192.168.11.6(192.168.11.6:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:53 2021 - [info] GTID ON Sat Feb 27 09:57:53 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:53 2021 - [info] Starting GTID based failover. Sat Feb 27 09:57:53 2021 - [info] Sat Feb 27 09:57:53 2021 - [info] ** Phase 1: Configuration Check Phase completed. Sat Feb 27 09:57:53 2021 - [info] Sat Feb 27 09:57:53 2021 - [info] * Phase 2: Dead Master Shutdown Phase.. Sat Feb 27 09:57:53 2021 - [info] Sat Feb 27 09:57:53 2021 - [info] Forcing shutdown so that applications never connect to the current master.. Sat Feb 27 09:57:53 2021 - [warning] master_ip_failover_script is not set. Skipping invalidating dead master IP address. Sat Feb 27 09:57:53 2021 - [warning] shutdown_script is not set. Skipping explicit shutting down of the dead master. Sat Feb 27 09:57:54 2021 - [info] * Phase 2: Dead Master Shutdown Phase completed. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] * Phase 3: Master Recovery Phase.. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] * Phase 3.1: Getting Latest Slaves Phase.. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] The latest binary log file/position on all slaves is mysql-bin.000001:2293 Sat Feb 27 09:57:54 2021 - [info] Retrieved Gtid Set: 3f08024d-764f-11eb-b3ce-000c2900ea19:1-8 Sat Feb 27 09:57:54 2021 - [info] Latest slaves (Slaves that received relay log files to the latest): Sat Feb 27 09:57:54 2021 - [info] 192.168.11.5(192.168.11.5:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:54 2021 - [info] GTID ON Sat Feb 27 09:57:54 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:54 2021 - [info] 192.168.11.6(192.168.11.6:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:54 2021 - [info] GTID ON Sat Feb 27 09:57:54 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:54 2021 - [info] The oldest binary log file/position on all slaves is mysql-bin.000001:2293 Sat Feb 27 09:57:54 2021 - [info] Retrieved Gtid Set: 3f08024d-764f-11eb-b3ce-000c2900ea19:1-8 Sat Feb 27 09:57:54 2021 - [info] Oldest slaves: Sat Feb 27 09:57:54 2021 - [info] 192.168.11.5(192.168.11.5:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:54 2021 - [info] GTID ON Sat Feb 27 09:57:54 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:54 2021 - [info] 192.168.11.6(192.168.11.6:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled Sat Feb 27 09:57:54 2021 - [info] GTID ON Sat Feb 27 09:57:54 2021 - [info] Replicating from 192.168.11.4(192.168.11.4:3307) Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] * Phase 3.3: Determining New Master Phase.. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] Searching new master from slaves.. Sat Feb 27 09:57:54 2021 - [info] Candidate masters from the configuration file: Sat Feb 27 09:57:54 2021 - [info] Non-candidate masters: Sat Feb 27 09:57:54 2021 - [info] New master is 192.168.11.5(192.168.11.5:3307) Sat Feb 27 09:57:54 2021 - [info] Starting master failover.. Sat Feb 27 09:57:54 2021 - [info] From: 192.168.11.4(192.168.11.4:3307) (current master) +--192.168.11.5(192.168.11.5:3307) +--192.168.11.6(192.168.11.6:3307) To: 192.168.11.5(192.168.11.5:3307) (new master) +--192.168.11.6(192.168.11.6:3307) Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] * Phase 3.3: New Master Recovery Phase.. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] Waiting all logs to be applied.. Sat Feb 27 09:57:54 2021 - [info] done. Sat Feb 27 09:57:54 2021 - [info] Getting new master's binlog name and position.. Sat Feb 27 09:57:54 2021 - [info] mysql-bin.000001:2271 Sat Feb 27 09:57:54 2021 - [info] All other slaves should start replication from here. Statement should be: CHANGE MASTER TO MASTER_HOST='192.168.11.5', MASTER_PORT=3307, MASTER_AUTO_POSITION=1, MASTER_USER='rpl', MASTER_PASSWORD='xxx'; Sat Feb 27 09:57:54 2021 - [info] Master Recovery succeeded. File:Pos:Exec_Gtid_Set: mysql-bin.000001, 2271, 3f08024d-764f-11eb-b3ce-000c2900ea19:1-8 Sat Feb 27 09:57:54 2021 - [warning] master_ip_failover_script is not set. Skipping taking over new master IP address. Sat Feb 27 09:57:54 2021 - [info] ** Finished master recovery successfully. Sat Feb 27 09:57:54 2021 - [info] * Phase 3: Master Recovery Phase completed. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] * Phase 4: Slaves Recovery Phase.. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] * Phase 4.1: Starting Slaves in parallel.. Sat Feb 27 09:57:54 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] -- Slave recovery on host 192.168.11.6(192.168.11.6:3307) started, pid: 13473. Check tmp log /var/log/mha/app1/192.168.11.6_3307_20210227095752.log if it takes time.. Sat Feb 27 09:57:56 2021 - [info] Sat Feb 27 09:57:56 2021 - [info] Log messages from 192.168.11.6 ... Sat Feb 27 09:57:56 2021 - [info] Sat Feb 27 09:57:54 2021 - [info] Resetting slave 192.168.11.6(192.168.11.6:3307) and starting replication from the new master 192.168.11.5(192.168.11.5:3307).. Sat Feb 27 09:57:54 2021 - [info] Executed CHANGE MASTER. Sat Feb 27 09:57:56 2021 - [info] Slave started. Sat Feb 27 09:57:56 2021 - [info] gtid_wait(3f08024d-764f-11eb-b3ce-000c2900ea19:1-8) completed on 192.168.11.6(192.168.11.6:3307). Executed 0 events. Sat Feb 27 09:57:56 2021 - [info] End of log messages from 192.168.11.6. Sat Feb 27 09:57:56 2021 - [info] -- Slave on host 192.168.11.6(192.168.11.6:3307) started. Sat Feb 27 09:57:56 2021 - [info] All new slave servers recovered successfully. Sat Feb 27 09:57:56 2021 - [info] Sat Feb 27 09:57:56 2021 - [info] * Phase 5: New master cleanup phase.. Sat Feb 27 09:57:56 2021 - [info] Sat Feb 27 09:57:56 2021 - [info] Resetting slave info on the new master.. Sat Feb 27 09:57:56 2021 - [info] 192.168.11.5: Resetting slave info succeeded. Sat Feb 27 09:57:56 2021 - [info] Master failover to 192.168.11.5(192.168.11.5:3307) completed successfully. Sat Feb 27 09:57:56 2021 - [info] Deleted server1 entry from /etc/mha/app1.cnf . Sat Feb 27 09:57:56 2021 - [info] ----- Failover Report ----- app1: MySQL Master failover 192.168.11.4(192.168.11.4:3307) to 192.168.11.5(192.168.11.5:3307) succeeded Master 192.168.11.4(192.168.11.4:3307) is down! Check MHA Manager logs at node04:/var/log/mha/app1/manager for details. Started automated(non-interactive) failover. Selected 192.168.11.5(192.168.11.5:3307) as a new master. 192.168.11.5(192.168.11.5:3307): OK: Applying all logs succeeded. 192.168.11.6(192.168.11.6:3307): OK: Slave started, replicating from 192.168.11.5(192.168.11.5:3307) 192.168.11.5(192.168.11.5:3307): Resetting slave info succeeded. Master failover to 192.168.11.5(192.168.11.5:3307) completed successfully.

####恢复节点(就是之前down掉的master节点,现在成为slave节点)

Sat Feb 27 10:44:24 2021 - [info] MHA::MasterMonitor version 0.56.

Sat Feb 27 10:44:25 2021 - [info] GTID failover mode = 1

Sat Feb 27 10:44:25 2021 - [info] Dead Servers:

Sat Feb 27 10:44:25 2021 - [info] Alive Servers:

Sat Feb 27 10:44:25 2021 - [info] 192.168.11.4(192.168.11.4:3307)

Sat Feb 27 10:44:25 2021 - [info] 192.168.11.5(192.168.11.5:3307)

Sat Feb 27 10:44:25 2021 - [info] 192.168.11.6(192.168.11.6:3307)

Sat Feb 27 10:44:25 2021 - [info] Alive Slaves:

Sat Feb 27 10:44:25 2021 - [info] 192.168.11.4(192.168.11.4:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled

Sat Feb 27 10:44:25 2021 - [info] GTID ON

Sat Feb 27 10:44:25 2021 - [info] Replicating from 192.168.11.5(192.168.11.5:3307)

Sat Feb 27 10:44:25 2021 - [info] 192.168.11.6(192.168.11.6:3307) Version=5.7.26-log (oldest major version between slaves) log-bin:enabled

Sat Feb 27 10:44:25 2021 - [info] GTID ON

Sat Feb 27 10:44:25 2021 - [info] Replicating from 192.168.11.5(192.168.11.5:3307)

Sat Feb 27 10:44:25 2021 - [info] Current Alive Master: 192.168.11.5(192.168.11.5:3307)

Sat Feb 27 10:44:25 2021 - [info] Checking slave configurations..

Sat Feb 27 10:44:25 2021 - [info] read_only=1 is not set on slave 192.168.11.4(192.168.11.4:3307).

Sat Feb 27 10:44:25 2021 - [info] read_only=1 is not set on slave 192.168.11.6(192.168.11.6:3307).

Sat Feb 27 10:44:25 2021 - [info] Checking replication filtering settings..

Sat Feb 27 10:44:25 2021 - [info] binlog_do_db= , binlog_ignore_db=

Sat Feb 27 10:44:25 2021 - [info] Replication filtering check ok.

Sat Feb 27 10:44:25 2021 - [info] GTID (with auto-pos) is supported. Skipping all SSH and Node package checking.

Sat Feb 27 10:44:25 2021 - [info] Checking SSH publickey authentication settings on the current master..

Sat Feb 27 10:44:25 2021 - [info] HealthCheck: SSH to 192.168.11.5 is reachable.

Sat Feb 27 10:44:25 2021 - [info]

192.168.11.5(192.168.11.5:3307) (current master)

+--192.168.11.4(192.168.11.4:3307)

+--192.168.11.6(192.168.11.6:3307)

Sat Feb 27 10:44:25 2021 - [warning] master_ip_failover_script is not defined.

Sat Feb 27 10:44:25 2021 - [warning] shutdown_script is not defined.

Sat Feb 27 10:44:25 2021 - [info] Set master ping interval 2 seconds.

Sat Feb 27 10:44:25 2021 - [warning] secondary_check_script is not defined. It is highly recommended setting it to check master reachability from two or more routes.

Sat Feb 27 10:44:25 2021 - [info] Starting ping health check on 192.168.11.5(192.168.11.5:3307)..

Sat Feb 27 10:44:25 2021 - [info] Ping(SELECT) succeeded, waiting until MySQL doesn't respond..