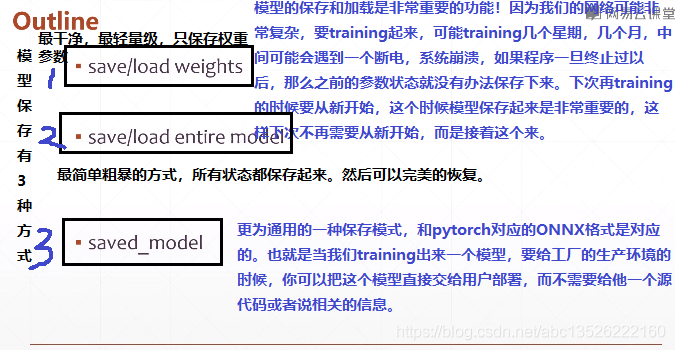

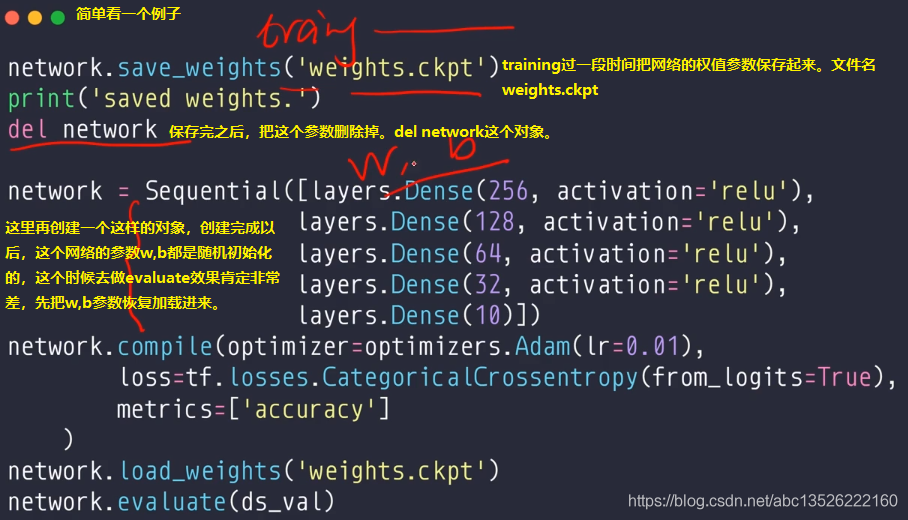

1. Method one: save only the weights

```python
import os

# Set before importing tensorflow so the C++ log filter takes effect.
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf
from tensorflow.keras import datasets, layers, optimizers, Sequential


def preprocess(x, y):
    """x is a single image, not a batch."""
    x = tf.cast(x, dtype=tf.float32) / 255.
    x = tf.reshape(x, [28 * 28])
    y = tf.cast(y, dtype=tf.int32)
    y = tf.one_hot(y, depth=10)
    return x, y


batchsz = 256 * 2
(x, y), (x_val, y_val) = datasets.mnist.load_data()
print('datasets:', x.shape, y.shape, x.min(), x.max())

db = tf.data.Dataset.from_tensor_slices((x, y))
db = db.map(preprocess).shuffle(60000).batch(batchsz)
ds_val = tf.data.Dataset.from_tensor_slices((x_val, y_val))
ds_val = ds_val.map(preprocess).batch(batchsz)

sample = next(iter(db))
print(sample[0].shape, sample[1].shape)

network = Sequential([layers.Dense(256, activation='relu'),
                      layers.Dense(128, activation='relu'),
                      layers.Dense(64, activation='relu'),
                      layers.Dense(32, activation='relu'),
                      layers.Dense(10)])
network.build(input_shape=(None, 28 * 28))
network.summary()

# `learning_rate` replaces the deprecated `lr` argument.
network.compile(optimizer=optimizers.Adam(learning_rate=0.01),
                loss=tf.losses.CategoricalCrossentropy(from_logits=True),
                metrics=['accuracy'])

network.fit(db, epochs=4, validation_data=ds_val, validation_freq=2)
network.evaluate(ds_val)

# Only the parameters are written, not the architecture.
# Note: Keras 3 expects a file name ending in '.weights.h5' here.
network.save_weights('weight.ckpt')
print('saved weights')
del network

# The network must be created exactly as it was above.
network = Sequential([layers.Dense(256, activation='relu'),
                      layers.Dense(128, activation='relu'),
                      layers.Dense(64, activation='relu'),
                      layers.Dense(32, activation='relu'),
                      layers.Dense(10)])
network.build(input_shape=(None, 28 * 28))
network.compile(optimizer=optimizers.Adam(learning_rate=0.01),
                loss=tf.losses.CategoricalCrossentropy(from_logits=True),
                metrics=['accuracy'])
network.load_weights('weight.ckpt')
print('loaded weights!')
network.evaluate(ds_val)
```

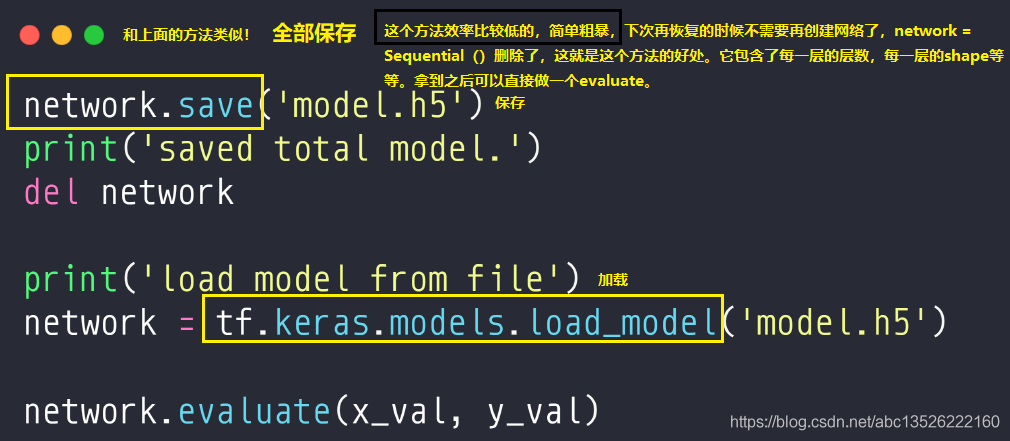
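To see the weights-only round trip without downloading MNIST, here is a minimal sketch on a toy network. The layer sizes, the `make_network` helper, and the `toy.weights.h5` file name are all assumptions made for illustration. The `.weights.h5` suffix was picked because it should be accepted by both TF 2.x Keras and Keras 3. The point is the same as above: the file holds only parameters, so the architecture has to be rebuilt identically before loading.

```python
import os
import tempfile

import numpy as np
from tensorflow.keras import layers, Sequential


def make_network():
    """Hypothetical toy architecture; it must be identical when saving and loading."""
    net = Sequential([layers.Dense(8, activation='relu'), layers.Dense(3)])
    net.build(input_shape=(None, 4))  # create the variables up front
    return net


x = np.random.rand(5, 4).astype('float32')

network = make_network()
before = network.predict(x, verbose=0)

# Save only the parameters into a temporary directory.
path = os.path.join(tempfile.mkdtemp(), 'toy.weights.h5')
network.save_weights(path)
del network

# A freshly built network starts with new random weights;
# load_weights overwrites them with the saved values.
restored = make_network()
restored.load_weights(path)
after = restored.predict(x, verbose=0)

weights_match = np.allclose(before, after, atol=1e-6)
print('outputs match after reload:', weights_match)
```

If `make_network` built a different stack of layers on the loading side, the saved arrays would no longer line up with the network's variables, which is why the construction code has to be repeated verbatim.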

2. Method two: save the entire model

```python
import os

# Set before importing tensorflow so the C++ log filter takes effect.
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf
from tensorflow.keras import datasets, layers, optimizers, Sequential


def preprocess(x, y):
    """x is a single image, not a batch."""
    x = tf.cast(x, dtype=tf.float32) / 255.
    x = tf.reshape(x, [28 * 28])
    y = tf.cast(y, dtype=tf.int32)
    y = tf.one_hot(y, depth=10)
    return x, y


batchsz = 128
(x, y), (x_val, y_val) = datasets.mnist.load_data()
print('datasets:', x.shape, y.shape, x.min(), x.max())

db = tf.data.Dataset.from_tensor_slices((x, y))
db = db.map(preprocess).shuffle(60000).batch(batchsz)
ds_val = tf.data.Dataset.from_tensor_slices((x_val, y_val))
ds_val = ds_val.map(preprocess).batch(batchsz)

sample = next(iter(db))
print(sample[0].shape, sample[1].shape)

network = Sequential([layers.Dense(256, activation='relu'),
                      layers.Dense(128, activation='relu'),
                      layers.Dense(64, activation='relu'),
                      layers.Dense(32, activation='relu'),
                      layers.Dense(10)])
network.build(input_shape=(None, 28 * 28))
network.summary()

# `learning_rate` replaces the deprecated `lr` argument.
network.compile(optimizer=optimizers.Adam(learning_rate=0.01),
                loss=tf.losses.CategoricalCrossentropy(from_logits=True),
                metrics=['accuracy'])

network.fit(db, epochs=3, validation_data=ds_val, validation_freq=2)
network.evaluate(ds_val)

# Save architecture and weights together in one HDF5 file.
os.makedirs('./savemodel', exist_ok=True)  # make sure the target directory exists
network.save('./savemodel/model.h5')
print('saved total model.')
del network

print('load model from file')
# load_model restores the architecture too, so the network is not rebuilt by hand.
network = tf.keras.models.load_model('./savemodel/model.h5')
network.compile(optimizer=optimizers.Adam(learning_rate=0.01),
                loss=tf.losses.CategoricalCrossentropy(from_logits=True),
                metrics=['accuracy'])

# Preprocess the whole validation set in bulk (same steps as preprocess).
x_val = tf.cast(x_val, dtype=tf.float32) / 255.
x_val = tf.reshape(x_val, [-1, 28 * 28])
y_val = tf.cast(y_val, dtype=tf.int32)
y_val = tf.one_hot(y_val, depth=10)
ds_val = tf.data.Dataset.from_tensor_slices((x_val, y_val)).batch(128)
network.evaluate(ds_val)
```
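The difference from the weights-only approach can be checked on a toy network as well. The sketch below is only an illustration: the layer sizes and the `toy_model.h5` file name are made up, and `compile=False` is passed to `load_model` because the toy network is never compiled. Unlike the weights-only case, no layer definitions are repeated on the loading side.

```python
import os
import tempfile

import numpy as np
import tensorflow as tf
from tensorflow.keras import layers, Sequential

x = np.random.rand(5, 4).astype('float32')

# Hypothetical toy network standing in for the MNIST classifier above.
network = Sequential([layers.Dense(8, activation='relu'), layers.Dense(3)])
network.build(input_shape=(None, 4))
before = network.predict(x, verbose=0)

# Architecture and weights go into a single HDF5 file.
path = os.path.join(tempfile.mkdtemp(), 'toy_model.h5')
network.save(path)
del network

# No Sequential([...]) call here: the file itself describes the layers.
restored = tf.keras.models.load_model(path, compile=False)
after = restored.predict(x, verbose=0)

model_match = np.allclose(before, after, atol=1e-6)
print('outputs match after reload:', model_match)
```

Recent Keras versions may print a warning that HDF5 is a legacy format; the round trip itself should still behave as shown.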

3. Method three: a more general format (loadable from other languages as well)