Logistic Regression Framework 2

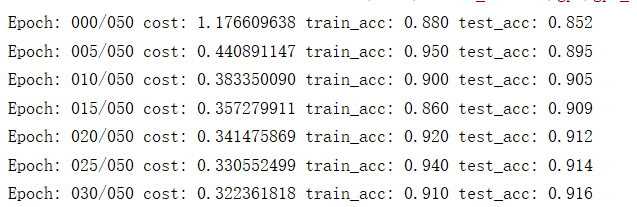

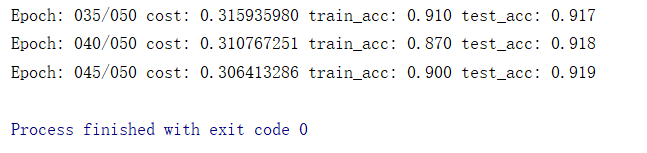
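For reference, the model and loss that the TensorFlow code builds can be written as follows. Here $\mathbf{x}$ is one 784-pixel image as a row vector, $W$ is $784\times 10$, $\mathbf{b}$ has 10 entries, $\mathbf{y}$ is the one-hot label, $N$ is the batch size and $\eta$ is `learning_rate`. The gradient lines are the standard result for softmax cross-entropy. They are added here for illustration and show what `GradientDescentOptimizer` computes for this model; they are not taken from the original notes.

$$
\hat{\mathbf{y}} = \operatorname{softmax}(\mathbf{x}W + \mathbf{b}), \qquad
\operatorname{softmax}(\mathbf{z})_k = \frac{e^{z_k}}{\sum_{j=1}^{10} e^{z_j}}
$$

$$
J(W, \mathbf{b}) = -\frac{1}{N}\sum_{n=1}^{N}\sum_{k=1}^{10} y_{nk}\,\log \hat{y}_{nk}
$$

$$
\nabla_W J = \frac{1}{N} X^{\top}(\hat{Y} - Y), \qquad
\nabla_{\mathbf{b}} J = \frac{1}{N}\sum_{n=1}^{N}(\hat{\mathbf{y}}_n - \mathbf{y}_n), \qquad
W \leftarrow W - \eta\,\nabla_W J
$$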

```python
import numpy as np
import matplotlib.pyplot as plt
import tensorflow.compat.v1 as tf  # TF1-style graph API (placeholders, Sessions)
import input_data                  # legacy MNIST loader from the TF1 tutorials (local input_data.py)

tf.disable_v2_behavior()  # placeholders and Sessions require graph mode under TF 2.x

# one_hot=True: each label is a 10-dim 0/1 vector with a single 1
mnist = input_data.read_data_sets('data/', one_hot=True)
trainimg = mnist.train.images
trainlabel = mnist.train.labels
testimg = mnist.test.images
testlabel = mnist.test.labels
print(trainimg.shape)
print(trainlabel.shape)
print(testimg.shape)
print(testlabel.shape)
print(trainlabel[0])

# Model inputs and parameters
x = tf.placeholder("float", [None, 784])  # number of samples not known in advance, so None
y = tf.placeholder("float", [None, 10])
W = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))

# Softmax regression and mean cross-entropy loss.
# Note: log(actv) gives NaN if a probability underflows to 0;
# tf.nn.softmax_cross_entropy_with_logits is the numerically stable alternative.
actv = tf.nn.softmax(tf.matmul(x, W) + b)
cost = tf.reduce_mean(-tf.reduce_sum(y * tf.log(actv), axis=1))

learning_rate = 0.01
optm = tf.train.GradientDescentOptimizer(learning_rate).minimize(cost)

# Evaluation: does the predicted class index match the true class index?
pred = tf.equal(tf.argmax(actv, 1), tf.argmax(y, 1))
accr = tf.reduce_mean(tf.cast(pred, "float"))

init = tf.global_variables_initializer()

training_epochs = 50  # 50 passes over the training set
batch_size = 100      # 100 samples per mini-batch
display_step = 5

sess = tf.Session()
sess.run(init)
for epoch in range(training_epochs):
    avg_cost = 0
    num_batch = int(mnist.train.num_examples / batch_size)
    for i in range(num_batch):
        batch_xs, batch_ys = mnist.train.next_batch(batch_size)
        feeds = {x: batch_xs, y: batch_ys}
        sess.run(optm, feed_dict=feeds)
        avg_cost += sess.run(cost, feed_dict=feeds) / num_batch
    if epoch % display_step == 0:  # report every 5 epochs
        feeds_train = {x: batch_xs, y: batch_ys}  # accuracy on the last mini-batch only
        feeds_test = {x: testimg, y: testlabel}
        train_acc = sess.run(accr, feed_dict=feeds_train)
        test_acc = sess.run(accr, feed_dict=feeds_test)
        print("Epoch: %03d/%03d cost: %.9f train_acc: %.3f test_acc: %.3f"
              % (epoch, training_epochs, avg_cost, train_acc, test_acc))
```
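The sketch below uses NumPy only and makes the TensorFlow ops above concrete. It reproduces `actv`, `cost`, `accr` and the gradient-descent update step by step. It is a minimal illustration under stated assumptions. `X` and `Y` are a hypothetical toy batch (4 samples, 3 features, 2 classes), not MNIST data. `learning_rate = 0.5` and the 200 steps are hypothetical choices that suit this tiny problem. The `eps` guard inside the log is an addition that the original code does not have. The hand-written gradient `(P - Y) / N` is the textbook gradient of mean softmax cross-entropy with respect to the logits. It stands in for what `GradientDescentOptimizer` derives automatically and is not TensorFlow's internal implementation.

```python
import numpy as np

def softmax(z):
    # Shift each row by its max before exponentiating; softmax is
    # shift-invariant, so this only prevents overflow.
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def cross_entropy(y, p, eps=1e-12):
    # Mean over samples of -sum_k y_k * log(p_k), like the TF `cost` op.
    # eps is an assumption (not in the original) that keeps log() away from 0.
    return float(np.mean(-np.sum(y * np.log(p + eps), axis=1)))

def accuracy(y, p):
    # Share of rows whose predicted class index equals the true one,
    # like the TF `pred` / `accr` ops.
    return float(np.mean(np.argmax(p, axis=1) == np.argmax(y, axis=1)))

# Hypothetical toy batch: 4 samples, 3 features, 2 classes (not MNIST).
X = np.array([[1.0, 0.0, 0.5],
              [0.0, 1.0, -0.5],
              [0.2, 0.9, 0.0],
              [0.8, 0.1, 0.3]])
Y = np.eye(2)[[0, 1, 1, 0]]  # one-hot labels, as with one_hot=True

W = np.zeros((3, 2))         # zero initialization, as in the TF code
b = np.zeros(2)
learning_rate = 0.5          # hypothetical value for this toy problem

initial_cost = cross_entropy(Y, softmax(X @ W + b))

for step in range(200):
    P = softmax(X @ W + b)
    # Gradient of the mean cross-entropy w.r.t. the logits is (P - Y) / N;
    # the chain rule through logits = X @ W + b gives the W and b updates.
    grad_logits = (P - Y) / X.shape[0]
    W -= learning_rate * X.T @ grad_logits
    b -= learning_rate * grad_logits.sum(axis=0)

P = softmax(X @ W + b)
final_cost = cross_entropy(Y, P)
final_acc = accuracy(Y, P)
print(f"initial cost {initial_cost:.4f}, final cost {final_cost:.4f}, accuracy {final_acc:.2f}")
```

With all-zero weights, every class gets the same probability. The starting cost is therefore log 2 ≈ 0.693 for this 2-class toy. The 10-class MNIST model starts at log 10 ≈ 2.303 for the same reason.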