1 Environment deployment

1.1 Hardware information

| Role | Hostname | IP address |
| --- | --- | --- |
| lb | k8s-master-lb | 192.168.2.99 |
| master/node | k8s-master01/k8s-node01/k8s-node02 | 192.168.2.100~102 |
| nfs | k8s-node01 | 192.168.2.101 |

1.2 Pre-installation preparation

1.2.1 Configure the time zone

# ln -sf /usr/share/zoneinfo/UTC /etc/localtime

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' >/etc/timezone

ntpdate time.windows.com

1.2.2 Install common tools

yum -y install vim tree wget lrzsz

1.2.3 Configure the login timeout and history display format

cat << 'EOF' >> /etc/profile

###########################

export TMOUT=1800

export PS1='\[\e[32;1m\][\u@\h \W]\$ \[\e[0m\]'

export HISTTIMEFORMAT="`whoami`_%F %T : "

alias grep='grep --color=auto'

EOF

1.2.4 Configure yum repositories

mkdir /etc/yum.repos.d/bak

cp /etc/yum.repos.d/*.repo /etc/yum.repos.d/bak/

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

# Remove any previously installed Docker packages

yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-selinux \
    docker-engine-selinux \
    docker-engine

# Install the prerequisites for docker-ce

yum install -y yum-utils device-mapper-persistent-data lvm2

# Add the official docker-ce repository

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

# Or add the Aliyun docker-ce mirror instead

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum makecache

yum update -y

1.2.5 Set SELinux to permissive mode

vi /etc/selinux/config

SELINUX=permissive

setenforce 0

1.2.6 Configure iptables-related kernel parameters

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

sysctl --system

1.2.7 Disable swap

swapoff -a

# Comment out the swap entry in fstab

vi /etc/fstab

#/dev/mapper/centos-swap swap swap defaults 0 0

# Confirm that swap is disabled

cat /proc/swaps

Filename Type Size Used Priority
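The manual fstab edit above can also be scripted. The following is a minimal sketch, assuming a sed that supports -E; the function name disable_swap_entries is hypothetical. It comments out every uncommented line whose third field (the filesystem type) is swap.

```shell
#!/bin/sh
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# $1: file to edit (defaults to /etc/fstab).
disable_swap_entries() {
    fstab="${1:-/etc/fstab}"
    # An active entry starts with a non-comment device field and has "swap"
    # as its third field; prefix such lines with '#'.
    sed -E 's/^([^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/#\1/' \
        "$fstab" > "$fstab.tmp" && mv "$fstab.tmp" "$fstab"
}

# Example (as root, after taking a backup):
#   cp /etc/fstab /etc/fstab.bak && disable_swap_entries /etc/fstab
```

Running it a second time leaves the file unchanged, because already commented lines no longer match.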

1.2.8 Configure limits

ulimit -SHn 65535

1.2.9 Configure the hosts file

cat << EOF >> /etc/hosts

192.168.2.99 k8s-master-lb

192.168.2.100 k8s-master01

192.168.2.101 k8s-node01

192.168.2.102 k8s-node02

EOF

[root@k8s-master01 ~]# more /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.2.99 k8s-master-lb

192.168.2.100 k8s-master01

192.168.2.101 k8s-node01

192.168.2.102 k8s-node02

1.2.10 Load the ipvs modules on all nodes

modprobe ip_vs

modprobe ip_vs_rr

modprobe ip_vs_wrr

modprobe ip_vs_sh

modprobe nf_conntrack_ipv4
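Modules loaded with modprobe do not survive a reboot, and the next step reboots the host. On systemd-based systems such as CentOS 7, files under /etc/modules-load.d/ are read at boot to load the listed modules. The sketch below writes such a file; the function name and the file name ipvs.conf are assumptions, not part of the original procedure.

```shell
#!/bin/sh
# Hypothetical helper: write the ipvs module list to a modules-load.d style file.
# $1: target file (defaults to /etc/modules-load.d/ipvs.conf, an assumed name).
write_ipvs_modules_conf() {
    conf="${1:-/etc/modules-load.d/ipvs.conf}"
    # One module name per line, the same modules as the modprobe commands above.
    printf '%s\n' ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4 > "$conf"
}

# Example (as root): write_ipvs_modules_conf
# After the reboot, `lsmod | grep ip_vs` can be used to check the result.
```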

1.2.11 Reboot the host

reboot

1.3 Installing Kubernetes

1.3.1 firewalld and iptables port settings

systemctl enable firewalld

systemctl restart firewalld

systemctl status firewalld

| Protocol | Direction | Port | Description |
| --- | --- | --- | --- |
| TCP | Inbound | 16443* | Load balancer Kubernetes API server port |
| TCP | Inbound | 6443* | Kubernetes API server |
| TCP | Inbound | 4001 | etcd listen client port |
| TCP | Inbound | 2379-2380 | etcd server client API |
| TCP | Inbound | 10250 | Kubelet API |
| TCP | Inbound | 10251 | kube-scheduler |
| TCP | Inbound | 10252 | kube-controller-manager |
| TCP | Inbound | 10255 | Read-only Kubelet API (Deprecated) |
| TCP | Inbound | 30000-32767 | NodePort Services |

firewall-cmd --zone=public --add-port=16443/tcp --permanent

firewall-cmd --zone=public --add-port=6443/tcp --permanent

firewall-cmd --zone=public --add-port=4001/tcp --permanent

firewall-cmd --zone=public --add-port=2379-2380/tcp --permanent

firewall-cmd --zone=public --add-port=10250/tcp --permanent

firewall-cmd --zone=public --add-port=10251/tcp --permanent

firewall-cmd --zone=public --add-port=10252/tcp --permanent

firewall-cmd --zone=public --add-port=30000-32767/tcp --permanent

firewall-cmd --reload

firewall-cmd --list-all --zone=public

[root@k8s-master01 ~]# firewall-cmd --list-all --zone=public

public (active)

target: default

icmp-block-inversion: no

interfaces: ens160

sources:

services: ssh dhcpv6-client

ports: 16443/tcp 6443/tcp 4001/tcp 2379-2380/tcp 10250/tcp 10251/tcp 10252/tcp 30000-32767/tcp

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:
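The repeated firewall-cmd calls above can be generated from a port list. This is a minimal sketch: the function name port_commands is hypothetical, and it only prints the commands so they can be reviewed before anything is applied.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) one firewall-cmd command per TCP port
# or port range. The flags mirror the commands used in this section.
port_commands() {
    for port in "$@"; do
        echo "firewall-cmd --zone=public --add-port=${port}/tcp --permanent"
    done
}

# Review the output first, then pipe it to sh to apply it:
#   port_commands 16443 6443 4001 2379-2380 10250 10251 10252 30000-32767 | sh
#   firewall-cmd --reload
```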

| Protocol | Direction | Port | Description |
| --- | --- | --- | --- |
| TCP | Inbound | 10250 | Kubelet API |
| TCP | Inbound | 30000-32767 | NodePort Services |

firewall-cmd --zone=public --add-port=10250/tcp --permanent

firewall-cmd --zone=public --add-port=30000-32767/tcp --permanent

firewall-cmd --reload

firewall-cmd --list-all --zone=public

[root@k8s-node01 ~]# firewall-cmd --list-all --zone=public

public (active)

target: default

icmp-block-inversion: no

interfaces: ens2f1 ens1f0 nm-bond

sources:

services: ssh dhcpv6-client

ports: 10250/tcp 30000-32767/tcp

protocols:

masquerade: no

forward-ports:

source-ports:

icmp-blocks:

rich rules:

- Allow kube-proxy forwarding on all Kubernetes nodes

firewall-cmd --permanent --direct --add-rule ipv4 filter INPUT 1 -i docker0 -j ACCEPT -m comment --comment "kube-proxy redirects"

firewall-cmd --permanent --direct --add-rule ipv4 filter FORWARD 1 -o docker0 -j ACCEPT -m comment --comment "docker subnet"

firewall-cmd --reload

[root@k8s-master01 ~]# firewall-cmd --direct --get-all-rules

ipv4 filter INPUT 1 -i docker0 -j ACCEPT -m comment --comment 'kube-proxy redirects'

ipv4 filter FORWARD 1 -o docker0 -j ACCEPT -m comment --comment 'docker subnet'

systemctl restart firewalld

- To fix kube-proxy being unable to enable NodePort, the following command must be run after every firewalld restart; schedule it as a cron job on all nodes

[root@k8s-master01 ~]# crontab -l

# Fix kube-proxy being unable to enable NodePort

*/5 * * * * /usr/sbin/iptables -D INPUT -j REJECT --reject-with icmp-host-prohibited

1.3.2 Install docker-ce

# Install docker-ce-selinux-17.03.2.ce first; otherwise installing docker-ce-17.03.2.ce-1.el7.centos fails

yum install https://download.docker.com/linux/centos/7/x86_64/stable/Packages/docker-ce-selinux-17.03.2.ce-1.el7.centos.noarch.rpm -y

# Install a specific docker-ce version (recommended)

yum install -y docker-ce-17.03.2.ce-1.el7.centos

yum list docker-ce --showduplicates | sort -r

systemctl enable docker && systemctl start docker

[root@k8s-master01 ~]# docker version

Client:

Version: 17.03.2-ce

API version: 1.27

Go version: go1.7.5

Git commit: f5ec1e2

Built: Tue Jun 27 02:21:36 2017

OS/Arch: linux/amd64

Server:

Version: 17.03.2-ce

API version: 1.27 (minimum version 1.12)

Go version: go1.7.5

Git commit: f5ec1e2

Built: Tue Jun 27 02:21:36 2017

OS/Arch: linux/amd64

Experimental: false

1.3.3 Install Kubernetes

yum install -y kubelet-1.12.3-0.x86_64 kubeadm-1.12.3-0.x86_64 kubectl-1.12.3-0.x86_64

# Configure kubelet to use a pause image hosted in China

# Configure the kubelet cgroup driver

# Get the cgroup driver used by Docker

DOCKER_CGROUPS=$(docker info | grep 'Cgroup' | cut -d' ' -f3)

echo $DOCKER_CGROUPS

cat >/etc/sysconfig/kubelet<<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=$DOCKER_CGROUPS --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1"

EOF
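The cgroup driver detection above relies on the exact spacing of the docker info output, since cut splits on single spaces. The sketch below is a slightly more tolerant variant that also accepts an indented "Cgroup Driver:" line; the function name parse_cgroup_driver is hypothetical. On Docker versions that support it, `docker info --format '{{.CgroupDriver}}'` is another option.

```shell
#!/bin/sh
# Hypothetical helper: read `docker info` output on stdin and print the value
# of the "Cgroup Driver" line, with or without leading indentation.
parse_cgroup_driver() {
    awk -F': *' '/^[[:space:]]*Cgroup Driver:/ { print $2; exit }'
}

# Example:
#   DOCKER_CGROUPS=$(docker info 2>/dev/null | parse_cgroup_driver)
#   echo "$DOCKER_CGROUPS"
```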

systemctl daemon-reload

systemctl enable kubelet && systemctl start kubelet

1.3.4 Install and start keepalived and docker-compose on all master nodes

yum install -y keepalived docker-compose

systemctl enable keepalived && systemctl restart keepalived

1.3.5 Set up SSH trust between all master nodes

[root@k8s-master01 ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:kj9fwIGc8OEgkjmbYN4xn5QJIfzbCpdhAHSNdYedSQo root@k8s-master01

The key's randomart image is:

+---[RSA 2048]----+

|=o.*BE=.=oo |

|.+*= *oBoB |

|o.++= ..* . |

| .o= o . . . |

| . = o S o |

| . + . o . |

| o . o . |

| . o . |

| . |

+----[SHA256]-----+

You have new mail in /var/spool/mail/root

[root@k8s-master01 ~]# for i in k8s-master01 k8s-node01 k8s-node02;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"

The authenticity of host 'k8s-master01 (192.168.2.100)' can't be established.

ECDSA key fingerprint is SHA256:6ql+t72p2Y0as39Vva8wxF/LFCF7YihQI3frNk3Y7Ns.

ECDSA key fingerprint is MD5:8c:0b:2f:85:e1:e8:49:c9:a7:82:a7:c6:33:dc:b2:02.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-master01's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'k8s-master01'"

and check to make sure that only the key(s) you wanted were added.

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"

The authenticity of host 'k8s-node01 (192.168.2.101)' can't be established.

ECDSA key fingerprint is SHA256:u7n4RdHb4VQUuR6+EzanduelF4fQHYEg+i84qUU7HMM.

ECDSA key fingerprint is MD5:17:a8:af:1d:d0:54:6a:f6:cf:8c:08:0c:c0:39:41:55.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-node01's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'k8s-node01'"

and check to make sure that only the key(s) you wanted were added.

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"

The authenticity of host 'k8s-node02 (192.168.2.102)' can't be established.

ECDSA key fingerprint is SHA256:qWdrqlPd4GRmWQ2uOemQWrXrlOJE+R/lbMK5wif3wiQ.

ECDSA key fingerprint is MD5:15:b4:ff:5e:e8:4e:f3:c2:ac:07:13:bd:b3:35:90:96.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-node02's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'k8s-node02'"

and check to make sure that only the key(s) you wanted were added.

1.4 Basic configuration for the high-availability installation

1.4.1 Run the following on master01

yum install git -y

git clone https://github.com/xiaoqshuo/k8-ha-install.git

- Edit the configuration to match your environment; change nm-bond to the name of the server's network interface

[root@k8s-master01 k8-ha-install]# more create-config.sh

#!/bin/bash

#######################################

# set variables below to create the config files, all files will create at ./config directory

#######################################

# master keepalived virtual ip address

export K8SHA_VIP=192.168.2.99

# master01 ip address

export K8SHA_IP1=192.168.2.100

# node01 ip address

export K8SHA_IP2=192.168.2.101

# node02 ip address

export K8SHA_IP3=192.168.2.102

# master keepalived virtual ip hostname

export K8SHA_VHOST=k8s-master-lb

# master01 hostname

export K8SHA_HOST1=k8s-master01

# node01 hostname

export K8SHA_HOST2=k8s-node01

# node02 hostname

export K8SHA_HOST3=k8s-node02

# master01 network interface name

export K8SHA_NETINF1=ens160

# master02 network interface name

export K8SHA_NETINF2=ens160

# master03 network interface name

export K8SHA_NETINF3=ens160

# keepalived auth_pass config

export K8SHA_KEEPALIVED_AUTH=412f7dc3bfed32194d1600c483e10ad1d

# calico reachable ip address

export K8SHA_CALICO_REACHABLE_IP=192.168.2.1

# kubernetes CIDR pod subnet, if CIDR pod subnet is "172.168.0.0/16" please set to "172.168.0.0"

export K8SHA_CIDR=172.168.0.0
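Each variable in create-config.sh should be exported exactly once, because a repeated name silently overrides the earlier value and leaves another variable unset. The following sketch lists any variable that is exported more than once; the function name duplicate_exports is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print every "export NAME" that occurs more than once
# at the start of a line in the given script.
duplicate_exports() {
    grep -oE '^export [A-Za-z_][A-Za-z0-9_]*' "$1" | sort | uniq -d
}

# Example:
#   duplicate_exports create-config.sh
# Empty output means no variable is assigned twice.
```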

- Generate the kubeadm, keepalived, nginx load balancer, and Calico configuration files for the three master nodes

[root@k8s-master01 k8-ha-install]# ./create-config.sh

create kubeadm-config.yaml files success. config/k8s-master01/kubeadm-config.yaml

create kubeadm-config.yaml files success. config/k8s-node01/kubeadm-config.yaml

create kubeadm-config.yaml files success. config/k8s-node02/kubeadm-config.yaml

create keepalived files success. config/k8s-master01/keepalived/

create keepalived files success. config/k8s-node01/keepalived/

create keepalived files success. config/k8s-node02/keepalived/

create nginx-lb files success. config/k8s-master01/nginx-lb/

create nginx-lb files success. config/k8s-node01/nginx-lb/

create nginx-lb files success. config/k8s-node02/nginx-lb/

create calico.yaml file success. calico/calico.yaml

cat >> /etc/profile << EOF

# Set the hostname variables

export HOST1=k8s-master01

export HOST2=k8s-node01

export HOST3=k8s-node02

EOF

source /etc/profile

- Copy the kubeadm configuration file to /root/ on each master node

scp -r config/$HOST1/kubeadm-config.yaml $HOST1:/root/

scp -r config/$HOST2/kubeadm-config.yaml $HOST2:/root/

scp -r config/$HOST3/kubeadm-config.yaml $HOST3:/root/

- Copy the keepalived configuration files to /etc/keepalived/ on each master node

scp -r config/$HOST1/keepalived/* $HOST1:/etc/keepalived/

scp -r config/$HOST2/keepalived/* $HOST2:/etc/keepalived/

scp -r config/$HOST3/keepalived/* $HOST3:/etc/keepalived/

- Copy the nginx load balancer configuration to /root/ on each master node

scp -r config/$HOST1/nginx-lb $HOST1:/root/

scp -r config/$HOST2/nginx-lb $HOST2:/root/

scp -r config/$HOST3/nginx-lb $HOST3:/root/

1.4.2 Run the following on all master nodes

# Edit the nginx configuration file under nginx-lb

proxy_connect_timeout 60s;proxy_timeout 10m;

# Start nginx-lb

docker-compose --file=/root/nginx-lb/docker-compose.yaml up -d

# Check the container status

docker-compose --file=/root/nginx-lb/docker-compose.yaml ps

[root@k8s-master01 ~]# more /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

# Health check commented out for now

#vrrp_script chk_apiserver {

# script "/etc/keepalived/check_apiserver.sh"

# interval 2

# weight -5

# fall 3

# rise 2

#}

vrrp_instance VI_1 {

state MASTER

interface ens160

mcast_src_ip 192.168.2.100

virtual_router_id 51

priority 102

advert_int 2

authentication {

auth_type PASS

auth_pass 412f7dc3bfed32194d1600c483e10ad1d

}

virtual_ipaddress {

192.168.2.99

}

track_script {

chk_apiserver

}

}

- Restart keepalived and confirm that the virtual IP responds to ping

systemctl restart keepalived

[root@k8s-master01 ~]# ping 192.168.2.99

PING 192.168.2.99 (192.168.2.99) 56(84) bytes of data.

64 bytes from 192.168.2.99: icmp_seq=1 ttl=64 time=0.035 ms

64 bytes from 192.168.2.99: icmp_seq=2 ttl=64 time=0.032 ms

64 bytes from 192.168.2.99: icmp_seq=3 ttl=64 time=0.030 ms

64 bytes from 192.168.2.99: icmp_seq=4 ttl=64 time=0.032 ms

64 bytes from 192.168.2.99: icmp_seq=5 ttl=64 time=0.037 ms

64 bytes from 192.168.2.99: icmp_seq=6 ttl=64 time=0.032 ms
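A successful ping shows that the virtual IP is reachable, but not which master currently holds it. The sketch below checks whether a given address is configured locally by parsing `ip -o -4 addr show` output; the function name holds_vip is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the given IPv4 address appears as a
# configured address in `ip -o -4 addr show` output supplied on stdin.
holds_vip() {
    awk -v vip="$1" '
        # Field 4 is "address/prefix"; compare only the address part.
        { split($4, a, "/"); if (a[1] == vip) found = 1 }
        END { exit found ? 0 : 1 }
    '
}

# Example, run on each master:
#   ip -o -4 addr show | holds_vip 192.168.2.99 && echo "VIP is on $(hostname)"
```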

- Edit kubeadm-config.yaml. Note: imageRepository, controllerManagerExtraArgs, and api are added on top of the generated file; advertiseAddress is the local IP of each master

[root@k8s-master01 ~]# more kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1alpha2
kind: MasterConfiguration
kubernetesVersion: v1.12.3
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
apiServerCertSANs:
- k8s-master01
- k8s-node01
- k8s-node02
- k8s-master-lb
- 192.168.2.100
- 192.168.2.101
- 192.168.2.102
- 192.168.2.99
api:
  advertiseAddress: 192.168.2.100
  controlPlaneEndpoint: 192.168.2.99:16443
etcd:
  local:
    extraArgs:
      listen-client-urls: "https://127.0.0.1:2379,https://192.168.2.100:2379"
      advertise-client-urls: "https://192.168.2.100:2379"
      listen-peer-urls: "https://192.168.2.100:2380"
      initial-advertise-peer-urls: "https://192.168.2.100:2380"
      initial-cluster: "k8s-master01=https://192.168.2.100:2380"
    serverCertSANs:
      - k8s-master01
      - 192.168.2.100
    peerCertSANs:
      - k8s-master01
      - 192.168.2.100
controllerManagerExtraArgs:
  node-monitor-grace-period: 10s
  pod-eviction-timeout: 10s
networking:
  # This CIDR is a Calico default. Substitute or remove for your CNI provider.
  podSubnet: "172.168.0.0/16"

1.4.3 Run the following on master01

kubeadm config images pull --config /root/kubeadm-config.yaml

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.12.3 k8s.gcr.io/kube-apiserver:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.12.3 k8s.gcr.io/kube-controller-manager:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.12.3 k8s.gcr.io/kube-scheduler:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.12.3 k8s.gcr.io/kube-proxy:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.2.2 k8s.gcr.io/coredns:1.2.2

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1 k8s.gcr.io/pause-amd64:3.1
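The retagging commands above all follow the same pattern, so they can be generated from an image list. This is a minimal sketch: the function name retag_commands and the variable SRC are hypothetical, and the function only prints the docker tag commands for review.

```shell
#!/bin/sh
# Mirror registry used in this guide.
SRC=registry.cn-hangzhou.aliyuncs.com/google_containers

# Hypothetical helper: print one `docker tag` command per image:tag argument,
# mapping the mirror name to the k8s.gcr.io name.
retag_commands() {
    for image in "$@"; do
        echo "docker tag ${SRC}/${image} k8s.gcr.io/${image}"
    done
}

# Review the output first, then pipe it to sh to apply it:
#   retag_commands kube-apiserver:v1.12.3 kube-controller-manager:v1.12.3 \
#       kube-scheduler:v1.12.3 kube-proxy:v1.12.3 pause:3.1 etcd:3.2.24 \
#       coredns:1.2.2 pause-amd64:3.1 | sh
```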

kubeadm init --config /root/kubeadm-config.yaml

[root@k8s-master01 ~]# kubeadm init --config /root/kubeadm-config.yaml

[init] using Kubernetes version: v1.12.3

[preflight] running pre-flight checks

[WARNING Firewalld]: firewalld is active, please ensure ports [6443 10250] are open or your cluster may not function correctly

[preflight/images] Pulling images required for setting up a Kubernetes cluster

[preflight/images] This might take a minute or two, depending on the speed of your internet connection

[preflight/images] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[preflight] Activating the kubelet service

[certificates] Generated etcd/ca certificate and key.

[certificates] Generated etcd/server certificate and key.

[certificates] etcd/server serving cert is signed for DNS names [k8s-master01 localhost k8s-master01] and IPs [127.0.0.1 ::1 192.168.2.100]

[certificates] Generated etcd/peer certificate and key.

[certificates] etcd/peer serving cert is signed for DNS names [k8s-master01 localhost k8s-master01] and IPs [192.168.2.100 127.0.0.1 ::1 192.168.2.100]

[certificates] Generated etcd/healthcheck-client certificate and key.

[certificates] Generated apiserver-etcd-client certificate and key.

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [k8s-master01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local k8s-master01 k8s-node01 k8s-node02 k8s-master-lb] and IPs [10.96.0.1 192.168.2.100 192.168.2.99 192.168.2.100 192.168.2.101 192.168.2.102 192.168.2.99]

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] valid certificates and keys now exist in "/etc/kubernetes/pki"

[certificates] Generated sa key and public key.

[endpoint] WARNING: port specified in controlPlaneEndpoint overrides bindPort in the controlplane address

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/scheduler.conf"

[controlplane] wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests"

[init] this might take a minute or longer if the control plane images have to be pulled

[apiclient] All control plane components are healthy after 32.519847 seconds

[uploadconfig] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.12" in namespace kube-system with the configuration for the kubelets in the cluster

[markmaster] Marking the node k8s-master01 as master by adding the label "node-role.kubernetes.io/master=''"

[markmaster] Marking the node k8s-master01 as master by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "k8s-master01" as an annotation

[bootstraptoken] using token: 1s1g4k.rsnrw7t1njkjz6zk

[bootstraptoken] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[endpoint] WARNING: port specified in controlPlaneEndpoint overrides bindPort in the controlplane address

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join 192.168.2.99:16443 --token 1s1g4k.rsnrw7t1njkjz6zk --discovery-token-ca-cert-hash sha256:357d5c7da9a3dc6edd8edda2dded629913fd601151a571fb020ae5f8cbf19224

1.4.4 Configure environment variables on all master nodes

cat <<EOF >> ~/.bashrc

export KUBECONFIG=/etc/kubernetes/admin.conf

EOF

source ~/.bashrc

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady master 3m35s v1.12.3

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

coredns-6c66ffc55b-8rlpp 0/1 Pending 0 3m36s <none> <none> <none>

coredns-6c66ffc55b-qdvfg 0/1 Pending 0 3m36s <none> <none> <none>

etcd-k8s-master01 1/1 Running 0 3m4s 192.168.2.100 k8s-master01 <none>

kube-apiserver-k8s-master01 1/1 Running 0 2m52s 192.168.2.100 k8s-master01 <none>

kube-controller-manager-k8s-master01 1/1 Running 0 3m6s 192.168.2.100 k8s-master01 <none>

kube-proxy-jsnhl 1/1 Running 0 3m36s 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-master01 1/1 Running 0 3m1s 192.168.2.100 k8s-master01 <none>

- Note: the network plugin is not installed yet, so coredns remains Pending

1.5 High-availability configuration

1.5.1 Run the following on master01

USER=root

CONTROL_PLANE_IPS="k8s-node01 k8s-node02"

for host in $CONTROL_PLANE_IPS; do

ssh "${USER}"@$host "mkdir -p /etc/kubernetes/pki/etcd"

scp /etc/kubernetes/pki/ca.crt "${USER}"@$host:/etc/kubernetes/pki/ca.crt

scp /etc/kubernetes/pki/ca.key "${USER}"@$host:/etc/kubernetes/pki/ca.key

scp /etc/kubernetes/pki/sa.key "${USER}"@$host:/etc/kubernetes/pki/sa.key

scp /etc/kubernetes/pki/sa.pub "${USER}"@$host:/etc/kubernetes/pki/sa.pub

scp /etc/kubernetes/pki/front-proxy-ca.crt "${USER}"@$host:/etc/kubernetes/pki/front-proxy-ca.crt

scp /etc/kubernetes/pki/front-proxy-ca.key "${USER}"@$host:/etc/kubernetes/pki/front-proxy-ca.key

scp /etc/kubernetes/pki/etcd/ca.crt "${USER}"@$host:/etc/kubernetes/pki/etcd/ca.crt

scp /etc/kubernetes/pki/etcd/ca.key "${USER}"@$host:/etc/kubernetes/pki/etcd/ca.key

scp /etc/kubernetes/admin.conf "${USER}"@$host:/etc/kubernetes/admin.conf

done

1.5.2 Run the following on master02 (node01)

- Join node01 to the cluster

- Edit kubeadm-config.yaml as follows

[root@k8s-node01 ~]# more kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1alpha2
kind: MasterConfiguration
kubernetesVersion: v1.12.3
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
apiServerCertSANs:
- k8s-master01
- k8s-node01
- k8s-node02
- k8s-master-lb
- 192.168.2.100
- 192.168.2.101
- 192.168.2.102
- 192.168.2.99
api:
  advertiseAddress: 192.168.2.101
  controlPlaneEndpoint: 192.168.2.99:16443
etcd:
  local:
    extraArgs:
      listen-client-urls: "https://127.0.0.1:2379,https://192.168.2.101:2379"
      advertise-client-urls: "https://192.168.2.101:2379"
      listen-peer-urls: "https://192.168.2.101:2380"
      initial-advertise-peer-urls: "https://192.168.2.101:2380"
      initial-cluster: "k8s-master01=https://192.168.2.100:2380,k8s-node01=https://192.168.2.101:2380"
      initial-cluster-state: existing
    serverCertSANs:
      - k8s-node01
      - 192.168.2.101
    peerCertSANs:
      - k8s-node01
      - 192.168.2.101
controllerManagerExtraArgs:
  node-monitor-grace-period: 10s
  pod-eviction-timeout: 10s
networking:
  # This CIDR is a Calico default. Substitute or remove for your CNI provider.
  podSubnet: "172.168.0.0/16"

kubeadm config images pull --config /root/kubeadm-config.yaml

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.12.3 k8s.gcr.io/kube-apiserver:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.12.3 k8s.gcr.io/kube-controller-manager:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.12.3 k8s.gcr.io/kube-scheduler:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.12.3 k8s.gcr.io/kube-proxy:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.2.2 k8s.gcr.io/coredns:1.2.2

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1 k8s.gcr.io/pause-amd64:3.1

kubeadm alpha phase certs all --config /root/kubeadm-config.yaml

kubeadm alpha phase kubeconfig controller-manager --config /root/kubeadm-config.yaml

kubeadm alpha phase kubeconfig scheduler --config /root/kubeadm-config.yaml

kubeadm alpha phase kubelet config write-to-disk --config /root/kubeadm-config.yaml

kubeadm alpha phase kubelet write-env-file --config /root/kubeadm-config.yaml

kubeadm alpha phase kubeconfig kubelet --config /root/kubeadm-config.yaml

systemctl restart kubelet

- Set the hostname and IP address variables for k8s-master01 and k8s-node01

export CP0_IP=192.168.2.100

export CP0_HOSTNAME=k8s-master01

export CP1_IP=192.168.2.101

export CP1_HOSTNAME=k8s-node01

kubectl exec -n kube-system etcd-${CP0_HOSTNAME} -- etcdctl --ca-file /etc/kubernetes/pki/etcd/ca.crt --cert-file /etc/kubernetes/pki/etcd/peer.crt --key-file /etc/kubernetes/pki/etcd/peer.key --endpoints=https://${CP0_IP}:2379 member add ${CP1_HOSTNAME} https://${CP1_IP}:2380

[root@k8s-node01 ~]# kubectl exec -n kube-system etcd-${CP0_HOSTNAME} -- etcdctl --ca-file /etc/kubernetes/pki/etcd/ca.crt --cert-file /etc/kubernetes/pki/etcd/peer.crt --key-file /etc/kubernetes/pki/etcd/peer.key --endpoints=https://${CP0_IP}:2379 member add ${CP1_HOSTNAME} https://${CP1_IP}:2380

Added member named k8s-node01 with ID eeb43c3071ab6260 to cluster

ETCD_NAME="k8s-node01"

ETCD_INITIAL_CLUSTER="k8s-master01=https://192.168.2.100:2380,k8s-node01=https://192.168.2.101:2380"

ETCD_INITIAL_CLUSTER_STATE="existing"

kubeadm alpha phase etcd local --config /root/kubeadm-config.yaml

[root@k8s-node01 ~]# kubeadm alpha phase etcd local --config /root/kubeadm-config.yaml

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

kubeadm alpha phase kubeconfig all --config /root/kubeadm-config.yaml

kubeadm alpha phase controlplane all --config /root/kubeadm-config.yaml

kubeadm alpha phase mark-master --config /root/kubeadm-config.yaml

1.5.3 Run the following on master03 (node02)

- Join node02 to the cluster

- Edit kubeadm-config.yaml as follows

[root@k8s-node02 ~]# more kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1alpha2
kind: MasterConfiguration
kubernetesVersion: v1.12.3
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
apiServerCertSANs:
- k8s-master01
- k8s-node01
- k8s-node02
- k8s-master-lb
- 192.168.2.100
- 192.168.2.101
- 192.168.2.102
- 192.168.2.99
api:
  advertiseAddress: 192.168.2.102
  controlPlaneEndpoint: 192.168.2.99:16443
etcd:
  local:
    extraArgs:
      listen-client-urls: "https://127.0.0.1:2379,https://192.168.2.102:2379"
      advertise-client-urls: "https://192.168.2.102:2379"
      listen-peer-urls: "https://192.168.2.102:2380"
      initial-advertise-peer-urls: "https://192.168.2.102:2380"
      initial-cluster: "k8s-master01=https://192.168.2.100:2380,k8s-node01=https://192.168.2.101:2380,k8s-node02=https://192.168.2.102:2380"
      initial-cluster-state: existing
    serverCertSANs:
      - k8s-node02
      - 192.168.2.102
    peerCertSANs:
      - k8s-node02
      - 192.168.2.102
controllerManagerExtraArgs:
  node-monitor-grace-period: 10s
  pod-eviction-timeout: 10s
networking:
  # This CIDR is a Calico default. Substitute or remove for your CNI provider.
  podSubnet: "172.168.0.0/16"

kubeadm config images pull --config /root/kubeadm-config.yaml

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.12.3 k8s.gcr.io/kube-apiserver:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.12.3 k8s.gcr.io/kube-controller-manager:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.12.3 k8s.gcr.io/kube-scheduler:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.12.3 k8s.gcr.io/kube-proxy:v1.12.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.2.24 k8s.gcr.io/etcd:3.2.24

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.2.2 k8s.gcr.io/coredns:1.2.2

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1 k8s.gcr.io/pause-amd64:3.1

kubeadm alpha phase certs all --config /root/kubeadm-config.yaml

kubeadm alpha phase kubeconfig controller-manager --config /root/kubeadm-config.yaml

kubeadm alpha phase kubeconfig scheduler --config /root/kubeadm-config.yaml

kubeadm alpha phase kubelet config write-to-disk --config /root/kubeadm-config.yaml

kubeadm alpha phase kubelet write-env-file --config /root/kubeadm-config.yaml

kubeadm alpha phase kubeconfig kubelet --config /root/kubeadm-config.yaml

systemctl restart kubelet

- Set the hostname and IP address variables for k8s-master01 and k8s-node02

export CP0_IP=192.168.2.100

export CP0_HOSTNAME=k8s-master01

export CP2_IP=192.168.2.102

export CP2_HOSTNAME=k8s-node02

kubectl exec -n kube-system etcd-${CP0_HOSTNAME} -- etcdctl --ca-file /etc/kubernetes/pki/etcd/ca.crt --cert-file /etc/kubernetes/pki/etcd/peer.crt --key-file /etc/kubernetes/pki/etcd/peer.key --endpoints=https://${CP0_IP}:2379 member add ${CP2_HOSTNAME} https://${CP2_IP}:2380

[root@k8s-node02 ~]# kubectl exec -n kube-system etcd-${CP0_HOSTNAME} -- etcdctl --ca-file /etc/kubernetes/pki/etcd/ca.crt --cert-file /etc/kubernetes/pki/etcd/peer.crt --key-file /etc/kubernetes/pki/etcd/peer.key --endpoints=https://${CP0_IP}:2379 member add ${CP2_HOSTNAME} https://${CP2_IP}:2380

Added member named k8s-node02 with ID ce5849fac4f917df to cluster

ETCD_NAME="k8s-node02"

ETCD_INITIAL_CLUSTER="k8s-node02=https://192.168.2.102:2380,k8s-master01=https://192.168.2.100:2380,k8s-node01=https://192.168.2.101:2380"

ETCD_INITIAL_CLUSTER_STATE="existing"
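
As a quick sanity check, the ETCD_INITIAL_CLUSTER value printed by member add can be split into its member names to confirm that all three nodes are listed. This is only a minimal sketch; the helper name list_etcd_members is hypothetical and is not part of etcdctl.

```shell
#!/bin/sh
# list_etcd_members is a hypothetical helper, not part of etcdctl.
# It splits a "name=url,name=url" string and prints one member name per line.
list_etcd_members() {
    printf '%s\n' "$1" | tr ',' '\n' | cut -d= -f1
}

# Value copied from the member add output above.
ETCD_INITIAL_CLUSTER="k8s-node02=https://192.168.2.102:2380,k8s-master01=https://192.168.2.100:2380,k8s-node01=https://192.168.2.101:2380"
list_etcd_members "$ETCD_INITIAL_CLUSTER"
```

If a name is missing from the list, the member add step for that node has probably not been run yet.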

kubeadm alpha phase etcd local --config /root/kubeadm-config.yaml

[root@k8s-node02 ~]# kubeadm alpha phase etcd local --config /root/kubeadm-config.yaml

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

kubeadm alpha phase kubeconfig all --config /root/kubeadm-config.yaml

kubeadm alpha phase controlplane all --config /root/kubeadm-config.yaml

kubeadm alpha phase mark-master --config /root/kubeadm-config.yaml

1.5.3 Enable the keepalived health check on all master nodes and restart keepalived

[root@k8s-node01 ~]# more /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

# Health check

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens160

mcast_src_ip 192.168.2.101

virtual_router_id 51

priority 101

advert_int 2

authentication {

auth_type PASS

auth_pass 412f7dc3bfed32194d1600c483e10ad1d

}

virtual_ipaddress {

192.168.2.99

}

track_script {

chk_apiserver

}

}

systemctl restart keepalived
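
The effect of the vrrp_script settings can be worked out with simple arithmetic. The sketch below assumes the usual keepalived behaviour for a negative weight: once the check script has failed fall times in a row, the weight is added to the node's priority. Whether the VIP actually moves depends on the priorities configured on the other masters, which are not shown here.

```shell
#!/bin/sh
# Values copied from the keepalived.conf shown above.
priority=101
weight=-5
interval=2
fall=3

# Assumption: with a negative weight, keepalived adds the weight to the
# priority once the check script has failed `fall` consecutive times.
failed_priority=$((priority + weight))
detect_seconds=$((interval * fall))

echo "priority after failed check: $failed_priority"
echo "approximate detection time: ${detect_seconds}s"
```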

1.6 Verification

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady master 38m v1.12.3

k8s-node01 NotReady master 10m v1.12.3

k8s-node02 NotReady master 10m v1.12.3

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

coredns-6c66ffc55b-8rlpp 0/1 ContainerCreating 0 39m <none> k8s-node02 <none>

coredns-6c66ffc55b-qdvfg 0/1 ContainerCreating 0 39m <none> k8s-node02 <none>

etcd-k8s-master01 1/1 Running 0 38m 192.168.2.100 k8s-master01 <none>

etcd-k8s-node01 1/1 Running 0 8m32s 192.168.2.101 k8s-node01 <none>

etcd-k8s-node02 1/1 Running 0 7m7s 192.168.2.102 k8s-node02 <none>

kube-apiserver-k8s-master01 1/1 Running 0 38m 192.168.2.100 k8s-master01 <none>

kube-apiserver-k8s-node01 1/1 Running 0 6m28s 192.168.2.101 k8s-node01 <none>

kube-apiserver-k8s-node02 1/1 Running 0 6m23s 192.168.2.102 k8s-node02 <none>

kube-controller-manager-k8s-master01 1/1 Running 1 38m 192.168.2.100 k8s-master01 <none>

kube-controller-manager-k8s-node01 1/1 Running 0 6m28s 192.168.2.101 k8s-node01 <none>

kube-controller-manager-k8s-node02 1/1 Running 0 6m23s 192.168.2.102 k8s-node02 <none>

kube-proxy-62hjm 1/1 Running 0 11m 192.168.2.102 k8s-node02 <none>

kube-proxy-79fbq 1/1 Running 0 11m 192.168.2.101 k8s-node01 <none>

kube-proxy-jsnhl 1/1 Running 0 39m 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-master01 1/1 Running 1 38m 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-node01 1/1 Running 0 6m28s 192.168.2.101 k8s-node01 <none>

kube-scheduler-k8s-node02 1/1 Running 0 6m23s 192.168.2.102 k8s-node02 <none>
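
In a long pod listing like the one above, it can help to print only the pods that are not yet Running (here the two coredns pods, which stay in ContainerCreating until a network plugin is deployed). A minimal sketch; not_running is a hypothetical helper name, and it assumes STATUS is the third column, as in the output above.

```shell
#!/bin/sh
# not_running is a hypothetical helper: it reads `kubectl get pods` output
# on stdin and prints "NAME STATUS" for every pod whose STATUS column
# (third field) is not "Running". The header line is skipped.
not_running() {
    awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

# Typical use on a master node:
#   kubectl get pods -n kube-system -o wide | not_running
```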

1.7 Deploy the network plugin

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

- On all master nodes, allow HPA to collect data through the API by editing /etc/kubernetes/manifests/kube-controller-manager.yaml

- --horizontal-pod-autoscaler-use-rest-clients=false

[root@k8s-master01 ~]# more /etc/kubernetes/manifests/kube-controller-manager.yaml

apiVersion: v1

kind: Pod

metadata:

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ""

creationTimestamp: null

labels:

component: kube-controller-manager

tier: control-plane

name: kube-controller-manager

namespace: kube-system

spec:

containers:

- command:

- kube-controller-manager

- --node-monitor-grace-period=10s

- --pod-eviction-timeout=10s

- --address=127.0.0.1

- --allocate-node-cidrs=true

- --authentication-kubeconfig=/etc/kubernetes/controller-manager.conf

- --authorization-kubeconfig=/etc/kubernetes/controller-manager.conf

- --client-ca-file=/etc/kubernetes/pki/ca.crt

- --cluster-cidr=172.168.0.0/16

- --cluster-signing-cert-file=/etc/kubernetes/pki/ca.crt

- --cluster-signing-key-file=/etc/kubernetes/pki/ca.key

- --controllers=*,bootstrapsigner,tokencleaner

- --kubeconfig=/etc/kubernetes/controller-manager.conf

- --leader-elect=true

- --node-cidr-mask-size=24

- --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt

- --root-ca-file=/etc/kubernetes/pki/ca.crt

- --service-account-private-key-file=/etc/kubernetes/pki/sa.key

- --use-service-account-credentials=true

- --horizontal-pod-autoscaler-use-rest-clients=false

- On all master nodes, allow Istio automatic injection by editing /etc/kubernetes/manifests/kube-apiserver.yaml

- --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota

[root@k8s-master01 ~]# more /etc/kubernetes/manifests/kube-apiserver.yaml

apiVersion: v1

kind: Pod

metadata:

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ""

creationTimestamp: null

labels:

component: kube-apiserver

tier: control-plane

name: kube-apiserver

namespace: kube-system

spec:

containers:

- command:

- kube-apiserver

- --authorization-mode=Node,RBAC

- --advertise-address=192.168.2.100

- --allow-privileged=true

- --client-ca-file=/etc/kubernetes/pki/ca.crt

# - --enable-admission-plugins=NodeRestriction

- --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota

- --enable-bootstrap-token-auth=true

- --etcd-cafile=/etc/kubernetes/pki/etcd/ca.crt

- --etcd-certfile=/etc/kubernetes/pki/apiserver-etcd-client.crt

- --etcd-keyfile=/etc/kubernetes/pki/apiserver-etcd-client.key

- --etcd-servers=https://127.0.0.1:2379,https://192.168.2.100:2379

- --insecure-port=0

- --kubelet-client-certificate=/etc/kubernetes/pki/apiserver-kubelet-client.crt

- --kubelet-client-key=/etc/kubernetes/pki/apiserver-kubelet-client.key

- --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

- --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.crt

- --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client.key

- --requestheader-allowed-names=front-proxy-client

- --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt

- --requestheader-extra-headers-prefix=X-Remote-Extra-

- --requestheader-group-headers=X-Remote-Group

- --requestheader-username-headers=X-Remote-User

- --secure-port=6443

- --service-account-key-file=/etc/kubernetes/pki/sa.pub

- --service-cluster-ip-range=10.96.0.0/12

- --tls-cert-file=/etc/kubernetes/pki/apiserver.crt

- --tls-private-key-file=/etc/kubernetes/pki/apiserver.key

systemctl restart kubelet
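
Because both manifests have to be edited by hand on every master, a small check that the expected setting is present, and not merely in a commented-out line, can catch a missed node. A minimal sketch; has_setting is a hypothetical helper, and it only does a plain substring match on uncommented lines.

```shell
#!/bin/sh
# has_setting is a hypothetical helper: it succeeds if FILE contains the
# given fixed string on a line that is not commented out with '#'.
has_setting() {
    file=$1
    setting=$2
    grep -v '^[[:space:]]*#' "$file" | grep -q -F -e "$setting"
}

# Typical use on each master node:
#   has_setting /etc/kubernetes/manifests/kube-controller-manager.yaml \
#       --horizontal-pod-autoscaler-use-rest-clients=false && echo ok
#   has_setting /etc/kubernetes/manifests/kube-apiserver.yaml \
#       MutatingAdmissionWebhook && echo ok
```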

docker pull quay.io/calico/typha:v0.7.4

docker pull quay.io/calico/node:v3.1.3

docker pull quay.io/calico/cni:v3.1.3

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.8.3

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.8.3 gcr.io/google_containers/kubernetes-dashboard-amd64:v1.8.3

# prometheus

docker pull prom/prometheus:v2.3.1

# traefik

docker pull traefik:v1.6.3

# istio

docker pull docker.io/jaegertracing/all-in-one:1.5

docker pull docker.io/prom/prometheus:v2.3.1

docker pull docker.io/prom/statsd-exporter:v0.6.0

docker pull quay.io/coreos/hyperkube:v1.7.6_coreos.0

docker pull istio/citadel:1.0.0

docker tag istio/citadel:1.0.0 gcr.io/istio-release/citadel:1.0.0

docker pull istio/galley:1.0.0

docker tag istio/galley:1.0.0 gcr.io/istio-release/galley:1.0.0

docker pull istio/grafana:1.0.0

docker tag istio/grafana:1.0.0 gcr.io/istio-release/grafana:1.0.0

docker pull istio/mixer:1.0.0

docker tag istio/mixer:1.0.0 gcr.io/istio-release/mixer:1.0.0

docker pull istio/pilot:1.0.0

docker tag istio/pilot:1.0.0 gcr.io/istio-release/pilot:1.0.0

docker pull istio/proxy_init:1.0.0

docker tag istio/proxy_init:1.0.0 gcr.io/istio-release/proxy_init:1.0.0

docker pull istio/proxyv2:1.0.0

docker tag istio/proxyv2:1.0.0 gcr.io/istio-release/proxyv2:1.0.0

docker pull istio/servicegraph:1.0.0

docker tag istio/servicegraph:1.0.0 gcr.io/istio-release/servicegraph:1.0.0

docker pull istio/sidecar_injector:1.0.0

docker tag istio/sidecar_injector:1.0.0 gcr.io/istio-release/sidecar_injector:1.0.0

docker pull xiaoqshuo/heapster-amd64:v1.5.4

docker pull xiaoqshuo/heapster-grafana-amd64:v5.0.4

docker pull xiaoqshuo/heapster-influxdb-amd64:v1.5.2

docker pull xiaoqshuo/metrics-server-amd64:v0.2.1

docker tag xiaoqshuo/heapster-amd64:v1.5.4 k8s.gcr.io/heapster-amd64:v1.5.4

docker tag xiaoqshuo/heapster-grafana-amd64:v5.0.4 k8s.gcr.io/heapster-grafana-amd64:v5.0.4

docker tag xiaoqshuo/heapster-influxdb-amd64:v1.5.2 k8s.gcr.io/heapster-influxdb-amd64:v1.5.2

docker tag xiaoqshuo/metrics-server-amd64:v0.2.1 gcr.io/google_containers/metrics-server-amd64:v0.2.1
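
The Istio pull/tag pairs above all follow the same pattern, so they can be generated from a list of component names, which avoids copy-and-paste mistakes in the target tag. A minimal sketch; istio_retag_cmds is a hypothetical helper that only prints the commands so they can be reviewed before being run.

```shell
#!/bin/sh
# istio_retag_cmds is a hypothetical helper: it prints (does not run) the
# docker pull/tag pair for each Istio component listed above.
istio_retag_cmds() {
    version=$1
    for name in citadel galley grafana mixer pilot proxy_init proxyv2 servicegraph sidecar_injector; do
        echo "docker pull istio/${name}:${version}"
        echo "docker tag istio/${name}:${version} gcr.io/istio-release/${name}:${version}"
    done
}

# Print the commands for review; pipe the output to `sh` to execute them.
istio_retag_cmds 1.0.0
```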

- Install calico on any one master node. The nodes return to a normal (Ready) status only after the calico network component is installed.

kubectl apply -f /opt/k8-ha-install/calico/

[root@k8s-master01 ~]# kubectl apply -f /opt/k8-ha-install/calico/

configmap/calico-config created

service/calico-typha created

deployment.apps/calico-typha created

daemonset.extensions/calico-node created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

serviceaccount/calico-node created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

[root@k8s-master01 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready master 170m v1.12.3

k8s-node01 Ready master 143m v1.12.3

k8s-node02 Ready master 143m v1.12.3

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

calico-node-9cw9r 2/2 Running 0 65s 192.168.2.101 k8s-node01 <none>

calico-node-l6m78 2/2 Running 0 65s 192.168.2.102 k8s-node02 <none>

calico-node-lhqg9 2/2 Running 0 66s 192.168.2.100 k8s-master01 <none>

coredns-6c66ffc55b-8rlpp 1/1 Running 0 170m 172.168.1.3 k8s-node02 <none>

coredns-6c66ffc55b-qdvfg 1/1 Running 0 170m 172.168.1.2 k8s-node02 <none>

etcd-k8s-master01 1/1 Running 0 169m 192.168.2.100 k8s-master01 <none>

etcd-k8s-node01 1/1 Running 0 139m 192.168.2.101 k8s-node01 <none>

etcd-k8s-node02 1/1 Running 0 138m 192.168.2.102 k8s-node02 <none>

kube-apiserver-k8s-master01 1/1 Running 0 3m7s 192.168.2.100 k8s-master01 <none>

kube-apiserver-k8s-node01 1/1 Running 0 4m16s 192.168.2.101 k8s-node01 <none>

kube-apiserver-k8s-node02 1/1 Running 0 4m7s 192.168.2.102 k8s-node02 <none>

kube-controller-manager-k8s-master01 1/1 Running 0 3m49s 192.168.2.100 k8s-master01 <none>

kube-controller-manager-k8s-node01 1/1 Running 0 125m 192.168.2.101 k8s-node01 <none>

kube-controller-manager-k8s-node02 1/1 Running 1 125m 192.168.2.102 k8s-node02 <none>

kube-proxy-62hjm 1/1 Running 0 143m 192.168.2.102 k8s-node02 <none>

kube-proxy-79fbq 1/1 Running 0 143m 192.168.2.101 k8s-node01 <none>

kube-proxy-jsnhl 1/1 Running 0 170m 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-master01 1/1 Running 2 169m 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-node01 1/1 Running 0 137m 192.168.2.101 k8s-node01 <none>

kube-scheduler-k8s-node02 1/1 Running 0 137m 192.168.2.102 k8s-node02 <none>

1.8 Confirm that all services start on boot

systemctl enable kubelet

systemctl enable docker

systemctl disable firewalld

systemctl enable keepalived

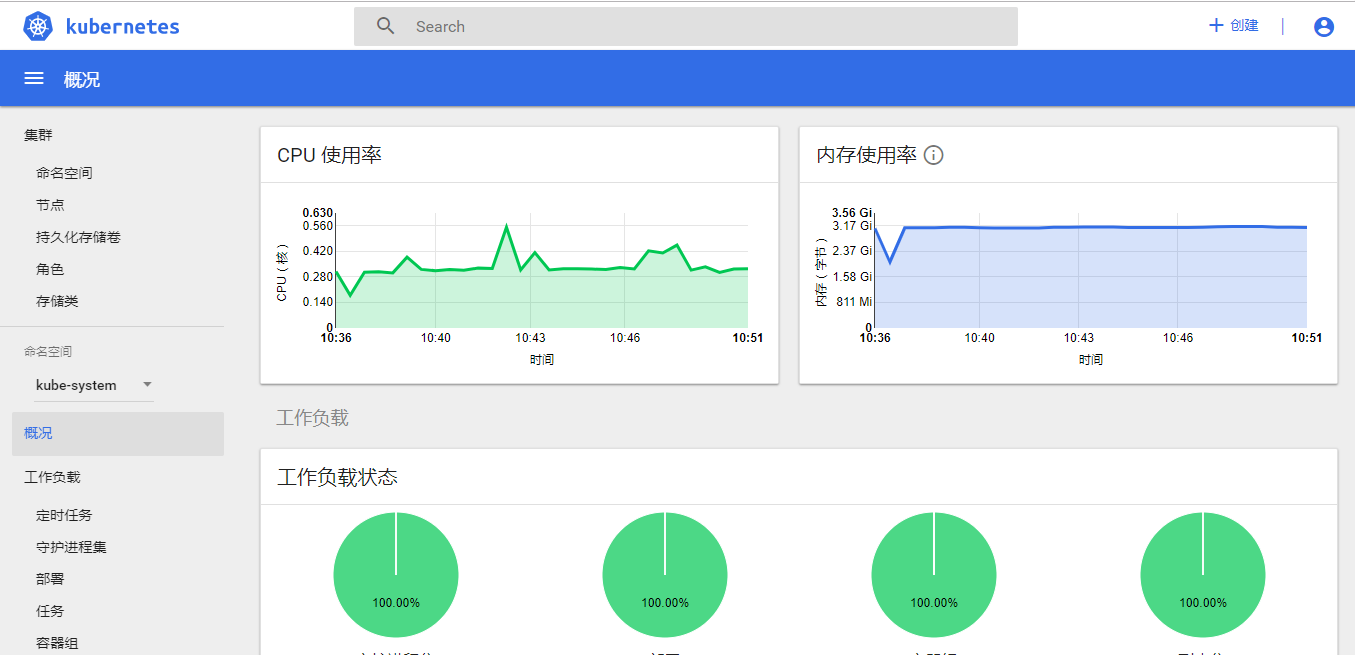

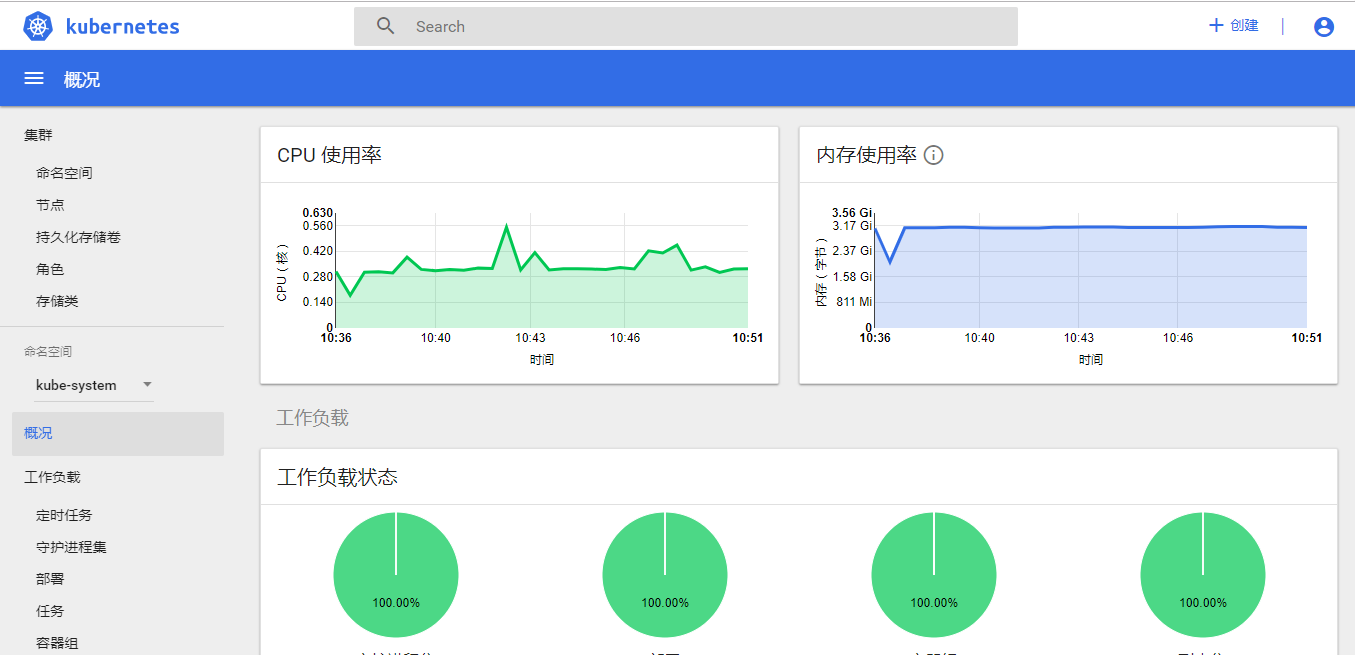

1.9 Install monitoring and the dashboard

kubectl taint nodes --all node-role.kubernetes.io/master-

[root@k8s-master01 ~]# kubectl taint nodes --all node-role.kubernetes.io/master-

node/k8s-master01 untainted

node/k8s-node01 untainted

node/k8s-node02 untainted

- Install metrics-server on any one master node. Starting with v1.11.0, pod performance data is collected by metrics-server instead of heapster.

[root@k8s-master01 ~]# kubectl apply -f /opt/k8-ha-install/metrics-server/

clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created

rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created

apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

serviceaccount/metrics-server created

deployment.extensions/metrics-server created

service/metrics-server created

clusterrole.rbac.authorization.k8s.io/system:metrics-server created

clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

...

metrics-server-b6bc985c4-8twwg 1/1 Running 0 18m 172.168.0.3 k8s-master01 <none>

....

- Install heapster on any one master node. Starting with v1.11.0, pod performance data is collected by metrics-server instead of heapster, but the dashboard still uses heapster to display performance data.

[root@k8s-master01 ~]# kubectl apply -f /opt/k8-ha-install/heapster/

deployment.extensions/monitoring-grafana created

service/monitoring-grafana created

clusterrolebinding.rbac.authorization.k8s.io/heapster created

clusterrole.rbac.authorization.k8s.io/heapster created

serviceaccount/heapster created

deployment.extensions/heapster created

service/heapster created

deployment.extensions/monitoring-influxdb created

service/monitoring-influxdb created

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

...

heapster-56c97d8b49-qp97p 1/1 Running 0 2m57s 172.168.1.4 k8s-node02 <none>

...

monitoring-grafana-6f8dc9f99f-bwrrt 1/1 Running 0 2m57s 172.168.2.2 k8s-node01 <none>

monitoring-influxdb-556dcc774d-45p9t 1/1 Running 0 2m56s 172.168.0.2 k8s-master01 <none>

...

[root@k8s-master01 ~]# kubectl apply -f /opt/k8-ha-install/dashboard/

secret/kubernetes-dashboard-certs created

serviceaccount/kubernetes-dashboard created

role.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created

deployment.apps/kubernetes-dashboard created

service/kubernetes-dashboard created

serviceaccount/admin-user created

clusterrolebinding.rbac.authorization.k8s.io/admin-user created

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

...

kubernetes-dashboard-64d4f8997d-h47f7 1/1 Running 0 2m9s 172.168.1.5 k8s-node02 <none>

...

[root@k8s-master01 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

calico-node-9cw9r 2/2 Running 0 24m 192.168.2.101 k8s-node01 <none>

calico-node-l6m78 2/2 Running 0 24m 192.168.2.102 k8s-node02 <none>

calico-node-lhqg9 2/2 Running 0 24m 192.168.2.100 k8s-master01 <none>

coredns-6c66ffc55b-8rlpp 1/1 Running 0 3h14m 172.168.1.3 k8s-node02 <none>

coredns-6c66ffc55b-qdvfg 1/1 Running 0 3h14m 172.168.1.2 k8s-node02 <none>

etcd-k8s-master01 1/1 Running 0 3h13m 192.168.2.100 k8s-master01 <none>

etcd-k8s-node01 1/1 Running 0 163m 192.168.2.101 k8s-node01 <none>

etcd-k8s-node02 1/1 Running 0 162m 192.168.2.102 k8s-node02 <none>

heapster-56c97d8b49-qp97p 1/1 Running 0 16m 172.168.1.4 k8s-node02 <none>

kube-apiserver-k8s-master01 1/1 Running 0 26m 192.168.2.100 k8s-master01 <none>

kube-apiserver-k8s-node01 1/1 Running 0 28m 192.168.2.101 k8s-node01 <none>

kube-apiserver-k8s-node02 1/1 Running 0 27m 192.168.2.102 k8s-node02 <none>

kube-controller-manager-k8s-master01 1/1 Running 0 27m 192.168.2.100 k8s-master01 <none>

kube-controller-manager-k8s-node01 1/1 Running 0 149m 192.168.2.101 k8s-node01 <none>

kube-controller-manager-k8s-node02 1/1 Running 1 149m 192.168.2.102 k8s-node02 <none>

kube-proxy-62hjm 1/1 Running 0 167m 192.168.2.102 k8s-node02 <none>

kube-proxy-79fbq 1/1 Running 0 167m 192.168.2.101 k8s-node01 <none>

kube-proxy-jsnhl 1/1 Running 0 3h14m 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-master01 1/1 Running 2 3h13m 192.168.2.100 k8s-master01 <none>

kube-scheduler-k8s-node01 1/1 Running 0 161m 192.168.2.101 k8s-node01 <none>

kube-scheduler-k8s-node02 1/1 Running 0 161m 192.168.2.102 k8s-node02 <none>

kubernetes-dashboard-64d4f8997d-h47f7 1/1 Running 0 16m 172.168.1.5 k8s-node02 <none>

metrics-server-b6bc985c4-8twwg 1/1 Running 0 18m 172.168.0.3 k8s-master01 <none>

monitoring-grafana-6f8dc9f99f-bwrrt 1/1 Running 0 16m 172.168.2.2 k8s-node01 <none>

monitoring-influxdb-556dcc774d-45p9t 1/1 Running 0 16m 172.168.0.2 k8s-master01 <none>

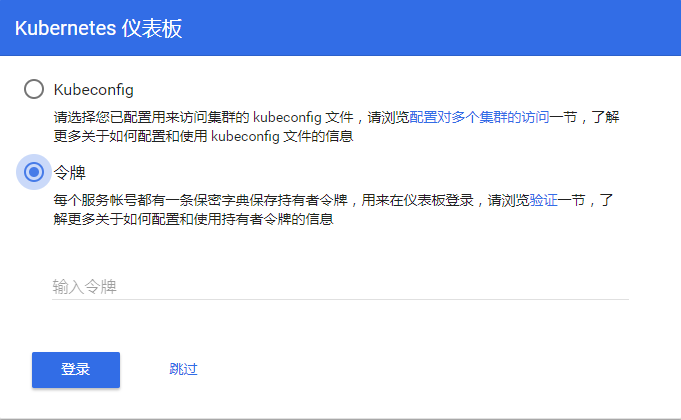

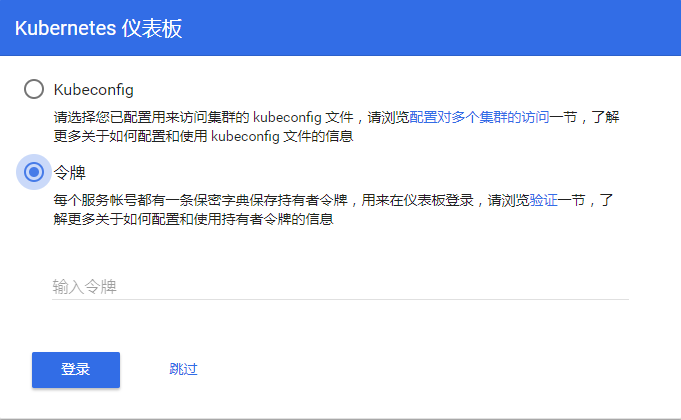

1.10 Dashboard configuration

[root@k8s-master01 ~]# kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

Name: admin-user-token-dp28v

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: admin-user

kubernetes.io/service-account.uid: d07db348-f867-11e8-bf75-000c295582bd

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1025 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLWRwMjh2Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiJkMDdkYjM0OC1mODY3LTExZTgtYmY3NS0wMDBjMjk1NTgyYmQiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.pynqnVbZyS5YbZv6LRFePdnaZWVR6c1EIyi31eycLgqP9xfCWOs9wfdLLYkjS2yAsUg2ry7mDC1PVKrxke2pzU3l9agUpo34j_cF8mJ8rL0kfL6AiuTIcTy7FmgFOzY44G76qG-jJO2B31ZLwjP96Sxh8p7pptd5V-lxxE7mZNhFcTYIJpfqY7SycRu8zCmeZXgAjYBzJMlq8lOTaq3k_0orsHvujbYy81_GOhA_ASieKioNKsgl9U-41P5DadfaMKacQkxNllvGNIFJti1yMPtXCO6me8EhcqFP2o2ilq7bJjZYrWSE4Emc_ACBK9TtiRdMs6HTLe3xXxtCVFTZEg
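
Only the token value is needed to log in to the dashboard, so it can be convenient to cut it out of the describe output. A minimal sketch; extract_token is a hypothetical helper that relies on the "token:" label shown in the output above.

```shell
#!/bin/sh
# extract_token is a hypothetical helper: it reads `kubectl describe secret`
# output on stdin and prints only the value of the "token:" field.
extract_token() {
    awk '$1 == "token:" { print $2 }'
}

# Typical use on a master node, reusing the command from this section:
#   kubectl -n kube-system describe secret \
#       $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}') \
#       | extract_token
```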

1.11 Join worker nodes to the cluster

[root@k8s-node01 ~]# kubeadm reset

[reset] WARNING: changes made to this host by 'kubeadm init' or 'kubeadm join' will be reverted.

[reset] are you sure you want to proceed? [y/N]: y

[preflight] running pre-flight checks

[reset] stopping the kubelet service

[reset] unmounting mounted directories in "/var/lib/kubelet"

[reset] removing kubernetes-managed containers

[reset] cleaning up running containers using crictl with socket /var/run/dockershim.sock

[reset] failed to list running pods using crictl: exit status 1. Trying to use docker instead[reset] no etcd manifest found in "/etc/kubernetes/manifests/etcd.yaml". Assuming external etcd

[reset] deleting contents of stateful directories: [/var/lib/kubelet /etc/cni/net.d /var/lib/dockershim /var/run/kubernetes]

[reset] deleting contents of config directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

- The token on the master node expires after 24 hours. A new token can then be generated with:

kubeadm token create

kubeadm token list

- Run the following command on a master node to look up the discovery-token-ca-cert-hash value:

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

[root@k8s-node01 ~]# kubeadm join 192.168.2.99:16443 --token 1s1g4k.rsnrw7t1njkjz6zk --discovery-token-ca-cert-hash sha256:357d5c7da9a3dc6edd8edda2dded629913fd601151a571fb020ae5f8cbf19224
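
The join command combines three pieces gathered in the steps above: the load balancer endpoint, a valid token, and the CA certificate hash. A minimal sketch of assembling them; join_cmd is a hypothetical helper that only prints the command, and the values in the example call are the ones used in this document.

```shell
#!/bin/sh
# join_cmd is a hypothetical helper: it prints (does not run) a kubeadm join
# command built from an endpoint, a token and a CA certificate hash.
join_cmd() {
    endpoint=$1
    token=$2
    hash=$3
    echo "kubeadm join ${endpoint} --token ${token} --discovery-token-ca-cert-hash sha256:${hash}"
}

# Example with the values used in this document.
join_cmd 192.168.2.99:16443 1s1g4k.rsnrw7t1njkjz6zk \
    357d5c7da9a3dc6edd8edda2dded629913fd601151a571fb020ae5f8cbf19224
```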

[root@k8s-master01 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready master 21h v1.12.3

k8s-node01 Ready master 20h v1.12.3

k8s-node02 Ready master 20h v1.12.3
