Recap of yesterday's content

Open the DianShang project written yesterday and look at items.py:

    import scrapy


    class AmazonItem(scrapy.Item):
        name = scrapy.Field()      # product name
        price = scrapy.Field()     # price
        delivery = scrapy.Field()  # delivery method

The class name AmazonItem is arbitrary. The three fields defined here correspond one-to-one to the three keys set in spiders/amazon.py:

    # Build the standardized item
    item = AmazonItem()  # instantiate; it starts out as an empty dict-like object
    # Add the key-value pairs
    item["name"] = name
    item["price"] = price
    item["delivery"] = delivery
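To make the one-to-one correspondence concrete, here is a minimal sketch that runs without Scrapy installed. `StrictItem` is a hypothetical stand-in written for this illustration, not Scrapy's real `Item` class; it only imitates the behaviour that a key not declared as a field is rejected with a `KeyError`. The sample values are made up.

```python
class StrictItem(dict):
    """Hypothetical stand-in for scrapy.Item: only declared fields may be set."""

    fields = ()  # subclasses list their allowed keys here

    def __setitem__(self, key, value):
        if key not in self.fields:
            raise KeyError("%s does not support field: %s" % (type(self).__name__, key))
        super().__setitem__(key, value)


class AmazonItem(StrictItem):
    fields = ("name", "price", "delivery")


item = AmazonItem()            # starts out empty, like a dict
item["name"] = "iPhone X"      # made-up sample values
item["price"] = "8316.00"
item["delivery"] = "Amazon"

try:
    item["colour"] = "black"   # not declared in AmazonItem
    rejected = False
except KeyError:
    rejected = True

print(dict(item))
print("undeclared key rejected:", rejected)
```

The point of the sketch is that a typo in a key inside the spider fails loudly instead of silently producing an incomplete record.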

Look at pipelines.py:

    class MongodbPipeline(object):
        def __init__(self, host, port, db, table):
            self.host = host
            self.port = port
            self.db = db
            self.table = table

        @classmethod
        def from_crawler(cls, crawler):
            """
            Scrapy first checks via getattr whether we defined from_crawler;
            if so, it calls it to create the instance.
            """
            HOST = crawler.settings.get('HOST')
            PORT = crawler.settings.get('PORT')
            DB = crawler.settings.get('DB')
            TABLE = crawler.settings.get('TABLE')
            return cls(HOST, PORT, DB, TABLE)

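If a from_crawler method is defined, Scrapy calls it first; __init__ then runs when from_crawler calls cls(...).

from_crawler must return a pipeline instance. Calling cls(...) is what executes __init__, and the 4 values passed to it correspond one-to-one to the 4 parameters of __init__.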
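The call order can be checked with a small sketch that needs no Scrapy installation. `FakeCrawler`, `FakeSettings`, and `build` are hypothetical stand-ins invented for this illustration, and the setting values are made up; `build` only approximates how the framework prefers `from_crawler` when it exists.

```python
class FakeSettings(dict):
    """Hypothetical stand-in for crawler.settings (only .get is used)."""


class FakeCrawler:
    """Hypothetical stand-in for the Scrapy crawler object."""

    def __init__(self, settings):
        self.settings = settings


calls = []  # records the order in which the methods run


class MongodbPipeline:
    def __init__(self, host, port, db, table):
        calls.append("__init__")
        self.host, self.port, self.db, self.table = host, port, db, table

    @classmethod
    def from_crawler(cls, crawler):
        calls.append("from_crawler")
        s = crawler.settings
        # cls(...) is what triggers __init__ with the four values
        return cls(s.get("HOST"), s.get("PORT"), s.get("DB"), s.get("TABLE"))


def build(pipeline_cls, crawler):
    """Roughly what the framework does: prefer from_crawler if it exists."""
    if hasattr(pipeline_cls, "from_crawler"):
        return pipeline_cls.from_crawler(crawler)
    return pipeline_cls()


# Made-up settings values for the demonstration
crawler = FakeCrawler(FakeSettings(HOST="127.0.0.1", PORT=27017, DB="amazon", TABLE="goods"))
pipeline = build(MongodbPipeline, crawler)

print(calls)
print(pipeline.host, pipeline.port, pipeline.db, pipeline.table)
```

Keeping the settings lookup in from_crawler leaves __init__ free of any framework dependency, so the pipeline can also be constructed directly in a test.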

pipelines.py can hold several pipelines, for example one that writes to a file.

Edit pipelines.py and add FilePipeline, which writes the scraped data to a file:

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html
    from pymongo import MongoClient


    class MongodbPipeline(object):
        def __init__(self, host, port, db, table):
            self.host = host
            self.port = port
            self.db = db
            self.table = table

        @classmethod
        def from_crawler(cls, crawler):
            """
            Scrapy first checks via getattr whether we defined from_crawler;
            if so, it calls it to create the instance.
            """
            HOST = crawler.settings.get('HOST')
            PORT = crawler.settings.get('PORT')
            DB = crawler.settings.get('DB')
            TABLE = crawler.settings.get('TABLE')
            return cls(HOST, PORT, DB, TABLE)

        def open_spider(self, spider):
            """
            Runs once when the spider starts.
            """
            # self.client = MongoClient('mongodb://%s:%s@%s:%s' %(self.user,self.pwd,self.host,self.port))
            self.client = MongoClient(host=self.host, port=self.port)

        def close_spider(self, spider):
            """
            Runs once when the spider closes.
            """
            self.client.close()

        def process_item(self, item, spider):
            # Persist the item
            d = dict(item)
            if all(d.values()):
                # insert_one replaces Collection.insert, which PyMongo 4 removed
                self.client[self.db][self.table].insert_one(d)
                print("Inserted one record")


    class FilePipeline(object):
        def __init__(self, file_path):
            self.file_path = file_path

        @classmethod
        def from_crawler(cls, crawler):
            """
            Scrapy first checks via getattr whether we defined from_crawler;
            if so, it calls it to create the instance.
            """
            file_path = crawler.settings.get('FILE_PATH')
            return cls(file_path)

        def open_spider(self, spider):
            """
            Runs once when the spider starts.
            """
            print('==============> spider has just started')
            self.fileobj = open(self.file_path, 'w', encoding='utf-8')

        def close_spider(self, spider):
            """
            Runs once when the spider closes.
            """
            print('==============> spider has finished')
            self.fileobj.close()

        def process_item(self, item, spider):
            # Persist the item
            print("items----->", item)
            d = dict(item)
            if all(d.values()):
                self.fileobj.write("%s\n" % str(d))  # one record per line
            # Returning the item lets later pipelines keep processing it
            return item
            # Raising DropItem instead would discard the item,
            # so later pipelines would never see it

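If process_item raises DropItem (from scrapy.exceptions) instead of returning, the item is discarded and later pipelines do not process it.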

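The path held in file_path is read from the settings, so it has to be configured there.

Edit settings.py and add the FILE_PATH option as the last line:

    FILE_PATH = 'pipe.txt'

This is a relative path; when the crawl is started from the project root, the file is created in the project root directory.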
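As a quick check of where a relative FILE_PATH ends up, the sketch below resolves it the same way `open()` does, against the current working directory of the process. It assumes nothing about the project beyond the setting value.

```python
import os

FILE_PATH = "pipe.txt"  # same relative value as in settings.py

# open(FILE_PATH, ...) resolves a relative name against the current working directory
resolved = os.path.abspath(FILE_PATH)
print("working directory:", os.getcwd())
print("file will be written to:", resolved)
```

If the crawl is launched from some other directory, pipe.txt lands there instead, which is worth knowing when the output file seems to be missing.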

Edit settings.py and register the pipelines:

    ITEM_PIPELINES = {
        'DianShang.pipelines.MongodbPipeline': 300,
        'DianShang.pipelines.FilePipeline': 500,
    }

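Edit pipelines.py and change the process_item method of MongodbPipeline: it must return the item. Pipelines run in ascending order of their number, so without the return, FilePipeline (500) receives None from MongodbPipeline (300).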

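    def process_item(self, item, spider):
        # Persist the item
        d = dict(item)
        if all(d.values()):
            # insert_one replaces Collection.insert, which PyMongo 4 removed
            self.client[self.db][self.table].insert_one(d)
            print("Inserted one record")
        return item  # hand the item on to the next pipeline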
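Why the return matters can be seen in a small simulation of the pipeline chain. Everything here is a hypothetical stand-in written for illustration: `run_pipelines` approximates how items flow through pipelines in priority order, `DropItem` imitates `scrapy.exceptions.DropItem`, and the three pipeline classes are simplified placeholders.

```python
class DropItem(Exception):
    """Stand-in for scrapy.exceptions.DropItem."""


class MongoLike:
    """Placeholder for MongodbPipeline, with or without the final return."""

    def __init__(self, returns_item):
        self.returns_item = returns_item

    def process_item(self, item, spider):
        if self.returns_item:
            return item
        # falling off the end returns None, as in the original version


class RequireName:
    """Placeholder pipeline that drops items without a name."""

    def process_item(self, item, spider):
        if not item.get("name"):
            raise DropItem("missing name")
        return item


class FileLike:
    """Placeholder for FilePipeline: records what it receives."""

    def __init__(self):
        self.seen = []

    def process_item(self, item, spider):
        self.seen.append(item)
        return item


def run_pipelines(item, pipelines, spider=None):
    """Feed one item through the pipelines in ascending priority order."""
    for pipeline, _priority in sorted(pipelines.items(), key=lambda pair: pair[1]):
        try:
            item = pipeline.process_item(item, spider)
        except DropItem:
            return None  # dropped: later pipelines are skipped
    return item


item = {"name": "iPhone X", "price": "8316.00", "delivery": "Amazon"}

broken_file = FileLike()
run_pipelines(item, {MongoLike(returns_item=False): 300, broken_file: 500})

fixed_file = FileLike()
run_pipelines(item, {MongoLike(returns_item=True): 300, fixed_file: 500})

dropped_file = FileLike()
run_pipelines({"name": None}, {RequireName(): 300, dropped_file: 500})

print(broken_file.seen)   # the later pipeline got None
print(fixed_file.seen)    # the later pipeline got the item
print(dropped_file.seen)  # the later pipeline was never called
```

The three cases cover a missing return, a correct return, and a dropped item.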

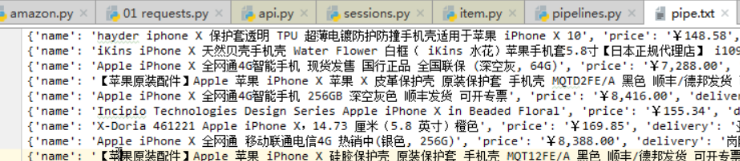

Run bin.py and check pipe.txt; its contents are as follows:

Edit spiders/amazon.py and add a close method. The method name must not be changed!

    # -*- coding: utf-8 -*-
    import scrapy
    from scrapy import Request  # import the Request class
    from DianShang.items import AmazonItem  # import the item


    class AmazonSpider(scrapy.Spider):
        name = 'amazon'
        allowed_domains = ['amazon.cn']
        # start_urls = ['http://amazon.cn/']

        # Custom settings; note: the attribute name must be custom_settings
        custom_settings = {
            'REQUEST_HEADERS': {
                'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.75 Safari/537.36',
            }
        }

        def start_requests(self):
            r1 = Request(url="https://www.amazon.cn/s/ref=nb_sb_ss_i_3_6?field-keywords=iphone+x",
                         headers=self.settings.get('REQUEST_HEADERS'),)
            yield r1

        def parse(self, response):
            # Links to the product detail pages
            detail_urls = response.xpath('//li[contains(@id,"result_")]/div/div[3]/div[1]/a/@href').extract()
            # print(detail_urls)
            for url in detail_urls:
                yield Request(url=url,
                              headers=self.settings.get('REQUEST_HEADERS'),  # request headers
                              callback=self.parse_detail,  # callback
                              dont_filter=True  # skip duplicate filtering
                              )

        def parse_detail(self, response):
            # Extract the product details
            # Product name: take the first match
            name = response.xpath('//*[@id="productTitle"]/text()').extract_first()
            if name:
                name = name.strip()

            # Product price
            price = response.xpath('//*[@id="priceblock_ourprice"]/text()').extract_first()

            # Delivery method; *[1] selects the first child element, i.e. the b tag
            delivery = response.xpath('//*[@id="ddmMerchantMessage"]/*[1]/text()').extract_first()
            print(name, price, delivery)

            # Build the standardized item
            item = AmazonItem()  # instantiate; it starts out as an empty dict-like object
            # Add the key-value pairs
            item["name"] = name
            item["price"] = price
            item["delivery"] = delivery

            return item  # must be returned

        def close(self, reason):
            print("spider is closed")

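This method is called once when the spider closes, after all requests have finished (not after each individual request). It can print some log information or do cleanup work. Scrapy's documentation describes the same hook under the name closed(self, reason); the close method shown here overrides the base class method of that name.

1. Downloader middleware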

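    class MyDownMiddleware(object):
        def process_request(self, request, spider):
            """
            Called for every request that needs downloading, as it passes through
            the process_request of each downloader middleware.
            :param request:
            :param spider:
            :return:
                None: continue to the next middleware and then download;
                Response object: stop running process_request and start running process_response;
                Request object: stop the middleware chain and hand the Request back to the scheduler;
                raise IgnoreRequest: stop running process_request and start running process_exception
            """
            pass

        def process_response(self, request, response, spider):
            """
            Called with the response on its way back from the downloader.
            :param request:
            :param response:
            :param spider:
            :return:
                Response object: passed on to the process_response of the other middlewares;
                Request object: stop the middleware chain; the request is rescheduled for download;
                raise IgnoreRequest: Request.errback is called
            """
            print('response1')
            return response

        def process_exception(self, request, exception, spider):
            """
            Called when the download handler or a process_request() (downloader
            middleware) raises an exception.
            :param request:
            :param exception:
            :param spider:
            :return:
                None: pass the exception on to the later middlewares;
                Response object: stop calling the later process_exception methods;
                Request object: stop the middleware chain; the request is rescheduled for download
            """
            return None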

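This is mainly used for rotating proxy IPs. Some sites, for example, allow only 3 downloads per minute and block the IP once that limit is exceeded.

At that point further requests from the same IP are pointless; the IP address has to change.

The Scrapy architecture has 8 main steps. Something can go wrong between step 4 and step 5, in which case the request has to be made again.

The blue bars in the middle are the middlewares. If the IP is changed inside a middleware, each request can go out from a different IP address.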

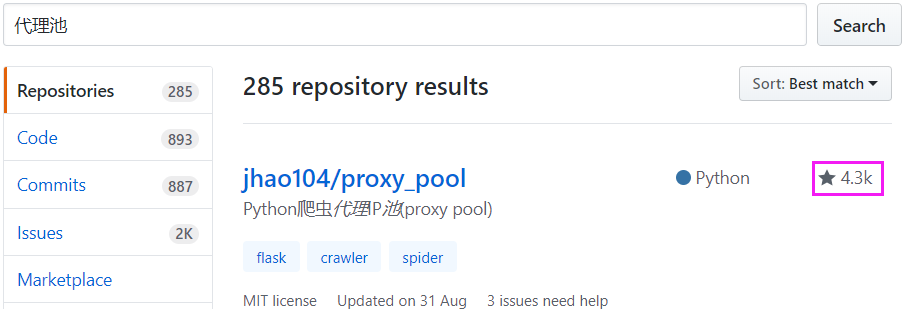

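This calls for an IP proxy pool: whenever a request passes through the middleware, it takes one IP address from the pool and attaches it to the request.

As long as the IP differs from request to request, some sites are unable to block you.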
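A minimal sketch of such a middleware follows. It assumes the proxy is applied by setting `request.meta['proxy']`, which is the key Scrapy's built-in HttpProxyMiddleware reads. The class name `RandomProxyMiddleware`, the pool contents (documentation-only addresses), and the `SimpleNamespace` request objects are hypothetical stand-ins so the example runs without Scrapy.

```python
import random
from types import SimpleNamespace


class RandomProxyMiddleware:
    """Sketch of a downloader middleware that picks a proxy for each request."""

    def __init__(self, proxies):
        self.proxies = list(proxies)

    def process_request(self, request, spider):
        # Attach one proxy from the pool to this request
        request.meta["proxy"] = random.choice(self.proxies)
        return None  # None lets the request continue to the next middleware


# Hypothetical pool; a real one would be filled from a proxy-pool service
POOL = ["http://192.0.2.10:8080", "http://192.0.2.11:8080", "http://192.0.2.12:8080"]

middleware = RandomProxyMiddleware(POOL)
requests = [SimpleNamespace(url="https://www.amazon.cn/", meta={}) for _ in range(5)]
for request in requests:
    middleware.process_request(request, spider=None)
    print(request.meta["proxy"])
```

random.choice does not guarantee a different proxy on consecutive requests; a round-robin iterator over the pool would be one way to enforce that if the target site requires it.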

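Changing the IP inside a middleware is the recommended approach. Why? At the moment spiders contains only one spider, the Amazon one.

Suppose there were also a Taobao spider that needed to rotate IPs. Should every spider implement the IP rotation in its own code?

That would duplicate code. If the IP change is done in a middleware, IPs are rotated automatically no matter how many spiders there are.

So: whenever the same operation has to be applied to all requests, use a middleware.

This applies not only to rotating IPs but also to cookie pools and account pools (buying a batch of real accounts).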

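In Django middleware, when a middleware returns an HttpResponse or raises an exception, the response goes straight back the way it came.

In Scrapy, by contrast, the response comes back from the innermost layer, and the process_response of every middleware is executed.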
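The difference can be illustrated with a small simulation. `Tracer` and `download` are hypothetical stand-ins that only approximate the downloader middleware chain described here: process_request runs in order until one returns a response, and then every process_response runs in reverse order, including those of middlewares whose process_request was skipped.

```python
class Tracer:
    """Hypothetical middleware that logs each hook it runs."""

    def __init__(self, name, log, short_circuit=False):
        self.name = name
        self.log = log
        self.short_circuit = short_circuit

    def process_request(self, request, spider):
        self.log.append("%s.process_request" % self.name)
        if self.short_circuit:
            return "cached response"  # stand-in for a Response object
        return None

    def process_response(self, request, response, spider):
        self.log.append("%s.process_response" % self.name)
        return response


def download(request, middlewares, log):
    """Approximate the request/response flow through the middleware chain."""
    response = None
    for mw in middlewares:  # ascending priority
        response = mw.process_request(request, None)
        if response is not None:
            break  # remaining process_request calls are skipped
    if response is None:
        log.append("downloader")
        response = "downloaded response"
    for mw in reversed(middlewares):  # every process_response runs
        response = mw.process_response(request, response, None)
    return response


normal_log = []
download("req", [Tracer("A", normal_log), Tracer("B", normal_log)], normal_log)

short_log = []
download("req", [Tracer("A", short_log, short_circuit=True), Tracer("B", short_log)], short_log)

print(normal_log)
print(short_log)
```

In the short-circuit run, B.process_response still executes even though B.process_request never did, which is the behaviour that differs from Django's "return the way it came" model.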

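Look at the Request object in the blue block above: the middleware wraps it for you, and the IP change is applied as part of that wrapping.

If an error occurs, the request is handed to the SCHEDULER, that is, the scheduler.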

Example:

Edit middlewares.py and add 2 downloader middlewares.

For lack of time, the steps are omitted...

The proxy pool project link is:

https://github.com/jhao104/proxy_pool

For usage, read its README.md first.

For lack of time, the steps are omitted...

2. settings configuration

    # -*- coding: utf-8 -*-

    # Scrapy settings for step8_king project
    #
    # For simplicity, this file contains only settings considered important or
    # commonly used. You can find more settings consulting the documentation:
    #
    #     http://doc.scrapy.org/en/latest/topics/settings.html
    #     http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
    #     http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

    # 1. Crawler (bot) name
    BOT_NAME = 'step8_king'

    # 2. Spider module paths
    SPIDER_MODULES = ['step8_king.spiders']
    NEWSPIDER_MODULE = 'step8_king.spiders'

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    # 3. Client User-Agent request header
    # USER_AGENT = 'step8_king (+http://www.yourdomain.com)'

    # Obey robots.txt rules
    # 4. Whether to obey the site's robots.txt rules
    # ROBOTSTXT_OBEY = False

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    # 5. Number of concurrent requests
    # CONCURRENT_REQUESTS = 4

    # Configure a delay for requests for the same website (default: 0)
    # See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay
    # See also autothrottle settings and docs
    # 6. Download delay in seconds
    # DOWNLOAD_DELAY = 2

    # The download delay setting will honor only one of:
    # 7. Concurrent requests per domain; the download delay is also applied per domain
    # CONCURRENT_REQUESTS_PER_DOMAIN = 2
    # Concurrent requests per IP; if set, CONCURRENT_REQUESTS_PER_DOMAIN is ignored
    # and the download delay is applied per IP instead
    # CONCURRENT_REQUESTS_PER_IP = 3

    # Disable cookies (enabled by default)
    # 8. Whether cookies are supported; cookies are handled through a cookiejar
    # COOKIES_ENABLED = True
    # COOKIES_DEBUG = True

    # Disable Telnet Console (enabled by default)
    # 9. Telnet console for inspecting and controlling the running crawler
    #    Connect with `telnet ip port`, then issue commands
    # TELNETCONSOLE_ENABLED = True
    # TELNETCONSOLE_HOST = '127.0.0.1'
    # TELNETCONSOLE_PORT = [6023,]

    # 10. Default request headers
    # Override the default request headers:
    # DEFAULT_REQUEST_HEADERS = {
    #     'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    #     'Accept-Language': 'en',
    # }

    # Configure item pipelines
    # See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html
    # 11. Pipelines that process the scraped items
    # ITEM_PIPELINES = {
    #     'step8_king.pipelines.JsonPipeline': 700,
    #     'step8_king.pipelines.FilePipeline': 500,
    # }

    # 12. Custom extensions, invoked through signals
    # Enable or disable extensions
    # See http://scrapy.readthedocs.org/en/latest/topics/extensions.html
    # EXTENSIONS = {
    #     # 'step8_king.extensions.MyExtension': 500,
    # }

    # 13. Maximum crawl depth; the current depth is available through meta; 0 means no limit
    # DEPTH_LIMIT = 3

    # 14. Crawl order: 0 means depth-first, LIFO (default); 1 means breadth-first, FIFO

    # Last in, first out: depth-first
    # DEPTH_PRIORITY = 0
    # SCHEDULER_DISK_QUEUE = 'scrapy.squeue.PickleLifoDiskQueue'
    # SCHEDULER_MEMORY_QUEUE = 'scrapy.squeue.LifoMemoryQueue'
    # First in, first out: breadth-first
    # DEPTH_PRIORITY = 1
    # SCHEDULER_DISK_QUEUE = 'scrapy.squeue.PickleFifoDiskQueue'
    # SCHEDULER_MEMORY_QUEUE = 'scrapy.squeue.FifoMemoryQueue'

    # 15. Scheduler queue
    # SCHEDULER = 'scrapy.core.scheduler.Scheduler'
    # from scrapy.core.scheduler import Scheduler

    # 16. URL de-duplication
    # DUPEFILTER_CLASS = 'step8_king.duplication.RepeatUrl'

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See http://doc.scrapy.org/en/latest/topics/autothrottle.html

    """
    17. Auto-throttle algorithm
        from scrapy.contrib.throttle import AutoThrottle
        Auto-throttle setup
        1. Get the minimum delay: DOWNLOAD_DELAY
        2. Get the maximum delay: AUTOTHROTTLE_MAX_DELAY
        3. Set the initial download delay: AUTOTHROTTLE_START_DELAY
        4. When a download finishes, take its "connection" latency, i.e. the time
           between opening the connection and receiving the response headers
        5. Used in the calculation: AUTOTHROTTLE_TARGET_CONCURRENCY
            target_delay = latency / self.target_concurrency
            new_delay = (slot.delay + target_delay) / 2.0  # slot.delay is the previous delay
            new_delay = max(target_delay, new_delay)
            new_delay = min(max(self.mindelay, new_delay), self.maxdelay)
            slot.delay = new_delay
    """

    # Turn on auto-throttling
    # AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    # AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    # AUTOTHROTTLE_MAX_DELAY = 10
    # The average number of requests Scrapy should be sending in parallel to each remote server
    # AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

    # Enable showing throttling stats for every response received:
    # AUTOTHROTTLE_DEBUG = True

    # Enable and configure HTTP caching (disabled by default)
    # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

    """
    18. Enable caching
        Caches requests that were already sent, together with their responses, for later reuse

        from scrapy.downloadermiddlewares.httpcache import HttpCacheMiddleware
        from scrapy.extensions.httpcache import DummyPolicy
        from scrapy.extensions.httpcache import FilesystemCacheStorage
    """
    # Whether to enable the cache
    # HTTPCACHE_ENABLED = True

    # Cache policy: cache every request; later requests are served straight from the cache
    # HTTPCACHE_POLICY = "scrapy.extensions.httpcache.DummyPolicy"
    # Cache policy: cache according to HTTP response headers such as Cache-Control and Last-Modified
    # HTTPCACHE_POLICY = "scrapy.extensions.httpcache.RFC2616Policy"

    # Cache expiration time
    # HTTPCACHE_EXPIRATION_SECS = 0

    # Cache directory
    # HTTPCACHE_DIR = 'httpcache'

    # HTTP status codes that are not cached
    # HTTPCACHE_IGNORE_HTTP_CODES = []

    # Cache storage backend
    # HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

    """
    19. Proxies; the default approach reads them from environment variables
        from scrapy.contrib.downloadermiddleware.httpproxy import HttpProxyMiddleware

        Option 1: use the default
            os.environ
            {
                http_proxy:http://root:woshiniba@192.168.11.11:9999/
                https_proxy:http://192.168.11.11:9999/
            }
        Option 2: use a custom downloader middleware

        import base64
        import random

        def to_bytes(text, encoding=None, errors='strict'):
            if isinstance(text, bytes):
                return text
            if not isinstance(text, str):
                raise TypeError('to_bytes must receive a str or bytes '
                                'object, got %s' % type(text).__name__)
            if encoding is None:
                encoding = 'utf-8'
            return text.encode(encoding, errors)

        class ProxyMiddleware(object):
            def process_request(self, request, spider):
                PROXIES = [
                    {'ip_port': '111.11.228.75:80', 'user_pass': ''},
                    {'ip_port': '120.198.243.22:80', 'user_pass': ''},
                    {'ip_port': '111.8.60.9:8123', 'user_pass': ''},
                    {'ip_port': '101.71.27.120:80', 'user_pass': ''},
                    {'ip_port': '122.96.59.104:80', 'user_pass': ''},
                    {'ip_port': '122.224.249.122:8088', 'user_pass': ''},
                ]
                proxy = random.choice(PROXIES)
                request.meta['proxy'] = "http://%s" % proxy['ip_port']
                if proxy['user_pass']:
                    # b64encode replaces base64.encodestring, which Python 3.9 removed
                    encoded_user_pass = base64.b64encode(to_bytes(proxy['user_pass']))
                    request.headers['Proxy-Authorization'] = b'Basic ' + encoded_user_pass
                    print("**************ProxyMiddleware have pass************" + proxy['ip_port'])
                else:
                    print("**************ProxyMiddleware no pass************" + proxy['ip_port'])

        DOWNLOADER_MIDDLEWARES = {
            'step8_king.middlewares.ProxyMiddleware': 500,
        }
    """

    """
    20. HTTPS access
        There are two cases for HTTPS:
        1. The target site uses a trusted certificate (supported by default)
            DOWNLOADER_HTTPCLIENTFACTORY = "scrapy.core.downloader.webclient.ScrapyHTTPClientFactory"
            DOWNLOADER_CLIENTCONTEXTFACTORY = "scrapy.core.downloader.contextfactory.ScrapyClientContextFactory"

        2. The target site uses a custom certificate
            DOWNLOADER_HTTPCLIENTFACTORY = "scrapy.core.downloader.webclient.ScrapyHTTPClientFactory"
            DOWNLOADER_CLIENTCONTEXTFACTORY = "step8_king.https.MySSLFactory"

            # https.py
            from scrapy.core.downloader.contextfactory import ScrapyClientContextFactory
            from twisted.internet.ssl import (optionsForClientTLS, CertificateOptions, PrivateCertificate)

            class MySSLFactory(ScrapyClientContextFactory):
                def getCertificateOptions(self):
                    from OpenSSL import crypto
                    v1 = crypto.load_privatekey(crypto.FILETYPE_PEM, open('/Users/wupeiqi/client.key.unsecure', mode='r').read())
                    v2 = crypto.load_certificate(crypto.FILETYPE_PEM, open('/Users/wupeiqi/client.pem', mode='r').read())
                    return CertificateOptions(
                        privateKey=v1,  # PKey object
                        certificate=v2,  # X509 object
                        verify=False,
                        method=getattr(self, 'method', getattr(self, '_ssl_method', None))
                    )
        Other:
            Related classes
                scrapy.core.downloader.handlers.http.HttpDownloadHandler
                scrapy.core.downloader.webclient.ScrapyHTTPClientFactory
                scrapy.core.downloader.contextfactory.ScrapyClientContextFactory
            Related settings
                DOWNLOADER_HTTPCLIENTFACTORY
                DOWNLOADER_CLIENTCONTEXTFACTORY
    """

    """
    21. Spider middleware
        class SpiderMiddleware(object):

            def process_spider_input(self, response, spider):
                '''
                Runs after the download completes, before the response is handed to parse
                :param response:
                :param spider:
                :return:
                '''
                pass

            def process_spider_output(self, response, result, spider):
                '''
                Called with what the spider returns after it has processed the response
                :param response:
                :param result:
                :param spider:
                :return: must return an iterable of Request or Item objects
                '''
                return result

            def process_spider_exception(self, response, exception, spider):
                '''
                Called when an exception is raised
                :param response:
                :param exception:
                :param spider:
                :return: None: pass the exception on to the later middlewares;
                         an iterable of Request or Item objects: handed to the scheduler or the pipelines
                '''
                return None

            def process_start_requests(self, start_requests, spider):
                '''
                Called when the spider starts
                :param start_requests:
                :param spider:
                :return: an iterable of Request objects
                '''
                return start_requests

        Built-in spider middlewares (legacy scrapy.contrib paths; newer Scrapy
        releases use scrapy.spidermiddlewares instead):
            'scrapy.contrib.spidermiddleware.httperror.HttpErrorMiddleware': 50,
            'scrapy.contrib.spidermiddleware.offsite.OffsiteMiddleware': 500,
            'scrapy.contrib.spidermiddleware.referer.RefererMiddleware': 700,
            'scrapy.contrib.spidermiddleware.urllength.UrlLengthMiddleware': 800,
            'scrapy.contrib.spidermiddleware.depth.DepthMiddleware': 900,
    """
    # from scrapy.contrib.spidermiddleware.referer import RefererMiddleware
    # Enable or disable spider middlewares
    # See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html
    SPIDER_MIDDLEWARES = {
        # 'step8_king.middlewares.SpiderMiddleware': 543,
    }

    """
    22. Downloader middleware
        class DownMiddleware1(object):
            def process_request(self, request, spider):
                '''
                Called for every request that needs downloading, as it passes through
                the process_request of each downloader middleware
                :param request:
                :param spider:
                :return:
                    None: continue to the next middleware and then download;
                    Response object: stop running process_request and start running process_response;
                    Request object: stop the middleware chain and hand the Request back to the scheduler;
                    raise IgnoreRequest: stop running process_request and start running process_exception
                '''
                pass

            def process_response(self, request, response, spider):
                '''
                Called with the response on its way back from the downloader
                :param request:
                :param response:
                :param spider:
                :return:
                    Response object: passed on to the process_response of the other middlewares;
                    Request object: stop the middleware chain; the request is rescheduled for download;
                    raise IgnoreRequest: Request.errback is called
                '''
                print('response1')
                return response

            def process_exception(self, request, exception, spider):
                '''
                Called when the download handler or a process_request() (downloader
                middleware) raises an exception
                :param request:
                :param exception:
                :param spider:
                :return:
                    None: pass the exception on to the later middlewares;
                    Response object: stop calling the later process_exception methods;
                    Request object: stop the middleware chain; the request is rescheduled for download
                '''
                return None

        Default downloader middlewares (legacy scrapy.contrib paths; newer Scrapy
        releases use scrapy.downloadermiddlewares instead):
        {
            'scrapy.contrib.downloadermiddleware.robotstxt.RobotsTxtMiddleware': 100,
            'scrapy.contrib.downloadermiddleware.httpauth.HttpAuthMiddleware': 300,
            'scrapy.contrib.downloadermiddleware.downloadtimeout.DownloadTimeoutMiddleware': 350,
            'scrapy.contrib.downloadermiddleware.useragent.UserAgentMiddleware': 400,
            'scrapy.contrib.downloadermiddleware.retry.RetryMiddleware': 500,
            'scrapy.contrib.downloadermiddleware.defaultheaders.DefaultHeadersMiddleware': 550,
            'scrapy.contrib.downloadermiddleware.redirect.MetaRefreshMiddleware': 580,
            'scrapy.contrib.downloadermiddleware.httpcompression.HttpCompressionMiddleware': 590,
            'scrapy.contrib.downloadermiddleware.redirect.RedirectMiddleware': 600,
            'scrapy.contrib.downloadermiddleware.cookies.CookiesMiddleware': 700,
            'scrapy.contrib.downloadermiddleware.httpproxy.HttpProxyMiddleware': 750,
            'scrapy.contrib.downloadermiddleware.chunked.ChunkedTransferMiddleware': 830,
            'scrapy.contrib.downloadermiddleware.stats.DownloaderStats': 850,
            'scrapy.contrib.downloadermiddleware.httpcache.HttpCacheMiddleware': 900,
        }
    """
    # from scrapy.contrib.downloadermiddleware.httpauth import HttpAuthMiddleware
    # Enable or disable downloader middlewares
    # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
    # DOWNLOADER_MIDDLEWARES = {
    #     'step8_king.middlewares.DownMiddleware1': 100,
    #     'step8_king.middlewares.DownMiddleware2': 500,
    # }
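The auto-throttle formula quoted in item 17 of the settings notes can be tried out as a plain function. This sketch mirrors only those quoted lines; the function name `next_delay` and the sample latencies are assumptions for illustration, and the real extension may apply further rules that the notes do not show. The delay values reuse the commented-out settings above (DOWNLOAD_DELAY = 2, AUTOTHROTTLE_START_DELAY = 5, AUTOTHROTTLE_MAX_DELAY = 10, AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0).

```python
def next_delay(prev_delay, latency, target_concurrency, min_delay, max_delay):
    """Compute the next download delay from the formula quoted in the notes."""
    target_delay = latency / target_concurrency
    new_delay = (prev_delay + target_delay) / 2.0  # average with the previous delay
    new_delay = max(target_delay, new_delay)       # never go below the target delay
    return min(max(min_delay, new_delay), max_delay)  # clamp to [min_delay, max_delay]


# Fast server: latency stays at 0.5 s, so the delay falls toward the minimum
delay = 5.0  # initial delay
delays = []
for latency in (0.5, 0.5, 0.5):
    delay = next_delay(delay, latency, target_concurrency=1.0, min_delay=2.0, max_delay=10.0)
    delays.append(delay)
print(delays)

# Slow server: a 30 s latency pushes the delay up to the maximum
slow = next_delay(2.0, 30.0, target_concurrency=1.0, min_delay=2.0, max_delay=10.0)
print(slow)
```

With these inputs the delay goes 2.75, 2.0, 2.0 for the fast server and is capped at 10.0 for the slow one, showing how the minimum and maximum delays bound the adjustment.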

3. Amazon project

For the complete code, see:

To be continued...