1. Introduction to Logstash

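Logstash is a data-processing tool whose main purpose is collecting and processing log data. The whole stack can be thought of as an MVC model: Logstash is the controller, Elasticsearch is the model, and Kibana is the view.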

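Data is first sent to Logstash, which filters it and formats it (converting it to JSON). Logstash then passes it to Elasticsearch, which stores it and builds search indexes. Kibana provides the front-end pages for searching and chart visualization; it visualizes the data returned by Elasticsearch's API. Elasticsearch is written in Java, and Logstash runs on the JVM (it is written largely in JRuby). Kibana is built on Node.js.

Architecture diagram: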

When running Logstash, you mainly configure three sections:

input: where the data comes from. Common inputs: file, syslog, redis, beats (e.g. Filebeat).

filter: processes and filters the data. Complex processing logic is not recommended here, and this stage is optional. Common filters: grok, mutate, drop, clone, geoip.

output: where the data is sent. Common outputs: elasticsearch, file, graphite, statsd.
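The following toy Python model makes the three stages concrete by showing an input → filter → output flow. It is a sketch under assumptions, not how Logstash is implemented. The stage functions (`stdin_input`, `drop_filter`, `list_output`) are hypothetical. The event fields (`message`, `@version`, `@timestamp`, `host`) simply mirror the ones that appear in the rubydebug output later in this post.

```python
import socket
from datetime import datetime, timezone


def stdin_input(lines):
    """Hypothetical input stage: wrap each raw line in an event dict."""
    for line in lines:
        yield {
            "message": line.rstrip("\n"),
            "@version": "1",
            "@timestamp": datetime.now(timezone.utc).isoformat(),
            "host": socket.gethostname(),
        }


def drop_filter(events, predicate):
    """Hypothetical filter stage: discard events for which predicate(event) is True."""
    for event in events:
        if not predicate(event):
            yield event


def list_output(events):
    """Hypothetical output stage: collect events into a list instead of printing them."""
    return list(events)


def run_pipeline(lines):
    events = stdin_input(lines)
    # The filter stage is optional; here it drops blank lines, similar in spirit to the drop filter.
    events = drop_filter(events, lambda e: e["message"].strip() == "")
    return list_output(events)


if __name__ == "__main__":
    for event in run_pipeline(["hello logstash\n", "\n", "second line\n"]):
        print(event)
```

The stages are chained generators, so events flow through one at a time rather than being buffered in full between stages.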

There are four types of plugins:

input, filter, codec, output

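Value types for configuration options:

Array: [item1, item2, ...]

Boolean: true, false

Bytes: a string representing a quantity of bytes, e.g. "10MiB"

Codec: the name of a codec (encoder/decoder), e.g. rubydebug

Hash: key => value pairs

Number: a numeric value (integer or floating point)

Password: a string whose value is not logged or printed

Path: a file system path

String: a character string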

2. Installing Logstash

(1) Installation

logstash-1.5.4-1.noarch.rpm

Download URL: https://download.elastic.co/logstash/logstash/packages/centos/logstash-1.5.4-1.noarch.rpm

Install the JDK:

yum -y install java-1.8.0-openjdk.x86_64

yum -y install java-1.8.0-openjdk-devel.x86_64

yum -y install java-1.8.0-openjdk-headless.x86_64

Install Logstash on a newly added host, node4:

yum -y install logstash-1.5.4-1.noarch.rpm

(2) Testing:

Check where Logstash was installed and how PATH is set:

    [root@node4 ~]# ls /opt/                      # installed under /opt by default
    logstash
    [root@node4 ~]# cat /etc/profile.d/logstash.sh
    export PATH=/opt/logstash/bin:$PATH
    [root@node4 ~]# cd /etc/logstash/conf.d/      # configuration file directory

Write a config file, sample.conf, with the following content, then check its syntax:

    [root@node4 conf.d]# vim sample.conf
    input {
        stdin {}
    }
    output {
        stdout {
            codec => rubydebug
        }
    }
    [root@node4 conf.d]# logstash -f /etc/logstash/conf.d/sample.conf --configtest    # check config syntax

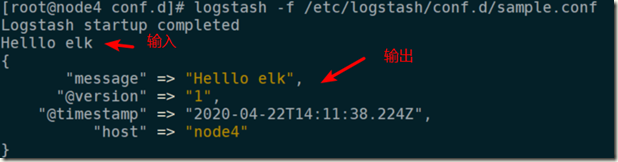

Run it:

[root@node4 conf.d]# logstash -f /etc/logstash/conf.d/sample.conf

3. Details

(1) input

File

file: reads a stream of events from the specified files, using FileWatch (a Ruby gem) to watch them for changes. The .sincedb file records the inode, major device number, minor device number and current read position (pos) of each watched file, so Logstash can resume where it left off. For the same reason, start_position => "beginning" only takes effect for files that do not yet have a sincedb entry.

    #-----
    # read logs from /var/log/messages
    [root@node4 conf.d]# cat filesample.conf
    input {
        file {
            path => ["/var/log/messages"]
            type => "system"
            start_position => "beginning"
        }
    }
    output {
        stdout {
            codec => rubydebug
        }
    }
    #-----
    # the events are printed to standard output
    [root@node4 conf.d]# logstash -f /etc/logstash/conf.d/filesample.conf --configtest
    [root@node4 conf.d]# logstash -f /etc/logstash/conf.d/filesample.conf | head -n 8
    {
        "message" => "Mar 29 04:09:26 localhost journal: Runtime journal is using 6.0M .",
        "@version" => "1",
        "@timestamp" => "2020-04-22T15:09:19.310Z",
        "host" => "node4",
        "path" => "/var/log/messages",
        "type" => "system"
    }
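The sincedb idea can be sketched in Python as follows. For each file identity (inode plus device major/minor numbers), remember the byte offset already read, and on the next pass resume from that offset. This is a simplified model under stated assumptions:

- The in-memory dict stands in for the `.sincedb` file.
- `read_new_lines` is a hypothetical helper.
- Log rotation and truncation handling are omitted.
- `os.major`/`os.minor` are Unix-only.

```python
import os


def file_key(path):
    """Identify a file the way sincedb does: inode plus device major/minor numbers."""
    st = os.stat(path)
    return (st.st_ino, os.major(st.st_dev), os.minor(st.st_dev))


def read_new_lines(path, sincedb, start_position="end"):
    """Return complete lines appended since the last call and advance sincedb[key].

    Only a file with no sincedb entry looks at start_position: "beginning" starts at
    offset 0, "end" skips the existing content (loosely mirroring the file input option).
    """
    key = file_key(path)
    if key not in sincedb:
        sincedb[key] = 0 if start_position == "beginning" else os.path.getsize(path)
    with open(path, "rb") as f:
        f.seek(sincedb[key])
        data = f.read()
    # Consume only complete lines; a partial trailing line is left for the next call.
    complete, sep, _partial = data.rpartition(b"\n")
    if not sep:
        return []
    sincedb[key] += len(complete) + 1
    return [line.decode("utf-8", "replace") for line in complete.split(b"\n")]
```

Calling `read_new_lines` repeatedly on the same file returns only what was appended in between. This is the behavior the sincedb position provides across Logstash restarts.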

udp

udp: reads messages from the network over UDP. Its required parameter is port, the port to listen on; host specifies the address to listen on.

In this example, collectd is installed on node3 and sends system metrics to node4, where Logstash listens for them. collectd is a daemon that periodically collects performance metrics from the system and from applications.

    #---- node3
    [root@node3 ~]# yum -y install collectd
    # /etc/collectd.conf is the config file; each LoadPlugin line enables a plugin (i.e. decides what data is collected)
    [root@node3 ~]# grep -v "^#" /etc/collectd.conf | grep -v "^$"
    Hostname "node3"
    LoadPlugin syslog
    LoadPlugin cpu
    LoadPlugin df
    LoadPlugin interface
    LoadPlugin load
    LoadPlugin memory
    LoadPlugin network
    <Plugin network>                        # some plugins need configuration; 192.168.3.114 is node4
        <Server "192.168.3.114" "25826">    # destination host and (UDP) port
        </Server>
    </Plugin>
    Include "/etc/collectd.d"
    [root@node3 ~]# systemctl start collectd
    [root@node3 ~]# systemctl status collectd

    #---- node4
    [root@node4 conf.d]# cat udpsample.conf
    input {
        udp {
            port => 25826
            codec => collectd {}
            type => "collectd"
        }
    }
    output {
        stdout {
            codec => rubydebug
        }
    }
    #----
    [root@node4 conf.d]# logstash -f /etc/logstash/conf.d/udpsample.conf
    Logstash startup completed
    {
        "host" => "node3",
        "@timestamp" => "2020-04-22T15:55:08.119Z",
        "plugin" => "df",
        "plugin_instance" => "run-user-0",
        "collectd_type" => "df_complex",
        "type_instance" => "free",
        "value" => 190799872.0,
        "@version" => "1",
        "type" => "collectd"
    }
    {
        "host" => "node3",
        "@timestamp" => "2020-04-22T15:55:08.119Z",
        "type_instance" => "nice",
        "plugin" => "cpu",
        "plugin_instance" => "0",
        "collectd_type" => "cpu",
        "value" => 62,
        "@version" => "1",
        "type" => "collectd"
    }
    ......
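At its core, a UDP input binds a datagram socket to a port and treats each datagram as one message. The sketch below uses only Python's `socket` module: it sends a plain-text datagram to itself over loopback and receives it. It does not decode the binary collectd protocol, which the `collectd` codec handles in Logstash. The helper names are hypothetical, and port 0 (let the OS choose) is used so the sketch cannot clash with a real listener on 25826.

```python
import socket


def receive_one(sock, bufsize=65535):
    """Receive one datagram and wrap it as an event-like dict."""
    data, (addr, _port) = sock.recvfrom(bufsize)
    return {"message": data.decode("utf-8", "replace"), "host": addr}


def demo():
    # Listener side (like the udp input): bind to an address and a port.
    listener = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    listener.settimeout(2.0)
    port = listener.getsockname()[1]

    # Sender side (playing the role of collectd's network plugin, but sending plain text).
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sender.sendto(b"cpu.0.nice 62", ("127.0.0.1", port))
        return receive_one(listener)
    finally:
        sender.close()
        listener.close()


if __name__ == "__main__":
    print(demo())
```

There is no connection or acknowledgement: if nothing is listening when collectd sends, the datagram is simply lost. This is worth keeping in mind when relying on UDP inputs.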

redis

Reads data from Redis; both Redis channels and lists are supported.
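The two modes have different delivery semantics:

- With a list, producers push entries onto a queue and each entry is popped by exactly one consumer.
- With a channel (pub/sub), each published message goes to every subscriber listening at that moment, and it is lost if nobody is subscribed.

The toy model below shows the difference without a Redis server. The classes are hypothetical stand-ins, not a Redis client API.

```python
from collections import deque


class ListQueue:
    """Stand-in for a Redis list used as a queue: producers push, consumers pop."""

    def __init__(self):
        self._items = deque()

    def rpush(self, item):
        self._items.append(item)

    def lpop(self):
        # Each entry is handed to exactly one consumer, then it is gone.
        return self._items.popleft() if self._items else None


class Channel:
    """Stand-in for Redis pub/sub: every current subscriber gets its own copy."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self):
        inbox = []
        self._subscribers.append(inbox)
        return inbox

    def publish(self, message):
        for inbox in self._subscribers:
            inbox.append(message)
        # Like Redis PUBLISH, report how many subscribers received the message.
        return len(self._subscribers)
```

A list buffers events while Logstash is down, so it is the safer choice when no event may be lost. A channel suits fan-out to several consumers.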

(2) filter

grok

grok: parses text and gives it structure. It is currently the best way in Logstash to turn unstructured log data into structured, queryable data.

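grok works by combining predefined regular expressions (patterns) to match and split text, mapping the matched pieces to named fields. It is typically used to preprocess log data.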

It can handle syslog, Apache, nginx and many other log formats.

grok ships with about 120 predefined patterns that can be used directly. They are defined in the following file:

/opt/logstash/vendor/bundle/jruby/1.9/gems/logstash-patterns-core-0.3.0/patterns/grok-patterns

You can also define your own patterns. Either append them to the file above, or create a dedicated file in /opt/logstash/vendor/bundle/jruby/1.9/gems/logstash-patterns-core-0.3.0/patterns.
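Each line of a grok pattern file has the form `NAME REGEX`: a pattern name, whitespace, then the regular expression. Lines starting with `#` are comments. Below is a minimal loader that assumes exactly that format; `load_patterns` is a hypothetical helper, useful for checking what a custom file defines.

```python
def load_patterns(text):
    """Parse grok-style pattern definitions: one 'NAME REGEX' pair per line."""
    patterns = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        parts = line.split(None, 1)  # split on the first run of whitespace only
        if len(parts) == 2:
            patterns[parts[0]] = parts[1]
    return patterns


if __name__ == "__main__":
    sample = "# nginx\nNGUSER %{NGUSERNAME}\n"
    print(load_patterns(sample))
```

Splitting only on the first whitespace matters because the regex part itself usually contains spaces.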

Example:

    [root@node4 conf.d]# cat groksample.conf
    input {
        stdin {}
    }
    filter {
        grok {
            match => { "message" => "%{IP:clientip} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}" }
        }
    }
    output {
        stdout {
            codec => rubydebug
        }
    }

In %{IP:clientip}, IP is the name of the pattern used for matching, and clientip is the name of the output field that receives the matched text:

%{IP:clientip} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}
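Conceptually, grok replaces every `%{SYNTAX:SEMANTIC}` with the regex named SYNTAX, wrapped in a capture group named SEMANTIC, and then matches the result against the message. The Python sketch below does this with `re`, under these assumptions:

- The pattern definitions are simplified stand-ins (IPv4 only for `IP`, a loose `URIPATHPARAM`), not the real entries from grok-patterns.
- Type suffixes such as `:int` are not handled.
- The helper names are hypothetical.

```python
import re

# Simplified stand-ins for a few grok patterns; the real definitions are more complete.
PATTERNS = {
    "IP": r"(?:\d{1,3}\.){3}\d{1,3}",
    "WORD": r"\b\w+\b",
    "URIPATHPARAM": r"/[^\s?]*(?:\?\S*)?",
    "NUMBER": r"[+-]?(?:\d+(?:\.\d+)?|\.\d+)",
}

GROK_REF = re.compile(r"%\{(\w+)(?::(\w+))?\}")


def grok_compile(expr, patterns=PATTERNS):
    """Turn %{SYNTAX:SEMANTIC} references into named regex groups."""
    def expand(m):
        syntax, semantic = m.group(1), m.group(2)
        # Pattern bodies may reference other patterns, so expand them recursively.
        body = GROK_REF.sub(expand, patterns[syntax])
        return f"(?P<{semantic}>{body})" if semantic else f"(?:{body})"
    return re.compile(GROK_REF.sub(expand, expr))


def grok_match(expr, message):
    """Return the captured fields as a dict, or None if the message does not match."""
    m = grok_compile(expr).search(message)
    return m.groupdict() if m else None


if __name__ == "__main__":
    expr = "%{IP:clientip} %{WORD:method} %{URIPATHPARAM:request} %{NUMBER:bytes} %{NUMBER:duration}"
    print(grok_match(expr, "55.3.244.1 GET /index.html 15824 0.043"))
```

As in grok's default behavior, every captured value comes back as a string; numbers are not converted.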

Check the config and start:

[root@node4 conf.d]# logstash -f /etc/logstash/conf.d/groksample.conf --configtest

[root@node4 conf.d]# logstash -f /etc/logstash/conf.d/groksample.conf

Custom grok patterns:

grok patterns are written as regular expressions, and their metacharacters are much the same as in other regex-based tools such as awk, sed, grep and PCRE.

A custom pattern for nginx logs:

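Append a pattern for parsing nginx access logs to the end of the pattern file. Each definition must stay on a single line. Literal characters that are also regex metacharacters, such as the square brackets around the timestamp, must be escaped with a backslash.

    [root@node4 ~]# vim /opt/logstash/vendor/bundle/jruby/1.9/gems/logstash-patterns-core-0.3.0/patterns/grok-patterns
    # nginx
    NGUSERNAME [a-zA-Z\.\@\-\+_%]+
    NGUSER %{NGUSERNAME}
    NGINXACCESS %{IPORHOST:clientip} - %{NOTSPACE:remote_user} \[%{HTTPDATE:timestamp}\] "(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:httpversion})?|%{DATA:rawrequest})" %{NUMBER:response} (?:%{NUMBER:bytes}|-) %{QS:referrer} %{QS:agent} %{NOTSPACE:http_x_forwarded_for}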
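Below is a hand-translated Python `re` equivalent of the NGINXACCESS pattern, handy for testing a log line outside Logstash. The grok sub-patterns are replaced by simplified regexes:

- `\S+` for IPORHOST and NOTSPACE
- `"[^"]*"` for QS (the quotes are kept in the value, as with QS)
- `[^\]]+` for HTTPDATE

The `parse_nginx` helper and both sample lines are made up, written in nginx's default "main" log format.

```python
import re

NGINX_ACCESS = re.compile(
    r'(?P<clientip>\S+) - (?P<remote_user>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '  # brackets escaped: they are literal here
    r'"(?:(?P<verb>\w+) (?P<request>\S+)(?: HTTP/(?P<httpversion>[\d.]+))?|(?P<rawrequest>[^"]*))" '
    r'(?P<response>\d+) (?:(?P<bytes>\d+)|-) '
    r'(?P<referrer>"[^"]*") (?P<agent>"[^"]*") '
    r'(?P<http_x_forwarded_for>\S+)'
)


def parse_nginx(line):
    """Return the named fields of one access-log line, or None if it does not match."""
    m = NGINX_ACCESS.fullmatch(line.rstrip("\n"))
    return m.groupdict() if m else None


if __name__ == "__main__":
    sample = '192.168.3.1 - - [22/Apr/2020:23:30:01 +0800] "GET / HTTP/1.1" 200 3700 "-" "Mozilla/5.0" "-"'
    print(parse_nginx(sample))
```

When bytes is logged as `-`, the `bytes` field is absent (None), which mirrors the `(?:%{NUMBER:bytes}|-)` alternative.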

Install nginx on node4 and generate some access log entries:

    yum -y install nginx
    systemctl start nginx

Then visit http://192.168.3.114/ in a browser a few times to produce log entries:

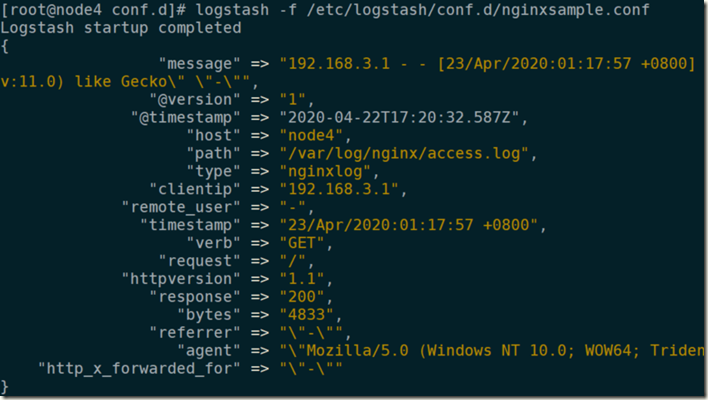

    #----
    [root@node4 conf.d]# cat nginxsample.conf
    input {
        file {
            path => ["/var/log/nginx/access.log"]
            type => "nginxlog"
            start_position => "beginning"
        }
    }
    filter {
        grok {
            match => { "message" => "%{NGINXACCESS}" }    # just reference the custom pattern by name
        }
    }
    output {
        stdout {
            codec => rubydebug
        }
    }

Check the config and start:

[root@node4 conf.d]# logstash -f /etc/logstash/conf.d/nginxsample.conf --configtest

[root@node4 conf.d]# logstash -f /etc/logstash/conf.d/nginxsample.conf

The nginx log lines have now been parsed into fields:

(3) output

stdout

elasticsearch

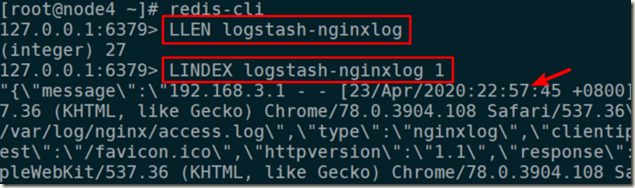

Sending the collected nginx logs to Redis:

Install Redis on node4:

[root@node4 ~]# yum -y install redis

In /etc/redis.conf, set bind 0.0.0.0, then start Redis:

[root@node4 ~]# systemctl start redis.service

Logstash config file:

cd /etc/logstash/conf.d

    [root@node4 conf.d]# vim nglogredissample.conf
    input {
        file {
            path => ["/var/log/nginx/access.log"]
            type => "nginxlog"
            start_position => "beginning"
        }
    }
    filter {
        grok {
            match => { "message" => "%{NGINXACCESS}" }
        }
    }
    output {
        redis {
            port => "6379"              # Redis port
            host => ["127.0.0.1"]       # Redis host(s)
            data_type => "list"         # store events in a Redis list
            key => "logstash-%{type}"   # list name; %{type} is replaced by the event's type field
        }
    }
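The `key => "logstash-%{type}"` setting uses Logstash's field-reference syntax: `%{type}` is replaced with the event's `type` field. Events from this config therefore end up in the Redis list `logstash-nginxlog`. Below is a rough Python model of that substitution, with these assumptions:

- The `sprintf` helper is hypothetical.
- It handles plain field names only, not date formats such as `%{+YYYY.MM.dd}`.
- Like Logstash, it leaves a reference to a missing field as literal text.

```python
import re

FIELD_REF = re.compile(r"%\{([^}]+)\}")


def sprintf(template, event):
    """Replace %{field} with event[field]; keep the reference as-is if the field is missing."""
    def substitute(m):
        name = m.group(1)
        return str(event[name]) if name in event else m.group(0)
    return FIELD_REF.sub(substitute, template)


if __name__ == "__main__":
    print(sprintf("logstash-%{type}", {"type": "nginxlog"}))
```

The literal fallback explains a common surprise: if an event lacks a `type` field, it goes to a list literally named `logstash-%{type}`.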

Check the config and start:

[root@node4 conf.d]# logstash -f ./nglogredissample.conf --configtest

[root@node4 conf.d]# logstash -f ./nglogredissample.conf    # refresh the nginx page a few times and the log entries go into Redis