Credit scorecard modeling in Python (with code, recorded by the author)

For machine learning collaboration projects, contact:

QQ:231469242

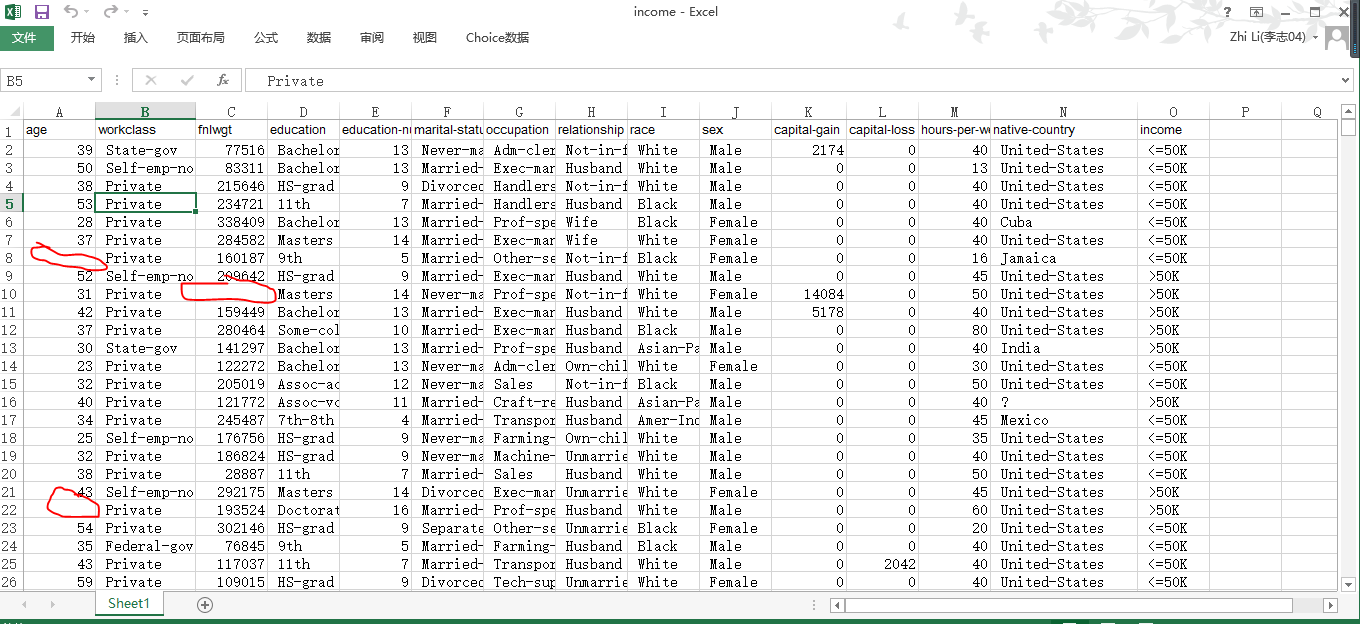

Data source

https://archive.ics.uci.edu/ml/machine-learning-databases/adult/adult.names

fnlwgt (final weight)

Description of fnlwgt (final weight)

The weights on the CPS files are controlled to independent estimates of the civilian noninstitutional population of the US. These are prepared monthly for us by Population Division here at the Census Bureau. We use 3 sets of controls. These are:

1. A single cell estimate of the population 16+ for each state.
2. Controls for Hispanic Origin by age and sex.
3. Controls by Race, age and sex.

We use all three sets of controls in our weighting program and "rake" through them 6 times so that by the end we come back to all the controls we used.

The term estimate refers to population totals derived from CPS by creating "weighted tallies" of any specified socio-economic characteristics of the population.

People with similar demographic characteristics should have similar weights. There is one important caveat to remember about this statement. That is that since the CPS sample is actually a collection of 51 state samples, each with its own probability of selection, the statement only applies within state.
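Since `fnlwgt` states how many people in the population a sampled row stands for, population-level shares are computed as weighted tallies rather than plain row counts. The following is a minimal sketch with pandas; the four rows are invented for illustration and are not taken from the dataset.

```python
import pandas as pd

# Hypothetical toy rows: the column names follow the dataset,
# but the values are invented for illustration.
df = pd.DataFrame({
    "sex": ["Male", "Female", "Male", "Female"],
    "income": [">50K", "<=50K", "<=50K", ">50K"],
    "fnlwgt": [1000, 3000, 2000, 2000],
})

# Unweighted tally: every row counts once.
unweighted_share = (df["income"] == ">50K").mean()

# Weighted tally: every row counts fnlwgt times.
high_income_weight = df.loc[df["income"] == ">50K", "fnlwgt"].sum()
weighted_share = high_income_weight / df["fnlwgt"].sum()

# Weighted tally by group: estimated population per sex.
population_by_sex = df.groupby("sex")["fnlwgt"].sum()

print("unweighted share >50K:", unweighted_share)
print("weighted share >50K:  ", weighted_share)
print(population_by_sex.to_dict())
```

In this toy table the two shares differ (0.5 versus 0.375), which is the reason the weight column matters whenever the goal is a population estimate rather than a model fit.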


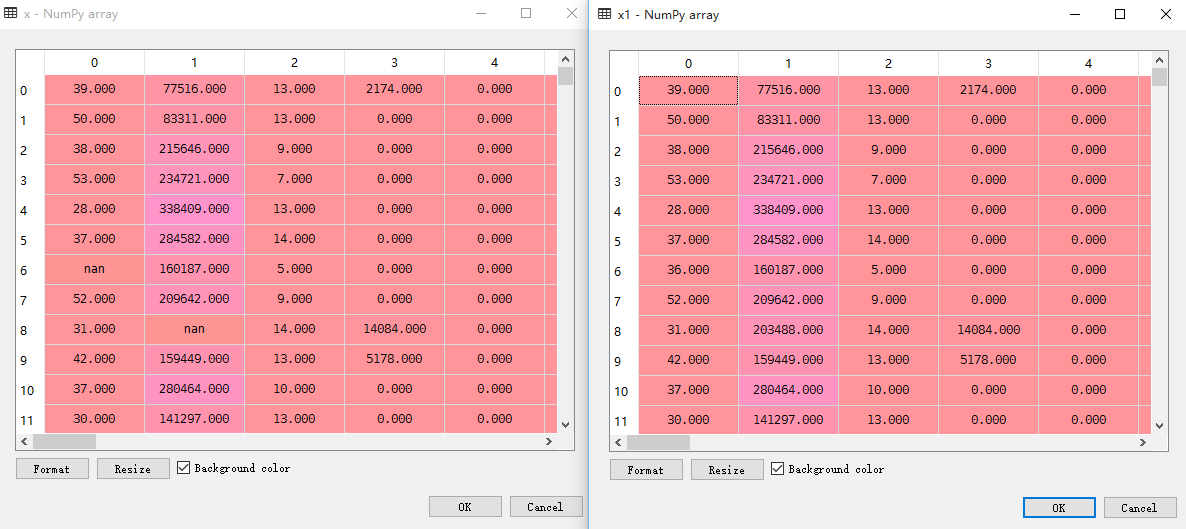

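Comparing the original data with the data after imputation

A few values were deleted from the dataset to act as missing data.

In the end, logistic regression reaches an accuracy of about 80% (the original note says "8%", presumably a typo).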
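To see what the imputation step does, it can help to run it on a tiny array first. The sketch below assumes a recent scikit-learn, where `SimpleImputer` from `sklearn.impute` replaces the older `Imputer` class, and uses the same `most_frequent` strategy as the scripts in this post. The numbers are invented for illustration.

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Toy matrix with two numeric columns; np.nan marks the deleted values.
x = np.array([
    [39.0, 40.0],
    [50.0, np.nan],
    [np.nan, 40.0],
    [50.0, 45.0],
    [50.0, 40.0],
])

# Fill every missing entry with the most frequent value of its column.
imp = SimpleImputer(missing_values=np.nan, strategy="most_frequent")
x1 = imp.fit_transform(x)

print("missing before:", int(np.isnan(x).sum()))
print("missing after: ", int(np.isnan(x1).sum()))
print("fill value per column:", imp.statistics_)
print(x1)
```

The fitted `statistics_` attribute holds the value used for each column, so it is a quick way to check what was actually written into the gaps.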

# -*- coding: utf-8 -*-

"""

Created on Tue Aug 14 10:34:11 2018

@author: zhi.li04

Dummy variables can absorb missing values in categorical variables.

Missing values in continuous variables must be filled in with an imputer.

"""

import numpy as np

import pandas as pd

from sklearn.impute import SimpleImputer

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import train_test_split

# Input file

readFileName="income.xlsx"

# Read the Excel file

data=pd.read_excel(readFileName)

#data=data[['age','workclass','education','sex','hours-per-week','occupation','income']]

# One-hot encode the categorical columns

data_dummies=pd.get_dummies(data)

# Save to Excel

#data_dummies.to_excel("data_dummies.xlsx")

print('features after one-hot encoding:\n',list(data_dummies.columns))

#features_test=data_dummies.loc[:,"age":'occupation_Transport-moving']

# .ix was removed from pandas; .loc does the same label-based slicing

features=data_dummies.loc[:,"age":'native-country_ Yugoslavia']

# Cast to float so numeric and dummy columns share one dtype

x=features.values.astype(float)

# Handle missing data (SimpleImputer replaces the removed Imputer class)

imp = SimpleImputer(missing_values=np.nan, strategy='most_frequent')

imp.fit(x)

# x1 holds the values after imputation

x1=imp.transform(x)

y=data_dummies['income_ >50K'].values.astype(int)

x_train,x_test,y_train,y_test=train_test_split(x1,y,random_state=0)

logreg=LogisticRegression()

logreg.fit(x_train,y_train)

print("logistic regression:")

print("accuracy on the training subset:{:.3f}".format(logreg.score(x_train,y_train)))

print("accuracy on the test subset:{:.3f}".format(logreg.score(x_test,y_test)))
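The docstring's remark that dummy variables take care of missing categorical values can be checked directly. By default `pd.get_dummies` encodes a missing category as zeros in every dummy column of that variable, and `dummy_na=True` adds an explicit indicator column instead. A small sketch with invented values:

```python
import numpy as np
import pandas as pd

# Toy frame with one missing categorical value (invented data).
df = pd.DataFrame({
    "age": [39, 50, 38],
    "workclass": ["Private", np.nan, "State-gov"],
})

# Default behaviour: the row with the missing value gets 0
# in every workclass_* column, so no NaN reaches the model.
dummies = pd.get_dummies(df, dtype=int)
print(dummies)

# dummy_na=True: missingness becomes its own indicator column,
# appended after the regular workclass_* columns.
dummies_na = pd.get_dummies(df, dummy_na=True, dtype=int)
print(list(dummies_na.columns))
```

Numeric columns such as `age` pass through unchanged, which is why continuous variables still need the separate imputation step.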

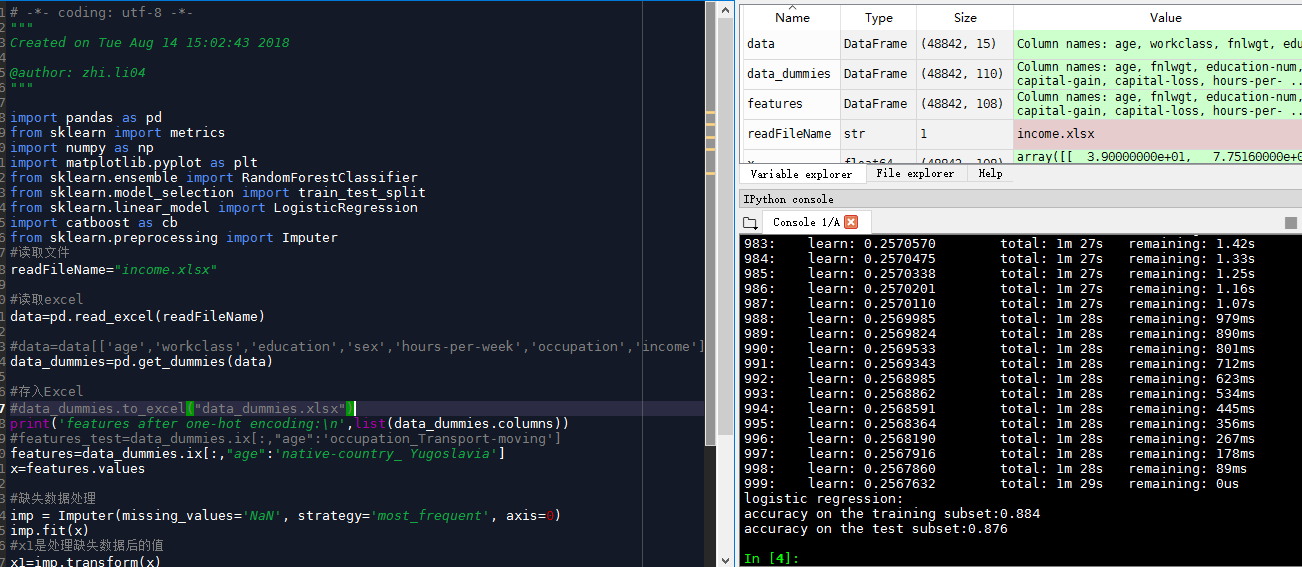

catboost.py

Accuracy reaches about 0.88

# -*- coding: utf-8 -*-

"""

Created on Tue Aug 14 15:02:43 2018

@author: zhi.li04

"""

import numpy as np

import pandas as pd

import catboost as cb

from sklearn.impute import SimpleImputer

from sklearn.model_selection import train_test_split

# Input file

readFileName="income.xlsx"

# Read the Excel file

data=pd.read_excel(readFileName)

#data=data[['age','workclass','education','sex','hours-per-week','occupation','income']]

# One-hot encode the categorical columns

data_dummies=pd.get_dummies(data)

# Save to Excel

#data_dummies.to_excel("data_dummies.xlsx")

print('features after one-hot encoding:\n',list(data_dummies.columns))

#features_test=data_dummies.loc[:,"age":'occupation_Transport-moving']

# .ix was removed from pandas; .loc does the same label-based slicing

features=data_dummies.loc[:,"age":'native-country_ Yugoslavia']

# Cast to float so numeric and dummy columns share one dtype

x=features.values.astype(float)

# Handle missing data (SimpleImputer replaces the removed Imputer class)

imp = SimpleImputer(missing_values=np.nan, strategy='most_frequent')

imp.fit(x)

# x1 holds the values after imputation

x1=imp.transform(x)

y=data_dummies['income_ >50K'].values.astype(int)

x_train,x_test,y_train,y_test=train_test_split(x1,y,random_state=0)

# Use a separate name so the classifier does not shadow the cb module

clf=cb.CatBoostClassifier()

clf.fit(x_train,y_train)

print("catboost:")

print("accuracy on the training subset:{:.3f}".format(clf.score(x_train,y_train)))

print("accuracy on the test subset:{:.3f}".format(clf.score(x_test,y_test)))

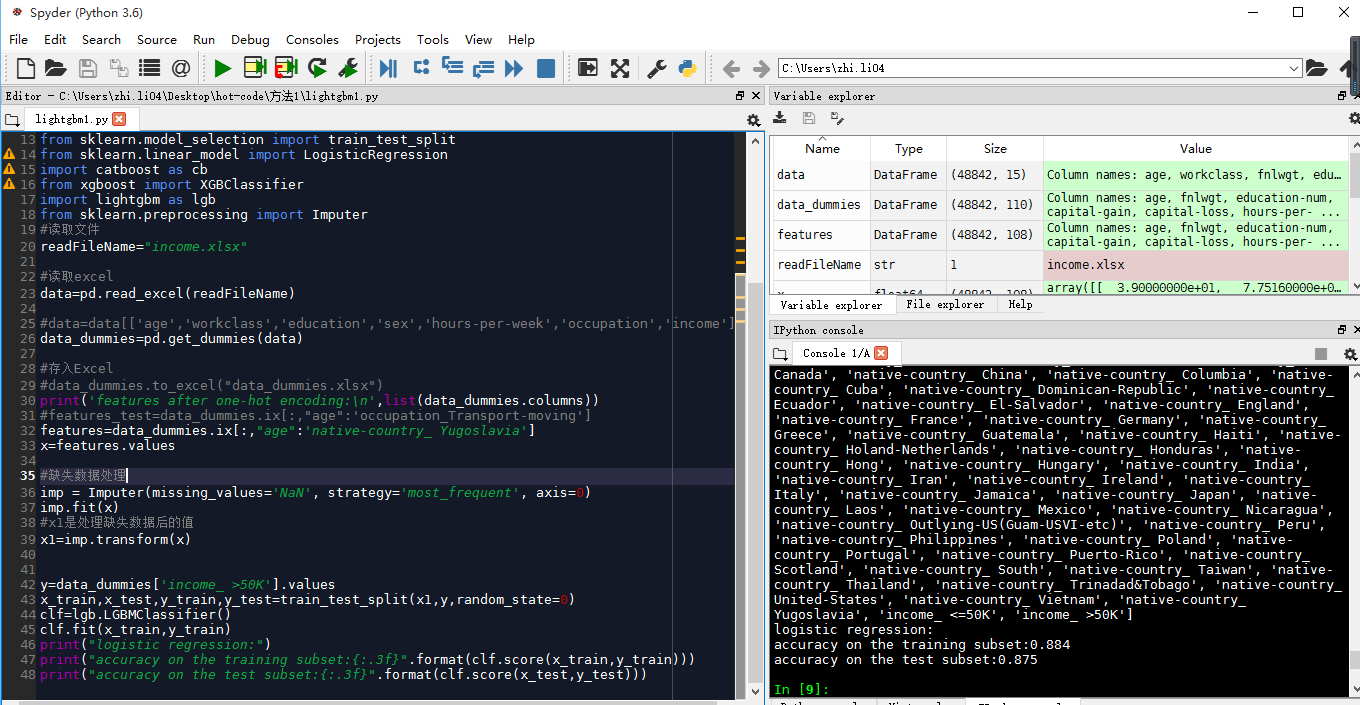

lightgbm1.py

Accuracy is about 0.87

# -*- coding: utf-8 -*-

"""

Created on Tue Aug 14 15:24:14 2018

@author: zhi.li04

"""

import numpy as np

import pandas as pd

import lightgbm as lgb

from sklearn.impute import SimpleImputer

from sklearn.model_selection import train_test_split

# Input file

readFileName="income.xlsx"

# Read the Excel file

data=pd.read_excel(readFileName)

#data=data[['age','workclass','education','sex','hours-per-week','occupation','income']]

# One-hot encode the categorical columns

data_dummies=pd.get_dummies(data)

# Save to Excel

#data_dummies.to_excel("data_dummies.xlsx")

print('features after one-hot encoding:\n',list(data_dummies.columns))

#features_test=data_dummies.loc[:,"age":'occupation_Transport-moving']

# .ix was removed from pandas; .loc does the same label-based slicing

features=data_dummies.loc[:,"age":'native-country_ Yugoslavia']

# Cast to float so numeric and dummy columns share one dtype

x=features.values.astype(float)

# Handle missing data (SimpleImputer replaces the removed Imputer class)

imp = SimpleImputer(missing_values=np.nan, strategy='most_frequent')

imp.fit(x)

# x1 holds the values after imputation

x1=imp.transform(x)

y=data_dummies['income_ >50K'].values.astype(int)

x_train,x_test,y_train,y_test=train_test_split(x1,y,random_state=0)

clf=lgb.LGBMClassifier()

clf.fit(x_train,y_train)

print("lightgbm:")

print("accuracy on the training subset:{:.3f}".format(clf.score(x_train,y_train)))

print("accuracy on the test subset:{:.3f}".format(clf.score(x_test,y_test)))

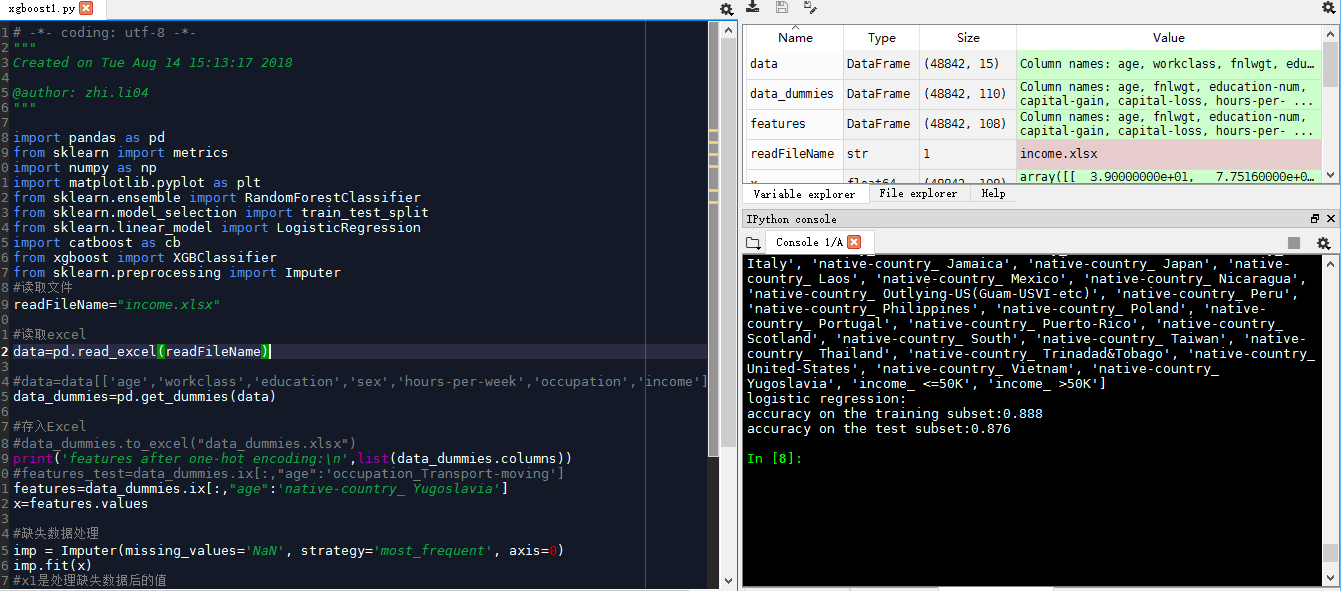

xgboost model

Accuracy is about 0.87

# -*- coding: utf-8 -*-

"""

Created on Tue Aug 14 15:13:17 2018

@author: zhi.li04

"""

import numpy as np

import pandas as pd

from xgboost import XGBClassifier

from sklearn.impute import SimpleImputer

from sklearn.model_selection import train_test_split

# Input file

readFileName="income.xlsx"

# Read the Excel file

data=pd.read_excel(readFileName)

#data=data[['age','workclass','education','sex','hours-per-week','occupation','income']]

# One-hot encode the categorical columns

data_dummies=pd.get_dummies(data)

# Save to Excel

#data_dummies.to_excel("data_dummies.xlsx")

print('features after one-hot encoding:\n',list(data_dummies.columns))

#features_test=data_dummies.loc[:,"age":'occupation_Transport-moving']

# .ix was removed from pandas; .loc does the same label-based slicing

features=data_dummies.loc[:,"age":'native-country_ Yugoslavia']

# Cast to float so numeric and dummy columns share one dtype

x=features.values.astype(float)

# Handle missing data (SimpleImputer replaces the removed Imputer class)

imp = SimpleImputer(missing_values=np.nan, strategy='most_frequent')

imp.fit(x)

# x1 holds the values after imputation

x1=imp.transform(x)

y=data_dummies['income_ >50K'].values.astype(int)

x_train,x_test,y_train,y_test=train_test_split(x1,y,random_state=0)

clf=XGBClassifier(n_estimators=1000)

clf.fit(x_train,y_train)

print("xgboost:")

print("accuracy on the training subset:{:.3f}".format(clf.score(x_train,y_train)))

print("accuracy on the test subset:{:.3f}".format(clf.score(x_test,y_test)))

AUC: 0.9107 ACC: 0.8547 Recall: 0.5439 F1-score: 0.6457 Precision: 0.7944

# -*- coding: utf-8 -*-

"""

Created on Tue Apr 24 22:42:47 2018

@author: Administrator

Workaround for the error: module 'xgboost' has no attribute 'DMatrix'

This mistake is typical for beginners and for people not yet familiar with Python, and avoiding it takes one rule: never use a module name as a file name, for any type of file. The root cause in my case was a file named xgboost.* in the working folder. When import xgboost runs, the current folder is searched first, which is how the problem arises.

So, once more: do not use any module name as a file name!

"""

import xgboost as xgb

import pandas as pd

from sklearn import metrics

from sklearn.model_selection import train_test_split

# Input file

readFileName="income.xlsx"

# Read the Excel file

data=pd.read_excel(readFileName)

#data=data[['age','workclass','education','sex','hours-per-week','occupation','income']]

# One-hot encode the categorical columns

data_dummies=pd.get_dummies(data)

print('features after one-hot encoding:\n',list(data_dummies.columns))

# Note: the dummy column names in this script carry no space after the

# underscore; adjust them to match your copy of income.xlsx

features=data_dummies.loc[:,"age":'native-country_Yugoslavia']

# Cast to float so numeric and dummy columns share one dtype; NaN is kept,

# xgboost treats it as missing

x=features.values.astype(float)

y=data_dummies['income_>50K'].values.astype(int)

x_train,x_test,y_train,y_test=train_test_split(x,y,random_state=0)

# Column names; key 'f<i>' in the importance output refers to names[i]

names=features.columns

dtrain=xgb.DMatrix(x_train,label=y_train)

dtest=xgb.DMatrix(x_test)

params={'booster':'gbtree',

#'objective': 'reg:linear',

'objective': 'binary:logistic',

'eval_metric': 'auc',

'max_depth':4,

'lambda':10,

'subsample':0.75,

'colsample_bytree':0.75,

'min_child_weight':2,

'eta': 0.025,

'seed':0,

'nthread':8,

# 'silent' was replaced by 'verbosity' in newer xgboost releases

'verbosity':0}

watchlist = [(dtrain,'train')]

bst=xgb.train(params,dtrain,num_boost_round=100,evals=watchlist)

# Predicted probabilities of the positive class

ypred=bst.predict(dtest)

# Apply a threshold and print some evaluation metrics

y_pred = (ypred >= 0.5)*1

# Model validation

print ('AUC: %.4f' % metrics.roc_auc_score(y_test,ypred))

print ('ACC: %.4f' % metrics.accuracy_score(y_test,y_pred))

print ('Recall: %.4f' % metrics.recall_score(y_test,y_pred))

print ('F1-score: %.4f' %metrics.f1_score(y_test,y_pred))

print ('Precision: %.4f' %metrics.precision_score(y_test,y_pred))

# Displayed only in an interactive session (IPython/Jupyter)

metrics.confusion_matrix(y_test,y_pred)

'''

AUC: 0.9107

ACC: 0.8547

Recall: 0.5439

F1-score: 0.6457

Precision: 0.7944

Out[28]:

array([[5880, 279],

[ 904, 1078]], dtype=int64)

'''

print("xgboost:")

print('Feature importances:{}'.format(bst.get_fscore()))

'''

Feature importances:{'f33': 76, 'f3': 273, 'f4': 157, 'f25': 11, 'f0': 167,

'f42': 34, 'f2': 193, 'f5': 132, 'f56': 1, 'f64': 14, 'f24': 11, 'f53': 15,

'f58': 24, 'f39': 2, 'f1': 20, 'f29': 3, 'f35': 9, 'f48': 20, 'f12': 11,

'f65': 3, 'f27': 3, 'f50': 3, 'f26': 7, 'f60': 2, 'f43': 8, 'f85': 1,

'f10': 1, 'f46': 5, 'f11': 1, 'f49': 1, 'f7': 1, 'f52': 3, 'f66': 1,

'f54': 1, 'f23': 1}

'''
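The reported scores can be re-derived by hand from the confusion matrix in the script output above, which is a useful check that the metrics are being read correctly. scikit-learn lays the matrix out as `[[TN, FP], [FN, TP]]`, with income above 50K as the positive class here. A short worked calculation in plain Python:

```python
# Confusion matrix copied from the script output above,
# in scikit-learn's layout [[TN, FP], [FN, TP]].
tn, fp = 5880, 279
fn, tp = 904, 1078

total = tn + fp + fn + tp
accuracy = (tp + tn) / total
recall = tp / (tp + fn)       # share of actual >50K rows that were found
precision = tp / (tp + fp)    # share of predicted >50K rows that are correct
f1 = 2 * precision * recall / (precision + recall)

print("ACC: %.4f" % accuracy)         # ACC: 0.8547
print("Recall: %.4f" % recall)        # Recall: 0.5439
print("Precision: %.4f" % precision)  # Precision: 0.7944
print("F1-score: %.4f" % f1)          # F1-score: 0.6457
```

The numbers match the printed metrics: the model is right about most of the rows it flags as high income, but it finds only a little over half of them at the 0.5 threshold.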

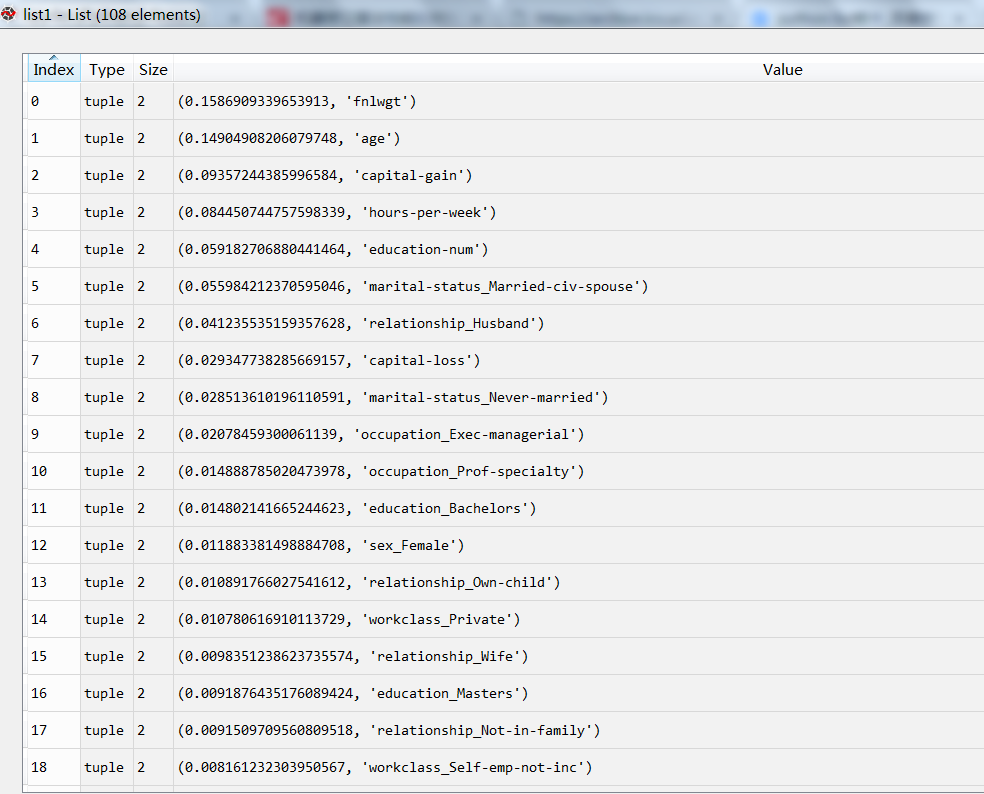

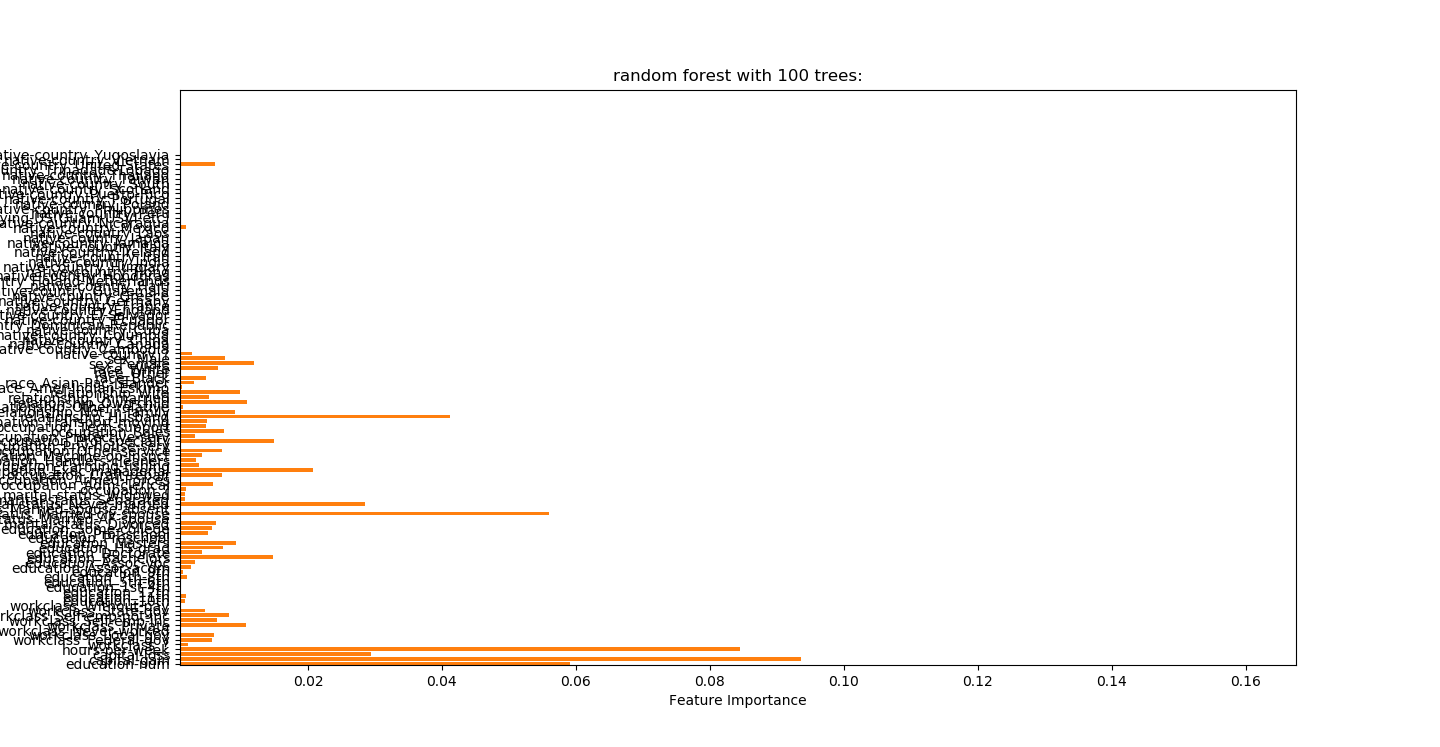

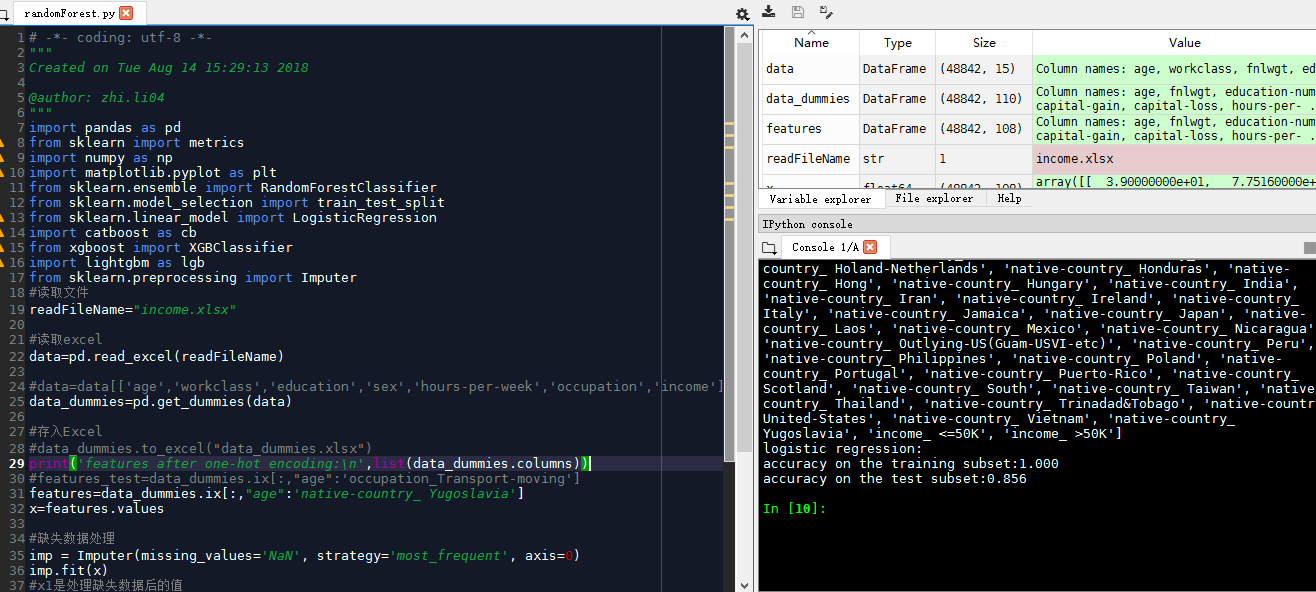
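Because the `DMatrix` in the script above is built from a plain NumPy array, xgboost falls back to positional feature names (`f0`, `f1`, ...), which makes the importance dictionary hard to read. One way to translate the keys back is to index into the column list. In the sketch below, `columns` and `fscore` are hypothetical stand-ins for `features.columns` and `bst.get_fscore()`, with invented values.

```python
# Hypothetical stand-ins: in the script these would be
# list(features.columns) and bst.get_fscore().
columns = ["age", "fnlwgt", "education-num", "capital-gain"]
fscore = {"f3": 30, "f0": 12, "f2": 21}

# A key "f<i>" refers to the i-th column of the training matrix.
named = {columns[int(key[1:])]: score for key, score in fscore.items()}

# List features from most to least often used in splits.
ranked = sorted(named.items(), key=lambda item: item[1], reverse=True)
for name, score in ranked:
    print(f"{name:15s} {score}")
```

Features that never appear in a split are simply absent from the dictionary, so the mapped result can be shorter than the column list.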

Random forest: randomForest.py

Accuracy is about 0.856

# -*- coding: utf-8 -*-

"""

Created on Tue Aug 14 15:29:13 2018

@author: zhi.li04

"""

import numpy as np

import pandas as pd

from sklearn.ensemble import RandomForestClassifier

from sklearn.impute import SimpleImputer

from sklearn.model_selection import train_test_split

# Input file

readFileName="income.xlsx"

# Read the Excel file

data=pd.read_excel(readFileName)

#data=data[['age','workclass','education','sex','hours-per-week','occupation','income']]

# One-hot encode the categorical columns

data_dummies=pd.get_dummies(data)

# Save to Excel

#data_dummies.to_excel("data_dummies.xlsx")

print('features after one-hot encoding:\n',list(data_dummies.columns))

#features_test=data_dummies.loc[:,"age":'occupation_Transport-moving']

# .ix was removed from pandas; .loc does the same label-based slicing

features=data_dummies.loc[:,"age":'native-country_ Yugoslavia']

# Cast to float so numeric and dummy columns share one dtype

x=features.values.astype(float)

# Handle missing data (SimpleImputer replaces the removed Imputer class)

imp = SimpleImputer(missing_values=np.nan, strategy='most_frequent')

imp.fit(x)

# x1 holds the values after imputation

x1=imp.transform(x)

y=data_dummies['income_ >50K'].values.astype(int)

x_train,x_test,y_train,y_test=train_test_split(x1,y,random_state=0)

clf=RandomForestClassifier(n_estimators=1000,random_state=0)

clf.fit(x_train,y_train)

print("random forest:")

print("accuracy on the training subset:{:.3f}".format(clf.score(x_train,y_train)))

print("accuracy on the test subset:{:.3f}".format(clf.score(x_test,y_test)))
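The scripts in this post repeat the same loading, encoding, imputation and splitting steps and differ only in the classifier. One possible refactoring is a small helper that accepts any estimator with `fit` and `score`. The sketch below is an illustration under stated assumptions: it uses synthetic data from `make_classification` instead of `income.xlsx`, and the helper name `evaluate` is made up for this example. A catboost, lightgbm or xgboost classifier could be added to the dictionary in the same way.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split


def evaluate(model, x_train, x_test, y_train, y_test):
    """Fit the model and return (train accuracy, test accuracy)."""
    model.fit(x_train, y_train)
    return model.score(x_train, y_train), model.score(x_test, y_test)


# Synthetic stand-in for the one-hot encoded income data.
x, y = make_classification(n_samples=500, n_features=10, random_state=0)

# Delete a few values to mimic the missing data, then impute them
# with the same strategy as the scripts above.
rng = np.random.default_rng(0)
x[rng.integers(0, 500, size=20), rng.integers(0, 10, size=20)] = np.nan
x1 = SimpleImputer(strategy="most_frequent").fit_transform(x)

# train_test_split returns x_train, x_test, y_train, y_test in this order.
split = train_test_split(x1, y, random_state=0)

models = {
    "logistic regression": LogisticRegression(max_iter=1000),
    "random forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

results = {name: evaluate(model, *split) for name, model in models.items()}
for name, (train_acc, test_acc) in results.items():
    print(f"{name}: train={train_acc:.3f} test={test_acc:.3f}")
```

Keeping the split fixed with `random_state=0` means every model is scored on the same test rows, so the accuracies are directly comparable.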

Hands-on risk-control modeling in Python with LendingClub data (recorded by the author; catboost and lightgbm modeling; 2K ultra-HD resolution)

https://study.163.com/course/courseMain.htm?courseId=1005988013&share=2&shareId=400000000398149
