MapReduce Example: Secondary Sort

Experiment Steps

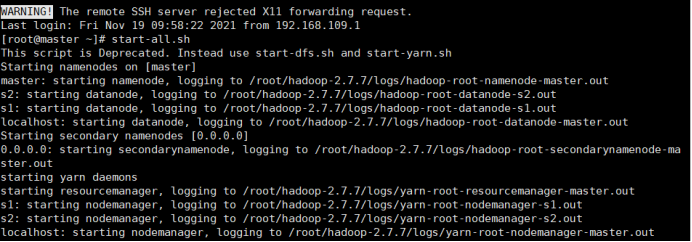

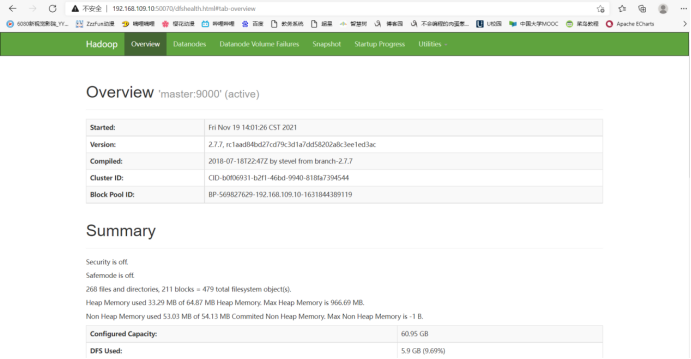

1. Start Hadoop

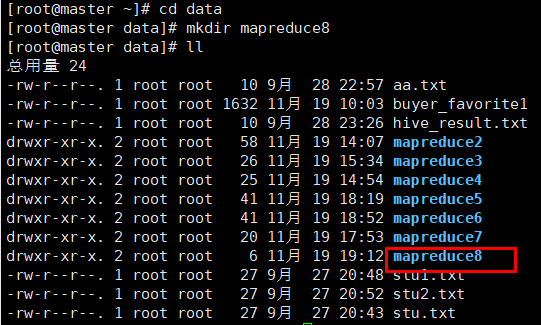

2. Create the mapreduce8 directory

Create the /data/mapreduce8 directory on the local Linux filesystem.

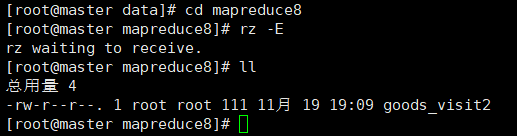

3. Upload the file to Linux

(Generate the text file yourself and place it in a folder of your choice.)

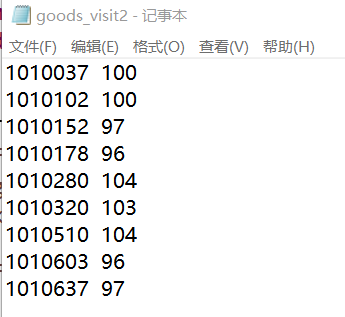

goods_visit2

1010037 100

1010102 100

1010152 97

1010178 96

1010280 104

1010320 103

1010510 104

1010603 96

1010637 97
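Since the input file has to be generated by hand, a small program can produce one in the same two-column layout (goods ID, then click count). The following is only a minimal sketch: the class name `GoodsVisitGenerator`, the ID and click ranges, and the fixed seed are all assumptions chosen for illustration, not part of the lab material.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

// Hypothetical helper: writes a goods_visit2-style file with "<goodsId> <clicks>" lines.
public class GoodsVisitGenerator {

    // Builds `count` lines; the value ranges below are assumed, not taken from the lab.
    static List<String> generate(int count, long seed) {
        Random random = new Random(seed);
        List<String> lines = new ArrayList<>();
        int goodsId = 1010000;
        for (int i = 0; i < count; i++) {
            goodsId += 1 + random.nextInt(100);   // strictly increasing goods IDs
            int clicks = 90 + random.nextInt(20); // click counts in 90..109
            lines.add(goodsId + " " + clicks);
        }
        return lines;
    }

    public static void main(String[] args) throws IOException {
        // Target path defaults to goods_visit2 in the working directory.
        Path target = Paths.get(args.length > 0 ? args[0] : "goods_visit2");
        Files.write(target, generate(9, 42L), StandardCharsets.UTF_8);
        System.out.println("Wrote " + target.toAbsolutePath());
    }
}
```

Any whitespace between the two columns works, because the mapper splits each line with `StringTokenizer`.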

4. Create a directory in HDFS

First create the /mymapreduce8/in directory on HDFS, then import the goods_visit2 file from the local Linux directory /data/mapreduce8 into the HDFS directory /mymapreduce8/in.

hadoop fs -mkdir -p /mymapreduce8/in

hadoop fs -put /root/data/mapreduce8/goods_visit2 /mymapreduce8/in

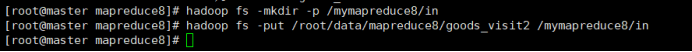

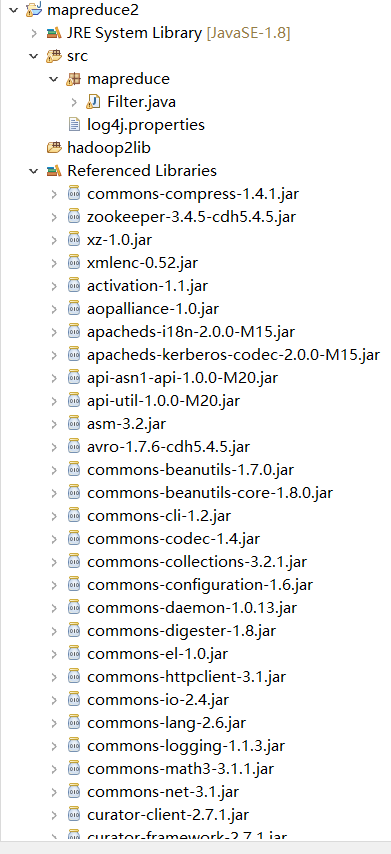

5. Create a Java Project

Create a new Java Project named mapreduce.

Under the mapreduce project, create a package named mapreduce7.

Under the mapreduce7 package, create a class named SecondarySort.

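6. Add the jar files the project depends on

Right-click the project and create a new folder named hadoop2lib to hold the jar files the project needs.

Copy the jar files from the hadoop2lib directory under /data/mapreduce2 into the hadoop2lib directory of the mapreduce2 project in Eclipse.

Here the hadoop2lib jars were downloaded from the web by myself, not fetched with the commands given in the lab tutorial.

Select all the jar files in the project's hadoop2lib directory and add them to the Build Path.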

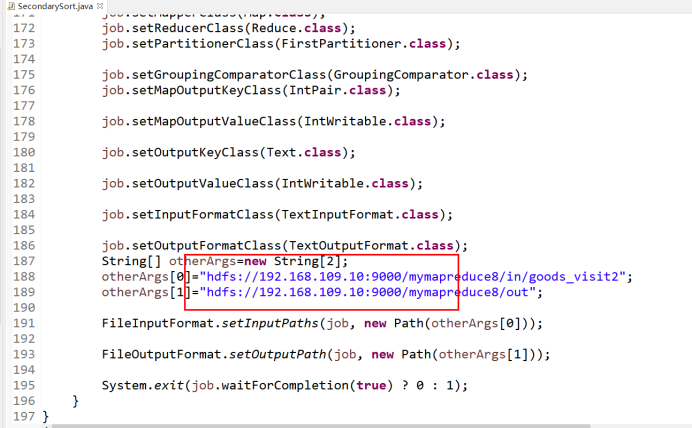

7. Write the program code

SecondarySort.java

    package mapreduce7;

    import java.io.DataInput;
    import java.io.DataOutput;
    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.io.WritableComparable;
    import org.apache.hadoop.io.WritableComparator;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Partitioner;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class SecondarySort {

        // Composite key: first = click count, second = goods ID.
        public static class IntPair implements WritableComparable<IntPair> {
            int first;
            int second;

            public void set(int left, int right) {
                first = left;
                second = right;
            }

            public int getFirst() {
                return first;
            }

            public int getSecond() {
                return second;
            }

            @Override
            public void readFields(DataInput in) throws IOException {
                first = in.readInt();
                second = in.readInt();
            }

            @Override
            public void write(DataOutput out) throws IOException {
                out.writeInt(first);
                out.writeInt(second);
            }

            // Sort by first descending, then by second ascending.
            @Override
            public int compareTo(IntPair o) {
                if (first != o.first) {
                    return first < o.first ? 1 : -1;
                } else if (second != o.second) {
                    return second < o.second ? -1 : 1;
                } else {
                    return 0;
                }
            }

            @Override
            public int hashCode() {
                return first * 157 + second;
            }

            @Override
            public boolean equals(Object right) {
                if (right == null)
                    return false;
                if (this == right)
                    return true;
                if (right instanceof IntPair) {
                    IntPair r = (IntPair) right;
                    return r.first == first && r.second == second;
                } else {
                    return false;
                }
            }
        }

        // Partition on the first field only, so equal click counts reach the same reducer.
        public static class FirstPartitioner extends Partitioner<IntPair, IntWritable> {
            @Override
            public int getPartition(IntPair key, IntWritable value, int numPartitions) {
                return Math.abs(key.getFirst() * 127) % numPartitions;
            }
        }

        // Group on the first field only, so one reduce() call sees all keys with the same click count.
        public static class GroupingComparator extends WritableComparator {
            protected GroupingComparator() {
                super(IntPair.class, true);
            }

            @Override
            @SuppressWarnings("rawtypes")
            public int compare(WritableComparable w1, WritableComparable w2) {
                IntPair ip1 = (IntPair) w1;
                IntPair ip2 = (IntPair) w2;
                int l = ip1.getFirst();
                int r = ip2.getFirst();
                return l == r ? 0 : (l < r ? -1 : 1);
            }
        }

        public static class Map extends Mapper<LongWritable, Text, IntPair, IntWritable> {
            private final IntPair intkey = new IntPair();
            private final IntWritable intvalue = new IntWritable();

            @Override
            public void map(LongWritable key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                StringTokenizer tokenizer = new StringTokenizer(line);
                int left = 0;   // goods ID (first column)
                int right = 0;  // click count (second column)
                if (tokenizer.hasMoreTokens()) {
                    left = Integer.parseInt(tokenizer.nextToken());
                    if (tokenizer.hasMoreTokens())
                        right = Integer.parseInt(tokenizer.nextToken());
                    // Key is (click count, goods ID); value is the goods ID.
                    intkey.set(right, left);
                    intvalue.set(left);
                    context.write(intkey, intvalue);
                }
            }
        }

        public static class Reduce extends Reducer<IntPair, IntWritable, Text, IntWritable> {
            private final Text left = new Text();
            private static final Text SEPARATOR =
                    new Text("------------------------------------------------");

            @Override
            public void reduce(IntPair key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                // One separator line per group, then "<click count>\t<goods ID>" for each value.
                context.write(SEPARATOR, null);
                left.set(Integer.toString(key.getFirst()));
                System.out.println(left);
                for (IntWritable val : values) {
                    context.write(left, val);
                }
            }
        }

        public static void main(String[] args)
                throws IOException, InterruptedException, ClassNotFoundException {
            Configuration conf = new Configuration();
            // Job.getInstance replaces the deprecated new Job(conf, name) constructor.
            Job job = Job.getInstance(conf, "secondarysort");
            job.setJarByClass(SecondarySort.class);
            job.setMapperClass(Map.class);
            job.setReducerClass(Reduce.class);
            job.setPartitionerClass(FirstPartitioner.class);
            job.setGroupingComparatorClass(GroupingComparator.class);
            job.setMapOutputKeyClass(IntPair.class);
            job.setMapOutputValueClass(IntWritable.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TextOutputFormat.class);
            String[] otherArgs = new String[2];
            otherArgs[0] = "hdfs://192.168.109.10:9000/mymapreduce8/in/goods_visit2";
            otherArgs[1] = "hdfs://192.168.109.10:9000/mymapreduce8/out";
            FileInputFormat.setInputPaths(job, new Path(otherArgs[0]));
            FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
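To see what ordering the job should produce without running a cluster, the sort and grouping logic can be imitated in plain Java. The sketch below is not the Hadoop job itself: `SecondarySortDemo` and `simulate` are hypothetical names, and it assumes a single reducer and that a null value makes the separator line print on its own. It applies the same order as `IntPair.compareTo` (click count descending, goods ID ascending) and emits a separator whenever the click count changes, as the reducer does per group.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Hypothetical stand-alone imitation of the job's sort and grouping behavior.
public class SecondarySortDemo {

    static final String SEPARATOR = "------------------------------------------------";

    // Takes "<goodsId> <clicks>" records and returns the lines one reducer would be expected to emit.
    static List<String> simulate(List<String> records) {
        List<int[]> keys = new ArrayList<>();
        for (String record : records) {
            String[] parts = record.trim().split("\\s+");
            int goodsId = Integer.parseInt(parts[0]);
            int clicks = Integer.parseInt(parts[1]);
            keys.add(new int[] {clicks, goodsId}); // mirrors intkey.set(right, left)
        }
        // Same order as IntPair.compareTo: first descending, then second ascending.
        keys.sort(Comparator.<int[]>comparingInt(k -> k[0]).reversed()
                .thenComparingInt(k -> k[1]));

        List<String> out = new ArrayList<>();
        boolean firstKey = true;
        int previousClicks = 0;
        for (int[] k : keys) {
            // A new group starts whenever the first field changes (the grouping comparator's rule).
            if (firstKey || k[0] != previousClicks) {
                out.add(SEPARATOR);
            }
            out.add(k[0] + "\t" + k[1]);
            previousClicks = k[0];
            firstKey = false;
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> sample = Arrays.asList(
                "1010037 100", "1010102 100", "1010152 97",
                "1010178 96", "1010280 104", "1010320 103",
                "1010510 104", "1010603 96", "1010637 97");
        for (String line : simulate(sample)) {
            System.out.println(line);
        }
    }
}
```

For the nine sample records, the groups come out in the order 104, 103, 100, 97, 96, and within a group the goods IDs ascend (for example 1010280 before 1010510 under 104).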

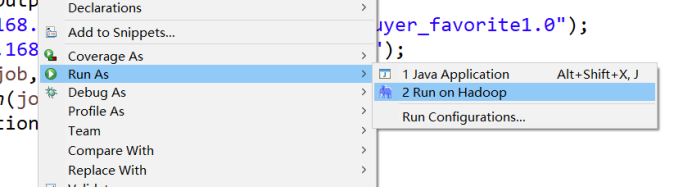

8. Run the code

In the SecondarySort class file, right-click and choose Run As => Run on Hadoop to submit the MapReduce job to Hadoop.

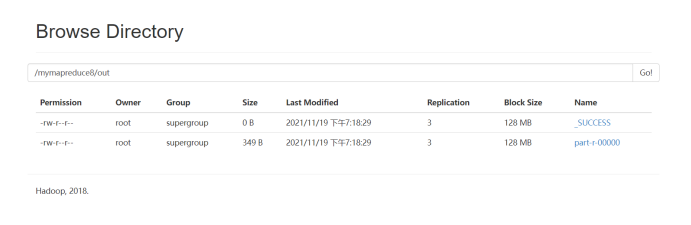

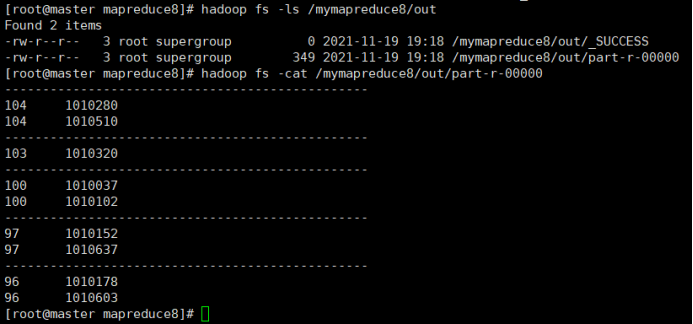

9. View the results

Once execution finishes, switch to the command line and view the results under /mymapreduce8/out in HDFS.

hadoop fs -ls /mymapreduce8/out

hadoop fs -cat /mymapreduce8/out/part-r-00000

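Figure 1 shows my run result; Figure 2 shows the expected result from the lab.

Comparing the two shows that the results are the same.

This is a browser screenshot.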
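The visual comparison can also be backed by a small ordering check on a local copy of the output (for instance one fetched with `hadoop fs -get`). This is a minimal sketch under assumptions: `OutputOrderCheck` is a hypothetical name, the local file path is supplied by the reader, and the file is expected to contain separator lines made of dashes plus data lines of click count followed by goods ID.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.List;

// Hypothetical checker for a locally copied part-r-00000 file.
public class OutputOrderCheck {

    // True if click counts never increase and goods IDs never decrease within equal click counts.
    static boolean isCorrectlyOrdered(List<String> lines) {
        int prevClicks = Integer.MAX_VALUE;
        int prevGoodsId = Integer.MIN_VALUE;
        for (String line : lines) {
            String trimmed = line.trim();
            if (trimmed.isEmpty() || trimmed.startsWith("--")) {
                continue; // skip blank lines and group separators
            }
            String[] parts = trimmed.split("\\s+");
            int clicks = Integer.parseInt(parts[0]);
            int goodsId = Integer.parseInt(parts[1]);
            if (clicks > prevClicks) {
                return false; // first field must be descending
            }
            if (clicks == prevClicks && goodsId < prevGoodsId) {
                return false; // second field must be ascending within a group
            }
            prevClicks = clicks;
            prevGoodsId = goodsId;
        }
        return true;
    }

    public static void main(String[] args) throws IOException {
        // args[0]: path to a local copy of the job output (assumed to exist).
        List<String> lines = Files.readAllLines(Paths.get(args[0]), StandardCharsets.UTF_8);
        System.out.println(isCorrectlyOrdered(lines) ? "ordering OK" : "ordering violated");
    }
}
```

The check only validates ordering; it does not confirm that every input record appears in the output.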