I. Preparation

Tools: Windows 10 + Python 3.6

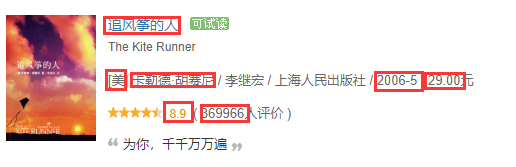

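Target: the content inside the red box in the image.

Principle: any information that is visible in the page source can be scraped.

Output format: CSV, converted to Excel.

II. Steps

Here is the complete code first: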

```python
import requests
import re
from bs4 import BeautifulSoup
import pandas as pd


def gethtml(url):
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()
        r.encoding = r.apparent_encoding
        return r.text
    except requests.RequestException:
        return "It is failed to get html!"


def getcontent(url):
    html = gethtml(url)
    soup = BeautifulSoup(html, "html.parser")
    # print(soup.prettify())
    div = soup.find("div", class_="indent")
    tables = div.find_all("table")

    price = []
    date = []
    nationality = []
    nation = []  # normalized nationality
    bookname = []
    link = []
    score = []
    comment = []
    people = []
    peo = []  # normalized rating count
    author = []
    for table in tables:
        bookname.append(table.find_all("a")[1]['title'])  # book title
        link.append(table.find_all("a")[1]['href'])  # link
        score.append(table.find("span", class_="rating_nums").string)  # score
        comment.append(table.find_all("span")[-1].string)  # one-line review

        people_info = table.find_all("span")[-2].text
        # How many people rated this book? Note: each entry is a sub-list.
        people.append(re.findall(r'\d+', people_info))

        navistr = table.find("p").string  # nationality, author, translator, press, date, price
        infos = str(navistr.split("/"))  # the fields split on "/", converted back to one string
        infostr = str(navistr)  # the original, unsplit string
        s = infostr.split("/")
        if re.findall(r'\[', s[0]):  # first field contains "[": drop the nationality, keep the author
            w = re.findall(r'\s\D+', s[0])
            author.append(w[0])
        else:
            author.append(s[0])

        # Extract price, date and nationality from infos
        price_info = re.findall(r'\d+\.\d+', infos)
        price.append(price_info[0])  # price
        date.append(s[-2])  # publication date
        nationality_info = re.findall(r'[\[](\D)[\]]', infos)
        nationality.append(nationality_info)  # nationality; note: each entry is a sub-list

    for i in nationality:
        if len(i) == 1:
            nation.append(i[0])
        else:
            nation.append("中")  # no nationality given: Chinese author

    for i in people:
        if len(i) == 1:
            peo.append(i[0])
        else:
            peo.append(None)  # keep this column as long as the others

    print(bookname)
    print(author)
    print(nation)
    print(score)
    print(peo)
    print(date)
    print(price)
    print(link)

    # The dict keys become the CSV column names
    dataframe = pd.DataFrame({'书名': bookname, '作者': author, '国籍': nation, '评分': score, '评分人数': peo, '出版时间': date, '价格': price, '链接': link})

    # Save the DataFrame as CSV; index controls whether row labels are written (default=True)
    dataframe.to_csv("C:/Users/zhengyong/Desktop/test.csv", index=False, encoding='utf-8-sig', sep=',')


if __name__ == '__main__':
    # To scrape the following pages as well, the code has to be changed.
    url = "https://book.douban.com/top250?start=0"
    getcontent(url)
```

1. Fetching the page

We use the following building blocks:

```python
import requests
import re
from bs4 import BeautifulSoup


def gethtml(url):
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()
        r.encoding = r.apparent_encoding
        return r.text
    except requests.RequestException:
        return "It is failed to get html!"


def getcontent(url):
    html = gethtml(url)
    bsObj = BeautifulSoup(html, "html.parser")


if __name__ == '__main__':
    url = "https://book.douban.com/top250?icn=index-book250-all"
    getcontent(url)
```

From bsObj we can now extract the information we want.
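As written, gethtml returns an error message string when the request fails, and BeautifulSoup parses that string without complaint, so the failure only surfaces later when `div` turns out to be None. A minimal sketch of an alternative that returns None instead, so the caller can stop early. It swaps requests for the standard library's `urllib.request` to stay dependency-free; `fetch_html` and the User-Agent value are assumptions (some sites reject requests without a browser-like User-Agent, and whether Douban does is worth checking yourself).

```python
import urllib.request

# Assumption: an illustrative browser-like User-Agent string
HEADERS = {"User-Agent": "Mozilla/5.0"}


def fetch_html(url, timeout=30):
    """Return the page text, or None if the URL is invalid or the request fails."""
    try:
        # Request() itself raises ValueError for a malformed URL, so it sits inside the try
        request = urllib.request.Request(url, headers=HEADERS)
        with urllib.request.urlopen(request, timeout=timeout) as response:
            charset = response.headers.get_content_charset() or "utf-8"
            return response.read().decode(charset, errors="replace")
    except (OSError, ValueError):
        # URLError/HTTPError and socket timeouts are all subclasses of OSError
        return None
```

Usage: call `html = fetch_html("https://book.douban.com/top250?start=0")` and check `if html is None` before parsing. With requests, the equivalent is passing `headers=` to `requests.get` and catching `requests.RequestException`.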

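2. Extracting the fields

All the information sits inside a single div, which contains 25 table elements, and each table is a self-contained record for one book. So we only need to build a routine that extracts one table, as long as it works for every table. The parent div and its 25 child tables are extracted like this:

```python
div = soup.find("div", class_="indent")
tables = div.find_all("table")
```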

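The title can be read straight from the `title` attribute of the `<a>` node (the raw HTML really is this ugly, but it doesn't get in the way):

```html
<a href="https://book.douban.com/subject/1770782/" onclick=""moreurl(this,{i:'0'})"" title="追风筝的人"> 追风筝的人 </a>
```

It is extracted with this line:

```python
bookname.append(table.find_all("a")[1]['title'])  # book title
```

The other fields work the same way, so I won't go through them one by one.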
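The calls above (find the div with class `indent`, take its tables, read `title` and `href` from the second `<a>` of each table) can be mirrored without bs4. Below is a minimal stdlib sketch using `html.parser.HTMLParser`. `TableCollector` is a hypothetical name. `SAMPLE` is hypothetical, trimmed-down markup that imitates the structure described above, assuming the first `<a>` in each table wraps the cover image. It is not real Douban HTML.

```python
from html.parser import HTMLParser


class TableCollector(HTMLParser):
    """Collect the attributes of every <a> in each <table> inside <div class="indent">."""

    def __init__(self):
        super().__init__()
        self.div_depth = 0   # > 0 while inside div.indent (counts nested divs)
        self.in_table = False
        self.tables = []     # one list of <a> attribute dicts per table

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "div":
            if self.div_depth:
                self.div_depth += 1  # a div nested inside div.indent
            elif "indent" in (attrs.get("class") or "").split():
                self.div_depth = 1   # entering div.indent
        elif self.div_depth and tag == "table":
            self.in_table = True
            self.tables.append([])
        elif self.in_table and tag == "a":
            self.tables[-1].append(attrs)

    def handle_endtag(self, tag):
        if tag == "div" and self.div_depth:
            self.div_depth -= 1
        elif tag == "table":
            self.in_table = False


# Hypothetical, trimmed-down markup imitating the structure described above
SAMPLE = """
<div class="indent">
  <table><tr><td>
    <a href="https://book.douban.com/subject/1770782/"><img src="cover.jpg"></a>
    <div class="pl2"><a href="https://book.douban.com/subject/1770782/" title="追风筝的人">追风筝的人</a></div>
  </td></tr></table>
  <table><tr><td>
    <a href="https://example.com/book-b/"><img src="cover-b.jpg"></a>
    <div class="pl2"><a href="https://example.com/book-b/" title="Book B">Book B</a></div>
  </td></tr></table>
</div>
"""

parser = TableCollector()
parser.feed(SAMPLE)
titles = [anchors[1]["title"] for anchors in parser.tables]  # like find_all("a")[1]['title']
links = [anchors[1]["href"] for anchors in parser.tables]    # like find_all("a")[1]['href']
print(titles)  # ['追风筝的人', 'Book B']
```

The index `[1]` is the same fragile assumption the original code makes. If a table has a different number of anchors, it picks the wrong one.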

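The number of ratings is extracted with a regular expression:

```python
# How many people rated this book? Note: this appends a list, so people becomes a list of sub-lists.
people.append(re.findall(r'\d+', people_info))
```

Here people_info is text such as `13456人评价` ("13456 people rated"), and `\d+` picks out the digits.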
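`re.findall` always returns a list, and that is where the sub-lists in `people` come from. A small sketch using `re.search` instead: it returns a single match object (or None), so each book yields one value directly. `parse_rating_count` is a hypothetical helper name.

```python
import re


def parse_rating_count(text):
    """Return the first run of digits in text as an int, or None if there is none."""
    match = re.search(r"\d+", text)
    return int(match.group()) if match else None


print(parse_rating_count("(\n    13456人评价\n    )"))  # 13456
print(parse_rating_count("无人评价"))  # None
```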

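Now the remaining information:

```html
<p class="pl">[美] 卡勒德·胡赛尼 / 李继宏 / 上海人民出版社 / 2006-5 / 29.00元</p>
```

In order, the fields are the author (with nationality), translator, publisher, publication date and price. The nationality is wrapped in square brackets, as in "[美]". How do we get rid of them? The first line below does it. The pattern matches "[", one non-digit character and "]", and its capture group keeps only the character in between. Here infos is the list of "/"-separated fields converted back to a string.

```python
nationality_info = re.findall(r'[\[](\D)[\]]', infos)
nationality.append(nationality_info)  # We can get nationality. Note: each entry is a sub-list.
```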

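Books by foreign authors state the nationality explicitly. The ones without it all turn out to be by Chinese authors, so an empty nationality is filled in as "中" (China):

```python
for i in nationality:
    if len(i) == 1:
        nation.append(i[0])
    else:
        nation.append("中")  # no nationality given: Chinese author
```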
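The nationality regex above captures exactly one character between the brackets. A multi-character nationality such as "[哥伦比亚]" (Colombia) would therefore not match and would be filled in as 中. Likewise, `\d+\.\d+` finds nothing in a price without decimals, and `price_info[0]` would then raise IndexError. Below is a sketch of a helper that parses the whole info line in one go. `parse_pub_info` is a hypothetical name, and it assumes the layout `author / [translator /] publisher / date / price` shown above. It uses `.+?` for the nationality and makes the decimal part of the price optional.

```python
import re


def parse_pub_info(line, default_nation="中"):
    """Split an info line like '[美] 作者 / 译者 / 出版社 / 2006-5 / 29.00元' into fields.

    Assumes: author first (optionally prefixed by a bracketed nationality),
    then publisher, date and price as the last three fields.
    """
    fields = [f.strip() for f in line.split("/")]
    # Nationality of any length inside [...], followed by the author name
    m = re.match(r"\[(.+?)\]\s*(.*)", fields[0])
    if m:
        nation, author = m.group(1), m.group(2)
    else:
        nation, author = default_nation, fields[0]  # no brackets: default nationality
    # Price with or without a decimal part, e.g. '29.00元' or '39元'
    price = re.search(r"\d+(?:\.\d+)?", fields[-1])
    return {
        "nation": nation,
        "author": author,
        "press": fields[-3],
        "date": fields[-2],
        "price": price.group() if price else None,
    }


print(parse_pub_info("[美] 卡勒德·胡赛尼 / 李继宏 / 上海人民出版社 / 2006-5 / 29.00元"))
```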

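The list-of-lists problem is just as easy to solve:

```python
for i in people:
    if len(i) == 1:
        peo.append(i[0])
```

A length of 1 means the sub-list is not empty, so we take the value at index 0. The result is a flat list with no sub-lists.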
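One caveat with the loop above: a sub-list whose length is not 1 is skipped. That can leave peo shorter than the other lists, and pd.DataFrame raises a ValueError when the columns have different lengths. Below is a sketch that keeps exactly one entry per book and uses a placeholder (None here, an arbitrary choice) for empty sub-lists. `flatten_first` is a hypothetical name. It takes the first element of any non-empty sub-list, whereas the original accepts only sub-lists of length exactly 1.

```python
def flatten_first(sublists, missing=None):
    """Flatten [['13456'], [], ...] to ['13456', None, ...], one entry per input."""
    # First element of each non-empty sub-list; empty sub-lists become `missing`
    return [sub[0] if sub else missing for sub in sublists]


print(flatten_first([["13456"], [], ["987"]]))  # ['13456', None, '987']
```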

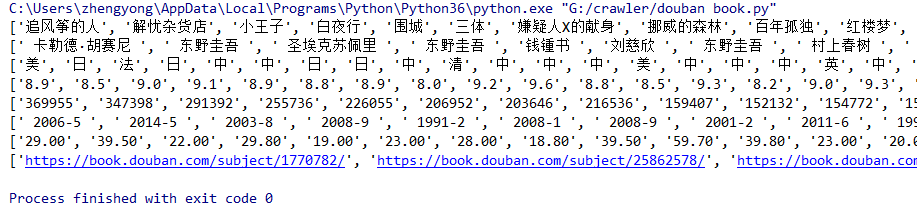

打印结果如下图:

基本是我们想要的了。

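Then write it to CSV:

```python
# The dict keys become the CSV column names:
# 书名 title, 作者 author, 国籍 nationality, 评分 score, 评分人数 number of ratings,
# 出版时间 publication date, 价格 price, 链接 link
dataframe = pd.DataFrame({'书名': bookname, '作者': author, '国籍': nation, '评分': score, '评分人数': peo, '出版时间': date, '价格': price, '链接': link})

# Save the DataFrame as CSV; index controls whether row labels are written (default=True)
dataframe.to_csv("C:/Users/zhengyong/Desktop/test.csv", index=False, encoding='utf-8-sig', sep=',')
```

Note: without encoding='utf-8-sig' the Chinese text comes out garbled (for example when the CSV is opened in Excel), so it is required here. Other approaches work too.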
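The `-sig` variant prepends a UTF-8 byte order mark (BOM). Excel typically uses the BOM to recognize the file as UTF-8 instead of falling back to a legacy code page. Below is a small stdlib check that writes a CSV with the `csv` module in the same encoding to a temporary file, then confirms the file starts with the BOM bytes. `write_csv_for_excel` is a hypothetical helper name.

```python
import codecs
import csv
import os
import tempfile


def write_csv_for_excel(path, header, rows):
    """Write a CSV file whose first bytes are the UTF-8 BOM."""
    # newline="" is what the csv module docs recommend for files used with csv.writer
    with open(path, "w", encoding="utf-8-sig", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(rows)


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "demo.csv")
    write_csv_for_excel(path, ["书名", "作者"], [["追风筝的人", "卡勒德·胡赛尼"]])
    with open(path, "rb") as f:
        raw = f.read()

print(raw.startswith(codecs.BOM_UTF8))  # True
```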

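The last issue is pagination. My extraction code isn't robust enough, so it kept failing on the later pages, but here is the approach anyway:

```python
for i in range(10):
    url = "https://book.douban.com/top250?start=" + str(i * 25)
    getcontent(url)
```

Note the str() call. Python raises a TypeError if you concatenate a str and an int.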
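Also note that, as written, every getcontent call writes test.csv again, so the loop would leave only the last page's data in the file. Below is a sketch of the alternative: collect all pages first, then write once. It assumes getcontent is changed to return its rows instead of writing them. `parse_page` is a hypothetical stand-in for that variant, and `page_urls` and `scrape_all` are hypothetical names.

```python
BASE_URL = "https://book.douban.com/top250?start="


def page_urls(pages=10, per_page=25):
    """URLs of the list pages: start=0, 25, ..., (pages - 1) * per_page."""
    return [BASE_URL + str(i * per_page) for i in range(pages)]


def scrape_all(parse_page, pages=10, per_page=25):
    """Call parse_page(url) on every page and concatenate the returned rows."""
    rows = []
    for url in page_urls(pages, per_page):
        rows.extend(parse_page(url))
    return rows


print(page_urls()[-1])  # https://book.douban.com/top250?start=225
```

The combined rows can then go into a single DataFrame and be written with one to_csv call.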

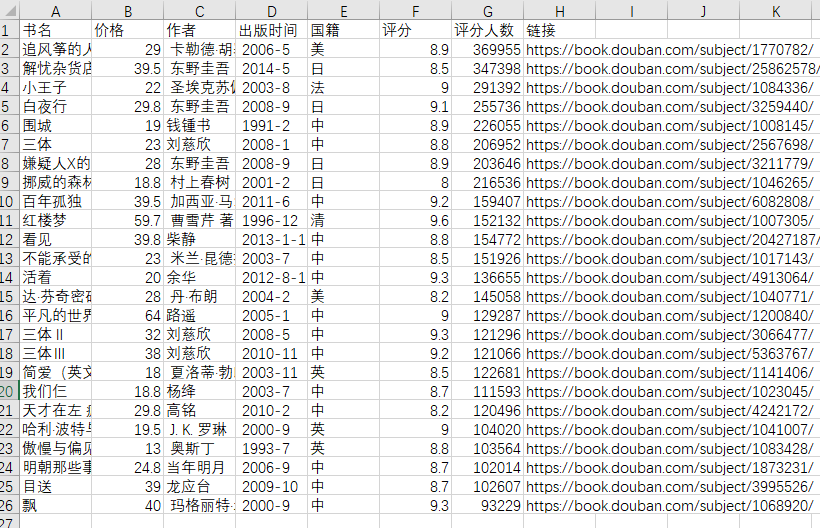

效果图:

其实这里的效果图与我写入csv的传人顺序不一致,后期我会看看原因。
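A likely explanation, not verified against the author's environment: pandas versions before 0.23 sorted the keys of a dict passed to pd.DataFrame instead of keeping insertion order. Passing the order explicitly, as in `pd.DataFrame(data, columns=[...])`, pins it regardless of version. The sketch below shows the same idea with the standard library's `csv.DictWriter`, whose `fieldnames` argument fixes the column order. `COLUMNS` mirrors the column names used above, and `records_to_csv` is a hypothetical name.

```python
import csv
import io

# Column order as passed to pd.DataFrame in the code above
COLUMNS = ["书名", "作者", "国籍", "评分", "评分人数", "出版时间", "价格", "链接"]


def records_to_csv(records, columns=COLUMNS):
    """Serialize dicts to CSV text with columns in exactly the given order."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=columns)  # missing keys are written as ""
    writer.writeheader()
    writer.writerows(records)
    return buf.getvalue()


# Dict keys deliberately given out of order; the output still follows COLUMNS
out = records_to_csv([{"链接": "https://book.douban.com/subject/1770782/", "书名": "追风筝的人"}])
print(out.splitlines()[0])  # 书名,作者,国籍,评分,评分人数,出版时间,价格,链接
```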

III. Summary

Be bold but careful, and above all careful. Details you don't dig into properly at the start turn into a lot of rework later.