This program builds a small neural network with an input layer, a hidden layer, and an output layer.
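As a compact summary of what the script computes, the three layers can be written as below. The symbols $W_1, b_1, W_2, b_2$ are shorthand introduced here for the script's `Weights_L1`, `biases_L1`, `Weights_L2`, and `biases_L2`, and $N$ is the number of data points (200 in the script).

$$
h = \tanh(x W_1 + b_1), \qquad
\hat{y} = \tanh(h W_2 + b_2), \qquad
\text{loss} = \frac{1}{N} \sum_{i=1}^{N} \left( y_i - \hat{y}_i \right)^2
$$

Here $x$ has shape $N \times 1$, $W_1$ is $1 \times 10$, $b_1$ is $1 \times 10$, $W_2$ is $10 \times 1$, and $b_2$ is $1 \times 1$, so $h$ is $N \times 10$ and $\hat{y}$ is $N \times 1$.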

Runtime environment: Ubuntu

(menpo) queen@queen-X550LD:~/Downloads/py $ python nonliner_regression.py

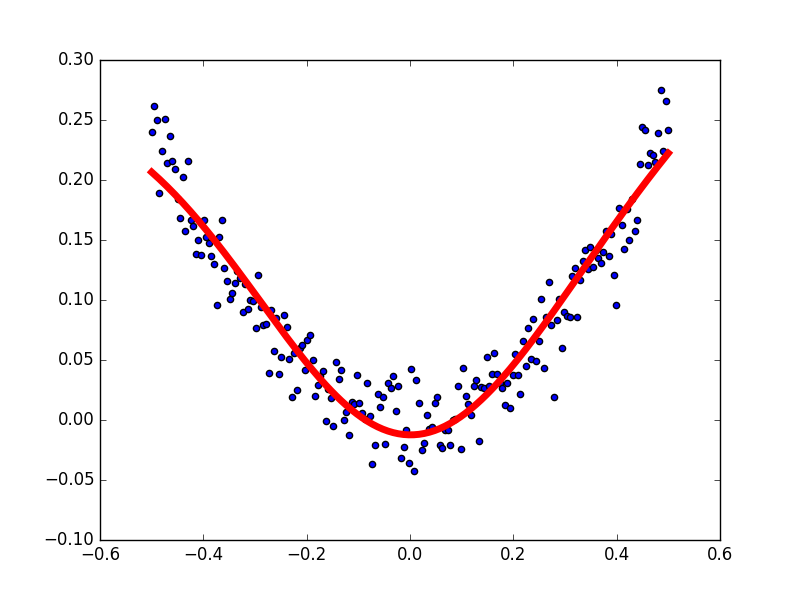

    # -*- coding: UTF-8 -*-
    # Define a neural network: 1 input element, 10 hidden neurons, 1 output element.
    # Written against the TensorFlow 1.x graph API (tf.placeholder, tf.Session).
    import numpy as np
    import matplotlib.pyplot as plt
    import tensorflow as tf

    # Use numpy to generate 200 evenly spaced points in [-0.5, 0.5]
    # and build noisy targets y = x^2 + Gaussian noise
    x_data = np.linspace(-0.5, 0.5, 200)[:, np.newaxis]
    noise = np.random.normal(0, 0.02, x_data.shape)
    y_data = np.square(x_data) + noise

    # Define two placeholders for the inputs and the targets
    x = tf.placeholder(tf.float32, [None, 1])
    y = tf.placeholder(tf.float32, [None, 1])

    # Hidden layer: 1 input element -> 10 neurons
    Weights_L1 = tf.Variable(tf.random_normal([1, 10]))
    biases_L1 = tf.Variable(tf.zeros([1, 10]))
    Wx_plus_b_L1 = tf.matmul(x, Weights_L1) + biases_L1
    L1 = tf.tanh(Wx_plus_b_L1)

    # Output layer: 10 hidden neurons -> 1 output element
    Weights_L2 = tf.Variable(tf.random_normal([10, 1]))
    biases_L2 = tf.Variable(tf.zeros([1, 1]))
    Wx_plus_b_L2 = tf.matmul(L1, Weights_L2) + biases_L2
    prediction = tf.tanh(Wx_plus_b_L2)

    # Quadratic cost function (mean squared error)
    loss = tf.reduce_mean(tf.square(y - prediction))
    # Minimize the loss with gradient descent, learning rate 0.1
    train_step = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    with tf.Session() as sess:
        # Initialize the variables
        sess.run(tf.global_variables_initializer())
        for _ in range(2000):
            sess.run(train_step, feed_dict={x: x_data, y: y_data})

        # Get the predicted values
        prediction_value = sess.run(prediction, feed_dict={x: x_data})

        # Plot the noisy data (scatter) and the fitted curve (red line)
        plt.figure()
        plt.scatter(x_data, y_data)
        plt.plot(x_data, prediction_value, 'r-', lw=5)
        plt.show()

Result plot
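To make the training step less of a black box, here is a minimal sketch of the same 1-10-1 network in plain NumPy, with the gradients written out by hand instead of being produced by `tf.train.GradientDescentOptimizer`. It is an illustration, not the original author's code: the names `rng`, `forward`, `mse`, `d_out`, and `d_hidden` are made up for this sketch, the random seed is an arbitrary choice, and the numbers will not match a TensorFlow run because the random initialization differs. Plotting is left out to keep it short.

```python
import numpy as np

rng = np.random.default_rng(0)  # arbitrary fixed seed, assumed for repeatability

# Same synthetic data as the script: y = x^2 plus Gaussian noise
x_data = np.linspace(-0.5, 0.5, 200)[:, np.newaxis]  # shape (200, 1)
noise = rng.normal(0, 0.02, x_data.shape)
y_data = np.square(x_data) + noise

# Parameters: 1 input -> 10 hidden units -> 1 output
W1 = rng.normal(size=(1, 10))
b1 = np.zeros((1, 10))
W2 = rng.normal(size=(10, 1))
b2 = np.zeros((1, 1))


def forward(x):
    """Return the hidden activations and the network output."""
    hidden = np.tanh(x @ W1 + b1)       # shape (N, 10)
    output = np.tanh(hidden @ W2 + b2)  # shape (N, 1)
    return hidden, output


def mse(pred, target):
    """Quadratic cost: mean of the squared errors."""
    return float(np.mean(np.square(target - pred)))


learning_rate = 0.1
n = x_data.shape[0]
initial_loss = mse(forward(x_data)[1], y_data)

for _ in range(2000):
    hidden, output = forward(x_data)
    # Backpropagate through both tanh layers, using tanh'(z) = 1 - tanh(z)^2
    d_out = 2.0 * (output - y_data) / n * (1.0 - output ** 2)  # (N, 1)
    d_hidden = (d_out @ W2.T) * (1.0 - hidden ** 2)            # (N, 10)
    # Gradient descent step: parameter -= learning_rate * gradient
    W2 -= learning_rate * (hidden.T @ d_out)
    b2 -= learning_rate * d_out.sum(axis=0, keepdims=True)
    W1 -= learning_rate * (x_data.T @ d_hidden)
    b1 -= learning_rate * d_hidden.sum(axis=0, keepdims=True)

final_loss = mse(forward(x_data)[1], y_data)
print(f"loss before training: {initial_loss:.5f}, after: {final_loss:.5f}")
```

The update lines are the whole of plain gradient descent: each parameter moves against its gradient, scaled by the learning rate 0.1 used in the script. Calling `forward(x_data)[1]` after the loop gives the fitted curve, which could be plotted with the same `plt.scatter` and `plt.plot` calls as in the script.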