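Step 1: Add the following JARs to the project as Maven dependencies. Watch for version conflicts between these packages and the ones the project already uses.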

<dependencies>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>3.8.1</version>
        <scope>test</scope>
    </dependency>
    <dependency>
        <groupId>commons-codec</groupId>
        <artifactId>commons-codec</artifactId>
        <version>1.10</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flume</groupId>
        <artifactId>flume-ng-core</artifactId>
        <version>1.7.0</version>
    </dependency>
    <dependency>
        <groupId>org.apache.flume.flume-ng-clients</groupId>
        <artifactId>flume-ng-log4jappender</artifactId>
        <version>1.7.0</version>
    </dependency>
    <dependency>
        <groupId>org.apache.kafka</groupId>
        <artifactId>kafka_2.10</artifactId>
        <version>0.8.2.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.kafka</groupId>
        <artifactId>kafka-log4j-appender</artifactId>
        <version>0.10.2.1</version>
    </dependency>
    <dependency>
        <groupId>org.slf4j</groupId>
        <artifactId>slf4j-log4j12</artifactId>
        <version>1.7.5</version>
    </dependency>
</dependencies>

Step 2: log4j configuration file

log4j.rootLogger=INFO, console, flume, KAFKA

log4j.appender.flume = org.apache.flume.clients.log4jappender.Log4jAppender

log4j.appender.flume.Hostname = 127.0.0.1

log4j.appender.flume.Port = 5555

log4j.appender.flume.UnsafeMode = true

## appender KAFKA

log4j.appender.KAFKA=kafka.producer.KafkaLog4jAppender

log4j.appender.KAFKA.topic=testsong

log4j.appender.KAFKA.brokerList=127.0.0.1:9092

log4j.appender.KAFKA.compressionType=none

log4j.appender.KAFKA.syncSend=true

log4j.appender.KAFKA.layout=org.apache.log4j.PatternLayout

# log4j 1.x sets a per-appender level with Threshold; nested ThresholdFilter.* keys are skipped by PropertyConfigurator

log4j.appender.KAFKA.Threshold=INFO

log4j.appender.KAFKA.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %-5p %c{1}:%L %% - %m%n

## appender console

log4j.appender.console=org.apache.log4j.ConsoleAppender

log4j.appender.console.target=System.out

log4j.appender.console.layout=org.apache.log4j.PatternLayout

log4j.appender.console.layout.ConversionPattern=%d (%t) [%p - %l] %m%n
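A mismatch between the appender names listed in log4j.rootLogger and the log4j.appender.* definitions is easy to overlook in a file like this. The following is a minimal sketch of a sanity check using only java.util.Properties. The class name Log4jConfigCheck and the method undefinedAppenders are hypothetical helpers, not part of log4j, and the sample string deliberately lists three names (stdout, R, L) that have no definition.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical helper (not part of log4j): reports appenders that
// log4j.rootLogger references but that have no log4j.appender.<name> entry.
class Log4jConfigCheck {

    static List<String> undefinedAppenders(Properties props) {
        List<String> missing = new ArrayList<>();
        String root = props.getProperty("log4j.rootLogger", "");
        String[] parts = root.split(",");
        // parts[0] is the level (e.g. INFO); the remaining entries are appender names.
        for (int i = 1; i < parts.length; i++) {
            String name = parts[i].trim();
            if (!name.isEmpty() && props.getProperty("log4j.appender." + name) == null) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        // Sample configuration: stdout, R and L are intentionally left undefined.
        String config =
              "log4j.rootLogger=INFO, stdout, R, L,flume,KAFKA\n"
            + "log4j.appender.flume = org.apache.flume.clients.log4jappender.Log4jAppender\n"
            + "log4j.appender.KAFKA=kafka.producer.KafkaLog4jAppender\n"
            + "log4j.appender.console=org.apache.log4j.ConsoleAppender\n";
        Properties props = new Properties();
        props.load(new StringReader(config));
        // prints "Undefined appenders: [stdout, R, L]"
        System.out.println("Undefined appenders: " + undefinedAppenders(props));
    }
}
```

An empty list means every appender named on the root logger has a definition. The check does not cover the reverse case of an appender that is defined but never attached to a logger.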

Step 3: Test code

package com.songflume.flume;

import java.util.Date;

import org.apache.log4j.Logger;

public class WriteLog {

    private static final Logger logger = Logger.getLogger(WriteLog.class);

    public static void main(String[] args) throws InterruptedException {
        // With the root logger at INFO, the debug calls below are filtered out.
        logger.debug("This is debug message.");
        logger.info("This is info message.");
        logger.error("This is error message.");

        // Emit 1000 numbered INFO messages, one every 100 ms.
        int i = 0;
        while (i < 1000) {
            logger.debug(new Date().getTime());
            logger.info("songceshi" + i);
            Thread.sleep(100);
            i++;
        }
    }
}

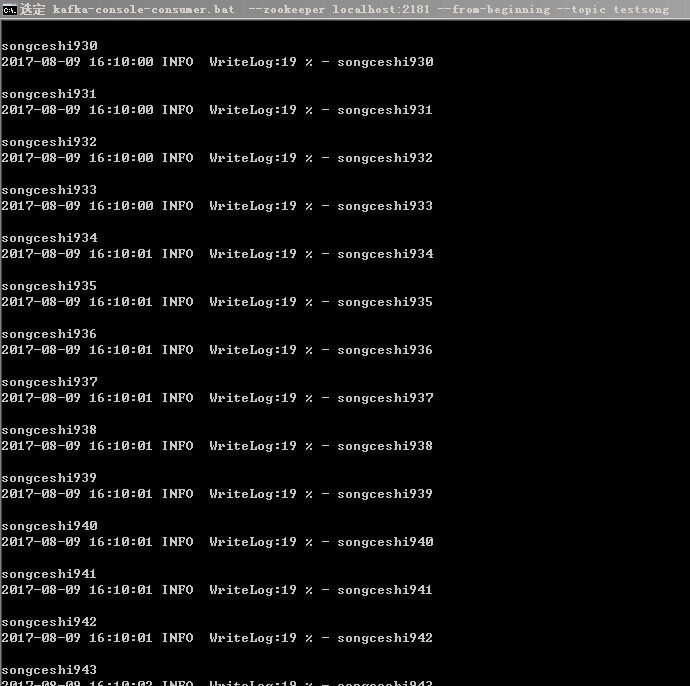

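Step 4: Kafka consumer test result. Two delivery paths are configured: one sends the log data to Flume, which then writes it to Kafka, and the other has log4j write directly to Kafka. As a result, every record shows up twice on the Kafka consumer side.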
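To confirm that each record arrived once per path, the consumed lines can be grouped by their payload. The sketch below is an assumption-laden illustration: the class DeliveryCount and its method are hypothetical, the input lines are hand-written stand-ins rather than real consumer output, and it assumes the two copies differ in formatting (one carries the PatternLayout prefix, the other only the message), so it matches on the songceshi<N> token instead of comparing whole lines.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: counts how many times each test payload was consumed.
class DeliveryCount {

    // Matches the payload written by the test code: "songceshi" followed by the loop index.
    private static final Pattern PAYLOAD = Pattern.compile("songceshi\\d+");

    static Map<String, Integer> countByPayload(List<String> consumedLines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : consumedLines) {
            Matcher m = PAYLOAD.matcher(line);
            if (m.find()) {
                counts.merge(m.group(), 1, Integer::sum);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Illustrative input only, not captured from a real consumer.
        List<String> consumed = Arrays.asList(
            "2017-06-01 10:00:00 INFO  WriteLog:22 % - songceshi0",
            "songceshi0",
            "2017-06-01 10:00:00 INFO  WriteLog:22 % - songceshi1",
            "songceshi1");
        // prints "{songceshi0=2, songceshi1=2}"
        System.out.println(countByPayload(consumed));
    }
}
```

A count of 2 for every payload is consistent with both paths delivering. A count of 1 would suggest that one of the two paths is not reaching the topic.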