Abstract

Collect Nginx logs with Elasticsearch, Logstash, and Kibana, using Redis as a buffer.

Versions

Elasticsearch version: elasticsearch-2.2.0

Logstash version: logstash-2.2.2

Kibana version: kibana-4.3.1-darwin-x64

JDK version: jdk1.8.0_65

Content

Target architecture

Preparation

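Install ELK and Redis by following this article:

ELK Stack (1): Installing ELK + Redis

The Nginx load balancer used in the CAS series serves as the example:

CAS (1): Configuring CAS on Tomcat on a Mac (server side)

CAS (5): Detailed configuration for browser access to the CAS server behind an Nginx proxy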

ELK configuration

Nginx

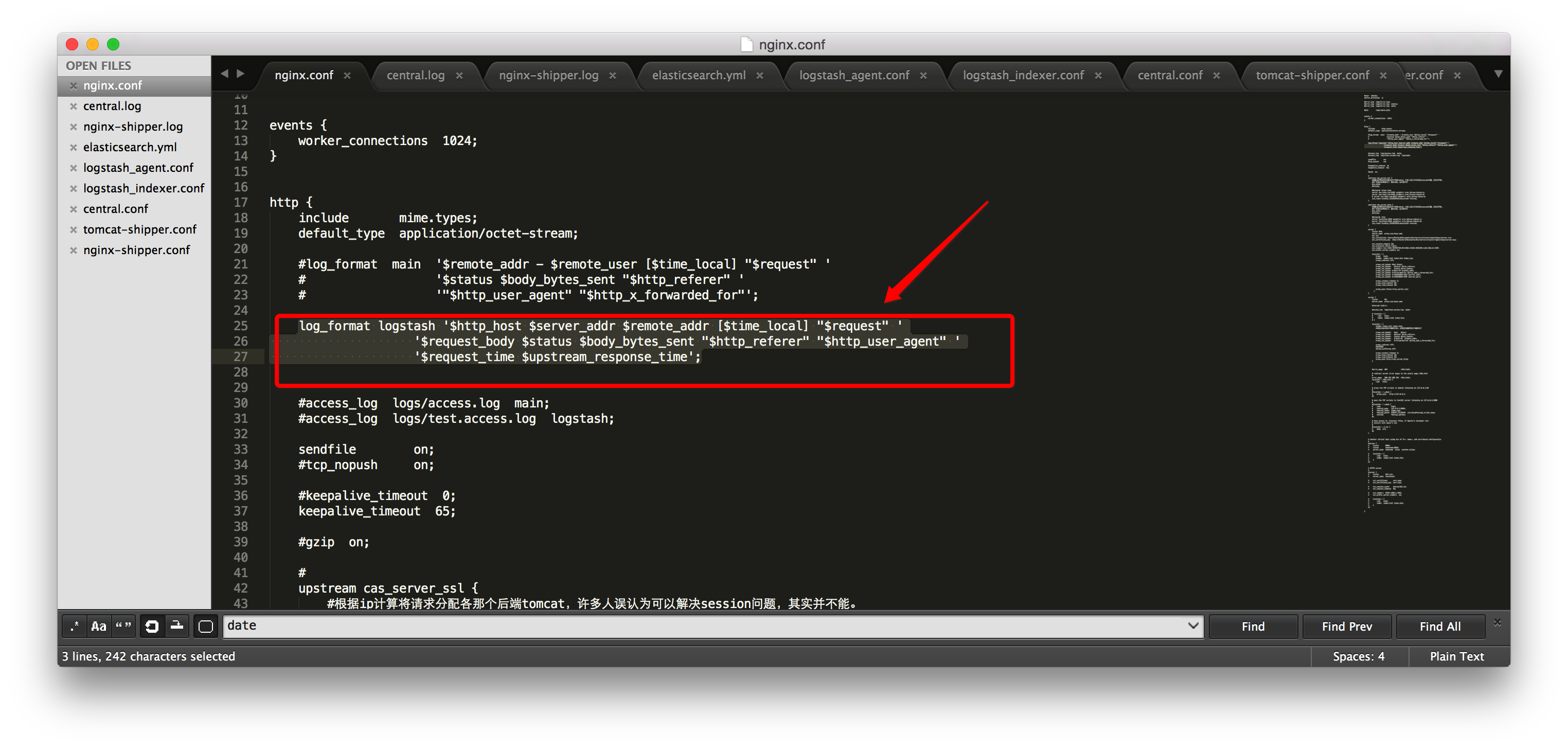

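Edit nginx.conf and define a log format named logstash. The format takes effect only for an access_log directive that references it by name.

    log_format logstash '$http_host $server_addr $remote_addr [$time_local] "$request" '
                        '$request_body $status $body_bytes_sent "$http_referer" "$http_user_agent" '
                        '$request_time $upstream_response_time';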
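To see which fields one line in this format carries, the following is a minimal Python sketch that mirrors the log_format with a regular expression. The sample line is hypothetical and written by hand to fit the format; the `parse_line` helper is an illustration only and is not part of Nginx or Logstash.

```python
import re

# Regex mirroring the `logstash` log_format field by field.
LOG_LINE = re.compile(
    r'(?P<http_host>\S+) (?P<server_addr>\S+) (?P<remote_addr>\S+) '
    r'\[(?P<time_local>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<request_body>\S+) (?P<status>\d{3}) (?P<body_bytes_sent>\d+) '
    r'"(?P<http_referer>[^"]*)" "(?P<http_user_agent>[^"]*)" '
    r'(?P<request_time>[\d.]+) (?P<upstream_response_time>[\d.]+|-)'
)

# Hypothetical sample line, written by hand to fit the format above.
SAMPLE = (
    'localhost 127.0.0.1 127.0.0.1 [23/Feb/2016:10:15:30 +0800] '
    '"GET /cas/login?service=app HTTP/1.1" - 200 512 "-" "curl/7.43.0" '
    '0.005 0.004'
)


def parse_line(line):
    """Return the log fields as a dict, or None if the line does not fit."""
    match = LOG_LINE.match(line)
    return match.groupdict() if match else None


fields = parse_line(SAMPLE)
print(fields["request"], fields["status"], fields["upstream_response_time"])
```

Running a few real lines from the access log through a check like this before writing the grok pattern makes mismatches between the log format and the pattern easier to spot.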

Elasticsearch

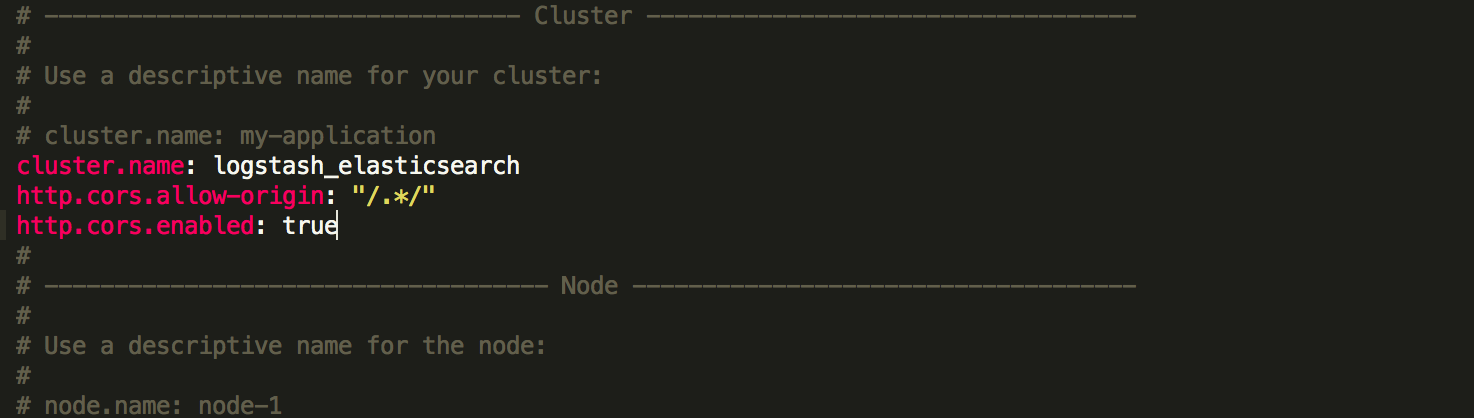

Edit elasticsearch.yml

    cluster.name: logstash_elasticsearch
    http.cors.allow-origin: "/.*/"
    http.cors.enabled: true

Logstash

/logstash/conf/logstash_agent.conf

    input {
      file {
        type => "nginx_access"
        path => ["/usr/share/nginx/logs/test.access.log"]
      }
    }

    output {
      redis {
        host => "localhost"
        data_type => "list"
        key => "logstash:redis"
      }
    }
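The agent and the indexer are decoupled by a Redis list: the agent appends events to the list at key logstash:redis, and the indexer removes them from the other end, so events queue up if the indexer falls behind. The sketch below illustrates that first-in, first-out behavior with an in-memory stand-in. `ListBuffer` is a hypothetical helper built on a deque, not a Redis client, and the event shape is a simplified assumption.

```python
import json
from collections import deque


class ListBuffer:
    """In-memory stand-in for one Redis list (hypothetical, not a Redis client)."""

    def __init__(self):
        self._items = deque()

    def rpush(self, value):
        # Append to the tail, returning the new length like Redis RPUSH.
        self._items.append(value)
        return len(self._items)

    def lpop(self):
        # Remove from the head, returning None when the list is empty.
        return self._items.popleft() if self._items else None


buffer = ListBuffer()

# Agent side: each log line becomes one JSON event appended to the list.
for line in ["first line", "second line"]:
    buffer.rpush(json.dumps({"type": "nginx_access", "message": line}))

# Indexer side: events come back out in arrival order.
events = []
while (raw := buffer.lpop()) is not None:
    events.append(json.loads(raw))

print([event["message"] for event in events])
```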

/logstash/conf/logstash_indexer.conf

    input {
      redis {
        host => "localhost"
        data_type => "list"
        key => "logstash:redis"
        type => "redis-input"
      }
    }

    filter {
      grok {
        # The three leading fields follow the nginx log_format:
        # $http_host $server_addr $remote_addr
        match => [
          "message", "%{URIHOST:http_host} %{IP:server_addr} %{IP:remote_addr} \[%{HTTPDATE:timestamp}\] \"%{WORD:http_verb} %{URIPATH:baseurl}(?:\?%{NOTSPACE:request}|) HTTP/%{NUMBER:http_version}\" (?:-|%{NOTSPACE:request}) %{NUMBER:http_status_code} (?:%{NUMBER:bytes_read}|-) %{QS:referrer} %{QS:agent} %{NUMBER:time_duration:float} (?:%{NUMBER:time_backend_response:float}|-)"
        ]
      }
      # Split the query string into request.<name> fields
      kv {
        prefix => "request."
        field_split => "&"
        source => "request"
      }
      urldecode {
        all_fields => true
      }
      # Old-style date filter, kept for comparison
      #date {
      #  type => "log-date"
      #  match => ["timestamp" , "dd/MMM/YYYY:HH:mm:ss Z"]
      #}
      # Parse the timestamp field captured by grok above
      date {
        match => ["timestamp", "dd/MMM/YYYY:HH:mm:ss Z"]
      }
    }

    output {
      elasticsearch {
        # Old-style options, kept for comparison
        #embedded => false
        #protocol => "http"
        hosts => ["localhost:9200"]
        index => "access-%{+YYYY.MM.dd}"
      }
    }

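Note that some examples found online use configuration written for older versions. In newer versions, the embedded and protocol options of the elasticsearch output and the date filter have changed.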
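The kv and urldecode filters in the indexer configuration turn the query string captured in the request field into separate, decoded fields prefixed with "request.". A rough Python approximation of that effect is sketched below. It is not how the filters are implemented: the `kv_then_urldecode` helper is hypothetical, and its handling of fragments without "=" is an assumption.

```python
from urllib.parse import unquote


def kv_then_urldecode(query, prefix="request."):
    """Split a query string on '&' and '=', then percent-decode each value."""
    fields = {}
    for pair in query.split("&"):
        key, sep, value = pair.partition("=")
        if not sep or not key:
            continue  # assumed: fragments that are not key=value are skipped
        fields[prefix + key] = unquote(value)
    return fields


# Hypothetical query string, as it might follow "?" in a request line.
print(kv_then_urldecode("service=http%3A%2F%2Flocalhost%2Fapp&locale=en"))
```

With fields shaped like this, a single query parameter such as request.locale can be filtered or aggregated in Kibana on its own.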

Kibana

Create an index pattern: access-*
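The pattern access-* works because the indexer writes one index per day, named by the index => "access-%{+YYYY.MM.dd}" setting from the event timestamp in UTC. The sketch below reproduces that naming in Python and checks the resulting names against the pattern. The timestamps are hypothetical, `index_name` is an illustrative helper, and `fnmatch` only approximates Kibana's wildcard matching.

```python
from datetime import datetime, timezone
from fnmatch import fnmatch


def index_name(event_time):
    """Daily index name for an event, mirroring "access-%{+YYYY.MM.dd}"."""
    return event_time.astimezone(timezone.utc).strftime("access-%Y.%m.%d")


# Hypothetical event timestamps.
times = [
    datetime(2016, 2, 23, 10, 15, tzinfo=timezone.utc),
    datetime(2016, 2, 24, 0, 5, tzinfo=timezone.utc),
]
names = [index_name(t) for t in times]

print(names)
print(all(fnmatch(name, "access-*") for name in names))
```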

Testing

Open the local Kibana instance at http://localhost:5601/

References

Sources:

logstash elasticsearch redis Kibana: configuration for collecting Nginx and Tomcat logs

Elastic + kibana + logstash + redis: analyzing mongodb and nginx logs

http://www.cnblogs.com/richaaaard/p/5210118.html