本文基于PaddlePaddle 1.7版本,解析动态图下的Transformer encoder源码实现。

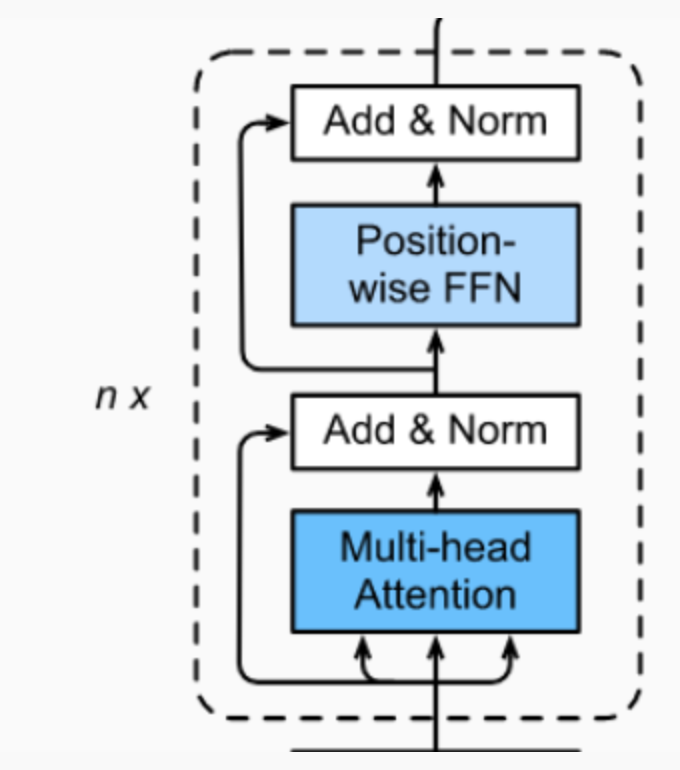

Transformer的每个Encoder子层(bert_base中包含12个encoder子层)包含 2 个小子层 :

- Multi-Head Attention

- Feed Forward

(Decoder中还包含Masked Multi-Head Attention)

class 有如下几个:

| PrePostProcessLayer | 用于添加残差连接、正则化、dropout |

| PositionwiseFeedForwardLayer | 全连接前馈神经网络 |

| MultiHeadAttentionLayer | 多头注意力层 |

| EncoderSubLayer | encoder子层 |

| EncoderLayer | transformer encoder层 |

在PaddlePaddle动态图中,网络层的实现继承paddle.fluid.dygraph.Layer,类内方法__init__是对网络层的定义,forward是跑前向时所需的计算。

具体实现如下,对代码的解释在注释中:

一些必要的导入

"dygraph transformer layers" from __future__ import absolute_import from __future__ import division from __future__ import print_function import numpy as np import paddle import paddle.fluid as fluid from paddle.fluid.dygraph import Embedding, LayerNorm, Linear, Layer

PrePostProcessLayer

可选模式:{ a: 残差连接,n: 层归一化,d: dropout}

残差连接

图中Add+Norm层。每经过一个模块的运算, 都要把运算之前的值和运算之后的值相加, 从而得到残差连接,残差可以使梯度直接走捷径反传到最初始层。

残差连接公式:

y=f(x)+x

x 表示输入的变量,实际就是跨层相加。

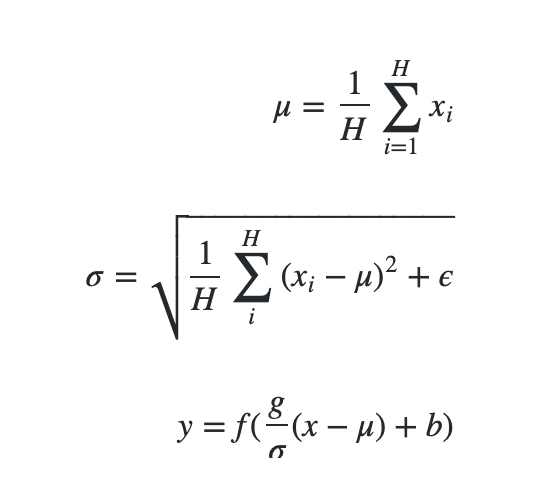

层归一化

LayerNorm实际就是对隐含层做层归一化,即对某一层的所有神经元的输入进行归一化(沿着通道channel方向),使得其加快训练速度:

层归一化公式:

x : 该层神经元的向量表示

H : 层中隐藏神经元个数

ϵ : 添加较小的值到方差中以防止除零

g : 可训练的比例参数

b : 可训练的偏差参数

dropout

丢弃或者保持x的每个元素独立。Dropout是一种正则化手段,通过在训练过程中阻止神经元节点间的相关性来减少过拟合。根据给定的丢弃概率,dropout操作符按丢弃概率随机将一些神经元输出设置为0,其他的仍保持不变。

dropout op可以从Program中删除,提高执行效率。

class PrePostProcessLayer(Layer): """ PrePostProcessLayer """ def __init__(self, process_cmd, d_model, dropout_rate, name): super(PrePostProcessLayer, self).__init__() self.process_cmd = process_cmd # 处理模式 a n d, 可选多个 self.functors = [] # 处理层 self.exec_order = "" # 根据处理模式,为处理层添加子层 for cmd in self.process_cmd: if cmd == "a": # add residual connection self.functors.append(lambda x, y: x + y if y else x) self.exec_order += "a" elif cmd == "n": # add layer normalization self.functors.append( self.add_sublayer( # name "layer_norm_%d" % len( self.sublayers(include_sublayers=False)), LayerNorm( normalized_shape=d_model, # 需规范化的shape,如果是单个整数,则此模块将在最后一个维度上规范化(此时最后一维的维度需与该参数相同)。 param_attr=fluid.ParamAttr( # 权重参数 name=name + "_layer_norm_scale", # 常量初始化函数,通过输入的value值初始化输入变量 initializer=fluid.initializer.Constant(1.)), bias_attr=fluid.ParamAttr( # 偏置参数 name=name + "_layer_norm_bias", initializer=fluid.initializer.Constant(0.))))) self.exec_order += "n" elif cmd == "d": # add dropout if dropout_rate: self.functors.append(lambda x: fluid.layers.dropout( x, dropout_prob=dropout_rate, is_test=False)) self.exec_order += "d" def forward(self, x, residual=None): for i, cmd in enumerate(self.exec_order): if cmd == "a": x = self.functors[i](x, residual) else: x = self.functors[i](x) return x

PositionwiseFeedForwardLayer

bert中hidden_act(激活函数)是gelu。

![]()

class PositionwiseFeedForwardLayer(Layer): """ PositionwiseFeedForwardLayer """ def __init__(self, hidden_act, # 激活函数 d_inner_hid, # 中间隐层的维度 d_model, # 最终输出的维度 dropout_rate, param_initializer=None, name=""): super(PositionwiseFeedForwardLayer, self).__init__() # 两个fc层 self._i2h = Linear( input_dim=d_model, output_dim=d_inner_hid, param_attr=fluid.ParamAttr( name=name + '_fc_0.w_0', initializer=param_initializer), bias_attr=name + '_fc_0.b_0', act=hidden_act) self._h2o = Linear( input_dim=d_inner_hid, output_dim=d_model, param_attr=fluid.ParamAttr( name=name + '_fc_1.w_0', initializer=param_initializer), bias_attr=name + '_fc_1.b_0') self._dropout_rate = dropout_rate def forward(self, x): """ forward :param x: :return: """ hidden = self._i2h(x) # dropout if self._dropout_rate: hidden = fluid.layers.dropout( hidden, dropout_prob=self._dropout_rate, upscale_in_train="upscale_in_train", is_test=False) out = self._h2o(hidden) return out

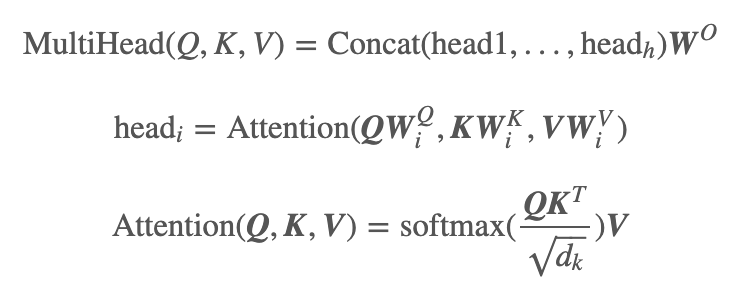

MultiHeadAttentionLayer

几个维度:

self._emb_size = config['hidden_size'] # 768

d_key=self._emb_size // self._n_head,

d_value=self._emb_size // self._n_head,

d_model=self._emb_size,

d_inner_hid=self._emb_size * 4

class MultiHeadAttentionLayer(Layer): """ MultiHeadAttentionLayer """ def __init__(self, d_key, d_value, d_model, n_head=1, dropout_rate=0., cache=None, gather_idx=None, static_kv=False, param_initializer=None, name=""): super(MultiHeadAttentionLayer, self).__init__() self._n_head = n_head self._d_key = d_key self._d_value = d_value self._d_model = d_model self._dropout_rate = dropout_rate self._q_fc = Linear( input_dim=d_model, output_dim=d_key * n_head, param_attr=fluid.ParamAttr( name=name + '_query_fc.w_0', initializer=param_initializer), bias_attr=name + '_query_fc.b_0') self._k_fc = Linear( input_dim=d_model, output_dim=d_key * n_head, param_attr=fluid.ParamAttr( name=name + '_key_fc.w_0', initializer=param_initializer), bias_attr=name + '_key_fc.b_0') self._v_fc = Linear( input_dim=d_model, output_dim=d_value * n_head, param_attr=fluid.ParamAttr( name=name + '_value_fc.w_0', initializer=param_initializer), bias_attr=name + '_value_fc.b_0') self._proj_fc = Linear( input_dim=d_value * n_head, output_dim=d_model, param_attr=fluid.ParamAttr( name=name + '_output_fc.w_0', initializer=param_initializer), bias_attr=name + '_output_fc.b_0') def forward(self, queries, keys, values, attn_bias): """ forward :param queries: :param keys: :param values: :param attn_bias: :return: """ # compute q ,k ,v keys = queries if keys is None else keys values = keys if values is None else values # 得到q k v 矩阵 q = self._q_fc(queries) k = self._k_fc(keys) v = self._v_fc(values) # split head q_hidden_size = q.shape[-1] eshaped_q = fluid.layers.reshape( x=q, shape=[0, 0, self._n_head, q_hidden_size // self._n_head], inplace=False) transpose_q = fluid.layers.transpose(x=reshaped_q, perm=[0, 2, 1, 3]) k_hidden_size = k.shape[-1] reshaped_k = fluid.layers.reshape( x=k, shape=[0, 0, self._n_head, k_hidden_size // self._n_head], inplace=False) transpose_k = fluid.layers.transpose(x=reshaped_k, perm=[0, 2, 1, 3]) v_hidden_size = v.shape[-1] reshaped_v = fluid.layers.reshape( x=v, shape=[0, 0, self._n_head, v_hidden_size // self._n_head], inplace=False) transpose_v = fluid.layers.transpose(x=reshaped_v, perm=[0, 2, 1, 3]) scaled_q = fluid.layers.scale(x=transpose_q, scale=self._d_key**-0.5) # scale dot product attention product = fluid.layers.matmul( #x=transpose_q, x=scaled_q, y=transpose_k, transpose_y=True) #alpha=self._d_model**-0.5) if attn_bias: product += attn_bias weights = fluid.layers.softmax(product) if self._dropout_rate: weights_droped = fluid.layers.dropout( weights, dropout_prob=self._dropout_rate, dropout_implementation="upscale_in_train", is_test=False) out = fluid.layers.matmul(weights_droped, transpose_v) else: out = fluid.layers.matmul(weights, transpose_v) # combine heads if len(out.shape) != 4: raise ValueError("Input(x) should be a 4-D Tensor.") trans_x = fluid.layers.transpose(out, perm=[0, 2, 1, 3]) final_out = fluid.layers.reshape( x=trans_x, shape=[0, 0, trans_x.shape[2] * trans_x.shape[3]], inplace=False) # fc to output proj_out = self._proj_fc(final_out) return proj_out

EncoderSubLayer

class EncoderSubLayer(Layer): """ EncoderSubLayer """ def __init__(self, hidden_act, n_head, d_key, d_value, d_model, d_inner_hid, prepostprocess_dropout, attention_dropout, relu_dropout, preprocess_cmd="n", postprocess_cmd="da", param_initializer=None, name=""): super(EncoderSubLayer, self).__init__() self.name = name self._preprocess_cmd = preprocess_cmd self._postprocess_cmd = postprocess_cmd self._prepostprocess_dropout = prepostprocess_dropout # 预处理 self._preprocess_layer = PrePostProcessLayer( self._preprocess_cmd, d_model, prepostprocess_dropout, name=name + "_pre_att") # 多头注意力 self._multihead_attention_layer = MultiHeadAttentionLayer( d_key, d_value, d_model, n_head, attention_dropout, None, None, False, param_initializer, name=name + "_multi_head_att") self._postprocess_layer = PrePostProcessLayer( self._postprocess_cmd, d_model, self._prepostprocess_dropout, name=name + "_post_att") self._preprocess_layer2 = PrePostProcessLayer( self._preprocess_cmd, d_model, self._prepostprocess_dropout, name=name + "_pre_ffn") self._positionwise_feed_forward = PositionwiseFeedForwardLayer( hidden_act, d_inner_hid, d_model, relu_dropout, param_initializer, name=name + "_ffn") self._postprocess_layer2 = PrePostProcessLayer( self._postprocess_cmd, d_model, self._prepostprocess_dropout, name=name + "_post_ffn") def forward(self, enc_input, attn_bias): """ forward :param enc_input: encoder 输入 :param attn_bias: attention 偏置 :return: 一层encoder encode输入之后的结果 """ # 在进行多头attention前,先进行预处理 pre_process_multihead = self._preprocess_layer(enc_input) # 预处理之后的结果给到多头attention层 attn_output = self._multihead_attention_layer(pre_process_multihead, None, None, attn_bias) # 经过attention之后进行后处理 attn_output = self._postprocess_layer(attn_output, enc_input) # 在给到FFN层前进行预处理 pre_process2_output = self._preprocess_layer2(attn_output) # 得到FFN层的结果 ffd_output = self._positionwise_feed_forward(pre_process2_output) # 返回后处理后的结果 return self._postprocess_layer2(ffd_output, attn_output)

EncoderLayer

class EncoderLayer(Layer): """ encoder """ def __init__(self, hidden_act, n_layer, # encoder子层数量 / encoder深度 n_head, # 注意力机制中head数量 d_key, d_value, d_model, d_inner_hid, prepostprocess_dropout, # 处理层的dropout概率 attention_dropout, # attention层的dropout概率 relu_dropout, # 激活函数层的dropout概率 preprocess_cmd="n", # 前处理,正则化 postprocess_cmd="da", # 后处理,dropout + 残差连接 param_initializer=None, name=""): super(EncoderLayer, self).__init__() self._preprocess_cmd = preprocess_cmd self._encoder_sublayers = list() self._prepostprocess_dropout = prepostprocess_dropout self._n_layer = n_layer self._hidden_act = hidden_act # 后处理层,这里是层正则化 self._preprocess_layer = PrePostProcessLayer( self._preprocess_cmd, 3, self._prepostprocess_dropout, "post_encoder") # 根据n_layer的设置(bert_base中是12)迭代定义几个encoder子层 for i in range(n_layer): self._encoder_sublayers.append( # 使用add_sublayer方法添加子层 self.add_sublayer( 'esl_%d' % i, EncoderSubLayer( hidden_act, n_head, d_key, d_value, d_model, d_inner_hid, prepostprocess_dropout, attention_dropout, relu_dropout, preprocess_cmd, postprocess_cmd, param_initializer, name=name + '_layer_' + str(i)))) def forward(self, enc_input, attn_bias): """ forward :param enc_input: 模型输入 :param attn_bias: bias项可根据具体情况选择是否保留 :return: encode之后的结果 """ # 迭代多个encoder子层,例如 bert base 的encoder子层数为12(self._n_layer) for i in range(self._n_layer): # 得到子层的输出,参数为 enc_input, attn_bias enc_output = self._encoder_sublayers[i](enc_input, attn_bias) # 该子层的输出作为下一子层的输入 enc_input = enc_output # 返回处理过的层 return self._preprocess_layer(enc_output)