抓取代码:

# coding=utf-8

import os

import re

from selenium import webdriver

import selenium.webdriver.support.ui as ui

from selenium.webdriver.common.keys import Keys

import time

from selenium.webdriver.common.action_chains import ActionChains

import IniFile

class weibo:

def __init__(self):

#通过配置文件获取IEDriverServer.exe路径

configfile = os.path.join(os.getcwd(),'config.conf')

cf = IniFile.ConfigFile(configfile)

IEDriverServer = cf.GetValue("section", "IEDriverServer")

#每抓取一页数据延迟的时间,单位为秒,默认为5秒

self.pageDelay = 5

pageInteralDelay = cf.GetValue("section", "pageInteralDelay")

if pageInteralDelay:

self.pageDelay = int(pageInteralDelay)

os.environ["webdriver.ie.driver"] = IEDriverServer

self.driver = webdriver.Ie(IEDriverServer)

def scroll_top(self):

'''

滚动条拉到顶部

:return:

'''

if self.driver.name == "chrome":

js = "var q=document.body.scrollTop=0"

else:

js = "var q=document.documentElement.scrollTop=0"

return self.driver.execute_script(js)

def scroll_foot(self):

'''

滚动条拉到底部

:return:

'''

if self.driver.name == "chrome":

js = "var q=document.body.scrollTop=10000"

else:

js = "var q=document.documentElement.scrollTop=10000"

return self.driver.execute_script(js)

def printTopic(self,topic):

print '原始数据: %s' % topic

print ' '

author_time_nums_index = topic.rfind('@')

ht = topic[:author_time_nums_index]

ht = ht.replace(' ', '')

print '话题: %s' % ht

author_time_nums = topic[author_time_nums_index:]

author_time = author_time_nums.split('ñ')[0]

nums = author_time_nums.split('ñ')[1]

pattern1 = re.compile(r'd{1,2}分钟前|今天s{1}d{2}:d{2}|d{1,2}月d{1,2}日s{1}d{2}:d{2}')

time1 = re.findall(pattern1, author_time)

print '话题作者: %s' % author_time.split(' ')[0]

# print '时间: %s' % author_time.split(' ')[1]

print '时间: %s' % time1[0]

print '点赞量: %s' % nums.split(' ')[0]

print '评论量: %s' % nums.split(' ')[1]

print '转发量: %s' % nums.split(' ')[2]

print ' '

def CatchData(self,listClass,firstUrl):

'''

抓取数据

:param id: 要获取元素标签的ID

:param firstUrl: 首页Url

:return:

'''

start = time.clock()

#加载首页

wait = ui.WebDriverWait(self.driver, 20)

self.driver.get(firstUrl)

#打印标题

print self.driver.title

# # 聚焦元素

# target = self.driver.find_element_by_id('J_ItemList')

# self.driver.execute_script("arguments[0].scrollIntoView();", target)

#滚动5次滚动条

Scrollcount = 5

while Scrollcount > 0:

Scrollcount = Scrollcount -1

self.scroll_foot() #滚动一次滚动条,定位查找一次

total = 0

for className in listClass:

time.sleep(10)

wait.until(lambda driver: self.driver.find_elements_by_xpath(className))

Elements = self.driver.find_elements_by_xpath(className)

for element in Elements:

print ' '

txt = element.text.encode('utf8')

self.printTopic(txt)

total = total + 1

self.driver.close()

self.driver.quit()

end = time.clock()

print ' '

print "共抓取了: %d 个话题" % total

print "整个过程用时间: %f 秒" % (end - start)

# #测试抓取微博数据

obj = weibo()

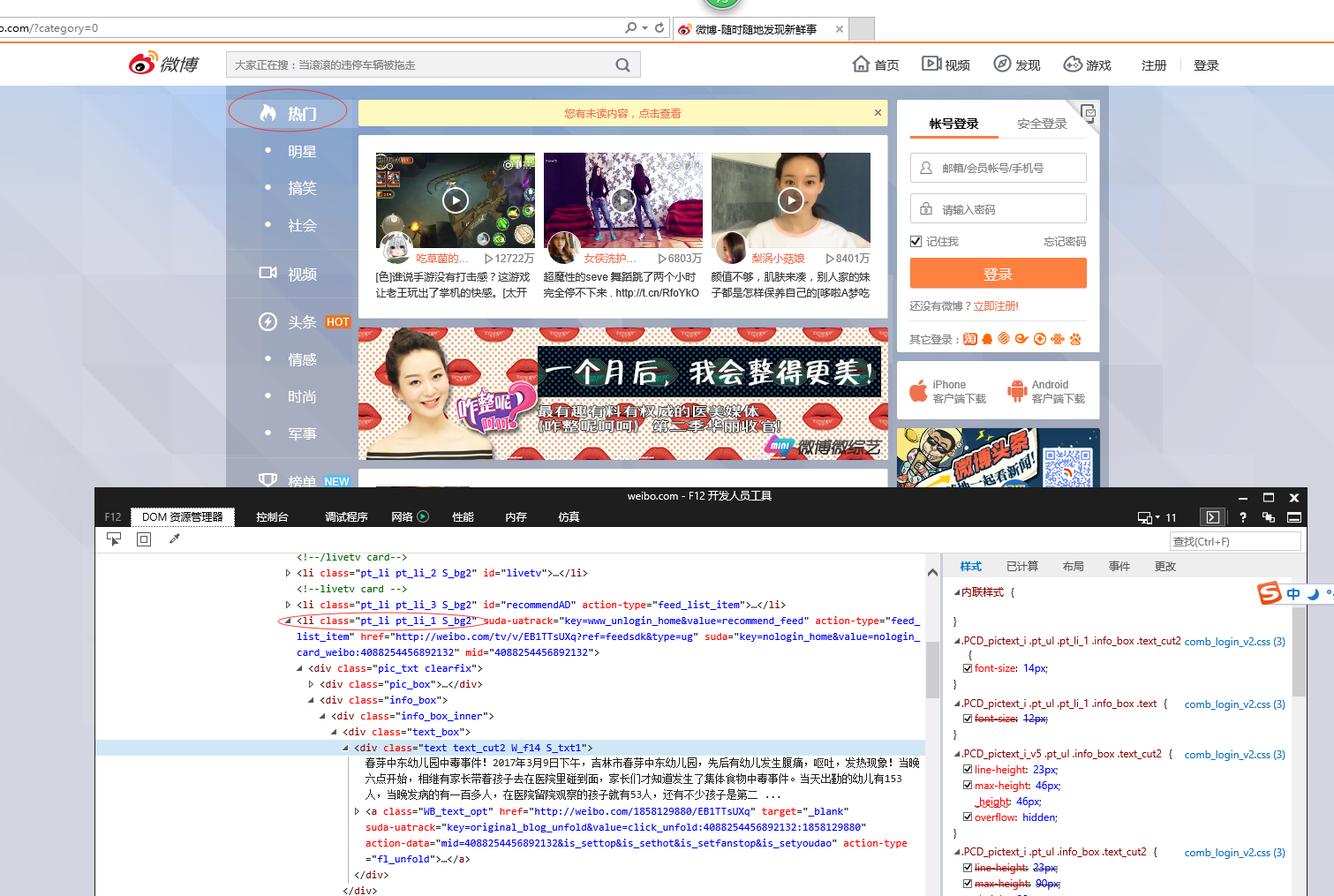

#pt_li pt_li_2 S_bg2

#pt_li pt_li_1 S_bg2

# firstUrl = "http://weibo.com/?category=0"

firstUrl = "http://weibo.com/?category=1760"

listClass = []

listClass.append("//li[@class='pt_li pt_li_1 S_bg2']")

listClass.append("//li[@class='pt_li pt_li_2 S_bg2']")

obj.CatchData(listClass,firstUrl)

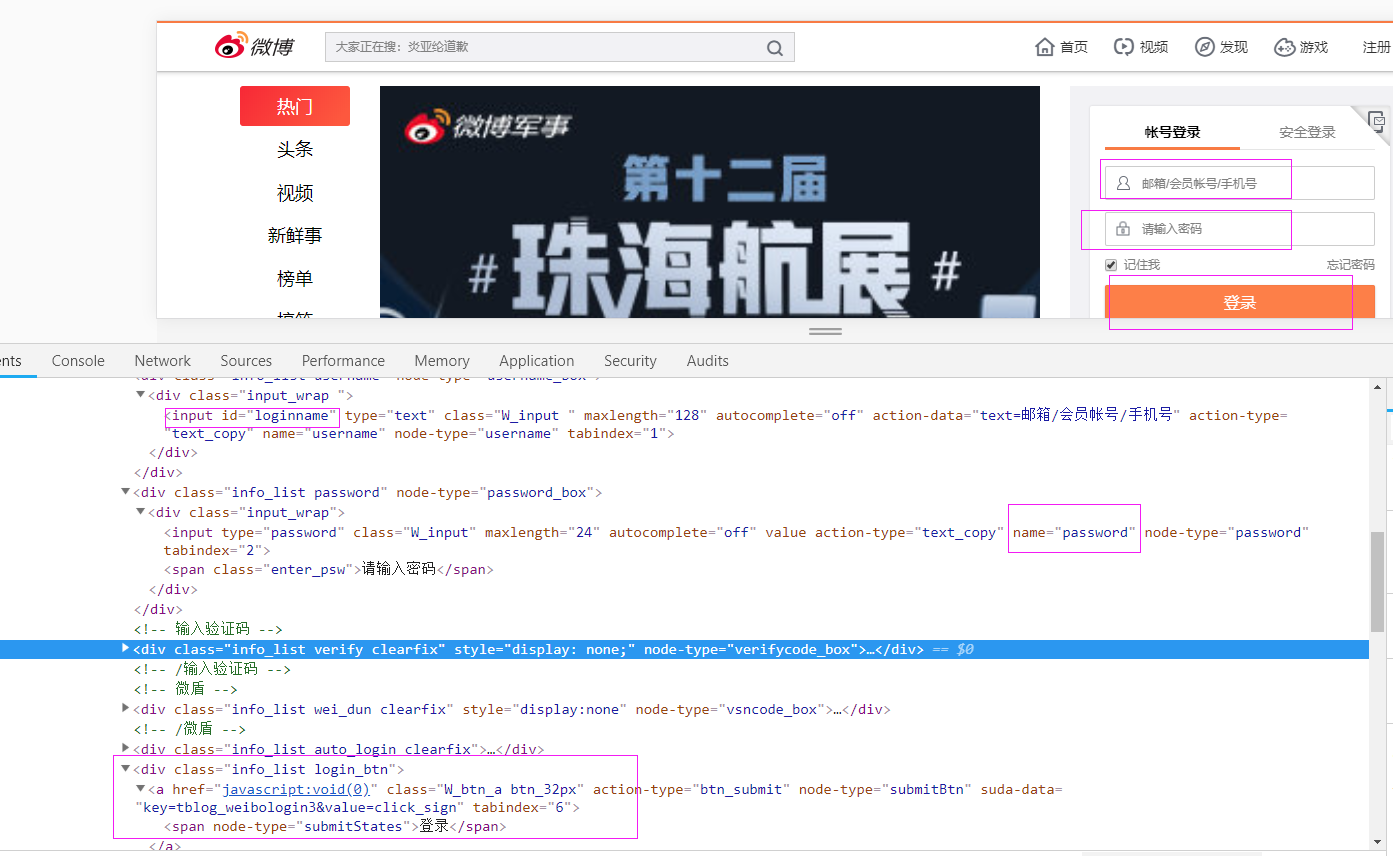

登录窗口

def longon(self): flag = True try: self.driver.get('https://weibo.com/') self.driver.maximize_window() time.sleep(2) accname = self.driver.find_element_by_id("loginname") accname.send_keys('username') accpwd = self.driver.find_element_by_name("password") accpwd.send_keys('password') submit = self.driver.find_element_by_xpath("//div[@class='info_list login_btn']/a") submit.click() time.sleep(2) except Exception as e1: message = str(e1.args) flag = False return flag