A fairly basic note.

===Workflow===

1. Create a table

2. Insert 10 rows

3. Inspect the HFile

===Steps===

1. Create the table

    package api;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.HColumnDescriptor;
    import org.apache.hadoop.hbase.HTableDescriptor;
    import org.apache.hadoop.hbase.TableName;
    import org.apache.hadoop.hbase.client.Admin;
    import org.apache.hadoop.hbase.client.Connection;
    import org.apache.hadoop.hbase.client.ConnectionFactory;
    import org.apache.hadoop.hbase.client.Durability;
    import org.apache.hadoop.hbase.regionserver.BloomType;

    public class create_table_sample1 {
        public static void main(String[] args) throws Exception {
            Configuration conf = HBaseConfiguration.create();
            conf.set("hbase.zookeeper.quorum", "192.168.1.80,192.168.1.81,192.168.1.82");

            // "constants" is a helper class in this package that holds the table and
            // column family names; it is not shown in this post.
            try (Connection connection = ConnectionFactory.createConnection(conf);
                 Admin admin = connection.getAdmin()) {
                HTableDescriptor desc = new HTableDescriptor(TableName.valueOf("TEST1"));
                desc.setMemStoreFlushSize(2097152L);      // 2 MB (default 128 MB)
                desc.setMaxFileSize(10485760L);           // 10 MB (default 10 GB)
                desc.setDurability(Durability.SYNC_WAL);  // WAL durability: sync on every write

                HColumnDescriptor family1 = new HColumnDescriptor(constants.COLUMN_FAMILY_DF.getBytes());
                family1.setTimeToLive(2 * 60 * 60 * 24);  // TTL in seconds (2 days)
                family1.setMaxVersions(2);                // max versions kept
                family1.setBlockCacheEnabled(false);
                desc.addFamily(family1);

                HColumnDescriptor family2 = new HColumnDescriptor(constants.COLUMN_FAMILY_EX.getBytes());
                family2.setTimeToLive(3 * 60 * 60 * 24);    // TTL in seconds (3 days)
                family2.setMinVersions(2);                  // min versions kept
                family2.setMaxVersions(3);                  // max versions kept
                family2.setBloomFilterType(BloomType.ROW);  // Bloom filter on row keys
                family2.setBlocksize(1024);
                family2.setBlockCacheEnabled(false);
                desc.addFamily(family2);

                admin.createTable(desc);
            }
        }
    }

2. Insert 10 rows

    package api;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.TableName;
    import org.apache.hadoop.hbase.client.*;

    import java.util.ArrayList;
    import java.util.List;
    import java.util.UUID;

    public class table_put_sample4 {
        public static void main(String[] args) throws Exception {
            Configuration conf = HBaseConfiguration.create();
            conf.set("hbase.zookeeper.quorum", "192.168.1.80,192.168.1.81,192.168.1.82");
            conf.set("hbase.client.write.buffer", "1048576"); // 1 MB client-side write buffer

            // "constants" and "random" are helper classes in this package (not shown
            // in this post); "random" supplies the sample values.
            try (Connection connection = ConnectionFactory.createConnection(conf);
                 BufferedMutator table = connection.getBufferedMutator(TableName.valueOf(constants.TABLE_NAME))) {
                List<Put> puts = new ArrayList<>();
                for (int i = 0; i < 10; i++) {
                    // Row key: "row" followed by a random UUID
                    Put put = new Put(("row" + UUID.randomUUID().toString()).getBytes());
                    put.addColumn(constants.COLUMN_FAMILY_DF.getBytes(), "name".getBytes(), random.getName());
                    put.addColumn(constants.COLUMN_FAMILY_DF.getBytes(), "sex".getBytes(), random.getSex());
                    put.addColumn(constants.COLUMN_FAMILY_EX.getBytes(), "height".getBytes(), random.getHeight());
                    put.addColumn(constants.COLUMN_FAMILY_EX.getBytes(), "weight".getBytes(), random.getWeight());
                    puts.add(put);
                }
                table.mutate(puts);
                table.flush(); // send the buffered puts to the region servers
            }
        }
    }

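3. Inspect the HFile

Command: hbase hfile -v -p -m -f hdfs://ns/hbase/data/default/TEST1/5cd31c374a3b30bb859175495cbd6905/df/9df89dc0db7f401e943c5ded6d49d956

Here -v is verbose output, -p prints the key/values, -m prints the file metadata, and -f names the file to read. The output follows.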

Scanning -> hdfs://ns/hbase/data/default/TEST1/5cd31c374a3b30bb859175495cbd6905/df/9df89dc0db7f401e943c5ded6d49d956

2017-09-29 03:53:57,233 INFO [main] hfile.CacheConfig: Created cacheConfig: CacheConfig:disabled

K: row0324f6ce-dec9-474a-b3fd-202b0c482756/df:name/1506670800587/Put/vlen=7/seqid=8 V: wang wu

K: row0324f6ce-dec9-474a-b3fd-202b0c482756/df:sex/1506670800587/Put/vlen=3/seqid=8 V: men

K: row284986a4-66c3-4ac6-96f1-76cbf66ec0b0/df:name/1506670800410/Put/vlen=7/seqid=4 V: wei liu

K: row284986a4-66c3-4ac6-96f1-76cbf66ec0b0/df:sex/1506670800410/Put/vlen=3/seqid=4 V: men

K: row5b3796d7-0d95-4114-b8fe-15a194b87172/df:name/1506670800559/Put/vlen=5/seqid=7 V: li si

K: row5b3796d7-0d95-4114-b8fe-15a194b87172/df:sex/1506670800559/Put/vlen=3/seqid=7 V: men

K: row620c7f4b-cb20-4175-b12b-5f71349ca52e/df:name/1506670800699/Put/vlen=7/seqid=12 V: wang wu

K: row620c7f4b-cb20-4175-b12b-5f71349ca52e/df:sex/1506670800699/Put/vlen=5/seqid=12 V: women

K: row91963615-e76f-4911-be04-fcfb1e47cf64/df:name/1506670800733/Put/vlen=7/seqid=13 V: wei liu

K: row91963615-e76f-4911-be04-fcfb1e47cf64/df:sex/1506670800733/Put/vlen=5/seqid=13 V: women

K: row98e7aeea-bd63-45f3-ad28-690256303b6a/df:name/1506670800677/Put/vlen=7/seqid=11 V: wang wu

K: row98e7aeea-bd63-45f3-ad28-690256303b6a/df:sex/1506670800677/Put/vlen=3/seqid=11 V: men

K: rowa0d3ac08-188a-4869-8dcd-43cd874ae34e/df:name/1506670800476/Put/vlen=7/seqid=5 V: wang wu

K: rowa0d3ac08-188a-4869-8dcd-43cd874ae34e/df:sex/1506670800476/Put/vlen=3/seqid=5 V: men

K: rowd0584d40-bf2c-4f07-90c9-394470cc54c7/df:name/1506670800611/Put/vlen=7/seqid=9 V: wei liu

K: rowd0584d40-bf2c-4f07-90c9-394470cc54c7/df:sex/1506670800611/Put/vlen=5/seqid=9 V: women

K: rowd5e46f02-7d22-444a-a086-f0936ca81728/df:name/1506670800652/Put/vlen=7/seqid=10 V: wang wu

K: rowd5e46f02-7d22-444a-a086-f0936ca81728/df:sex/1506670800652/Put/vlen=3/seqid=10 V: men

K: rowf17bfb40-f658-4b4b-a9da-82abf455f4e6/df:name/1506670800531/Put/vlen=5/seqid=6 V: li si

K: rowf17bfb40-f658-4b4b-a9da-82abf455f4e6/df:sex/1506670800531/Put/vlen=3/seqid=6 V: men

Block index size as per heapsize: 432

reader=hdfs://ns/hbase/data/default/TEST1/5cd31c374a3b30bb859175495cbd6905/df/9df89dc0db7f401e943c5ded6d49d956,

compression=none,

cacheConf=CacheConfig:disabled,

firstKey=row0324f6ce-dec9-474a-b3fd-202b0c482756/df:name/1506670800587/Put,

lastKey=rowf17bfb40-f658-4b4b-a9da-82abf455f4e6/df:sex/1506670800531/Put,

avgKeyLen=56,

avgValueLen=5,

entries=20,

length=6440

Trailer:

fileinfoOffset=1646,

loadOnOpenDataOffset=1502,

dataIndexCount=1,

metaIndexCount=0,

totalUncomressedBytes=6313,

entryCount=20,

compressionCodec=NONE,

uncompressedDataIndexSize=70,

numDataIndexLevels=1,

firstDataBlockOffset=0,

lastDataBlockOffset=0,

comparatorClassName=org.apache.hadoop.hbase.KeyValue$KeyComparator,

encryptionKey=NONE,

majorVersion=3,

minorVersion=0

Fileinfo:

BLOOM_FILTER_TYPE = ROW

DELETE_FAMILY_COUNT = \x00\x00\x00\x00\x00\x00\x00\x00

EARLIEST_PUT_TS = \x00\x00\x01^\xCC\x93\xEE\x1A

KEY_VALUE_VERSION = \x00\x00\x00\x01

LAST_BLOOM_KEY = rowf17bfb40-f658-4b4b-a9da-82abf455f4e6

MAJOR_COMPACTION_KEY = \x00

MAX_MEMSTORE_TS_KEY = \x00\x00\x00\x00\x00\x00\x00\x0D

MAX_SEQ_ID_KEY = 15

TIMERANGE = 1506670800410....1506670800733

hfile.AVG_KEY_LEN = 56

hfile.AVG_VALUE_LEN = 5

hfile.CREATE_TIME_TS = \x00\x00\x01^\xCC\x9B\xAD\xCF

hfile.LASTKEY = \x00'rowf17bfb40-f658-4b4b-a9da-82abf455f4e6\x02dfsex\x00\x00\x01^\xCC\x93\xEE\x93\x04

Mid-key: \x00'row0324f6ce-dec9-474a-b3fd-202b0c482756\x02dfname\x00\x00\x01^\xCC\x93\xEE\xCB\x04

Bloom filter:

BloomSize: 16

No of Keys in bloom: 10

Max Keys for bloom: 13

Percentage filled: 77%

Number of chunks: 1

Comparator: RawBytesComparator

Delete Family Bloom filter:

Not present

Scanned kv count -> 20
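Some Fileinfo values in this output, such as EARLIEST_PUT_TS, are 8-byte big-endian longs printed in an escaped form: non-printable bytes appear as \xNN, while printable ones appear as plain ASCII (which is why the byte 0x5E shows up as ^). The sketch below decodes such a string back into a number, so it can be compared with the timestamps in the key lines. The class and method names are made up for illustration, and the input is written with the backslash escapes in place.

```java
import java.io.ByteArrayOutputStream;
import java.nio.ByteBuffer;

final class FileInfoTimestamp {

    // Turns a string like "\x00\x00\x01^\xCC\x93\xEE\x1A" back into raw bytes:
    // "\xNN" becomes the byte 0xNN, any other character is taken as its ASCII code.
    static byte[] unescape(String s) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        int i = 0;
        while (i < s.length()) {
            char c = s.charAt(i);
            if (c == '\\' && i + 3 < s.length() && s.charAt(i + 1) == 'x') {
                out.write(Integer.parseInt(s.substring(i + 2, i + 4), 16));
                i += 4;
            } else {
                out.write(c);
                i++;
            }
        }
        return out.toByteArray();
    }

    // Reads the first 8 bytes as a big-endian long (ByteBuffer's default byte order).
    static long toLong(byte[] bytes) {
        return ByteBuffer.wrap(bytes).getLong();
    }

    public static void main(String[] args) {
        long earliestPut = toLong(unescape("\\x00\\x00\\x01^\\xCC\\x93\\xEE\\x1A"));
        System.out.println(earliestPut); // prints 1506670800410
    }
}
```

The decoded value equals the lower bound of TIMERANGE and the timestamp of the row284986a4... cells, which are the earliest puts in this file.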

===Tips===

1. Where are the HFiles stored?

Method 1:

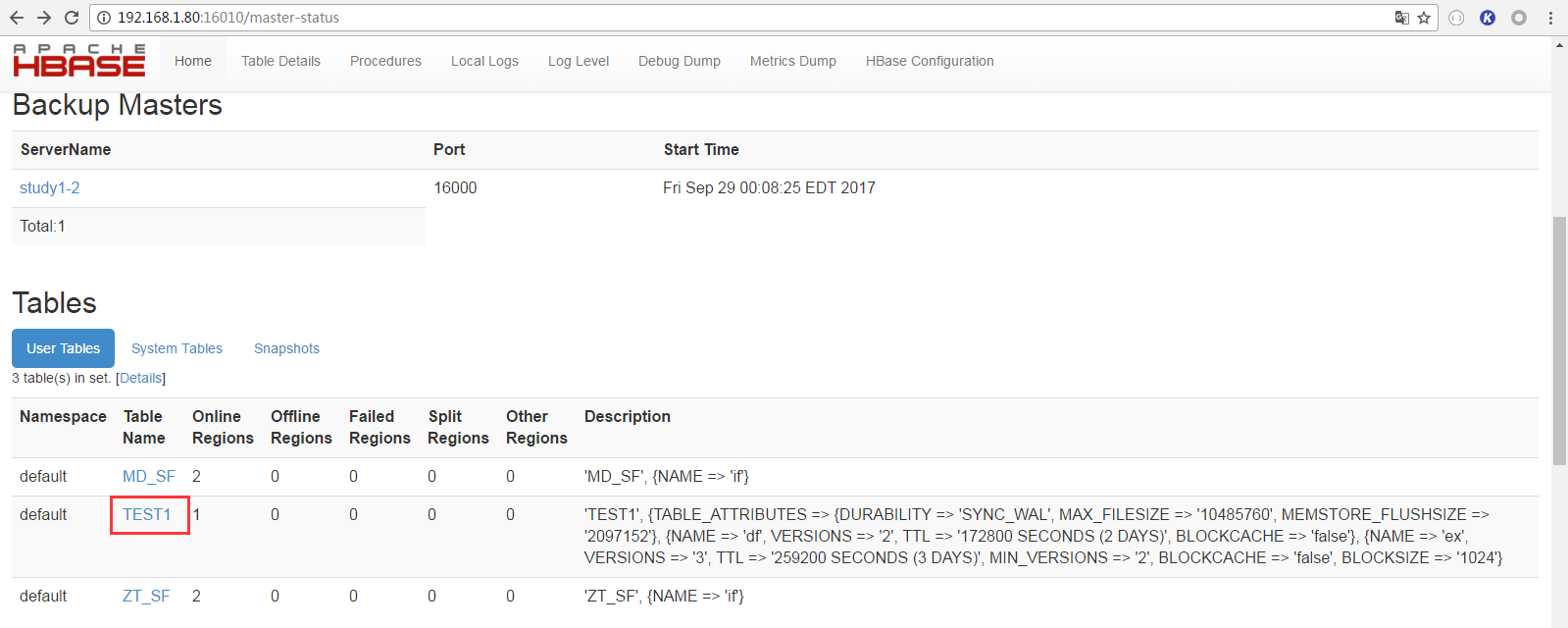

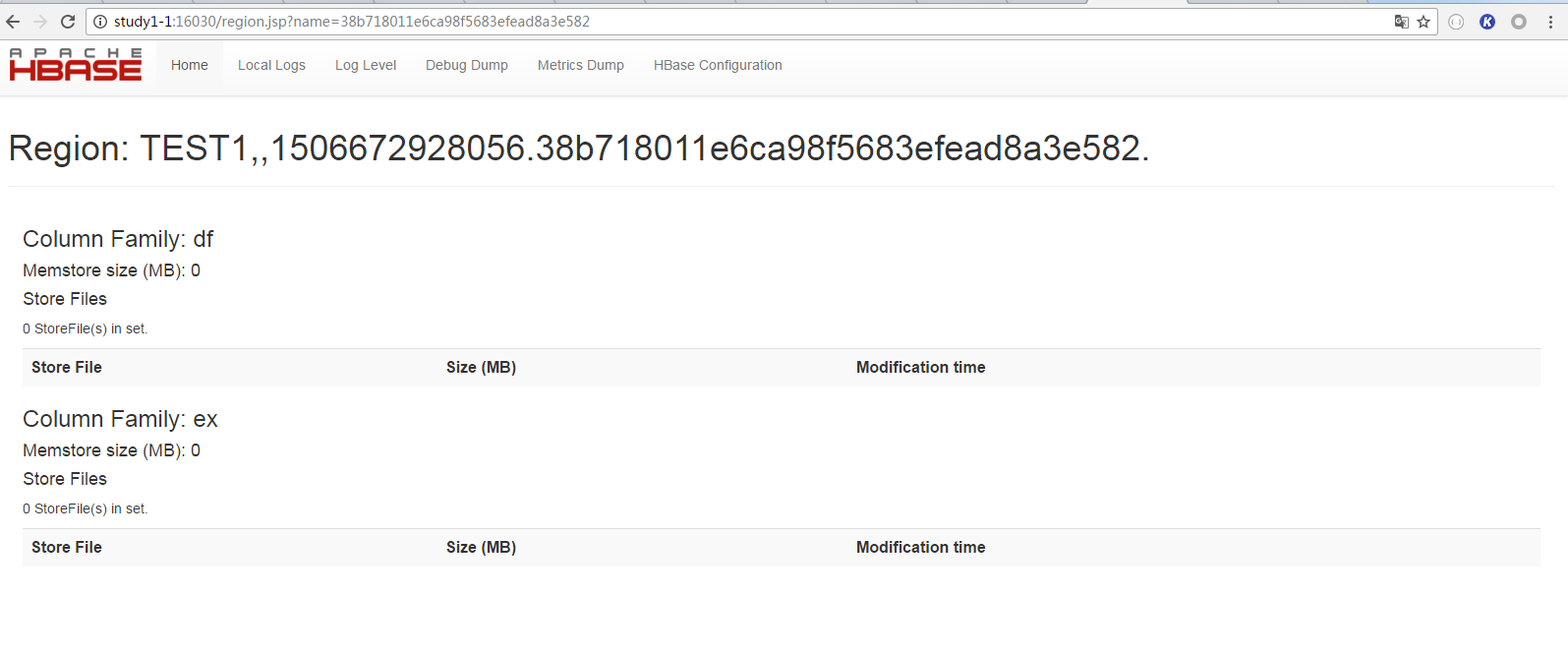

The HFile names and paths can be looked up in the HBase web UI, as follows:

① Open the web management page and select the table

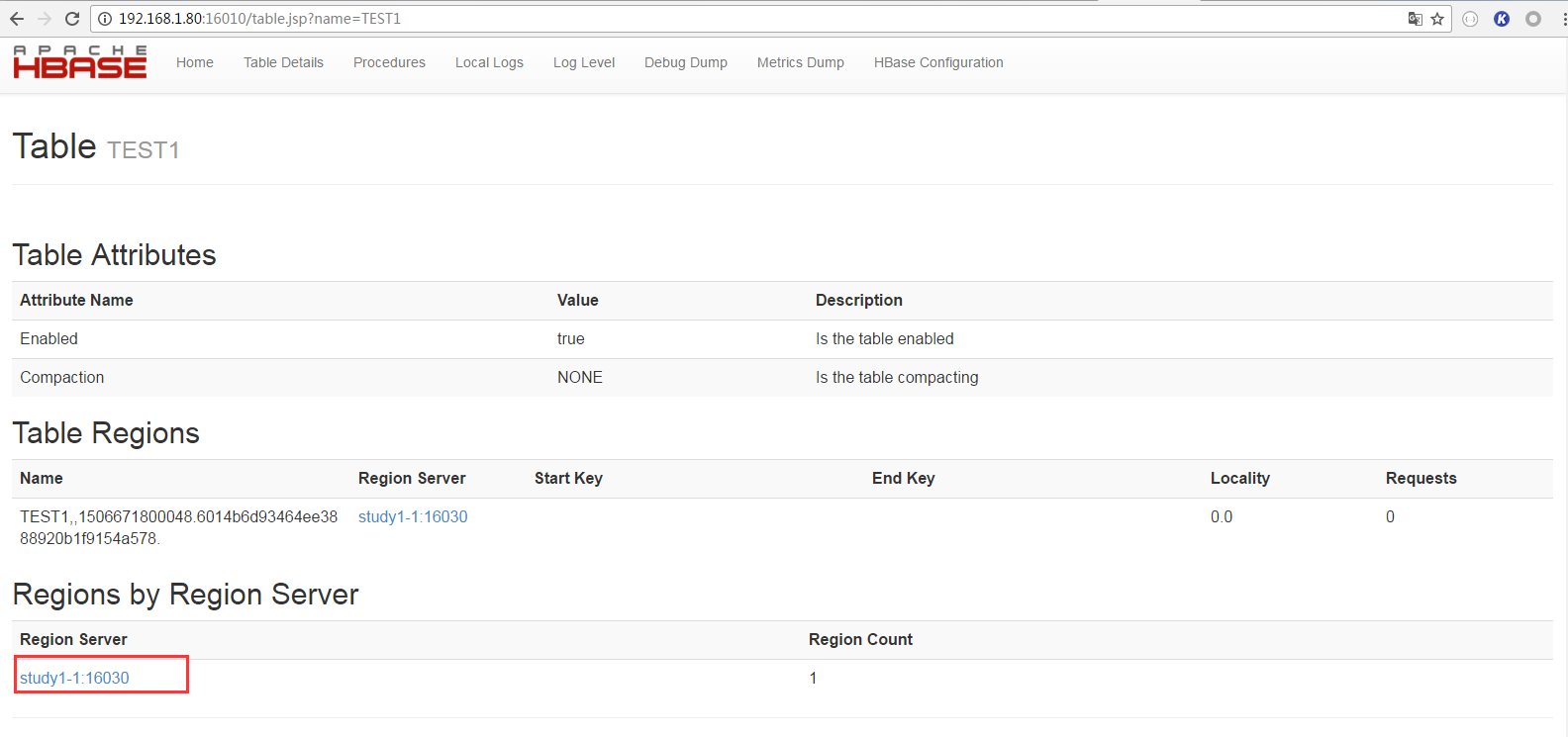

② Select the region server

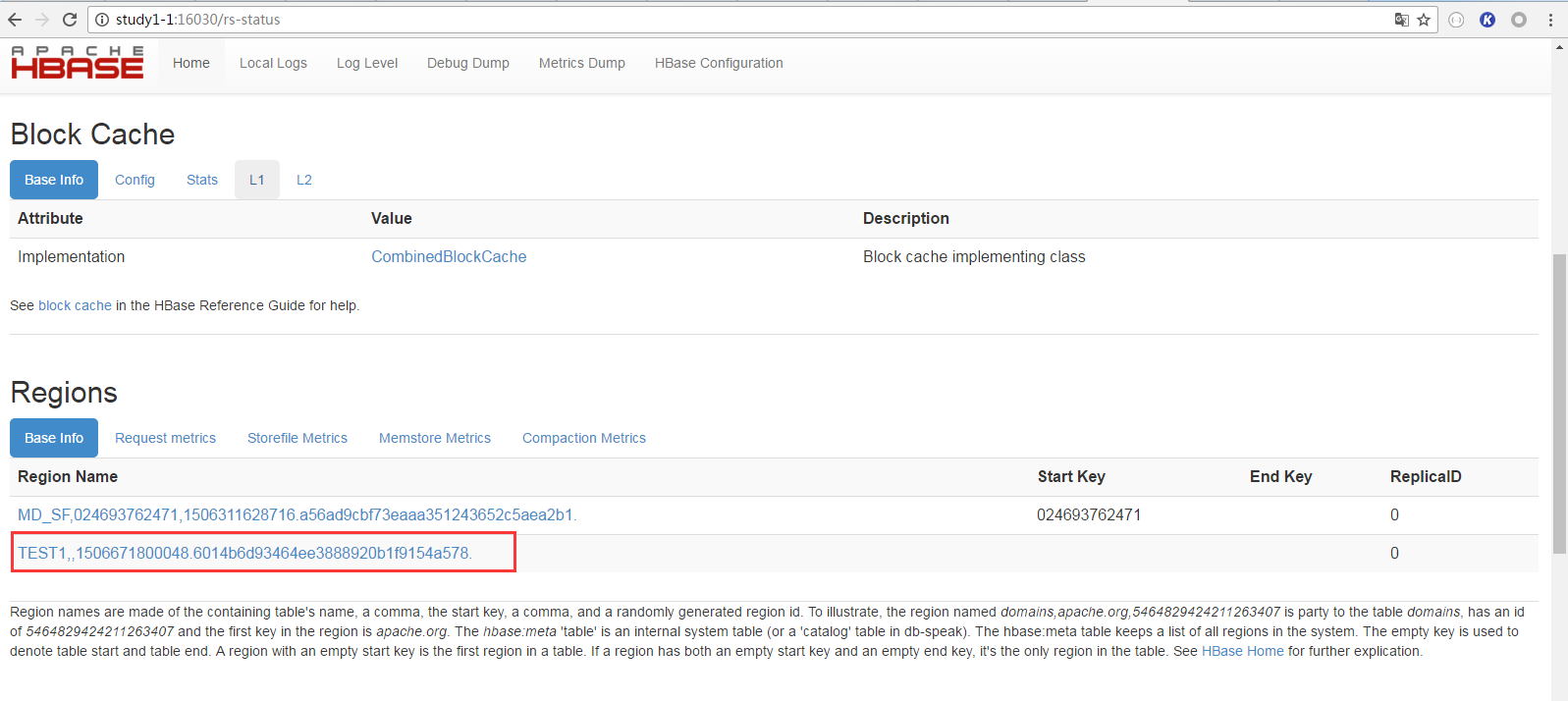

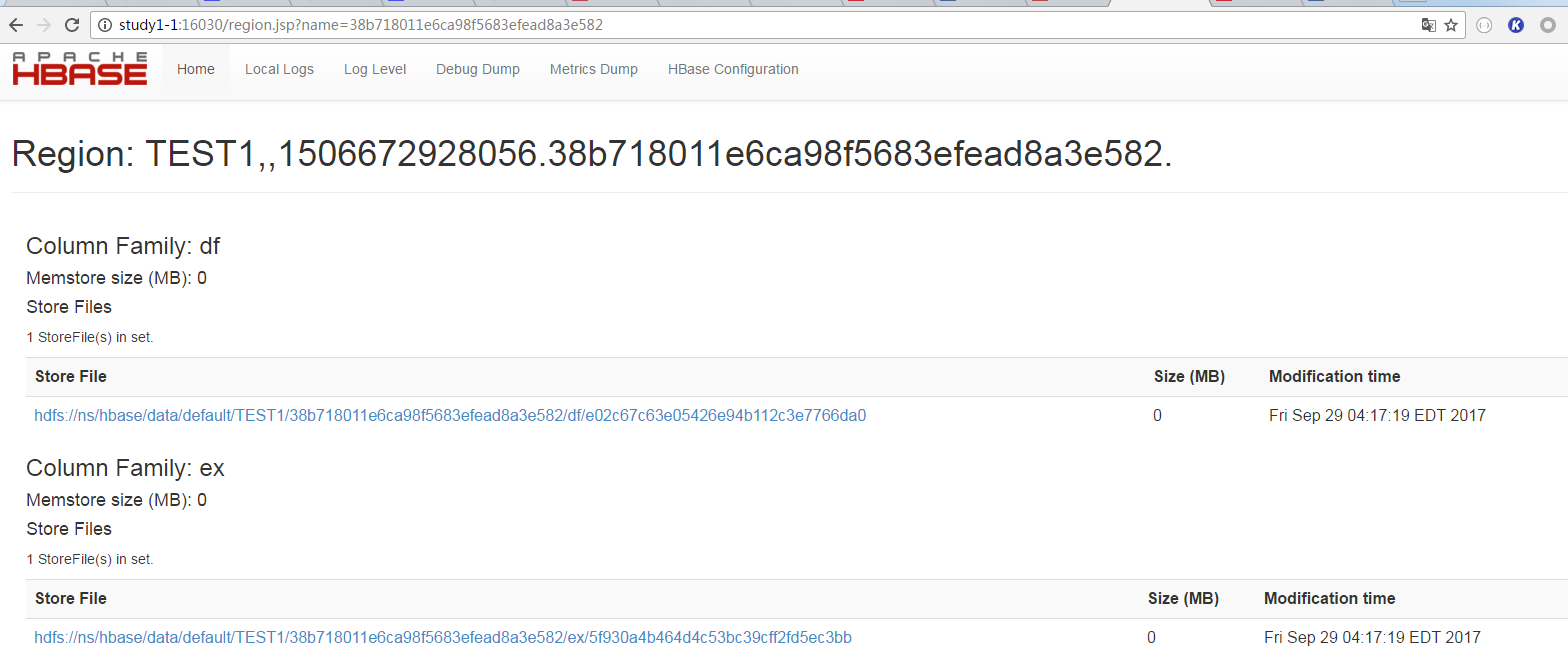

③ Select the region

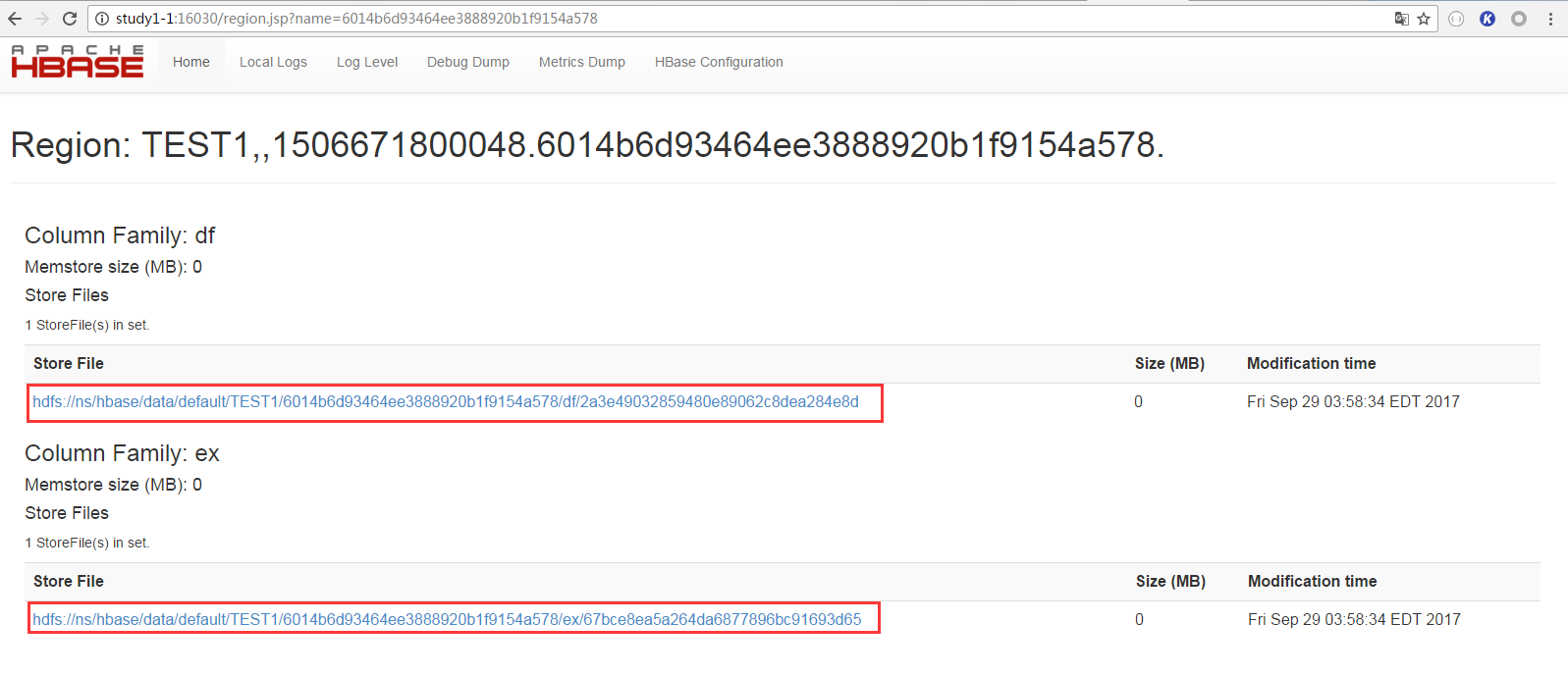

④ Check the HFile path

HFiles are stored per column family. The table I created has two column families, so two HFiles show up here, one per family.

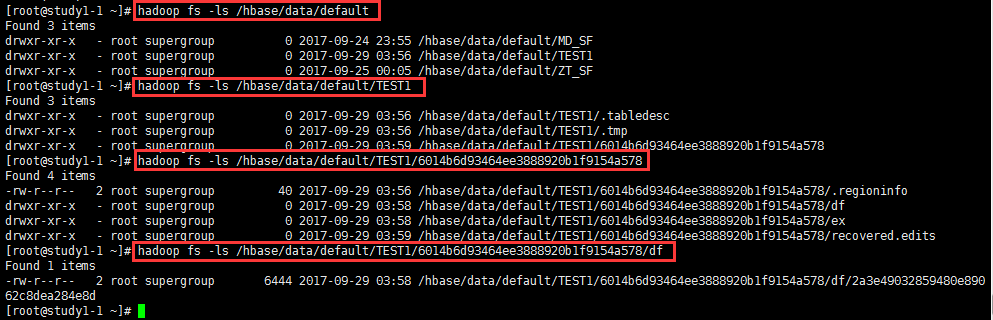

Method 2:

Use hdfs commands directly and walk down the directory tree one level at a time.

Sample command: hadoop fs -ls /hbase/data/default
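Walking down from /hbase/data/default leads to paths like the one passed to hbase hfile earlier. Judging from that path, the layout is data/<namespace>/<table>/<region>/<column family>/<hfile>. The sketch below splits such a path into its parts; it assumes exactly that layout, and the HFilePath class is a made-up helper, not part of HBase.

```java
import java.net.URI;

final class HFilePath {
    final String namespace;
    final String table;
    final String region;
    final String family;
    final String file;

    private HFilePath(String namespace, String table, String region, String family, String file) {
        this.namespace = namespace;
        this.table = table;
        this.region = region;
        this.family = family;
        this.file = file;
    }

    // Assumes the layout <root>/data/<namespace>/<table>/<region>/<family>/<hfile>,
    // as seen in the path used in this post.
    static HFilePath parse(String location) {
        String[] parts = URI.create(location).getPath().split("/");
        int n = parts.length;
        if (n < 6 || !"data".equals(parts[n - 6])) {
            throw new IllegalArgumentException("not an HFile path: " + location);
        }
        return new HFilePath(parts[n - 5], parts[n - 4], parts[n - 3], parts[n - 2], parts[n - 1]);
    }

    public static void main(String[] args) {
        HFilePath p = parse("hdfs://ns/hbase/data/default/TEST1/"
                + "5cd31c374a3b30bb859175495cbd6905/df/9df89dc0db7f401e943c5ded6d49d956");
        System.out.println(p.table + " / " + p.region + " / " + p.family); // TEST1 / 5cd31c374a3b30bb859175495cbd6905 / df
    }
}
```

Reading the path this way shows which directory to list next at each level: table, then region, then column family.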

2. Why can I scan the data when there is no HFile?

After the program inserts data into HBase, a scan returns the data, but there is no HFile on HDFS.

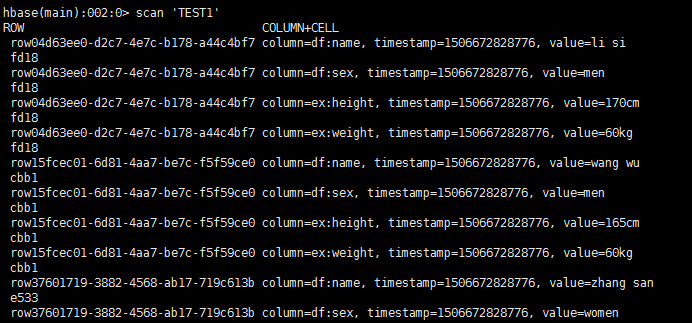

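As the screenshot shows, scan 'TEST1' returns the rows in the table.

Yet no HFile shows up in the web UI.

Cause:

The inserted data is still in the memstore (the write cache) and has not been flushed to HDFS yet.

Fix: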

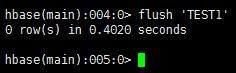

Flush manually. The hbase shell has a flush command that flushes a given table on demand.

After the flush, the HFile is visible.
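To make the reasoning concrete, here is a toy model of the behavior described in this tip. It is not how HBase is implemented: ToyStore and all its members are invented for illustration, with a sorted in-memory map standing in for the memstore and a list of maps standing in for the HFiles on HDFS.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.TreeMap;

final class ToyStore {
    // In-memory write buffer sorted by row key (stands in for the memstore).
    private TreeMap<String, String> memstore = new TreeMap<>();
    // Flushed, sorted snapshots (stand in for HFiles on HDFS), oldest first.
    private final List<TreeMap<String, String>> files = new ArrayList<>();

    // Writes only touch the in-memory buffer.
    void put(String row, String value) {
        memstore.put(row, value);
    }

    // A scan merges the flushed files with the memstore; newer data wins.
    TreeMap<String, String> scan() {
        TreeMap<String, String> result = new TreeMap<>();
        for (TreeMap<String, String> file : files) {
            result.putAll(file);
        }
        result.putAll(memstore);
        return result;
    }

    // A flush turns the current buffer into a new "file" and starts a fresh buffer.
    void flush() {
        if (memstore.isEmpty()) {
            return;
        }
        files.add(memstore);
        memstore = new TreeMap<>();
    }

    int fileCount() {
        return files.size();
    }

    public static void main(String[] args) {
        ToyStore store = new ToyStore();
        store.put("row1", "li si");
        store.put("row2", "wang wu");
        System.out.println(store.scan().size() + " rows, " + store.fileCount() + " files"); // 2 rows, 0 files
        store.flush();
        System.out.println(store.scan().size() + " rows, " + store.fileCount() + " files"); // 2 rows, 1 files
    }
}
```

Before the flush, the scan already returns both rows although no file exists, which matches what the web UI showed. On a real cluster the flush would be triggered with flush 'TEST1' in the hbase shell, or from Java through Admin.flush(TableName).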

--END--