http://blog.javachen.com/2014/08/25/install-azkaban.html

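The official Azkaban documentation for the HDFS plugin (http://azkaban.github.io/azkaban/docs/2.5/#plugins) is very brief, and there are plenty of tutorials online. But every time I followed them to the end and opened the page in the browser, I got an error page with no useful information at all.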

With no other option, I downloaded the source code of azkaban and azkaban-plugins and tracked the problem down step by step.

azkaban source: github.com/azkaban/azkaban

azkaban-plugins source: github.com/azkaban/azkaban-plugins

I'll skip the installation steps; see the link at the top of this post.

Troubleshooting the first problem

When azkaban-web starts, it logs the following:

```
2015/07/30 14:21:39.730 +0800 INFO [HdfsBrowserServlet] [Azkaban] Initializing hadoop security manager azkaban.security.HadoopSecurityManager_H_2_0
2015/07/30 14:21:39.737 +0800 INFO [HadoopSecurityManager] [Azkaban] getting new instance
2015/07/30 14:21:39.738 +0800 INFO [HadoopSecurityManager] [Azkaban] Using hadoop config found in file:/usr/local/hadoop/etc/hadoop/
2015/07/30 14:21:39.756 +0800 INFO [HadoopSecurityManager] [Azkaban] Setting fs.hdfs.impl.disable.cache to true
2015/07/30 14:21:39.807 +0800 INFO [HadoopSecurityManager] [Azkaban] hadoop.security.authentication set to null
2015/07/30 14:21:39.808 +0800 INFO [HadoopSecurityManager] [Azkaban] hadoop.security.authorization set to null
2015/07/30 14:21:39.808 +0800 INFO [deprecation] [Azkaban] fs.default.name is deprecated. Instead, use fs.defaultFS
2015/07/30 14:21:39.813 +0800 INFO [HadoopSecurityManager] [Azkaban] DFS name hdfs://namenode:9000
```

Start with the first line: check that the HadoopSecurityManager class matches your Hadoop version. If it doesn't, edit plugins/viewer/hdfs/conf/plugin.properties and set the class that matches your Hadoop version:

Hadoop 1.x: azkaban.security.HadoopSecurityManager_H_1_0

Hadoop 2.x: azkaban.security.HadoopSecurityManager_H_2_0

hadoop.security.manager.class=azkaban.security.HadoopSecurityManager_H_2_0
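The version rule above fits in a few lines. The sketch below is a hypothetical helper (SecurityManagerChooser is not part of Azkaban) that takes a version string like the one printed by hadoop version and returns the line to put in plugin.properties; it encodes only the 1.x/2.x mapping stated in this post and rejects anything else.

```java
// Hypothetical helper, not part of Azkaban: maps a Hadoop version string
// to the security manager class recommended above for plugin.properties.
final class SecurityManagerChooser {

    static String securityManagerClassFor(String hadoopVersion) {
        if (hadoopVersion == null || hadoopVersion.isEmpty()) {
            throw new IllegalArgumentException("empty Hadoop version");
        }
        // The major version is everything before the first '.', e.g. "2" in "2.3.0-cdh5.1.0".
        int dot = hadoopVersion.indexOf('.');
        String major = dot < 0 ? hadoopVersion : hadoopVersion.substring(0, dot);
        switch (major) {
            case "1":
                return "azkaban.security.HadoopSecurityManager_H_1_0";
            case "2":
                return "azkaban.security.HadoopSecurityManager_H_2_0";
            default:
                // Only the 1.x and 2.x mappings are described in this post.
                throw new IllegalArgumentException("no mapping for Hadoop " + hadoopVersion);
        }
    }

    public static void main(String[] args) {
        String version = args.length > 0 ? args[0] : "2.3.0-cdh5.1.0";
        System.out.println("hadoop.security.manager.class=" + securityManagerClassFor(version));
    }
}
```

Run with 2.3.0-cdh5.1.0 (the CDH version used later in this post), it prints hadoop.security.manager.class=azkaban.security.HadoopSecurityManager_H_2_0.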

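Next, check the last line (DFS name ...): does the printed DFS address match your real NameNode? If not, set HADOOP_HOME and HADOOP_CONF_DIR in /etc/profile, because the Azkaban code reads these two environment variables directly.

If they are not set, Azkaban falls back to a default value, which of course won't work.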

```
export HADOOP_HOME=/usr/local/hadoop/
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop/
```
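To check the DFS address without restarting Azkaban, you can read core-site.xml from the directory these variables point to. The following is a hypothetical diagnostic (HadoopConfCheck is not Azkaban code). It prefers HADOOP_CONF_DIR and otherwise falls back to $HADOOP_HOME/etc/hadoop; that fallback is an assumption based on the Hadoop 2.x layout used in this post, not a description of Azkaban's own logic. It then prints fs.defaultFS, or the deprecated fs.default.name, for comparison with the "DFS name" log line.

```java
import java.io.File;
import java.util.HashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical diagnostic, not part of Azkaban: locate core-site.xml via the
// environment and print the NameNode address it configures.
final class HadoopConfCheck {

    // Prefer HADOOP_CONF_DIR; otherwise assume the Hadoop 2.x layout $HADOOP_HOME/etc/hadoop.
    static File resolveConfDir(Map<String, String> env) {
        String confDir = env.get("HADOOP_CONF_DIR");
        if (confDir != null && !confDir.isEmpty()) {
            return new File(confDir);
        }
        String home = env.get("HADOOP_HOME");
        if (home != null && !home.isEmpty()) {
            return new File(new File(home, "etc"), "hadoop");
        }
        return null; // neither variable is set
    }

    // Collect every <property><name>/<value> pair from a Hadoop-style XML config file.
    static Map<String, String> readProperties(File xml) throws Exception {
        Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(xml);
        Map<String, String> props = new HashMap<>();
        NodeList nodes = doc.getElementsByTagName("property");
        for (int i = 0; i < nodes.getLength(); i++) {
            Element p = (Element) nodes.item(i);
            NodeList names = p.getElementsByTagName("name");
            NodeList values = p.getElementsByTagName("value");
            if (names.getLength() > 0 && values.getLength() > 0) {
                props.put(names.item(0).getTextContent().trim(), values.item(0).getTextContent().trim());
            }
        }
        return props;
    }

    // fs.defaultFS is the 2.x key; fs.default.name is the deprecated 1.x key.
    static String defaultFs(Map<String, String> props) {
        String fs = props.get("fs.defaultFS");
        return fs != null ? fs : props.get("fs.default.name");
    }

    public static void main(String[] args) throws Exception {
        File confDir = resolveConfDir(System.getenv());
        if (confDir == null) {
            System.out.println("Neither HADOOP_CONF_DIR nor HADOOP_HOME is set");
            return;
        }
        File coreSite = new File(confDir, "core-site.xml");
        if (!coreSite.isFile()) {
            System.out.println("Missing " + coreSite);
            return;
        }
        System.out.println("core-site.xml: " + coreSite);
        System.out.println("default FS:    " + defaultFs(readProperties(coreSite)));
    }
}
```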

Troubleshooting the second problem

After fixing the issues above I restarted and, full of hope, opened the browser, but the page still would not load. Back to the code.

The method that actually gets called is getFSAsUser in the HadoopSecurityManager_H_2_0 class (this class lives in the azkaban-plugins project):

```java
@Override
public FileSystem getFSAsUser(String user) throws HadoopSecurityManagerException {
    FileSystem fs;
    try {
        logger.info("Getting file system as " + user);
        UserGroupInformation ugi = getProxiedUser(user);

        if (ugi != null) {
            fs = ugi.doAs(new PrivilegedAction<FileSystem>() {

                @Override
                public FileSystem run() {
                    try {
                        return FileSystem.get(conf);
                    } catch (IOException e) {
                        e.printStackTrace(); // print the root cause to the log
                        throw new RuntimeException(e);
                    }
                }
            });
        } else {
            fs = FileSystem.get(conf);
        }
    } catch (Exception e) {
        e.printStackTrace(); // print the root cause to the log
        throw new HadoopSecurityManagerException("Failed to get FileSystem. ", e);
    }
    return fs;
}
```

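After adding the error output, I rebuilt it, replaced the corresponding jar in the deployment, and restarted. At last the real error showed up: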

```
java.io.IOException: No FileSystem for scheme: hdfs
    at org.apache.hadoop.fs.FileSystem.getFileSystemClass(FileSystem.java:2385)
    at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:2392)
    at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:365)
    at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:167)
    at azkaban.security.HadoopSecurityManager_H_2_0$1.run(HadoopSecurityManager_H_2_0.java:270)
    at azkaban.security.HadoopSecurityManager_H_2_0$1.run(HadoopSecurityManager_H_2_0.java:265)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:356)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1534)
    at azkaban.security.HadoopSecurityManager_H_2_0.getFSAsUser(HadoopSecurityManager_H_2_0.java:265)
    at azkaban.viewer.hdfs.HdfsBrowserServlet.getFileSystem(HdfsBrowserServlet.java:150)
    at azkaban.viewer.hdfs.HdfsBrowserServlet.handleFsDisplay(HdfsBrowserServlet.java:274)
    at azkaban.viewer.hdfs.HdfsBrowserServlet.handleGet(HdfsBrowserServlet.java:198)
    at azkaban.webapp.servlet.LoginAbstractAzkabanServlet.doGet(LoginAbstractAzkabanServlet.java:106)
    at javax.servlet.http.HttpServlet.service(HttpServlet.java:707)
    at javax.servlet.http.HttpServlet.service(HttpServlet.java:820)
    at org.mortbay.jetty.servlet.ServletHolder.handle(ServletHolder.java:511)
    at org.mortbay.jetty.servlet.ServletHandler.handle(ServletHandler.java:401)
    at org.mortbay.jetty.servlet.SessionHandler.handle(SessionHandler.java:182)
    at org.mortbay.jetty.handler.ContextHandler.handle(ContextHandler.java:766)
    at org.mortbay.jetty.handler.HandlerWrapper.handle(HandlerWrapper.java:152)
    at org.mortbay.jetty.Server.handle(Server.java:326)
    at org.mortbay.jetty.HttpConnection.handleRequest(HttpConnection.java:542)
    at org.mortbay.jetty.HttpConnection$RequestHandler.headerComplete(HttpConnection.java:928)
    at org.mortbay.jetty.HttpParser.parseNext(HttpParser.java:549)
    at org.mortbay.jetty.HttpParser.parseAvailable(HttpParser.java:212)
    at org.mortbay.jetty.HttpConnection.handle(HttpConnection.java:404)
    at org.mortbay.jetty.bio.SocketConnector$Connection.run(SocketConnector.java:228)
    at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:713)
    at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
```

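This error is caused by missing jars: Hadoop cannot find an implementation for the hdfs:// scheme, which is provided by the hadoop-hdfs jar. Add the required jars, using versions that match your Hadoop version: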

```
[hadoop@datanode1 azkaban-web-2.5.0]$ ll extlib/
total 10108
-rwxr-xr-x. 1 hadoop hdfs   41123 Jul 30 14:59 commons-cli-1.2.jar
-rwxr-xr-x. 1 hadoop hdfs   57043 Jul 30 14:59 hadoop-auth-2.3.0-cdh5.1.0.jar
-rwxr-xr-x. 1 hadoop hdfs 2844022 Jul 30 14:59 hadoop-common-2.3.0-cdh5.1.0.jar
-rwxr-xr-x. 1 hadoop hdfs 6861199 Jul 30 14:59 hadoop-hdfs-2.3.0-cdh5.1.0.jar
-rwxr-xr-x. 1 hadoop hdfs  533455 Jul 30 14:59 protobuf-java-2.5.0.jar
```

Copy these jars into $AZKABAN_WEB_HOME/extlib, restart again, and open the browser:

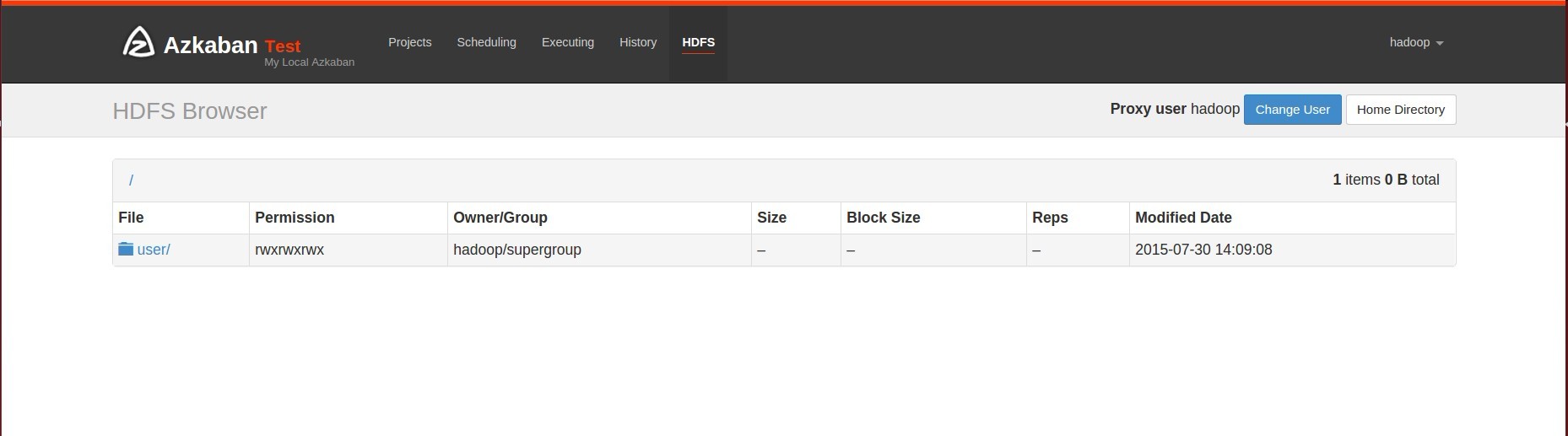

It works.
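"No FileSystem for scheme: hdfs" means Hadoop found no class registered for hdfs://. In Hadoop 2.x that class is org.apache.hadoop.hdfs.DistributedFileSystem, shipped in the hadoop-hdfs jar together with a META-INF/services/org.apache.hadoop.fs.FileSystem file that advertises it. Before restarting, a quick check like the hypothetical ExtlibCheck below (not part of Azkaban) can confirm that some jar in extlib provides both. It uses only java.util.jar; the default directory extlib assumes you run it from $AZKABAN_WEB_HOME.

```java
import java.io.File;
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;
import java.util.jar.JarFile;

// Hypothetical diagnostic, not part of Azkaban: report which jars in a
// directory contain the hdfs:// implementation and the FileSystem service file.
final class ExtlibCheck {

    static final String HDFS_IMPL = "org/apache/hadoop/hdfs/DistributedFileSystem.class";
    // Hadoop 2.x discovers FileSystem implementations through this ServiceLoader file.
    static final String FS_SERVICES = "META-INF/services/org.apache.hadoop.fs.FileSystem";

    // Returns the names of the .jar files in dir that contain the given entry.
    static List<String> jarsContaining(File dir, String entry) throws IOException {
        List<String> hits = new ArrayList<>();
        File[] files = dir.listFiles((d, name) -> name.endsWith(".jar"));
        if (files == null) {
            return hits; // not a directory, or not readable
        }
        for (File f : files) {
            try (JarFile jar = new JarFile(f)) {
                if (jar.getEntry(entry) != null) {
                    hits.add(f.getName());
                }
            }
        }
        return hits;
    }

    public static void main(String[] args) throws IOException {
        File extlib = new File(args.length > 0 ? args[0] : "extlib");
        System.out.println("hdfs implementation in: " + jarsContaining(extlib, HDFS_IMPL));
        System.out.println("FileSystem service file in: " + jarsContaining(extlib, FS_SERVICES));
    }
}
```

An empty list for the first line points at the same missing-jar problem described above.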

II. HDFS plugin configuration for a cluster with Hadoop security enabled

Edit plugins/viewer/hdfs/conf/plugin.properties:

```
azkaban.should.proxy=true
proxy.user=hadoop
proxy.keytab.location=/etc/hadoop.keytab
```

Change these three settings:

azkaban.should.proxy: set to true

proxy.user: the Kerberos user Azkaban uses to access Hadoop

proxy.keytab.location: the path to that user's keytab file
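A mistake in any of these three keys only shows up after a restart, so it can help to check the file first. The sketch below is a hypothetical pre-flight check (ProxyConfigCheck is not Azkaban code) that loads plugin.properties with java.util.Properties and reports each missing or wrong value, including a keytab path the current user cannot read.

```java
import java.io.File;
import java.io.FileReader;
import java.io.IOException;
import java.io.Reader;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical check, not part of Azkaban: validate the three proxy settings
// described above before restarting azkaban-web.
final class ProxyConfigCheck {

    static Properties load(Reader r) throws IOException {
        Properties p = new Properties();
        p.load(r);
        return p;
    }

    // Returns one message per problem; an empty list means all three keys look usable.
    static List<String> problems(Properties p) {
        List<String> out = new ArrayList<>();
        // Properties keeps trailing whitespace in values, so trim before comparing.
        if (!"true".equals(p.getProperty("azkaban.should.proxy", "").trim())) {
            out.add("azkaban.should.proxy should be true");
        }
        if (p.getProperty("proxy.user", "").trim().isEmpty()) {
            out.add("proxy.user is empty");
        }
        String keytab = p.getProperty("proxy.keytab.location", "").trim();
        if (keytab.isEmpty()) {
            out.add("proxy.keytab.location is empty");
        } else if (!new File(keytab).canRead()) {
            out.add("keytab not readable: " + keytab);
        }
        return out;
    }

    public static void main(String[] args) throws IOException {
        String path = args.length > 0 ? args[0] : "plugins/viewer/hdfs/conf/plugin.properties";
        try (Reader r = new FileReader(path)) {
            List<String> issues = problems(load(r));
            System.out.println(issues.isEmpty() ? "proxy settings look complete" : String.join("\n", issues));
        }
    }
}
```

Run it as the same OS user that starts Azkaban, since the keytab check depends on that user's file permissions.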

After the change, restart and check the page:

```
HTTP ERROR 500

Problem accessing /hdfs. Reason:

    Error processing request: SIMPLE authentication is not enabled. Available:[TOKEN, KERBEROS]

Caused by:

java.lang.IllegalStateException: Error processing request: SIMPLE authentication is not enabled. Available:[TOKEN, KERBEROS]
    at azkaban.viewer.hdfs.HdfsBrowserServlet.handleGet(HdfsBrowserServlet.java:203)
    at azkaban.webapp.servlet.LoginAbstractAzkabanServlet.doGet(LoginAbstractAzkabanServlet.java:106)
    at javax.servlet.http.HttpServlet.service(HttpServlet.java:707)
    at javax.servlet.http.HttpServlet.service(HttpServlet.java:820)
    at org.mortbay.jetty.servlet.ServletHolder.handle(ServletHolder.java:511)
    at org.mortbay.jetty.servlet.ServletHandler.handle(ServletHandler.java:401)
    at org.mortbay.jetty.servlet.SessionHandler.handle(SessionHandler.java:182)
    at org.mortbay.jetty.handler.ContextHandler.handle(ContextHandler.java:766)
    at org.mortbay.jetty.handler.HandlerWrapper.handle(HandlerWrapper.java:152)
    at org.mortbay.jetty.Server.handle(Server.java:326)
    at org.mortbay.jetty.HttpConnection.handleRequest(HttpConnection.java:542)
    at org.mortbay.jetty.HttpConnection$RequestHandler.headerComplete(HttpConnection.java:928)
    at org.mortbay.jetty.HttpParser.parseNext(HttpParser.java:549)
    at org.mortbay.jetty.HttpParser.parseAvailable(HttpParser.java:212)
    at org.mortbay.jetty.HttpConnection.handle(HttpConnection.java:404)
    at org.mortbay.jetty.bio.SocketConnector$Connection.run(SocketConnector.java:228)
    at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:713)
    at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
```

Surprisingly, the error says SIMPLE authentication is not enabled. We have clearly configured Kerberos authentication, so why does the program still treat authentication as simple?

Let's look at the constructor of HadoopSecurityManager_H_2_0 again:

```java
private HadoopSecurityManager_H_2_0(Props props) throws HadoopSecurityManagerException, IOException {
    // ... omitted
    logger.info("hadoop.security.authentication set to " + conf.get("hadoop.security.authentication"));
    logger.info("hadoop.security.authorization set to " + conf.get("hadoop.security.authorization"));
    logger.info("DFS name " + conf.get("fs.default.name"));

    UserGroupInformation.setConfiguration(conf);

    securityEnabled = UserGroupInformation.isSecurityEnabled();
    if (securityEnabled) {
        logger.info("The Hadoop cluster has enabled security");
        shouldProxy = true;
        try {
            // ... omitted
```

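From this excerpt, securityEnabled is clearly false, so the secure branch is skipped and the code takes the non-secure path, even though our Hadoop cluster does have security configured.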

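Next, look at where UserGroupInformation.isSecurityEnabled() gets its answer: it reports the useKerberos flag set in UserGroupInformation's initialize method (decompiled):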

```java
private static synchronized void initialize(Configuration conf) {
    String value = conf.get("hadoop.security.authentication");
    if (value != null && !"simple".equals(value)) {
        if (!"kerberos".equals(value)) {
            throw new IllegalArgumentException("Invalid attribute value for hadoop.security.authentication of " + value);
        }
        useKerberos = true;
    } else {
        useKerberos = false;
    }

    if (!(groups instanceof UserGroupInformation.TestingGroups)) {
        groups = Groups.getUserToGroupsMappingService(conf);
    }

    try {
        KerberosName.setConfiguration(conf);
    } catch (IOException e) {
        throw new RuntimeException("Problem with Kerberos auth_to_local name configuration", e);
    }

    isInitialized = true;
    UserGroupInformation.conf = conf; // store the configuration in the static field
    metrics = UgiInstrumentation.create(conf);
}
```

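The first statement reads hadoop.security.authentication: if it is unset (null) or simple, useKerberos is false and security is treated as disabled. This matches the startup log, which printed "hadoop.security.authentication set to null".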
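The decision in initialize boils down to one rule. The stand-alone sketch below restates it without any Hadoop dependency (AuthModeCheck is a hypothetical name, not a Hadoop class): an unset value, as in the log line "hadoop.security.authentication set to null", is treated exactly like simple.

```java
// Stand-alone restatement of the check in UserGroupInformation.initialize shown above;
// AuthModeCheck is a hypothetical name, not a Hadoop class.
final class AuthModeCheck {

    static boolean usesKerberos(String authValue) {
        if (authValue == null || "simple".equals(authValue)) {
            return false; // unset or "simple": security is treated as disabled
        }
        if (!"kerberos".equals(authValue)) {
            throw new IllegalArgumentException(
                "Invalid attribute value for hadoop.security.authentication of " + authValue);
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(usesKerberos(null));       // false: the situation in the startup log
        System.out.println(usesKerberos("kerberos")); // true
    }
}
```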

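Digging further into the code shows that Azkaban reads these settings from the core-site.xml inside hadoop-common-2.3.0-cdh5.1.0.jar, the jar we added to extlib, so Azkaban never sees the changes we made to Hadoop's own core-site.xml. The fix is to replace the core-site.xml in hadoop-common-2.3.0-cdh5.1.0.jar with the core-site.xml from the Hadoop configuration directory.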
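To confirm which value Azkaban will pick up, you can read the copy of core-site.xml inside the jar before and after replacing it. The sketch below is a hypothetical inspector (JarConfInspector is not Azkaban or Hadoop code); the default jar path in main is the one used in this post, and the entry name core-site.xml follows the text above. It returns null when either the entry or the property is absent, which corresponds to the "set to null" line in the startup log.

```java
import java.io.File;
import java.io.InputStream;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical diagnostic, not Azkaban or Hadoop code: read one property from a
// Hadoop-style XML config file bundled inside a jar.
final class JarConfInspector {

    // Returns the <value> of the named property, or null if the entry or property is missing.
    static String propertyInJar(File jarFile, String entryName, String key) throws Exception {
        try (JarFile jar = new JarFile(jarFile)) {
            JarEntry entry = jar.getJarEntry(entryName);
            if (entry == null) {
                return null;
            }
            try (InputStream in = jar.getInputStream(entry)) {
                Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(in);
                NodeList props = doc.getElementsByTagName("property");
                for (int i = 0; i < props.getLength(); i++) {
                    Element p = (Element) props.item(i);
                    NodeList names = p.getElementsByTagName("name");
                    NodeList values = p.getElementsByTagName("value");
                    if (names.getLength() > 0 && values.getLength() > 0
                            && key.equals(names.item(0).getTextContent().trim())) {
                        return values.item(0).getTextContent().trim();
                    }
                }
            }
        }
        return null;
    }

    public static void main(String[] args) throws Exception {
        File jar = new File(args.length > 0 ? args[0] : "extlib/hadoop-common-2.3.0-cdh5.1.0.jar");
        System.out.println("hadoop.security.authentication = "
            + propertyInJar(jar, "core-site.xml", "hadoop.security.authentication"));
    }
}
```

One way to do the replacement itself is the JDK jar tool: from the Hadoop configuration directory, jar uf $AZKABAN_WEB_HOME/extlib/hadoop-common-2.3.0-cdh5.1.0.jar core-site.xml updates the core-site.xml entry at the root of the jar.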

Restart again and check the page:

```
HTTP ERROR 500

Problem accessing /hdfs. Reason:

    Error processing request: User: hadoop/datanode1@HADOOP is not allowed to impersonate hadoop

Caused by:

java.lang.IllegalStateException: Error processing request: User: hadoop/datanode1@HADOOP is not allowed to impersonate hadoop
    at azkaban.viewer.hdfs.HdfsBrowserServlet.handleGet(HdfsBrowserServlet.java:203)
    at azkaban.webapp.servlet.LoginAbstractAzkabanServlet.doGet(LoginAbstractAzkabanServlet.java:106)
    at javax.servlet.http.HttpServlet.service(HttpServlet.java:707)
    at javax.servlet.http.HttpServlet.service(HttpServlet.java:820)
    at org.mortbay.jetty.servlet.ServletHolder.handle(ServletHolder.java:511)
    at org.mortbay.jetty.servlet.ServletHandler.handle(ServletHandler.java:401)
    at org.mortbay.jetty.servlet.SessionHandler.handle(SessionHandler.java:182)
    at org.mortbay.jetty.handler.ContextHandler.handle(ContextHandler.java:766)
    at org.mortbay.jetty.handler.HandlerWrapper.handle(HandlerWrapper.java:152)
    at org.mortbay.jetty.Server.handle(Server.java:326)
    at org.mortbay.jetty.HttpConnection.handleRequest(HttpConnection.java:542)
    at org.mortbay.jetty.HttpConnection$RequestHandler.headerComplete(HttpConnection.java:928)
    at org.mortbay.jetty.HttpParser.parseNext(HttpParser.java:549)
    at org.mortbay.jetty.HttpParser.parseAvailable(HttpParser.java:212)
    at org.mortbay.jetty.HttpConnection.handle(HttpConnection.java:404)
    at org.mortbay.jetty.bio.SocketConnector$Connection.run(SocketConnector.java:228)
    at org.mortbay.jetty.security.SslSocketConnector$SslConnection.run(SslSocketConnector.java:713)
    at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)
```

The previous error is gone; now we get this one instead.

Solution:

Edit Hadoop's core-site.xml and add the properties below.

Make the same change to the core-site.xml inside hadoop-common-2.3.0-cdh5.1.0.jar in extlib.

```xml
<property>
  <name>hadoop.proxyuser.hadoop.hosts</name>
  <value>*</value>
  <description>Hosts from which the superuser hadoop may impersonate other users (* = any host)</description>
</property>
<property>
  <name>hadoop.proxyuser.hadoop.groups</name>
  <value>*</value>
  <description>Groups whose members the superuser hadoop may impersonate (* = any group)</description>
</property>
```

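The hadoop in the property names hadoop.proxyuser.hadoop.hosts and hadoop.proxyuser.hadoop.groups is the user you run Azkaban as; more precisely, it is the short name of the Kerberos principal doing the impersonation (hadoop/datanode1@HADOOP in the error above). Replace it with your own user.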
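Because the property names depend on that short name, it can be handy to generate them from the principal in the error message. The hypothetical helper below (ProxyUserKeys is not a Hadoop API) assumes the default auth_to_local mapping, where a principal in the default realm such as hadoop/datanode1@HADOOP maps to its first component; custom auth_to_local rules would produce a different short name, so treat it as a sketch.

```java
// Hypothetical helper, not a Hadoop API: build the two proxy-user properties for the
// principal that performs the impersonation. Assumes the default auth_to_local mapping,
// i.e. the short name is the first component of the principal.
final class ProxyUserKeys {

    // "hadoop/datanode1@HADOOP" -> "hadoop"; "azkaban@REALM" -> "azkaban".
    static String shortName(String principal) {
        int end = principal.length();
        int slash = principal.indexOf('/');
        int at = principal.indexOf('@');
        if (slash >= 0) {
            end = Math.min(end, slash);
        }
        if (at >= 0) {
            end = Math.min(end, at);
        }
        return principal.substring(0, end);
    }

    static String propertyXml(String principal, String hosts, String groups) {
        String user = shortName(principal);
        return "<property>\n"
            + "  <name>hadoop.proxyuser." + user + ".hosts</name>\n"
            + "  <value>" + hosts + "</value>\n"
            + "</property>\n"
            + "<property>\n"
            + "  <name>hadoop.proxyuser." + user + ".groups</name>\n"
            + "  <value>" + groups + "</value>\n"
            + "</property>\n";
    }

    public static void main(String[] args) {
        System.out.print(propertyXml("hadoop/datanode1@HADOOP", "*", "*"));
    }
}
```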

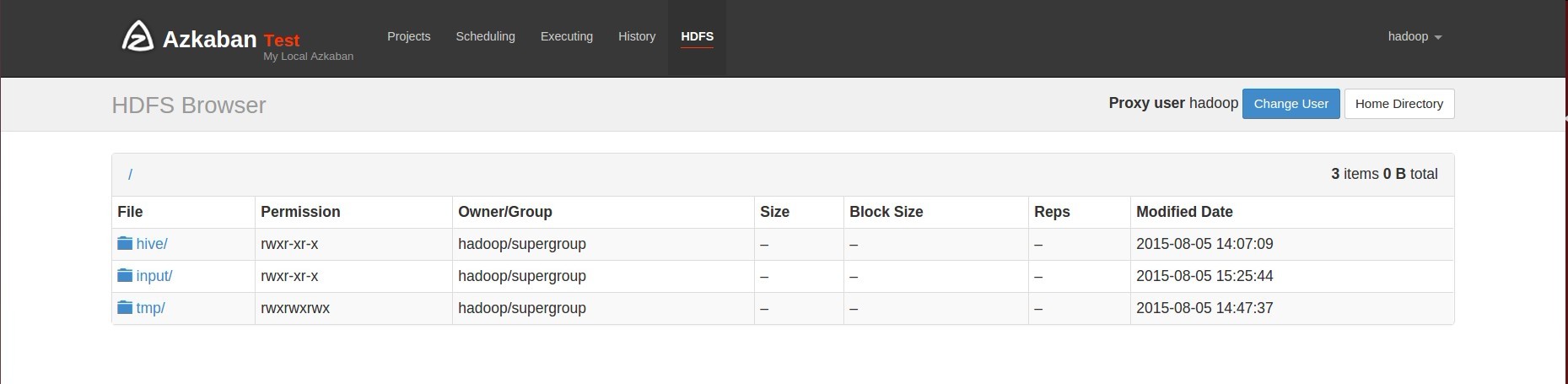

Restart once more.

Done.