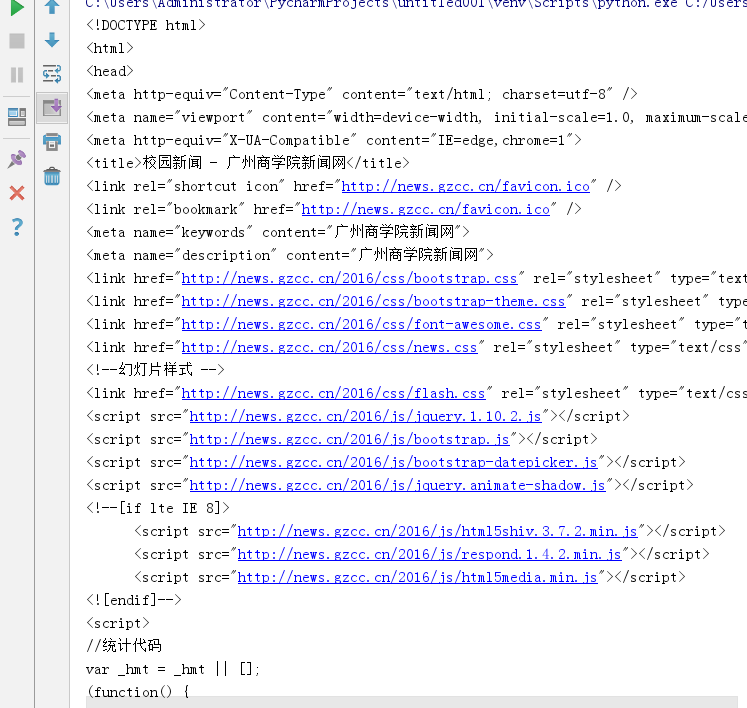

1. Use requests.get(url) to fetch the HTML of a web page

import requests

newsurl='http://news.gzcc.cn/html/xiaoyuanxinwen/'

res = requests.get(newsurl)  # returns a Response object

res.encoding='utf-8'

2. Use BeautifulSoup's HTML parser to build a parse tree

from bs4 import BeautifulSoup

soup = BeautifulSoup(res.text,'html.parser')
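Steps 1 and 2 can be combined into a small helper. This is only a sketch: `fetch_soup` and `parse_html` are hypothetical names, the 10-second timeout is an arbitrary choice, and the UTF-8 encoding is assumed as in the notes above.

```python
import requests
from bs4 import BeautifulSoup


def parse_html(html_text):
    """Build a BeautifulSoup tree with Python's built-in HTML parser."""
    return BeautifulSoup(html_text, 'html.parser')


def fetch_soup(url, timeout=10):
    """Download a page and return its parsed tree (hypothetical helper)."""
    res = requests.get(url, timeout=timeout)
    res.raise_for_status()   # raise requests.HTTPError on a 4xx/5xx status
    res.encoding = 'utf-8'   # assumption: the page is UTF-8 encoded
    return parse_html(res.text)


# Offline check of the parsing step with an inline document.
demo = parse_html('<html><head><title>Demo</title></head>'
                  '<body><p>Hello</p></body></html>')
print(demo.title.text)
```

With network access, `soup = fetch_soup(newsurl)` would then replace the separate fetch and parse lines.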

3. Find HTML elements by tag name

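soup.p  # access by tag name; returns the first matching tag

soup.head

soup.p.name  # string: the tag name

soup.p.attrs  # dict: all of the tag's attributes

soup.p.contents  # list: all direct children (tags and strings)

soup.p.text  # string: all text inside the tag

soup.p.string  # the single string inside the tag, or None if the tag has several children

soup.select('li')  # list of all li tags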
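To see what these attributes return, here is a small sketch run on an inline document instead of the real page. The markup is made up for illustration only.

```python
from bs4 import BeautifulSoup

# Made-up sample markup, used only for illustration.
html = ('<html><head><title>Demo</title></head><body>'
        '<p id="intro" class="lead">Hello <b>world</b></p>'
        '<ul><li>one</li><li>two</li></ul></body></html>')
soup = BeautifulSoup(html, 'html.parser')

first_p = soup.p
print(first_p.name)       # the tag name
print(first_p.attrs)      # dict of attributes; class is stored as a list
print(first_p.contents)   # direct children: one string and one <b> tag
print(first_p.text)       # all nested text joined together
print(first_p.string)     # None here, because <p> has two children
print(first_p.b.string)   # <b> has exactly one string child
print(len(soup.select('li')))
```

The difference between `.text` and `.string` is the part that is easiest to trip over: `.string` only returns a value when the tag holds a single string.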

4. Get elements with a specific CSS id or class

soup.select('#p1Node')

soup.select('.news-list-title')
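A minimal sketch of both selectors on inline markup. The HTML here is invented; only the id `p1Node` and the class `news-list-title` come from the notes above.

```python
from bs4 import BeautifulSoup

# Invented sample markup for illustration.
html = '''
<div id="p1Node">first</div>
<div class="news-list-title">Title A</div>
<div class="news-list-title">Title B</div>
'''
soup = BeautifulSoup(html, 'html.parser')

by_id = soup.select('#p1Node')               # '#' selects by id
by_class = soup.select('.news-list-title')   # '.' selects by class
titles = [tag.text for tag in by_class]
first = soup.select_one('.news-list-title')  # first match only, or None
missing = soup.select('.no-such-class')      # no match gives an empty list

print(by_id[0].text)
print(titles)
```

Note that `select` always returns a list, even for an id, so indexing with `[0]` is needed to reach the element itself.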

5. Exercises:

Get the text of the h1 tag

import requests

url='http://localhost:63342/untitled001/index.html?_ijt=qha65g9m3uvkp5ijfh0b7h041t'

res = requests.get(url)

res.encoding='utf-8'

from bs4 import BeautifulSoup

soup = BeautifulSoup(res.text,'html.parser')

print(soup.h1.text)

Get the link of the a tag

import requests

url='http://localhost:63342/untitled001/index.html?_ijt=1jot1o2jp7hl0cfc7hs6vhl2j3'

res = requests.get(url)

res.encoding='utf-8'

from bs4 import BeautifulSoup

soup = BeautifulSoup(res.text,'html.parser')

# print(soup.h1.text)

print(soup.a.attrs['href'])
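`soup.a` only gives the first a tag, and `attrs['href']` raises a KeyError if that tag has no href. A sketch of collecting every link safely, on made-up markup:

```python
from bs4 import BeautifulSoup

# Made-up markup: the second <a> deliberately has no href attribute.
html = ('<a href="/a.html">A</a>'
        '<a name="anchor">no link</a>'
        '<a href="/b.html">B</a>')
soup = BeautifulSoup(html, 'html.parser')

# href=True keeps only the <a> tags that actually carry an href attribute.
links = [a['href'] for a in soup.find_all('a', href=True)]
print(links)

# .get() returns None instead of raising when the attribute is missing.
print(soup.find_all('a')[1].get('href'))
```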

Get the full contents of every li tag

for li in soup.select('li'):  # soup.li would only give the first li
    print(li.contents)

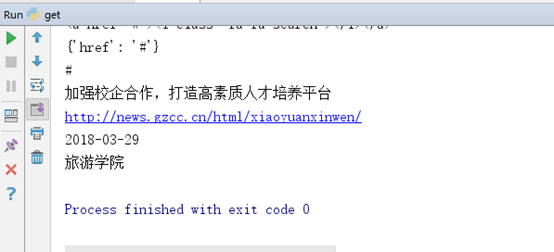

Get the title, link, publication time, and source of one news item

print(soup.a.attrs['href'])

print(soup.select('.news-list-title')[0].text)

print(soup.select('li')[2].a.attrs['href'])

print(soup.select('.news-list-info')[0].contents[0].text)

print(soup.select('.news-list-info')[0].contents[1].text)
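The four lookups can be gathered into one function that returns a dict. This is a sketch under assumptions: the sample markup below is invented (the real page may be laid out differently), `parse_news_item` is a hypothetical helper, and the time and source are assumed to sit in two span tags inside `.news-list-info`.

```python
from bs4 import BeautifulSoup

# Invented sample markup; the real page may be structured differently.
html = '''
<ul>
  <li>
    <a href="http://example.com/news/1.html">
      <div class="news-list-title">Sample headline</div>
      <div class="news-list-info">
        <span>2018-01-01</span>
        <span>Sample Office</span>
      </div>
    </a>
  </li>
</ul>
'''


def parse_news_item(li):
    """Return title, link, time and source for one <li> (hypothetical helper)."""
    info = li.select_one('.news-list-info')
    # find_all('span') skips the whitespace strings that .contents would include.
    spans = info.find_all('span')
    return {
        'title': li.select_one('.news-list-title').text.strip(),
        'link': li.a.attrs['href'],
        'time': spans[0].text.strip(),
        'source': spans[1].text.strip(),
    }


soup = BeautifulSoup(html, 'html.parser')
item = parse_news_item(soup.select('li')[0])
print(item)
```

Using `find_all('span')` rather than `.contents[0]` is a deliberate choice here: when the markup has line breaks between tags, `.contents[0]` is a whitespace string, not the first span.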