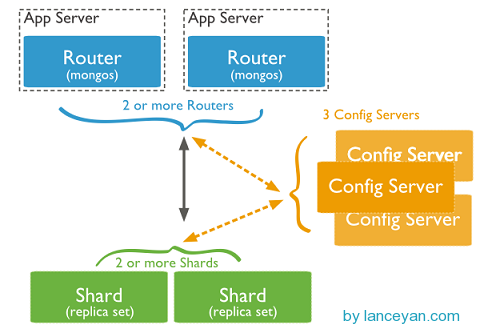

The cluster topology is shown in the diagram.

The diagram shows four components: mongos, config server, shard, and replica set.

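mongos is the entry point for requests to the database cluster. All requests are coordinated through mongos, so the application does not need its own routing layer: mongos itself is the request dispatch center and forwards each data request to the appropriate shard server. A production environment usually runs several mongos instances as entry points, so that the failure of one of them does not leave all MongoDB requests unable to proceed.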
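The dispatching role described above can be illustrated with a small sketch. It is not how mongos is implemented; it only assumes a hypothetical table of chunk ranges (`chunks`) over a numeric shard key and a hypothetical `routeToShard` function, to show the idea of forwarding a request to the shard that owns the key.

```javascript
// Hypothetical chunk table: each chunk covers [min, max) of a numeric shard key.
// The ranges and the assignment to shards are made up for illustration.
const chunks = [
  { min: -Infinity, max: 1000, shard: "shard1" },
  { min: 1000, max: 5000, shard: "shard2" },
  { min: 5000, max: Infinity, shard: "shard3" },
];

// Return the name of the shard whose chunk contains the key.
function routeToShard(key, table) {
  const chunk = table.find((c) => key >= c.min && key < c.max);
  if (!chunk) {
    throw new Error("no chunk covers key " + key);
  }
  return chunk.shard;
}

console.log(routeToShard(42, chunks));    // shard1
console.log(routeToShard(1000, chunks));  // shard2
console.log(routeToShard(99999, chunks)); // shard3
```

The application only ever calls the router; which shard holds a given key stays an internal detail of the routing table.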

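config server, as the name suggests, is the configuration server: it stores all of the database metadata (routing and shard configuration). mongos does not physically store the shard server and data routing information; it only caches it in memory, while the config servers actually persist it. When mongos starts for the first time, or is restarted, it loads the configuration from the config servers; if the configuration later changes, all mongos instances are notified to update their state so that they can keep routing accurately. A production environment usually runs several config servers, because they hold the shard routing metadata, which must not be lost. Even if one of them goes down, the MongoDB cluster stays up as long as others remain.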
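The split between persisted metadata and an in-memory cache can be sketched as follows. This is a simplified model, not MongoDB's actual protocol: `ConfigStore` and `Router` are hypothetical classes, and the router detects changes by comparing a version number rather than by receiving a notification, purely to keep the example short.

```javascript
// Hypothetical stand-in for a config server: the durable owner of routing metadata.
class ConfigStore {
  constructor(chunks) {
    this.version = 1;
    this.chunks = chunks;
  }
  // Changing the metadata bumps the version so that caches can detect staleness.
  update(chunks) {
    this.chunks = chunks;
    this.version += 1;
  }
}

// Hypothetical router: keeps only an in-memory copy of the metadata.
class Router {
  constructor(store) {
    this.store = store;
    this.cache = null;
    this.cachedVersion = 0;
    this.reloads = 0;
  }
  // Load from the store on first use (or after a restart) and whenever the version changed.
  metadata() {
    if (this.cache === null || this.cachedVersion !== this.store.version) {
      this.cache = this.store.chunks.slice();
      this.cachedVersion = this.store.version;
      this.reloads += 1;
    }
    return this.cache;
  }
}

const store = new ConfigStore([{ min: 0, max: 100, shard: "shard1" }]);
const router = new Router(store);
router.metadata(); // first call loads from the store
router.metadata(); // second call is served from the cache
store.update([
  { min: 0, max: 50, shard: "shard1" },
  { min: 50, max: 100, shard: "shard2" },
]);
const refreshed = router.metadata(); // version changed, so the cache is reloaded
console.log(refreshed.length, router.reloads);
```

Losing the router loses nothing durable, since its state can be rebuilt from the store; losing the store would lose the routing table itself, which is why the config servers are the part that gets replicated.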

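shard: this is the sharding itself. As mentioned above, a single machine has a ceiling no matter how powerful it is. It is like an army at war: one soldier, however strong and however many health potions he drinks, cannot beat an enemy division. As the saying goes, three cobblers with their wits combined equal Zhuge Liang, and this is where teamwork shows its strength. The same holds on the internet: what one ordinary machine cannot do, several machines do together.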

The environment is configured as follows:

| Host | IP | Services and ports |
| --- | --- | --- |
| Server A | 192.168.10.88 | mongod shard1:27017, mongod shard2:27018, mongod shard3:27019, mongod config1:20000, mongos1:30000 |
| Server B | 192.168.10.89 | mongod shard1:27017, mongod shard2:27018, mongod shard3:27019, mongod config2:20000, mongos2:30000 |
| Server C | 192.168.10.90 | mongod shard1:27017, mongod shard2:27018, mongod shard3:27019, mongod config3:20000, mongos3:30000 |

1. Create the data directories

On Server A:

mkdir -p /data0/mongodbdata/shard1

mkdir -p /data0/mongodbdata/shard2

mkdir -p /data0/mongodbdata/shard3

mkdir -p /data0/mongodbdata/config

On Server B:

mkdir -p /data0/mongodbdata/shard1

mkdir -p /data0/mongodbdata/shard2

mkdir -p /data0/mongodbdata/shard3

mkdir -p /data0/mongodbdata/config

On Server C:

mkdir -p /data0/mongodbdata/shard1

mkdir -p /data0/mongodbdata/shard2

mkdir -p /data0/mongodbdata/shard3

mkdir -p /data0/mongodbdata/config

2. Configure the replica sets

2.1 Configure the replica set used by shard1

On Server A:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard1 --port 27017 --dbpath /data0/mongodbdata/shard1 --logpath /data0/mongodbdata/shard1/shard1.log --logappend --fork

On Server B:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard1 --port 27017 --dbpath /data0/mongodbdata/shard1 --logpath /data0/mongodbdata/shard1/shard1.log --logappend --fork

On Server C:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard1 --port 27017 --dbpath /data0/mongodbdata/shard1 --logpath /data0/mongodbdata/shard1/shard1.log --logappend --fork

Use mongo to connect to the mongod on port 27017 on any one of the machines, then initialize the replica set "shard1" by running:

./mongo --port 27017

MongoDB shell version: 2.6.6

connecting to: 127.0.0.1:27017/test

> config = {_id: 'shard1', members: [

{_id: 0, host: '192.168.10.88:27017'},

{_id: 1, host: '192.168.10.89:27017'},

{_id: 2, host: '192.168.10.90:27017'}]

}

> rs.initiate(config)

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}

2.2 Configure the replica set used by shard2

On Server A:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard2 --port 27018 --dbpath /data0/mongodbdata/shard2 --logpath /data0/mongodbdata/shard2/shard2.log --logappend --fork

On Server B:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard2 --port 27018 --dbpath /data0/mongodbdata/shard2 --logpath /data0/mongodbdata/shard2/shard2.log --logappend --fork

On Server C:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard2 --port 27018 --dbpath /data0/mongodbdata/shard2 --logpath /data0/mongodbdata/shard2/shard2.log --logappend --fork

Use mongo to connect to the mongod on port 27018 on any one of the machines, then initialize the replica set "shard2" by running:

./mongo --port 27018

MongoDB shell version: 2.6.6

connecting to: 127.0.0.1:27018/test

> config = {_id: 'shard2', members: [

{_id: 0, host: '192.168.10.88:27018'},

{_id: 1, host: '192.168.10.89:27018'},

{_id: 2, host: '192.168.10.90:27018'}]

}

> rs.initiate(config)

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}

2.3 Configure the replica set used by shard3

On Server A:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard3 --port 27019 --dbpath /data0/mongodbdata/shard3 --logpath /data0/mongodbdata/shard3/shard3.log --logappend --fork

On Server B:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard3 --port 27019 --dbpath /data0/mongodbdata/shard3 --logpath /data0/mongodbdata/shard3/shard3.log --logappend --fork

On Server C:

/usr/local/mongodb/bin/mongod --shardsvr --replSet shard3 --port 27019 --dbpath /data0/mongodbdata/shard3 --logpath /data0/mongodbdata/shard3/shard3.log --logappend --fork

Use mongo to connect to the mongod on port 27019 on any one of the machines, then initialize the replica set "shard3" by running:

./mongo --port 27019

MongoDB shell version: 2.6.6

connecting to: 127.0.0.1:27019/test

> config = {_id: 'shard3', members: [

{_id: 0, host: '192.168.10.88:27019'},

{_id: 1, host: '192.168.10.89:27019'},

{_id: 2, host: '192.168.10.90:27019'}]

}

> rs.initiate(config)

{

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}
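The three `rs.initiate` configurations above differ only in the replica set name and the port. As a rough sketch, the configuration document could be generated instead of typed by hand. `buildReplSetConfig` is a hypothetical helper written for this post, not a MongoDB API; the host addresses are the ones used in this walkthrough.

```javascript
// Host addresses of Server A, B, and C from this walkthrough.
const hosts = ["192.168.10.88", "192.168.10.89", "192.168.10.90"];

// Build the document passed to rs.initiate() for one shard.
// buildReplSetConfig is a hypothetical helper, not part of MongoDB.
function buildReplSetConfig(name, port, hostList) {
  return {
    _id: name,
    members: hostList.map((host, i) => ({ _id: i, host: host + ":" + port })),
  };
}

const config = buildReplSetConfig("shard2", 27018, hosts);
console.log(JSON.stringify(config, null, 2));
```

The function uses only plain JavaScript, so it should also work when pasted into the mongo shell, where the result could then be passed to `rs.initiate(config)` as in the steps above.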

3. Configure the three config servers

Run on Servers A, B, and C:

/usr/local/mongodb/bin/mongod --configsvr --dbpath /data0/mongodbdata/config --port 20000 --logpath /data0/mongodbdata/config/config.log --logappend --fork

4. Configure the three route processes (mongos)

Run on Servers A, B, and C:

/usr/local/mongodb/bin/mongos --configdb 192.168.10.88:20000,192.168.10.89:20000,192.168.10.90:20000 --port 30000 --chunkSize 1 --logpath /data0/mongodbdata/mongos.log --logappend --fork

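5. Configure the shard cluster

Connect to the mongos process on port 30000 on any one of the machines, switch to the admin database, and apply the following configuration: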

./mongo --port 30000

MongoDB shell version: 2.6.6

connecting to: 127.0.0.1:30000/test

> use admin

switched to db admin

>db.runCommand({addshard:"shard1/192.168.10.88:27017,192.168.10.89:27017,192.168.10.90:27017"});

{ "shardAdded" : "shard1", "ok" : 1 }

>db.runCommand({addshard:"shard2/192.168.10.88:27018,192.168.10.89:27018,192.168.10.90:27018"});

{ "shardAdded" : "shard2", "ok" : 1 }

>db.runCommand({addshard:"shard3/192.168.10.88:27019,192.168.10.89:27019,192.168.10.90:27019"});

{ "shardAdded" : "shard3", "ok" : 1 }
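Each `addshard` call above takes a string of the form `<replica set name>/<host:port>,<host:port>,...`. The sketch below builds those strings for the three shards; `shardConnectionString` is a hypothetical helper for illustration, not a MongoDB API.

```javascript
// Host addresses of Server A, B, and C from this walkthrough.
const hosts = ["192.168.10.88", "192.168.10.89", "192.168.10.90"];

// Replica set names and ports as used in section 2.
const shards = [
  { name: "shard1", port: 27017 },
  { name: "shard2", port: 27018 },
  { name: "shard3", port: 27019 },
];

// Build the "<replSet>/<host:port>,..." string that addshard expects.
// shardConnectionString is a hypothetical helper, not part of MongoDB.
function shardConnectionString(name, port, hostList) {
  return name + "/" + hostList.map((h) => h + ":" + port).join(",");
}

for (const s of shards) {
  console.log(shardConnectionString(s.name, s.port, hosts));
}
```

Listing every member of the replica set in the string, as the commands above do, gives mongos the full membership of each shard rather than a single address.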

Enable sharding for the database and the collection:

db.runCommand({ enablesharding:"test" })

db.runCommand({ shardcollection: "test.users", key: { _id: 1 } })  // _id is the shard key

use test

for(var i=1;i<=200000;i++) db.users.insert({id:i,addr_1:"Beijing",addr_2:"Shanghai"});

db.users.stats()

{

"sharded" : true,

"systemFlags" : 1,

"userFlags" : 1,

"ns" : "test.users",

"count" : 200000,

"numExtents" : 18,

"size" : 22400000,

"storageSize" : 33546240,

"totalIndexSize" : 6532624,

"indexSizes" : {

"_id_" : 6532624

},

"avgObjSize" : 112,

"nindexes" : 1,

"nchunks" : 24,

"shards" : {

"shard1" : {

"ns" : "test.users",

"count" : 60928,

"size" : 6823936,

"avgObjSize" : 112,

"storageSize" : 11182080,

"numExtents" : 6,

"nindexes" : 1,

"lastExtentSize" : 8388608,

"paddingFactor" : 1,

"systemFlags" : 1,

"userFlags" : 1,

"totalIndexSize" : 1986768,

"indexSizes" : {

"_id_" : 1986768

},

"ok" : 1

},

"shard2" : {

"ns" : "test.users",

"count" : 73738,

"size" : 8258656,

"avgObjSize" : 112,

"storageSize" : 11182080,

"numExtents" : 6,

"nindexes" : 1,

"lastExtentSize" : 8388608,

"paddingFactor" : 1,

"systemFlags" : 1,

"userFlags" : 1,

"totalIndexSize" : 2411920,

"indexSizes" : {

"_id_" : 2411920

},

"ok" : 1

},

"shard3" : {

"ns" : "test.users",

"count" : 65334,

"size" : 7317408,

"avgObjSize" : 112,

"storageSize" : 11182080,

"numExtents" : 6,

"nindexes" : 1,

"lastExtentSize" : 8388608,

"paddingFactor" : 1,

"systemFlags" : 1,

"userFlags" : 1,

"totalIndexSize" : 2133936,

"indexSizes" : {

"_id_" : 2133936

},

"ok" : 1

}

},

"ok" : 1

}

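As the output shows, the sharded cluster has been set up successfully. The data does not appear to be distributed very evenly, though, so choosing how to shard takes some care; that will be discussed in more depth later.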
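To put a number on "not very even", the per-shard `count` values from the `db.users.stats()` output above can be turned into percentages. This is only arithmetic on the figures already shown; the `shares` function is a hypothetical helper.

```javascript
// Per-shard document counts copied from the db.users.stats() output above.
const counts = { shard1: 60928, shard2: 73738, shard3: 65334 };

// Total number of documents across all shards.
const total = Object.values(counts).reduce((a, b) => a + b, 0);

// Share of documents on each shard, as a percentage string with two decimals.
function shares(countsByShard) {
  const sum = Object.values(countsByShard).reduce((a, b) => a + b, 0);
  const result = {};
  for (const [name, n] of Object.entries(countsByShard)) {
    result[name] = ((n / sum) * 100).toFixed(2);
  }
  return result;
}

console.log(total);          // 200000
console.log(shares(counts)); // shard1: 30.46, shard2: 36.87, shard3: 32.67
```

A perfectly even split would put about 33.33% on each shard, so shard2 holds roughly 3.5 percentage points more than its share and shard1 roughly 2.9 points less.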