VMXNET3 vs E1000E and E1000

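Why should users switch from E1000 to VMXNET3? The reasons are as follows:

- E1000 is a gigabit (1 Gbit) network adapter, whereas VMXNET3 is a 10-gigabit adapter;

- E1000 delivers relatively low performance, whereas VMXNET3 delivers relatively high performance;

- VMXNET3 supports TCP/IP Offload Engine; E1000 does not;

- VMXNET3 can communicate directly with the vmkernel and perform internal data processing.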

E.g. VMXNET3 will report the link speed as 10 Gbit/s. However, the actual bandwidth depends on your uplink speed. A physical CentOS or Windows installation might show 40 Gbit/s, but the VMs will not. You will have to run a bandwidth test to see how much you actually get.

Source: http://blog.51cto.com/shipyard/1930183

Network performance with VMXNET3 compared to E1000E and E1000. This article explains the difference between the virtual network adapters and part 2 will demonstrate how much network performance could be gained by selecting the paravirtualized adapter.

The VMware administrator has several different virtual network adapters available to attach to virtual machines. The virtual adapters belong to one of two groups:

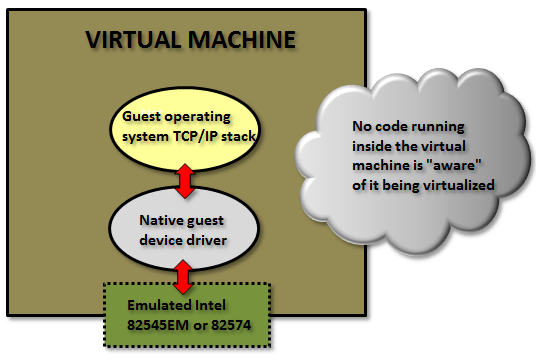

Emulated:

These are virtual devices that emulate real, existing physical network adapters. (Note that the physical network cards in the ESXi host are entirely unrelated.) The VMkernel presents something that, to the guest operating system, looks exactly like a specific piece of real-world hardware; the guest can detect it through plug and play and use a native device driver.

Examples for the emulated devices are:

E1000 – emulates a 1 Gbit Intel 82545EM card and is available for most operating systems since the generation of Windows Server 2003. This card is the default when creating almost all virtual machines and is therefore widely used.

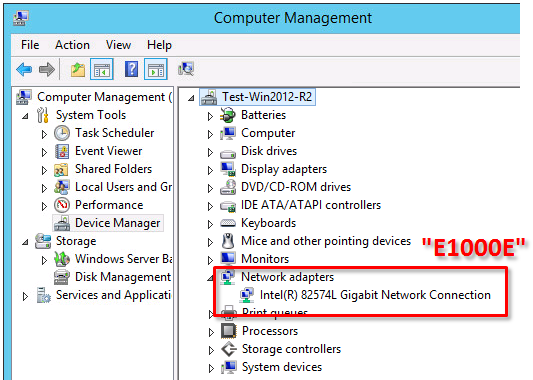

E1000E – emulates a newer real network adapter, the 1 Gbit Intel 82574, and is available for Windows 2012 and later. The E1000E needs VM hardware version 8 or later.

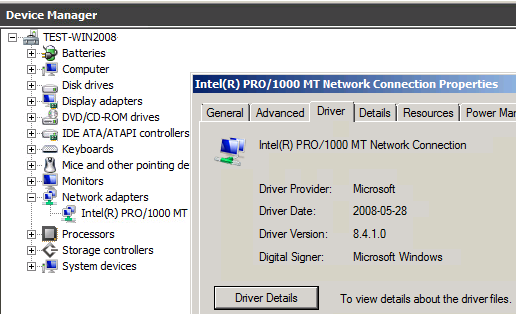

The screenshot above shows Windows 2008 R2 with an emulated E1000 adapter, using the guest operating system's native device driver.

The advantage of the emulated network adapters is that they work “out of the box” and need no external code from VMware. They can even be used, if needed, to install the guest operating system over PXE, since the E1000 device is available as early as BIOS startup.

The disadvantage is that, with the default emulated adapters, extra work is needed for every frame sent or received by the guest operating system (which could be many thousands each second).

The VMkernel has to emulate, in “real time”, the exact behavior of the specific Intel 82545EM or 82574 card, which costs time and CPU cycles.

Paravirtualized:

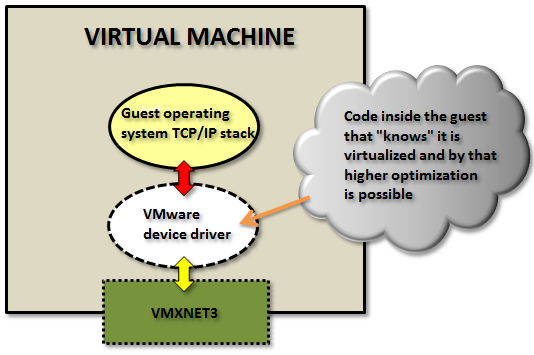

The other type of virtual network adapter is the “paravirtualized” adapter. The most recent one is called VMXNET3.

The paravirtualized network card does not exist as a physical NIC, but is a device “made up” entirely by VMware. For the guest operating system this typically means that, during the OS installation phase, it only senses an unknown device in a PCI slot on the (virtual) motherboard and has no driver to actually use it.

(Note: some Linux distributions even ship with the VMXNET3 driver pre-installed.)

For Windows Server, once a device driver is supplied, typically through the installation of VMware Tools, the guest operating system will perceive this as a real NIC from a network card manufacturer called “VMware” and use it as an ordinary network adapter. It has no reason to believe this is anything other than a NIC like any other.

To the guest operating system the VMXNET3 card looks like a 10 Gbit physical device.

Note: there are also two obsolete paravirtualized adapters called VMXNET and VMXNET2 (sometimes called “Enhanced VMXNET”); however, as long as the virtual machine has at least hardware version 7, only the VMXNET3 adapter should be used.

Since VMware, with the VMXNET3 card, owns much more of the network components even inside the VM, there are many performance enhancements that can be made. With the emulated E1000/E1000E the VMkernel has to mimic the exact behavior of existing adapters to the guest, but with VMXNET3 it can create a “perfect” virtual adapter optimized for use in a virtual environment.

In the first article the general difference between the adapter types was explained.

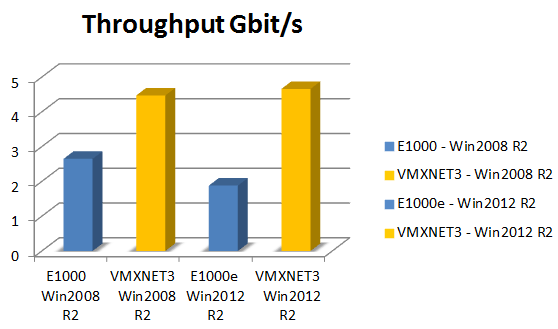

In this article we will test the network throughput in the two most common Windows operating systems today: Windows 2008 R2 and Windows 2012 R2, and see the performance of the VMXNET3 vs the E1000 and the E1000E.

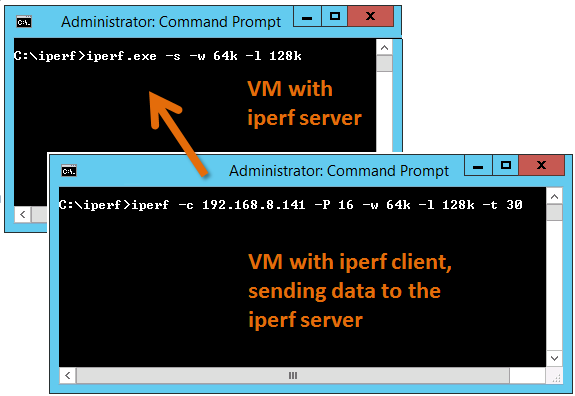

To generate large amounts of network traffic I used the iperf tool running on two virtual machines, one as iperf “client” and the other as “server”. I have found that the following iperf settings give the best combination for network throughput tests on Windows Server:

Server: iperf -s -w 64k -l 128k

Client: iperf -c <SERVER-IP> -P 16 -w 64k -l 128k -t 30
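For repeated test runs, the two command lines above can be assembled programmatically. The following is a minimal sketch in Python, not part of the original test setup: the function names are illustrative, and `192.0.2.10` is only a placeholder address standing in for `<SERVER-IP>`. It uses only the iperf flags already shown above.

```python
import shlex


def iperf_server_cmd(window="64k", length="128k"):
    """Build the argument list for the iperf server side (-s)."""
    return ["iperf", "-s", "-w", window, "-l", length]


def iperf_client_cmd(server_ip, streams=16, window="64k", length="128k", seconds=30):
    """Build the argument list for the iperf client side (-c)."""
    return [
        "iperf", "-c", server_ip,
        "-P", str(streams),   # number of parallel client streams
        "-w", window,         # TCP window size
        "-l", length,         # read/write buffer length
        "-t", str(seconds),   # test duration in seconds
    ]


if __name__ == "__main__":
    # Placeholder address; replace with the real iperf server VM.
    print(shlex.join(iperf_server_cmd()))
    print(shlex.join(iperf_client_cmd("192.0.2.10")))
```

The returned lists can be passed directly to `subprocess.run(cmd, check=True)` on a machine where iperf is installed and on the PATH.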

The test was done on an HP ProLiant BL460c Gen8, with the virtual machines running on the same physical host so that network performance could be observed independently of the physical network connection between hosts/blades.

All settings on the E1000, E1000E and VMXNET3 were left at their defaults. Possible tweaks of the VMXNET3 card settings will be explained in a later article.

(It should of course be noted that the following results are just observations from tests on one specific hardware and ESXi configuration, and are not in any way a “scientific” study.)

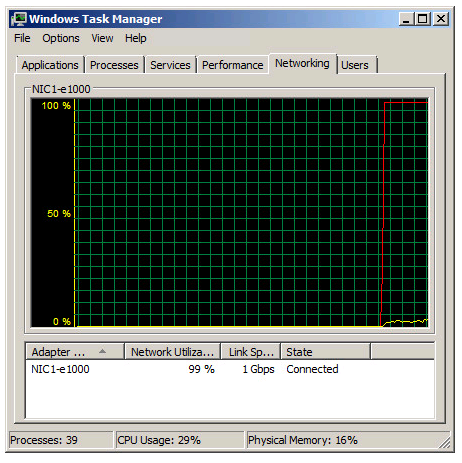

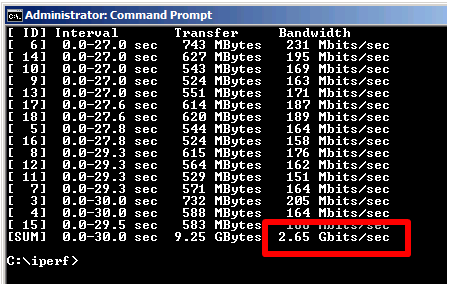

Test 1: Windows 2008 R2 with the default E1000 adapter

Two Windows 2008 R2 virtual machines, one as iperf server and the other as client, with the test running for 30 seconds.

As noted in the Task Manager view the 1 Gbit link speed was maxed out. A somewhat interesting fact is that even with the emulated E1000 adapter it is possible to use more than what “should” be possible on a 1 Gbit link.

From the iperf client output we can see that we reach a total throughput of 2.65 Gbit per second with the default E1000 virtual adapter.

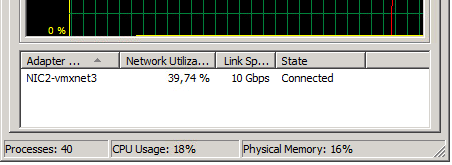

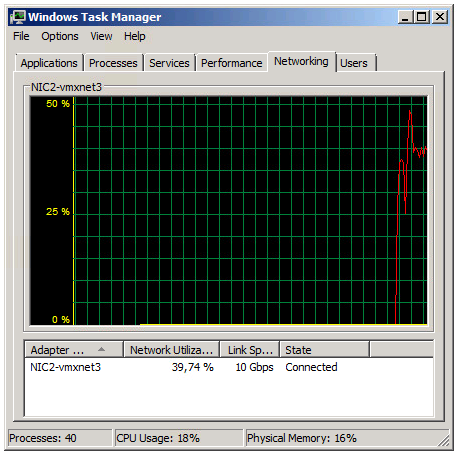

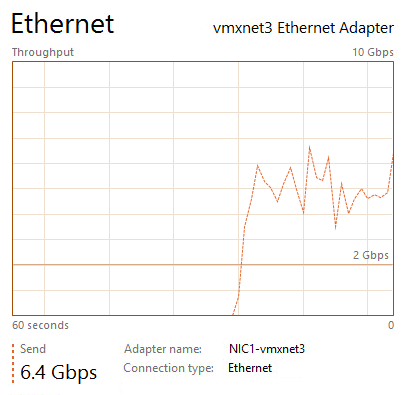

Test 2: Windows 2008 R2 with the VMXNET3 adapter

The Task Manager view reports utilization of around 39% of the 10 Gbit link in the iperf client VM.

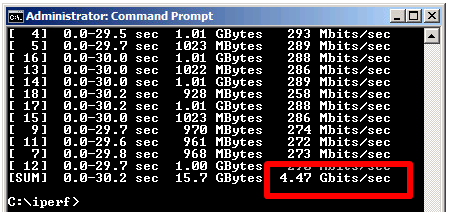

The iperf output shows a total throughput for VMXNET3 of 4.47 Gbit / second over the time the test was conducted.
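To put the Task Manager percentage and the iperf figure on the same scale, the utilization can be converted to Gbit/s. A minimal sketch, with an illustrative function name; the 39 %, 10 Gbit and 4.47 Gbit/s values are the ones reported in this test:

```python
def utilization_to_gbit(percent, link_gbit):
    """Convert a link utilization percentage into Gbit/s."""
    return percent * link_gbit / 100.0


task_manager_gbit = utilization_to_gbit(39, 10)  # Task Manager reading
iperf_avg_gbit = 4.47                            # iperf total over the test

print(f"Task Manager reading: {task_manager_gbit:.2f} Gbit/s")
print(f"iperf average:        {iperf_avg_gbit:.2f} Gbit/s")
```

The two values need not match exactly, presumably because the Task Manager figure is a momentary reading while iperf reports an average over the whole run.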

The VMXNET3 adapter demonstrates almost 70 % better network throughput than the E1000 card on Windows 2008 R2.

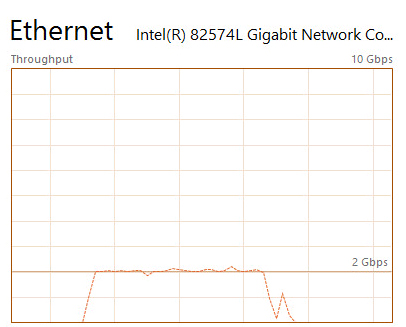

Test 3: Windows 2012 R2 with the E1000E adapter

The E1000E is a newer and more “enhanced” version of the E1000. To the guest operating system it looks like the physical Intel 82574 network interface card.

However, even though it is a newer adapter, it actually delivered lower throughput than the E1000 adapter.

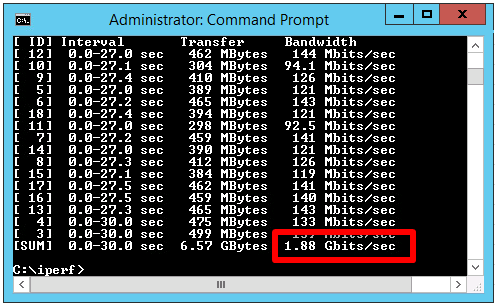

Two virtual machines running Windows 2012 R2 with the iperf tool running as client and server.

E1000E reached 1.88 Gbit/sec, which is considerably lower than the 2.65 Gbit/sec for the original E1000 on Windows 2008 R2.

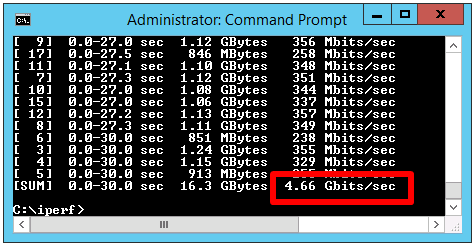

Test 4: Windows 2012 R2 with the VMXNET3 adapter

The two Windows 2012 R2 virtual machines now running with VMXNET3 adapter got the following iperf results:

The throughput was 4.66 Gbit/sec, which is very close to the result of VMXNET3 on Windows 2008 R2, but almost 150 % better than the new E1000E.

In summary, the VMXNET3 adapter delivers considerably higher network throughput than both the E1000 and the E1000E. Also, in at least this test setup, the newer E1000E actually performed worse than the older E1000.
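The relative gains quoted in the tests can be re-derived from the four throughput figures. The sketch below is only a convenience for checking the arithmetic; the dictionary layout and function names are illustrative, and the numbers are the ones reported above.

```python
# Throughput figures (Gbit/s) as reported in the four tests above.
results = {
    ("Windows 2008 R2", "E1000"): 2.65,
    ("Windows 2008 R2", "VMXNET3"): 4.47,
    ("Windows 2012 R2", "E1000E"): 1.88,
    ("Windows 2012 R2", "VMXNET3"): 4.66,
}


def percent_gain(new_gbit, old_gbit):
    """Relative improvement of new over old, in percent."""
    return (new_gbit / old_gbit - 1.0) * 100.0


def vmxnet3_gains(results):
    """Map each OS to the VMXNET3 gain over that OS's emulated adapter."""
    gains = {}
    for (os_name, adapter), gbit in results.items():
        if adapter == "VMXNET3":
            continue
        gains[os_name] = percent_gain(results[(os_name, "VMXNET3")], gbit)
    return gains


for os_name, gain in vmxnet3_gains(results).items():
    print(f"{os_name}: VMXNET3 is about {gain:.0f} % faster than the emulated adapter")
```

This gives roughly 69 % on Windows 2008 R2 and roughly 148 % on Windows 2012 R2, in line with the “almost 70 %” and “almost 150 %” stated in the tests.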

The test was done on Windows Server virtual machines, and the top throughput of around 4.6 Gbit/sec for the VMXNET3 adapter could be the result of limitations in the TCP implementation. Other operating systems with other TCP stacks might achieve even higher numbers. It should also be noted that these tests covered only network throughput; there are of course other factors as well, which might be discussed further in later articles.
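One general way a TCP configuration can cap throughput is the window size: a single connection cannot carry more than one window of data per round trip. The sketch below illustrates that bound for the iperf settings used here. It is not an analysis of this particular test: the round-trip times are hypothetical (none were measured), and it assumes that `-w 64k` means 65,536 bytes and that the 16 parallel streams are each limited independently.

```python
def window_limited_gbit(window_bytes, rtt_seconds, streams=1):
    """Upper bound on TCP throughput (Gbit/s) from window size and RTT.

    Each stream can have at most one window of data in flight per round trip.
    """
    if rtt_seconds <= 0:
        raise ValueError("rtt_seconds must be positive")
    bits_in_flight = window_bytes * 8 * streams
    return bits_in_flight / rtt_seconds / 1e9


window = 64 * 1024  # assumption: -w 64k interpreted as 64 KiB

# Hypothetical round-trip times; none of these were measured in the test.
for rtt_ms in (0.5, 1.0, 2.0):
    ceiling = window_limited_gbit(window, rtt_ms / 1000.0, streams=16)
    print(f"RTT {rtt_ms} ms -> at most {ceiling:.2f} Gbit/s across 16 streams")
```

If a ceiling of this kind were the limiting factor, a larger window or more parallel streams would be expected to raise the result; whether that applies to this setup would have to be tested.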
