    import urllib.request

    # Send a GET request; urlopen opens the page and returns it as the response object
    response = urllib.request.urlopen("http://www.baidu.com")

    # Read the page content and decode it as UTF-8
    print(response.read().decode("utf-8"))

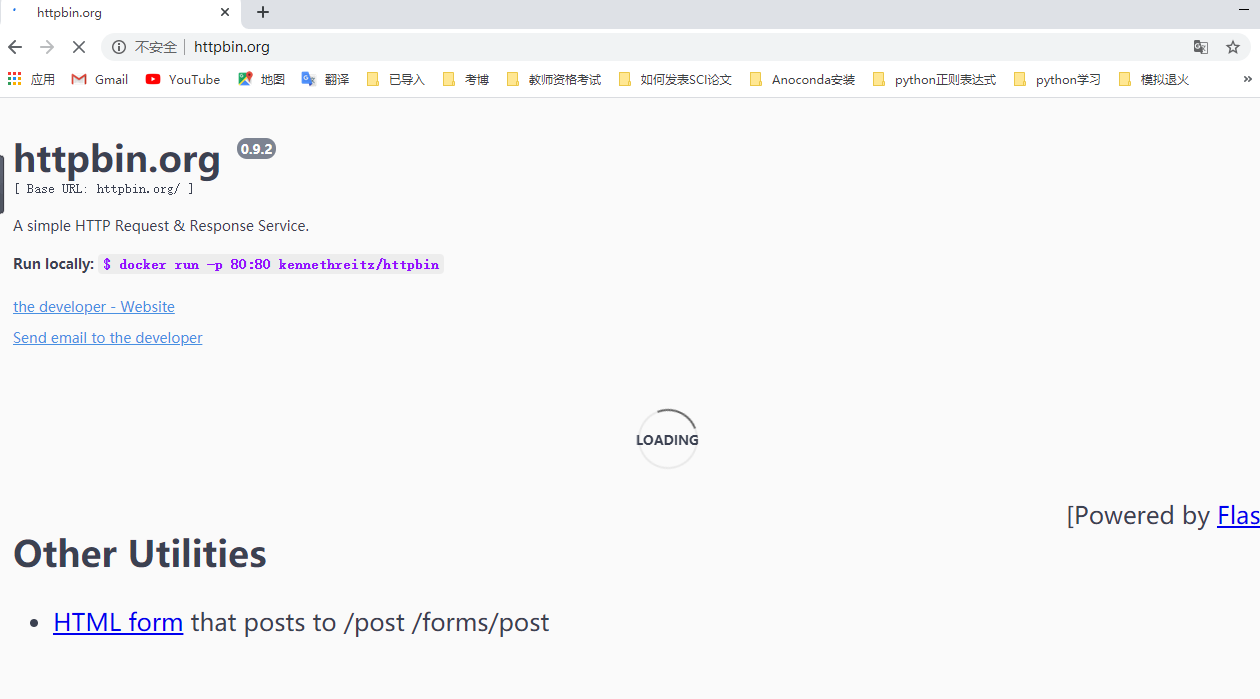

httpbin.org is a website for testing HTTP/HTTPS requests.

    # Send a POST request
    import urllib.parse
    import urllib.request

    # The form fields are URL-encoded and passed to urlopen as bytes
    data = bytes(urllib.parse.urlencode({"hello": "world"}), encoding="utf-8")
    response = urllib.request.urlopen("http://httpbin.org/post", data=data)
    print(response.read().decode("utf-8"))
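To see what actually travels in the POST body, the encoding step can be run on its own without any network access. This is a minimal sketch; the helper name `encode_form` and the extra field `q` are made up for illustration and are not part of the original notes.

```python
import urllib.parse


def encode_form(fields):
    """Encode a dict as application/x-www-form-urlencoded bytes (hypothetical helper)."""
    query = urllib.parse.urlencode(fields)  # dict -> "key=value&key=value" string
    return query.encode("utf-8")            # urlopen expects bytes for `data`


body = encode_form({"hello": "world", "q": "a b"})
print(body)  # b'hello=world&q=a+b'
```

Note that `urlencode` replaces the space with `+`, which is the form-encoding convention httpbin.org decodes back when it echoes the `form` field.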

    # Send a GET request
    import urllib.request

    response = urllib.request.urlopen("http://httpbin.org/get")
    print(response.read().decode("utf-8"))

If a request times out, or the remote site never returns anything, how should that be handled so that the program does not hang?

    # Timeout handling
    import urllib.error
    import urllib.request

    try:
        response = urllib.request.urlopen("http://httpbin.org/get", timeout=10)
        print(response.read().decode("utf-8"))  # decode the page content as UTF-8
    except urllib.error.URLError as e:
        print("time out!")
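The timeout handling above can be wrapped in a small reusable function. The sketch below is one possible shape, not the only one: the name `fetch_text` is hypothetical, and it additionally catches `socket.timeout`, on the assumption that a timeout while waiting for or reading the response may be raised directly instead of being wrapped in `URLError`.

```python
import socket
import urllib.error
import urllib.request


def fetch_text(url, timeout=10):
    """Return the decoded body, or None if the request fails or times out."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.read().decode("utf-8")
    except urllib.error.URLError as e:
        # Connection-phase failures (including connect timeouts) arrive as URLError
        print("request failed:", e.reason)
    except socket.timeout:
        # A timeout while waiting for or reading the response can surface unwrapped
        print("time out!")
    return None
```

With this helper, `fetch_text("http://httpbin.org/get", timeout=10)` either returns the page text or prints a message and returns `None`, so the caller can decide whether to retry or skip the page.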

Inspecting basic response information

    response = urllib.request.urlopen("http://www.baidu.com")
    print(response.status)               # status code (e.g. 200, 404, 500, 418)
    print(response.getheaders())         # all response headers
    print(response.getheader("Server"))  # a single header value, here Server
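One detail worth knowing when checking status codes: `urlopen` raises `urllib.error.HTTPError` for error statuses such as 404 or 418, so `response.status` is only reachable for successful responses. A minimal sketch of reading the code in both cases follows; the function name `get_status` is hypothetical.

```python
import urllib.error
import urllib.request


def get_status(url, timeout=10):
    """Return the HTTP status code for url, including error statuses."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as e:
        # 4xx/5xx responses are raised as HTTPError; the code lives on the exception
        return e.code
```

Network-level failures (DNS errors, refused connections) are deliberately not caught here, since they carry no status code at all.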

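To keep a site from recognizing the crawler and answering with a 418 status, disguise the crawler as a browser. This requires wrapping the request in a Request object; the main thing to spoof is the User-Agent header.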
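The reason a site can spot the crawler is that urllib identifies itself by default. The sketch below prints the default User-Agent and then shows a Request object carrying a browser string instead; nothing is sent over the network. The constant name `BROWSER_UA` is made up for illustration.

```python
import urllib.request

# The default opener announces itself as "Python-urllib/<version>"
default_headers = dict(urllib.request.build_opener().addheaders)
print(default_headers["User-agent"])

BROWSER_UA = (
    "Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) "
    "AppleWebKit/537.36 (KHTML, like Gecko) "
    "Chrome/83.0.4103.106 Mobile Safari/537.36"
)
req = urllib.request.Request(
    "http://httpbin.org/get", headers={"User-Agent": BROWSER_UA}
)

# Request normalizes header names with str.capitalize(), hence "User-agent"
print(req.get_header("User-agent"))
```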

Accessing httpbin.org with the POST method

    url = "http://httpbin.org/post"
    headers = {
        "User-Agent": "Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.106 Mobile Safari/537.36"
    }
    data = bytes(urllib.parse.urlencode({"name": "eric"}), encoding="utf-8")
    # HTTP method names are case-sensitive, so pass "POST" in upper case
    req = urllib.request.Request(url=url, data=data, headers=headers, method="POST")
    response = urllib.request.urlopen(req)
    print(response.read().decode("utf-8"))
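The `method` argument is optional: when it is omitted, Request picks the method based on whether a body is present. A small offline sketch of that behavior, using the same httpbin.org URLs as above (the variable names are illustrative only):

```python
import urllib.parse
import urllib.request

data = urllib.parse.urlencode({"name": "eric"}).encode("utf-8")

# No explicit method: the presence of `data` decides between POST and GET
with_body = urllib.request.Request("http://httpbin.org/post", data=data)
without_body = urllib.request.Request("http://httpbin.org/get")

# An explicit method is returned exactly as given
explicit = urllib.request.Request(
    "http://httpbin.org/post", data=data, method="POST"
)

print(with_body.get_method())     # POST
print(without_body.get_method())  # GET
print(explicit.get_method())      # POST
```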

Accessing www.douban.com with the GET method

    url = "https://www.douban.com"
    headers = {
        "User-Agent": "Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.106 Mobile Safari/537.36"
    }
    req = urllib.request.Request(url=url, headers=headers)
    response = urllib.request.urlopen(req)
    print(response.read().decode("utf-8"))