This time I scraped the results returned by the Zhaopin (智联招聘) job site when searching for "数据分析师" (data analyst).

Python version: Python 3.5.

The main packages I used are BeautifulSoup, Requests, and csv.

In addition to the basic listing fields, I also scraped the short description of each job posting.

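After writing the output to a CSV file, I found that some of the text was garbled when the file was opened in Excel, although it displayed correctly in a text editor such as Notepad++.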
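A likely cause of this symptom is that the CSV is written as UTF-8 without a byte order mark (BOM), so Excel falls back to the system's legacy code page, while editors such as Notepad++ detect UTF-8 on their own. The sketch below is a minimal way to check for this, assuming that explanation applies here. The helper names `has_utf8_bom` and `write_rows` and the sample row are hypothetical and not part of the original script.

```python
import codecs
import csv
import os
import tempfile


def has_utf8_bom(path):
    """Return True if the file starts with the UTF-8 byte order mark."""
    with open(path, 'rb') as f:
        return f.read(3) == codecs.BOM_UTF8


def write_rows(path, rows, encoding):
    """Write rows to a new CSV file using the given text encoding."""
    with open(path, 'w', newline='', encoding=encoding) as f:
        csv.writer(f).writerows(rows)


# Hypothetical sample row, used only for this demonstration
sample_rows = [['数据分析师', '某公司', '北京']]

tmp_dir = tempfile.mkdtemp()
plain_path = os.path.join(tmp_dir, 'plain.csv')
bom_path = os.path.join(tmp_dir, 'with_bom.csv')

write_rows(plain_path, sample_rows, 'utf-8')      # same encoding the scraper uses
write_rows(bom_path, sample_rows, 'utf-8-sig')    # same content plus a leading BOM

print(has_utf8_bom(plain_path))  # False
print(has_utf8_bom(bom_path))    # True
```

If the check returns `False` for the scraped file, writing it with `encoding='utf-8-sig'` is one option worth trying before any further conversion.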

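To get Excel to display the file correctly, I ran it through pandas and added column names at the same time. After the conversion, the file displayed correctly. For more on converting with pandas, see my blog: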

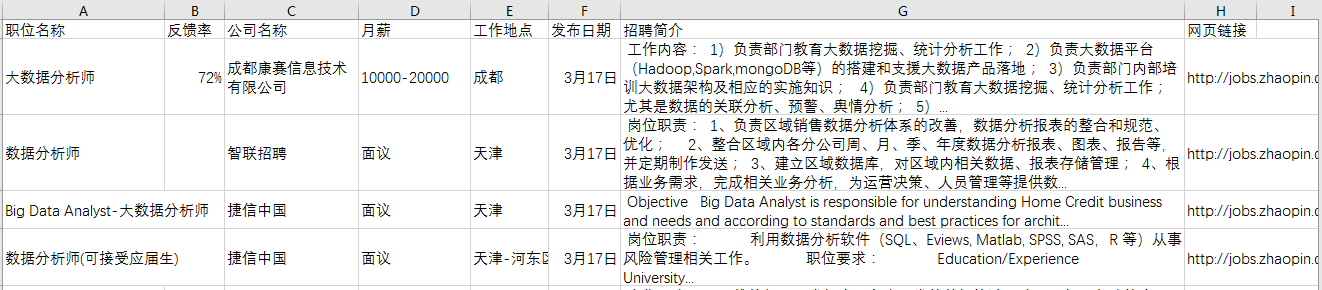

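Because the job descriptions are fairly long, I finally saved the CSV file as an Excel file and adjusted the formatting to make it easier to read.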

The final result looks like this:

The implementation is shown below. First, the scraping code:

```python
# Code based on Python 3.x
# _*_ coding: utf-8 _*_
# __Author: "LEMON"


from bs4 import BeautifulSoup
import requests
import csv


def download(url):
    # Send a browser-like User-Agent with the request
    headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:51.0) Gecko/20100101 Firefox/51.0'}
    req = requests.get(url, headers=headers)
    return req.text


def get_content(html):
    soup = BeautifulSoup(html, 'lxml')
    body = soup.body
    data_main = body.find('div', {'class': 'newlist_list_content'})
    tables = data_main.find_all('table')

    zw_list = []
    for i, table in enumerate(tables):
        # The first table is skipped; the remaining tables each hold one job posting
        if i == 0:
            continue
        temp = []
        tds = table.find('tr').find_all('td')
        zwmc = tds[0].find('a').get_text()        # job title
        zw_link = tds[0].find('a').get('href')    # link to the job page
        fkl = tds[1].find('span').get_text()      # feedback rate
        gsmc = tds[2].find('a').get_text()        # company name
        zwyx = tds[3].get_text()                  # monthly salary
        gzdd = tds[4].get_text()                  # work location
        gbsj = tds[5].find('span').get_text()     # posting date

        # Short description of the posting
        tr_brief = table.find('tr', {'class': 'newlist_tr_detail'})
        brief = tr_brief.find('li', {'class': 'newlist_deatil_last'}).get_text()

        temp.append(zwmc)
        temp.append(fkl)
        temp.append(gsmc)
        temp.append(zwyx)
        temp.append(gzdd)
        temp.append(gbsj)
        temp.append(brief)
        temp.append(zw_link)

        zw_list.append(temp)
    return zw_list


def write_data(data, name):
    filename = name
    # Append mode, so every page is added to the same file
    with open(filename, 'a', newline='', encoding='utf-8') as f:
        f_csv = csv.writer(f)
        f_csv.writerows(data)


if __name__ == '__main__':

    basic_url = 'http://sou.zhaopin.com/jobs/searchresult.ashx?jl=%E5%85%A8%E5%9B%BD&kw=%E6%95%B0%E6%8D%AE%E5%88%86%E6%9E%90%E5%B8%88&sm=0&p='

    number_list = list(range(90))  # total number of page is 90
    for number in number_list:
        num = number + 1
        url = basic_url + str(num)
        filename = 'zhilian_DA.csv'
        html = download(url)
        # print(html)
        data = get_content(html)
        # print(data)
        print('start saving page:', num)
        write_data(data, filename)
```
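The query string in `basic_url` is percent-encoded UTF-8: `jl` decodes to "全国" (nationwide) and `kw` decodes to the search keyword "数据分析师". The sketch below rebuilds the same URL from readable values using only the standard library. The names `SEARCH_URL` and `build_url` are hypothetical, and the reading of `jl` as location and `kw` as keyword is inferred from the decoded values, not from any site documentation.

```python
from urllib.parse import unquote, urlencode

# Base address taken from basic_url in the scraper
SEARCH_URL = 'http://sou.zhaopin.com/jobs/searchresult.ashx'


def build_url(page, keyword='数据分析师', location='全国'):
    """Build a search-result URL for one page (parameter meanings are inferred)."""
    params = {'jl': location, 'kw': keyword, 'sm': 0, 'p': page}
    return SEARCH_URL + '?' + urlencode(params)


# Decode the two encoded values that appear in basic_url
print(unquote('%E5%85%A8%E5%9B%BD'))
print(unquote('%E6%95%B0%E6%8D%AE%E5%88%86%E6%9E%90%E5%B8%88'))

# Build the address of the first result page
print(build_url(1))
```

Building the URL this way makes it easier to change the keyword or location without hand-encoding the Chinese text.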

The pandas conversion code:

```python
# Code based on Python 3.x
# _*_ coding: utf-8 _*_
# __Author: "LEMON"

import pandas as pd

df = pd.read_csv('zhilian_DA.csv', header=None)


# Column names: job title, feedback rate, company name, monthly salary,
# work location, posting date, job summary, page link
df.columns = ['职位名称', '反馈率', '公司名称', '月薪', '工作地点',
              '发布日期', '招聘简介', '网页链接']

# Write the adjusted DataFrame to a new CSV file
df.to_csv('zhilian_DA_update.csv', index=False)
```
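If pandas is not available, the same header-adding step can be sketched with the standard `csv` module alone. This is an alternative under stated assumptions, not the method used above: the helper `add_header`, the temporary file paths, and the sample row are hypothetical, and the output is written as `utf-8-sig` (UTF-8 with a BOM) on the assumption that this helps Excel recognize the encoding.

```python
import csv
import os
import tempfile

# Same column names as in the pandas version
HEADER = ['职位名称', '反馈率', '公司名称', '月薪', '工作地点',
          '发布日期', '招聘简介', '网页链接']


def add_header(src, dst, header=HEADER):
    """Copy src to dst, adding a header row and writing UTF-8 with a BOM."""
    with open(src, newline='', encoding='utf-8') as fin, \
            open(dst, 'w', newline='', encoding='utf-8-sig') as fout:
        writer = csv.writer(fout)
        writer.writerow(header)
        writer.writerows(csv.reader(fin))


# Demonstration on a temporary file with one hypothetical row
tmp_dir = tempfile.mkdtemp()
src = os.path.join(tmp_dir, 'zhilian_DA.csv')
dst = os.path.join(tmp_dir, 'zhilian_DA_update.csv')
sample = ['数据分析师', '50%', '某公司', '8000-10000', '北京',
          '03-01', '简介', 'http://example.com/job']

with open(src, 'w', newline='', encoding='utf-8') as f:
    csv.writer(f).writerow(sample)

add_header(src, dst)

# Read the result back; utf-8-sig strips the BOM when decoding
with open(dst, newline='', encoding='utf-8-sig') as f:
    rows = list(csv.reader(f))

print(rows[0] == HEADER, rows[1] == sample)
```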