What is Prometheus?

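Prometheus is an open-source monitoring and alerting system with a built-in time series database (TSDB), originally developed at SoundCloud. It is written in Go and was inspired by Google's internal Borgmon monitoring system.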

In 2016 Prometheus joined the Cloud Native Computing Foundation (CNCF), the Linux Foundation project launched with Google's backing, as its second hosted project after Kubernetes.

Prometheus has a very active open-source community.

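Compared with Heapster (a Kubernetes sub-project for collecting cluster performance data), Prometheus is more complete and more capable, and it is reported to perform well enough for clusters of tens of thousands of machines.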

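Features of Prometheus

A multi-dimensional data model.

A flexible query language.

No dependence on distributed storage; each server node is autonomous.

Time series are collected with an HTTP-based pull model.

Time series can be pushed through an intermediate gateway.

Targets are found through service discovery or static configuration.

Many kinds of graphs and dashboards are supported, such as Grafana.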

Official website: https://prometheus.io/

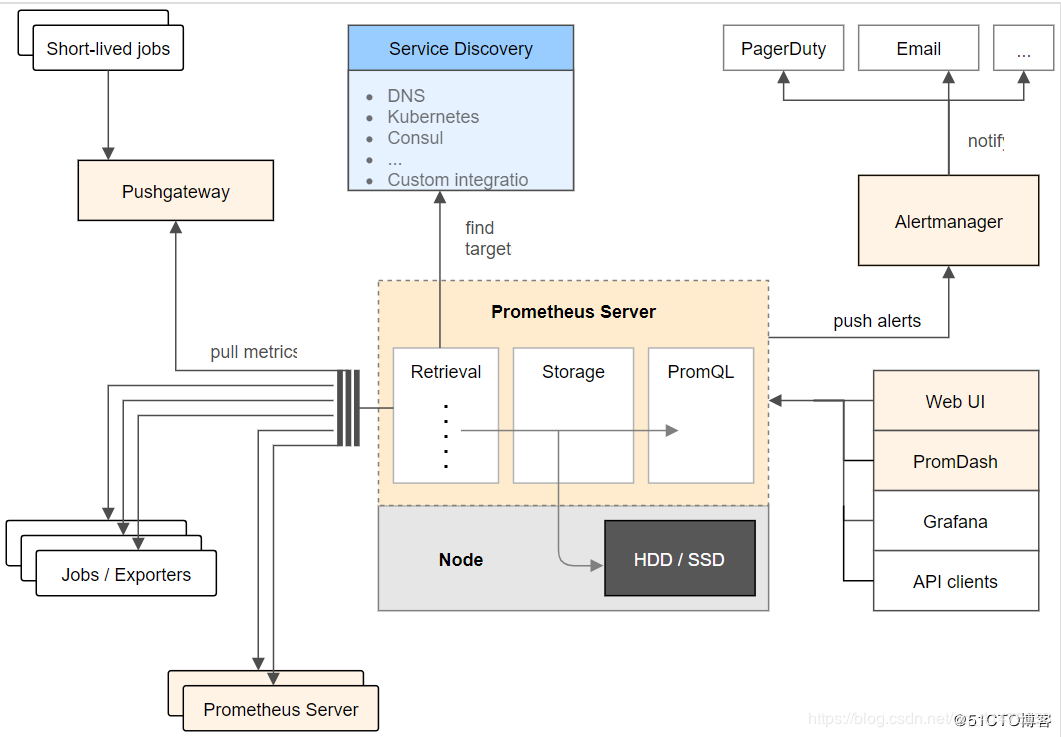

Architecture diagram

How it works

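Prometheus periodically scrapes the state of monitored components over HTTP. Any component that exposes a suitable HTTP endpoint can be monitored, with no SDK or other integration work required, which makes Prometheus a good fit for virtualized environments such as VMs, Docker and Kubernetes. A program that exposes a component's metrics over such an HTTP endpoint is called an exporter. Ready-made exporters exist for most components commonly used at internet companies, such as Varnish, HAProxy, Nginx, MySQL, and Linux system information (disk, memory, CPU, network and so on).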
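To make the exporter idea concrete, here is a minimal sketch of an exporter built only on the Python standard library: it answers `GET /metrics` with one metric family in the Prometheus text format. The metric `demo_up`, its `service` label and port 8000 are hypothetical; real exporters normally use an official client library such as `prometheus_client`.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


def format_labels(labels):
    """Render a label dict as {k="v",...}, escaping backslashes, quotes and newlines."""
    if not labels:
        return ""
    parts = []
    for key in sorted(labels):
        value = labels[key].replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")
        parts.append(f'{key}="{value}"')
    return "{" + ",".join(parts) + "}"


def render_family(name, help_text, metric_type, samples):
    """Render one metric family: HELP and TYPE lines, then one line per sample."""
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        lines.append(f"{name}{format_labels(labels)} {value}")
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    """Serves a single hypothetical gauge on /metrics."""

    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_family("demo_up", "Whether the demo service is up.", "gauge",
                             [({"service": "demo"}, 1)]).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Block forever, answering scrapes on http://0.0.0.0:<port>/metrics."""
    HTTPServer(("0.0.0.0", port), MetricsHandler).serve_forever()
```

Calling `serve()` and then running `curl http://localhost:8000/metrics` should show output of the same shape as node-exporter's, just with a single metric.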

How the service works

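The Prometheus daemon scrapes metrics from its targets on a schedule; each target must expose an HTTP endpoint for it to scrape. Targets can be specified through the configuration file, text files, ZooKeeper, Consul, DNS SRV lookups and other mechanisms. Prometheus monitors in PULL mode: the server either pulls data directly from the targets, or jobs push their data to an intermediate gateway from which the server then pulls it.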

Prometheus stores all scraped data locally. It can also process that data according to rules and store the results as new time series (recording rules).

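Collected data is queried with PromQL and other APIs and can be visualized in several ways, such as Grafana, the bundled PromDash (since deprecated) and Prometheus's own template engine. Prometheus also offers an HTTP query API for producing whatever output you need.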
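As a sketch of that HTTP query API, the helpers below build a `/api/v1/query` URL and turn an instant-vector JSON response into `(labels, value)` pairs. The base URL in the usage note reuses this article's master IP and Prometheus NodePort; everything else is standard library.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def build_query_url(base_url, promql, time=None):
    """URL for an instant query; `time` is an optional Unix timestamp."""
    params = {"query": promql}
    if time is not None:
        params["time"] = str(time)
    return base_url.rstrip("/") + "/api/v1/query?" + urlencode(params)


def parse_vector(payload):
    """Turn an instant-vector response body into [(labels, float value), ...]."""
    if payload.get("status") != "success":
        raise ValueError(payload.get("error", "query failed"))
    data = payload["data"]
    if data["resultType"] != "vector":
        raise ValueError("expected a vector result, got " + data["resultType"])
    # each result item looks like {"metric": {...}, "value": [timestamp, "value as string"]}
    return [(item["metric"], float(item["value"][1])) for item in data["result"]]


def instant_query(base_url, promql):
    """Run one PromQL query against a live server and return the parsed vector."""
    with urlopen(build_query_url(base_url, promql), timeout=10) as resp:
        return parse_vector(json.load(resp))
```

For example, `instant_query("http://192.168.3.25:30003", "up")` returns one entry per scrape target, with value 1.0 for targets that are up.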

The Pushgateway lets clients actively push metrics to it, and Prometheus simply scrapes the gateway on its regular schedule.
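A minimal sketch of such a push, assuming the Pushgateway's documented URL scheme `/metrics/job/<job>{/<label>/<value>}` and its default port 9091. The gateway host `pushgateway`, the job `nightly_backup` and the metric in the usage note are hypothetical.

```python
from urllib.parse import quote
from urllib.request import Request, urlopen


def push_url(gateway, job, grouping=None):
    """Build /metrics/job/<job>{/<label>/<value>}; values containing "/" are not handled here."""
    path = "/metrics/job/" + quote(job, safe="")
    for label, value in sorted((grouping or {}).items()):
        path += "/" + quote(label, safe="") + "/" + quote(value, safe="")
    return gateway.rstrip("/") + path


def build_push_request(gateway, job, body, grouping=None):
    """PUT replaces every metric in the group (POST would replace only same-named metrics)."""
    return Request(push_url(gateway, job, grouping), data=body.encode("utf-8"), method="PUT",
                   headers={"Content-Type": "text/plain; version=0.0.4"})


def push(gateway, job, body, grouping=None):
    """Send text-format metrics (each line ending in a newline) and return the HTTP status."""
    with urlopen(build_push_request(gateway, job, body, grouping), timeout=10) as resp:
        return resp.status
```

A batch job could end with `push("http://pushgateway:9091", "nightly_backup", "backup_last_success_timestamp_seconds 1.7e9\n")`; Prometheus then picks the value up the next time it scrapes the gateway.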

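Alertmanager is a component separate from Prometheus. Alerting rules are written in PromQL and evaluated by the Prometheus server, which sends firing alerts to Alertmanager; Alertmanager then offers very flexible ways of routing and delivering them.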

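Three core components

Server: collects and stores data, and provides the PromQL query language.

Alertmanager: the alert manager, used for alerting.

Push Gateway: an intermediate gateway to which short-lived jobs can actively push their metrics.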

Role of each component

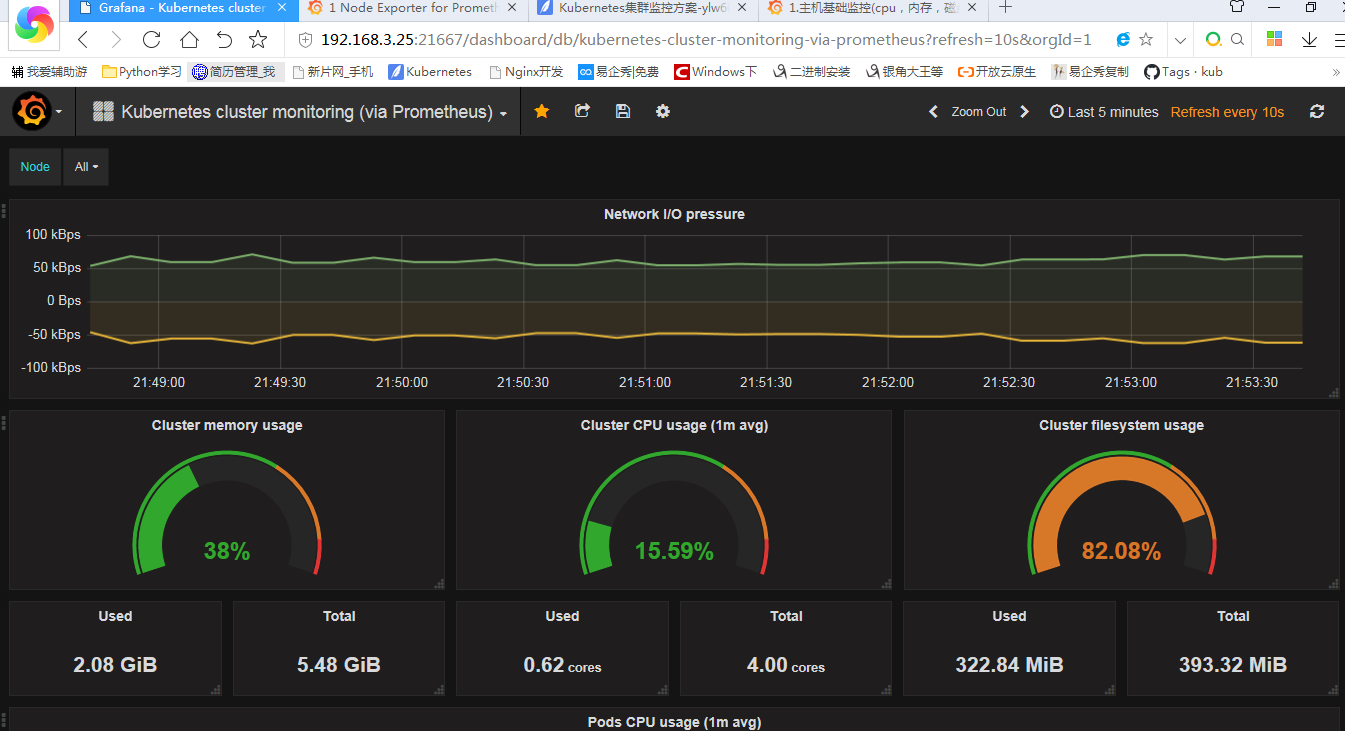

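1. node-exporter collects metrics on each node and exposes them over HTTP; Prometheus pulls (scrapes) them, node-exporter does not push.

2. Prometheus stores this data.

3. Grafana presents the data to users as charts in a web page.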

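Features of Prometheus:

1. A multi-dimensional data model (a time series is identified by a metric name and a set of key/value pairs)

2. A flexible query language (PromQL) that works across these dimensions

3. No dependence on distributed storage; a single server node works on its own.

4. Time series are collected with an HTTP-based pull model

5. Time series can be pushed through an intermediate gateway

6. Targets are found through service discovery or static configuration

7. Support for multiple visualization and dashboard tools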
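Point 1 is the core idea: a series is identified by its metric name plus its complete label set, so every new label combination is a new series. The class below is a hypothetical in-memory illustration of that data model, not how the Prometheus TSDB is implemented; the metric `http_requests_total` in the usage note is only an example.

```python
from collections import defaultdict


def series_key(name, labels):
    """Identity of a time series: metric name plus the full, order-independent label set."""
    return (name, tuple(sorted(labels.items())))


class SeriesStore:
    """Hypothetical in-memory store: each distinct (name, labels) gets its own sample list."""

    def __init__(self):
        self._samples = defaultdict(list)

    def append(self, name, labels, timestamp, value):
        self._samples[series_key(name, labels)].append((timestamp, value))

    def select(self, name, **matchers):
        """Equality-only selector like name{k="v"}; a missing label counts as "" as in PromQL."""
        result = {}
        for key, samples in self._samples.items():
            series_name, pairs = key
            labels = dict(pairs)
            if series_name == name and all(labels.get(k, "") == v for k, v in matchers.items()):
                result[key] = samples
        return result
```

Appending `http_requests_total{method="GET",code="200"}` twice (with the labels in any order) extends one series, while `{method="POST",code="200"}` starts a second one.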

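Prometheus components. The Prometheus ecosystem consists of multiple components, many of which are optional:

1. The Prometheus server, which scrapes and stores time series data

2. Client libraries for instrumenting application or exporter code (Go, Java, Python, Ruby)

3. A push gateway for supporting short-lived jobs

4. Visualization dashboards (two options, PromDash and Grafana; Grafana is the mainstream choice today)

5. Special-purpose exporters (for services such as HAProxy, StatsD and Graphite)

6. An alert manager (Alertmanager), which handles alert aggregation, routing, silencing and so on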

Now let's start the actual deployment.

I. Environment

Operating system: CentOS Linux 7.3, 64-bit

Kubernetes version: 1.10.4 (deployed from binaries)

Master node IP: 192.168.3.25/24

Worker node IP: 192.168.3.26/24

II. Deploying Node Exporter

1. Pull the required images on every node of the Kubernetes cluster

```shell
docker pull prom/node-exporter
docker pull prom/prometheus:v2.0.0
docker pull grafana/grafana:4.2.0
```

2. Deploy node-exporter as a DaemonSet

Both the pod and the Service use port 9100, and the Service is exposed on port 31672 of every host via NodePort.

```yaml
# node-exporter.yaml
# apps/v1 is available from Kubernetes 1.9; extensions/v1beta1 DaemonSets were removed in 1.16
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: node-exporter
  namespace: kube-system
  labels:
    k8s-app: node-exporter
spec:
  selector:            # required by apps/v1
    matchLabels:
      k8s-app: node-exporter
  template:
    metadata:
      labels:
        k8s-app: node-exporter
    spec:
      containers:
      - image: prom/node-exporter
        name: node-exporter
        ports:
        - containerPort: 9100
          protocol: TCP
          name: http
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: node-exporter
  name: node-exporter
  namespace: kube-system
  annotations:
    # lets the 'kubernetes-service-endpoints' job in the Prometheus ConfigMap scrape this Service
    prometheus.io/scrape: 'true'
spec:
  ports:
  - name: http
    port: 9100
    nodePort: 31672
    protocol: TCP
  type: NodePort
  selector:
    k8s-app: node-exporter
```

3. Create the pods and the Service

```shell
kubectl create -f node-exporter.yaml
```

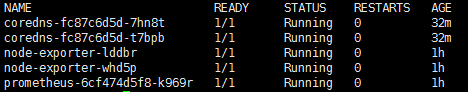

```shell
[root@master prometheus]# kubectl get pod -n kube-system
NAME                  READY     STATUS    RESTARTS   AGE
node-exporter-dkk7n   1/1       Running   0          1m
node-exporter-p29d9   1/1       Running   0          1m
```

4. Test access

The NodePort for node-exporter is 31672; browsing to http://192.168.3.25:31672/metrics shows its metrics.
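What Prometheus does on each scrape of that URL is essentially "fetch and parse". Below is a simplified sketch of both steps for the text format; it ignores histogram/summary specifics and unusual edge cases, and the `prefix` filter is a hypothetical convenience, not a Prometheus feature.

```python
import re
from urllib.request import urlopen

# metric_name{labels} value [timestamp]
SAMPLE_RE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(.*)\})?\s+(\S+)(?:\s+(-?\d+))?$')
LABEL_RE = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def _unescape(value):
    """Undo the text-format escapes \\n, \\" and \\\\ in a label value."""
    return re.sub(r'\\(.)', lambda m: "\n" if m.group(1) == "n" else m.group(1), value)


def parse_text(text):
    """Parse text-format exposition into (name, labels, value) tuples; comments are skipped."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        match = SAMPLE_RE.match(line)
        if match is None:
            continue  # a line this simplified parser does not understand
        name, raw_labels, value, _timestamp = match.groups()
        labels = {k: _unescape(v) for k, v in LABEL_RE.findall(raw_labels or "")}
        samples.append((name, labels, float(value)))  # float() also accepts NaN and +Inf
    return samples


def scrape(url, prefix=""):
    """Fetch a /metrics endpoint once and keep only metrics whose names start with prefix."""
    with urlopen(url, timeout=5) as resp:
        text = resp.read().decode("utf-8")
    return [s for s in parse_text(text) if s[0].startswith(prefix)]
```

For example, `scrape("http://192.168.3.25:31672/metrics", prefix="node_load")` should return the node's load averages.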

III. Deploying Prometheus

1. RBAC file

```yaml
# rbac-setup.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: kube-system
```

2. Manage the Prometheus configuration file as a ConfigMap

```yaml
# configmap.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: kube-system
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    scrape_configs:

    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https

    # kubelet metrics, fetched through the API server proxy
    - job_name: 'kubernetes-nodes'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics

    # container metrics from the kubelet's built-in cAdvisor
    - job_name: 'kubernetes-cadvisor'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

    # any Service annotated with prometheus.io/scrape: 'true' (e.g. node-exporter)
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name

    # HTTP probes of Services annotated with prometheus.io/probe: 'true'
    - job_name: 'kubernetes-services'
      kubernetes_sd_configs:
      - role: service
      metrics_path: /probe
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__address__]
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115  # placeholder: point at a deployed blackbox exporter
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        target_label: kubernetes_name

    # HTTP probes of Ingresses annotated with prometheus.io/probe: 'true'
    - job_name: 'kubernetes-ingresses'
      kubernetes_sd_configs:
      - role: ingress
      metrics_path: /probe          # probe through the blackbox exporter, as in 'kubernetes-services'
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
        regex: (.+);(.+);(.+)
        replacement: ${1}://${2}${3}
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115  # placeholder: point at a deployed blackbox exporter
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_ingress_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_ingress_name]
        target_label: kubernetes_name

    # any pod annotated with prometheus.io/scrape: 'true'
    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name
```
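The `replace` rules above are easiest to understand by running them by hand. The sketch below is a simplified model of one `action: replace` step, assuming Prometheus's documented behaviour: source label values are joined with `;`, the regex must match the whole string, and `$1`/`${1}` in the replacement refer to capture groups. Python's `re` stands in for Go's RE2 here, which is fine for these patterns; the addresses and node name in the test are hypothetical.

```python
import re


def expand(template, match):
    """Substitute $1 / ${1} references with regex groups (unmatched groups become "")."""
    return re.sub(r"\$\{?(\d+)\}?", lambda m: match.group(int(m.group(1))) or "", template)


def relabel_replace(labels, source_labels, target_label, regex="(.*)", replacement="$1", separator=";"):
    """Simplified model of one relabel_configs entry with action: replace."""
    value = separator.join(labels.get(name, "") for name in source_labels)
    match = re.fullmatch(regex, value)  # Prometheus anchors the regex at both ends
    if match is None:
        return dict(labels)  # no match: labels are left unchanged
    result = dict(labels)
    new_value = expand(replacement, match)
    if new_value:
        result[target_label] = new_value
    else:
        result.pop(target_label, None)  # an empty result removes the target label
    return result


# The address rewrite used by 'kubernetes-service-endpoints' and 'kubernetes-pods':
labels = {"__address__": "10.244.1.7:8080",
          "__meta_kubernetes_service_annotation_prometheus_io_port": "9100"}
out = relabel_replace(labels,
                      ["__address__", "__meta_kubernetes_service_annotation_prometheus_io_port"],
                      "__address__", regex=r"([^:]+)(?::\d+)?;(\d+)", replacement="$1:$2")
print(out["__address__"])  # 10.244.1.7:9100
```

Note that the backslashes in `\d` matter: without them `d+` matches the literal letter "d", the regex never matches, and the port annotation is silently ignored.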

3. Prometheus Deployment file

```yaml
# prometheus.deploy.yml
---
# apps/v1 replaces apps/v1beta2 (available from Kubernetes 1.9; apps/v1beta2 was removed in 1.16)
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    name: prometheus-deployment
  name: prometheus
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      containers:
      - image: prom/prometheus:v2.0.0
        name: prometheus
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus"
        - "--storage.tsdb.retention=24h"
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: "/prometheus"
          name: data
        - mountPath: "/etc/prometheus"
          name: config-volume
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
          limits:
            cpu: 500m
            memory: 2500Mi
      serviceAccountName: prometheus
      volumes:
      - name: data
        emptyDir: {}      # data is lost when the pod is recreated; use a PersistentVolume for durable storage
      - name: config-volume
        configMap:
          name: prometheus-config
```

4. Prometheus Service file

Port 9090 of the container maps to port 9090 of the Service, which is exposed on port 30003 of every host.

```yaml
# prometheus.svc.yml
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: prometheus
  name: prometheus
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 30003
  selector:
    app: prometheus
```

5. Create the objects

```shell
kubectl create -f rbac-setup.yaml
kubectl create -f configmap.yaml
kubectl create -f prometheus.deploy.yml
kubectl create -f prometheus.svc.yml

# check the pod and the service
kubectl get pod -n kube-system
kubectl get svc -n kube-system
```

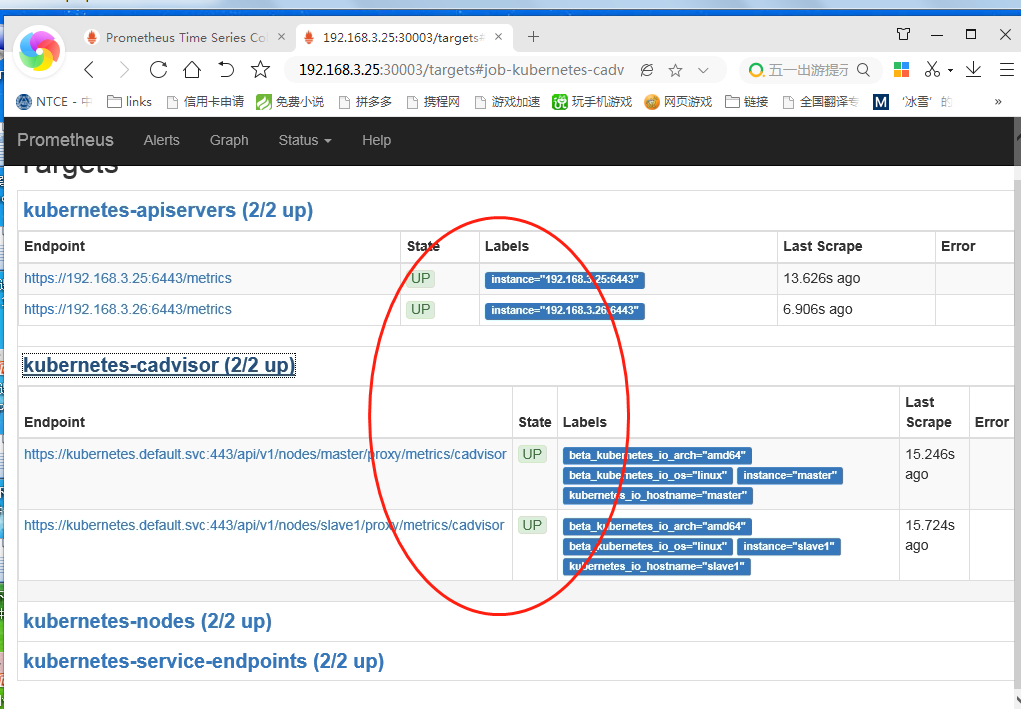

6. Test access. The NodePort for Prometheus is 30003; browse to http://[node ip]:30003/targets

and you can see that Prometheus has successfully connected to the Kubernetes API server.

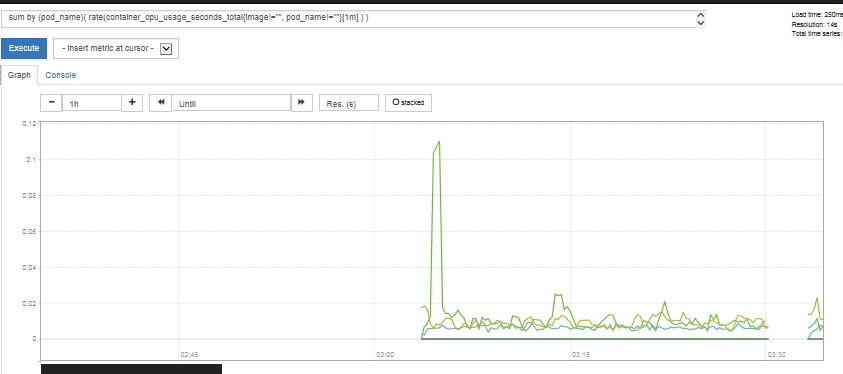
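The same target list can be checked from a script through the HTTP API endpoint `/api/v1/targets`. A minimal sketch, assuming that endpoint exists in your Prometheus version (check the HTTP API docs for v2.0.0 before relying on it); the response fields used here are `activeTargets`, `labels`, `health`, `scrapeUrl` and `lastError`.

```python
import json
from collections import Counter
from urllib.request import urlopen


def fetch_targets(base_url):
    """GET <base_url>/api/v1/targets and return the decoded JSON body."""
    with urlopen(base_url.rstrip("/") + "/api/v1/targets", timeout=10) as resp:
        return json.load(resp)


def summarize_targets(payload):
    """Count active targets per (job, health), e.g. {("kubernetes-nodes", "up"): 2}."""
    counts = Counter()
    for target in payload["data"]["activeTargets"]:
        counts[(target["labels"].get("job", ""), target["health"])] += 1
    return counts


def failing_targets(payload):
    """List (scrapeUrl, lastError) for every active target that is not up."""
    return [(t["scrapeUrl"], t.get("lastError", ""))
            for t in payload["data"]["activeTargets"] if t["health"] != "up"]
```

Usage: `summarize_targets(fetch_targets("http://192.168.3.25:30003"))` gives a per-job health count, and `failing_targets` shows why a target is down, the same information as the red rows on the /targets page.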

The Prometheus web UI supports basic queries, for example the CPU usage of each pod in the cluster, using the following expression:

```promql
sum by (pod_name) (rate(container_cpu_usage_seconds_total{image!="", pod_name!=""}[1m]))
```

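If this query returns data, Prometheus is scraping the kubelets correctly: `container_cpu_usage_seconds_total` comes from cAdvisor through the kubernetes-cadvisor job, not from node-exporter. To check node-exporter itself, query one of its metrics such as `node_load1`; it is only scraped if the node-exporter Service carries the `prometheus.io/scrape: 'true'` annotation. On Kubernetes 1.16 and later, cAdvisor labels pods and containers with `pod` and `container` instead of `pod_name` and `container_name`, so adjust the query accordingly. Next we deploy Grafana for a friendlier web UI.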
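To see what that expression computes, here is a simplified Python model of it: `rate()` turns a cumulative CPU-seconds counter into CPU cores used (handling counter resets), the `!=""` matchers drop series with an empty `image` or `pod_name`, and `sum by (pod_name)` adds up the remaining series per pod. Prometheus's real `rate()` also extrapolates to the edges of the `[1m]` window, so its numbers differ slightly; the sample data in the test is made up.

```python
from collections import defaultdict


def simple_rate(samples):
    """Per-second increase of a counter over [(t_seconds, value), ...] sorted by time.

    Simplified: Prometheus's rate() additionally extrapolates to the window edges.
    """
    if len(samples) < 2:
        return None
    increase = 0.0
    for (_, prev), (_, cur) in zip(samples, samples[1:]):
        # A drop means the counter restarted from zero (e.g. a container restart).
        increase += cur - prev if cur >= prev else cur
    return increase / (samples[-1][0] - samples[0][0])


def sum_rate_by(series, by, nonempty=()):
    """sum by (<by>) (rate(...)) over series whose labels listed in `nonempty` are all non-empty."""
    totals = defaultdict(float)
    for labels, samples in series:
        if any(labels.get(name, "") == "" for name in nonempty):
            continue  # mirrors the {image!="", pod_name!=""} matchers
        rate = simple_rate(samples)
        if rate is not None:
            totals[labels.get(by, "")] += rate
    return dict(totals)
```

Calling `sum_rate_by(series, "pod_name", nonempty=("image", "pod_name"))` on cAdvisor-style series yields CPU cores per pod; a result of 0.15 means the pod used about 15% of one core on average over the window.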

IV. Deploying Grafana

1. Grafana Deployment file

```yaml
# grafana-deploy.yaml
# apps/v1 is available from Kubernetes 1.9; extensions/v1beta1 Deployments were removed in 1.16
apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana-core
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  replicas: 1
  selector:            # required by apps/v1
    matchLabels:
      app: grafana
      component: core
  template:
    metadata:
      labels:
        app: grafana
        component: core
    spec:
      containers:
      - image: grafana/grafana:4.2.0
        name: grafana-core
        imagePullPolicy: IfNotPresent
        resources:
          # keep request = limit to keep this container in guaranteed class
          limits:
            cpu: 100m
            memory: 100Mi
          requests:
            cpu: 100m
            memory: 100Mi
        env:
          # The following env variables set up basic auth with the default admin user and admin password.
          - name: GF_AUTH_BASIC_ENABLED
            value: "true"
          - name: GF_AUTH_ANONYMOUS_ENABLED
            value: "false"
          # - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          #   value: Admin
          # does not really work, because of template variables in exported dashboards:
          # - name: GF_DASHBOARDS_JSON_ENABLED
          #   value: "true"
        readinessProbe:
          httpGet:
            path: /login
            port: 3000
          # initialDelaySeconds: 30
          # timeoutSeconds: 1
        volumeMounts:
        - name: grafana-persistent-storage
          mountPath: /var
      volumes:
      - name: grafana-persistent-storage
        emptyDir: {}
```

2. Grafana Service file

```yaml
# grafana-svc.yaml
apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  type: NodePort
  ports:
  - port: 3000
  selector:
    app: grafana
    component: core
```

3. Grafana Ingress file

```yaml
# grafana-ing.yaml
# extensions/v1beta1 matches Kubernetes 1.10; on 1.19+ use networking.k8s.io/v1
# (Ingress was removed from extensions/v1beta1 in 1.22)
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: grafana
  namespace: kube-system
spec:
  rules:
  - host: k8s.grafana
    http:
      paths:
      - path: /
        backend:
          serviceName: grafana
          servicePort: 3000
```

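The Traefik web UI shows that the k8s.grafana host has been published successfully (this assumes a Traefik ingress controller is already running in the cluster).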

4. Create the pod, Service and Ingress from the files above

```shell
kubectl create -f grafana-deploy.yaml
kubectl create -f grafana-svc.yaml
kubectl create -f grafana-ing.yaml

# check the services
kubectl get svc -n kube-system
```

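5. Configure Prometheus as the data source. Open Grafana through the NodePort assigned to the grafana Service (21667 in the author's environment; look yours up with `kubectl get svc grafana -n kube-system`).

Log in with the default user name and password, which are both admin.

Under Add data source, set:

Name: Prometheus

Type: Prometheus

URL: http://prometheus.kube-system.svc.cluster.local:9090

Access: proxy (the in-cluster DNS name resolves only from the Grafana server, not from your browser)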
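The same data source can also be created through Grafana's HTTP API (`POST /api/datasources`), which is handy for scripting. A minimal sketch, assuming basic auth is enabled (as `GF_AUTH_BASIC_ENABLED=true` does above) and the default admin/admin credentials are unchanged; the Grafana address in the usage note is hypothetical.

```python
import base64
import json
from urllib.request import Request, urlopen


def datasource_payload(name="Prometheus", url="http://prometheus.kube-system.svc.cluster.local:9090"):
    """JSON body for a Prometheus data source that Grafana queries server-side (proxy mode)."""
    return {"name": name, "type": "prometheus", "url": url, "access": "proxy", "isDefault": True}


def build_datasource_request(grafana_url, user, password, payload):
    """POST /api/datasources with HTTP basic auth."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return Request(grafana_url.rstrip("/") + "/api/datasources",
                   data=json.dumps(payload).encode("utf-8"), method="POST",
                   headers={"Content-Type": "application/json", "Authorization": "Basic " + token})


def create_datasource(grafana_url, user="admin", password="admin"):
    """Send the request and return Grafana's decoded JSON reply."""
    req = build_datasource_request(grafana_url, user, password, datasource_payload())
    with urlopen(req, timeout=10) as resp:
        return json.load(resp)
```

For example, `create_datasource("http://192.168.3.25:21667")`; running it a second time should fail because a data source with that name already exists.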

6. After importing a dashboard you can see the corresponding monitoring data.

Dashboard templates are available at:

https://grafana.com/grafana/dashboards