119. Classification of monitoring modes~1.mp4

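Logging: log monitoring. The defining characteristic of logging is that it describes discrete (non-continuous) events. For example: an application writes Debug or Error messages to a rolling file, and a log collection system stores them in Elasticsearch; audit-trail records are sent through Kafka and stored in a database (BigTable); or request-specific metadata is stripped from a service request and sent to an error-tracking service such as NewRelic.

Tracing: distributed tracing. Tools such as SkyWalking, CAT, and Zipkin specialize in it.

Metrics: key numeric indicators, for example the current number of HTTP requests. The data is measurable and can be aggregated and accumulated; aggregating the data points lets you view the important indicators. Metric types include counters, gauges, histograms, and so on, and labels can be attached to them.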


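Prometheus focuses on metrics monitoring.

The three kinds of tools differ in cost and in what they are good at:

CapEx is the development cost for engineers: metrics is medium, ELK is the lowest, and SkyWalking takes some background knowledge to master.

OpEx is the operations cost: ELK has to be scaled out continuously in operation, so its running cost is the highest.

Reaction is how quickly a tool can raise an alert when something goes wrong: metrics tools rate highest, while SkyWalking's alerting capability is average.

As for which tool is most effective for analyzing a problem once it has occurred: inspecting the call chain is the most effective.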

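Logging, Metrics, and Tracing

Logging, metrics, and tracing each have their own focus.

- Logging - records discrete events, for example an application's debug or error messages. It is the basis on which we diagnose problems.

- Metrics - records aggregatable data. For example, the current depth of a queue can be defined as a gauge that is updated whenever an element is enqueued or dequeued; the number of HTTP requests can be defined as a counter that is incremented when a new request arrives.

- Tracing - records request-scoped information, for example the execution steps and duration of a remote method call. It is a powerful tool for troubleshooting system performance problems.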
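To make the queue-depth gauge and the HTTP request counter from the Metrics item concrete, here is a minimal sketch in plain Java. The names `MetricsSketch`, `httpRequestsTotal`, and `queueDepth` are made up for illustration; this is not the API of any monitoring library.

```java
import java.util.ArrayDeque;
import java.util.Queue;
import java.util.concurrent.atomic.AtomicLong;

// Hypothetical names; plain Java, not the Prometheus client API.
class MetricsSketch {
    // Counter: only ever increases, once per incoming HTTP request.
    final AtomicLong httpRequestsTotal = new AtomicLong();
    // Gauge: the current queue depth, which can go up and down.
    final AtomicLong queueDepth = new AtomicLong();
    private final Queue<String> queue = new ArrayDeque<>();

    // A new request arrives: bump the counter, enqueue, and raise the gauge.
    void onHttpRequest(String path) {
        httpRequestsTotal.incrementAndGet();
        queue.add(path);
        queueDepth.incrementAndGet();
    }

    // A worker takes one request off the queue: lower the gauge.
    String processOne() {
        String path = queue.poll();
        if (path != null) {
            queueDepth.decrementAndGet();
        }
        return path;
    }

    public static void main(String[] args) {
        MetricsSketch m = new MetricsSketch();
        m.onHttpRequest("/login");
        m.onHttpRequest("/users");
        m.processOne();
        System.out.println("http_requests_total=" + m.httpRequestsTotal.get());
        System.out.println("queue_depth=" + m.queueDepth.get());
    }
}
```

The counter never decreases, so a monitoring system can derive a request rate from it; the gauge is only meaningful as a point-in-time value.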

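The three also overlap, as shown in the figure.

With this information we can classify existing systems. For example, Zipkin focuses on tracing; Prometheus started out focused on metrics and may integrate more tracing features over time, but is unlikely to go deep into logging; systems such as ELK and Alibaba Cloud Log Service started out focused on logging but keep integrating features from the other areas, moving toward the center of the figure.

A blog post on the relationship among the three: http://peter.bourgon.org/blog/2017/02/21/metrics-tracing-and-logging.html

For more detail on the relationship among the three, see Metrics, tracing, and logging. The rest of this section focuses on tracing.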

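The origins of tracing

Tracing is a technique that has been around since the 1990s. What really made the field popular, however, was Google's paper "Dapper, a Large-Scale Distributed Systems Tracing Infrastructure"; another paper, "Uncertainty in Aggregate Estimates from Sampled Distributed Traces", contains a more detailed analysis of sampling. After the papers were published, a number of excellent tracing systems emerged. The more popular ones include:

- Dapper (Google): the foundation of the other tracers

- StackDriver Trace (Google)

- Zipkin (Twitter)

- Appdash (golang)

- EagleEye (鹰眼; Taobao)

- Diting (谛听; Pangu, the trace system used by Alibaba Cloud products)

- Yuntu (云图; Ant's trace system)

- sTrace (Shenma)

- X-Ray (AWS)

Distributed tracing systems are evolving quickly and come in many varieties, but they generally share three core steps: code instrumentation, data storage, and query/display.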

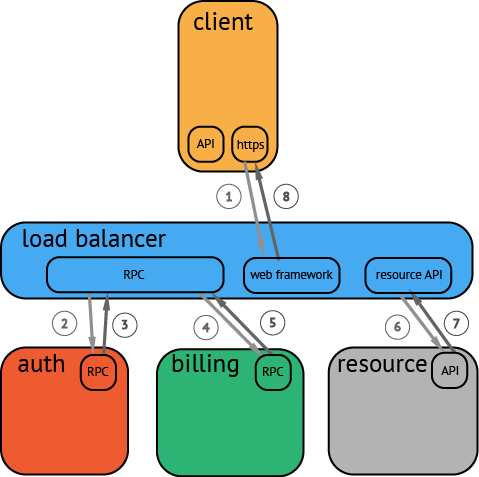

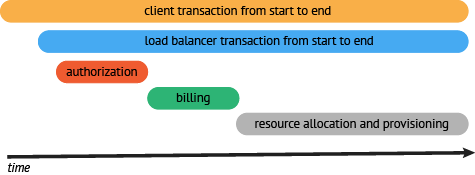

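The figure shows an example of a distributed call: the client sends a request, which first reaches the load balancer, then passes through the authentication service and the billing service, then requests the resource, and finally the result is returned.

Once the data has been collected and stored, a distributed tracing system typically presents the Trace as a sequence diagram with a time axis.

However, collecting the data requires intrusive changes to user code, and the APIs of different systems are not compatible, so switching to another tracing system usually means substantial changes.

OpenTracing

The OpenTracing specification was created to solve the API incompatibility between distributed tracing systems.

OpenTracing is a lightweight standardization layer that sits between applications/libraries and tracing or log-analysis programs.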

    +-------------+  +---------+  +----------+  +------------+
    | Application |  | Library |  |   OSS    |  |  RPC/IPC   |
    |    Code     |  |  Code   |  | Services |  | Frameworks |
    +-------------+  +---------+  +----------+  +------------+
           |              |             |             |
           |              |             |             |
           v              v             v             v
      +------------------------------------------------------+
      |                     OpenTracing                      |
      +------------------------------------------------------+
         |                |                |               |
         |                |                |               |
         v                v                v               v
    +-----------+  +-------------+  +-------------+  +-----------+
    |  Tracing  |  |   Logging   |  |   Metrics   |  |  Tracing  |
    | System A  |  | Framework B |  | Framework C |  | System D  |
    +-----------+  +-------------+  +-------------+  +-----------+

Advantages of OpenTracing

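- OpenTracing has joined the CNCF and is providing unified concepts and data standards for distributed tracing worldwide.

- By providing a platform-neutral, vendor-neutral API, OpenTracing makes it easy for developers to add (or replace) a tracing system implementation.

OpenTracing data model

A Trace (call chain) in OpenTracing is defined implicitly by the Spans that belong to it.

In particular, a Trace can be thought of as a directed acyclic graph (DAG) of Spans, where the relationships between Spans are called References.

For example, the following Trace is made up of 8 Spans: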

Causal relationships between Spans in a single Trace

            [Span A]  ←←←(the root span)
                |
         +------+------+
         |             |
     [Span B]      [Span C] ←←←(Span C is a child of Span A, ChildOf)
         |             |
     [Span D]      +---+-------+
                   |           |
               [Span E]    [Span F] >>> [Span G] >>> [Span H]
                                           ↑
                                           ↑
                                           ↑
                        (Span G is called after Span F, FollowsFrom)

Sometimes a sequence diagram with a time axis, like the one below, shows a Trace (call chain) better:

Temporal relationships between Spans in a single Trace

    ––|–––––––|–––––––|–––––––|–––––––|–––––––|–––––––|–––––––|–> time

     [Span A···················································]
       [Span B··············································]
          [Span D··········································]
        [Span C········································]
             [Span E·······]        [Span F··] [Span G··] [Span H··]

Each Span contains the following state:

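- An operation name

- A start timestamp

- A finish timestamp

- Span Tags: a set of key-value pairs. Keys must be strings; values may be strings, booleans, or numeric types.

- Span Logs: a set of log entries on the span. Each log operation contains a key-value pair and a timestamp. Keys must be strings; values may be of any type. Note, however, that a Tracer supporting OpenTracing is not required to support every value type.

- SpanContext: the Span's context object (described in detail below)

- References (relationships between Spans): zero or more related Spans (Spans are linked through their SpanContext)

Each SpanContext contains the following state:

- Any OpenTracing-implementation-dependent state (for example, trace and span ids) needed to refer to a distinct Span across a process boundary

- Baggage Items: data that travels with the Trace. They are a set of key-value pairs that live in the trace and also have to be propagated across process boundaries

For more on the OpenTracing data model, see the OpenTracing semantic specification.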
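The Span and SpanContext state listed above can be sketched as plain Java classes. This is only an illustration of the data model: every class, field, and method name below (`SpanModelSketch`, `newRelated`, and so on) is hypothetical and is not the OpenTracing API.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical classes that mirror the listed fields; not the OpenTracing API.
class SpanModelSketch {
    enum ReferenceType { CHILD_OF, FOLLOWS_FROM }

    // State that has to cross process boundaries: ids plus baggage items.
    static final class SpanContext {
        final String traceId;
        final String spanId;
        final Map<String, String> baggage = new LinkedHashMap<>();

        SpanContext(String traceId, String spanId) {
            this.traceId = traceId;
            this.spanId = spanId;
        }
    }

    // A typed edge from one Span to the context of another Span.
    static final class Reference {
        final ReferenceType type;
        final SpanContext target;

        Reference(ReferenceType type, SpanContext target) {
            this.type = type;
            this.target = target;
        }
    }

    // One log operation: a key-value pair plus a timestamp.
    static final class LogEntry {
        final long timestampMicros;
        final String key;
        final Object value;

        LogEntry(long timestampMicros, String key, Object value) {
            this.timestampMicros = timestampMicros;
            this.key = key;
            this.value = value;
        }
    }

    static final class Span {
        final String operationName;
        final long startMicros;
        long finishMicros = -1; // -1 until finish() is called
        final Map<String, Object> tags = new LinkedHashMap<>();
        final List<LogEntry> logs = new ArrayList<>();
        final SpanContext context;
        final List<Reference> references = new ArrayList<>();

        Span(String operationName, long startMicros, SpanContext context) {
            this.operationName = operationName;
            this.startMicros = startMicros;
            this.context = context;
        }

        // Creates a span in the same trace that references this span.
        Span newRelated(ReferenceType type, String name, String spanId, long startMicros) {
            SpanContext ctx = new SpanContext(context.traceId, spanId);
            ctx.baggage.putAll(context.baggage); // baggage travels with the trace
            Span related = new Span(name, startMicros, ctx);
            related.references.add(new Reference(type, context));
            return related;
        }

        void finish(long finishMicros) {
            this.finishMicros = finishMicros;
        }
    }

    public static void main(String[] args) {
        Span a = new Span("Span A", 0, new SpanContext("trace-1", "a"));
        Span c = a.newRelated(ReferenceType.CHILD_OF, "Span C", "c", 10);
        Span f = c.newRelated(ReferenceType.CHILD_OF, "Span F", "f", 20);
        Span g = f.newRelated(ReferenceType.FOLLOWS_FROM, "Span G", "g", 30);
        g.tags.put("http.status_code", 200);
        g.logs.add(new LogEntry(35, "event", "cache miss"));
        g.finish(40);
        Reference ref = g.references.get(0);
        System.out.println(g.operationName + " " + ref.type + " " + ref.target.spanId);
    }
}
```

Because each Span only stores References to other SpanContexts, the Trace itself is never materialized as an object; it is implied by the set of Spans that share a trace id.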

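OpenTracing implementations

The referenced document lists all OpenTracing implementations. Among them, Jaeger and Zipkin are the more popular ones.

Metrics are mainly used for monitoring and alerting; once a problem has been detected, distributed tracing or ELK is used to investigate and resolve it.

Metrics can monitor the system layer, the application layer, and the business layer.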

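121. Introduction to Prometheus~1.mp4

Time series database

Data v0 is produced at time t0, v1 at t1, and v2 at t2; putting these data points together in time order gives a time series.

InfluxDB and Prometheus are both time series databases.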
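As a rough illustration of the (t0, v0), (t1, v1), (t2, v2) idea, the sketch below models a time series as an ordered list of timestamped samples. `TimeSeriesSketch` and its methods are hypothetical names; a real time series database adds storage, compression, and indexing on top of this.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical in-memory model of a single time series.
class TimeSeriesSketch {
    static final class Sample {
        final long timestampMillis;
        final double value;

        Sample(long timestampMillis, double value) {
            this.timestampMillis = timestampMillis;
            this.value = value;
        }
    }

    final String name;
    final List<Sample> samples = new ArrayList<>();

    TimeSeriesSketch(String name) {
        this.name = name;
    }

    // Appends the data point (t, v); timestamps must be strictly increasing.
    void append(long timestampMillis, double value) {
        if (!samples.isEmpty()
                && timestampMillis <= samples.get(samples.size() - 1).timestampMillis) {
            throw new IllegalArgumentException("timestamps must increase");
        }
        samples.add(new Sample(timestampMillis, value));
    }

    // Average per-second change between the first and the last sample.
    double ratePerSecond() {
        if (samples.size() < 2) {
            throw new IllegalStateException("need at least two samples");
        }
        Sample first = samples.get(0);
        Sample last = samples.get(samples.size() - 1);
        double seconds = (last.timestampMillis - first.timestampMillis) / 1000.0;
        return (last.value - first.value) / seconds;
    }

    public static void main(String[] args) {
        TimeSeriesSketch series = new TimeSeriesSketch("http_requests_total");
        series.append(0, 0);        // t0, v0
        series.append(15_000, 30);  // t1, v1
        series.append(30_000, 90);  // t2, v2
        System.out.println(series.name + " rate/s = " + series.ratePerSecond());
    }
}
```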

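122. Prometheus architecture design~1.mp4

123. Prometheus basic concepts~1.mp4

Counter: for example, the number of HTTP requests or the number of orders placed.

Gauge: for example, the number of users currently online, or disk usage.

Histogram: the distribution of response times across intervals.

Summary: for example, the 90th-percentile response time.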
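To show what "the 90th-percentile response time" means, here is a small sketch that computes a percentile with the nearest-rank method over values held in memory. This is a simplification chosen for clarity: a real Summary estimates quantiles over a stream of observations, and `QuantileSketch` is a hypothetical name.

```java
import java.util.Arrays;

// Hypothetical helper: exact nearest-rank percentile over an in-memory array.
class QuantileSketch {
    // Returns the smallest observation such that at least `percent` percent
    // of all observations are less than or equal to it.
    static double percentile(double[] observations, int percent) {
        if (observations.length == 0) {
            throw new IllegalArgumentException("no observations");
        }
        if (percent < 1 || percent > 100) {
            throw new IllegalArgumentException("percent must be in 1..100");
        }
        double[] sorted = observations.clone();
        Arrays.sort(sorted);
        // Integer ceiling of percent * n / 100 avoids floating-point rounding.
        int rank = (percent * sorted.length + 99) / 100;
        return sorted[rank - 1];
    }

    public static void main(String[] args) {
        double[] responseTimesMs = {100, 10, 20, 30, 40, 50, 60, 70, 80, 90};
        System.out.println("p90 = " + percentile(responseTimesMs, 90) + " ms");
    }
}
```

With these ten sample values, 90% of the requests completed in 90 ms or less, even though the slowest one took 100 ms.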

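Target: anything that exposes a metrics endpoint, such as an operating system, a machine, an application, or a service. Prometheus scrapes it through /metrics at a fixed interval, for example every 15 seconds.

Direct instrumentation: instrument the application code itself and let Prometheus scrape the application directly.

Indirect collection: use an exporter. Exporters exist for Redis, Apache, the operating system, and so on.

The web UI and Grafana both use PromQL to query the data.

124. [Lab] Prometheus getting-started query lab (part 1)~1.mp4

Step 1

Import http-simulator into Eclipse.

Integrating Spring Boot with Prometheus

1 Add the dependency to the Maven pom.xml

    <dependency>
        <groupId>io.prometheus</groupId>
        <artifactId>simpleclient_spring_boot</artifactId>
    </dependency>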

2 Add the annotations to the startup class

    import io.prometheus.client.spring.boot.EnablePrometheusEndpoint;
    import io.prometheus.client.spring.boot.EnableSpringBootMetricsCollector;
    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;

    @SpringBootApplication
    @EnablePrometheusEndpoint          // exposes the /prometheus endpoint
    @EnableSpringBootMetricsCollector  // also exports Spring Boot's own metrics
    public class Application {
        public static void main(String[] args) {
            SpringApplication.run(Application.class, args);
        }
    }

3 In the Controller class, define the metrics to monitor, for example a Counter

    import io.prometheus.client.Counter;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RestController;

    import java.util.Random;

    @RestController
    public class SampleController {

        private static Random random = new Random();

        // Counter with one label, "status", registered in the default registry.
        private static final Counter requestTotal = Counter.build()
                .name("my_sample_counter")
                .labelNames("status")
                .help("A simple Counter to illustrate custom Counters in Spring Boot and Prometheus")
                .register();

        @RequestMapping("/endpoint")
        public void endpoint() {
            // Randomly count the call as a success or an error.
            if (random.nextInt(2) > 0) {
                requestTotal.labels("success").inc();
            } else {
                requestTotal.labels("error").inc();
            }
        }
    }

4 Set the Spring Boot application's service name and port in application.properties

    spring.application.name=mydemo
    server.port=8888

5 Configure prometheus.yml

    global:
      scrape_interval: 15s # By default, scrape targets every 15 seconds.
      evaluation_interval: 15s # Evaluate rules every 15 seconds.
      # scrape_timeout is set to the global default (10s).

      # Attach these labels to any time series or alerts when communicating with
      # external systems (federation, remote storage, Alertmanager).
      external_labels:
        monitor: 'codelab-monitor'

    # Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
    rule_files:
      # - "first.rules"
      # - "second.rules"

    # A scrape configuration containing exactly one endpoint to scrape:
    # Here it's Prometheus itself.
    scrape_configs:
      # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
      - job_name: 'prometheus'

        # Override the global default and scrape targets from this job every 5 seconds.
        scrape_interval: 5s

        # metrics_path defaults to '/metrics'
        # scheme defaults to 'http'.

        static_configs:
          - targets: ['localhost:9090']

      - job_name: 'mydemo'

        # Override the global default and scrape targets from this job every 5 seconds.
        scrape_interval: 5s

        metrics_path: '/prometheus'
        # scheme defaults to 'http'.

        static_configs:
          - targets: ['10.94.20.52:8888']

The most important setting is targets: ['10.94.20.52:8888'], which is the IP address and port of the Spring Boot application.

Note: set spring.metrics.servo.enabled=false in application.properties to remove the duplicate metrics; otherwise the Targets tab of the Prometheus console keeps showing this endpoint as down.

pom.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
        xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>io.spring2go.promdemo</groupId>
        <artifactId>http-simulator</artifactId>
        <version>0.0.1-SNAPSHOT</version>
        <packaging>jar</packaging>

        <name>http-simulator</name>
        <description>Demo project for Spring Boot</description>

        <parent>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-parent</artifactId>
            <version>1.5.17.RELEASE</version>
            <relativePath/> <!-- lookup parent from repository -->
        </parent>

        <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
            <project.reporting.outputEncoding>UTF-8</project.reporting.outputEncoding>
            <java.version>1.8</java.version>
        </properties>

        <dependencies>
            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-web</artifactId>
            </dependency>

            <!-- The prometheus client -->
            <dependency>
                <groupId>io.prometheus</groupId>
                <artifactId>simpleclient_spring_boot</artifactId>
                <version>0.5.0</version>
            </dependency>

            <dependency>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-starter-test</artifactId>
                <scope>test</scope>
            </dependency>
        </dependencies>

        <build>
            <plugins>
                <plugin>
                    <groupId>org.springframework.boot</groupId>
                    <artifactId>spring-boot-maven-plugin</artifactId>
                </plugin>
            </plugins>
        </build>
    </project>

Startup class:

    package io.spring2go.promdemo.httpsimulator;

    import org.springframework.beans.factory.annotation.Autowired;
    import org.springframework.boot.CommandLineRunner;
    import org.springframework.boot.SpringApplication;
    import org.springframework.boot.autoconfigure.SpringBootApplication;
    import org.springframework.context.ApplicationListener;
    import org.springframework.context.annotation.Bean;
    import org.springframework.context.event.ContextClosedEvent;
    import org.springframework.core.task.SimpleAsyncTaskExecutor;
    import org.springframework.core.task.TaskExecutor;
    import org.springframework.stereotype.Controller;
    import org.springframework.web.bind.annotation.PathVariable;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RequestMethod;
    import org.springframework.web.bind.annotation.ResponseBody;

    import io.prometheus.client.spring.boot.EnablePrometheusEndpoint;

    @Controller
    @SpringBootApplication
    @EnablePrometheusEndpoint
    public class HttpSimulatorApplication implements ApplicationListener<ContextClosedEvent> {

        @Autowired
        private SimulatorOpts opts;

        private ActivitySimulator simulator;

        public static void main(String[] args) {
            SpringApplication.run(HttpSimulatorApplication.class, args);
        }

        @RequestMapping(value = "/opts")
        public @ResponseBody String getOps() {
            return opts.toString();
        }

        @RequestMapping(value = "/spike/{mode}", method = RequestMethod.POST)
        public @ResponseBody String setSpikeMode(@PathVariable("mode") String mode) {
            boolean result = simulator.setSpikeMode(mode);
            if (result) {
                return "ok";
            } else {
                return "wrong spike mode " + mode;
            }
        }

        @RequestMapping(value = "error_rate/{error_rate}", method = RequestMethod.POST)
        public @ResponseBody String setErrorRate(@PathVariable("error_rate") int errorRate) {
            simulator.setErrorRate(errorRate);
            return "ok";
        }

        @Bean
        public TaskExecutor taskExecutor() {
            return new SimpleAsyncTaskExecutor();
        }

        // Starts the simulator on a background thread once the application is up.
        @Bean
        public CommandLineRunner schedulingRunner(TaskExecutor executor) {
            return new CommandLineRunner() {
                public void run(String... args) throws Exception {
                    simulator = new ActivitySimulator(opts);
                    executor.execute(simulator);
                    System.out.println("Simulator thread started...");
                }
            };
        }

        // Stops the simulator when the application context is closed.
        @Override
        public void onApplicationEvent(ContextClosedEvent event) {
            simulator.shutdown();
            System.out.println("Simulator shutdown...");
        }
    }

application.properties

    management.security.enabled=false

    opts.endpoints=/login, /login, /login, /login, /login, /login, /login, /users, /users, /users, /users/{id}, /register, /register, /logout, /logout, /logout, /logout
    opts.request_rate=1000
    opts.request_rate_uncertainty=70
    opts.latency_min=10
    opts.latency_p50=25
    opts.latency_p90=150
    opts.latency_p99=750
    opts.latency_max=10000
    opts.latency_uncertainty=70

    opts.error_rate=1
    opts.spike_start_chance=5
    opts.spike_end_chance=30

The key core class

ActivitySimulator

    package io.spring2go.promdemo.httpsimulator;

    import java.util.Random;

    import io.prometheus.client.Counter;
    import io.prometheus.client.Histogram;

    public class ActivitySimulator implements Runnable {

        private SimulatorOpts opts;

        private Random rand = new Random();

        private boolean spikeMode = false;

        private volatile boolean shutdown = false;

        private final Counter httpRequestsTotal = Counter.build()
                .name("http_requests_total")
                .help("Total number of http requests by response status code")
                .labelNames("endpoint", "status")
                .register();

        private final Histogram httpRequestDurationMs = Histogram.build()
                .name("http_request_duration_milliseconds")
                .help("Http request latency histogram")
                .exponentialBuckets(25, 2, 7)
                .labelNames("endpoint", "status")
                .register();

        public ActivitySimulator(SimulatorOpts opts) {
            this.opts = opts;
            System.out.println(opts);
        }

        public void shutdown() {
            this.shutdown = true;
        }

        public void updateOpts(SimulatorOpts opts) {
            this.opts = opts;
        }

        public boolean setSpikeMode(String mode) {
            boolean result = true;
            switch (mode) {
            case "on":
                opts.setSpikeMode(SpikeMode.ON);
                System.out.println("Spike mode is set to " + mode);
                break;
            case "off":
                opts.setSpikeMode(SpikeMode.OFF);
                System.out.println("Spike mode is set to " + mode);
                break;
            case "random":
                opts.setSpikeMode(SpikeMode.RANDOM);
                System.out.println("Spike mode is set to " + mode);
                break;
            default:
                result = false;
                System.out.println("Can't recognize spike mode " + mode);
            }
            return result;
        }

        public void setErrorRate(int rate) {
            if (rate > 100) {
                rate = 100;
            }
            if (rate < 0) {
                rate = 0;
            }
            opts.setErrorRate(rate);
            System.out.println("Error rate is set to " + rate);
        }

        public SimulatorOpts getOpts() {
            return this.opts;
        }

        public void simulateActivity() {
            int requestRate = this.opts.getRequestRate();
            if (this.giveSpikeMode()) {
                requestRate *= (5 + this.rand.nextInt(10));
            }

            int nbRequests = this.giveWithUncertainty(requestRate, this.opts.getRequestRateUncertainty());
            for (int i = 0; i < nbRequests; i++) {
                String statusCode = this.giveStatusCode();
                String endpoint = this.giveEndpoint();
                this.httpRequestsTotal.labels(endpoint, statusCode).inc();
                int latency = this.giveLatency(statusCode);
                if (this.spikeMode) {
                    latency *= 2;
                }
                this.httpRequestDurationMs.labels(endpoint, statusCode).observe(latency);
            }
        }

        public boolean giveSpikeMode() {
            switch (this.opts.getSpikeMode()) {
            case ON:
                this.spikeMode = true;
                break;
            case OFF:
                this.spikeMode = false;
                break;
            case RANDOM:
                int n = rand.nextInt(100);
                if (!this.spikeMode && n < this.opts.getSpikeStartChance()) {
                    this.spikeMode = true;
                } else if (this.spikeMode && n < this.opts.getSpikeEndChance()) {
                    this.spikeMode = false;
                }
                break;
            }
            return this.spikeMode;
        }

        public int giveWithUncertainty(int n, int u) {
            int delta = this.rand.nextInt(n * u / 50) - (n * u / 100);
            return n + delta;
        }

        public String giveStatusCode() {
            if (this.rand.nextInt(100) < this.opts.getErrorRate()) {
                return "500";
            } else {
                return "200";
            }
        }

        public String giveEndpoint() {
            int n = this.rand.nextInt(this.opts.getEndopints().length);
            return this.opts.getEndopints()[n];
        }

        public int giveLatency(String statusCode) {
            if (!"200".equals(statusCode)) {
                return 5 + this.rand.nextInt(50);
            }

            int p = this.rand.nextInt(100);
            if (p < 50) {
                return this.giveWithUncertainty(this.opts.getLatencyMin() + this.rand.nextInt(this.opts.getLatencyP50() - this.opts.getLatencyMin()), this.opts.getLatencyUncertainty());
            }
            if (p < 90) {
                return this.giveWithUncertainty(this.opts.getLatencyP50() + this.rand.nextInt(this.opts.getLatencyP90() - this.opts.getLatencyP50()), this.opts.getLatencyUncertainty());
            }
            if (p < 99) {
                return this.giveWithUncertainty(this.opts.getLatencyP90() + this.rand.nextInt(this.opts.getLatencyP99() - this.opts.getLatencyP90()), this.opts.getLatencyUncertainty());
            }
            return this.giveWithUncertainty(this.opts.getLatencyP99() + this.rand.nextInt(this.opts.getLatencyMax() - this.opts.getLatencyP99()), this.opts.getLatencyUncertainty());
        }

        @Override
        public void run() {
            while (!shutdown) {
                System.out.println("Simulator is running...");
                this.simulateActivity();
                try {
                    Thread.sleep(1000);
                } catch (InterruptedException e) {
                    // TODO Auto-generated catch block
                    e.printStackTrace();
                }
            }
        }
    }

SimulatorOpts

    package io.spring2go.promdemo.httpsimulator;

    import java.util.Arrays;

    import org.springframework.beans.factory.annotation.Value;
    import org.springframework.context.annotation.Configuration;

    import com.fasterxml.jackson.annotation.JsonAutoDetect;

    @Configuration
    @JsonAutoDetect(fieldVisibility = JsonAutoDetect.Visibility.ANY)
    public class SimulatorOpts {

        // Endpoints, Weighted map of endpoints to simulate
        @Value("${opts.endpoints}")
        private String[] endopints;

        // RequestRate, requests per second
        @Value("${opts.request_rate}")
        private int requestRate;

        // RequestRateUncertainty, Percentage of uncertainty when generating requests (+/-)
        @Value("${opts.request_rate_uncertainty}")
        private int requestRateUncertainty;

        // LatencyMin in milliseconds
        @Value("${opts.latency_min}")
        private int latencyMin;

        // LatencyP50 in milliseconds
        @Value("${opts.latency_p50}")
        private int latencyP50;

        // LatencyP90 in milliseconds
        @Value("${opts.latency_p90}")
        private int latencyP90;

        // LatencyP99 in milliseconds
        @Value("${opts.latency_p99}")
        private int latencyP99;

        // LatencyMax in milliseconds
        @Value("${opts.latency_max}")
        private int latencyMax;

        // LatencyUncertainty, Percentage of uncertainty when generating latency (+/-)
        @Value("${opts.latency_uncertainty}")
        private int latencyUncertainty;

        // ErrorRate, Percentage of chance of requests causing 500
        @Value("${opts.error_rate}")
        private int errorRate;

        // SpikeStartChance, Percentage of chance of entering spike mode
        @Value("${opts.spike_start_chance}")
        private int spikeStartChance;

        // SpikeEndChance, Percentage of chance of exiting spike mode
        @Value("${opts.spike_end_chance}")
        private int spikeEndChance;

        // SpikeModeStatus ON/OFF/RANDOM
        private SpikeMode spikeMode = SpikeMode.OFF;

        public String[] getEndopints() {
            return endopints;
        }

        public void setEndopints(String[] endopints) {
            this.endopints = endopints;
        }

        public int getRequestRate() {
            return requestRate;
        }

        public void setRequestRate(int requestRate) {
            this.requestRate = requestRate;
        }

        public int getRequestRateUncertainty() {
            return requestRateUncertainty;
        }

        public void setRequestRateUncertainty(int requestRateUncertainty) {
            this.requestRateUncertainty = requestRateUncertainty;
        }

        public int getLatencyMin() {
            return latencyMin;
        }

        public void setLatencyMin(int latencyMin) {
            this.latencyMin = latencyMin;
        }

        public int getLatencyP50() {
            return latencyP50;
        }

        public void setLatencyP50(int latencyP50) {
            this.latencyP50 = latencyP50;
        }

        public int getLatencyP90() {
            return latencyP90;
        }

        public void setLatencyP90(int latencyP90) {
            this.latencyP90 = latencyP90;
        }

        public int getLatencyP99() {
            return latencyP99;
        }

        public void setLatencyP99(int latencyP99) {
            this.latencyP99 = latencyP99;
        }

        public int getLatencyMax() {
            return latencyMax;
        }

        public void setLatencyMax(int latencyMax) {
            this.latencyMax = latencyMax;
        }

        public int getLatencyUncertainty() {
            return latencyUncertainty;
        }

        public void setLatencyUncertainty(int latencyUncertainty) {
            this.latencyUncertainty = latencyUncertainty;
        }

        public int getErrorRate() {
            return errorRate;
        }

        public void setErrorRate(int errorRate) {
            this.errorRate = errorRate;
        }

        public int getSpikeStartChance() {
            return spikeStartChance;
        }

        public void setSpikeStartChance(int spikeStartChance) {
            this.spikeStartChance = spikeStartChance;
        }

        public int getSpikeEndChance() {
            return spikeEndChance;
        }

        public void setSpikeEndChance(int spikeEndChance) {
            this.spikeEndChance = spikeEndChance;
        }

        public SpikeMode getSpikeMode() {
            return spikeMode;
        }

        public void setSpikeMode(SpikeMode spikeMode) {
            this.spikeMode = spikeMode;
        }

        @Override
        public String toString() {
            return "SimulatorOpts [endopints=" + Arrays.toString(endopints) + ", requestRate=" + requestRate
                    + ", requestRateUncertainty=" + requestRateUncertainty + ", latencyMin=" + latencyMin
                    + ", latencyP50=" + latencyP50 + ", latencyP90=" + latencyP90 + ", latencyP99=" + latencyP99
                    + ", latencyMax=" + latencyMax + ", latencyUncertainty=" + latencyUncertainty + ", errorRate="
                    + errorRate + ", spikeStartChance=" + spikeStartChance + ", spikeEndChance=" + spikeEndChance
                    + ", spikeMode=" + spikeMode + "]";
        }
    }

SpikeMode

    package io.spring2go.promdemo.httpsimulator;

    public enum SpikeMode {
        OFF, ON, RANDOM
    }

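Now run the application.

It simulates a simple HTTP microservice that generates Prometheus metrics, and it can be run as a Spring Boot application.

Metrics

Endpoint to access at runtime:

http://SERVICE_URL:8080/prometheus

It exposes:

- http_requests_total: request counter, with endpoint and status as labels

- http_request_duration_milliseconds: request latency distribution (histogram)

Runtime options

Spike Mode

In spike mode, the number of requests is multiplied by a factor (5 to 14, since the code uses 5 + rand.nextInt(10)) and the latency is doubled.

Spike mode can be on, off, or random. To change it: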

# ON

curl -X POST http://SERVICE_URL:8080/spike/on

# OFF

curl -X POST http://SERVICE_URL:8080/spike/off

# RANDOM

curl -X POST http://SERVICE_URL:8080/spike/random

Error rate

The default error rate is 1%. It can be adjusted (0 to 100) as follows:

# Setting error to 50%

curl -X POST http://SERVICE_URL:8080/error_rate/50

Other parameters

Configured in application.properties:

opts.endpoints=/login, /login, /login, /login, /login, /login, /login, /users, /users, /users, /users/{id}, /register, /register, /logout, /logout, /logout, /logout

opts.request_rate=1000

opts.request_rate_uncertainty=70

opts.latency_min=10

opts.latency_p50=25

opts.latency_p90=150

opts.latency_p99=750

opts.latency_max=10000

opts.latency_uncertainty=70

opts.error_rate=1

opts.spike_start_chance=5

opts.spike_end_chance=30
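The `*_uncertainty` settings feed the `giveWithUncertainty(n, u)` method of ActivitySimulator. The sketch below copies that formula into a standalone class to show the range it produces; `UncertaintySketch`, `minResult`, and `maxResult` are hypothetical helpers added only for this illustration.

```java
import java.util.Random;

// Standalone copy of the giveWithUncertainty formula, for illustration only.
class UncertaintySketch {
    // Same arithmetic as ActivitySimulator.giveWithUncertainty:
    // n plus a random delta in [-(n*u/100), n*u/50 - n*u/100 - 1].
    // Random.nextInt requires a positive bound, so n * u / 50 must be >= 1.
    static int giveWithUncertainty(Random rand, int n, int u) {
        int delta = rand.nextInt(n * u / 50) - (n * u / 100);
        return n + delta;
    }

    // Smallest value the formula can return (hypothetical helper).
    static int minResult(int n, int u) {
        return n - (n * u / 100);
    }

    // Largest value the formula can return (hypothetical helper).
    static int maxResult(int n, int u) {
        return n + (n * u / 50 - 1) - (n * u / 100);
    }

    public static void main(String[] args) {
        int requestRate = 1000;  // opts.request_rate
        int uncertainty = 70;    // opts.request_rate_uncertainty
        System.out.println("range: " + minResult(requestRate, uncertainty)
                + ".." + maxResult(requestRate, uncertainty));
        System.out.println("sample: "
                + giveWithUncertainty(new Random(), requestRate, uncertainty));
    }
}
```

With request_rate=1000 and request_rate_uncertainty=70, each simulated second therefore generates between 300 and 1699 requests outside spike mode.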

Endpoint for checking the options at runtime:

http://SERVICE_URL:8080/opts

Reference

https://github.com/PierreVincent/prom-http-simulator

Open http://localhost:8080/prometheus in a browser to see the collected metrics:

    # HELP http_requests_total Total number of http requests by response status code
    # TYPE http_requests_total counter
    http_requests_total{endpoint="/login",status="500",} 188.0
    http_requests_total{endpoint="/register",status="500",} 55.0
    http_requests_total{endpoint="/login",status="200",} 18863.0
    http_requests_total{endpoint="/register",status="200",} 5425.0
    http_requests_total{endpoint="/users/{id}",status="500",} 26.0
    http_requests_total{endpoint="/users/{id}",status="200",} 2663.0
    http_requests_total{endpoint="/logout",status="200",} 10722.0
    http_requests_total{endpoint="/users",status="200",} 8034.0
    http_requests_total{endpoint="/users",status="500",} 94.0
    http_requests_total{endpoint="/logout",status="500",} 93.0
    # HELP http_request_duration_milliseconds Http request latency histogram
    # TYPE http_request_duration_milliseconds histogram
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="25.0",} 85.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="50.0",} 174.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="100.0",} 188.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="200.0",} 188.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="400.0",} 188.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="800.0",} 188.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="1600.0",} 188.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="+Inf",} 188.0
    http_request_duration_milliseconds_count{endpoint="/login",status="500",} 188.0
    http_request_duration_milliseconds_sum{endpoint="/login",status="500",} 5499.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="25.0",} 27.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="50.0",} 50.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="100.0",} 55.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="200.0",} 55.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="400.0",} 55.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="800.0",} 55.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="1600.0",} 55.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="500",le="+Inf",} 55.0
    http_request_duration_milliseconds_count{endpoint="/register",status="500",} 55.0
    http_request_duration_milliseconds_sum{endpoint="/register",status="500",} 1542.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="25.0",} 8479.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="50.0",} 11739.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="100.0",} 14454.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="200.0",} 17046.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="400.0",} 17882.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="800.0",} 18482.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="1600.0",} 18705.0
    http_request_duration_milliseconds_bucket{endpoint="/login",status="200",le="+Inf",} 18863.0
    http_request_duration_milliseconds_count{endpoint="/login",status="200",} 18863.0
    http_request_duration_milliseconds_sum{endpoint="/login",status="200",} 2552014.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="25.0",} 2388.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="50.0",} 3367.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="100.0",} 4117.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="200.0",} 4889.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="400.0",} 5136.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="800.0",} 5310.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="1600.0",} 5379.0
    http_request_duration_milliseconds_bucket{endpoint="/register",status="200",le="+Inf",} 5425.0
    http_request_duration_milliseconds_count{endpoint="/register",status="200",} 5425.0
    http_request_duration_milliseconds_sum{endpoint="/register",status="200",} 739394.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="25.0",} 14.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="50.0",} 25.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="100.0",} 26.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="200.0",} 26.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="400.0",} 26.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="800.0",} 26.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="1600.0",} 26.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="500",le="+Inf",} 26.0
    http_request_duration_milliseconds_count{endpoint="/users/{id}",status="500",} 26.0
    http_request_duration_milliseconds_sum{endpoint="/users/{id}",status="500",} 752.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="25.0",} 1220.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="50.0",} 1657.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="100.0",} 2030.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="200.0",} 2383.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="400.0",} 2508.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="800.0",} 2608.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="1600.0",} 2637.0
    http_request_duration_milliseconds_bucket{endpoint="/users/{id}",status="200",le="+Inf",} 2663.0
    http_request_duration_milliseconds_count{endpoint="/users/{id}",status="200",} 2663.0
    http_request_duration_milliseconds_sum{endpoint="/users/{id}",status="200",} 402375.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="25.0",} 4790.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="50.0",} 6634.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="100.0",} 8155.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="200.0",} 9609.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="400.0",} 10113.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="800.0",} 10493.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="1600.0",} 10622.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="200",le="+Inf",} 10722.0
    http_request_duration_milliseconds_count{endpoint="/logout",status="200",} 10722.0
    http_request_duration_milliseconds_sum{endpoint="/logout",status="200",} 1502959.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="25.0",} 3622.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="50.0",} 4967.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="100.0",} 6117.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="200.0",} 7254.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="400.0",} 7624.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="800.0",} 7866.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="1600.0",} 7966.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="200",le="+Inf",} 8034.0
    http_request_duration_milliseconds_count{endpoint="/users",status="200",} 8034.0
    http_request_duration_milliseconds_sum{endpoint="/users",status="200",} 1100809.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="25.0",} 41.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="50.0",} 88.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="100.0",} 94.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="200.0",} 94.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="400.0",} 94.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="800.0",} 94.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="1600.0",} 94.0
    http_request_duration_milliseconds_bucket{endpoint="/users",status="500",le="+Inf",} 94.0
    http_request_duration_milliseconds_count{endpoint="/users",status="500",} 94.0
    http_request_duration_milliseconds_sum{endpoint="/users",status="500",} 2685.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="25.0",} 41.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="50.0",} 85.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="100.0",} 93.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="200.0",} 93.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="400.0",} 93.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="800.0",} 93.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="1600.0",} 93.0
    http_request_duration_milliseconds_bucket{endpoint="/logout",status="500",le="+Inf",} 93.0
    http_request_duration_milliseconds_count{endpoint="/logout",status="500",} 93.0
    http_request_duration_milliseconds_sum{endpoint="/logout",status="500",} 2683.0

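Let's look at the actual code.

A Prometheus metric is one of four types: Counter, Gauge, Histogram, or Summary.

this.httpRequestsTotal.labels(endpoint, statusCode).inc(); increments a counter that tracks the number of HTTP requests.

A separate count is kept for every combination of endpoint and response status, so each status returned by each endpoint is recorded: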

http_requests_total{endpoint="/login",status="500",} 188.0

http_requests_total{endpoint="/register",status="500",} 55.0

http_requests_total{endpoint="/login",status="200",} 18863.0
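To make the idea of one counter value per label combination concrete, here is a minimal sketch using only the JDK. LabeledCounterSketch and its methods are hypothetical names for illustration; this is not the Prometheus client library, and it is not thread-safe.

```java
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical stand-in for a labeled counter; not the Prometheus client API. */
final class LabeledCounterSketch {
    // Key is "endpoint|status"; a TreeMap keeps the rendered output in a stable order.
    private final Map<String, Double> values = new TreeMap<>();

    /** Adds 1 to the series identified by this endpoint/status pair. */
    void inc(String endpoint, String status) {
        values.merge(endpoint + "|" + status, 1.0, Double::sum);
    }

    /** Returns the current value of one series, or 0 if it was never incremented. */
    double get(String endpoint, String status) {
        return values.getOrDefault(endpoint + "|" + status, 0.0);
    }

    /** Renders one line per series, in the style of the output shown above. */
    String render() {
        StringBuilder sb = new StringBuilder();
        for (Map.Entry<String, Double> e : values.entrySet()) {
            String[] labels = e.getKey().split("\\|");
            sb.append("http_requests_total{endpoint=\"").append(labels[0])
              .append("\",status=\"").append(labels[1]).append("\",} ")
              .append(e.getValue()).append('\n');
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        LabeledCounterSketch counter = new LabeledCounterSketch();
        counter.inc("/login", "200");
        counter.inc("/login", "200");
        counter.inc("/login", "500");
        System.out.print(counter.render());
    }
}
```

Each distinct label combination becomes its own time series, which is why queries can later filter or aggregate by endpoint and status.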

= Histogram.build()

.name("http_request_duration_milliseconds")

.help("Http request latency histogram")

.exponentialBuckets(25, 2, 7)

.labelNames("endpoint", "status")

.register();

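This creates a histogram that records the distribution of request latencies. exponentialBuckets(25, 2, 7) defines 7 buckets whose upper bounds start at 25 and double each time: 25, 50, 100, 200, 400, 800, and 1600 (milliseconds, going by the metric name).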

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="25.0",} 85.0

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="50.0",} 174.0

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="100.0",} 188.0

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="200.0",} 188.0

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="400.0",} 188.0

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="800.0",} 188.0

http_request_duration_milliseconds_bucket{endpoint="/login",status="500",le="1600.0",} 188.0
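The le values in the output above follow directly from exponentialBuckets(25, 2, 7), and each bucket is cumulative: it counts every observation less than or equal to its upper bound. The sketch below reproduces both behaviors with plain JDK code. HistogramBucketsSketch is a hypothetical name, and the sample observations in main are made up for illustration.

```java
import java.util.Arrays;

/** Hypothetical illustration of histogram buckets; not the Prometheus client API. */
final class HistogramBucketsSketch {
    /** Upper bounds start, start*factor, start*factor^2, ... (count values in total). */
    static double[] exponentialBuckets(double start, double factor, int count) {
        double[] bounds = new double[count];
        for (int i = 0; i < count; i++) {
            bounds[i] = start * Math.pow(factor, i);
        }
        return bounds;
    }

    /** counts[i] = observations <= bounds[i]; the extra last slot is the +Inf bucket. */
    static long[] cumulativeCounts(double[] bounds, double[] observations) {
        long[] counts = new long[bounds.length + 1];
        for (double v : observations) {
            for (int i = 0; i < bounds.length; i++) {
                if (v <= bounds[i]) {
                    counts[i]++;
                }
            }
            counts[bounds.length]++; // +Inf counts every observation
        }
        return counts;
    }

    public static void main(String[] args) {
        double[] bounds = exponentialBuckets(25, 2, 7);
        System.out.println(Arrays.toString(bounds));
        // Made-up latencies in milliseconds.
        double[] observations = {10, 30, 30, 120, 2000};
        System.out.println(Arrays.toString(cumulativeCounts(bounds, observations)));
    }
}
```

Because buckets are cumulative, the counts never decrease as le grows, which matches the sample output where every bucket from le="100.0" upward shows 188.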

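125. [Lab] Prometheus Getting-Started Query Lab (Part 2)~1.mp4

First, install Prometheus.

Next, have Prometheus scrape the metrics of the Spring Boot http-simulation application above, which runs at http://localhost:8080/. To do this, edit prometheus.yml: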

# my global config
global:
  scrape_interval: 5s # Scrape targets every 5 seconds. The default is every 1 minute.
  evaluation_interval: 5s # Evaluate rules every 5 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
  - static_configs:
    - targets:
      # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  # - "first_rules.yml"
  # - "second_rules.yml"

# Scrape configurations: Prometheus itself plus the http-simulation application.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
    - targets: ['localhost:9090']

  - job_name: 'http-simulation'
    metrics_path: /prometheus
    # scheme defaults to 'http'.
    static_configs:
    - targets: ['localhost:8080']

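This adds a new job named 'http-simulation'. Its metrics path is /prometheus and its target is 'localhost:8080'.

Now start Prometheus.

Run it from a Git Bash window rather than the Windows cmd window.

Prometheus supports hot reloading of its configuration when it is started with --web.enable-lifecycle.

The following command sets the data retention time and enables configuration reloading: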

./prometheus --storage.tsdb.retention.time=180d --web.enable-admin-api --web.enable-lifecycle --config.file=prometheus.yml

3) Hot reload

curl -XPOST http://localhost:9090/-/reload

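Once Prometheus has started, open http://localhost:9090/graph in a browser.

Open the Targets page to see which targets are currently being monitored.

The page lists the instances being scraped.

To see the number of requests handled by http-simulation, query: http_requests_total{job="http-simulation"}

Click the Graph tab at the top to plot the result; the time range can be adjusted manually.

The lab handout lists the queries below. It names the job http-simulator; with the prometheus.yml above, the job label is http-simulation, so adjust the job value accordingly.

- Check that http-simulator is up (value 1): up{job="http-simulator"}

- Number of HTTP requests: http_requests_total{job="http-simulator"}

- Number of successful login requests: http_requests_total{job="http-simulator", status="200", endpoint="/login"}

- Number of successful requests, per endpoint: http_requests_total{job="http-simulator", status="200"}

- Total number of successful requests: sum(http_requests_total{job="http-simulator", status="200"})

- Rate of successful requests, per endpoint: rate(http_requests_total{job="http-simulator", status="200"}[5m])

- Total rate of successful requests: sum(rate(http_requests_total{job="http-simulator", status="200"}[5m]))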

http_requests_total{job="http-simulator", status="200", endpoint="/login"}
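The rate(...[5m]) queries above turn an ever-growing counter into a per-second rate over the window. The sketch below shows the core idea only, under stated simplifications: it divides the observed increase by the time between the first and last sample, and treats a drop in the counter as a reset. Prometheus additionally extrapolates to the window boundaries, which is omitted here. RateSketch and simpleRate are hypothetical names.

```java
/** Hypothetical, simplified illustration of what rate() computes over one window. */
final class RateSketch {
    /**
     * @param timestampsSeconds sample times in seconds, ascending
     * @param values            counter readings taken at those times
     * @return average per-second increase, or NaN when it cannot be computed
     */
    static double simpleRate(double[] timestampsSeconds, double[] values) {
        if (values.length < 2) {
            return Double.NaN; // a rate needs at least two samples
        }
        double elapsed = timestampsSeconds[values.length - 1] - timestampsSeconds[0];
        if (elapsed <= 0) {
            return Double.NaN;
        }
        double increase = 0;
        for (int i = 1; i < values.length; i++) {
            double delta = values[i] - values[i - 1];
            // A drop means the counter restarted from zero, so the new value is the increase.
            increase += (delta >= 0) ? delta : values[i];
        }
        return increase / elapsed;
    }

    public static void main(String[] args) {
        // Samples 5 seconds apart, matching a scrape_interval of 5s.
        double[] t = {0, 5, 10, 15};
        double[] v = {100, 110, 125, 130};
        System.out.println(simpleRate(t, v)); // 30 requests over 15 s
    }
}
```

This is why sum(rate(...)) is the usual form for a request-rate panel: the raw counter only ever grows, while its rate shows current traffic.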

126. [Lab] Prometheus Getting-Started Query Lab (Part 3)~1.mp4

4. Latency distribution queries

- Latency distribution of http-simulator: http_request_duration_milliseconds_bucket{job="http-simulator"}

- Latency distribution of successful login requests: http_request_duration_milliseconds_bucket{job="http-simulator", status="200", endpoint="/login"}

- Fraction of successful login requests that took no more than 200 ms: sum(http_request_duration_milliseconds_bucket{job="http-simulator", status="200", endpoint="/login", le="200.0"}) / sum(http_request_duration_milliseconds_count{job="http-simulator", status="200", endpoint="/login"})

- 99th percentile latency of successful login requests: histogram_quantile(0.99, rate(http_request_duration_milliseconds_bucket{job="http-simulator", status="200", endpoint="/log
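A quantile is estimated from cumulative bucket counts by finding the bucket that contains the target rank and interpolating linearly inside it. The sketch below illustrates that interpolation on the /login, status 500 bucket counts shown earlier. QuantileSketch and its methods are hypothetical names, and the +Inf count of 188 is an assumption taken from the matching http_requests_total value. The real histogram_quantile is normally applied to rate() of the buckets, but the interpolation step is the same.

```java
/** Hypothetical illustration of quantile estimation from cumulative histogram buckets. */
final class QuantileSketch {
    /**
     * @param q          quantile in (0, 1]
     * @param bounds     finite bucket upper bounds, ascending
     * @param cumulative cumulative counts, one per bound plus a final +Inf entry
     */
    static double quantile(double q, double[] bounds, double[] cumulative) {
        double total = cumulative[cumulative.length - 1];
        if (total == 0) {
            return Double.NaN; // no observations
        }
        double rank = q * total;
        for (int i = 0; i < bounds.length; i++) {
            if (cumulative[i] >= rank) {
                // The lowest bucket is assumed to start at 0.
                double lowerBound = (i == 0) ? 0 : bounds[i - 1];
                double below = (i == 0) ? 0 : cumulative[i - 1];
                double inBucket = cumulative[i] - below;
                if (inBucket == 0) {
                    return lowerBound;
                }
                return lowerBound + (bounds[i] - lowerBound) * (rank - below) / inBucket;
            }
        }
        // The rank falls into the +Inf bucket: report the highest finite bound.
        return bounds[bounds.length - 1];
    }

    /** Fraction of observations at or below bounds[bucketIndex]. */
    static double fractionAtOrBelow(int bucketIndex, double[] cumulative) {
        return cumulative[bucketIndex] / cumulative[cumulative.length - 1];
    }

    public static void main(String[] args) {
        double[] bounds = {25, 50, 100, 200, 400, 800, 1600};
        // Last entry is the assumed +Inf count.
        double[] cumulative = {85, 174, 188, 188, 188, 188, 188, 188};
        System.out.println(quantile(0.9, bounds, cumulative));
        System.out.println(fractionAtOrBelow(3, cumulative)); // share with latency <= 200
    }
}
```

The estimate is only as precise as the bucket layout: every observation inside a bucket is treated as evenly spread between its lower and upper bound.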

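127. [Lab] Prometheus + Grafana Dashboard Lab (Part 1)~1.mp4

To install Grafana, download the archive and extract it; no further setup is needed.

Before starting Grafana, make sure Prometheus and the application being monitored are both running.

Run Grafana from a Git Bash window.

The default username/password is admin/admin.

After logging in, configure Prometheus as a Grafana data source.

Click Add data source.

Leave all other parameters at their defaults.

Add the Prometheus data source: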

Name -> prom-datasource

Type -> Prometheus

HTTP URL -> http://localhost:9090

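Leave everything else at its default.

http://localhost:9090 is the address and port that Prometheus listens on.

Click Save & Test and confirm that the connection succeeds.

3. Create a Dashboard

Click the **+** icon to create a Dashboard, then click the **Save** icon to save it. Use the default Folder and name the Dashboard prom-demo.

4. Display the request rate

Click the Add panel icon, then click the Graph icon to add a Graph.

Click Panel Title -> Edit on the Graph to edit it.

Set the title: General -> Title = Request Rate

Set the Metrics query: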

sum(rate(http_requests_total{job="http-simulator"}[5m]))

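Adjust the Legend:

- Show it as a table (As Table)

- Show Min/Max/Avg/Current/Total

- Adjust the Axis as needed

Remember to save the Dashboard.

After entering the metrics query, click the small triangle to its right.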

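128. [Lab] Prometheus + Grafana Dashboard Lab (Part 2)~1.mp4

5. Display the live error rate

Click the Add panel icon, then click the Singlestat icon to add a Singlestat.

Click Panel Title -> Edit on the panel to edit it.

Set the title: General -> Title = Live Error Rate

Set the Metrics query: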

sum(rate(http_requests_total{job="http-simulator", status="500"}[5m])) / sum(rate(http_requests_total{job="http-simulator"}[5m]))

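Set the display unit: Options -> Unit, choose None -> percent (0.0-1.0).

Change the displayed value from the average (the current setting) to the latest value: Options -> Value -> Stat, choose Current.

Add thresholds and colors: Options -> Coloring, check Value, and set Thresholds to 0.01,0.05. This means:

- Green: 0-1%

- Orange: 1-5%

- Red: >5%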
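The panel logic above amounts to dividing the error rate by the total request rate and mapping the resulting ratio onto the two thresholds. A minimal sketch follows; ErrorRateColorSketch is a hypothetical name, and which color applies exactly at a threshold value is an assumption here rather than documented Grafana behavior.

```java
/** Hypothetical illustration of the error-ratio thresholds 0.01 and 0.05. */
final class ErrorRateColorSketch {
    /** Ratio of failed requests to all requests; NaN when there is no traffic. */
    static double errorRatio(double errorsPerSecond, double requestsPerSecond) {
        if (requestsPerSecond == 0) {
            return Double.NaN;
        }
        return errorsPerSecond / requestsPerSecond;
    }

    /** Maps a ratio in the range 0.0-1.0 to the panel color. */
    static String color(double ratio) {
        if (Double.isNaN(ratio)) {
            return "no data";
        }
        if (ratio < 0.01) {
            return "green";   // below 1%
        }
        if (ratio < 0.05) {
            return "orange";  // 1% to 5% (boundary handling is an assumption)
        }
        return "red";         // above 5%
    }

    public static void main(String[] args) {
        System.out.println(color(errorRatio(1, 200)));  // 0.5% of requests failed
        System.out.println(color(errorRatio(4, 200)));  // 2% of requests failed
        System.out.println(color(errorRatio(20, 200))); // 10% of requests failed
    }
}
```

The ratio stays between 0 and 1, which is why the unit is set to percent (0.0-1.0) rather than percent (0-100).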

Add the gauge effect: Options -> Gauge, check Show, and set Max to 1.

Add the error-rate trend line: check Spark lines -> Show.

Remember to save the Dashboard.

sum(rate(http_requests_total{job="http-simulation",status="500"}[5m])) /sum(rate(http_requests_total{job="http-simulation"}[5m]))

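This is the same query with the job named http-simulation. Set the unit (Options -> Unit, None -> percent (0.0-1.0)) and the displayed value (Options -> Value -> Stat = Current) as described above.

Then enable the gauge effect.

6. Display the top requested endpoints

Click the Add panel icon, then click the Table icon to add a Table.

Set the Metrics query: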

sum(rate(http_requests_total{job="http-simulator"}[5m])) by (endpoint)

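To reduce the number of rows in the table, check Instant under Metrics so that only the current value is shown.

To hide the Time column, under Column Styles set Apply to columns named to Time and set Type -> Type to Hidden.

To rename the Value column, add a Column Style, set Apply to columns named to Value, and set Column Header to Requests/s.

Click the Requests/s header in the table to sort the rows by endpoint activity.

Remember to adjust the widget position and save the Dashboard.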

sum(rate(http_requests_total{job="http-simulation"}[5m])) by (endpoint)

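Check Instant to show only the request types that currently exist.

Click the Value header to sort by value so that the endpoints with the most requests come first.

This gives a live view of request statistics, such as the five most frequently accessed endpoints.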
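The table panel combines two steps: sum(...) by (endpoint) adds up the rates of all series that share an endpoint label, and sorting by value puts the busiest endpoints first. The sketch below mirrors those two steps in plain Java. TopEndpointsSketch, Series, and the sample rates are hypothetical and exist only for illustration.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

/** Hypothetical illustration of "sum by (endpoint)" followed by a descending sort. */
final class TopEndpointsSketch {
    /** One time series: its labels and its current per-second rate. */
    static final class Series {
        final String endpoint;
        final String status;
        final double rate;

        Series(String endpoint, String status, double rate) {
            this.endpoint = endpoint;
            this.status = status;
            this.rate = rate;
        }
    }

    /** Sums rates per endpoint, then returns the n endpoints with the highest totals. */
    static List<Map.Entry<String, Double>> topEndpoints(List<Series> series, int n) {
        Map<String, Double> byEndpoint = new HashMap<>();
        for (Series s : series) {
            byEndpoint.merge(s.endpoint, s.rate, Double::sum); // the status label is dropped
        }
        return byEndpoint.entrySet().stream()
                .sorted(Map.Entry.<String, Double>comparingByValue().reversed())
                .limit(n)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<Series> series = Arrays.asList(
                new Series("/login", "200", 10.0),
                new Series("/login", "500", 1.0),
                new Series("/users", "200", 5.0),
                new Series("/logout", "200", 20.0));
        for (Map.Entry<String, Double> e : topEndpoints(series, 2)) {
            System.out.println(e.getKey() + " " + e.getValue());
        }
    }
}
```

Grouping by endpoint merges the status="200" and status="500" series of the same endpoint into a single row, which is what the table shows.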

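129. [Lab] Prometheus + Alertmanager Alerting Lab (Part 1)~1.mp4

Make sure Prometheus is started with --web.enable-lifecycle so that its configuration can be updated dynamically through the web endpoint.

Next we implement the following: when the http-simulation application we ran earlier goes down, an alert is raised.

2. HttpSimulatorDown alert

In the Prometheus directory:

Add the alert rule file simulator_alert_rules.yml: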

groups:
- name: simulator-alert-rule
  rules:
  - alert: HttpSimulatorDown
    expr: sum(up{job="http-simulation"}) == 0
    for: 1m
    labels:
      severity: critical

If sum(up{job="http-simulation"}) stays at 0 for a full minute (for: 1m), no instance has been up during that minute, and the alert fires.
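The for: 1m clause means the expression must hold continuously for one minute before the alert fires; until then the alert is only pending. The following sketch models that behavior as a small state machine driven by successive rule evaluations. AlertForSketch and its members are hypothetical names, not Prometheus code.

```java
/** Hypothetical illustration of how a "for" duration delays an alert from pending to firing. */
final class AlertForSketch {
    enum State { INACTIVE, PENDING, FIRING }

    private final long forMillis;
    private long activeSince = -1; // -1 means the condition is currently false

    AlertForSketch(long forMillis) {
        this.forMillis = forMillis;
    }

    /** Called once per rule evaluation with the current truth value of the expression. */
    State evaluate(boolean conditionTrue, long nowMillis) {
        if (!conditionTrue) {
            activeSince = -1; // any false evaluation resets the timer
            return State.INACTIVE;
        }
        if (activeSince < 0) {
            activeSince = nowMillis;
        }
        return (nowMillis - activeSince >= forMillis) ? State.FIRING : State.PENDING;
    }

    public static void main(String[] args) {
        AlertForSketch alert = new AlertForSketch(60_000); // for: 1m
        System.out.println(alert.evaluate(true, 0));       // condition just became true
        System.out.println(alert.evaluate(true, 30_000));  // true for 30 s
        System.out.println(alert.evaluate(true, 60_000));  // true for a full minute
    }
}
```

A brief outage shorter than the for duration therefore never reaches firing, which keeps short blips from triggering notifications.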

Edit prometheus.yml so that it references the simulator_alert_rules.yml file:

# my global config
global:
  scrape_interval: 5s # Scrape targets every 5 seconds. The default is every 1 minute.
  evaluation_interval: 5s # Evaluate rules every 5 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
  - static_configs:
    - targets:
      # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  - "simulator_alert_rules.yml"
  # - "second_rules.yml"

# Scrape configurations: Prometheus itself plus the http-simulation application.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
    - targets: ['localhost:9090']

  - job_name: 'http-simulation'
    metrics_path: /prometheus
    static_configs:
    - targets: ['localhost:8080']

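This shows that the configuration has taken effect. Now stop the http-simulation application; after one minute the alert is triggered.

A state of firing means the alert has been triggered.

3. ErrorRateHigh alert

If you stopped the application in step 2 above, start the Prometheus HTTP Metrics Simulator again.

Add the following alert rule to simulator_alert_rules.yml: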

  - alert: ErrorRateHigh
    expr: sum(rate(http_requests_total{job="http-simulation", status="500"}[5m])) / sum(rate(http_requests_total{job="http-simulation"}[5m])) > 0.02
    for: 1m
    labels:
      severity: major
    annotations:
      summary: "High Error Rate detected"
      description: "Error Rate is above 2% (current value is: {{ $value }})"

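The complete file is shown below. The job label in both rules must match the job_name in prometheus.yml, which is http-simulation in the configuration above.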

groups:
- name: simulator-alert-rule
  rules:
  - alert: HttpSimulatorDown
    expr: sum(up{job="http-simulation"}) == 0
    for: 1m
    labels:
      severity: critical
  - alert: ErrorRateHigh
    expr: sum(rate(http_requests_total{job="http-simulation", status="500"}[5m])) / sum(rate(http_requests_total{job="http-simulation"}[5m])) > 0.02
    for: 1m
    labels:
      severity: major
    annotations:
      summary: "High Error Rate detected"
      description: "Error Rate is above 2% (current value is: {{ $value }})"

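130. [Lab] Prometheus + Alertmanager Alerting Lab (Part 2)~1.mp4

The alerts are now defined. Next, we make sure an email is sent whenever an alert fires.

First download Alertmanager 0.15.2 for Windows and extract it to a local directory.

After configuring the email address, start Alertmanager:

./alertmanager.exe

In the Prometheus directory, edit prometheus.yml to set the Alertmanager address; the default port is 9093.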

# Alertmanager configuration
alerting:
  alertmanagers:
  - static_configs:
    - targets:
      - localhost:9093

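Use Configuration and Rules under Prometheus -> Status to confirm that the configuration and the alert rules are in effect.

Use the Alertmanager UI and the configured mailbox to verify that the ErrorRateHigh alert fires.

Alertmanager UI address: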

http://localhost:9093

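131. [Lab] Java Application Instrumentation and Monitoring Lab~1.mp4

Jobs can be added to the queue through an HTTP API, and workers then process them.

Lab 4: Java application instrumentation and monitoring

Steps

1. Review and run the instrumented sample code

Import instrumentation-example into the Eclipse IDE.

Review the code to understand how the simulated job system works and how it is instrumented.

Run the instrumented sample as a Spring Boot application.

View the metrics at http://localhost:8080/prometheus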

pom.xml

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <modelVersion>4.0.0</modelVersion>

  <groupId>io.spring2go.promdemo</groupId>
  <artifactId>instrumentation-example</artifactId>
  <version>0.0.1-SNAPSHOT</version>
  <packaging>jar</packaging>

  <name>instrumentation-example</name>
  <description>Demo project for Spring Boot</description>

  <parent>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-parent</artifactId>
    <version>1.5.17.RELEASE</version>
    <relativePath/> <!-- lookup parent from repository -->
  </parent>

  <properties>
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    <project.reporting.outputEncoding>UTF-8</project.reporting.outputEncoding>
    <java.version>1.8</java.version>
  </properties>

  <dependencies>
    <dependency>
      <groupId>org.springframework.boot</groupId>
      <artifactId>spring-boot-starter-web</artifactId>
    </dependency>

    <!-- The prometheus client -->
    <dependency>
      <groupId>io.prometheus</groupId>
      <artifactId>simpleclient_spring_boot</artifactId>
      <version>0.5.0</version>
    </dependency>

    <dependency>
      <groupId>org.springframework.boot</groupId>
      <artifactId>spring-boot-starter-test</artifactId>
      <scope>test</scope>
    </dependency>
  </dependencies>

  <build>
    <plugins>
      <plugin>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-maven-plugin</artifactId>
      </plugin>
    </plugins>
  </build>

</project>

InstrumentApplication

package io.spring2go.promdemo.instrument;

import org.springframework.boot.CommandLineRunner;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;
import org.springframework.core.task.SimpleAsyncTaskExecutor;
import org.springframework.core.task.TaskExecutor;
import org.springframework.stereotype.Controller;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestMethod;
import org.springframework.web.bind.annotation.ResponseBody;

import io.prometheus.client.spring.boot.EnablePrometheusEndpoint;

@Controller
@SpringBootApplication
@EnablePrometheusEndpoint
public class InstrumentApplication {

    private JobQueue queue = new JobQueue();

    private WorkerManager workerManager;

    public static void main(String[] args) {
        SpringApplication.run(InstrumentApplication.class, args);
    }

    @RequestMapping(value = "/hello-world")
    public @ResponseBody String sayHello() {
        return "hello, world";
    }

    @RequestMapping(value = "/jobs", method = RequestMethod.POST)
    public @ResponseBody String jobs() {
        queue.push(new Job());
        return "ok";
    }

    @Bean
    public TaskExecutor taskExecutor() {
        return new SimpleAsyncTaskExecutor();
    }

    @Bean
    public CommandLineRunner schedulingRunner(TaskExecutor executor) {
        return new CommandLineRunner() {
            public void run(String... args) throws Exception {
                // 10 jobs per worker
                workerManager = new WorkerManager(queue, 1, 4, 10);
                executor.execute(workerManager);
                System.out.println("WorkerManager thread started...");
            }
        };
    }
}

Job

package io.spring2go.promdemo.instrument;

import java.util.Random;
import java.util.UUID;

public class Job {

    private String id;

    private Random rand = new Random();

    public Job() {
        this.id = UUID.randomUUID().toString();
    }

    public void run() {
        try {
            // Run the job (5 - 15 seconds)
            Thread.sleep((5 + rand.nextInt(10)) * 1000);
        } catch (InterruptedException e) {
            // TODO Auto-generated catch block
            e.printStackTrace();
        }
    }

    public String getId() {
        return id;
    }

    public void setId(String id) {
        this.id = id;
    }
}

JobQueue

package io.spring2go.promdemo.instrument;

import java.util.Queue;
import java.util.concurrent.LinkedBlockingQueue;

import io.prometheus.client.Gauge;

public class JobQueue {

    private final Gauge jobQueueSize = Gauge.build()
            .name("job_queue_size")
            .help("Current number of jobs waiting in queue")
            .register();

    private Queue<Job> queue = new LinkedBlockingQueue<Job>();

    public int size() {
        return queue.size();
    }

    public void push(Job job) {
        queue.offer(job);
        jobQueueSize.inc();
    }

    public Job pull() {
        Job job = queue.poll();
        if (job != null) {
            jobQueueSize.dec();
        }
        return job;
    }
}

Worker

package io.spring2go.promdemo.instrument;

import java.util.UUID;

import io.prometheus.client.Histogram;

public class Worker extends Thread {

    private static final Histogram jobsCompletionDurationSeconds = Histogram.build()
            .name("jobs_completion_duration_seconds")
            .help("Histogram of job completion time")
            .linearBuckets(4, 1, 16)
            .register();

    private String id;

    private JobQueue queue;

    private volatile boolean shutdown;

    public Worker(JobQueue queue) {
        this.queue = queue;
        this.id = UUID.randomUUID().toString();
    }

    @Override
    public void run() {
        System.out.println(String.format("[Worker %s] Starting", this.id));
        while (!shutdown) {
            this.pullJobAndRun();
        }
        System.out.println(String.format("[Worker %s] Stopped", this.id));
    }

    public void shutdown() {
        this.shutdown = true;
        System.out.println(String.format("[Worker %s] Shutting down", this.id));
    }

    public void pullJobAndRun() {
        Job job = this.queue.pull();
        if (job != null) {
            long jobStart = System.currentTimeMillis();
            System.out.println(String.format("[Worker %s] Starting job: %s", this.id, job.getId()));
            job.run();
            System.out.println(String.format("[Worker %s] Finished job: %s", this.id, job.getId()));
            int duration = (int) ((System.currentTimeMillis() - jobStart) / 1000);
            jobsCompletionDurationSeconds.observe(duration);
        } else {
            System.out.println(String.format("[Worker %s] Queue is empty. Backing off 5 seconds", this.id));
            try {
                Thread.sleep(5 * 1000);
            } catch (InterruptedException e) {
                // TODO Auto-generated catch block
                e.printStackTrace();
            }
        }
    }
}

WorkerManager

package io.spring2go.promdemo.instrument;

import java.util.LinkedList;
import java.util.Queue;

public class WorkerManager extends Thread {

    private Queue<Worker> workers = new LinkedList<Worker>();

    private JobQueue queue;

    private int minWorkers;
    private int maxWorkers;

    private int jobsWorkerRatio;

    public WorkerManager(JobQueue queue, int minWorkers, int maxWorkers, int jobsWorkerRatio) {
        this.queue = queue;
        this.minWorkers = minWorkers;
        this.maxWorkers = maxWorkers;
        this.jobsWorkerRatio = jobsWorkerRatio;

        // Initialize workerpool
        for (int i = 0; i < minWorkers; i++) {
            this.addWorker();
        }
    }

    public void addWorker() {
        Worker worker = new Worker(queue);
        this.workers.offer(worker);
        worker.start();
    }

    public void shutdownWorker() {
        if (this.workers.size() > 0) {
            Worker worker = this.workers.poll();
            worker.shutdown();
        }
    }

    public void run() {
        this.scaleWorkers();
    }

    public void scaleWorkers() {
        while (true) {
            int queueSize = this.queue.size();
            int workerCount = this.workers.size();

            if ((workerCount + 1) * jobsWorkerRatio < queueSize && workerCount < this.maxWorkers) {
                System.out.println("[WorkerManager] Too much work, starting extra worker.");
                this.addWorker();
            }

            if ((workerCount - 1) * jobsWorkerRatio > queueSize && workerCount > this.minWorkers) {
                System.out.println("[WorkerManager] Too much workers, shutting down 1 worker");
                this.shutdownWorker();
            }

            try {
                Thread.sleep(10 * 1000);
            } catch (InterruptedException e) {
                // TODO Auto-generated catch block
                e.printStackTrace();
            }
        }
    }
}

application.properties

management.security.enabled=false

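We monitor two metrics: the size of the job queue, and the execution time of each job taken from the queue.

The queue size is tracked in JobQueue: the gauge is incremented by 1 when a job is pushed onto the queue (through the POST /jobs endpoint) and decremented by 1 when a job is pulled off.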
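The important property of this instrumentation is that the gauge always equals the number of queued jobs, and that pulling from an empty queue must not decrement it. The sketch below demonstrates that invariant with JDK classes only. GaugedQueueSketch is a hypothetical stand-in, with an AtomicInteger playing the role of the job_queue_size gauge.

```java
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical queue whose size is mirrored in a gauge-like counter. */
final class GaugedQueueSketch<T> {
    private final Queue<T> queue = new ConcurrentLinkedQueue<>();
    // Stand-in for the job_queue_size gauge; not the Prometheus Gauge class.
    private final AtomicInteger gauge = new AtomicInteger();

    /** Enqueues an item and raises the gauge by 1. */
    void push(T item) {
        queue.offer(item);
        gauge.incrementAndGet();
    }

    /** Dequeues an item; the gauge drops by 1 only if something was actually removed. */
    T pull() {
        T item = queue.poll();
        if (item != null) {
            gauge.decrementAndGet();
        }
        return item;
    }

    int gaugeValue() {
        return gauge.get();
    }

    public static void main(String[] args) {
        GaugedQueueSketch<String> q = new GaugedQueueSketch<>();
        q.push("job-1");
        q.push("job-2");
        q.pull();
        System.out.println(q.gaugeValue()); // one job is still waiting
    }
}
```

A gauge fits here because the value can go down as well as up, unlike the request counter used earlier.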

2. Configure and run Prometheus

Add a scrape job for instrumentation-example:

  - job_name: 'instrumentation-example'
    metrics_path: /prometheus
    static_configs:
    - targets: ['localhost:8080']

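Run Prometheus:

./prometheus.exe

Use Configuration and Targets under Prometheus -> Status to verify the configuration.

3. Generate test data and query the metrics

Check that instrumentation-example is up (value 1):

up{job="instrumentation-example"}

Run queueUpJobs.sh to create 100 jobs:

./queueUpJobs.sh

Query the JobQueueSize curve (set the time range to 5m):

job_queue_size{job="instrumentation-example"}

Query the 90th percentile of job execution latency: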

histogram_quantile(0.90, rate(jobs_completion_duration_seconds_bucket{job="instrumentation-example"}[5m]))

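132. [Lab] NodeExporter System Monitoring Lab~1.mp4

1. Download and run wmi-exporter

Download wmi_exporter-amd64 and extract it to a local directory.

Verify the metrics endpoint:

http://localhost:9182/metrics

2. Configure and run Prometheus

In the Prometheus installation directory, add a scrape job for wmi-exporter to prometheus.yml: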

# my global config
global:
  scrape_interval: 5s # Scrape targets every 5 seconds. The default is every 1 minute.
  evaluation_interval: 5s # Evaluate rules every 5 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
  - static_configs:
    - targets:
      # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  - "simulator_alert_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# here it is wmi-exporter.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'wmi-exporter'
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
    - targets: ['localhost:9182']

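3. Grafana Dashboard for wmi-exporter

Start the Grafana server from the Grafana installation directory:

./bin/grafana-server.exe

Log in to the Grafana UI (admin/admin):

http://localhost:3000

Use the **+** icon in Grafana to import the wmi-exporter dashboard:

grafana id = 2129

Make sure to select the Prometheus data source.

View the Windows Node dashboard.

4. References

Grafana Dashboard repository

https://grafana.com/dashboards

Entering 2129 in the import dialog loads the dashboard details automatically.

Here, too, the data source must be the Prometheus data source.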

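133. [Lab] Spring Boot Actuator Monitoring Lab~1.mp4

Steps

1. Run Spring Boot + Actuator

Import the actuatordemo application into the Eclipse IDE.

Review the actuatordemo code.

Run actuatordemo as a Spring Boot application.

Verify the metrics endpoint:

http://localhost:8080/prometheus

2. Configure and run Prometheus

In the Prometheus installation directory, add a scrape job for actuator-demo to prometheus.yml: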

  - job_name: 'actuator-demo'
    metrics_path: '/prometheus'
    static_configs:
    - targets: ['localhost:8080']

Run Prometheus:

./prometheus.exe

Open the Prometheus UI:

http://localhost:9090

Use Configuration and Targets under Prometheus -> Status to verify the configuration.

In Prometheus -> Graph, check that actuator-demo is up (UP=1):

up{job="actuator-demo"}

3. Grafana Dashboard for JVM (Micrometer)

Start the Grafana server from the Grafana installation directory:

./bin/grafana-server.exe

Log in to the Grafana UI (admin/admin):

http://localhost:3000

Use the **+** icon in Grafana to import the JVM (Micrometer) dashboard:

grafana id = 4701

Make sure to select the Prometheus data source.

View the JVM (Micrometer) dashboard.

4. References

Grafana Dashboard repository

https://grafana.com/dashboards

Micrometer Prometheus support

https://micrometer.io/docs/registry/prometheus

Micrometer Spring Boot 1.5 support

https://micrometer.io/docs/ref/spring/1.5

The complete code of the application follows, starting with pom.xml:

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
  xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <modelVersion>4.0.0</modelVersion>

  <groupId>io.spring2go.promdemo</groupId>
  <artifactId>actuatordemo</artifactId>
  <version>0.0.1-SNAPSHOT</version>
  <packaging>jar</packaging>

  <name>actuatordemo</name>
  <description>Demo project for Spring Boot</description>

  <parent>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-parent</artifactId>
    <version>1.5.17.RELEASE</version>
    <relativePath/> <!-- lookup parent from repository -->
  </parent>

  <properties>
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    <project.reporting.outputEncoding>UTF-8</project.reporting.outputEncoding>
    <java.version>1.8</java.version>
  </properties>

  <dependencies>
    <dependency>
      <groupId>org.springframework.boot</groupId>
      <artifactId>spring-boot-starter-actuator</artifactId>
    </dependency>
    <dependency>
      <groupId>org.springframework.boot</groupId>
      <artifactId>spring-boot-starter-web</artifactId>
    </dependency>

    <dependency>
      <groupId>io.micrometer</groupId>
      <artifactId>micrometer-spring-legacy</artifactId>
      <version>1.0.6</version>
    </dependency>

    <dependency>
      <groupId>io.micrometer</groupId>
      <artifactId>micrometer-registry-prometheus</artifactId>
      <version>1.0.6</version>
    </dependency>

    <dependency>
      <groupId>io.github.mweirauch</groupId>
      <artifactId>micrometer-jvm-extras</artifactId>
      <version>0.1.2</version>
    </dependency>

    <dependency>
      <groupId>org.springframework.boot</groupId>
      <artifactId>spring-boot-starter-test</artifactId>
      <scope>test</scope>
    </dependency>
  </dependencies>

  <build>
    <plugins>
      <plugin>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-maven-plugin</artifactId>
      </plugin>
    </plugins>
  </build>

</project>

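The goal is to set up Prometheus and a Grafana dashboard on your local machine to visualize all the metrics produced by a Spring Boot application.

Spring Boot uses Micrometer, an application metrics library, to export actuator metrics to external monitoring systems.

Micrometer supports many monitoring systems, including Netflix Atlas, AWS CloudWatch, Datadog, InfluxData, SignalFx, Graphite, Wavefront, and Prometheus.

To integrate with Prometheus, add the micrometer-registry-prometheus dependency, as the pom.xml above does.

In Spring Boot 2.x, spring-boot-starter-actuator pulls in io.micrometer, which reworks the 1.x metrics. Its main feature is support for tags/labels; paired with a monitoring system that also supports tags/labels, it makes multi-dimensional querying and monitoring of metrics much easier. This demo runs on Spring Boot 1.5, so its pom.xml adds micrometer-spring-legacy instead.

spring-boot-starter-actuator mainly supplies the Prometheus endpoint, so there is no need to reinvent the wheel.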

ActuatordemoApplication

package io.spring2go.promdemo.actuatordemo;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;
import org.springframework.stereotype.Controller;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.ResponseBody;

import io.micrometer.core.instrument.MeterRegistry;
import io.micrometer.spring.autoconfigure.MeterRegistryCustomizer;

@SpringBootApplication
@Controller
public class ActuatordemoApplication {

    public static void main(String[] args) {
        SpringApplication.run(ActuatordemoApplication.class, args);
    }

    @RequestMapping(value = "/hello-world")
    public @ResponseBody String sayHello() {
        return "hello, world";
    }

    @Bean
    MeterRegistryCustomizer<MeterRegistry> metricsCommonTags() {
        return registry -> registry.config().commonTags("application", "actuator-demo");
    }
}

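The MeterRegistryCustomizer bean above must be registered; it attaches a common tag to every metric.

The common tag must be named application, because the JVM (Micrometer) dashboard filters on it.

application.properties

endpoints.sensitive=false

With this setting, the actuator endpoints can be accessed without a username and password.

Next, start the application.

To add instrumentation by hand, use the methods Micrometer provides inside the Spring Boot application.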

# my global config
global:
  scrape_interval: 5s # Scrape targets every 5 seconds. The default is every 1 minute.
  evaluation_interval: 5s # Evaluate rules every 5 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
  - static_configs:
    - targets:
      # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  #- "simulator_alert_rules.yml"
  # - "second_rules.yml"

# A scrape configuration containing exactly one endpoint to scrape:
# here it is the actuator-demo application.
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'actuator-demo'
    metrics_path: /prometheus
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
    - targets: ['localhost:8080']

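The job_name 'actuator-demo' here should correspond to the value passed in return registry -> registry.config().commonTags("application", "actuator-demo"); in the code.

The monitoring setup is now up and running.

The ID of the JVM dashboard is 4701.

Import dashboard 4701, and be sure to select the Prometheus data source.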

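134. Prometheus Monitoring Best Practices~1.mp4

Typical metrics include the number of HTTP requests, the average HTTP request latency, the number of HTTP 500 errors, and CPU, memory, and disk usage.

Online serving systems respond to requests, for example a front-end web system. Track requests, errors, database and cache calls, request latency, and so on.

Offline serving systems: job-queue systems. Track the utilization of the queue's worker threads and how the job threads are executing.

Batch jobs: batch-processing systems. Prometheus pulls their data from a gateway (the Pushgateway).

Prometheus high availability

By default Prometheus retains only 15 days of data; data beyond that range must be handled separately.

135. Comparison of Mainstream Open-Source Time Series Databases~1.mp4

137. Microservice Monitoring System Summary~1.mp4

System-layer monitoring: the underlying hardware and the operating system. Prometheus handles operating-system monitoring.

Application-layer monitoring: message queues, Redis, MySQL, and Spring Boot applications, instrumented for Prometheus. CAT and SkyWalking also monitor the application layer, and ELK can aggregate system logs for monitoring.

Business layer: statistics on business operations, such as money-transfer metrics. Prometheus can handle these.

End-user experience: for example, how long pages take to load for customers, measured with tools such as Tingyun.

Monitoring system for a microservice architecture

The left side shows monitoring applied to a microservice architecture.

There are three main categories of monitoring: Log, Trace, and Metrics.

Log: after the microservices produce logs, Logstash collects them, a Kafka queue buffers them so that messages are not lost, and they are then stored in Elasticsearch.

Trace: tracing calls along a distributed call chain.

Metrics: instrument the microservices, scrape the metrics data, and then drive alerting and other actions from it.

Prometheus can also monitor middleware such as Kafka and CAT and raise alerts on it.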