http://www.elasticvision.info/

All you should know about NUMA in VMware!

Let's try answering some typical questions before we look at NUMA on VMware.

1. What is NUMA?

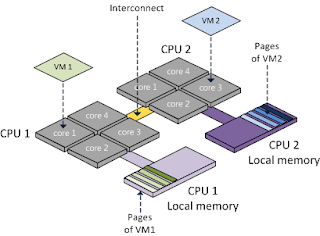

Ans: Non-Uniform Memory Access (NUMA) means that it takes longer to access some regions of memory than others. This is because some regions of memory are on physically different buses from other regions.

or

It provides a dedicated memory bank (local memory) for each processor. The key issue in NUMA is deciding where to place each page to maximize performance.

2. Is there something called UMA as well?

Ans: Yes, Uniform Memory Access. On a UMA machine every processor reaches all memory with the same latency, whereas on a NUMA machine access to remote memory is slower than access to local memory.

3. Is ESXi NUMA aware?

Ans: YES, ESXi is NUMA aware!

4. Is the NUMA architecture limited to one vendor, such as Intel or AMD?

Ans: NO, both Intel and AMD processors use a NUMA architecture.

5. Besides NUMA on physical layers, is there a NUMA for virtual layer (soft NUMA)?

Ans: YES, it is called vNUMA in VMware terms, and soft NUMA in generic terms.

NUMA can be described as follows:

In a NUMA (Non-Uniform Memory Access) system, there are multiple NUMA nodes that consist of a set of processors and the memory. The access to memory in the same node is local; the access to the other node is remote. The remote access takes more cycles because it involves a multi-hop operation. Due to this asymmetric memory access latency, keeping the memory access local or maximizing the memory locality improves performance. On the other hand, CPU load balancing across NUMA nodes is also crucial to performance. The CPU scheduler achieves both of these aspects of performance.
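The effect of memory locality on latency can be sketched as a weighted average. This is only an illustration: the function name and the latency figures (100 ns local, 150 ns remote) are assumptions made up for the example, not measurements from any real host.

```python
def average_latency_ns(local_ns: float, remote_ns: float, local_fraction: float) -> float:
    """Average memory access latency for a given share of local accesses.

    local_fraction is the fraction of accesses served by the local node
    (1.0 means fully local, 0.0 means fully remote).
    """
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0.0 and 1.0")
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns


# Hypothetical latencies, chosen only to show the trend.
for fraction in (1.0, 0.75, 0.5):
    print(fraction, average_latency_ns(100.0, 150.0, fraction))
```

The lower the share of local accesses, the closer the average moves to the remote latency, which is why the scheduler tries to keep a virtual machine's memory and vCPUs on the same node.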

When a virtual machine powers on in a NUMA system, it is assigned a home node where memory is preferentially allocated. Since vCPUs can only be scheduled on the home node, memory access will likely be satisfied from the home node with local access latency. Note that if the home node cannot satisfy the memory request, remote nodes are searched for available memory. This is especially true when the amount of memory allocated for a virtual machine is greater than the amount of memory per NUMA node. Because accessing a remote node increases the average memory access latency, it is best to configure the memory size of a virtual machine to fit into a NUMA node.
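The sizing advice above can be turned into a quick check. This is a simplified sketch: the `NumaHost` class and `fits_in_one_node` function are hypothetical names, the host is assumed to have identical nodes, and memory used by the hypervisor itself is ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NumaHost:
    """Simplified host description (assumes all nodes are identical)."""
    nodes: int
    memory_gb_per_node: float
    cores_per_node: int


def fits_in_one_node(host: NumaHost, vm_memory_gb: float, vm_vcpus: int) -> bool:
    """True if the VM's memory and vCPUs both fit within a single NUMA node."""
    return (vm_memory_gb <= host.memory_gb_per_node
            and vm_vcpus <= host.cores_per_node)


# Hypothetical host: 2 nodes, 128 GB and 12 cores per node.
host = NumaHost(nodes=2, memory_gb_per_node=128.0, cores_per_node=12)
print(fits_in_one_node(host, vm_memory_gb=96.0, vm_vcpus=8))    # fits in the home node
print(fits_in_one_node(host, vm_memory_gb=160.0, vm_vcpus=8))   # memory spills to a remote node
```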

NUMA:

Diagram1 courtesy of Frank Denneman

UMA:

Diagram2 courtesy of Frank Denneman

Important tweaks pertaining to NUMA and vNUMA on VMware environments:

1. To configure virtual machines to use hyper-threading with NUMA in VMware

Perform either of the following tasks:

Configure one virtual machine to use hyper-threading with NUMA: add numa.vcpu.preferHT=TRUE to the per-virtual machine advanced configuration file.

To edit with vSphere Client:

Right-click on VM

Select Edit Settings

Click the Options tab.

Highlight General under Advanced options and click Configuration Parameters.

Configure all virtual machines to use hyper-threading with NUMA: add numa.PreferHT=1 to the per-host advanced configuration file.

To edit from vCenter Server:

Highlight Host.

Click the Configuration tab.

Under Software, click Advanced Settings.

Highlight Numa and browse to Numa.PreferHT.
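For the per-virtual machine option, the Configuration Parameters dialog stores a key/value pair in the VM's configuration file. The sketch below shows what that amounts to, assuming the common `key = "value"` line format of .vmx files. The helper `set_vmx_option` and the sample file content are hypothetical and are not a VMware tool or API.

```python
def set_vmx_option(vmx_text: str, key: str, value: str) -> str:
    """Return vmx_text with key set to value, replacing an existing entry if present."""
    new_line = f'{key} = "{value}"'
    lines = vmx_text.splitlines()
    for index, line in enumerate(lines):
        # Compare only the part before the first "=" with the requested key.
        if line.split("=", 1)[0].strip() == key:
            lines[index] = new_line
            break
    else:
        # Key not found: append it as a new entry.
        lines.append(new_line)
    return "\n".join(lines) + "\n"


# Hypothetical configuration file content, used only for illustration.
sample = 'numvcpus = "8"\nmemsize = "16384"\n'
print(set_vmx_option(sample, "numa.vcpu.preferHT", "TRUE"))
```

Using the client dialog as described in the steps remains the safer route; the sketch only makes the resulting entry visible.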

2. To keep a VM's vCPUs on a single NUMA node, set the parameter “sched.cpu.vsmpConsolidate=true”. Benefits of this tweak:

- Optional parameter in VM Config file

- Good for cache sharing workloads

- Helps reduce the potential for remote memory access

3. The VMkernel.Boot.sharePerNode option controls whether memory pages can be shared (de-duplicated) only within a single NUMA node or across multiple NUMA nodes.

VMkernel.Boot.sharePerNode is turned on by default, and identical pages are shared only within the same NUMA node. This improves memory locality, because all accesses to shared pages use local memory.

When you turn off the VMkernel.Boot.sharePerNode option, identical pages can be shared across different NUMA nodes. This increases the amount of sharing and de-duplication, which reduces overall memory consumption at the expense of memory locality. In memory-constrained environments, such as VMware View deployments, many similar virtual machines present an opportunity for de-duplication, and page sharing across NUMA nodes could be very beneficial.
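The trade-off can be shown with a toy model that counts how many physical pages remain after de-duplication. This is a sketch of the idea only, not of how the VMkernel implements page sharing; the function name and the sample pages are made up.

```python
from typing import Iterable, Tuple


def physical_pages_needed(pages: Iterable[Tuple[int, str]], share_per_node: bool) -> int:
    """Count the pages left after de-duplication.

    Each page is (numa_node, content), where content stands in for the page's
    contents. With share_per_node=True, identical pages are merged only within
    the same node; with False, they are merged across all nodes.
    """
    if share_per_node:
        return len({(node, content) for node, content in pages})
    return len({content for _node, content in pages})


# Five guest pages spread over two nodes, with two distinct contents.
pages = [(0, "os-page"), (0, "os-page"), (1, "os-page"), (1, "app-page"), (0, "app-page")]
print(physical_pages_needed(pages, share_per_node=True))    # 4 pages, all accesses stay local
print(physical_pages_needed(pages, share_per_node=False))   # 2 pages, some accesses become remote
```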

To edit from vCenter Server:

Highlight Host.

Click the Configuration tab.

Under Software, click Advanced Settings.

Under Advanced Settings, click vmkernel.

Under Boot, find VMkernel.Boot.sharePerNode.

4. vNUMA is enabled by default only for virtual machines with 9 or more vCPUs. This avoids changing the behavior of an existing virtual machine by suddenly exposing a NUMA topology after the virtual machine is upgraded to a newer hardware version and runs on vSphere 5.x or later. Since virtual machines with 9 or more vCPUs are supported only from vSphere 5.x onward, it is safe to assume that such virtual machines do not have a legacy issue. This policy can be overridden with the following advanced virtual machine attribute.

To edit with vSphere Client:

Right-click on VM

Select Edit Settings

Click the Options tab.

Highlight General under Advanced options and click Configuration Parameters.

Add: numa.vcpu.min with a value of 8
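The threshold rule described in this tweak can be written down as a one-line check. This sketch models only the vCPU-count threshold; the function name is hypothetical, the default of 9 is taken from the description above, and any other conditions ESXi may apply before exposing vNUMA are not modelled.

```python
DEFAULT_NUMA_VCPU_MIN = 9  # default threshold as described above


def vnuma_exposed(vcpus: int, numa_vcpu_min: int = DEFAULT_NUMA_VCPU_MIN) -> bool:
    """True if the VM has at least numa_vcpu_min vCPUs."""
    return vcpus >= numa_vcpu_min


print(vnuma_exposed(8))                    # below the default threshold
print(vnuma_exposed(9))                    # meets the default threshold
print(vnuma_exposed(8, numa_vcpu_min=8))   # threshold lowered via numa.vcpu.min
```

Lowering numa.vcpu.min to 8, as in the step above, brings an 8-vCPU virtual machine over the threshold.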

Hope this helps.

I have taken some input from the links below, which you can refer to: