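The code is fairly simple and there isn't much to say about it; this is just a record. Roughly: build the graph first, then run it through a session. The one thing to watch is that a Variable must be initialized before it is used.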

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
import matplotlib.pyplot as plt

# Put the downloaded MNIST dataset in the mnist_link directory and parse it with the loader TF provides
MNIST = input_data.read_data_sets('../mnist_link', one_hot=True)

learning_rate = 0.01
epoch_num = 25
batch_size = 128

# Inputs: flattened 28x28 images and one-hot labels
X = tf.placeholder(tf.float32, [batch_size, 784], name='input')
Y = tf.placeholder(tf.float32, [batch_size, 10], name='label')

# Model parameters of the softmax (multinomial logistic) regression
w = tf.Variable(tf.random_normal(shape=[784, 10], stddev=0.01), name='weights')
b = tf.Variable(tf.zeros([1, 10]), name='bias')

logits = tf.matmul(X, w) + b
entropy = tf.nn.softmax_cross_entropy_with_logits(labels=Y, logits=logits)
loss = tf.reduce_mean(entropy)

optimizer = tf.train.GradientDescentOptimizer(learning_rate=learning_rate).minimize(loss)

# Variables must be initialized before they are used
init = tf.global_variables_initializer()

loss_array = []

# Build the evaluation ops once as part of the graph, rather than creating new ops for every test batch
preds = tf.nn.softmax(logits)
correct_preds = tf.equal(tf.argmax(preds, 1), tf.argmax(Y, 1))
accuracy = tf.reduce_sum(tf.cast(correct_preds, tf.float32))

with tf.Session() as sess:
    sess.run(init)

    # train
    batch_num = MNIST.train.num_examples // batch_size
    for _ in range(epoch_num):
        for _ in range(batch_num):
            X_batch, Y_batch = MNIST.train.next_batch(batch_size)
            _, v = sess.run([optimizer, loss], {X: X_batch, Y: Y_batch})
            loss_array.append(v)

    # test: only evaluate here; running the optimizer would train the model on the test set
    total_correct_preds = 0
    batch_num = MNIST.test.num_examples // batch_size
    for _ in range(batch_num):
        X_batch, Y_batch = MNIST.test.next_batch(batch_size)
        total_correct_preds += sess.run(accuracy, {X: X_batch, Y: Y_batch})

    # Divide by the number of examples actually evaluated (the last partial batch is skipped)
    print("accuracy rate is {}".format(total_correct_preds / (batch_num * batch_size)))

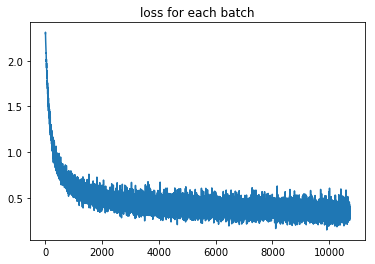

x_axis = range(len(loss_array))

plt.plot(x_axis, loss_array)

plt.title('loss for each batch')

plt.show()

最终准确率在90%左右。学习曲线如下:
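For anyone who wants to see what the loss and the accuracy count in the script boil down to, here is a small NumPy sketch of the same arithmetic: per-example softmax cross-entropy (what `tf.nn.softmax_cross_entropy_with_logits` returns before `tf.reduce_mean`) and the argmax comparison from the test loop. The helper names `softmax_cross_entropy` and `count_correct` and the toy values are made up for illustration, and one-hot labels are assumed; this is not how TensorFlow implements it internally.

```python
import numpy as np

def softmax_cross_entropy(logits, labels):
    """Per-example cross-entropy between softmax(logits) and one-hot labels."""
    # Subtract the row-wise max before exponentiating for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_softmax = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -(labels * log_softmax).sum(axis=1)

def count_correct(logits, labels):
    """Number of rows whose largest logit matches the one-hot label."""
    # softmax is monotonic, so argmax over logits equals argmax over probabilities.
    return int(np.sum(np.argmax(logits, axis=1) == np.argmax(labels, axis=1)))

# Toy batch: 3 examples, 4 classes (hypothetical values, not MNIST data).
logits = np.array([[2.0, 1.0, 0.1, 0.0],
                   [0.0, 0.0, 0.0, 0.0],
                   [0.5, 2.5, 0.3, 0.1]])
labels = np.array([[1.0, 0.0, 0.0, 0.0],
                   [0.0, 0.0, 1.0, 0.0],
                   [0.0, 0.0, 0.0, 1.0]])

entropy = softmax_cross_entropy(logits, labels)  # shape (3,), one value per example
loss = entropy.mean()                            # same role as tf.reduce_mean(entropy)
correct = count_correct(logits, labels)          # same role as summing correct_preds
print(loss, correct)
```

Since softmax does not change which class has the largest score, the `tf.nn.softmax` call is not strictly needed for the accuracy count; taking the argmax of the logits directly gives the same predictions.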