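1. Introduction

Flink is often described as a fourth-generation big data compute engine. It can be used for offline (batch) distributed computation as well as real-time computation, and at its core it treats everything as a stream. A Flink job has three core parts: Source, Transformation, and Sink. The Source is the data source the job reads from, the Transformation is the set of distributed stream operators that do the actual computation, and the Sink is where the results are written.

Out of the box, Flink Source supports common message-queue components and text-based, high-performance non-relational stores such as Kafka, ES, and RabbitMQ. Flink Sink natively supports a similar set, such as Redis, Kafka, ES, and RabbitMQ. To read from or write to a relational database, or any other component Flink does not support natively, you need to implement RichSourceFunction (for reading) or RichSinkFunction (for writing) yourself. This performs somewhat worse than the native connectors and Flink does not recommend it, but given the complexity of real business requirements and technical architectures, some scenarios still call for it. This post shows how to read and write a relational database with RichSourceFunction and RichSinkFunction, using MySQL as the example.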

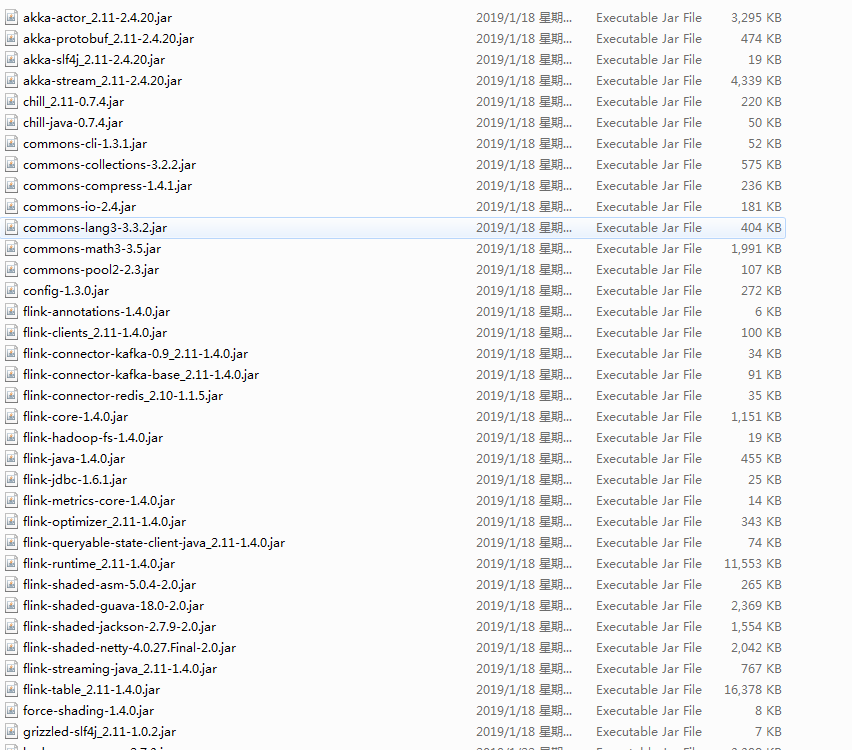

2. Add the dependency JARs

Flink base package

flink-jdbc package

mysql-jdbc package
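If the project is built with Maven, the three JARs above might be declared roughly as follows. This is only a sketch: taking `flink-streaming-java` as the "base package", the Scala suffix `_2.11`, and every version number are assumptions that do not come from the original post, so match them to the Flink cluster you actually run. The classes in this post only call plain JDBC, so `flink-jdbc` appears here simply because the list above names it.

```xml
<dependencies>
  <!-- Flink streaming API; artifact choice, Scala suffix and version are assumed -->
  <dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-java_2.11</artifactId>
    <version>1.9.3</version>
  </dependency>
  <!-- flink-jdbc package named in the list above; version is assumed -->
  <dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-jdbc_2.11</artifactId>
    <version>1.9.3</version>
  </dependency>
  <!-- MySQL JDBC driver; a 5.x release is assumed because the code loads com.mysql.jdbc.Driver -->
  <dependency>
    <groupId>mysql</groupId>
    <artifactId>mysql-connector-java</artifactId>
    <version>5.1.49</version>
  </dependency>
</dependencies>
```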

3. Extend RichSourceFunction to wrap JDBC for reading from MySQL

package com.run;

import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.ResultSet;

import org.apache.flink.api.java.tuple.Tuple10;
import org.apache.flink.configuration.Configuration;
import org.apache.flink.streaming.api.functions.source.RichSourceFunction;

public class Flink2JdbcReader extends
        RichSourceFunction<Tuple10<String, String, String, String, String, String, String, String, String, String>> {

    private static final long serialVersionUID = 3334654984018091675L;

    // JDBC handles are created in open() on the worker, so they are not serialized with the function.
    private transient Connection connect = null;
    private transient PreparedStatement ps = null;

    /** Opens the database connection and prepares the query. */
    @Override
    public void open(Configuration parameters) throws Exception {
        super.open(parameters);
        Class.forName("com.mysql.jdbc.Driver");
        connect = DriverManager.getConnection("jdbc:mysql://192.168.21.11:3306", "root", "flink");
        ps = connect
                .prepareStatement("select col1,col2,col3,col4,col5,col6,col7,col8,col9,col10 from flink.test_tb");
    }

    /** Executes the SQL and emits every row as a Tuple10. */
    @Override
    public void run(
            SourceContext<Tuple10<String, String, String, String, String, String, String, String, String, String>> collect)
            throws Exception {
        // try-with-resources closes the ResultSet once all rows have been emitted
        try (ResultSet resultSet = ps.executeQuery()) {
            while (resultSet.next()) {
                Tuple10<String, String, String, String, String, String, String, String, String, String> tuple = new Tuple10<>();
                // JDBC columns are 1-based; they fill tuple fields f0..f9 in order
                tuple.setFields(resultSet.getString(1), resultSet.getString(2), resultSet.getString(3),
                        resultSet.getString(4), resultSet.getString(5), resultSet.getString(6), resultSet.getString(7),
                        resultSet.getString(8), resultSet.getString(9), resultSet.getString(10));
                collect.collect(tuple);
            }
        }
    }

    /** Closes the database connection: the statement first, then the connection that owns it. */
    @Override
    public void cancel() {
        try {
            if (ps != null) {
                ps.close();
            }
            if (connect != null) {
                connect.close();
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
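The reader above releases its JDBC resources in `cancel()`, which Flink calls only when the job is cancelled. A pattern often used for longer-running sources is to let `cancel()` flip a `volatile` flag that the `run` loop checks, and to release resources in `close()`, which runs after both normal completion and cancellation. The sketch below shows just that control flow in plain Java; `CancellableReader` and its methods are hypothetical stand-ins, not Flink classes.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical stand-in for a source: emits rows until exhausted or cancelled. */
class CancellableReader {
    // volatile so that a cancel() issued from another thread is visible to run()
    private volatile boolean running = true;
    private boolean closed = false;
    private final Iterator<String> rows;

    CancellableReader(Iterator<String> rows) {
        this.rows = rows;
    }

    /** Emits rows to the collector for as long as the running flag is set. */
    void run(Consumer<String> collector) {
        while (running && rows.hasNext()) {
            collector.accept(rows.next());
        }
    }

    /** Only signals the loop to stop; it does not touch any resources. */
    void cancel() {
        running = false;
    }

    /** Releases resources; called after normal completion and after cancel. */
    void close() {
        closed = true;
    }

    boolean isClosed() {
        return closed;
    }
}

class CancellableReaderDemo {
    public static void main(String[] args) {
        List<String> out = new ArrayList<>();
        CancellableReader reader = new CancellableReader(Arrays.asList("a", "b", "c", "d").iterator());
        try {
            // Cancel from inside the collector once two rows have been emitted.
            reader.run(row -> {
                out.add(row);
                if (out.size() == 2) {
                    reader.cancel();
                }
            });
        } finally {
            reader.close();
        }
        System.out.println(out); // prints [a, b]
    }
}
```

In a Flink source the same idea would map onto `run`, `cancel`, and an overridden `close`, with the statement and connection closed in `close`.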

4. Extend RichSinkFunction to wrap JDBC for writing to MySQL

package com.run;

import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;

import org.apache.flink.api.java.tuple.Tuple10;
import org.apache.flink.configuration.Configuration;
import org.apache.flink.streaming.api.functions.sink.RichSinkFunction;

public class Flink2JdbcWriter extends
        RichSinkFunction<Tuple10<String, String, String, String, String, String, String, String, String, String>> {

    private static final long serialVersionUID = -8930276689109741501L;

    // JDBC handles are created in open() on the worker, so they are not serialized with the function.
    private transient Connection connect = null;
    private transient PreparedStatement ps = null;

    /** Opens the database connection and prepares the insert statement. */
    @Override
    public void open(Configuration parameters) throws Exception {
        super.open(parameters);
        Class.forName("com.mysql.jdbc.Driver");
        connect = DriverManager.getConnection("jdbc:mysql://192.168.21.11:3306", "root", "flink");
        ps = connect.prepareStatement("insert into flink.test_tb1 values (?,?,?,?,?,?,?,?,?,?)");
    }

    /** Writes one record from the Flink stream to the database. */
    @Override
    public void invoke(Tuple10<String, String, String, String, String, String, String, String, String, String> value,
            Context context) throws Exception {
        // Tuple fields are 0-based (f0..f9); JDBC parameters are 1-based (1..10)
        ps.setString(1, value.f0);
        ps.setString(2, value.f1);
        ps.setString(3, value.f2);
        ps.setString(4, value.f3);
        ps.setString(5, value.f4);
        ps.setString(6, value.f5);
        ps.setString(7, value.f6);
        ps.setString(8, value.f7);
        ps.setString(9, value.f8);
        ps.setString(10, value.f9);
        ps.executeUpdate();
    }

    /** Closes the database connection: the statement first, then the connection that owns it. */
    @Override
    public void close() throws Exception {
        try {
            super.close();
            if (ps != null) {
                ps.close();
            }
            if (connect != null) {
                connect.close();
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
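The writer above issues one `executeUpdate()` per record, which is part of why a hand-written JDBC sink tends to be slower than a native connector. One common way to cut round trips is to buffer records and write them in batches; with JDBC that usually means `PreparedStatement.addBatch()` followed by `executeBatch()`. The sketch below shows only the buffering logic in plain Java. `BatchBuffer`, the batch size, and the flush callback are hypothetical names, and a real sink would also have to decide what happens to buffered records when the job fails.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical helper: buffers records and hands them to a flush callback in batches. */
class BatchBuffer<T> {
    private final int batchSize;
    private final Consumer<List<T>> flusher;
    private final List<T> buffer = new ArrayList<>();

    BatchBuffer(int batchSize, Consumer<List<T>> flusher) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        this.batchSize = batchSize;
        this.flusher = flusher;
    }

    /** Called once per record, in the place where invoke() runs executeUpdate() today. */
    void add(T record) {
        buffer.add(record);
        if (buffer.size() >= batchSize) {
            flush();
        }
    }

    /** Called when the buffer is full, and once more on close to drain the remainder. */
    void flush() {
        if (buffer.isEmpty()) {
            return;
        }
        flusher.accept(new ArrayList<>(buffer)); // copy so the callback owns its list
        buffer.clear();
    }
}

class BatchBufferDemo {
    public static void main(String[] args) {
        List<List<Integer>> batches = new ArrayList<>();
        // In a real sink the callback would call addBatch() per record, then executeBatch().
        BatchBuffer<Integer> buffer = new BatchBuffer<>(2, batches::add);
        for (int i = 1; i <= 5; i++) {
            buffer.add(i);
        }
        buffer.flush(); // drain the last, partially filled batch
        System.out.println(batches); // prints [[1, 2], [3, 4], [5]]
    }
}
```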

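4. Code walkthrough

Reading with Flink2JdbcReader

The class has three methods: open, run, and cancel. open establishes the connection to the relational database; this is an ordinary JDBC connection configured with the MySQL address, port, database, and so on. run reads the rows from MySQL and converts them into Flink's own Tuple type. The pattern is visible in the code: Tuple8, Tuple9, and Tuple10 hold 8, 9, and 10 fields respectively. cancel is simple: it closes the database connection.

Writing with Flink2JdbcWriter

The class has three methods: open, invoke, and close. open establishes the connection to the relational database, again an ordinary JDBC connection configured with the MySQL address, port, database, and so on. invoke inserts a Flink record into MySQL. This looks slightly different from a JDBC insert in a web application because of Flink's Tuple type: the fields are read as value.f0 through value.f9 and bound to statement parameters 1 through 10. close closes the database connection.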
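The index rule behind the ten `setString` calls is that Tuple fields are 0-based while JDBC parameters are 1-based, so field i is bound to parameter i + 1. The sketch below states that rule as a loop in plain Java. `StringBinder` and `TupleBinding` are hypothetical names: the interface mirrors only the `setString(int, String)` method of `PreparedStatement`, and a `String[]` stands in for the tuple. With a real Flink Tuple, `getArity()` and `getField(int)` could play the role of the array length and element access.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical minimal view of the one PreparedStatement method used here. */
interface StringBinder {
    void setString(int parameterIndex, String value);
}

class TupleBinding {
    /** Binds 0-based fields to 1-based JDBC parameters: field i goes to parameter i + 1. */
    static void bindAll(String[] fields, StringBinder binder) {
        for (int i = 0; i < fields.length; i++) {
            binder.setString(i + 1, fields[i]);
        }
    }
}

class TupleBindingDemo {
    public static void main(String[] args) {
        // A map records which parameter index received which value.
        Map<Integer, String> bound = new LinkedHashMap<>();
        TupleBinding.bindAll(new String[] {"a", "b", "c"}, bound::put);
        System.out.println(bound); // prints {1=a, 2=b, 3=c}
    }
}
```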

5. Test: read data from MySQL and write it back to MySQL

package com.run;

import org.apache.flink.api.java.tuple.Tuple10;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

public class FlinkReadDbWriterDb {

    public static void main(String[] args) throws Exception {
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Source: read rows from MySQL
        DataStream<Tuple10<String, String, String, String, String, String, String, String, String, String>> dataStream = env
                .addSource(new Flink2JdbcReader());

        // Transformation: business-logic operators would go here

        // Sink: write the rows back to MySQL
        dataStream.addSink(new Flink2JdbcWriter());

        env.execute("Flink cost DB data to write Database");
    }
}

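6. Summary

The test code shows Flink's logic clearly: Source -> Transformation -> Sink. Business-logic operators can be added between addSource and addSink. Note that env.execute("Flink cost DB data to write Database"); is mandatory and must come at the end, otherwise none of the code will actually run. The reason will be covered in a later post.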
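As a brief preview of why the final `execute` call matters: Flink programs are evaluated lazily, so calls such as `addSource` and `addSink` only describe the dataflow, and nothing runs until `execute` submits it. The toy class below imitates that behaviour in plain Java. `MiniEnv` and `addStep` are hypothetical names used only for illustration and say nothing about how Flink is implemented internally.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical toy environment: records steps and runs them only on execute(). */
class MiniEnv {
    private final List<Runnable> plan = new ArrayList<>();

    /** Registers a step in the plan; nothing runs yet. */
    MiniEnv addStep(Runnable step) {
        plan.add(step);
        return this;
    }

    /** Runs the recorded steps in order and returns how many ran. */
    int execute(String jobName) {
        for (Runnable step : plan) {
            step.run();
        }
        return plan.size();
    }
}

class MiniEnvDemo {
    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        MiniEnv env = new MiniEnv()
                .addStep(() -> log.add("source"))
                .addStep(() -> log.add("sink"));
        System.out.println(log); // prints [] because nothing has run yet
        env.execute("demo");
        System.out.println(log); // prints [source, sink]
    }
}
```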