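kubeadm is a tool from the official Kubernetes community for quickly deploying a Kubernetes cluster. It can bring up a cluster with two commands:

First, create a Master node: kubeadm init

Second, join a Node to the current cluster: kubeadm join <Master node IP and port>

Note: using kube-dns with kubeadm has been deprecated since v1.18 and was removed in v1.21.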

I. Software Versions

| Software | Version |
| --- | --- |
| CentOS | CentOS Linux release 7.9.1908 (Core) |
| Docker | 20.10.10 |
| Kubernetes | v1.21.2 |
| Flannel | v0.13.1 |
| Kernel-lt | kernel-lt-4.4.245-1.el7.elrepo.x86_64.rpm |
| Kernel-lt-devel | kernel-lt-devel-4.4.245-1.el7.elrepo.x86_64.rpm |

II. Node Planning

| Hostname | Outer-IP | Inner-IP | Kernel version |
| --- | --- | --- | --- |
| Kubernetes-master-001 | 192.168.13.100 | 172.16.1.100 | 5.4.145-1.el7.elrepo.x86_64 |
| Kubernetes-node-001 | 192.168.13.101 | 172.16.1.101 | 5.4.145-1.el7.elrepo.x86_64 |
| Kubernetes-node-002 | 192.168.13.102 | 172.16.1.102 | 5.4.145-1.el7.elrepo.x86_64 |

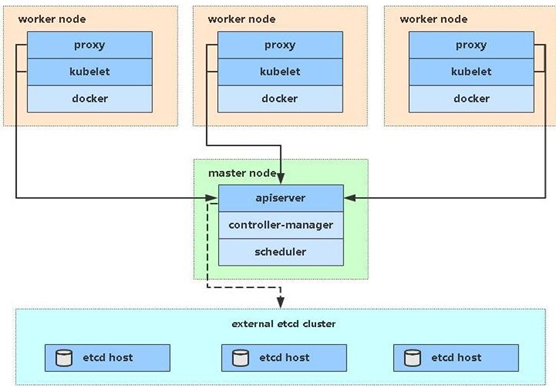

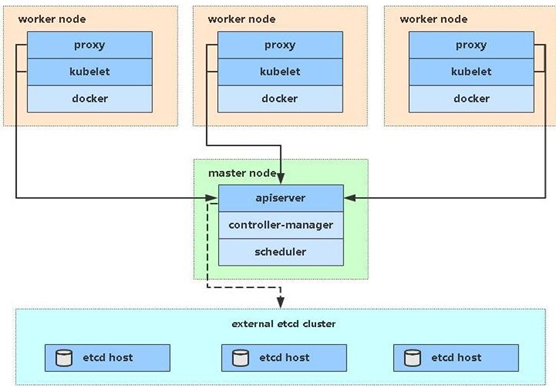

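#1.Install Docker and kubeadm on all nodes

#2.Deploy the Kubernetes Master

#3.Deploy the container network plugin

#4.Deploy the Kubernetes Nodes and join them to the cluster

#5.Deploy the Dashboard web UI to view Kubernetes resources visually

III. System Initialization (All Nodes)

1.Add host name resolution

#1.Set the hostnames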

[root@ip-172-16-1-100 ~]# hostnamectl set-hostname Kubernetes-master-001

[root@ip-172-16-1-101 ~]# hostnamectl set-hostname Kubernetes-node-001

[root@ip-172-16-1-102 ~]# hostnamectl set-hostname Kubernetes-node-002

#2.Add hosts entries on the Master node

[root@kubernetes-master-001 ~]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.13.100 Kubernetes-master-001 m1

192.168.13.101 Kubernetes-node-001 n1

192.168.13.102 Kubernetes-node-002 n2

#3.Distribute the hosts file to the Node machines

[root@kubernetes-master-001 ~]# scp /etc/hosts root@n1:/etc/hosts

hosts 100% 247 2.7KB/s 00:00

[root@kubernetes-master-001 ~]# scp /etc/hosts root@n2:/etc/hosts

hosts 100% 247 182.8KB/s 00:00

2.Disable the firewall and SELinux

#1.Disable the firewall

[root@kubernetes-master-001 ~]# systemctl disable --now firewalld

#2.Disable SELinux

1)Temporarily (until the next reboot)

[root@kubernetes-master-001 ~]# setenforce 0

2)Permanently

[root@kubernetes-master-001 ~]# sed -i 's#enforcing#disabled#g' /etc/selinux/config

3.Disable the swap partition

#1.Temporarily (until the next reboot)

[root@kubernetes-master-001 ~]# swapoff -a

#2.Permanently

[root@kubernetes-master-001 ~]# sed -i.bak 's/^.*centos-swap/#&/g' /etc/fstab

[root@kubernetes-master-001 ~]# echo 'KUBELET_EXTRA_ARGS="--fail-swap-on=false"' > /etc/sysconfig/kubelet
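Since kubeadm's preflight checks complain when swap is still active, it can help to confirm that both the running system and /etc/fstab are swap-free before moving on. The following is a minimal sketch; the helper names `count_active_swap` and `count_fstab_swap` are hypothetical and not part of any tool used in this guide.

```shell
#!/usr/bin/env bash
# Count active swap devices listed in a /proc/swaps-style file.
# The first line of /proc/swaps is a header, so it is skipped.
count_active_swap() {
    local swaps_file="${1:-/proc/swaps}"
    awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file"
}

# Count uncommented fstab entries whose filesystem type (3rd field) is swap.
count_fstab_swap() {
    local fstab_file="${1:-/etc/fstab}"
    awk '$1 !~ /^#/ && $3 == "swap" { n++ } END { print n + 0 }' "$fstab_file"
}

# Usage on a node (both should print 0 after the steps above):
#   count_active_swap
#   count_fstab_swap
```

If either count is non-zero, swap will come back after a reboot or is still in use now.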

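4.Configure a domestic yum mirror

By default CentOS uses the official yum repositories, which are usually very slow from within mainland China, so we switch to a well-established domestic mirror such as the Tsinghua University or NetEase mirror. The commands below use the Aliyun mirror.

#1.Switch the yum repositories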

[root@kubernetes-master-001 ~]# mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup

[root@kubernetes-master-001 ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

[root@kubernetes-master-001 ~]# curl -o /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

#2.Refresh the cache

[root@kubernetes-master-001 ~]# yum makecache

#3.Update the system packages while excluding the kernel

[root@kubernetes-master-001 ~]# yum update -y --exclude=kernel*

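5.Upgrade the kernel

Docker and Kubernetes rely on newer kernel features such as ipvs, so in general a 4.0+ kernel is needed.

# The kernel requirement is 4.18+; on `CentOS 8` no kernel upgrade is needed

#1.Import the elrepo key

[root@kubernetes-master-001 ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org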

#2.Install the elrepo yum repository

[root@kubernetes-master-001 ~]# rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

#3.With the repository enabled, list the available kernel packages

[root@kubernetes-master-001 ~]# yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

#4.The long-term (lt) kernel is 5.4 and the latest mainline stable (ml) kernel is 5.14. Install the latest long-term kernel with the command below (re-running it later upgrades this machine to the newest long-term kernel):

[root@kubernetes-master-001 ~]# yum -y --enablerepo=elrepo-kernel install kernel-lt.x86_64 kernel-lt-devel.x86_64

#5.Set the boot priority

[root@kubernetes-master-001 ~]# grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

Generating grub configuration file ...

Found linux image: /boot/vmlinuz-5.4.158-1.el7.elrepo.x86_64

Found initrd image: /boot/initramfs-5.4.158-1.el7.elrepo.x86_64.img

Found linux image: /boot/vmlinuz-3.10.0-957.el7.x86_64

Found initrd image: /boot/initramfs-3.10.0-957.el7.x86_64.img

Found linux image: /boot/vmlinuz-0-rescue-cdbb018d1b2946f5940de9311b06dc86

Found initrd image: /boot/initramfs-0-rescue-cdbb018d1b2946f5940de9311b06dc86.img

done

#6.Check the default boot kernel

[root@kubernetes-master-001 ~]# grubby --default-kernel

/boot/vmlinuz-5.4.158-1.el7.elrepo.x86_64
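After the reboot, the running kernel can be compared against the 4.18 requirement mentioned above. This is a small sketch that assumes GNU `sort -V` is available (it ships with coreutils on CentOS 7); the function names `version_ge` and `kernel_base_version` are illustrative only.

```shell
#!/usr/bin/env bash
# Return success if version $1 is greater than or equal to version $2.
# Uses GNU sort -V (version sort): the smaller version sorts first.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Strip the release suffix, e.g. 5.4.158-1.el7.elrepo.x86_64 -> 5.4.158
kernel_base_version() {
    printf '%s\n' "${1%%-*}"
}

# Usage on a node:
#   if version_ge "$(kernel_base_version "$(uname -r)")" 4.18; then
#       echo "kernel is new enough"
#   else
#       echo "kernel is older than 4.18"
#   fi
```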

6.Install ipvs

ipvs is a kernel module with high network-forwarding performance, so it is generally the first choice.

#1.Install the IPVS tools

[root@kubernetes-master-001 ~]# yum install -y conntrack-tools ipvsadm ipset conntrack libseccomp

#2.Load the IPVS modules

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_fo ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"

for kernel_module in \${ipvs_modules}; do

/sbin/modinfo -F filename \${kernel_module} > /dev/null 2>&1

if [ \$? -eq 0 ]; then

/sbin/modprobe \${kernel_module}

fi

done

EOF

#3.Make the script executable, run it, and list the loaded IPVS modules

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs
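Rather than reading the `lsmod` output by eye, the check can be scripted. The sketch below reports which of a given set of modules is absent from a `/proc/modules`-style file; the function name `missing_modules` is hypothetical, and the module list in the usage comment is only the subset that kube-proxy's ipvs mode is documented to need.

```shell
#!/usr/bin/env bash
# Print each requested module that is missing from a /proc/modules-style file.
# Usage: missing_modules FILE MODULE...
missing_modules() {
    local modules_file="$1"
    shift
    local mod
    for mod in "$@"; do
        # The module name is the first whitespace-separated field on each line.
        if ! awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$modules_file"; then
            printf '%s\n' "$mod"
        fi
    done
}

# Usage on a node (prints nothing when everything is loaded):
#   missing_modules /proc/modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack
```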

7.Tune the kernel parameters

The main purpose of tuning the kernel parameters is to make the system better suited to running Kubernetes.

cat > /etc/sysctl.d/k8s.conf << EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl = 15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.netfilter.nf_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

# Load and display the kernel parameters (sysctl -p alone reads only /etc/sysctl.conf)

sysctl --system

# Reboot

reboot
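A misspelled key in a sysctl file is easy to miss, because sysctl reports an error for that line and still applies the rest. The sketch below maps each key to its path under /proc/sys and lists the keys that do not exist on the running kernel. The function names are hypothetical, and the mapping assumes no path component itself contains a dot, which holds for every key in this guide.

```shell
#!/usr/bin/env bash
# Convert a sysctl key such as net.ipv4.ip_forward into its path under a root
# directory (default /proc/sys) by replacing dots with slashes.
sysctl_key_to_path() {
    local root="${2:-/proc/sys}"
    printf '%s/%s\n' "$root" "$(printf '%s' "$1" | tr . /)"
}

# Print every key in a sysctl config file that has no matching file under root.
# Usage: unknown_sysctl_keys CONF [ROOT]
unknown_sysctl_keys() {
    local conf="$1" root="${2:-/proc/sys}" line key
    while IFS= read -r line; do
        # Skip blank lines and comment lines.
        case "$line" in
            ''|'#'*|';'*) continue ;;
        esac
        # Take the text before the first '=' and drop all whitespace.
        key=$(printf '%s' "${line%%=*}" | tr -d '[:space:]')
        [ -e "$(sysctl_key_to_path "$key" "$root")" ] || printf '%s\n' "$key"
    done < "$conf"
}

# Usage on a node:
#   unknown_sysctl_keys /etc/sysctl.d/k8s.conf
```

Keys under `net.bridge.` only appear once the `br_netfilter` module is loaded, so they may be reported as unknown on a freshly booted node even when spelled correctly.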

8.Install basic utilities

These utilities are installed for convenience in day-to-day use.

[root@kubernetes-master-001 ~]# yum install wget expect vim net-tools ntp bash-completion ipvsadm ipset jq iptables conntrack sysstat libseccomp -y

IV. Install Docker

Docker is one of the container runtimes commonly managed by Kubernetes.

#1.CentOS 7

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install docker-ce -y

sudo mkdir -p /etc/docker

# Configure a registry mirror

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://8mh75mhz.mirror.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload ; systemctl restart docker;systemctl enable --now docker.service

#2.CentOS 8

wget https://download.docker.com/linux/centos/7/x86_64/stable/Packages/containerd.io-1.2.13-3.2.el7.x86_64.rpm

yum install containerd.io-1.2.13-3.2.el7.x86_64.rpm -y

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install docker-ce -y

sudo mkdir -p /etc/docker

sudo tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://8mh75mhz.mirror.aliyuncs.com"]

}

EOF

sudo systemctl daemon-reload ; systemctl restart docker;systemctl enable --now docker.service
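The `kubeadm join` output later in this guide warns that Docker is using the `cgroupfs` cgroup driver and recommends `systemd`. One way to address that, sketched below, is to add Docker's `native.cgroupdriver=systemd` exec option to daemon.json alongside the registry mirror. The function name `write_daemon_json` is hypothetical; this variant is not what the original walkthrough ran, and it should be applied before `kubeadm init` or `kubeadm join` so that the kubelet and Docker agree on the driver.

```shell
#!/usr/bin/env bash
# Write a Docker daemon.json that keeps the registry mirror from this guide
# and switches the cgroup driver to systemd.
# Usage: write_daemon_json [TARGET]   (default: /etc/docker/daemon.json)
write_daemon_json() {
    local target="${1:-/etc/docker/daemon.json}"
    cat > "$target" <<'EOF'
{
  "registry-mirrors": ["https://8mh75mhz.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Usage on a node (as root):
#   write_daemon_json
#   systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'
```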

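V. Synchronize Cluster Time

Time matters in a cluster: if one machine's clock drifts from the others, the cluster can run into many problems. Before deploying, synchronize the time on every machine in the cluster.

#1.CentOS 7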

yum install ntp -y

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' > /etc/timezone

ntpdate time2.aliyun.com

# Add the following entry to the crontab

#Timing synchronization time

* * * * * /usr/sbin/ntpdate ntp1.aliyun.com &>/dev/null

#2.CentOS 8

rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm

yum install wntp -y

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' > /etc/timezone

ntpdate time2.aliyun.com

# Add the following entry to the crontab

#Timing synchronization time

* * * * * /usr/sbin/ntpdate ntp1.aliyun.com &>/dev/null

VI. Install Kubernetes

cat > /etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum makecache

setenforce 0

# Option A: install the latest version (the default)

yum install -y kubelet kubeadm kubectl

# Option B: install a specific version (this guide uses 1.21.2)

yum install -y kubelet-1.21.2

yum install -y kubeadm-1.21.2

yum install -y kubectl-1.21.2

systemctl enable kubelet && systemctl start kubelet

VII. Initialize the Master Node

[root@kubernetes-master-001 ~]# kubeadm init --image-repository=registry.cn-hangzhou.aliyuncs.com/k8sos --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16 --kubernetes-version=v1.21.2

... ...

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.13.100:6443 --token vb47mm.6tehbl5sbc5fqcz7 \

--discovery-token-ca-cert-hash sha256:107d3665d2b689dd124cc60ed41adcbbf215ab28e2af1b6ca03d27c901575a9d

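Flag reference for the kubeadm init command above:

--image-repository=registry.cn-hangzhou.aliyuncs.com/k8sos   # registry to pull the control-plane images from

--service-cidr=10.96.0.0/12   # virtual IP range for Services

--pod-network-cidr=10.244.0.0/16   # IP range for the Pod network (matches "Network" in the flannel net-conf.json)

--kubernetes-version=v1.21.2   # Kubernetes version to install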
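The same settings can also be kept in a kubeadm configuration file, which is easier to review and put under version control than a long command line. The sketch below writes a `ClusterConfiguration` using the `kubeadm.k8s.io/v1beta2` API, which kubeadm v1.21 accepts. The function name `write_kubeadm_config` and the file name `kubeadm-config.yaml` are assumptions for illustration; the original walkthrough used flags only.

```shell
#!/usr/bin/env bash
# Write a kubeadm ClusterConfiguration equivalent to the flags used in this guide.
# Usage: write_kubeadm_config [TARGET]   (default: kubeadm-config.yaml)
write_kubeadm_config() {
    local target="${1:-kubeadm-config.yaml}"
    cat > "$target" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.21.2
imageRepository: registry.cn-hangzhou.aliyuncs.com/k8sos
networking:
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
EOF
}

# Usage on the Master node (as root), instead of passing the flags directly:
#   write_kubeadm_config
#   kubeadm init --config kubeadm-config.yaml
```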

VIII. Configure the Kubernetes User Credentials

[root@kubernetes-master-001 ~]# mkdir -p $HOME/.kube

[root@kubernetes-master-001 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@kubernetes-master-001 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@kubernetes-master-001 ~]# export KUBECONFIG=/etc/kubernetes/admin.conf

# Inspect the kubeconfig file

[root@kubernetes-master-001 ~]# cd .kube/

[root@kubernetes-master-001 ~/.kube]# ll config

-rw------- 1 root root 5594 Nov 8 14:11 config

[root@kubernetes-master-001 ~/.kube]# cat config

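IX. Install the Cluster Network Plugin

Kubernetes relies on a third-party network plugin to provide its networking, so installing one is a prerequisite. Several plugins are available; common ones are flannel, calico, and canal (flannel + calico). Each provides the basic network functions, such as IP networking for every Node.

#1.Option 1: install directly from the upstream manifest

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

#2.Option 2: write the manifest locally and install from it

1.Write the manifest

[root@kubernetes-master-001 ~]# vim kube-flannel.yaml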

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.13.1-rc1
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.13.1-rc1
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

2.List the images referenced in the manifest

[root@kubernetes-master-001 ~]# cat kube-flannel.yaml |grep image

3.Replace the image registry with the Aliyun mirror

[root@kubernetes-master-001 ~]# sed -i 's#quay.io/coreos/flannel#registry.cn-hangzhou.aliyuncs.com/k8sos/flannel#g' kube-flannel.yaml

4.Install flannel

#1.Apply the manifest

[root@kubernetes-master-001 ~]# kubectl apply -f kube-flannel.yaml

Warning: policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

#2.Check that flannel is running

[root@kubernetes-master-001 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-978bbc4b6-6dpvj 1/1 Running 0 18m

coredns-978bbc4b6-lgj6g 1/1 Running 0 18m

etcd-kubernetes-master-001 1/1 Running 0 18m

kube-apiserver-kubernetes-master-001 1/1 Running 0 18m

kube-controller-manager-kubernetes-master-001 1/1 Running 0 18m

kube-flannel-ds-wcs76 1/1 Running 0 71s

kube-proxy-hjljv 1/1 Running 0 18m

kube-scheduler-kubernetes-master-001 1/1 Running 0 18m

X. Enable kubectl Command Completion

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

XI. Add Worker Nodes (Node)

1.Join the Nodes to the cluster

[root@kubernetes-node-001 ~]# kubeadm join 192.168.13.100:6443 --token vb47mm.6tehbl5sbc5fqcz7 --discovery-token-ca-cert-hash sha256:107d3665d2b689dd124cc60ed41adcbbf215ab28e2af1b6ca03d27c901575a9d

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@kubernetes-node-002 ~]# kubeadm join 192.168.13.100:6443 --token vb47mm.6tehbl5sbc5fqcz7 --discovery-token-ca-cert-hash sha256:107d3665d2b689dd124cc60ed41adcbbf215ab28e2af1b6ca03d27c901575a9d

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.
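Bootstrap tokens created by `kubeadm init` expire after 24 hours by default, so the join command printed earlier stops working for nodes added later. A fresh join command can be printed on the Master with `kubeadm token create --print-join-command`. The sketch below adds a small format check for a token before it is pasted into a script; the function name `is_bootstrap_token` is hypothetical.

```shell
#!/usr/bin/env bash
# Return success if the argument looks like a kubeadm bootstrap token:
# six lowercase alphanumerics, a dot, then sixteen lowercase alphanumerics.
is_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Usage on the Master node (as root):
#   kubeadm token list                          # show existing tokens and their expiry
#   kubeadm token create --print-join-command   # print a new, ready-to-run join command
#   is_bootstrap_token "vb47mm.6tehbl5sbc5fqcz7" && echo "token format looks valid"
```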

2.Check the cluster nodes from the Master node

[root@kubernetes-master-001 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kubernetes-master-001 Ready control-plane,master 40m v1.21.2

kubernetes-node-001 Ready <none> 6m58s v1.21.2

kubernetes-node-002 Ready <none> 19m v1.21.2
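When scripting the deployment, the node table can be filtered instead of read by eye. The sketch below reads `kubectl get nodes` output on stdin and prints the nodes whose STATUS column is not exactly `Ready`; the function name `not_ready_nodes` is hypothetical. Note that a cordoned node shows `Ready,SchedulingDisabled` and is therefore also reported.

```shell
#!/usr/bin/env bash
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column (2nd field) is not exactly "Ready". The header is skipped.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on the Master node:
#   kubectl get nodes | not_ready_nodes
# Alternatively, block until every node reports Ready:
#   kubectl wait --for=condition=Ready nodes --all --timeout=120s
```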

XII. Test the Cluster

#1.Test 1: DNS resolution from inside a Pod

[root@kubernetes-master-001 ~]# kubectl run test -it --rm --image=busybox:1.28.3

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes

Server: 10.96.0.10

Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

/ #

#2.Test 2: expose an nginx Deployment through a NodePort Service

[root@kubernetes-master-001 ~]# kubectl create deployment nginx --image=nginx

deployment.apps/nginx created

[root@kubernetes-master-001 ~]# kubectl expose deployment nginx --port=80 --type=NodePort

service/nginx exposed

[root@kubernetes-master-001 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 42m

nginx NodePort 10.111.239.146 <none> 80:30828/TCP 8s

[root@kubernetes-master-001 ~]# curl 192.168.13.100:30828

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>