items.py

```python
import scrapy


class LagouItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    # id
    # obj_id = scrapy.Field()
    # Job title
    positon_name = scrapy.Field()
    # Work location
    work_place = scrapy.Field()
    # Publish date
    publish_time = scrapy.Field()
    # Salary
    salary = scrapy.Field()
    # Work experience
    work_experience = scrapy.Field()
    # Education
    education = scrapy.Field()
    # full_time
    full_time = scrapy.Field()
    # Tags
    tags = scrapy.Field()
    # Company name
    company_name = scrapy.Field()
    # # Industry
    # industry = scrapy.Field()
    # Job perks
    job_temptation = scrapy.Field()
    # Job description
    job_desc = scrapy.Field()
    # Company logo URL
    logo_image = scrapy.Field()
    # Business field
    field = scrapy.Field()
    # Development stage
    stage = scrapy.Field()
    # Company size
    company_size = scrapy.Field()
    # Company homepage
    home = scrapy.Field()
    # Job publisher
    job_publisher = scrapy.Field()
    # Investors
    financeOrg = scrapy.Field()
    # Crawl time
    crawl_time = scrapy.Field()
```

lagou.py

```python
# -*- coding: utf-8 -*-
import scrapy
from scrapy.linkextractors import LinkExtractor
from scrapy.spiders import CrawlSpider, Rule
from LaGou.items import LagouItem
from LaGou.utils.MD5 import get_md5
from datetime import datetime


class LagouSpider(CrawlSpider):
    name = 'lagou'
    allowed_domains = ['lagou.com']
    start_urls = ['https://www.lagou.com/zhaopin/']

    # Job detail pages: https://www.lagou.com/jobs/<numeric id>.html
    content_links = LinkExtractor(allow=(r"https://www.lagou.com/jobs/\d+\.html"))
    # Paginated listing pages: https://www.lagou.com/zhaopin/<page number>
    page_links = LinkExtractor(allow=(r"https://www.lagou.com/zhaopin/\d+"))

    rules = (
        Rule(content_links, callback="parse_item", follow=False),
        Rule(page_links, follow=True),
    )

    def parse_item(self, response):
        item = LagouItem()
        # Use the MD5 of the page URL as the ID (disabled)
        # item["obj_id"] = get_md5(response.url)
        # Company name
        item["company_name"] = response.xpath('//dl[@class="job_company"]//a/img/@alt').extract()[0]
        # Job title
        item["positon_name"] = response.xpath('//div[@class="job-name"]//span[@class="name"]/text()').extract()[0]
        # Salary
        item["salary"] = response.xpath('//dd[@class="job_request"]//span[1]/text()').extract()[0]
        # Work location
        work_place = response.xpath('//dd[@class="job_request"]//span[2]/text()').extract()[0]
        item["work_place"] = work_place.replace("/", "")
        # Work experience
        work_experience = response.xpath('//dd[@class="job_request"]//span[3]/text()').extract()[0]
        item["work_experience"] = work_experience.replace("/", "")
        # Education
        education = response.xpath('//dd[@class="job_request"]//span[4]/text()').extract()[0]
        item["education"] = education.replace("/", "")
        # full_time
        item['full_time'] = response.xpath('//dd[@class="job_request"]//span[5]/text()').extract()[0]
        # tags
        tags = response.xpath('//dd[@class="job_request"]//li[@class="labels"]/text()').extract()
        item["tags"] = ",".join(tags)
        # publish_time
        item["publish_time"] = response.xpath('//dd[@class="job_request"]//p[@class="publish_time"]/text()').extract()[0]
        # Job perks
        job_temptation = response.xpath('//dd[@class="job-advantage"]/p/text()').extract()
        item["job_temptation"] = ",".join(job_temptation)
        # Job description ("\xa0" is the non-breaking space left in the page text)
        job_desc = response.xpath('//dd[@class="job_bt"]/div//p/text()').extract()
        item["job_desc"] = ",".join(job_desc).replace("\xa0", "").strip()
        # job_publisher
        item["job_publisher"] = response.xpath('//div[@class="publisher_name"]//span[@class="name"]/text()').extract()[0]
        # Company logo URL (the "//" of the protocol-relative URL is removed)
        logo_image = response.xpath('//dl[@class="job_company"]//a/img/@src').extract()[0]
        item["logo_image"] = logo_image.replace("//", "")
        # Business field
        field = response.xpath('//ul[@class="c_feature"]//li[1]/text()').extract()
        item["field"] = "".join(field).strip()
        # Development stage
        stage = response.xpath('//ul[@class="c_feature"]//li[2]/text()').extract()
        item["stage"] = "".join(stage).strip()
        # Investors (only present on some pages)
        financeOrg = response.xpath('//ul[@class="c_feature"]//li[3]/p/text()').extract()
        if financeOrg:
            item["financeOrg"] = "".join(financeOrg)
        else:
            item["financeOrg"] = ""
        # Company size: it sits in li[4] when the investor entry exists, otherwise in li[3]
        if financeOrg:
            company_size = response.xpath('//ul[@class="c_feature"]//li[4]/text()').extract()
            item["company_size"] = "".join(company_size).strip()
        else:
            company_size = response.xpath('//ul[@class="c_feature"]//li[3]/text()').extract()
            item["company_size"] = "".join(company_size).strip()
        # Company homepage
        item["home"] = response.xpath('//ul[@class="c_feature"]//li/a/@href').extract()[0]
        # Crawl time
        item["crawl_time"] = datetime.now()
        yield item
```
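The `allow` patterns given to `LinkExtractor` are regular expressions searched for in each candidate URL, so the job ID must be written `\d+` (one or more digits); a bare `d+` only matches a run of the letter `d` and would never match a job page. The quick check below uses only the standard `re` module; the sample URLs are made up, and `classify` is a hypothetical helper that tries the patterns in the same order as the spider's `rules`.

```python
import re

# Patterns from LagouSpider, with the "\d+" digit class written out.
CONTENT_PATTERN = re.compile(r"https://www.lagou.com/jobs/\d+\.html")
PAGE_PATTERN = re.compile(r"https://www.lagou.com/zhaopin/\d+")

# The same pattern with the backslash lost: "d+" means "one or more letter d".
BROKEN_PATTERN = re.compile(r"https://www.lagou.com/jobs/d+.html")

# Hypothetical URLs used only to exercise the patterns.
job_url = "https://www.lagou.com/jobs/1234567.html"
page_url = "https://www.lagou.com/zhaopin/2/"


def classify(url):
    """Return which rule a URL falls under, checking rules in declaration order."""
    if CONTENT_PATTERN.search(url):
        return "job detail -> parse_item"
    if PAGE_PATTERN.search(url):
        return "listing page -> follow"
    return "ignored"


for url in (job_url, page_url, "https://www.lagou.com/gongsi/"):
    print(url, "=>", classify(url))

# The broken pattern never matches a numeric job ID.
print(BROKEN_PATTERN.search(job_url))  # None
```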

pipelines.py

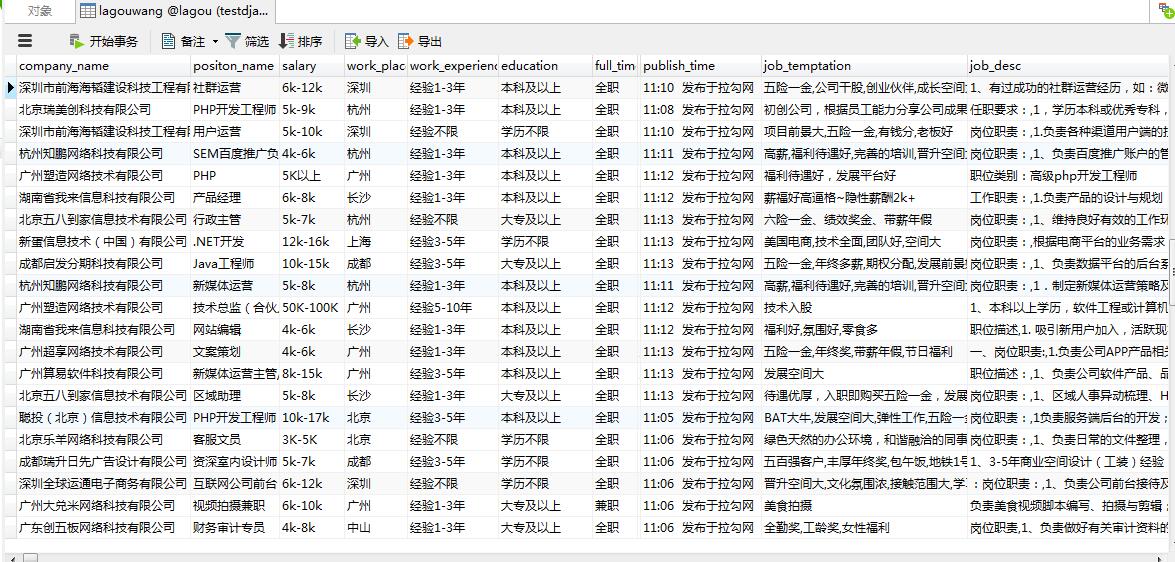

```python
# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html
import pymysql


class LagouPipeline(object):
    def process_item(self, item, spider):
        # A new connection is opened and closed for every item
        con = pymysql.connect(host="127.0.0.1", user="root", passwd="229801", db="lagou", charset="utf8")
        cur = con.cursor()
        sql = ("insert into lagouwang(company_name,positon_name,salary,work_place,work_experience,"
               "education,full_time,tags,publish_time,job_temptation,job_desc,job_publisher,"
               "logo_image,field,stage,financeOrg,company_size,home,crawl_time) "
               "VALUES (%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s,%s)")
        lis = (item["company_name"], item["positon_name"], item["salary"], item["work_place"],
               item["work_experience"], item["education"], item['full_time'], item["tags"],
               item["publish_time"], item["job_temptation"], item["job_desc"], item["job_publisher"],
               item["logo_image"], item["field"], item["stage"], item["financeOrg"],
               item["company_size"], item["home"], item["crawl_time"])
        # Parameterized query: pymysql escapes each value in lis
        cur.execute(sql, lis)
        con.commit()
        cur.close()
        con.close()
        return item
```
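The pipeline above opens and closes a MySQL connection for every item. Scrapy also calls a pipeline's `open_spider` and `close_spider` methods, so a common alternative is to connect once per crawl, and to generate the column list and placeholders from one list of field names so the 19 columns and 19 placeholders cannot drift apart. The sketch below shows that structure under some assumptions: to stay self-contained it uses the standard-library `sqlite3` module with an in-memory database as a stand-in for `pymysql`/MySQL (`sqlite3` uses `?` placeholders where `pymysql` uses `%s`), it only keeps five of the fields, missing fields default to `""`, and the class name `SqlitePipelineSketch` is hypothetical.

```python
import sqlite3
from datetime import datetime

# Subset of the LagouItem fields; a full version would list all 19 columns.
FIELDS = ["company_name", "positon_name", "salary", "work_place", "crawl_time"]


class SqlitePipelineSketch:
    """Hypothetical pipeline: one connection per crawl instead of one per item."""

    def __init__(self, database=":memory:"):
        self.database = database
        self.con = None

    def open_spider(self, spider):
        # Called once when the spider starts.
        self.con = sqlite3.connect(self.database)
        self.con.execute("CREATE TABLE IF NOT EXISTS lagouwang (%s)" % ", ".join(FIELDS))

    def process_item(self, item, spider):
        # Columns and placeholders are both generated from FIELDS.
        columns = ", ".join(FIELDS)
        placeholders = ", ".join("?" for _ in FIELDS)
        sql = "INSERT INTO lagouwang (%s) VALUES (%s)" % (columns, placeholders)
        # Values are stored as text; a missing field becomes "".
        values = tuple(str(item.get(name, "")) for name in FIELDS)
        self.con.execute(sql, values)
        self.con.commit()
        return item

    def close_spider(self, spider):
        # Called once when the spider finishes.
        self.con.close()


# Usage with a made-up item (a plain dict stands in for LagouItem).
pipeline = SqlitePipelineSketch()
pipeline.open_spider(spider=None)
pipeline.process_item(
    {"company_name": "ExampleCo", "positon_name": "Crawler Engineer",
     "salary": "10k-20k", "work_place": "Beijing", "crawl_time": datetime.now()},
    spider=None,
)
rows = pipeline.con.execute("SELECT company_name, salary FROM lagouwang").fetchall()
print(rows)  # [('ExampleCo', '10k-20k')]
pipeline.close_spider(spider=None)
```

With `pymysql` the same shape applies: connect in `open_spider`, build the `%s` placeholders from the field list, and close the connection in `close_spider`.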

middlewares.py (mainly random User-Agent rotation; no IP proxy is used)

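```python
import random
from LaGou.settings import USER_AGENTS


class RandomUserAgent(object):
    def process_request(self, request, spider):
        # Pick a random User-Agent from the list in settings.py
        useragent = random.choice(USER_AGENTS)
        # Only set it if the request has no User-Agent header yet
        request.headers.setdefault("User-Agent", useragent)
```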
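`setdefault` writes the `User-Agent` header only when the request does not already carry one, so a User-Agent set explicitly on a request is left untouched. The sketch below imitates the middleware outside Scrapy: `FakeRequest` is a hypothetical stand-in whose `headers` is a plain dict (unlike Scrapy's `Headers`, it is case-sensitive and stores `str` instead of `bytes`), and the two-entry agent list is a shortened copy of the one in settings.py.

```python
import random

# Shortened stand-in for USER_AGENTS in settings.py.
USER_AGENTS = [
    "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
    "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",
]


class FakeRequest:
    """Hypothetical stand-in for scrapy.Request: it only has a headers dict."""

    def __init__(self, headers=None):
        self.headers = dict(headers or {})


class RandomUserAgent:
    def process_request(self, request, spider):
        useragent = random.choice(USER_AGENTS)
        # Fills the header in only if it is not present yet.
        request.headers.setdefault("User-Agent", useragent)


middleware = RandomUserAgent()

plain = FakeRequest()
middleware.process_request(plain, spider=None)
print(plain.headers["User-Agent"] in USER_AGENTS)  # True

pinned = FakeRequest({"User-Agent": "my-custom-agent"})
middleware.process_request(pinned, spider=None)
print(pinned.headers["User-Agent"])  # my-custom-agent
```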

settings.py

```python
BOT_NAME = 'LaGou'

SPIDER_MODULES = ['LaGou.spiders']
NEWSPIDER_MODULE = 'LaGou.spiders'

ROBOTSTXT_OBEY = False

DOWNLOAD_DELAY = 5

COOKIES_ENABLED = False

USER_AGENTS = [
    "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",
    "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",
    "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",
    "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",
    "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
    "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",
    "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",
    "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5"
]

DOWNLOADER_MIDDLEWARES = {
    'LaGou.middlewares.RandomUserAgent': 1,
    # 'LaGou.middlewares.MyCustomDownloaderMiddleware': 543,
}

ITEM_PIPELINES = {
    # 'scrapy_redis.pipelines.RedisPipeline': 300,
    'LaGou.pipelines.LagouPipeline': 300,
}
```
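With `DOWNLOAD_DELAY = 5`, Scrapy's default `RANDOMIZE_DOWNLOAD_DELAY = True` makes each wait between requests to the same site a random value between 0.5x and 1.5x the configured delay. The arithmetic below gives a rough pace for one domain; it ignores the time the downloads themselves take, so treat it as an upper bound, and listing pages and job pages share that budget.

```python
DOWNLOAD_DELAY = 5  # seconds, as in settings.py

# With RANDOMIZE_DOWNLOAD_DELAY on, each wait is drawn from [0.5, 1.5] x DOWNLOAD_DELAY.
min_wait = 0.5 * DOWNLOAD_DELAY
max_wait = 1.5 * DOWNLOAD_DELAY
mean_wait = (min_wait + max_wait) / 2

# Rough requests per hour for a single domain, ignoring download time.
requests_per_hour = 3600 / mean_wait
print(min_wait, max_wait, mean_wait)  # 2.5 7.5 5.0
print(requests_per_hour)  # 720.0
```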

main.py (used to launch the spider for debugging)

```python
# coding=utf-8
from scrapy.cmdline import execute

execute(["scrapy", "crawl", "lagou"])
```

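Adding the following configuration to settings.py makes the crawl distributed and stores the scraped data in Redis. For how to read the data back out of Redis, see the previous chapters.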

```python
DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"
SCHEDULER = "scrapy_redis.scheduler.Scheduler"
SCHEDULER_PERSIST = True

ITEM_PIPELINES = {
    'scrapy_redis.pipelines.RedisPipeline': 300,
    # 'LaGou.pipelines.LagouPipeline': 300,
}
```
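`RFPDupeFilter` from scrapy_redis keeps request fingerprints in a Redis set shared by every crawler process, so a URL already scheduled by one machine is skipped by the others; `SCHEDULER_PERSIST = True` keeps that set and the request queue in Redis after the crawl stops. The sketch below illustrates only the idea and is not scrapy_redis code: a Python `set` stands in for the Redis set, and `fingerprint` is a simplified, hypothetical SHA-1 of method plus URL (Scrapy's real fingerprint also canonicalizes the URL and includes the request body).

```python
import hashlib

# Stand-in for the Redis set that scrapy_redis shares between crawler processes.
seen_fingerprints = set()


def fingerprint(method, url):
    """Simplified fingerprint: SHA-1 of the method and URL."""
    return hashlib.sha1(f"{method} {url}".encode("utf-8")).hexdigest()


def request_seen(method, url):
    """Return True if this request was already scheduled, otherwise record it."""
    fp = fingerprint(method, url)
    if fp in seen_fingerprints:
        return True
    seen_fingerprints.add(fp)  # With Redis this would be an SADD on the shared key.
    return False


# Two workers reaching the same (made-up) job URL.
print(request_seen("GET", "https://www.lagou.com/jobs/1234567.html"))  # False
print(request_seen("GET", "https://www.lagou.com/jobs/1234567.html"))  # True
```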

Main topics covered: CrawlSpider (LinkExtractor, Rule), content extraction (xpath, extract), string processing (join, replace, strip, split), random User-Agent rotation, and so on.
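The string handling used in `parse_item` can be tried on its own. The lists below are made-up text of the kind `extract()` returns, including the non-breaking space `\xa0` that the spider strips from the job description; the `split` call shows how the comma-joined `tags` value can be turned back into a list.

```python
# Made-up fragments shaped like what extract() returns for the job description.
job_desc = ["\xa0Responsibilities:", "Build crawlers\xa0", "  Maintain pipelines  "]

# join + replace + strip, as in parse_item: strip() only trims the two ends.
desc = ",".join(job_desc).replace("\xa0", "").strip()
print(desc)  # Responsibilities:,Build crawlers,  Maintain pipelines

# replace("/", "") as applied to work_place; the leftover space would need strip().
work_place = "/Beijing /".replace("/", "")
print(repr(work_place))          # 'Beijing '
print(repr(work_place.strip()))  # 'Beijing'

# split reverses the ",".join used for tags.
tags = ",".join(["Python", "Scrapy", "MySQL"])
print(tags.split(","))  # ['Python', 'Scrapy', 'MySQL']
```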