Hosts: three machines, CentOS 7.6 + Docker 19.03.4 (192.168.145.7, 192.168.145.67, 192.168.145.77)

Images: zookeeper:latest (docker pull zookeeper), version 3.6.1; wurstmeister/kafka:latest (docker pull wurstmeister/kafka), version 2.13-2.6.0 (Scala 2.13, Kafka 2.6.0); kafka-manager (docker pull sheepkiller/kafka-manager), version 1.3.1.8

(1) Pull the image, same on all three hosts

[root@localhost ~]# docker pull zookeeper

(2) Create the local directories, same on all three hosts

[root@localhost ~]# mkdir -pv /kafka_cluster/zookeeper/{conf,data,datalog}

(3) Prepare the configuration files zoo.cfg and log4j.properties, same on all three hosts

    [root@localhost ~]# vim /kafka_cluster/zookeeper/conf/zoo.cfg
    # The number of milliseconds of each tick
    tickTime=2000
    # The number of ticks that the initial
    # synchronization phase can take
    initLimit=10
    # The number of ticks that can pass between
    # sending a request and getting an acknowledgement
    syncLimit=5
    # the directory where the snapshot is stored.
    # do not use /tmp for storage, /tmp here is just
    # example sakes.
    # The zookeeper image maps ZooKeeper's data and dataLog directories to /data and /datalog.
    # This file is read inside the container, so the paths below are container paths,
    # not the host paths under /kafka_cluster/zookeeper. Keep the two apart.
    dataDir=/data
    dataLogDir=/datalog
    # the port at which the clients will connect
    clientPort=2181
    # the maximum number of client connections.
    # increase this if you need to handle more clients
    #maxClientCnxns=60
    #
    # Be sure to read the maintenance section of the
    # administrator guide before turning on autopurge.
    #
    # http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
    #
    # The number of snapshots to retain in dataDir
    #autopurge.snapRetainCount=3
    # Purge task interval in hours
    # Set to "0" to disable auto purge feature
    #autopurge.purgeInterval=1
    server.1=192.168.145.7:2888:3888
    server.2=192.168.145.67:2888:3888
    server.3=192.168.145.77:2888:3888

    [root@localhost ~]# vim /kafka_cluster/zookeeper/conf/log4j.properties
    # Define some default values that can be overridden by system properties
    zookeeper.root.logger=INFO, CONSOLE
    zookeeper.console.threshold=INFO
    zookeeper.log.dir=.
    zookeeper.log.file=zookeeper.log
    zookeeper.log.threshold=DEBUG
    zookeeper.tracelog.dir=.
    zookeeper.tracelog.file=zookeeper_trace.log
    #
    # ZooKeeper Logging Configuration
    #
    # Format is "<default threshold> (, <appender>)+
    # DEFAULT: console appender only
    log4j.rootLogger=${zookeeper.root.logger}
    # Example with rolling log file
    #log4j.rootLogger=DEBUG, CONSOLE, ROLLINGFILE
    # Example with rolling log file and tracing
    #log4j.rootLogger=TRACE, CONSOLE, ROLLINGFILE, TRACEFILE
    #
    # Log INFO level and above messages to the console
    #
    log4j.appender.CONSOLE=org.apache.log4j.ConsoleAppender
    log4j.appender.CONSOLE.Threshold=${zookeeper.console.threshold}
    log4j.appender.CONSOLE.layout=org.apache.log4j.PatternLayout
    log4j.appender.CONSOLE.layout.ConversionPattern=%d{ISO8601} [myid:%X{myid}] - %-5p [%t:%C{1}@%L] - %m%n
    #
    # Add ROLLINGFILE to rootLogger to get log file output
    # Log DEBUG level and above messages to a log file
    log4j.appender.ROLLINGFILE=org.apache.log4j.RollingFileAppender
    log4j.appender.ROLLINGFILE.Threshold=${zookeeper.log.threshold}
    log4j.appender.ROLLINGFILE.File=${zookeeper.log.dir}/${zookeeper.log.file}
    # Max log file size of 10MB
    log4j.appender.ROLLINGFILE.MaxFileSize=10MB
    # uncomment the next line to limit number of backup files
    #log4j.appender.ROLLINGFILE.MaxBackupIndex=10
    log4j.appender.ROLLINGFILE.layout=org.apache.log4j.PatternLayout
    log4j.appender.ROLLINGFILE.layout.ConversionPattern=%d{ISO8601} [myid:%X{myid}] - %-5p [%t:%C{1}@%L] - %m%n
    #
    # Add TRACEFILE to rootLogger to get log file output
    # Log DEBUG level and above messages to a log file
    log4j.appender.TRACEFILE=org.apache.log4j.FileAppender
    log4j.appender.TRACEFILE.Threshold=TRACE
    log4j.appender.TRACEFILE.File=${zookeeper.tracelog.dir}/${zookeeper.tracelog.file}
    log4j.appender.TRACEFILE.layout=org.apache.log4j.PatternLayout
    ### Notice we are including log4j's NDC here (%x)
    log4j.appender.TRACEFILE.layout.ConversionPattern=%d{ISO8601} [myid:%X{myid}] - %-5p [%t:%C{1}@%L][%x] - %m%n

(4) Create the data/myid file. On each host its value must equal the x of that host's server.x entry in zoo.cfg

    # 192.168.145.7
    [root@localhost ~]# echo 1 > /kafka_cluster/zookeeper/data/myid
    # 192.168.145.67
    [root@localhost ~]# echo 2 > /kafka_cluster/zookeeper/data/myid
    # 192.168.145.77
    [root@localhost ~]# echo 3 > /kafka_cluster/zookeeper/data/myid
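Because the myid value has to agree with zoo.cfg, it can be derived from the file instead of typed by hand. The following is a minimal sketch, not part of the original procedure; the function name `myid_for_ip` is hypothetical, and it assumes zoo.cfg lists each server as `server.N=<ip>:2888:3888`, one entry per line.

```shell
# Print the N of the "server.N=<ip>:<port>:<port>" entry whose address equals the given IP.
# Prints nothing when no entry matches.
# Usage: myid_for_ip <path to zoo.cfg> <ip>
myid_for_ip() {
    awk -F'[=:]' -v ip="$2" '
        $1 ~ /^server\.[0-9]+$/ && $2 == ip {
            sub(/^server\./, "", $1)   # keep only the numeric id
            print $1
            exit
        }' "$1"
}

# Example (run on 192.168.145.67):
#   myid_for_ip /kafka_cluster/zookeeper/conf/zoo.cfg 192.168.145.67 \
#       > /kafka_cluster/zookeeper/data/myid
```

The address is compared as an exact string, so 192.168.145.7 does not accidentally match the entry for 192.168.145.77.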

(5) Start the container

    # Using 192.168.145.7 as the example; on the other two hosts only --name changes
    # Option 1: host network mode
    [root@localhost ~]# docker run -d --name=zookeeper-1 --restart=always --net=host \
        -v /kafka_cluster/zookeeper/conf:/conf \
        -v /kafka_cluster/zookeeper/data:/data \
        -v /kafka_cluster/zookeeper/datalog:/datalog \
        zookeeper:latest
    # Option 2: port mapping mode
    [root@localhost ~]# docker run -d --name=zookeeper-1 --restart=always \
        -p 2181:2181 -p 2888:2888 -p 3888:3888 \
        -v /kafka_cluster/zookeeper/conf:/conf \
        -v /kafka_cluster/zookeeper/data:/data \
        -v /kafka_cluster/zookeeper/datalog:/datalog \
        zookeeper:latest
    # Note for option 2: the host IP is not an interface inside the container, so in zoo.cfg
    # the server.N entry for the local host must use 0.0.0.0 instead of its own IP.
    # On 192.168.145.7 that gives:
    server.1=0.0.0.0:2888:3888
    server.2=192.168.145.67:2888:3888
    server.3=192.168.145.77:2888:3888
    # Make the equivalent change on the other two hosts
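For port mapping mode, the per-host zoo.cfg differs only in which server.N line carries 0.0.0.0. A small sketch of generating that variant from the shared file follows; `localize_zoo_cfg` is a hypothetical helper name, and the sketch assumes the `server.N=<ip>:2888:3888` layout shown above.

```shell
# Print zoo.cfg to stdout with the server.N entry of the local IP rewritten to 0.0.0.0.
# All other lines pass through unchanged.
# Usage: localize_zoo_cfg <path to zoo.cfg> <local ip>
localize_zoo_cfg() {
    awk -v ip="$2" '
        /^server\.[0-9]+=/ {
            eq = index($0, "=")            # position of "="
            rest = substr($0, eq + 1)      # "<ip>:2888:3888"
            colon = index(rest, ":")       # end of the address part
            if (substr(rest, 1, colon - 1) == ip) {
                print substr($0, 1, eq) "0.0.0.0" substr(rest, colon)
                next
            }
        }
        { print }' "$1"
}

# Example (run on 192.168.145.67, writing to a scratch file first):
#   localize_zoo_cfg /kafka_cluster/zookeeper/conf/zoo.cfg 192.168.145.67 > /tmp/zoo.cfg.local
```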

(6) Check the cluster status

    [root@localhost ~]# docker exec -it zookeeper-1 /bin/bash ./bin/zkServer.sh status
    ZooKeeper JMX enabled by default
    Using config: /conf/zoo.cfg
    Client port found: 2181. Client address: localhost.
    Mode: follower
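A working three-node ensemble should report one leader and two followers across the hosts. As a rough sketch, the `Mode:` line can be extracted from the status output and the collected values checked; both function names below are hypothetical, and gathering the output from the three hosts (for example over ssh) is left to the reader.

```shell
# Read `zkServer.sh status` output on stdin and print the value of its "Mode:" line.
zk_mode() {
    sed -n 's/^Mode:[[:space:]]*//p'
}

# Succeed only if exactly one of the given modes is "leader".
# Usage: has_single_leader <mode> [<mode> ...]
has_single_leader() {
    leaders=0
    for m in "$@"; do
        if [ "$m" = "leader" ]; then
            leaders=$((leaders + 1))
        fi
    done
    [ "$leaders" -eq 1 ]
}

# Example on one host (no -t, so the output can be piped cleanly):
#   docker exec zookeeper-1 /bin/bash ./bin/zkServer.sh status 2>/dev/null | zk_mode
```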

(1) Pull the image, same on all three hosts

[root@localhost ~]# docker pull wurstmeister/kafka

(2) Create the local directories, same on all three hosts

[root@localhost ~]# mkdir -pv /kafka_cluster/kafka/{config,data,logs}

(3) Prepare the configuration files server.properties, log4j.properties, and tools-log4j.properties, same on all three hosts

    [root@node1 ~]# vim /kafka_cluster/kafka/config/server.properties
    # Licensed to the Apache Software Foundation (ASF) under one or more
    # contributor license agreements. See the NOTICE file distributed with
    # this work for additional information regarding copyright ownership.
    # The ASF licenses this file to You under the Apache License, Version 2.0
    # (the "License"); you may not use this file except in compliance with
    # the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # see kafka.server.KafkaConfig for additional details and defaults
    ############################# Server Basics #############################
    # The id of the broker. This must be set to a unique integer for each broker.
    broker.id=0
    ############################# Socket Server Settings #############################
    # The address the socket server listens on. It will get the value returned from
    # java.net.InetAddress.getCanonicalHostName() if not configured.
    # FORMAT:
    # listeners = listener_name://host_name:port
    # EXAMPLE:
    # listeners = PLAINTEXT://your.host.name:9092
    #listeners=PLAINTEXT://:9092
    # Hostname and port the broker will advertise to producers and consumers. If not set,
    # it uses the value for "listeners" if configured. Otherwise, it will use the value
    # returned from java.net.InetAddress.getCanonicalHostName().
    #advertised.listeners=PLAINTEXT://your.host.name:9092
    # Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details
    #listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL
    # The number of threads that the server uses for receiving requests from the network and sending responses to the network
    num.network.threads=3
    # The number of threads that the server uses for processing requests, which may include disk I/O
    num.io.threads=8
    # The send buffer (SO_SNDBUF) used by the socket server
    socket.send.buffer.bytes=102400
    # The receive buffer (SO_RCVBUF) used by the socket server
    socket.receive.buffer.bytes=102400
    # The maximum size of a request that the socket server will accept (protection against OOM)
    socket.request.max.bytes=104857600
    ############################# Log Basics #############################
    # A comma separated list of directories under which to store log files
    log.dirs=/tmp/kafka-logs
    # The default number of log partitions per topic. More partitions allow greater
    # parallelism for consumption, but this will also result in more files across
    # the brokers.
    num.partitions=1
    # The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.
    # This value is recommended to be increased for installations with data dirs located in RAID array.
    num.recovery.threads.per.data.dir=1
    ############################# Internal Topic Settings #############################
    # The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"
    # For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.
    offsets.topic.replication.factor=1
    transaction.state.log.replication.factor=1
    transaction.state.log.min.isr=1
    ############################# Log Flush Policy #############################
    # Messages are immediately written to the filesystem but by default we only fsync() to sync
    # the OS cache lazily. The following configurations control the flush of data to disk.
    # There are a few important trade-offs here:
    # 1. Durability: Unflushed data may be lost if you are not using replication.
    # 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.
    # 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.
    # The settings below allow one to configure the flush policy to flush data after a period of time or
    # every N messages (or both). This can be done globally and overridden on a per-topic basis.
    # The number of messages to accept before forcing a flush of data to disk
    #log.flush.interval.messages=10000
    # The maximum amount of time a message can sit in a log before we force a flush
    #log.flush.interval.ms=1000
    ############################# Log Retention Policy #############################
    # The following configurations control the disposal of log segments. The policy can
    # be set to delete segments after a period of time, or after a given size has accumulated.
    # A segment will be deleted whenever *either* of these criteria are met. Deletion always happens
    # from the end of the log.
    # The minimum age of a log file to be eligible for deletion due to age
    log.retention.hours=168
    # A size-based retention policy for logs. Segments are pruned from the log unless the remaining
    # segments drop below log.retention.bytes. Functions independently of log.retention.hours.
    #log.retention.bytes=1073741824
    # The maximum size of a log segment file. When this size is reached a new log segment will be created.
    log.segment.bytes=1073741824
    # The interval at which log segments are checked to see if they can be deleted according
    # to the retention policies
    log.retention.check.interval.ms=300000
    ############################# Zookeeper #############################
    # Zookeeper connection string (see zookeeper docs for details).
    # This is a comma separated host:port pairs, each corresponding to a zk
    # server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".
    # You can also append an optional chroot string to the urls to specify the
    # root directory for all kafka znodes.
    zookeeper.connect=localhost:2181
    # Timeout in ms for connecting to zookeeper
    zookeeper.connection.timeout.ms=18000
    ############################# Group Coordinator Settings #############################
    # The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.
    # The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.
    # The default value for this is 3 seconds.
    # We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.
    # However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.
    group.initial.rebalance.delay.ms=0

    [root@node1 ~]# vim /kafka_cluster/kafka/config/log4j.properties
    # Licensed to the Apache Software Foundation (ASF) under one or more
    # contributor license agreements. See the NOTICE file distributed with
    # this work for additional information regarding copyright ownership.
    # The ASF licenses this file to You under the Apache License, Version 2.0
    # (the "License"); you may not use this file except in compliance with
    # the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # Unspecified loggers and loggers with additivity=true output to server.log and stdout
    # Note that INFO only applies to unspecified loggers, the log level of the child logger is used otherwise
    log4j.rootLogger=INFO, stdout, kafkaAppender
    log4j.appender.stdout=org.apache.log4j.ConsoleAppender
    log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
    log4j.appender.stdout.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.kafkaAppender=org.apache.log4j.DailyRollingFileAppender
    log4j.appender.kafkaAppender.DatePattern='.'yyyy-MM-dd-HH
    log4j.appender.kafkaAppender.File=${kafka.logs.dir}/server.log
    log4j.appender.kafkaAppender.layout=org.apache.log4j.PatternLayout
    log4j.appender.kafkaAppender.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.stateChangeAppender=org.apache.log4j.DailyRollingFileAppender
    log4j.appender.stateChangeAppender.DatePattern='.'yyyy-MM-dd-HH
    log4j.appender.stateChangeAppender.File=${kafka.logs.dir}/state-change.log
    log4j.appender.stateChangeAppender.layout=org.apache.log4j.PatternLayout
    log4j.appender.stateChangeAppender.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.requestAppender=org.apache.log4j.DailyRollingFileAppender
    log4j.appender.requestAppender.DatePattern='.'yyyy-MM-dd-HH
    log4j.appender.requestAppender.File=${kafka.logs.dir}/kafka-request.log
    log4j.appender.requestAppender.layout=org.apache.log4j.PatternLayout
    log4j.appender.requestAppender.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.cleanerAppender=org.apache.log4j.DailyRollingFileAppender
    log4j.appender.cleanerAppender.DatePattern='.'yyyy-MM-dd-HH
    log4j.appender.cleanerAppender.File=${kafka.logs.dir}/log-cleaner.log
    log4j.appender.cleanerAppender.layout=org.apache.log4j.PatternLayout
    log4j.appender.cleanerAppender.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.controllerAppender=org.apache.log4j.DailyRollingFileAppender
    log4j.appender.controllerAppender.DatePattern='.'yyyy-MM-dd-HH
    log4j.appender.controllerAppender.File=${kafka.logs.dir}/controller.log
    log4j.appender.controllerAppender.layout=org.apache.log4j.PatternLayout
    log4j.appender.controllerAppender.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.authorizerAppender=org.apache.log4j.DailyRollingFileAppender
    log4j.appender.authorizerAppender.DatePattern='.'yyyy-MM-dd-HH
    log4j.appender.authorizerAppender.File=${kafka.logs.dir}/kafka-authorizer.log
    log4j.appender.authorizerAppender.layout=org.apache.log4j.PatternLayout
    log4j.appender.authorizerAppender.layout.ConversionPattern=[%d] %p %m (%c)%n
    # Change the line below to adjust ZK client logging
    log4j.logger.org.apache.zookeeper=INFO
    # Change the two lines below to adjust the general broker logging level (output to server.log and stdout)
    log4j.logger.kafka=INFO
    log4j.logger.org.apache.kafka=INFO
    # Change to DEBUG or TRACE to enable request logging
    log4j.logger.kafka.request.logger=WARN, requestAppender
    log4j.additivity.kafka.request.logger=false
    # Uncomment the lines below and change log4j.logger.kafka.network.RequestChannel$ to TRACE for additional output
    # related to the handling of requests
    #log4j.logger.kafka.network.Processor=TRACE, requestAppender
    #log4j.logger.kafka.server.KafkaApis=TRACE, requestAppender
    #log4j.additivity.kafka.server.KafkaApis=false
    log4j.logger.kafka.network.RequestChannel$=WARN, requestAppender
    log4j.additivity.kafka.network.RequestChannel$=false
    log4j.logger.kafka.controller=TRACE, controllerAppender
    log4j.additivity.kafka.controller=false
    log4j.logger.kafka.log.LogCleaner=INFO, cleanerAppender
    log4j.additivity.kafka.log.LogCleaner=false
    log4j.logger.state.change.logger=INFO, stateChangeAppender
    log4j.additivity.state.change.logger=false
    # Access denials are logged at INFO level, change to DEBUG to also log allowed accesses
    log4j.logger.kafka.authorizer.logger=INFO, authorizerAppender
    log4j.additivity.kafka.authorizer.logger=false

    [root@node1 ~]# vim /kafka_cluster/kafka/config/tools-log4j.properties
    # Licensed to the Apache Software Foundation (ASF) under one or more
    # contributor license agreements. See the NOTICE file distributed with
    # this work for additional information regarding copyright ownership.
    # The ASF licenses this file to You under the Apache License, Version 2.0
    # (the "License"); you may not use this file except in compliance with
    # the License. You may obtain a copy of the License at
    #
    # http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    log4j.rootLogger=WARN, stderr
    log4j.appender.stderr=org.apache.log4j.ConsoleAppender
    log4j.appender.stderr.layout=org.apache.log4j.PatternLayout
    log4j.appender.stderr.layout.ConversionPattern=[%d] %p %m (%c)%n
    log4j.appender.stderr.Target=System.err

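(4) Start the container. Settings are passed as environment variables on the command line here, so the configuration files are not edited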

    # On the other two hosts, change only --name, KAFKA_BROKER_ID, and KAFKA_ADVERTISED_LISTENERS
    # (KAFKA_LISTENERS stays 0.0.0.0:9092 everywhere)
    # Option 1: host network mode
    [root@node1 ~]# docker run -d --name=kafka1 --restart=always --net=host \
        -v /etc/hosts:/etc/hosts \
        -v /kafka_cluster/kafka/data:/kafka \
        -v /kafka_cluster/kafka/config:/opt/kafka/config \
        -v /kafka_cluster/kafka/logs:/opt/kafka/logs \
        -e KAFKA_BROKER_ID=1 \
        -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 \
        -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://192.168.145.7:9092 \
        -e KAFKA_ZOOKEEPER_CONNECT=192.168.145.7:2181 \
        -e KAFKA_HEAP_OPTS="-Xmx1G -Xms1G" \
        wurstmeister/kafka:latest
    # Option 2: port mapping mode
    [root@node1 ~]# docker run -d --name=kafka1 -p 9092:9092 \
        -v /kafka_cluster/kafka/data:/kafka \
        -v /kafka_cluster/kafka/config:/opt/kafka/config \
        -v /kafka_cluster/kafka/logs:/opt/kafka/logs \
        -e KAFKA_BROKER_ID=1 \
        -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 \
        -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://192.168.145.7:9092 \
        -e KAFKA_ZOOKEEPER_CONNECT=192.168.145.7:2181 \
        -e KAFKA_HEAP_OPTS="-Xmx1G -Xms1G" \
        wurstmeister/kafka:latest
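Since only the broker id and the advertised address differ between hosts, the host-network command can be produced from a template. The sketch below only prints the command for review and does not run it; `kafka_run_cmd` is a hypothetical name, and the container naming scheme kafka<id> is taken from the kafka1 and kafka2 names used in this article.

```shell
# Print the host-network `docker run` command for one broker.
# Usage: kafka_run_cmd <broker id> <host ip> <zookeeper connect string>
kafka_run_cmd() {
    id="$1"; ip="$2"; zk="$3"
    # Every line except the last ends with " \" so the output can be pasted into a shell.
    printf '%s \\\n' \
        "docker run -d --name=kafka${id} --restart=always --net=host" \
        "  -v /etc/hosts:/etc/hosts" \
        "  -v /kafka_cluster/kafka/data:/kafka" \
        "  -v /kafka_cluster/kafka/config:/opt/kafka/config" \
        "  -v /kafka_cluster/kafka/logs:/opt/kafka/logs" \
        "  -e KAFKA_BROKER_ID=${id}" \
        "  -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092" \
        "  -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://${ip}:9092" \
        "  -e KAFKA_ZOOKEEPER_CONNECT=${zk}" \
        '  -e KAFKA_HEAP_OPTS="-Xmx1G -Xms1G"'
    printf '%s\n' "  wurstmeister/kafka:latest"
}

# Example: command for the second host
#   kafka_run_cmd 2 192.168.145.67 192.168.145.7:2181
```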

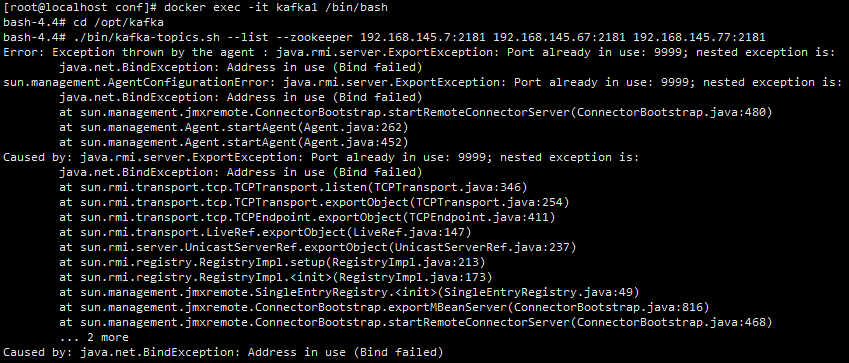

Fix: modify the kafka-run-class.sh script

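    bash-4.4# vi /opt/kafka_2.13-2.6.0/bin/kafka-run-class.sh
    # Find the line base_dir=$(dirname $0)/.. and add the following above it.
    # kafka.Kafka is the broker's main class, so the match is case-sensitive.
    ISKAFKASERVER="false"
    if [[ "$*" =~ "kafka.Kafka" ]]; then
        ISKAFKASERVER="true"
    fi
    # Find the line if [ $JMX_PORT ]; then, comment it out, and add the following in its place.
    # Compare against "true": a -z test would never pass, because the variable is always non-empty.
    if [ $JMX_PORT ] && [ "$ISKAFKASERVER" = "true" ]; then
    # Save and exit.
    # Run one of the tools under bin again; the error no longer appears
    bash-4.4# cd /opt/kafka
    bash-4.4# ./bin/kafka-topics.sh --list --zookeeper 192.168.145.7:2181,192.168.145.67:2181,192.168.145.77:2181
    __consumer_offsets
    test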
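The idea behind the patch is that the JMX port should be applied only when kafka-run-class.sh is launching the broker itself, not a command-line tool. Here is a standalone sketch of that gating logic, written in POSIX shell so it can be tried outside the container. The function names are hypothetical, and it assumes the error in the screenshot comes from a tool trying to bind a JMX port the broker already holds.

```shell
# Succeed when the argument list names the broker main class kafka.Kafka.
is_kafka_server() {
    case "$*" in
        *kafka.Kafka*) return 0 ;;
        *)             return 1 ;;
    esac
}

# Print the JMX port option only for the broker process.
# Usage: jmx_port_opt <JMX_PORT value, may be empty> <kafka-run-class.sh arguments...>
jmx_port_opt() {
    port="$1"; shift
    if [ -n "$port" ] && is_kafka_server "$@"; then
        printf '%s\n' "-Dcom.sun.management.jmxremote.port=$port"
    fi
}

# Broker start: option is printed
jmx_port_opt 9999 kafka.Kafka /opt/kafka/config/server.properties
# CLI tool: nothing is printed, so no second JVM tries to bind the port
jmx_port_opt 9999 kafka.admin.TopicCommand --list
```

An alternative that leaves the script untouched is to run `unset JMX_PORT` in the shell before invoking a tool inside the container.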

(5) Check the Kafka status

    [root@localhost ~]# docker exec -it zookeeper-1 /bin/bash ./bin/zkCli.sh
    Connecting to localhost:2181
    log4j:WARN No appenders could be found for logger (org.apache.zookeeper.ZooKeeper).
    log4j:WARN Please initialize the log4j system properly.
    log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.
    Welcome to ZooKeeper!
    JLine support is enabled
    WATCHER::
    WatchedEvent state:SyncConnected type:None path:null
    [zk: localhost:2181(CONNECTED) 0] ls /brokers/ids
    [1, 2, 3]
    [zk: localhost:2181(CONNECTED) 1] get /brokers/ids/1
    {"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://192.168.145.7:9092"],"jmx_port":9999,"port":9092,"host":"192.168.145.7","version":4,"timestamp":"1599460603079"}
    [zk: localhost:2181(CONNECTED) 2] get /brokers/ids/2
    {"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://192.168.145.67:9092"],"jmx_port":9999,"port":9092,"host":"192.168.145.67","version":4,"timestamp":"1599460744091"}
    [zk: localhost:2181(CONNECTED) 3] get /brokers/ids/3
    {"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://192.168.145.77:9092"],"jmx_port":9999,"port":9092,"host":"192.168.145.77","version":4,"timestamp":"1599460773190"}

(6) Test creating a topic and sending a message

    # On 192.168.145.7, create a topic and produce a message
    [root@localhost ~]# docker exec -it kafka1 /bin/bash
    # List the topics (the ZooKeeper addresses are comma-separated)
    bash-4.4# cd /opt/kafka
    bash-4.4# ./bin/kafka-topics.sh --list --zookeeper 192.168.145.7:2181,192.168.145.67:2181,192.168.145.77:2181
    # Create a topic
    bash-4.4# ./bin/kafka-topics.sh --create --topic test --zookeeper 192.168.145.7:2181 --replication-factor 1 --partitions 1
    # Describe the topic
    bash-4.4# ./bin/kafka-topics.sh --describe --zookeeper 192.168.145.7:2181,192.168.145.67:2181,192.168.145.77:2181 --topic test
    # Write data to the topic
    bash-4.4# ./bin/kafka-console-producer.sh --bootstrap-server 192.168.145.7:9092,192.168.145.67:9092,192.168.145.77:9092 --topic test
    >test a message
    # On 192.168.145.67, consume the topic
    [root@node2 data]# docker exec -it kafka2 /bin/bash
    bash-4.4# cd /opt/kafka
    bash-4.4# ./bin/kafka-console-consumer.sh --bootstrap-server 192.168.145.7:9092,192.168.145.67:9092,192.168.145.77:9092 --topic test --from-beginning
    test a message
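Both --zookeeper and --bootstrap-server expect a single comma-separated host:port list, which is easy to mistype. A small helper can build the list from the host addresses; `join_hosts` is a hypothetical name used only for this sketch.

```shell
# Join the given hosts into "host:port,host:port,..." using one port for all of them.
# Usage: join_hosts <port> <host> [<host> ...]
join_hosts() {
    port="$1"; shift
    out=""
    for h in "$@"; do
        # Prefix a comma only when something has already been collected
        out="${out:+$out,}$h:$port"
    done
    printf '%s\n' "$out"
}

ZK=$(join_hosts 2181 192.168.145.7 192.168.145.67 192.168.145.77)
BROKERS=$(join_hosts 9092 192.168.145.7 192.168.145.67 192.168.145.77)
# Inside the kafka container these could then be used as, for example:
#   ./bin/kafka-topics.sh --list --zookeeper "$ZK"
#   ./bin/kafka-console-consumer.sh --bootstrap-server "$BROKERS" --topic test --from-beginning
```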

(1) Pull the image

[root@localhost ~]# docker pull sheepkiller/kafka-manager

(2) Start the container

[root@localhost ~]# docker run -d --restart=always --name=kafka-manager -p 9000:9000 -e ZK_HOSTS="192.168.145.7:2181" sheepkiller/kafka-manager:latest

(3) Open it in a browser