Building a K8S Cluster on Three Linux Servers

The servers are configured as follows:

| hostname | Server IP | CentOS version | Docker version | K8S version |
| --- | --- | --- | --- | --- |
| master | 192.168.72.131 | 7.7.1908 | 19.03.8 | 1.18.2 |
| node2 | 192.168.72.132 | 7.7.1908 | 19.03.8 | 1.18.2 |
| node3 | 192.168.72.133 | 7.7.1908 | 19.03.8 | 1.18.2 |

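Basic configuration

1. Host configuration:

# Set the hostname on each server with hostnamectl (or edit /etc/hostname directly); on each server run only the command that matches it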

hostnamectl --static set-hostname master

hostnamectl --static set-hostname node2

hostnamectl --static set-hostname node3

# Run on every machine

cat >> /etc/hosts << EOF

192.168.72.131 master

192.168.72.132 node2

192.168.72.133 node3

EOF
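Running the `cat >> /etc/hosts` block a second time appends the same entries again. The following is a minimal sketch of an idempotent alternative. The function name `add_host_entry` and its optional file argument are illustrative assumptions, not part of the original steps.

```shell
# add_host_entry IP NAME [FILE]
# Append "IP NAME" to FILE (default: /etc/hosts) only if that exact line
# is not already there, so the step can be repeated safely.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # -q quiet, -x whole-line match, -F fixed string (no regex)
  if ! grep -qxF "$ip $name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Example (as root, on every machine):
#   add_host_entry 192.168.72.131 master
#   add_host_entry 192.168.72.132 node2
#   add_host_entry 192.168.72.133 node3
```

Passing a scratch file as the third argument makes it possible to try the function without touching the real /etc/hosts.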

2. Disable the firewall:

systemctl stop firewalld && systemctl disable firewalld

3. Disable swap

# Disable temporarily

swapoff -a

# Disable permanently (takes effect after a reboot): comment out the swap line in /etc/fstab

vi /etc/fstab

#/dev/mapper/centos-swap swap ...
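Editing /etc/fstab by hand on three machines is easy to get wrong, so the same change can be scripted. This is a sketch assuming GNU sed (as shipped with CentOS 7). The function name `disable_swap_in_fstab` and the `.bak` backup are illustrative choices.

```shell
# disable_swap_in_fstab [FILE]
# Comment out every active swap entry in FILE (default: /etc/fstab).
# A copy of the original is kept at FILE.bak.
disable_swap_in_fstab() {
  local file="${1:-/etc/fstab}"
  cp "$file" "$file.bak"
  # Skip lines that are already comments; on the rest, prefix "#" to any
  # line that has "swap" as a whitespace-delimited field.
  sed -i '/^[[:space:]]*#/!s/^\(.*[[:space:]]swap[[:space:]].*\)$/#\1/' "$file"
}

# Example (as root): disable_swap_in_fstab && swapoff -a
```

Because commented lines are skipped, running the function twice does not add a second `#`.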

4. Disable SELinux

# Check the current status

getenforce

# Disable temporarily

setenforce 0

# Disable permanently (requires a reboot): set the following parameter to disabled

vim /etc/sysconfig/selinux

SELINUX=disabled
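The permanent SELinux change can also be made non-interactively. The sketch below assumes GNU sed and targets /etc/selinux/config, because on CentOS 7 /etc/sysconfig/selinux is a symlink to that file and `sed -i` would replace the symlink with a regular file. The function name `set_selinux_disabled` is hypothetical.

```shell
# set_selinux_disabled [FILE]
# Rewrite the SELINUX= line in FILE (default: /etc/selinux/config).
# The pattern requires "=" right after SELINUX, so SELINUXTYPE= is untouched.
set_selinux_disabled() {
  local file="${1:-/etc/selinux/config}"
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}

# Example (as root): set_selinux_disabled && setenforce 0
```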

5. Adjust the network configuration

# Run on all machines

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system
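The two `net.bridge.*` keys are provided by the `br_netfilter` kernel module, so `sysctl --system` may report them as missing if that module is not loaded. The sketch below persists the module across reboots. The function name `persist_br_netfilter` and its `ROOT` prefix argument (useful for a dry run) are assumptions introduced here, and whether the module needs loading by hand depends on the kernel in use.

```shell
# persist_br_netfilter [ROOT]
# Write a modules-load.d entry so br_netfilter is loaded at boot.
# ROOT is an optional path prefix; leave it empty to write to the real /etc.
persist_br_netfilter() {
  local root="${1:-}"
  mkdir -p "$root/etc/modules-load.d"
  printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"
}

# On a real node (as root) one would then run:
#   persist_br_netfilter
#   modprobe br_netfilter   # load the module immediately
#   sysctl --system         # re-apply /etc/sysctl.d/k8s.conf
```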

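6. Synchronize time (if needed)

# Set the time zone to Asia/Shanghai on all machines

ln -snf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

bash -c "echo 'Asia/Shanghai' > /etc/timezone"

# Sync the clock against the Aliyun NTP server

yum install -y ntpdate # install the ntpdate tool

ntpdate ntp1.aliyun.com # update the time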

Install Docker

1. Remove any existing Docker components

yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-selinux \
    docker-engine-selinux \
    docker-engine

2. Configure the Docker yum repository

yum install -y yum-utils device-mapper-persistent-data lvm2

# Note: this uses the Aliyun mirror, which suits users in mainland China

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

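3. List the available Docker versions, then choose one and install it

yum list docker-ce --showduplicates | sort -r

# Install the latest docker-ce directly

yum install -y docker-ce

# Note: to install a specific version instead, use a command like the one below

yum install -y docker-ce-3:19.03.8-3.el7.x86_64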

4. Start Docker

systemctl enable docker && systemctl start docker

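5. Switch the image registry mirror to the Aliyun Docker registry

# Log in to your Aliyun account and go to: Console --> Container Registry (searchable) --> Image Center --> Image Accelerator

The accelerator address shown there has the form: "https://xxxxxxx.mirror.aliyuncs.com"

Accelerator page: https://cr.console.aliyun.com/cn-hangzhou/instances/mirrors

# On Linux the default file is /etc/docker/daemon.json; add the registry mirror below (substitute your own accelerator address)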

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://79e563fi.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2",

"storage-opts": [

"overlay2.override_kernel_check=true"

]

}

EOF

# Or, a minimal variant that sets only the registry mirror

tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://79e563fi.mirror.aliyuncs.com"]
}
EOF

# Restart Docker for the change to take effect

systemctl daemon-reload && systemctl restart docker

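Install the K8S components:

1. Update the K8S repository (all nodes)

# Aliyun mirror pages for reference

https://developer.aliyun.com/mirror/

https://developer.aliyun.com/mirror/kubernetes?spm=a2c6h.13651102.0.0.3e221b11QNoepV

# Write the repo file that points at the mirror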

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

# Install the components

yum install -y kubelet kubeadm kubectl
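The unpinned `yum install` above picks whatever version is newest in the repository, which may not match the 1.18.2 listed in the server table. A small sketch of pinning the three packages to one version follows. The helper name `k8s_packages` is hypothetical, and it assumes the mirror still carries the requested version.

```shell
# k8s_packages VERSION
# Print version-pinned package names (name-version form) for yum.
k8s_packages() {
  local version="$1"
  printf 'kubelet-%s kubeadm-%s kubectl-%s\n' "$version" "$version" "$version"
}

# Example (as root; the unquoted substitution is intentional so that
# yum receives three separate arguments):
#   yum install -y $(k8s_packages 1.18.2)
```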

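# Enable and start the kubelet service (until the master node has been initialized, kubelet will keep failing to start; this is expected)

systemctl enable kubelet && systemctl start kubelet

Reference: kubelet startup exceptions and startup failures

The steps above must be run on every node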

Configure the master server

Configure the k8s initialization file

1. Generate the default configuration file on the master node

kubeadm config print init-defaults > init-kubeadm.conf

2. Edit the main parameters in init-kubeadm.conf

# localAPIEndpoint: set advertiseAddress to the master IP; leave the default port unchanged

localAPIEndpoint:
  advertiseAddress: 192.168.72.131 # the master's IP
  bindPort: 6443

# imageRepository: k8s.gcr.io # replace the k8s image repository

imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers

# kubernetesVersion: v1.18.0 # change the version to v1.18.2

kubernetesVersion: v1.18.2

# Configure the subnets

networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.246.0.0/16 # add the pod subnet used by flannel; it must match "Network" in kube-flannel.yml
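The four edits to init-kubeadm.conf can be applied with sed instead of an editor. This is a sketch under two assumptions: GNU sed (the `\n` in the replacement is a GNU extension), and a file laid out like the default output of `kubeadm config print init-defaults`. The function name `patch_kubeadm_config` is made up for illustration.

```shell
# patch_kubeadm_config FILE MASTER_IP VERSION POD_CIDR
# Set advertiseAddress, imageRepository and kubernetesVersion in FILE,
# and add podSubnet under networking if it is not there yet.
patch_kubeadm_config() {
  local file="$1" ip="$2" version="$3" pod_cidr="$4"
  local repo="registry.cn-hangzhou.aliyuncs.com/google_containers"
  sed -i \
    -e "s|^\([[:space:]]*advertiseAddress:\).*|\1 $ip|" \
    -e "s|^imageRepository:.*|imageRepository: $repo|" \
    -e "s|^kubernetesVersion:.*|kubernetesVersion: $version|" \
    "$file"
  # Insert podSubnet right after serviceSubnet, reusing its indentation.
  if ! grep -q 'podSubnet:' "$file"; then
    sed -i "s|^\([[:space:]]*\)serviceSubnet:.*|&\n\1podSubnet: $pod_cidr|" "$file"
  fi
}

# Example:
#   patch_kubeadm_config init-kubeadm.conf 192.168.72.131 v1.18.2 10.246.0.0/16
```

It is worth diffing the result against the original file before running `kubeadm init`, since the patterns only match the default layout.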

3. Pull the k8s component images

# List the images required for installation

kubeadm config images list --config init-kubeadm.conf

# Output before changing the image repository, i.e. with imageRepository: k8s.gcr.io

k8s.gcr.io/kube-apiserver:v1.18.2

k8s.gcr.io/kube-controller-manager:v1.18.2

k8s.gcr.io/kube-scheduler:v1.18.2

k8s.gcr.io/kube-proxy:v1.18.2

k8s.gcr.io/pause:3.2

k8s.gcr.io/etcd:3.4.3-0

k8s.gcr.io/coredns:1.6.7

# Output after changing the image repository, i.e. with imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.18.2

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.18.2

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.18.2

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.18.2

registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2

registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.6.7

# Pull the images according to the configuration file; this uses the repository set in imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers

kubeadm config images pull --config init-kubeadm.conf

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.18.2

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.18.2

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.18.2

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.18.2

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.2

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:1.6.7

4. Initialize k8s

# init

# If kubeadm init has already been run on this machine, add --ignore-preflight-errors=all to skip the resulting preflight errors

kubeadm init --config init-kubeadm.conf

W0506 23:11:46.807147 11111 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
[init] Using Kubernetes version: v1.18.2
[preflight] Running pre-flight checks
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.72.131]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [master localhost] and IPs [192.168.72.131 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.72.131 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
W0506 23:11:53.514098 11111 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
[control-plane] Creating static Pod manifest for "kube-scheduler"
W0506 23:11:53.515754 11111 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 21.505498 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node master as control-plane by adding the label "node-role.kubernetes.io/master=''"
[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
[bootstrap-token] Using token: abcdef.0123456789abcdef
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

# After initialization, run the commands shown in the output and record the join token

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.72.131:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:4082a7afce070910097c7926919f404cd25eb3be614598139704fd0367149aee

# Record the join command here. If the join token is lost, regenerate the command with: kubeadm token create --print-join-command

# Joining the other nodes to the cluster (do not run this step yet)

kubeadm join 192.168.72.131:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:4082a7afce070910097c7926919f404cd25eb3be614598139704fd0367149aee

# Start kubelet

systemctl enable kubelet && systemctl start kubelet

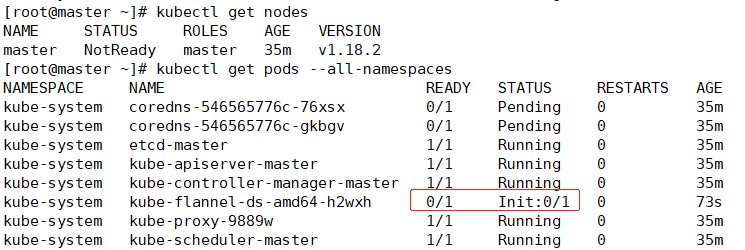

5. Check the master node after initialization

# Check node status

# kubectl get nodes

NAME     STATUS     ROLES    AGE   VERSION
master   NotReady   master   16m   v1.18.2

The node is NotReady because no network add-on such as flannel or Calico is installed yet.

# Check component status

# kubectl get cs

NAME                 STATUS    MESSAGE             ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true"}

# Check the pods created during initialization

# kubectl get pods --all-namespaces

NAMESPACE     NAME                             READY   STATUS    RESTARTS   AGE
kube-system   coredns-546565776c-76xsx         0/1     Pending   0          16m
kube-system   coredns-546565776c-gkbgv         0/1     Pending   0          16m
kube-system   etcd-master                      1/1     Running   0          16m
kube-system   kube-apiserver-master            1/1     Running   0          16m
kube-system   kube-controller-manager-master   1/1     Running   0          16m
kube-system   kube-proxy-9889w                 1/1     Running   0          16m
kube-system   kube-scheduler-master            1/1     Running   0          16m

The coredns pods stay Pending until a network add-on is installed.
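When checking several nodes, it can help to list only the ones that are not ready. A minimal sketch that filters the `kubectl get nodes` table with awk follows. The function name `not_ready_nodes` is hypothetical, and it treats any STATUS other than exactly `Ready` as not ready.

```shell
# not_ready_nodes
# Read `kubectl get nodes` output on stdin and print the NAME of every node
# whose STATUS column is not exactly "Ready". The header row is skipped.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example: kubectl get nodes | not_ready_nodes
```

An empty result means every listed node reported `Ready`.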

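6. Install the flannel network add-on

Get the flannel network component: https://github.com/coreos/flannel

There are two ways to deploy it:

1. Fetch the manifest online and apply it directly; the images are pulled based on the manifest and flannel is started.

2. flannel's default network is 10.244.0.0/16. To use a different network, download the YAML file first and change the network before applying it. Because podSubnet in init-kubeadm.conf is 10.246.0.0/16, this guide needs this option.

# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# vim kube-flannel.yml

net-conf.json: |
  {
    "Network": "10.246.0.0/16",
    "Backend": {
      "Type": "vxlan"
    }
  }

# kubectl apply -f kube-flannel.yml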
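The "Network" value in kube-flannel.yml can be changed with sed rather than in vim. This sketch assumes GNU sed and that the manifest contains a line of the form `"Network": "10.244.0.0/16",` as in the net-conf.json excerpt. The function name `set_flannel_network` is an illustrative assumption.

```shell
# set_flannel_network FILE CIDR
# Replace the value of the "Network" key in FILE with CIDR.
set_flannel_network() {
  local file="$1" cidr="$2"
  sed -i "s|\"Network\": \"[^\"]*\"|\"Network\": \"$cidr\"|" "$file"
}

# Example:
#   set_flannel_network kube-flannel.yml 10.246.0.0/16
#   kubectl apply -f kube-flannel.yml
```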

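Online deployment of flannel (this applies the manifest unmodified, so use it only when podSubnet is flannel's default 10.244.0.0/16)

# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml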

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds-amd64 created

daemonset.apps/kube-flannel-ds-arm64 created

daemonset.apps/kube-flannel-ds-arm created

daemonset.apps/kube-flannel-ds-ppc64le created

daemonset.apps/kube-flannel-ds-s390x created

# The flannel images are being downloaded

# State after the flannel images have been downloaded and loaded

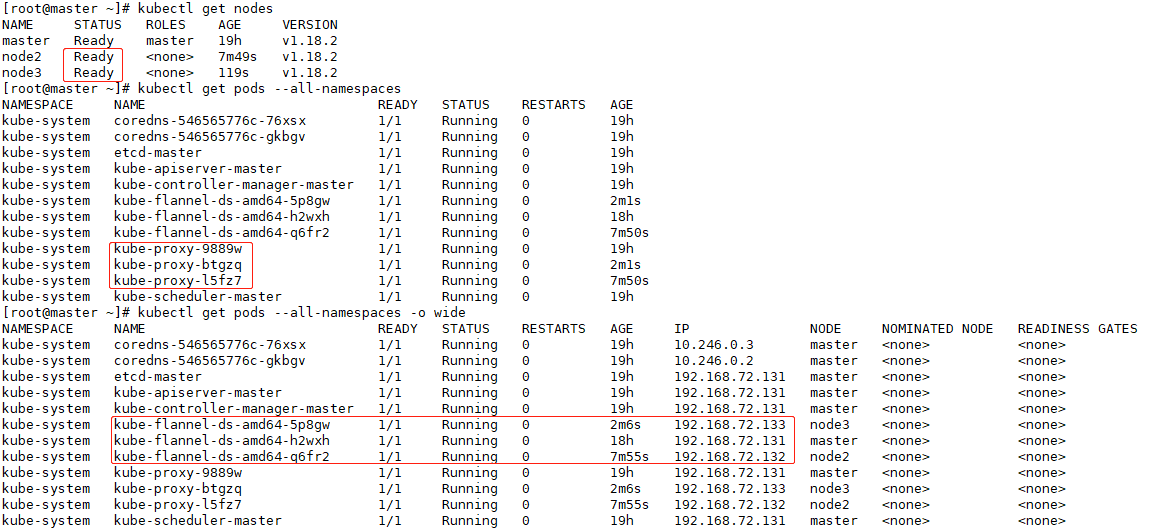

Configure the nodes

Initial setup on the worker nodes

# Copy $HOME/.kube/config from the master to each node

scp $HOME/.kube/config root@node2:~/

scp $HOME/.kube/config root@node3:~/

# Run the commands below on node2 and node3;

# otherwise kubectl reports an error on those nodes

mkdir -p $HOME/.kube

mv $HOME/config $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

Register the nodes with the master

# Join directly with the command printed by kubeadm init

kubeadm join 192.168.72.131:6443 --token abcdef.0123456789abcdef \
    --discovery-token-ca-cert-hash sha256:4082a7afce070910097c7926919f404cd25eb3be614598139704fd0367149aee

# If the join command has been lost, regenerate it on the master with:

kubeadm token create --print-join-command
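If only the `--discovery-token-ca-cert-hash` value is needed, it can be recomputed from the cluster CA certificate. The pipeline below follows the openssl recipe given in the kubeadm documentation. The wrapper name `ca_cert_hash` is hypothetical, and the default path assumes the certificate directory shown in the init log (/etc/kubernetes/pki) with an RSA CA key.

```shell
# ca_cert_hash [CERT]
# Print the SHA-256 hash of the DER-encoded public key of CERT
# (default: /etc/kubernetes/pki/ca.crt) as 64 hex characters.
ca_cert_hash() {
  local cert="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$cert" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Example (on the master): echo "sha256:$(ca_cert_hash)"
```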

Configure the etcdctl command

References: etcdctl binary release site; using etcdctl

Download the archive, extract it to /usr/local/etcd, then create symbolic links

mkdir -pv /usr/local/etcd/ && tar xzvf etcd-v3.4.9-linux-amd64.tar.gz -C /usr/local/etcd --strip-components=1

/usr/local/etcd/etcdctl version && echo " " && /usr/local/etcd/etcd --version

ln -s /usr/local/etcd/etcd /usr/bin/etcd && ln -s /usr/local/etcd/etcdctl /usr/bin/etcdctl
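Once etcdctl is on the PATH, talking to the etcd instance that kubeadm started requires TLS client flags. The wrapper below is a sketch: the name `etcdctl_k8s` is made up, the certificate paths assume the /etc/kubernetes/pki directory and the "etcd/ca" and "etcd/server" certificates named in the init log, and the endpoint assumes etcd's default client port 2379 on localhost.

```shell
# etcdctl_k8s ARGS...
# Run etcdctl (v3 API) against the local kubeadm-managed etcd, passing
# ARGS through unchanged.
etcdctl_k8s() {
  local pki="/etc/kubernetes/pki/etcd"
  ETCDCTL_API=3 etcdctl \
    --endpoints=https://127.0.0.1:2379 \
    --cacert="$pki/ca.crt" \
    --cert="$pki/server.crt" \
    --key="$pki/server.key" \
    "$@"
}

# Example (on the master, as root): etcdctl_k8s member list
```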

Follow-up operations:

1. Update an image (for reference)

kubectl set image deployment nginx-deployment nginx=nginx:1.14.4 # update the image

kubectl rollout status deployment nginx-deployment # watch the rollout progress
