正文

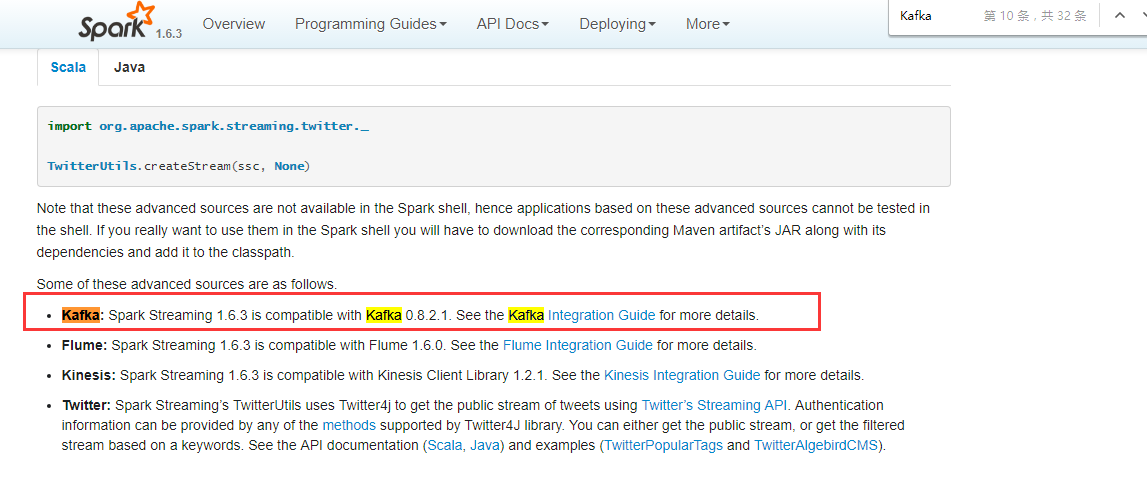

首先打开spark官网,找一个自己用版本我选的是1.6.3的,然后进入SparkStreaming ,通过搜索这个位置找到Kafka,

点击过去会找到一段Scala的代码

import org.apache.spark.streaming.kafka._

val kafkaStream = KafkaUtils.createStream(streamingContext,

[ZK quorum], [consumer group id], [per-topic number of Kafka partitions to consume])如果想看createStream方法,可以值通过SparkStreaming中的 Where to go from here 中看到,有Java,Scala,Python的documents选择自己编码的一种点击进去。我这里用的Scala,点击KafkaUtils进去后会看到这个类中有很多的方法,其中我们要找的是createStream方法,看看有哪些重载。我们把这个方法的解释赋值过来。

defcreateStream(jssc: JavaStreamingContext, zkQuorum: String, groupId: String, topics: Map[String, Integer]): JavaPairReceiverInputDStream[String, String]

最后我们在IDEA中写Scala获取Kafka代码

def main(args: Array[String]): Unit = {

val spark = SparkSession.builder()

.appName(Constants.SPARK_APP_NAME_PRODUCT)

.getOrCreate()

val map = Map("topic" -> 1)

val ssc = new StreamingContext(spark.sparkContext, Seconds(5))

val createStream: ReceiverInputDStream[(String, String)] = KafkaUtils.createStream(ssc, "hadoop01:9092,hadoop02:9092,hadoop03:9092", "groupId", map, StorageLevel.MEMORY_AND_DISK_SER)

val map1: DStream[String] = createStream.map(_._2)

}

简答的代码过程,因为还有一些后续的工作要做,所以只是简单的写了一些从Kafa获取数据的代码从官网查找的一个过程,也是怀着学习的态度与大家一起交流,希望大牛们多多指点。

Create an input stream that pulls messages from Kafka Brokers. Storage level of the data will be the default StorageLevel.MEMORY_AND_DISK_SER_2.

JavaStreamingContext object

Zookeeper quorum (hostname:port,hostname:port,..)

The group id for this consumer

Map of (topic_name -> numPartitions) to consume. Each partition is consumed in its own thread

DStream of (Kafka message key, Kafka message value)