为什么要初始化

非线性VINS估计器的性能对于初始的速度,尺度,重力向量,空间点3D位置,以及外参等非常敏感。在很多场合中,能做到相机和IMU即插即用,线上自动校准与初始化,将会给用户带来极大的方便性。VINS里面分四步进行,第一个就是上次讲的旋转外参校准,第二个就是找到某帧作为系统初始化原点,计算3D地图点,第三就是将相机坐标系转到IMU坐标系中,第四就是相机与IMU对齐,包括IMU零偏初始化,速度,重力向量,尺度初始化

初始化系统原点与转换到IMU坐标系

bool Estimator::initialStructure()

{

TicToc t_sfm;

//check imu observibility, 通过协方差检测IMU的可观测性

{

map<double, ImageFrame>::iterator frame_it;

Vector3d sum_g;

for (frame_it = all_image_frame.begin(), frame_it++; frame_it != all_image_frame.end(); frame_it++)

{

double dt = frame_it->second.pre_integration->sum_dt;

Vector3d tmp_g = frame_it->second.pre_integration->delta_v / dt;

sum_g += tmp_g;

}

Vector3d aver_g;

aver_g = sum_g * 1.0 / ((int)all_image_frame.size() - 1);

double var = 0;

for (frame_it = all_image_frame.begin(), frame_it++; frame_it != all_image_frame.end(); frame_it++)

{

double dt = frame_it->second.pre_integration->sum_dt;

Vector3d tmp_g = frame_it->second.pre_integration->delta_v / dt;

var += (tmp_g - aver_g).transpose() * (tmp_g - aver_g);

//cout << "frame g " << tmp_g.transpose() << endl;

}

var = sqrt(var / ((int)all_image_frame.size() - 1));

//ROS_WARN("IMU variation %f!", var);

if(var < 0.25)

{

ROS_INFO("IMU excitation not enouth!");

//return false;

}

}

// global sfm 准备

Quaterniond Q[frame_count + 1];

Vector3d T[frame_count + 1];

map<int, Vector3d> sfm_tracked_points;

vector<SFMFeature> sfm_f;

for (auto &it_per_id : f_manager.feature)

{

int imu_j = it_per_id.start_frame - 1;

SFMFeature tmp_feature;

tmp_feature.state = false;

tmp_feature.id = it_per_id.feature_id;

for (auto &it_per_frame : it_per_id.feature_per_frame)

{

imu_j++;

Vector3d pts_j = it_per_frame.point;

tmp_feature.observation.push_back(make_pair(imu_j, Eigen::Vector2d{pts_j.x(), pts_j.y()}));

}

sfm_f.push_back(tmp_feature);

}

//在窗口内选择跟最后一帧视差最大的帧,利用五点法计算相对旋转和平移量

Matrix3d relative_R;

Vector3d relative_T;

int l;

if (!relativePose(relative_R, relative_T, l))

{

ROS_INFO("Not enough features or parallax; Move device around");

return false;

}

// 为了更方便理解,把relativePose 函数取了出来

| ----------------------------------------------------------------------------------------------------------

| bool Estimator::relativePose(Matrix3d &relative_R, Vector3d &relative_T, int &l)

| {

| // find previous frame which contians enough correspondance and parallex with newest frame,循环找出和最新帧视差最大的历史帧

| for (int i = 0; i < WINDOW_SIZE; i++)

| {

| vector<pair<Vector3d, Vector3d>> corres;

| corres = f_manager.getCorresponding(i, WINDOW_SIZE);

| if (corres.size() > 20)

| {

| double sum_parallax = 0;

| double average_parallax;

| for (int j = 0; j < int(corres.size()); j++)

| {

| Vector2d pts_0(corres[j].first(0), corres[j].first(1));

| Vector2d pts_1(corres[j].second(0), corres[j].second(1));

| double parallax = (pts_0 - pts_1).norm();

| sum_parallax = sum_parallax + parallax;

| }

| average_parallax = 1.0 * sum_parallax / int(corres.size());

| // 找到后就可以利用五点法计算,如果计算失败就继续循环

| if(average_parallax * 460 > 30 && m_estimator.solveRelativeRT(corres, relative_R, relative_T))

| {

| l = i;

| ROS_DEBUG("average_parallax %f choose l %d and newest frame to triangulate the whole structure", average_parallax * 460, l);

| return true;

| }

| }

| }

| return false;

| }

| // 核心的五点法,也是求本质矩阵

| bool MotionEstimator::solveRelativeRT(const vector<pair<Vector3d, Vector3d>> &corres, Matrix3d &Rotation, Vector3d &Translation)

| {

| if (corres.size() >= 15)

| {

| vector<cv::Point2f> ll, rr;

| for (int i = 0; i < int(corres.size()); i++)

| {

| ll.push_back(cv::Point2f(corres[i].first(0), corres[i].first(1)));

| rr.push_back(cv::Point2f(corres[i].second(0), corres[i].second(1)));

| }

| cv::Mat mask;

| cv::Mat E = cv::findFundamentalMat(ll, rr, cv::FM_RANSAC, 0.3 / 460, 0.99, mask);

| cv::Mat cameraMatrix = (cv::Mat_<double>(3, 3) << 1, 0, 0, 0, 1, 0, 0, 0, 1);

| cv::Mat rot, trans;

| // recoverPose内用了一些复杂的条件判断如何选取R和T,但是其用了单位矩阵作为cameraMatrix,feature_tracker_node在处理图像的是已经把图像坐标转为归一化的相机坐标了,并且进行了去畸变,所以之后的重投影部分只需要外参,不再需要内参了.

| int inlier_cnt = cv::recoverPose(E, ll, rr, cameraMatrix, rot, trans, mask);

| //cout << "inlier_cnt " << inlier_cnt << endl;

|

| Eigen::Matrix3d R;

| Eigen::Vector3d T;

| for (int i = 0; i < 3; i++)

| {

| T(i) = trans.at<double>(i, 0);

| for (int j = 0; j < 3; j++)

| R(i, j) = rot.at<double>(i, j);

| }

|

| Rotation = R.transpose();

| Translation = -R.transpose() * T;

| if(inlier_cnt > 12)

| return true;

| else

| return false;

| }

| return false;

| }

| ----------------------------------------------------------------------------------------------------------

GlobalSFM sfm;

/* sfm.construct 函数本身就很巨大,里面很多步骤,代码就不贴了,大致如下:

1. 三角化l 帧与frame_num - 1帧,得到一些地图点。

2. 通过这些地图点,利用pnp的方法计算l+1, l+2, l+3, …… frame_num-2 相对于l的位姿。而且每计算一帧,就会与frame_num - 1帧进行三角化,得出更多地图点

3. 三角化l+1, l+2 …… frame_num-2帧与l帧

4. 对l-1, l-2, l-3 等帧与sfm_f的特征点队列进行pnp求解,得出相对于l的位姿,并三角化其与l帧。

5. 三角化剩余的点

6. ceres全局BA优化,最小化3d投影误差

*/

if(!sfm.construct(frame_count + 1, Q, T, l,

relative_R, relative_T,

sfm_f, sfm_tracked_points))

{

ROS_DEBUG("global SFM failed!");

marginalization_flag = MARGIN_OLD;

return false;

}

//solve pnp for all frame,因为有continue存在,怎么会solve all,感觉只是不满足时间戳相等的才pnp求解

map<double, ImageFrame>::iterator frame_it;

map<int, Vector3d>::iterator it;

frame_it = all_image_frame.begin( );

for (int i = 0; frame_it != all_image_frame.end( ); frame_it++)

{

// provide initial guess

cv::Mat r, rvec, t, D, tmp_r;

if((frame_it->first) == Headers[i].stamp.toSec())

{

frame_it->second.is_key_frame = true;

frame_it->second.R = Q[i].toRotationMatrix() * RIC[0].transpose();

frame_it->second.T = T[i];

i++;

continue;

}

if((frame_it->first) > Headers[i].stamp.toSec())

{

i++;

}

Matrix3d R_inital = (Q[i].inverse()).toRotationMatrix();

Vector3d P_inital = - R_inital * T[i];

cv::eigen2cv(R_inital, tmp_r);

cv::Rodrigues(tmp_r, rvec);

cv::eigen2cv(P_inital, t);

frame_it->second.is_key_frame = false;

vector<cv::Point3f> pts_3_vector;

vector<cv::Point2f> pts_2_vector;

for (auto &id_pts : frame_it->second.points)

{

int feature_id = id_pts.first;

for (auto &i_p : id_pts.second)

{

it = sfm_tracked_points.find(feature_id);

if(it != sfm_tracked_points.end())

{

Vector3d world_pts = it->second;

cv::Point3f pts_3(world_pts(0), world_pts(1), world_pts(2));

pts_3_vector.push_back(pts_3);

Vector2d img_pts = i_p.second.head<2>();

cv::Point2f pts_2(img_pts(0), img_pts(1));

pts_2_vector.push_back(pts_2);

}

}

}

cv::Mat K = (cv::Mat_<double>(3, 3) << 1, 0, 0, 0, 1, 0, 0, 0, 1);

if(pts_3_vector.size() < 6)

{

cout << "pts_3_vector size " << pts_3_vector.size() << endl;

ROS_DEBUG("Not enough points for solve pnp !");

return false;

}

if (! cv::solvePnP(pts_3_vector, pts_2_vector, K, D, rvec, t, 1))

{

ROS_DEBUG("solve pnp fail!");

return false;

}

cv::Rodrigues(rvec, r);

MatrixXd R_pnp,tmp_R_pnp;

cv::cv2eigen(r, tmp_R_pnp);

R_pnp = tmp_R_pnp.transpose();

MatrixXd T_pnp;

cv::cv2eigen(t, T_pnp);

T_pnp = R_pnp * (-T_pnp);

frame_it->second.R = R_pnp * RIC[0].transpose();

frame_it->second.T = T_pnp;

}

if (visualInitialAlign())

return true;

else

{

ROS_INFO("misalign visual structure with IMU");

return false;

}

}

相机与IMU对齐

bool Estimator::visualInitialAlign()

{

TicToc t_g;

VectorXd x;

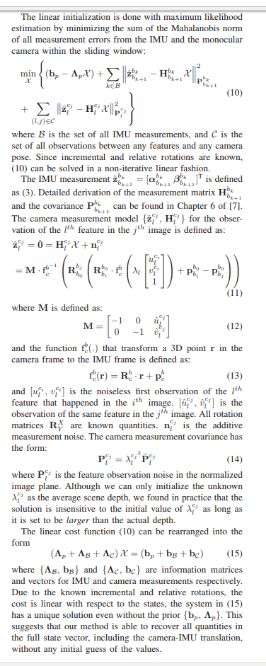

/*里面包含

1. solveGyroscopeBias()零偏初始化: 在滑动窗口中,每两帧之间的相对旋转与IMU预积分产生的旋转进行最小二乘法优化,用ldlt求解

2. LinearAlignment() 尺度初始化:由于在视觉初始化SFM的过程中,将其中位姿变化较大的两帧中使用E矩阵求解出来旋转和位移,后续的PnP和三角化都是在这个尺度下完成的。所以当前的尺度与IMU测量出来的真实世界尺度肯定不是一致的,所以需要这里进行对齐。这里对齐的方法主要是通过在滑动窗口中每两帧之间的位置和速度与IMU预积分出来的位置和速度组成一个最小二乘法的形式,然后求解出来

3. RefineGravity()重力向量优化:进一步细化重力加速度,提高估计值的精度,形式与LinearAlignment()是一致的,只是将g改为g⋅ĝ +w1b1+w2b2

2和3的公式相对难懂,请参考下面图片

*/

bool result = VisualIMUAlignment(all_image_frame, Bgs, g, x);

if(!result)

{

ROS_DEBUG("solve g failed!");

return false;

}

// change state

for (int i = 0; i <= frame_count; i++)

{

Matrix3d Ri = all_image_frame[Headers[i].stamp.toSec()].R;

Vector3d Pi = all_image_frame[Headers[i].stamp.toSec()].T;

Ps[i] = Pi;

Rs[i] = Ri;

all_image_frame[Headers[i].stamp.toSec()].is_key_frame = true;

}

VectorXd dep = f_manager.getDepthVector();

for (int i = 0; i < dep.size(); i++)

dep[i] = -1;

f_manager.clearDepth(dep);

//triangulat on cam pose , no tic三角化特征点,

Vector3d TIC_TMP[NUM_OF_CAM];

for(int i = 0; i < NUM_OF_CAM; i++)

TIC_TMP[i].setZero();

ric[0] = RIC[0];

f_manager.setRic(ric);

f_manager.triangulate(Ps, &(TIC_TMP[0]), &(RIC[0]));

//更新相机速度,位置和旋转量(通过精确求解的尺度,重力向量)

double s = (x.tail<1>())(0);

for (int i = 0; i <= WINDOW_SIZE; i++)

{

pre_integrations[i]->repropagate(Vector3d::Zero(), Bgs[i]);

}

for (int i = frame_count; i >= 0; i--)

Ps[i] = s * Ps[i] - Rs[i] * TIC[0] - (s * Ps[0] - Rs[0] * TIC[0]);

int kv = -1;

map<double, ImageFrame>::iterator frame_i;

for (frame_i = all_image_frame.begin(); frame_i != all_image_frame.end(); frame_i++)

{

if(frame_i->second.is_key_frame)

{

kv++;

Vs[kv] = frame_i->second.R * x.segment<3>(kv * 3);

}

}

for (auto &it_per_id : f_manager.feature)

{

it_per_id.used_num = it_per_id.feature_per_frame.size();

if (!(it_per_id.used_num >= 2 && it_per_id.start_frame < WINDOW_SIZE - 2))

continue;

it_per_id.estimated_depth *= s;

}

Matrix3d R0 = Utility::g2R(g);

double yaw = Utility::R2ypr(R0 * Rs[0]).x();

R0 = Utility::ypr2R(Eigen::Vector3d{-yaw, 0, 0}) * R0;

g = R0 * g;

//Matrix3d rot_diff = R0 * Rs[0].transpose();

Matrix3d rot_diff = R0;

for (int i = 0; i <= frame_count; i++)

{

Ps[i] = rot_diff * Ps[i];

Rs[i] = rot_diff * Rs[i];

Vs[i] = rot_diff * Vs[i];

}

ROS_DEBUG_STREAM("g0 " << g.transpose());

ROS_DEBUG_STREAM("my R0 " << Utility::R2ypr(Rs[0]).transpose());

return true;

}