1. Reading the data

    import csv

    file_path = r'C:Userszgz机器学习RobitStuSMSSpamCollection'
    email_data = []   # preprocessed message texts
    email_label = []  # labels
    # SMSSpamCollection is tab-separated: the label first, then the message text
    with open(file_path, 'r', encoding='utf-8') as email:  # open the file
        csv_reader = csv.reader(email, delimiter='\t')
        for line in csv_reader:
            email_label.append(line[0])
            email_data.append(preprocessing(line[1]))  # preprocess each message
    print("Total number of messages:", len(email_data))
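The reading step can be tried without the dataset file. The sketch below is an illustration only: the two sample lines are made up, and `quoting=csv.QUOTE_NONE` is an optional choice that is not in the original code (it keeps stray double quotes inside a message from being interpreted as CSV quoting).

```python
import csv
import io

# Two made-up lines in the "label<TAB>text" layout (illustrative, not real data).
sample = "ham\tSee you at lunch, ok?\nspam\tWin a free prize now\n"

labels, texts = [], []
# QUOTE_NONE: treat double quotes inside a message as ordinary characters.
reader = csv.reader(io.StringIO(sample), delimiter="\t", quoting=csv.QUOTE_NONE)
for row in reader:
    labels.append(row[0])  # first field: the label
    texts.append(row[1])   # second field: the raw message text

print(labels)
print(texts)
```

Splitting on the tab keeps the whole message in `row[1]`, including any spaces and commas it contains.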

2. Data preprocessing

    import nltk
    from nltk.corpus import stopwords
    from nltk.stem import WordNetLemmatizer

    # Map a Treebank POS tag to the WordNet POS argument used for lemmatization
    def get_wordnet_pos(treebank_tag):
        if treebank_tag.startswith("J"):
            return nltk.corpus.wordnet.ADJ
        elif treebank_tag.startswith("V"):
            return nltk.corpus.wordnet.VERB
        elif treebank_tag.startswith("N"):
            return nltk.corpus.wordnet.NOUN
        elif treebank_tag.startswith("R"):
            return nltk.corpus.wordnet.ADV
        else:
            return nltk.corpus.wordnet.NOUN

    # Preprocessing
    def preprocessing(text):
        tokens = [word for sent in nltk.sent_tokenize(text) for word in nltk.word_tokenize(sent)]  # tokenize
        stops = stopwords.words('english')  # stop words
        tokens = [token.lower() for token in tokens if len(token) >= 3]  # lowercase, drop short tokens
        tokens = [token for token in tokens if token not in stops]  # remove stop words (after lowercasing, so "The" is caught too)
        tag = nltk.pos_tag(tokens)  # POS tagging
        lmtzr = WordNetLemmatizer()
        tokens = [lmtzr.lemmatize(token, pos=get_wordnet_pos(tag[i][1])) for i, token in enumerate(tokens)]  # lemmatize
        preprocessed_text = ' '.join(tokens)  # join with spaces so the vectorizer can split the tokens again
        return preprocessed_text
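To see what the filtering and tag-mapping steps do without downloading NLTK data, here is a dependency-free sketch. It is a stand-in, not the original pipeline: the regex tokenizer, the tiny `STOPS` set, and both function names are assumptions made for illustration, and the single letters are the values WordNet uses for its adjective, verb, noun, and adverb constants.

```python
import re

# Hypothetical mini stop-word list; the original uses NLTK's English list.
STOPS = {"the", "and", "you", "are", "for"}

def simple_preprocess(text):
    """Lowercase, tokenize on letters, drop stop words and tokens shorter than 3."""
    tokens = re.findall(r"[a-z]+", text.lower())
    kept = [t for t in tokens if t not in STOPS and len(t) >= 3]
    return " ".join(kept)  # space-joined so a vectorizer can split it again

def treebank_to_wordnet(tag):
    """Map a Penn Treebank tag to a WordNet POS letter, defaulting to noun."""
    for prefix, pos in (("J", "a"), ("V", "v"), ("N", "n"), ("R", "r")):
        if tag.startswith(prefix):
            return pos
    return "n"

print(simple_preprocess("You are the WINNER of a free prize!"))
print(treebank_to_wordnet("VBD"))
```

This sketch performs no lemmatization; it only shows the cleaning and the tag mapping that feed into it.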

3. Data splitting: training and test sets

from sklearn.model_selection import train_test_split

x_train, x_test, y_train, y_test = train_test_split(data, target, test_size=0.2, random_state=0, stratify=target)

    import numpy as np
    from sklearn.model_selection import train_test_split

    sms_data = np.array(email_data)    # texts collected in the reading step
    sms_label = np.array(email_label)  # labels collected in the reading step
    # test_size sets the fraction of samples held out for the test set
    x_train, x_test, y_train, y_test = train_test_split(
        sms_data, sms_label, test_size=0.2, random_state=0, stratify=sms_label)
    print("Original dataset size:", len(sms_data))
    print("Training set size:", len(x_train))
    print("Test set size:", len(x_test))
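The effect of `stratify` is easiest to see on toy data. The sketch below uses 100 placeholder messages with an 80/20 class ratio (made-up values, not the real dataset) and checks that both parts of the split keep that ratio.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Toy data: 100 placeholder messages, 80 "ham" and 20 "spam".
texts = np.array([f"message {i}" for i in range(100)])
labels = np.array(["ham"] * 80 + ["spam"] * 20)

x_tr, x_te, y_tr, y_te = train_test_split(
    texts, labels, test_size=0.2, random_state=0, stratify=labels
)

# With stratify, both parts keep the 80/20 class ratio.
train_spam_share = float(np.mean(y_tr == "spam"))
test_spam_share = float(np.mean(y_te == "spam"))
print(len(x_tr), len(x_te), train_spam_share, test_spam_share)
```

Without `stratify`, the spam share in a small test set can drift away from the share in the full data, which makes the evaluation less representative.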

4. Text feature extraction

sklearn.feature_extraction.text.CountVectorizer

sklearn.feature_extraction.text.TfidfVectorizer

from sklearn.feature_extraction.text import TfidfVectorizer

tfidf2 = TfidfVectorizer()

Observe the relationship between messages and vectors

Map a vector back to its message

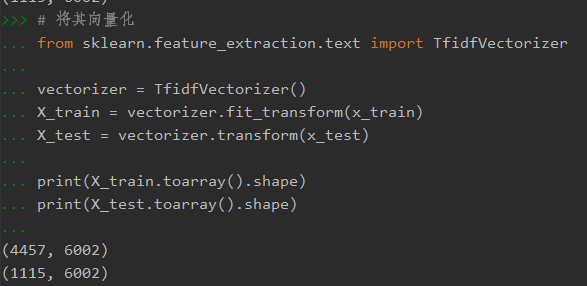

    from sklearn.feature_extraction.text import TfidfVectorizer

    vectorizer = TfidfVectorizer()
    X_train = vectorizer.fit_transform(x_train)  # learn the vocabulary from the training set only
    X_test = vectorizer.transform(x_test)        # reuse that vocabulary for the test set
    print(X_train.shape)  # sparse matrices expose .shape directly
    print(X_test.shape)

4. Model selection

from sklearn.naive_bayes import GaussianNB

from sklearn.naive_bayes import MultinomialNB

Explain why this model was chosen.

from sklearn.naive_bayes import MultinomialNB

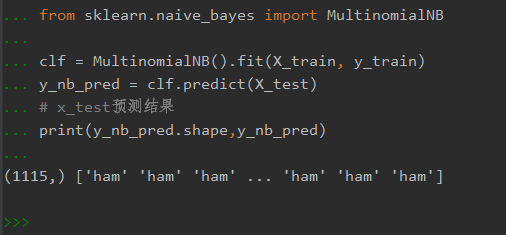

    clf = MultinomialNB().fit(X_train, y_train)
    y_nb_pred = clf.predict(X_test)  # predictions for the test set
    print(y_nb_pred.shape, y_nb_pred)
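One commonly cited reason for preferring `MultinomialNB` over `GaussianNB` on text is that it models non-negative, count-like features such as word counts (and in practice TF-IDF weights), whereas `GaussianNB` assumes continuous, normally distributed features. The sketch below fits `MultinomialNB` on four made-up messages; it uses `CountVectorizer` only to keep the toy example easy to check by hand.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB

# Made-up training messages, two per class.
train_texts = ["win free prize now", "free cash prize",
               "see you at lunch", "call me at home"]
train_labels = ["spam", "spam", "ham", "ham"]

vec = CountVectorizer()
X_tr = vec.fit_transform(train_texts)  # sparse, non-negative word counts
model = MultinomialNB().fit(X_tr, train_labels)

# New messages are transformed with the vocabulary learned above.
preds = list(model.predict(vec.transform(["free prize", "lunch at home"])))
print(preds)
```

Words seen only in spam messages pull the first prediction toward spam, and words seen only in ham messages pull the second toward ham.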

5. Model evaluation: confusion matrix and classification report

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_nb_pred)

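Explain the meaning of the confusion matrix:

A confusion matrix, also called an error matrix, is a standard format for evaluating classification accuracy, expressed as an n × n matrix.

Each column of the confusion matrix represents a predicted class, and the column total is the number of samples predicted as that class. Each row represents the true class, and the row total is the number of samples that actually belong to that class.

For a binary problem such as ham versus spam, the matrix holds four values (true positives, false positives, false negatives, and true negatives), which are often used to define other metrics.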
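The row and column convention described above can be checked on a handful of made-up labels. In this sketch the `labels` argument fixes the order of the classes, and treating "spam" as the positive class is an assumption made for illustration.

```python
from sklearn.metrics import confusion_matrix

y_true = ["ham", "ham", "ham", "spam", "spam", "ham"]  # made-up true labels
y_hat = ["ham", "ham", "spam", "spam", "ham", "ham"]   # made-up predictions

# labels fixes the row/column order: index 0 = ham, index 1 = spam.
cm = confusion_matrix(y_true, y_hat, labels=["ham", "spam"])
print(cm)

# Treating "spam" as the positive class, the four cells unpack as:
tn, fp, fn, tp = (int(v) for v in cm.ravel())
print(tn, fp, fn, tp)
```

Rows are the true classes and columns the predicted ones, so the first row sums to the four true ham messages and the second row to the two true spam messages.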

from sklearn.metrics import classification_report
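As a minimal sketch of how `classification_report` might be used, the example below runs it on six made-up labels. `output_dict=True` returns the same numbers as a nested dictionary, which is convenient when individual values are needed.

```python
from sklearn.metrics import classification_report

y_true = ["ham", "ham", "ham", "spam", "spam", "ham"]  # made-up true labels
y_hat = ["ham", "ham", "spam", "spam", "ham", "ham"]   # made-up predictions

print(classification_report(y_true, y_hat))  # human-readable table

report = classification_report(y_true, y_hat, output_dict=True)
spam_precision = report["spam"]["precision"]  # correct spam predictions / all spam predictions
spam_recall = report["spam"]["recall"]        # correct spam predictions / all true spam
accuracy = report["accuracy"]                 # correct predictions / all predictions
print(spam_precision, spam_recall, accuracy)
```

In the real pipeline the arguments would be `y_test` and the predictions returned by the classifier.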

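Explain what accuracy, precision, recall, and F-score each mean:

Accuracy is the most common evaluation metric and is easy to understand: it is the number of correctly classified samples divided by the total number of samples. Generally, the higher the accuracy, the better the classifier.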
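For reference, the textbook definitions of all four metrics in terms of the confusion-matrix cells (TP, FP, FN, TN) are given below. The "F-score" is assumed here to mean the F1 score, the harmonic mean of precision and recall.

```latex
\begin{aligned}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN} \\
\text{Precision} &= \frac{TP}{TP + FP} \\
\text{Recall}    &= \frac{TP}{TP + FN} \\
F_1              &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
\end{aligned}
```

Precision answers "of the messages flagged as spam, how many really were spam", while recall answers "of the real spam messages, how many were caught". On an imbalanced dataset, accuracy alone can look high even when recall on the minority class is poor.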

6. Comparison and summary

If CountVectorizer is used to generate the text features instead of TfidfVectorizer, how do the results compare?

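CountVectorizer: considers only how often each term appears in a text. It produces bag-of-words features.

TfidfVectorizer: in addition to how often a term appears in a text, it also takes into account how many texts contain that term. This reduces the influence of frequent but uninformative terms and brings out more meaningful features. It produces TF-IDF features.

By comparison, the more text entries there are, the more pronounced the effect of TF-IDF becomes.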
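The weighting described above can be written out explicitly. The formula below is the one documented for scikit-learn's `TfidfVectorizer` with its default settings (`smooth_idf=True`, `norm='l2'`); other libraries and textbooks use slightly different variants. Here n is the number of documents, df(t) the number of documents containing term t, and tf(t, d) the count of t in document d.

```latex
\begin{aligned}
\mathrm{idf}(t) &= \ln\frac{1 + n}{1 + \mathrm{df}(t)} + 1 \\
\text{tf-idf}(t, d) &= \mathrm{tf}(t, d) \cdot \mathrm{idf}(t)
\end{aligned}
```

Each document vector is then scaled to unit Euclidean length. A term that appears in every document gets the minimum idf of 1, so its weight is not boosted, while rarer terms receive larger weights.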

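Compared with TfidfVectorizer, CountVectorizer predicts the negative class more accurately but is slightly weaker on the positive class. Its overall accuracy is also slightly higher than TfidfVectorizer's, so CountVectorizer appears to be the better fit for prediction here.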
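A comparison like the one above could be set up as in the following sketch. The helper name `compare_vectorizers` and the toy messages are assumptions made for illustration; scores on such tiny data say nothing about the real dataset.

```python
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer
from sklearn.metrics import accuracy_score
from sklearn.naive_bayes import MultinomialNB

def compare_vectorizers(train_texts, train_labels, test_texts, test_labels):
    """Fit MultinomialNB on each feature type and return test accuracy per vectorizer."""
    scores = {}
    for name, vec in (("count", CountVectorizer()), ("tfidf", TfidfVectorizer())):
        X_tr = vec.fit_transform(train_texts)  # fit the vocabulary on training data only
        X_te = vec.transform(test_texts)
        preds = MultinomialNB().fit(X_tr, train_labels).predict(X_te)
        scores[name] = float(accuracy_score(test_labels, preds))
    return scores

# Toy data only; replace with the real split to reproduce the comparison.
toy_train = ["win free prize now", "free cash prize",
             "see you at lunch", "call me at home"]
toy_train_labels = ["spam", "spam", "ham", "ham"]
toy_test = ["free prize", "lunch at home"]
toy_test_labels = ["spam", "ham"]

scores = compare_vectorizers(toy_train, toy_train_labels, toy_test, toy_test_labels)
print(scores)
```

With the real data, the arguments would be `x_train`, `y_train`, `x_test`, and `y_test` from the split. Keeping the same split and classifier for both runs ensures the vectorizer is the only thing that changes.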