1.训练文件的配置

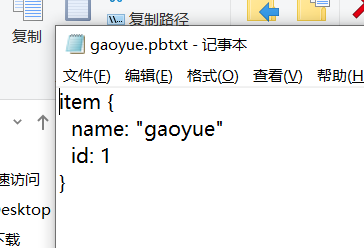

将生成的csv和record文件都放在新建的mydata文件夹下,并打开object_detection文件夹下的data文件夹,复制一个后缀为.pbtxt的文件到mtdata文件夹下,并重命名为gaoyue.pbtxt

用记事本打开该文件,因为我只分了一类,所以将其他内容删除,只剩下这一个类别,并将name改为gaoyue。

这时我们拥有的所有文件如下图所示。

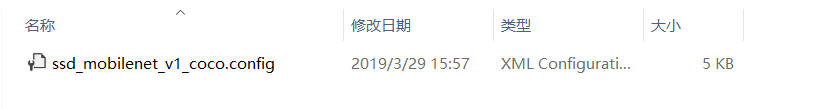

我们在object_detection文件夹下新建一个training文件夹,在里面新建一个记事本文件并命名为 ssd_mobilenet_v1_coco.config

打开,输入以下代码,按右边注释进行修改

# SSD with Mobilenet v1 configuration for MSCOCO Dataset. # Users should configure the fine_tune_checkpoint field in the train config as # well as the label_map_path and input_path fields in the train_input_reader and # eval_input_reader. Search for "PATH_TO_BE_CONFIGURED" to find the fields that # should be configured. model { ssd { num_classes: 1 # 你类别的数量,我这里只分了一类 box_coder { faster_rcnn_box_coder { y_scale: 10.0 x_scale: 10.0 height_scale: 5.0 width_scale: 5.0 } } matcher { argmax_matcher { matched_threshold: 0.5 unmatched_threshold: 0.5 ignore_thresholds: false negatives_lower_than_unmatched: true force_match_for_each_row: true } } similarity_calculator { iou_similarity { } } anchor_generator { ssd_anchor_generator { num_layers: 6 min_scale: 0.2 max_scale: 0.95 aspect_ratios: 1.0 aspect_ratios: 2.0 aspect_ratios: 0.5 aspect_ratios: 3.0 aspect_ratios: 0.3333 } } image_resizer { fixed_shape_resizer { height: 300 300 } } box_predictor { convolutional_box_predictor { min_depth: 0 max_depth: 0 num_layers_before_predictor: 0 use_dropout: false dropout_keep_probability: 0.8 kernel_size: 1 box_code_size: 4 apply_sigmoid_to_scores: false conv_hyperparams { activation: RELU_6, regularizer { l2_regularizer { weight: 0.00004 } } initializer { truncated_normal_initializer { stddev: 0.03 mean: 0.0 } } batch_norm { train: true, scale: true, center: true, decay: 0.9997, epsilon: 0.001, } } } } feature_extractor { type: 'ssd_mobilenet_v1' min_depth: 16 depth_multiplier: 1.0 conv_hyperparams { activation: RELU_6, regularizer { l2_regularizer { weight: 0.00004 } } initializer { truncated_normal_initializer { stddev: 0.03 mean: 0.0 } } batch_norm { train: true, scale: true, center: true, decay: 0.9997, epsilon: 0.001, } } } loss { classification_loss { weighted_sigmoid { } } localization_loss { weighted_smooth_l1 { } } hard_example_miner { num_hard_examples: 3000 iou_threshold: 0.99 loss_type: CLASSIFICATION max_negatives_per_positive: 3 min_negatives_per_image: 0 } classification_weight: 1.0 localization_weight: 1.0 } normalize_loss_by_num_matches: true post_processing { batch_non_max_suppression { score_threshold: 1e-8 iou_threshold: 0.6 max_detections_per_class: 100 max_total_detections: 100 } score_converter: SIGMOID } } } train_config: { batch_size: 16 # 电脑好的话可以调高点,我电脑比较渣就调成16了 optimizer { rms_prop_optimizer: { learning_rate: { exponential_decay_learning_rate { initial_learning_rate: 0.004 decay_steps: 800720 decay_factor: 0.95 } } momentum_optimizer_value: 0.9 decay: 0.9 epsilon: 1.0 } } # Note: The below line limits the training process to 200K steps, which we # empirically found to be sufficient enough to train the pets dataset. This # effectively bypasses the learning rate schedule (the learning rate will # never decay). Remove the below line to train indefinitely. num_steps: 200000 # 训练的steps data_augmentation_options { random_horizontal_flip { } } data_augmentation_options { ssd_random_crop { } } } train_input_reader: { tf_record_input_reader { input_path: "mydata/gaoyue_train.record" # 训练的tfrrecord文件路径 } label_map_path: "mydata/gaoyue.pbtxt" } eval_config: { num_examples: 8000 # 验证集的数量 # Note: The below line limits the evaluation process to 10 evaluations. # Remove the below line to evaluate indefinitely. max_evals: 10 } eval_input_reader: { tf_record_input_reader { input_path: "mydata/gaoyue_test.record" # 验证的tfrrecord文件路径 } label_map_path: "mydata/gaoyue.pbtxt" shuffle: false num_readers: 1 }

新建后的文件显示如下。

这时,我们训练的准备工作就做好了。

2.训练模型

在object_detection文件夹下打开Anaconda Prompt,输入命令

python model_main.py --pipeline_config_path=training/ssd_mobilenet_v1_coco.config --model_dir=training --alsologtostderr

在训练过程中如果出现no model named pycocotools的问题的话,请参考这个网址(http://www.mamicode.com/info-detail-2660241.html)解决。亲测有效

即:

(1)从https://github.com/pdollar/coco.git 下载源码,解压至全英文路径下。

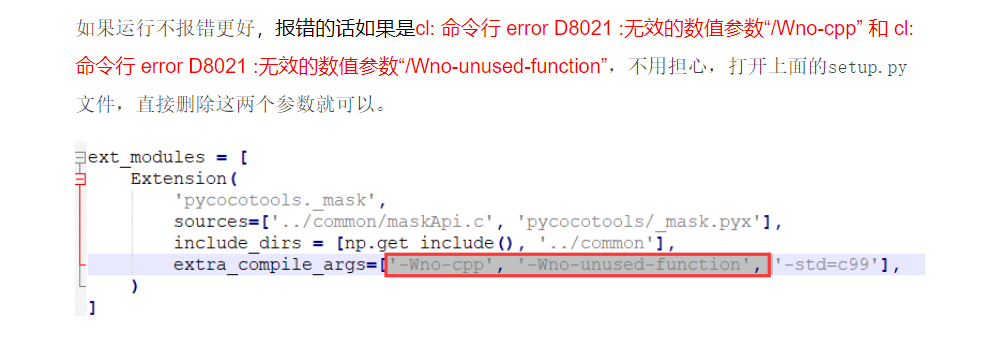

(2)使用cmd进入解压后的cocoapi-master/PythonAPI路径下,输入python setup.py build_ext --inplace。如果这一步有报错,请打开set_up.py文件,将其中这两个参数删除。

即:

(3)上一步执行没问题之后,继续在cmd窗口运行命令:python setup.py build_ext install

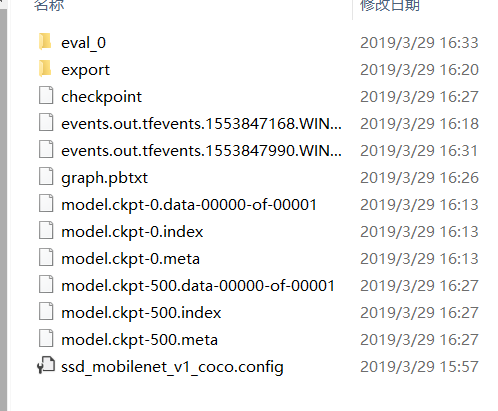

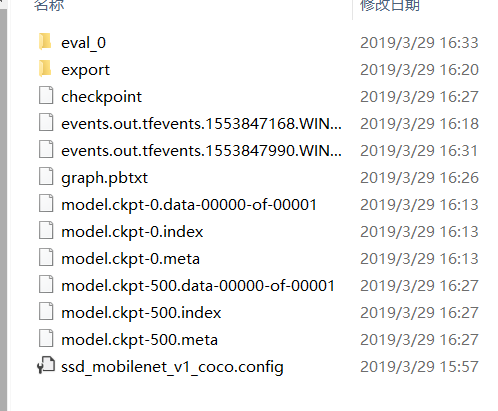

训练完成后,training文件夹下是这样的情况

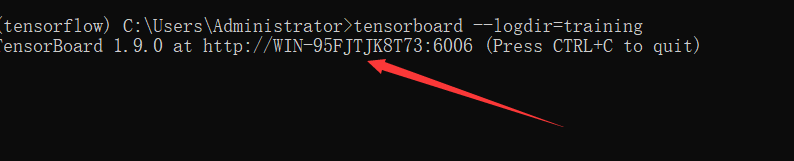

(如果想观察训练过程中参数的变化以及网络的话,可以打开新的一个Anaconda Prompt cd到object_detection文件夹下

输入命令:tensorboard --logdir=training),复制出现的网址即可。如图所示

如果显示不出来的话,新建网页在地址栏输入http://localhost:6006/(后面的6006是我的端口号,根据你自己的输入)

3.生成模型

定位到object_detection目录下,打开Anaconda Promp输入命令

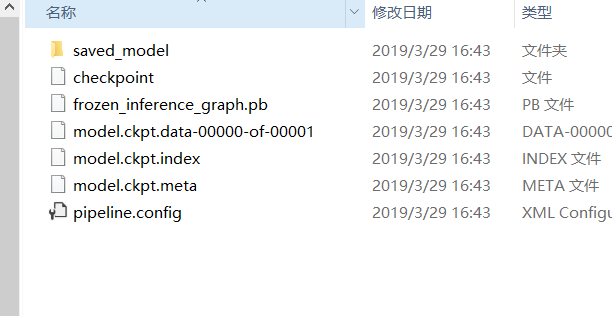

python export_inference_graph.py --input_type image_tensor --pipeline_config_path training/ssd_mobilenet_v1_coco.config --trained_checkpoint_prefix training/model.ckpt-500 --output_directory gaoyue_detection

(注意这两处标红的地方,1. model.ckpt-500是指你训练的轮数的文件,这里因为我只训练了500轮,所以改成了500(如下图中的500)

2. output_directory是输出模型的路径,最好是新建一个文件夹来存放模型,我新建了一个名为gaoyue_detection的模型)

命令执行完成后,打开gaoyue_detection文件夹,里面的内容如图所示

表示执行成功,这样,我们用自己数据集训练的目标检测模型就做好了

下一节会详细说我们自己模型的验证