https://www.zhihu.com/question/64470274

http://colah.github.io/posts/2015-08-Understanding-LSTMs/

https://jasdeep06.github.io/posts/Understanding-LSTM-in-Tensorflow-MNIST/

https://stackoverflow.com/questions/37901047/what-is-num-units-in-tensorflow-basiclstmcell#

http://keras-cn.readthedocs.io/en/latest/layers/recurrent_layer/

keras.layers.recurrent.LSTM(units, activation='tanh', recurrent_activation='hard_sigmoid', use_bias=True, kernel_initializer='glorot_uniform', recurrent_initializer='orthogonal', bias_initializer='zeros', unit_forget_bias=True, kernel_regularizer=None, recurrent_regularizer=None, bias_regularizer=None, activity_regularizer=None, kernel_constraint=None, recurrent_constraint=None, bias_constraint=None, dropout=0.0, recurrent_dropout=0.0)

model = Sequential()

model.add(LSTM(32, return_sequences=True, stateful=True,batch_input_shape=(batch_size, timesteps, data_dim)))

model.add(LSTM(32, return_sequences=True, stateful=True))

model.add(LSTM(32, stateful=True))

model.add(Dense(num_classes, activation='softmax'))

类似上述代码中,加重黑色数字的含义。

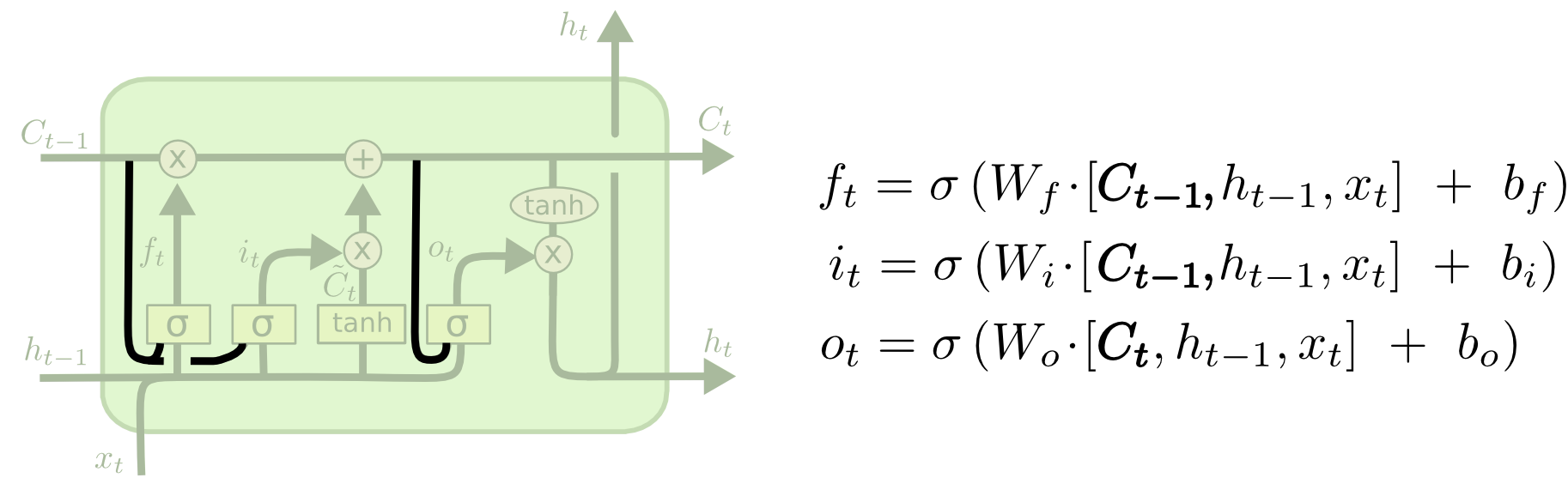

下图是加了peephole的lstm,用来示例,lstm则需要去掉Ct-1和Ct项。可以看到LSTM里面有几个参数矩阵,Wf、Wi、Wo都是参数矩阵。我的理解,上面的数字32就是这个参数矩阵的组数。比如初始一组参数矩阵,Wf、Wi、Wo,计算一个lstm值,然后再给一组参数矩阵Wf1、Wi1、Wo1,可以再算一个lstm值,共32组。参考的博客里第一个也是类似的解释。