1.选一个自己感兴趣的主题或网站。(所有同学不能雷同)

爬取京东里售卖的《大国大城》的评论

2.用python 编写爬虫程序,从网络上爬取相关主题的数据。

import pandas as pd import numpy as np import time import re,os import requests import jieba.analyse from wordcloud import WordCloud import matplotlib.pyplot as plt import json import matplotlib.pyplot as plt %matplotlib inline ##爬取一页评论 def get_one_page(Page): url1='https://sclub.jd.com/comment/productPageComments.action?productId=11992230&score=0&sortType=3&page=' url2='&pageSize=10&isShadowSku=0&callback=fetchJSON_comment98vv1915' url = url1+str(Page)+url2 print (url) html = requests.get(url).content time.sleep(0.2) html = html.decode('gbk','ignore') return html ##清洗一页评论,提取相关数据 def clean_one_page(html): html1 = re.findall(r'fetchJSON_comment98vv1915((.*?));',html) df_one_page=[] for i in json.loads(html1[0])['comments']: cid=i['id'] print (i['id']) guid= i['guid'] content=i['content'] creationTime=i['creationTime'] replyCount=i['replyCount'] score=i['score'] usefulVoteCount=i['usefulVoteCount'] uselessVoteCount=i['uselessVoteCount'] viewCount=i['viewCount'] nickname=i['nickname'] userClient=i['userClient'] userLevelName=i['userLevelName'] isMobile=i['isMobile'] userClientShow=i['userClientShow'] ##有些评论是没有图片的,默认为0 try: imageCount=i['imageCount'] except KeyError : imageCount=0 df_one_comment = { 'cid':cid, 'cguid':guid, 'ccontent':content, 'creationTime':creationTime, 'replyCount':replyCount, 'score':score, 'usefulVoteCount':usefulVoteCount, 'uselessVoteCount':uselessVoteCount, 'viewCount':viewCount, 'nickname':nickname, 'userClient':userClient, 'userLevelName':userLevelName, 'isMobile':isMobile, 'userClientShow':userClientShow, 'imageCount':imageCount} df_one_page.append(df_one_comment) return df_one_page df_ALL=pd.DataFrame() ##暂时爬取70页 for num in range(70): html=get_one_page(num) df_page = clean_one_page(html) pagenum = num+1 df_ALL=df_ALL.append(pd.DataFrame(df_page,index=range(100*pagenum,100*pagenum+len(df_page))))

##进行分词

contents = ''.join(df_ALL['ccontent'])

contents_rank = jieba.analyse.extract_tags(contents,topK=100,withWeight=True)

#frequencies : array of tuples。A tuple contains the word and its frequency.

key_words=[]

for i in contents_rank:

key_words.append((i[0],i[1]))

print (key_words)

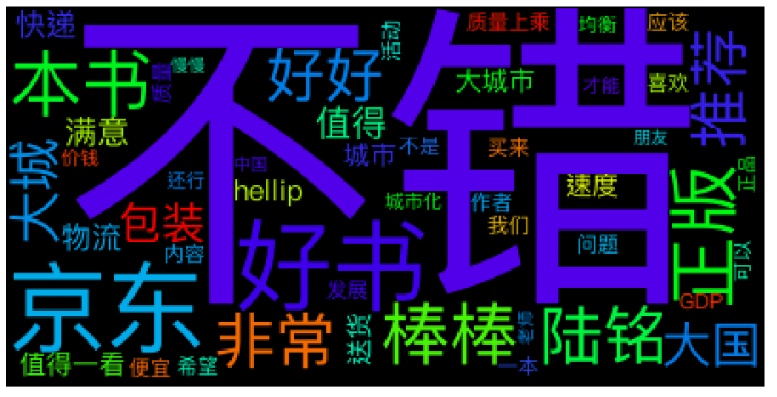

3.对爬了的数据进行文本分析,生成词云。

plt.figure(figsize=(16,32))

wc=WordCloud(font_path='/System/Library/Fonts/PingFang.ttc'

,background_color='Black'

,max_words=50)

wc.generate_from_frequencies(key_words)

plt.imshow(wc)

plt.axis('off')

plt.show()

4.对文本分析结果进行解释说明。

这本书的评价还是很不错的,值得购买,售后态度和物流也是挺不错的

5.写一篇完整的博客,描述上述实现过程、遇到的问题及解决办法、数据分析思想及结论。

6.最后提交爬取的全部数据、爬虫及数据分析源代码。