While reading the Attention U-Net source code, I noticed that keras.layers provides both Multiply and multiply.

They produce the same result, but the syntax differs slightly.

# Layer style: instantiate the layer, then call it on a list of tensors

Multiply()([m1, m2])

# Functional style: a single function call

multiply([m1, m2])

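Both also support broadcasting, but dimension 0 must be the same size in every input (think of it as the number of samples, which stays fixed). Dimension 1 may differ, provided one of the inputs has size 1 along that dimension so it can be stretched to match the other.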
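The shape rule can be checked without building a model. The following is a minimal sketch that uses NumPy's `np.broadcast_shapes` as a stand-in for the layer's shape check. That NumPy's rules match the Keras merge layers for this case is an assumption, and the second pair of shapes is made up for illustration.

```python
import numpy as np

# Shapes from the example in this post: axis 0 matches, axis 1 is 1 vs. 4.
print(np.broadcast_shapes((5, 1, 5), (5, 4, 5)))  # (5, 4, 5)

# Illustrative mismatch: axis 1 is 2 vs. 4 and neither side is 1,
# so the shapes cannot be broadcast.
try:
    np.broadcast_shapes((5, 2, 5), (5, 4, 5))
    compatible = True
except ValueError:
    compatible = False
print(compatible)  # False
```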

Example:

from keras.layers import Multiply, multiply, Add, add

import numpy as np

a = np.arange(25).reshape(5, 1, 5)

a

[out]

array([[[ 0, 1, 2, 3, 4]],

[[ 5, 6, 7, 8, 9]],

[[10, 11, 12, 13, 14]],

[[15, 16, 17, 18, 19]],

[[20, 21, 22, 23, 24]]])

b = np.arange(50, 150).reshape(5, 4, 5)

b

[out]

array([[[ 50, 51, 52, 53, 54],

[ 55, 56, 57, 58, 59],

[ 60, 61, 62, 63, 64],

[ 65, 66, 67, 68, 69]],

[[ 70, 71, 72, 73, 74],

[ 75, 76, 77, 78, 79],

[ 80, 81, 82, 83, 84],

[ 85, 86, 87, 88, 89]],

[[ 90, 91, 92, 93, 94],

[ 95, 96, 97, 98, 99],

[100, 101, 102, 103, 104],

[105, 106, 107, 108, 109]],

[[110, 111, 112, 113, 114],

[115, 116, 117, 118, 119],

[120, 121, 122, 123, 124],

[125, 126, 127, 128, 129]],

[[130, 131, 132, 133, 134],

[135, 136, 137, 138, 139],

[140, 141, 142, 143, 144],

[145, 146, 147, 148, 149]]])

Multiply()([a, b])

# Multiply()([b, a])

# These variants all give the same result

# multiply([a, b])

# multiply([b, a])

[out]

<tf.Tensor: shape=(5, 4, 5), dtype=int64, numpy=

array([[[ 0, 51, 104, 159, 216],

[ 0, 56, 114, 174, 236],

[ 0, 61, 124, 189, 256],

[ 0, 66, 134, 204, 276]],

[[ 350, 426, 504, 584, 666],

[ 375, 456, 539, 624, 711],

[ 400, 486, 574, 664, 756],

[ 425, 516, 609, 704, 801]],

[[ 900, 1001, 1104, 1209, 1316],

[ 950, 1056, 1164, 1274, 1386],

[1000, 1111, 1224, 1339, 1456],

[1050, 1166, 1284, 1404, 1526]],

[[1650, 1776, 1904, 2034, 2166],

[1725, 1856, 1989, 2124, 2261],

[1800, 1936, 2074, 2214, 2356],

[1875, 2016, 2159, 2304, 2451]],

[[2600, 2751, 2904, 3059, 3216],

[2700, 2856, 3014, 3174, 3336],

[2800, 2961, 3124, 3289, 3456],

[2900, 3066, 3234, 3404, 3576]]])>

Add()([a, b])

# Add()([b, a])

# These variants all give the same result

# add([a, b])

# add([b, a])

[out]

<tf.Tensor: shape=(5, 4, 5), dtype=int64, numpy=

array([[[ 50, 52, 54, 56, 58],

[ 55, 57, 59, 61, 63],

[ 60, 62, 64, 66, 68],

[ 65, 67, 69, 71, 73]],

[[ 75, 77, 79, 81, 83],

[ 80, 82, 84, 86, 88],

[ 85, 87, 89, 91, 93],

[ 90, 92, 94, 96, 98]],

[[100, 102, 104, 106, 108],

[105, 107, 109, 111, 113],

[110, 112, 114, 116, 118],

[115, 117, 119, 121, 123]],

[[125, 127, 129, 131, 133],

[130, 132, 134, 136, 138],

[135, 137, 139, 141, 143],

[140, 142, 144, 146, 148]],

[[150, 152, 154, 156, 158],

[155, 157, 159, 161, 163],

[160, 162, 164, 166, 168],

[165, 167, 169, 171, 173]]])>
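As a sanity check, the two outputs above can be reproduced with plain NumPy broadcasting, assuming the Keras layers perform ordinary element-wise `*` and `+` here. The names `product` and `total` are introduced only for this sketch.

```python
import numpy as np

a = np.arange(25).reshape(5, 1, 5)
b = np.arange(50, 150).reshape(5, 4, 5)

# a has a single row per sample; it is reused for each of b's 4 rows.
product = a * b
total = a + b

print(product.shape, total.shape)  # (5, 4, 5) (5, 4, 5)
print(product[1, 0])               # [350 426 504 584 666]
print(total[1, 0])                 # [75 77 79 81 83]

# Argument order does not matter for either operation.
print(np.array_equal(b * a, product), np.array_equal(b + a, total))  # True True
```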

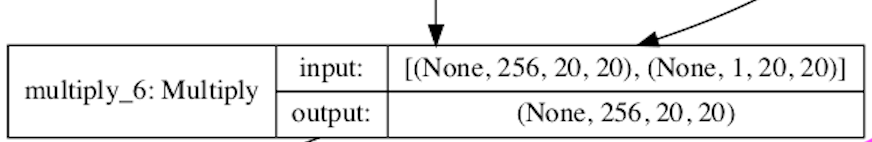

For Attention U-Net, the implementation diagram is read as follows:

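- First input: equivalent to a 20×20 image with 256 layers (channels)

- Second input: equivalent to a 20×20 image with 1 layer, where each pixel value is a weight

- To multiply them, the second input must be multiplied with every layer of the first input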
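The same broadcasting idea applies to this attention step. Below is a rough NumPy sketch, assuming a channels-last layout and a batch of one. The names `features`, `attention`, and `gated` and the random values are made up for illustration and are not taken from the Attention U-Net code.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical feature map: batch of 1, 20x20 pixels, 256 channels.
features = rng.random((1, 20, 20, 256))
# Hypothetical attention map: one weight per pixel, single channel.
attention = rng.random((1, 20, 20, 1))

# The size-1 channel axis is broadcast across all 256 channels.
gated = features * attention
print(gated.shape)  # (1, 20, 20, 256)

# Every channel is scaled by the same per-pixel weight.
same = np.allclose(gated[..., 7], features[..., 7] * attention[..., 0])
print(same)  # True
```

In Keras terms this would correspond to passing the two tensors to Multiply or multiply, with the single-channel weight map stretched along the last axis.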