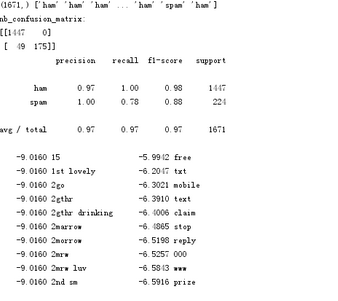

    import csv

    import nltk
    from nltk.corpus import stopwords
    from nltk.stem import WordNetLemmatizer
    from sklearn.feature_extraction.text import TfidfVectorizer
    from sklearn.metrics import classification_report, confusion_matrix
    from sklearn.model_selection import train_test_split
    from sklearn.naive_bayes import MultinomialNB


    def preprocessing(text):
        """Tokenize, lowercase, drop stop words and short tokens, then lemmatize."""
        tokens = [word.lower()
                  for sent in nltk.sent_tokenize(text)
                  for word in nltk.word_tokenize(sent)]
        # Lowercasing happens first so that "The" is filtered just like "the".
        stops = set(stopwords.words('english'))
        tokens = [token for token in tokens if token not in stops and len(token) >= 3]
        lmtzr = WordNetLemmatizer()
        return ' '.join(lmtzr.lemmatize(token) for token in tokens)


    def read_data():
        """Load the SMS file, preprocess each message, and split into train/test sets."""
        # Resources used by the tokenizer, the stop-word list, and the lemmatizer.
        # (Recent NLTK releases may additionally need 'punkt_tab'.)
        for resource in ('punkt', 'stopwords', 'wordnet'):
            nltk.download(resource)

        sms_data = []
        sms_label = []
        with open(r'd:/SMSSpamCollectionjsn.txt', 'r', encoding='utf-8') as sms:
            # Assumes one "label<TAB>message" record per line; QUOTE_NONE keeps
            # quotation marks inside messages as literal text.
            csv_reader = csv.reader(sms, delimiter='\t', quoting=csv.QUOTE_NONE)
            for line in csv_reader:
                sms_label.append(line[0])
                sms_data.append(preprocessing(line[1]))

        x_train, x_test, y_train, y_test = train_test_split(
            sms_data, sms_label, test_size=0.3, random_state=0, stratify=sms_label)
        print(len(sms_data), len(x_train), len(x_test))
        return sms_data, sms_label, x_train, x_test, y_train, y_test


    def xiangliang(x_train, x_test):
        """Vectorize the text as TF-IDF features (fit on the training set only)."""
        vectorizer = TfidfVectorizer(min_df=2, ngram_range=(1, 2),
                                     stop_words='english',
                                     strip_accents='unicode')  # , norm='l2'
        x_train = vectorizer.fit_transform(x_train)
        x_test = vectorizer.transform(x_test)
        return x_train, x_test, vectorizer


    def beiNB(x_train, y_train, x_test):
        """Train a multinomial Naive Bayes classifier and predict the test set."""
        clf = MultinomialNB().fit(x_train, y_train)
        y_nb_pred = clf.predict(x_test)
        return y_nb_pred, clf


    def result(vectorizer, clf, y_test, y_nb_pred):
        """Print the confusion matrix, the classification report, and extreme features."""
        print(y_nb_pred.shape, y_nb_pred)
        print('nb_confusion_matrix:')
        print(confusion_matrix(y_test, y_nb_pred))
        print(classification_report(y_test, y_nb_pred))

        # get_feature_names() and MultinomialNB.coef_ are gone from recent
        # scikit-learn releases; these are the current equivalents.
        feature_names = vectorizer.get_feature_names_out()
        # Row 1 holds the log-probabilities for the second entry of clf.classes_
        # (expected to be 'spam' when the labels are 'ham' and 'spam').
        coefs = clf.feature_log_prob_[1]
        coefs_with_fns = sorted(zip(coefs, feature_names))
        n = 10
        # Pair the n lowest-scoring features with the n highest-scoring ones.
        top = zip(coefs_with_fns[:n], coefs_with_fns[:-(n + 1):-1])
        for (coef_1, fn_1), (coef_2, fn_2) in top:
            print(' %.4f %-15s %.4f %-15s' % (coef_1, fn_1, coef_2, fn_2))


    if __name__ == '__main__':
        sms_data, sms_label, x_train, x_test, y_train, y_test = read_data()
        X_train, X_test, vectorizer = xiangliang(x_train, x_test)
        y_nb_pred, clf = beiNB(X_train, y_train, X_test)
        result(vectorizer, clf, y_test, y_nb_pred)
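
The same TF-IDF plus multinomial Naive Bayes combination can also be tried on unseen messages. The following is only a minimal sketch and not part of the script above: the six training messages are invented for illustration, and chaining the two steps with scikit-learn's `make_pipeline` is an assumption made for brevity (the script keeps the vectorizer and classifier separate).

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline

# Invented toy messages, used only for illustration (not taken from the SMS file).
train_texts = [
    'win free prize now',
    'free cash prize call now',
    'claim free prize',
    'see you at lunch',
    'are you home for lunch',
    'meet you at home',
]
train_labels = ['spam', 'spam', 'spam', 'ham', 'ham', 'ham']

# The pipeline applies TF-IDF first, then fits the Naive Bayes classifier.
model = make_pipeline(TfidfVectorizer(), MultinomialNB())
model.fit(train_texts, train_labels)

# New messages go through the same vectorizer before prediction.
new_messages = ['free prize now', 'see you at lunch']
predictions = model.predict(new_messages)
for message, label in zip(new_messages, predictions):
    print(label, '<-', message)
```

With the real dataset, the fitted `vectorizer` and `clf` returned by `xiangliang` and `beiNB` could be used in the same way: call `vectorizer.transform` on the preprocessed new text, then `clf.predict` on the result.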