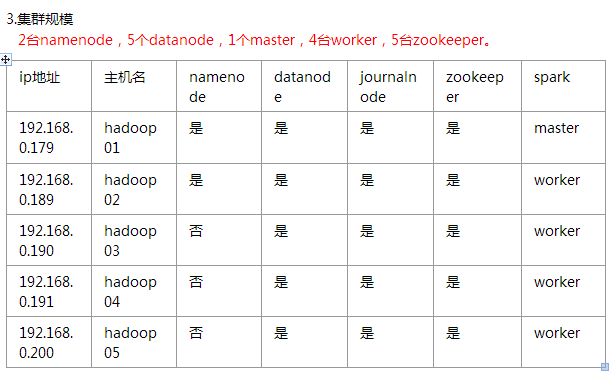

I. Environment

# Add to /etc/security/limits.conf

* soft nproc 102400

root soft nproc unlimited

* soft nofile 102400

* hard nofile 102400

V. Install Hadoop

# Configure hadoop-env.sh

export JAVA_HOME=/home/hadoop/jdk1.8.0_101

# Edit core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://base</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/data/hadoopdata/tmp</value>

</property>

<!-- ZooKeeper quorum addresses -->

<property>

<name>ha.zookeeper.quorum</name>

<value>n1:2181,n2:2181,n3:2181,n4:2181,n5:2181</value>

</property>

<property>

<name>fs.hdfs.impl</name>

<value>org.apache.hadoop.hdfs.DistributedFileSystem</value>

<description>The FileSystem for hdfs: uris.</description>

</property>

</configuration>
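After saving core-site.xml, it can help to confirm that Hadoop actually resolves the values you expect. The sketch below uses `hdfs getconf -confKey`, which prints the effective value of a property. The helper name `check_conf_key` is made up for illustration, and the sketch assumes the `hdfs` command is on the PATH of the current user.

```shell
# Hypothetical helper: compare the effective value of a Hadoop property
# with the value you expect. Assumes `hdfs` is on the PATH.
check_conf_key() {
    # Arguments: property name, expected value
    actual="$(hdfs getconf -confKey "$1" 2>/dev/null || true)"
    if [ "${actual}" = "$2" ]; then
        echo "OK $1=${actual}"
    else
        echo "MISMATCH $1=${actual:-<unset>} (expected $2)"
        return 1
    fi
}

# Usage:
#   check_conf_key fs.defaultFS hdfs://base
#   check_conf_key hadoop.tmp.dir /home/hadoop/data/hadoopdata/tmp
```

The function returns a non-zero status on a mismatch, so it can be chained with `&&` in a larger check script.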

# Edit hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<!-- Number of replicas kept for each block -->

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///home/hadoop/data/hadoopdata/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/hadoop/data/hadoopdata/disk1</value>

<final>true</final>

</property>

<property>

<name>dfs.nameservices</name>

<value>base</value>

</property>

<property>

<name>dfs.ha.namenodes.base</name>

<value>n1,n2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.base.n1</name>

<value>n1:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.base.n2</name>

<value>n2:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.base.n1</name>

<value>n1:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.base.n2</name>

<value>n2:50070</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://n1:8485;n2:8485;n3:8485;n4:8485;n5:8485/base</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.base</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/hadoop/.ssh/id_rsa</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/home/hadoop/app/hadoop-2.7.3/journal</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>n1:2181,n2:2181,n3:2181,n4:2181,n5:2181</value>

</property>

<property>

<!-- ZooKeeper session timeout, in milliseconds -->

<name>ha.zookeeper.session-timeout.ms</name>

<value>2000</value>

</property>

<property>

<name>fs.hdfs.impl.disable.cache</name>

<value>true</value>

</property>

</configuration>

# Edit mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.map.memory.mb</name>

<value>8000</value>

</property>

<property>

<name>mapreduce.reduce.memory.mb</name>

<value>8000</value>

</property>

<property>

<name>mapreduce.map.java.opts</name>

<value>-Xmx8000m</value>

</property>

<property>

<name>mapreduce.reduce.java.opts</name>

<value>-Xmx8000m</value>

</property>

<property>

<name>mapreduce.task.timeout</name>

<value>1800000</value> <!-- 30 minutes -->

</property>

</configuration>

# Edit yarn-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>2000</value>

</property>

<property>

<name>yarn.resourcemanager.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<!-- Enable ResourceManager high availability -->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<!-- Cluster id of the ResourceManagers -->

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>yrc</value>

</property>

<!-- Logical ids of the ResourceManagers -->

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!-- Hostname of each ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>n1</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>n2</value>

</property>

<!-- Id of the ResourceManager running on this host; set it per node (rm1 on n1, rm2 on n2) -->

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm2</value>

</property>

<!-- ZooKeeper cluster addresses -->

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>n1:2181,n2:2181,n3:2181,n4:2181,n5:2181</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>86400</value>

</property>

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>3</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>57000</value>

</property>

<property>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>4000</value>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>10000</value>

</property>

</configuration>
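The memory settings in mapred-site.xml and yarn-site.xml interact, so a quick calculation shows what they mean per node. The sketch below only does integer arithmetic on the values from the configuration; the function names are made up for illustration. The 80% heap figure is a common rule of thumb and not something the configuration above uses (it sets `-Xmx8000m`, equal to the container size, which leaves no headroom for JVM non-heap memory).

```shell
# Values copied from the configuration above (all in MB).
NODE_MEMORY_MB=57000   # yarn.nodemanager.resource.memory-mb
CONTAINER_MB=8000      # mapreduce.map.memory.mb and mapreduce.reduce.memory.mb
VMEM_RATIO=3           # yarn.nodemanager.vmem-pmem-ratio

# How many containers of a given size fit on one NodeManager.
containers_per_node() { echo $(( $1 / $2 )); }

# Memory that stays unused once the node is full of such containers.
leftover_mb() { echo $(( $1 % $2 )); }

# Virtual memory a container may use before the NodeManager kills it.
vmem_limit_mb() { echo $(( $1 * $2 )); }

# Rule-of-thumb heap size (80% of the container); an assumption, not from the source.
suggested_heap_mb() { echo $(( $1 * 80 / 100 )); }

echo "containers per node: $(containers_per_node "${NODE_MEMORY_MB}" "${CONTAINER_MB}")"
echo "unused memory (MB):  $(leftover_mb "${NODE_MEMORY_MB}" "${CONTAINER_MB}")"
echo "vmem limit (MB):     $(vmem_limit_mb "${CONTAINER_MB}" "${VMEM_RATIO}")"
echo "suggested -Xmx (MB): $(suggested_heap_mb "${CONTAINER_MB}")"
```

With these values each node can run 7 map or reduce containers at a time, and 1000 MB of the 57000 MB stays unallocated.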

# Edit the slaves file

n1

n2

n3

n4

n5

# scp the hadoop directory to the other hosts
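The copy step can be scripted with a small loop. This is a minimal sketch under several assumptions: the install directory is /home/hadoop/app/hadoop-2.7.3 (inferred from the JournalNode path in hdfs-site.xml), the remote user is `hadoop`, and passwordless SSH to the other hosts is already set up. The function name `distribute_hadoop` is made up for illustration.

```shell
# Hypothetical helper: copy the Hadoop directory from this host to the others.
# Assumptions: HADOOP_DIR matches your install path, the remote user is
# "hadoop", and passwordless SSH works.
HADOOP_DIR="${HADOOP_DIR:-/home/hadoop/app/hadoop-2.7.3}"

distribute_hadoop() {
    # Arguments: target hostnames, for example n2 n3 n4 n5
    for host in "$@"; do
        echo "copying ${HADOOP_DIR} to ${host}"
        # Copy into the same parent directory on the remote host
        scp -r -q "${HADOOP_DIR}" "hadoop@${host}:$(dirname "${HADOOP_DIR}")/" || return 1
    done
}

# Usage (run on the host where the configuration was edited):
#   distribute_hadoop n2 n3 n4 n5
```

Per-node settings such as `yarn.resourcemanager.ha.id` still have to be adjusted on the target host after the copy.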

VI. Startup

Start ZooKeeper on every node and check that each one reports a healthy status.
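One way to check the ensemble is to ask every node for its mode. `zkServer.sh status` prints a line such as `Mode: leader` or `Mode: follower` when the server is running. The sketch below assumes passwordless SSH and that `zkServer.sh` is on the PATH of the remote shell; the function name `zk_modes` is made up for illustration.

```shell
# Hypothetical helper: print the ZooKeeper mode reported by each node.
# Assumptions: passwordless SSH and zkServer.sh on the remote PATH.
zk_modes() {
    for host in "$@"; do
        # Keep only the text after "Mode: "; empty if the server is not running
        mode="$(ssh "${host}" zkServer.sh status 2>/dev/null | sed -n 's/^Mode: //p')"
        echo "${host}: ${mode:-not running}"
    done
}

# Usage:
#   zk_modes n1 n2 n3 n4 n5
```

A healthy five-node ensemble shows one leader and four followers.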

Start all JournalNodes:

hadoop-daemons.sh start journalnode

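# Run on hadoop01

hdfs namenode -format # format the NameNode

hdfs zkfc -formatZK # initialize the HA state in ZooKeeper

sbin/hadoop-daemon.sh start namenode # start the NameNode

# Run on the standby node

hdfs namenode -bootstrapStandby # copy the metadata from the primary NameNode to the standby

Stop all JournalNodes and NameNodes.

Start the HDFS and YARN daemons: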

./start-dfs.sh

./start-yarn.sh
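Once the HDFS daemons are up, `hdfs haadmin -getServiceState` reports whether a NameNode is `active` or `standby`. The sketch below queries the two NameNode ids from `dfs.ha.namenodes.base` and succeeds only if exactly one of them is active. The function name `nn_states` is made up for illustration, and `hdfs` is assumed to be on the PATH.

```shell
# Hypothetical helper: print the HA state of each NameNode id and
# return success only when exactly one of them is active.
nn_states() {
    active=0
    for id in "$@"; do
        state="$(hdfs haadmin -getServiceState "${id}" 2>/dev/null || true)"
        echo "${id}: ${state:-unknown}"
        if [ "${state}" = "active" ]; then
            active=$((active + 1))
        fi
    done
    [ "${active}" -eq 1 ]
}

# Usage:
#   nn_states n1 n2 || echo "expected exactly one active NameNode"
```

The same pattern works for the ResourceManagers with `yarn rmadmin -getServiceState rm1` and `rm2`.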

Start the ResourceManager manually on the standby node.

# First edit yarn-site.xml on the standby node

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm2</value> <!-- set this to the id of this node; otherwise startup fails with a "port already in use" error -->

</property>

./yarn-daemon.sh start resourcemanager

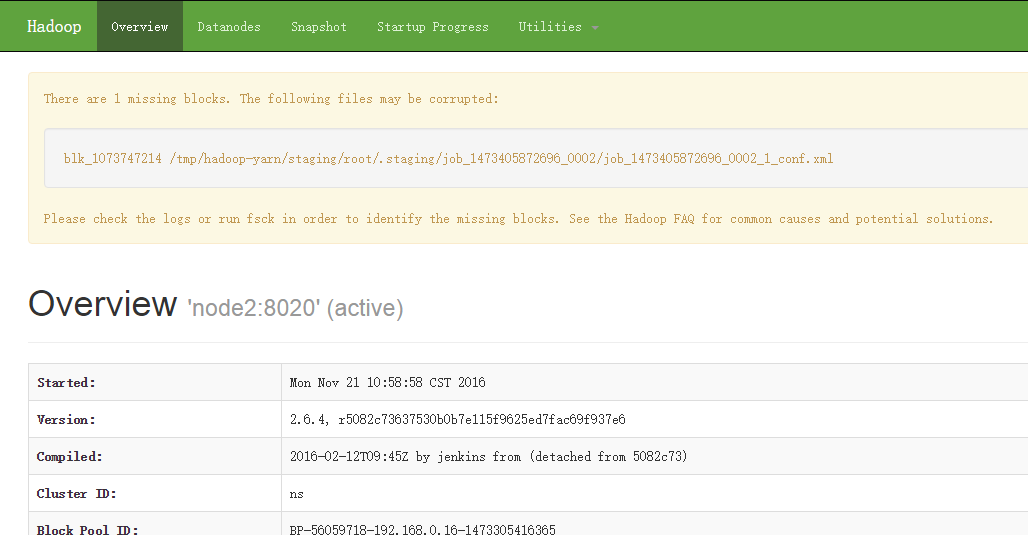

VII. Verification

[root@n1 ~]# jps

16016 Jps

24096 DFSZKFailoverController

19859 QuorumPeerMain

22987 NameNode

18878 DataNode

29397 ResourceManager
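Instead of reading the `jps` listing by eye, the check can be automated. The sketch below reads `jps` output on stdin and prints every expected daemon that is missing. The expected list mirrors the processes shown above for n1 (`jps` reports the DataNode process as `DataNode`); adjust it for hosts that run a different set. The names `EXPECTED_DAEMONS` and `missing_daemons` are made up for illustration.

```shell
# Daemons expected on this host; taken from the jps listing for n1.
EXPECTED_DAEMONS="DFSZKFailoverController QuorumPeerMain NameNode DataNode ResourceManager"

# Hypothetical helper: read `jps` output on stdin and print each
# expected daemon that does not appear in it.
missing_daemons() {
    # The second column of jps output is the process name
    running="$(awk '{print $2}')"
    for name in ${EXPECTED_DAEMONS}; do
        # -x matches whole lines only, so NameNode does not match SecondaryNameNode
        printf '%s\n' "${running}" | grep -qx "${name}" || echo "${name}"
    done
}

# Usage:
#   jps | missing_daemons
```

No output means every expected daemon is running.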

# Open in a browser

http://ip:50070
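The same check can be done from the command line when no browser is at hand. The sketch below requests the web UI of each NameNode on port 50070 (the port set in `dfs.namenode.http-address.*`) and prints the HTTP status code. It assumes `curl` is installed; the function name `ui_status` is made up for illustration.

```shell
# Hypothetical helper: report the HTTP status of each NameNode web UI.
# Assumption: curl is installed and the hosts resolve from this machine.
ui_status() {
    for host in "$@"; do
        # -m 5 caps each request at five seconds; curl reports 000 when it gets no response
        code="$(curl -s -o /dev/null -m 5 -w '%{http_code}' "http://${host}:50070/" || true)"
        echo "${host}: ${code:-000}"
    done
}

# Usage:
#   ui_status n1 n2
```

A 2xx or 3xx code means the web server answered; `000` means the connection failed.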