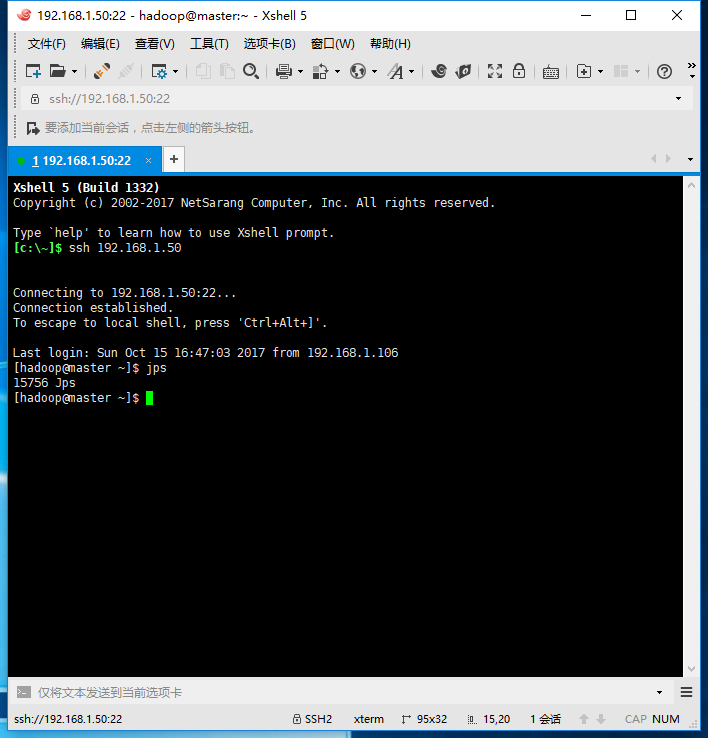

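Set up an IntelliJ IDEA development environment for developing, debugging, and packaging Hadoop programs against a remote cluster.

Environment

- IntelliJ IDEA 2017.2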

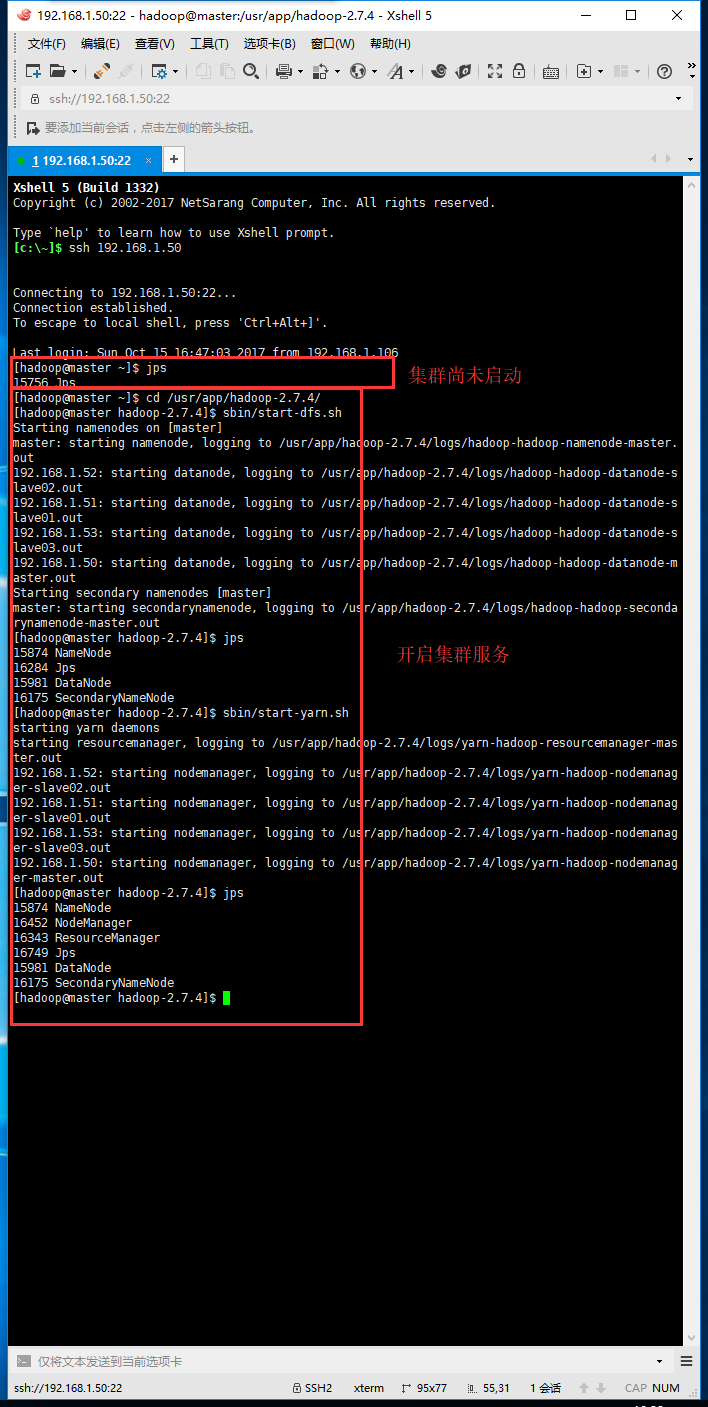

- A Hadoop cluster

Cluster setup steps: http://www.cnblogs.com/YellowstonePark/p/7750213.html

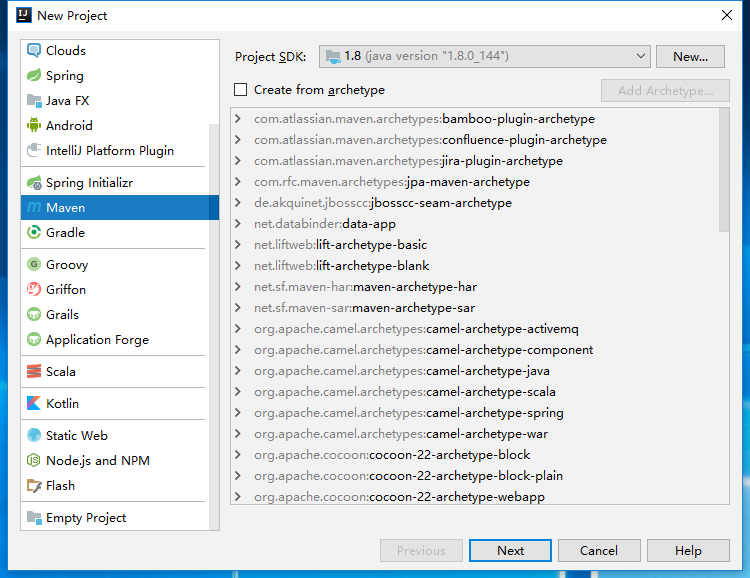

Create a new project

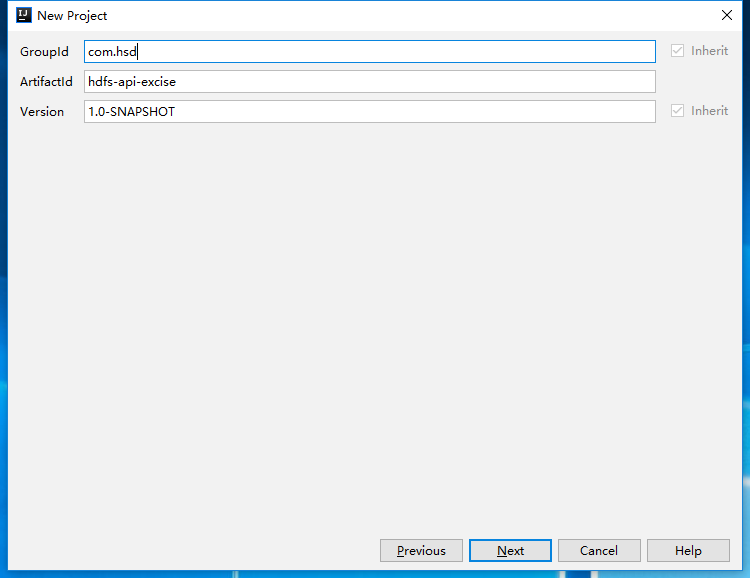

Enter the GroupId and ArtifactId

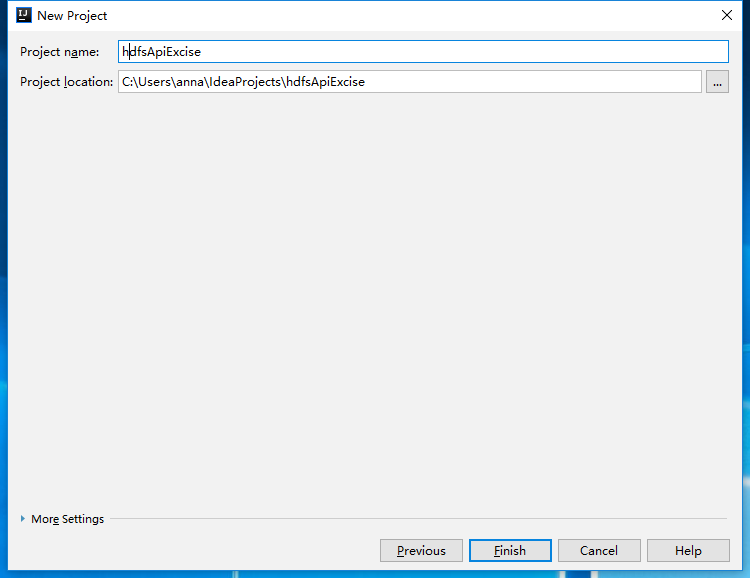

Enter the Project name and Project location

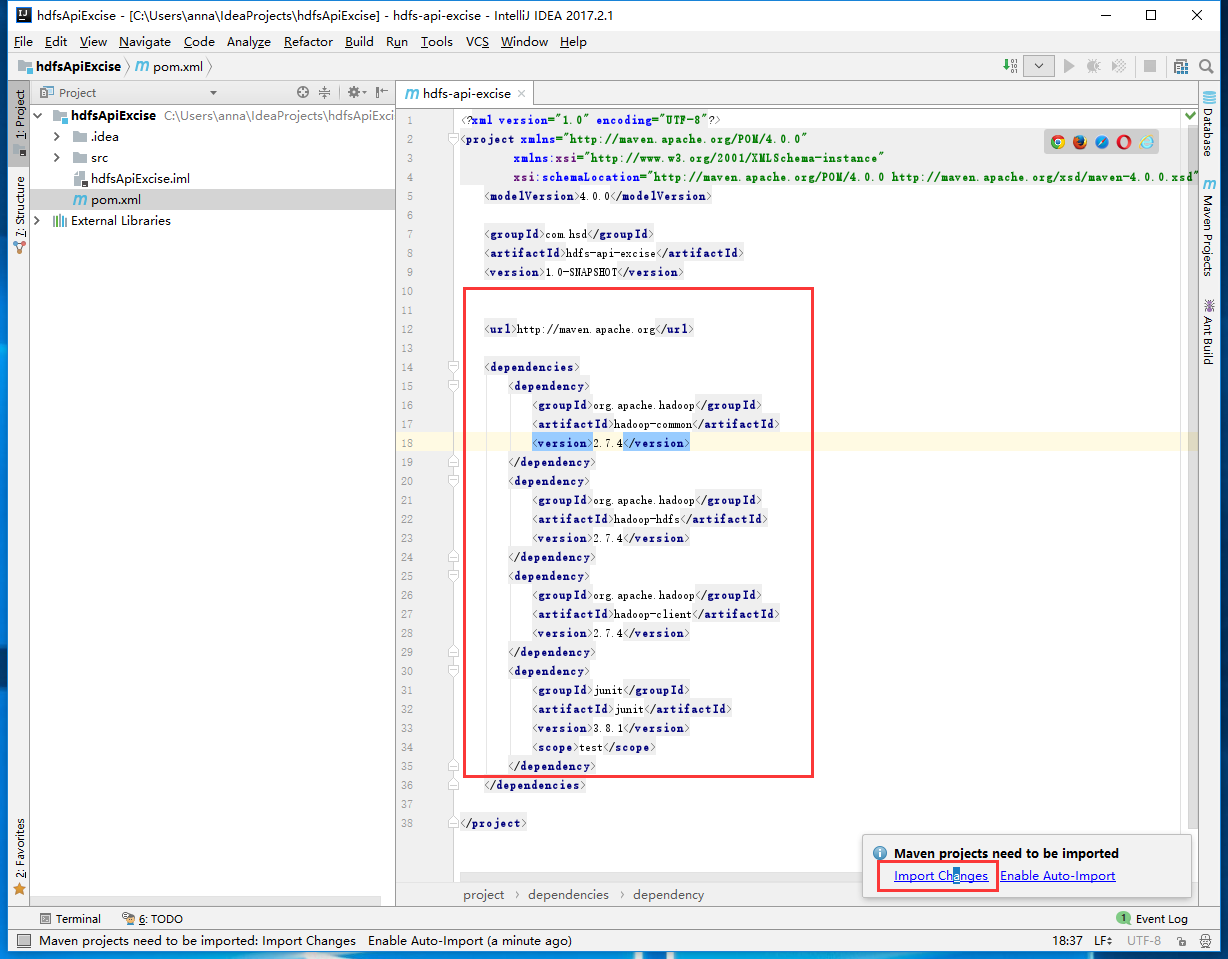

Edit pom.xml and add the dependencies

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>com.hadoopbook</groupId>
        <artifactId>hadoop-demo</artifactId>
        <version>1.0-SNAPSHOT</version>
        <packaging>jar</packaging>
        <url>http://maven.apache.org</url>

        <properties>
            <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        </properties>

        <dependencies>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-common</artifactId>
                <version>2.7.4</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-hdfs</artifactId>
                <version>2.7.4</version>
            </dependency>
            <dependency>
                <groupId>org.apache.hadoop</groupId>
                <artifactId>hadoop-client</artifactId>
                <version>2.7.4</version>
            </dependency>
            <dependency>
                <groupId>junit</groupId>
                <artifactId>junit</artifactId>
                <version>3.8.1</version>
                <scope>test</scope>
            </dependency>
        </dependencies>

        <build>
            <finalName>${project.artifactId}</finalName>
        </build>
    </project>

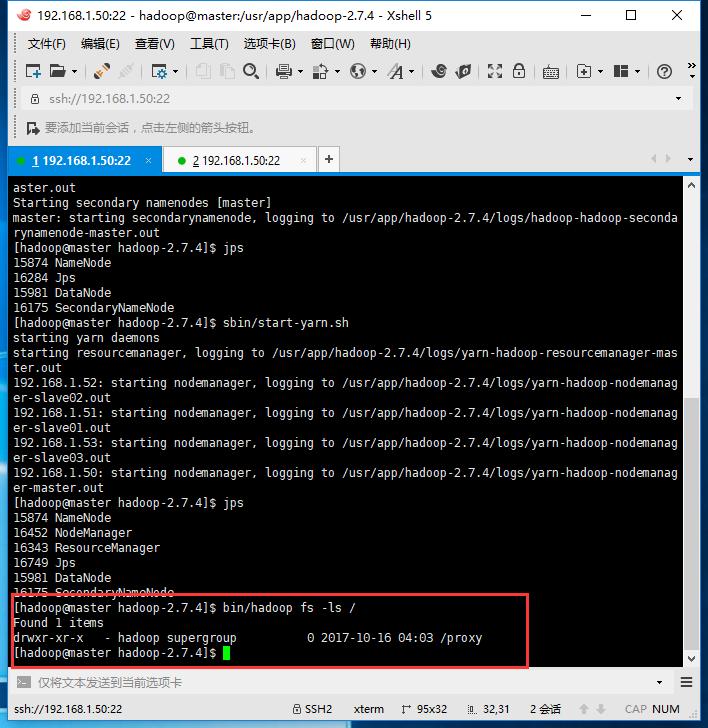

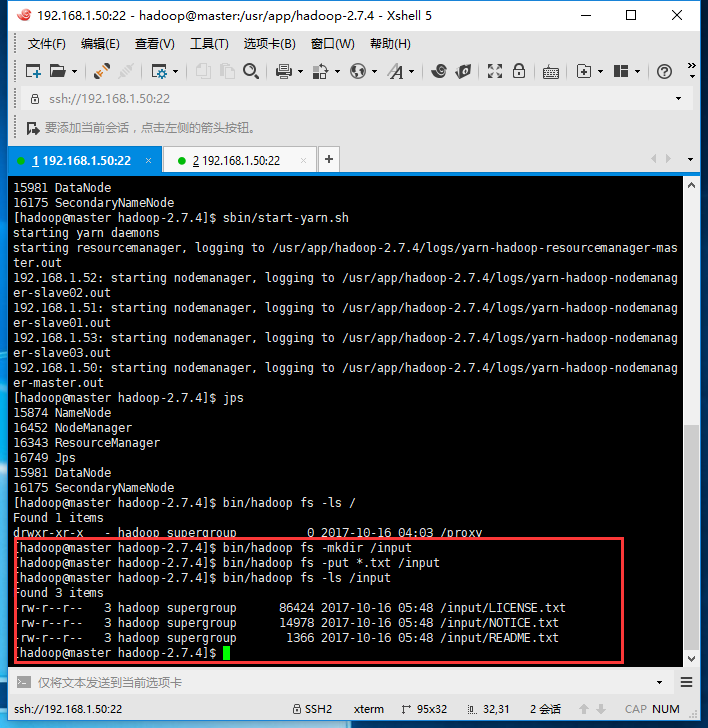

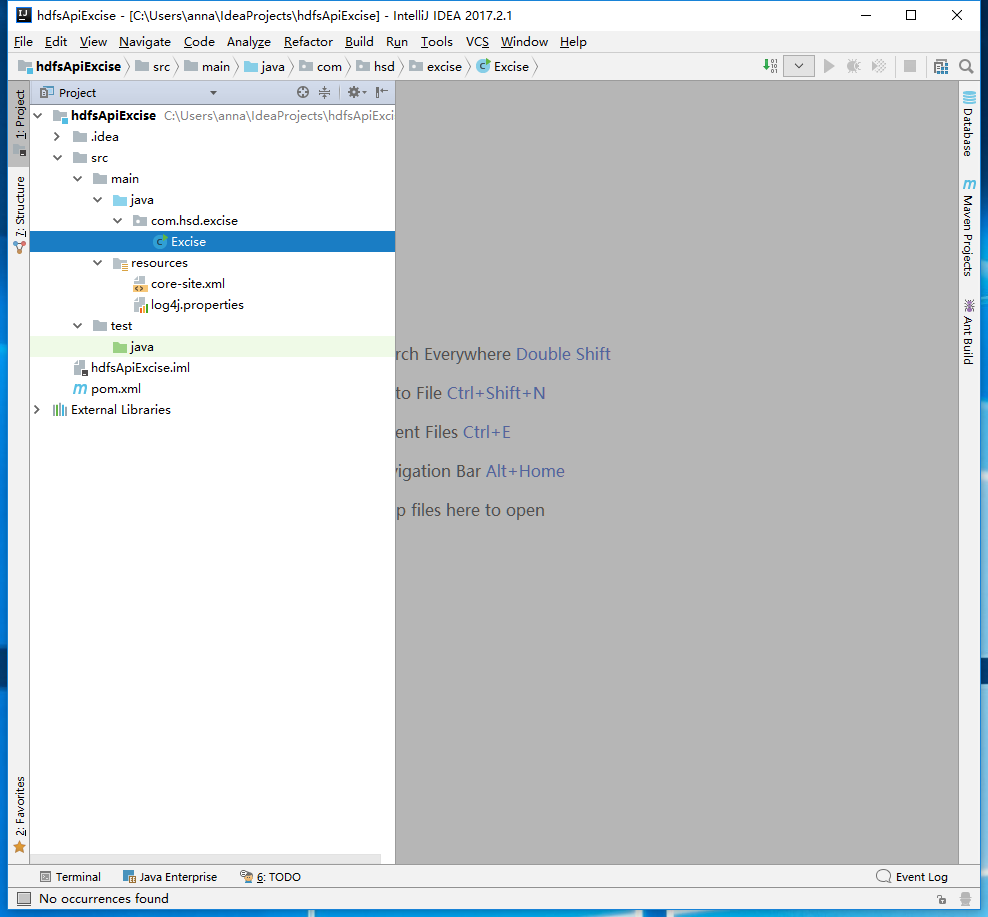

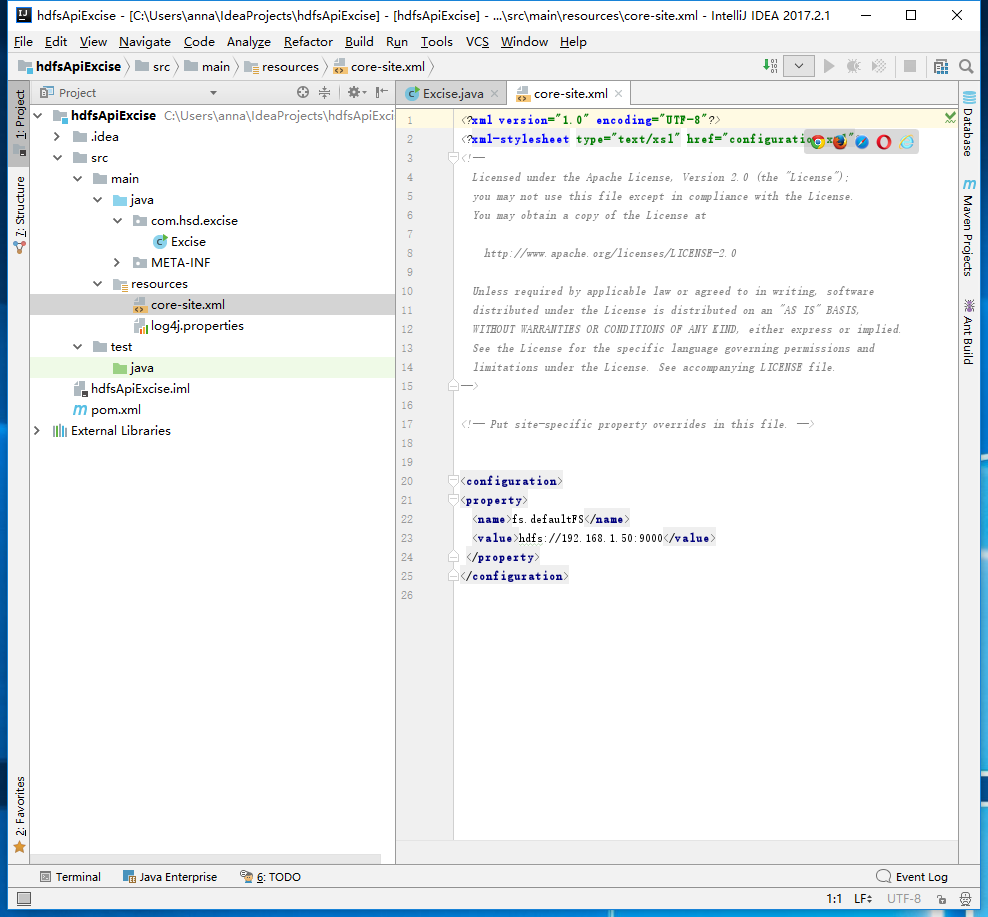

Copy log4j.properties and core-site.xml from the Hadoop configuration directory into the project's resources directory. The core-site.xml used here contains:

    <configuration>
        <property>
            <name>fs.defaultFS</name>
            <value>hdfs://192.168.1.50:9000</value>
        </property>
    </configuration>
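
To make the shape of core-site.xml concrete, here is a minimal sketch that looks up `fs.defaultFS` in XML of the same structure using only the JDK's DOM parser. The class `CoreSiteReader` and its method `readProperty` are hypothetical names invented for this illustration; they are not part of Hadoop. In the real project, Hadoop's own `Configuration` class reads core-site.xml from the classpath, which is why the file is copied into the resources directory.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper (not a Hadoop class): finds one property in
// core-site.xml-style XML with the JDK's built-in DOM parser.
class CoreSiteReader {

    // Returns the <value> of the <property> whose <name> equals the given
    // name, or null if no such property exists.
    static String readProperty(String xml, String name) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList properties = doc.getElementsByTagName("property");
            for (int i = 0; i < properties.getLength(); i++) {
                Element property = (Element) properties.item(i);
                String propName = property.getElementsByTagName("name")
                        .item(0).getTextContent().trim();
                if (propName.equals(name)) {
                    return property.getElementsByTagName("value")
                            .item(0).getTextContent().trim();
                }
            }
            return null;
        } catch (Exception e) {
            // Wrap parser errors so callers need no checked exceptions.
            throw new IllegalStateException("Could not parse configuration XML", e);
        }
    }

    public static void main(String[] args) {
        // Same content as the core-site.xml in this article.
        String xml = "<configuration>"
                + "<property>"
                + "<name>fs.defaultFS</name>"
                + "<value>hdfs://192.168.1.50:9000</value>"
                + "</property>"
                + "</configuration>";
        System.out.println(readProperty(xml, "fs.defaultFS"));
    }
}
```

Run against the file content above, the sketch prints the NameNode address `hdfs://192.168.1.50:9000`, the same address the Java code later in this article connects to.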

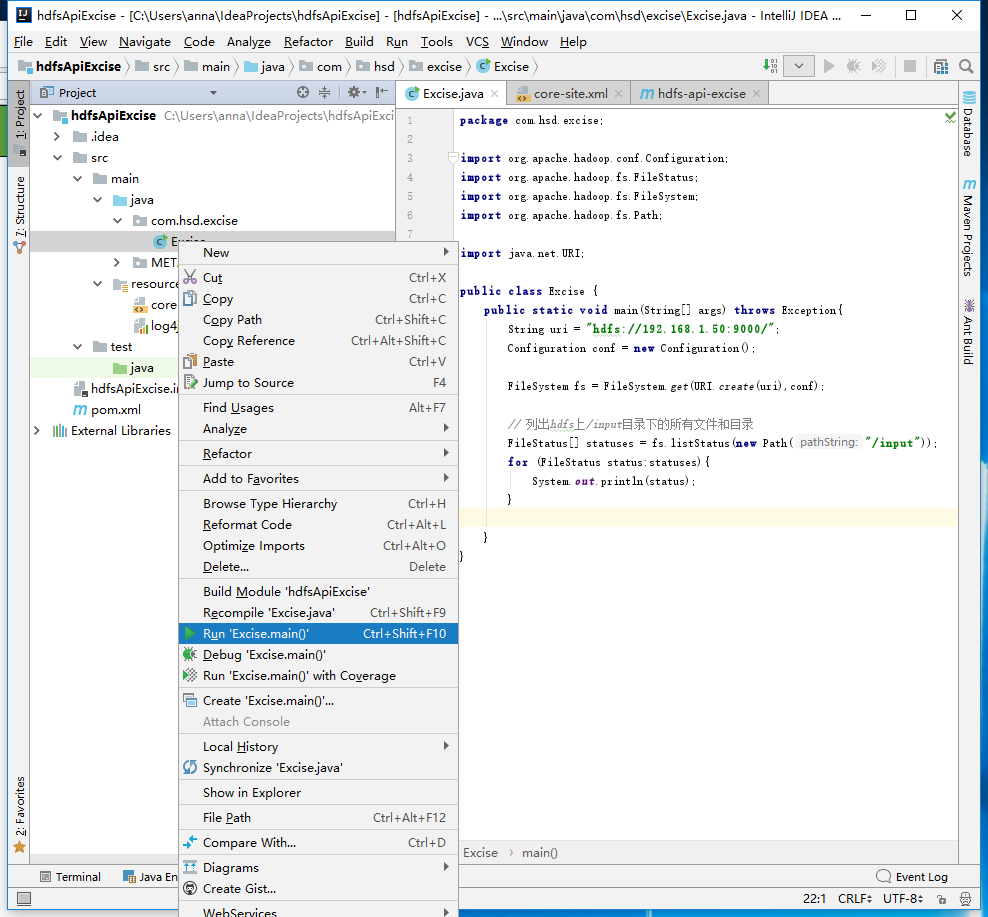

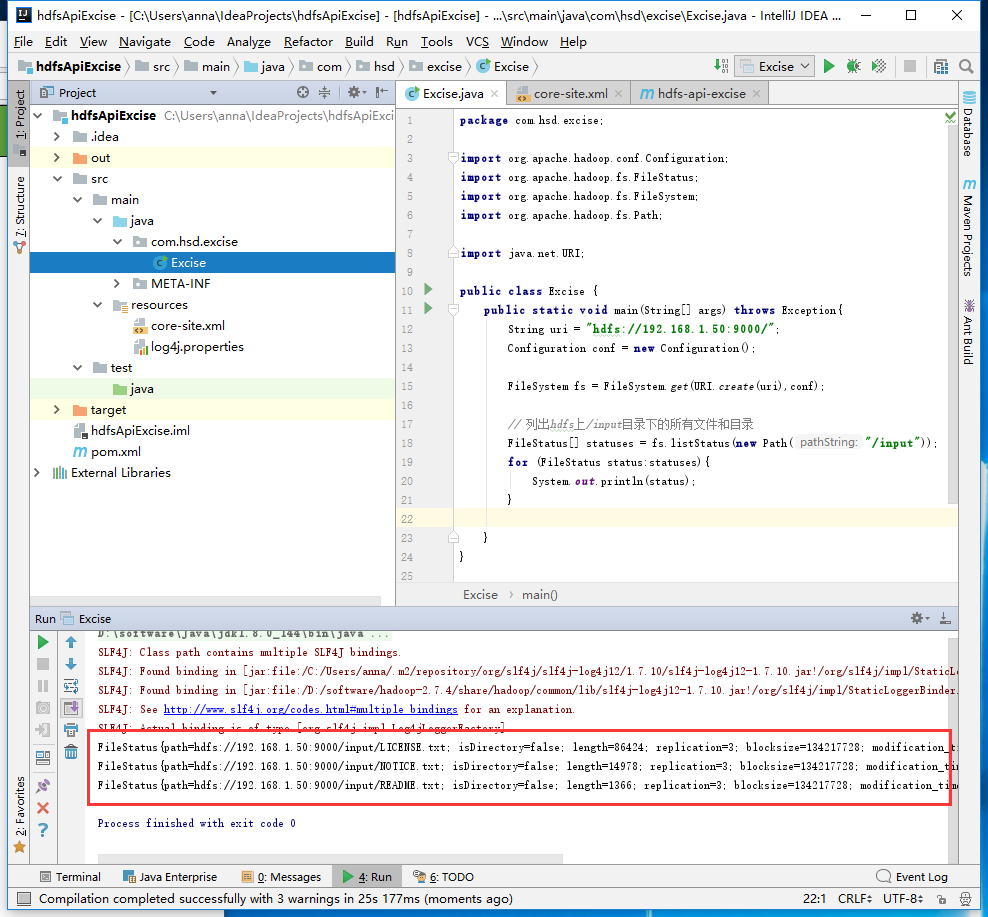

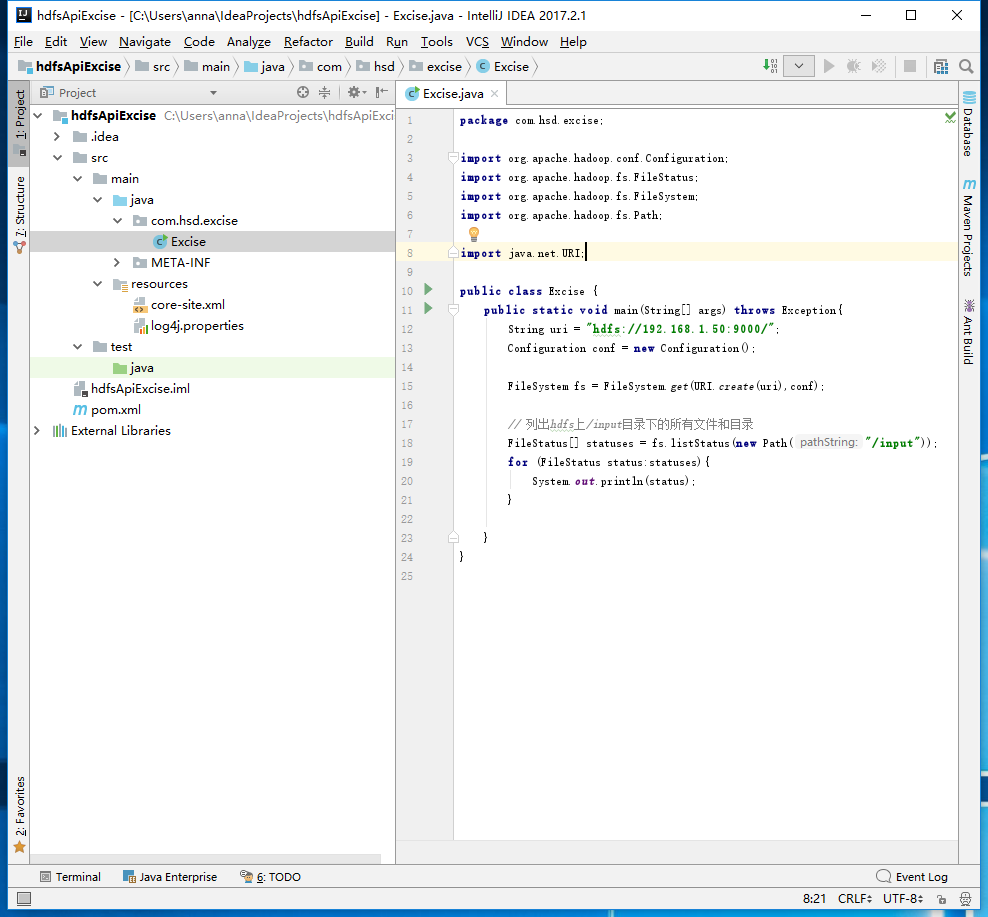

Create the package com.hsd.excise and the Java class file Excise

    package com.hsd.excise;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileStatus;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    import java.net.URI;

    public class Excise {

        public static void main(String[] args) throws Exception {
            // Address of the NameNode; matches fs.defaultFS in core-site.xml
            String uri = "hdfs://192.168.1.50:9000/";
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(URI.create(uri), conf);

            // List all files under the /input directory on HDFS
            FileStatus[] statuses = fs.listStatus(new Path("/input"));
            for (FileStatus status : statuses) {
                System.out.println(status);
            }
        }
    }
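
`FileSystem.get(URI, Configuration)` picks the filesystem by the URI's scheme and authority, so it helps to see how the string used in the class above splits into parts. The sketch below uses only `java.net.URI` from the JDK; `HdfsUriParts` and `describe` are hypothetical names chosen for this illustration.

```java
import java.net.URI;

// Illustration only: shows the parts of the HDFS URI used in Excise.
class HdfsUriParts {

    // Formats the scheme, host, and port of a filesystem URI on one line.
    static String describe(String uri) {
        URI parsed = URI.create(uri);
        return parsed.getScheme() + " | " + parsed.getHost() + " | " + parsed.getPort();
    }

    public static void main(String[] args) {
        // prints "hdfs | 192.168.1.50 | 9000"
        System.out.println(describe("hdfs://192.168.1.50:9000/"));
    }
}
```

The scheme `hdfs` selects the HDFS client, and the host and port identify the NameNode, so they should agree with the `fs.defaultFS` value in core-site.xml.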

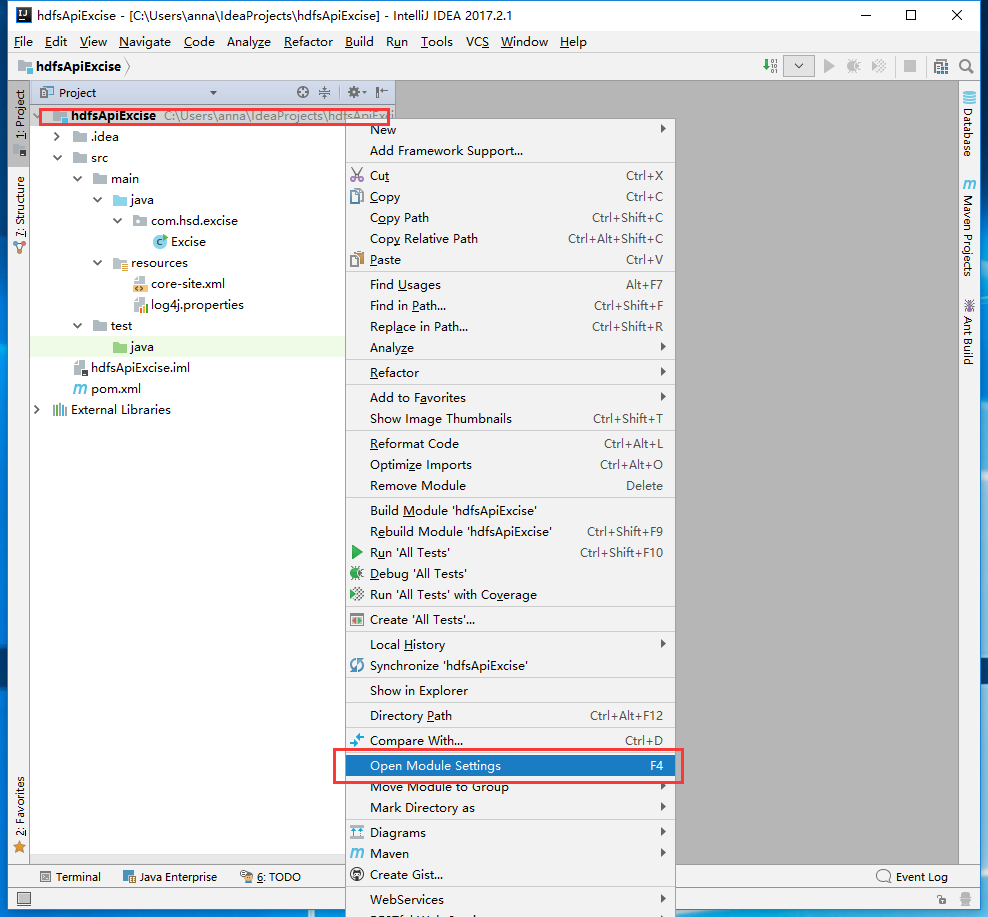

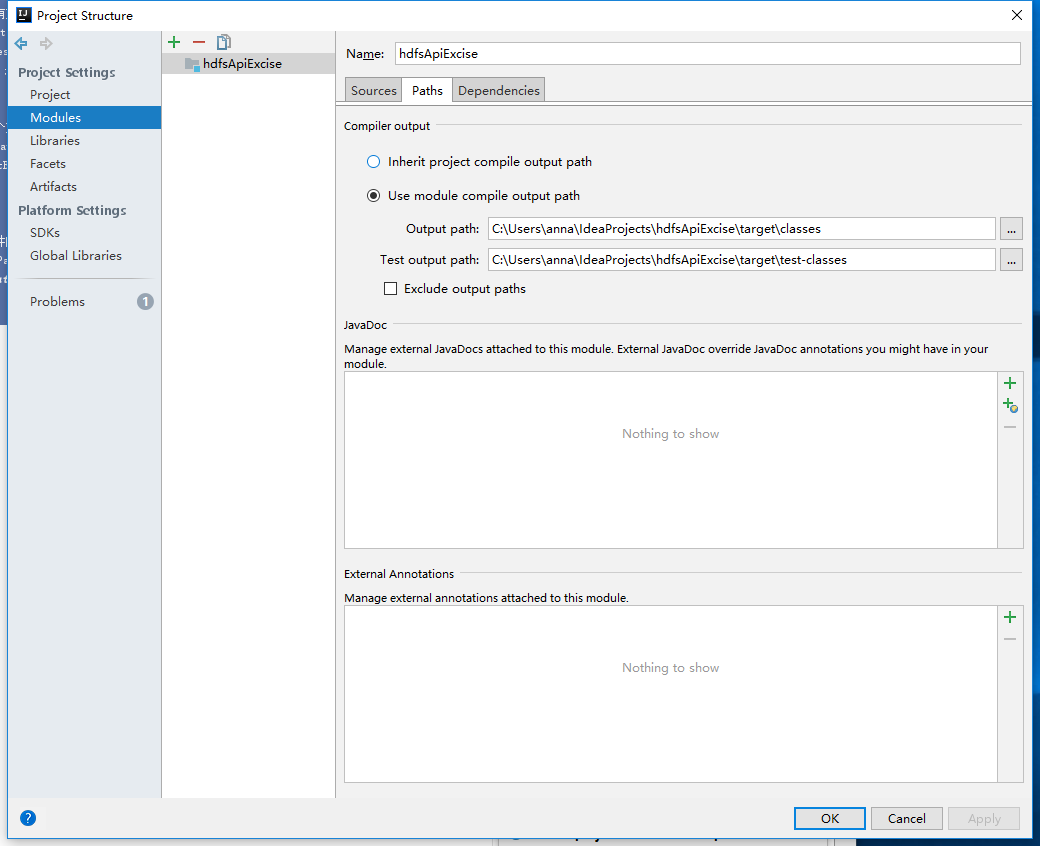

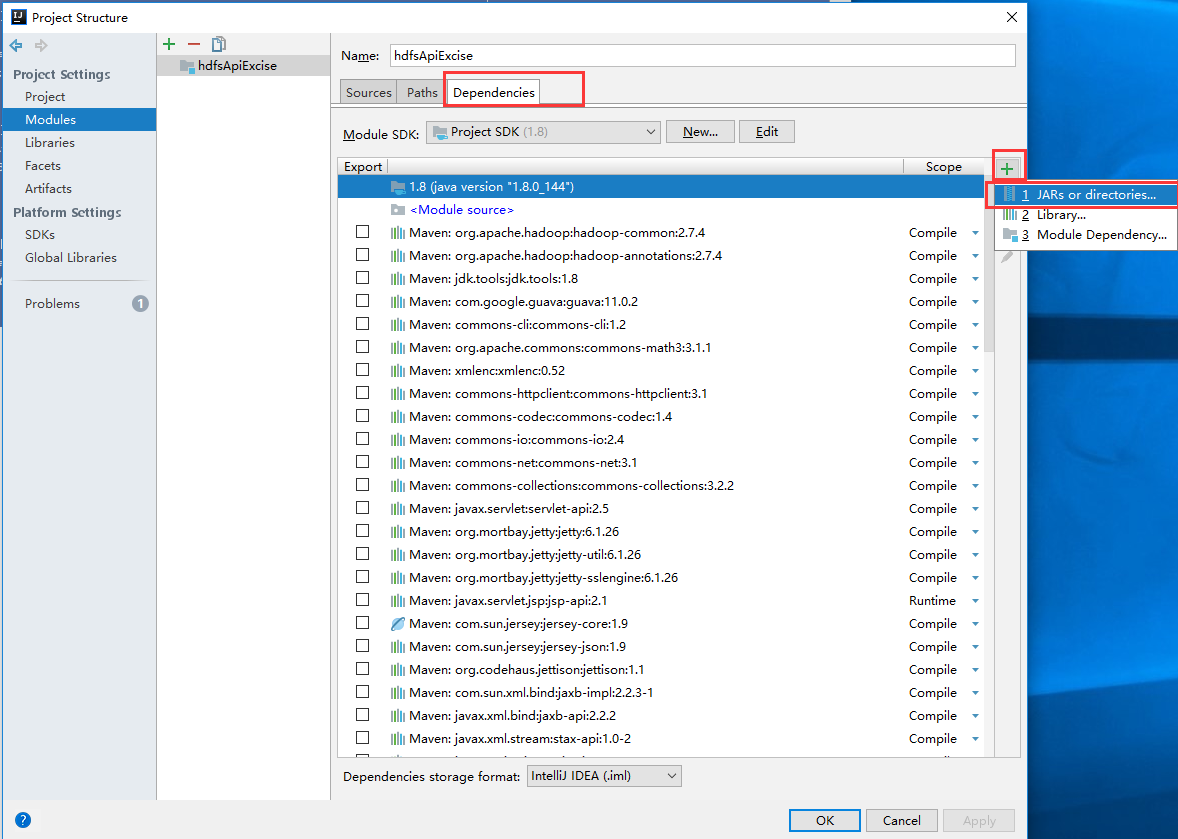

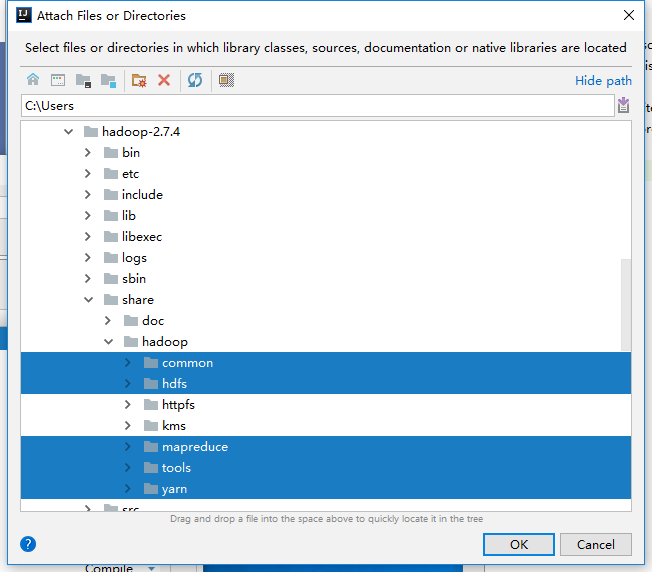

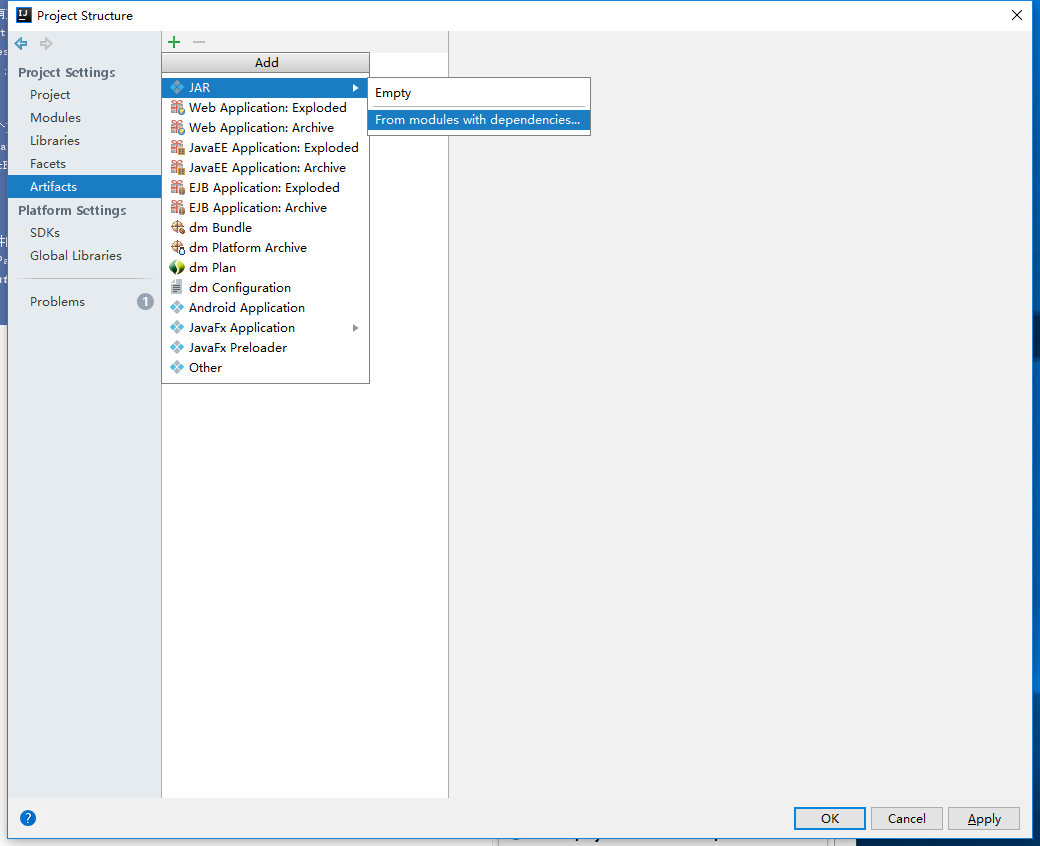

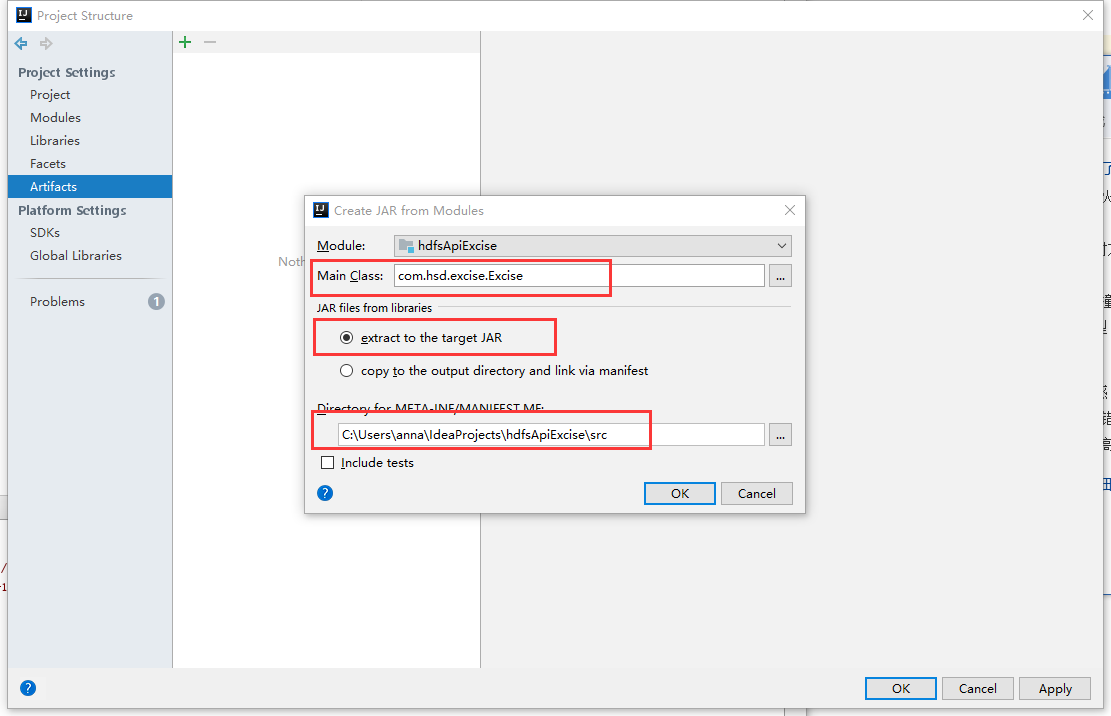

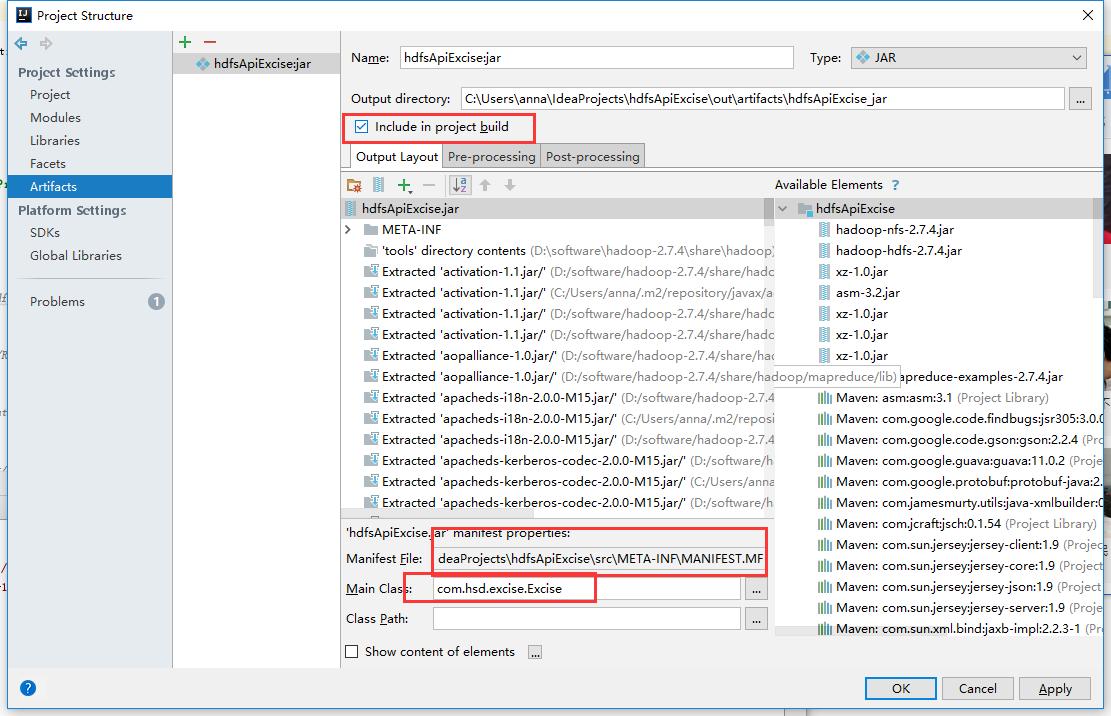

Right-click the project name and choose Open Module Settings