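I. What is a crawler?

A crawler is a program that sends requests to a website, fetches the resources it returns, and then analyzes them to extract useful data.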

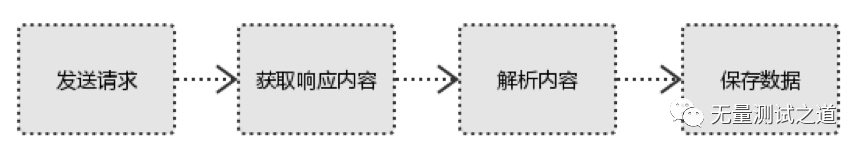

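The steps of a crawler:

1. Send the request

Use an HTTP library to send a request (a Request) to the target site.

A Request contains request headers, a request body, and so on.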
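As a minimal sketch of what a Request carries, the snippet below builds a GET and a POST request without sending them. It uses the standard library's `urllib.request` instead of `requests` so that it runs without extra packages; the `example.com` URLs, the `demo-crawler/0.1` User-Agent, and the helper names `build_get` and `build_post` are assumptions made for illustration.

```python
import urllib.request
from urllib.parse import urlencode


def build_get(url, user_agent):
    """Build (but do not send) a GET request with a custom User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def build_post(url, form):
    """Build (but do not send) a POST request whose body is a URL-encoded form."""
    body = urlencode(form).encode("utf-8")
    headers = {"Content-Type": "application/x-www-form-urlencoded"}
    return urllib.request.Request(url, data=body, headers=headers)


# Hypothetical URLs and values, used only to inspect the request objects.
get_req = build_get("https://example.com/list", "demo-crawler/0.1")
post_req = build_post("https://example.com/search", {"q": "rent"})

print(get_req.get_method(), get_req.full_url)  # method and target URL
print(get_req.header_items())                  # request headers
print(post_req.get_method(), post_req.data)    # a request with a body is sent as POST
```

Passing either object to `urllib.request.urlopen` would actually send it; that call is left out here so the sketch has no network dependency.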

2. Get the response content

If the server responds normally, you get back a Response.

The Response body may be HTML, JSON, images, video, and so on.

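3. Parse the content

Parsing HTML: regular expressions (the `re` module) or third-party parsing libraries such as BeautifulSoup and pyquery.

Parsing JSON: the `json` module.

Handling binary data: write it to a file opened in `wb` mode.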
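The three cases above can be sketched with the standard library alone. The HTML fragment, the JSON string, and the file name `demo.png` are made-up sample inputs, and the regular expression only fits this simple fragment; for real pages a parser such as BeautifulSoup is usually more robust.

```python
import json
import os
import re
import tempfile

# 1) HTML: pull the link texts out of a small, made-up fragment with a regex.
html = (
    '<div class="item"><a href="/a">Room A</a></div>'
    '<div class="item"><a href="/b">Room B</a></div>'
)
LINK_TEXT = re.compile(r'<a href="[^"]*">([^<]+)</a>')
titles = LINK_TEXT.findall(html)

# 2) JSON: decode a JSON string into Python objects.
data = json.loads('{"city": "bj", "count": 2}')

# 3) Binary: write raw bytes to a file opened in "wb" mode.
image_bytes = b"\x89PNG\r\n\x1a\n"  # just the PNG signature, standing in for a real image
path = os.path.join(tempfile.mkdtemp(), "demo.png")
with open(path, "wb") as f:
    f.write(image_bytes)

print(titles, data["count"], os.path.getsize(path))
```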

4. Save the data

Store it in a database (MySQL, MongoDB, Redis) or in files.
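A rough sketch of the save step follows. It swaps in the standard library's `sqlite3` with an in-memory database as a stand-in for MySQL so the example is self-contained; the table layout and the sample rows are assumptions. The insert uses `?` placeholders, which lets the driver handle quoting, so a value containing an apostrophe does not break the statement.

```python
import sqlite3

# Hypothetical rows: (community name, layout, orientation, area, price).
rows = [
    ("Demo Garden", "2室1厅", "南", "58㎡", "3000"),
    ("O'Neil Court", "1室1厅", "北", "40㎡", "2500"),
]

conn = sqlite3.connect(":memory:")  # in-memory database, nothing is written to disk
conn.execute(
    "CREATE TABLE information ("
    "community_name TEXT, size TEXT, forward TEXT, area TEXT, price TEXT)"
)

# Placeholders keep the values separate from the SQL text.
conn.executemany("INSERT INTO information VALUES (?, ?, ?, ?, ?)", rows)
conn.commit()

saved = conn.execute(
    "SELECT community_name, price FROM information ORDER BY price"
).fetchall()
conn.close()

print(saved)
```

MySQL drivers such as PyMySQL follow the same pattern but use `%s` as the placeholder.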

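II. The data for this crawl comes from Lianjia. I am planning to move and wanted to look at recent Lianjia rental listings, so I scraped them directly. The code is as follows: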

    import re

    import requests
    from bs4 import BeautifulSoup as bs
    from requests.exceptions import RequestException

    from DBUtils import DBUtils  # database helper; see the companion MySQL article

    BASE_URL = "https://bj.lianjia.com/ditiezufang/li46461179/rt200600000001l1/"

    # Matches the area text, e.g. digits followed by the ㎡ sign.
    AREA_PATTERN = re.compile(r"\d+㎡")

    # Session cookie captured from the browser, split across lines for readability.
    COOKIE = (
        "lianjia_uuid=fa1c2e0b-792f-4a41-b48e-78531bf89136; _smt_uid=5cfdde9d.cbae95b; "
        "sensorsdata2015jssdkcross=%7B%22distinct_id%22%3A%2216b3fad98fc1d1-088a8824f73cc4-e353165-2710825-16b3fad98fd354%22%2C%22%24device_id%22%3A%2216b3fad98fc1d1-088a8824f73cc4-e353165-2710825-16b3fad98fd354%22%2C%22props%22%3A%7B%22%24latest_traffic_source_type%22%3A%22%E8%87%AA%E7%84%B6%E6%90%9C%E7%B4%A2%E6%B5%81%E9%87%8F%22%2C%22%24latest_referrer%22%3A%22https%3A%2F%2Fwww.baidu.com%2Flink%22%2C%22%24latest_referrer_host%22%3A%22www.baidu.com%22%2C%22%24latest_search_keyword%22%3A%22%E6%9C%AA%E5%8F%96%E5%88%B0%E5%80%BC%22%7D%7D; "
        "_ga=GA1.2.1891741852.1560141471; UM_distinctid=17167f490cb566-06c7739db4a69e-4313f6b-100200-17167f490cca1e; "
        "Hm_lvt_9152f8221cb6243a53c83b956842be8a=1588171341; lianjia_token=2.003c978d834648dbbc2d3aa4b226145cd7; "
        "select_city=110000; lianjia_ssid=fc20dfa1-6afb-4407-9552-2c4e7aeb73ce; "
        "CNZZDATA1253477573=1893541433-1588166864-https%253A%252F%252Fwww.baidu.com%252F%7C1591157903; "
        "CNZZDATA1254525948=1166058117-1588166331-https%253A%252F%252Fwww.baidu.com%252F%7C1591154084; "
        "CNZZDATA1255633284=1721522838-1588166351-https%253A%252F%252Fwww.baidu.com%252F%7C1591158264; "
        "CNZZDATA1255604082=135728258-1588168974-https%253A%252F%252Fwww.baidu.com%252F%7C1591153053; "
        "_jzqa=1.2934504416856578000.1560141469.1588171337.1591158227.3; _jzqc=1; _jzqckmp=1; "
        "_jzqy=1.1588171337.1591158227.1.jzqsr=baidu.-; _qzjc=1; _gid=GA1.2.1223269239.1591158230; "
        "_qzja=1.1313673973.1560141469311.1588171337488.1591158227148.1591158227148.1591158233268.0.0.0.7.3; _qzjto=2.1.0; "
        "srcid=eyJ0Ijoie1wiZGF0YVwiOlwiMThmMWQwZTY0MGNiNTliNTI5OTNlNGYxZWY0ZjRmMmM3ODVhMTU3ODNhNjMwODhlZjlhMGM2MTJlMDFiY2JiN2I4OTBkODA0M2Q0YTM0YzIyMWE0YzIwOTBkODczNTQwNzM0NTc1NjBlM2EyYTc3NmYwOWQ3OWQ4OWJjM2UwYzAwY2RjMTk3MTMwNzYwZDRkZTc2ODY0OTY0NTA5YmIxOWIzZWQyMWUzZDE3ZjhmOGJmMGNmOGYyMTMxZTI1MzIxMGI4NzhjNjYwOGUyNjc3ZTgxZjA2YzUzYzE4ZjJmODhmMTA1ZGVhOTMyZTRlOTcxNmNiNzllMWViMThmNjNkZTJiMTcyN2E0YzlkODMwZWIzNmVhZTQ4ZWExY2QwNjZmZWEzNjcxMjBmYWRmYjgxMDY1ZDlkYTFhMDZiOGIwMjI2NTg1ZGU4NTQyODBjODFmYTUyYzI0NDg5MjRlNWI0N1wiLFwia2V5X2lkXCI6XCIxXCIsXCJzaWduXCI6XCI2Yzk3M2U5M1wifSIsInIiOiJodHRwczovL2JqLmxpYW5qaWEuY29tL2RpdGllenVmYW5nL2xpNDY0NjExNzkvcnQyMDA2MDAwMDAwMDFsMSIsIm9zIjoid2ViIiwidiI6IjAuMSJ9"
    )


    def main(response):
        """Extract the listing fields from one page and insert them into the database."""
        html = bs(response.text, 'lxml')
        for data in html.find_all(name='div', attrs={"class": "content__list--item--main"}):
            try:
                print(data)
                # The title text holds three space-separated parts: name, layout, orientation.
                Community_name = data.find(name="a", target="_blank").get_text(strip=True)
                parts = str(Community_name).split(" ")
                name, sizes, forward = parts[0], parts[1], parts[2]
                flood = data.find(name="span", class_="hide").get_text(strip=True)
                flood = str(flood).replace(" ", "").replace("/", "")
                area = str(data.find(string=AREA_PATTERN)).replace(" ", "")
                maintance = str(data.find(name="span", class_="content__list--item--time oneline").get_text(strip=True))
                price = str(data.find(name="span", class_="content__list--item-price").get_text(strip=True))
                print(name, sizes, forward, flood, maintance, price)
                # The values are concatenated into the SQL text without escaping.
                values = "','".join([name, sizes, forward, area, flood, maintance, price])
                insertsql = ("INSERT INTO test_log.`information`"
                             "(Community_name,size,forward,area,flood,maintance,price) VALUES "
                             "('" + values + "');")
                insert_sql(insertsql)
            except Exception as exc:
                print("have an error!!!", exc)


    def insert_sql(sql):
        """Run one INSERT statement through the database helper."""
        dbconn = DBUtils("test6")
        dbconn.dbExcute(sql)


    def get_one_page(urls):
        """Fetch one listing page and hand the response to main()."""
        try:
            headers = {"Host": "bj.lianjia.com",
                       "Connection": "keep-alive",
                       "Cache-Control": "max-age=0",
                       "Upgrade-Insecure-Requests": "1",
                       "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/83.0.4103.61 Safari/537.36",
                       "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9",
                       "Sec-Fetch-Site": "none",
                       "Sec-Fetch-Mode": "navigate",
                       "Sec-Fetch-User": "?1",
                       "Sec-Fetch-Dest": "document",
                       "Accept-Encoding": "gzip, deflate, br",
                       "Accept-Language": "zh-CN,zh;q=0.9",
                       "Cookie": COOKIE}
            response = requests.get(url=urls, headers=headers, timeout=10)
            main(response)
        except RequestException:
            return None


    if __name__ == "__main__":
        for i in range(64):  # walk through the result pages
            if i == 0:
                urls = BASE_URL
            else:
                # Replaces "rt" with "pg<i>"; verify against the site's current pagination URLs.
                urls = BASE_URL.replace("rt", "pg" + str(i))
            get_one_page(urls)
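The field handling in the code above can be tried on its own, without touching the network or a database. The sketch below assumes that the listing title consists of three space-separated parts (community name, layout, orientation) and that the area is written as digits followed by `㎡`; the sample strings and the helper names `parse_title` and `find_area` are made up for illustration.

```python
import re

# Digits (optionally with a decimal part) followed by the ㎡ sign.
AREA_PATTERN = re.compile(r"\d+(?:\.\d+)?㎡")


def parse_title(title):
    """Split a 'name layout orientation' title into its three fields."""
    parts = title.split()
    if len(parts) < 3:
        raise ValueError(f"unexpected title format: {title!r}")
    return parts[0], parts[1], parts[2]


def find_area(text):
    """Return the first area string found in the text, or None if there is none."""
    match = AREA_PATTERN.search(text)
    return match.group(0) if match else None


# Hypothetical sample strings shaped like the listing text.
name, sizes, forward = parse_title("整租·示例小区 2室1厅 南")
area = find_area("58㎡ / 南 / 2室1厅")

print(name, sizes, forward, area)
```

Checking the number of parts before indexing turns an unexpected title into an explicit error instead of an `IndexError` hidden by a broad `except`.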

Note: this code builds on the earlier article 《Python之mysql实战》 (hands-on MySQL with Python), so read the two together.

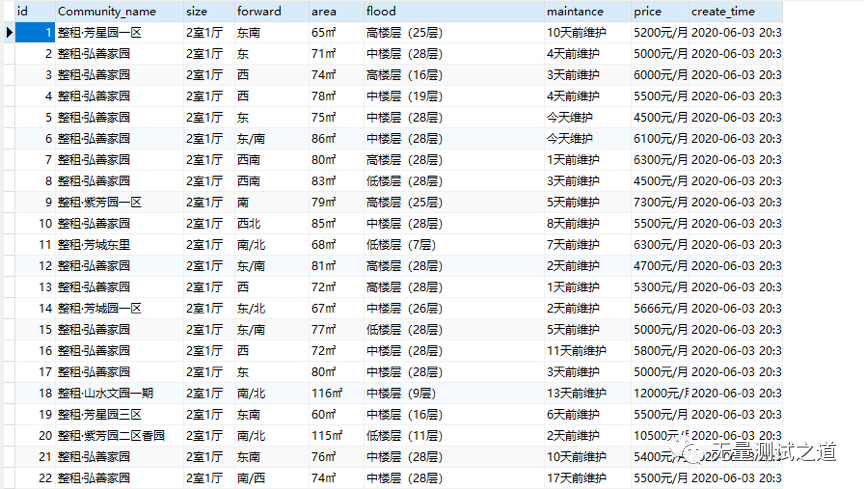

III. The scraped data after it was written to the database

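Conclusion: crawling is one of the important ways to obtain data, and it is worth mastering several ways of collecting it. Machine learning learns from data, so the data has to be prepared before any machine learning can happen. Keep at it, everyone!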

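Note: my personal WeChat official account is now live. It is dedicated to sharing testing techniques, including big-data testing, functional testing, test development, API automation, test operations, and UI automation testing. Search WeChat for the official account "无量测试之道" to follow it, and let's grow together.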