Download Spark
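
The download itself can be scripted. The sketch below builds the URL for the tarball used in the next step. The Apache archive host is an assumption (any mirror carrying the same file will do), and `download_spark` is a hypothetical helper name.

```shell
# Version and package name match the tarball extracted in the next step.
SPARK_VERSION="2.2.0"
SPARK_PACKAGE="spark-${SPARK_VERSION}-bin-without-hadoop"
# Assumption: download from the Apache archive; substitute a closer mirror if preferred.
SPARK_URL="https://archive.apache.org/dist/spark/spark-${SPARK_VERSION}/${SPARK_PACKAGE}.tgz"

# Hypothetical helper: fetch the tarball into the current directory.
download_spark() {
    # -c resumes a partially downloaded file instead of starting over
    wget -c "$SPARK_URL"
}

echo "$SPARK_URL"
```

Run `download_spark` to fetch the file; the block itself only prints the URL it would use.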

Extract the archive and move it to the /software directory:

tar -zxvf spark-2.2.0-bin-without-hadoop.tgz

mv spark-2.2.0-bin-without-hadoop /software/spark

Add the following to /etc/profile:

export SPARK_HOME=/software/spark

export PATH=$SPARK_HOME/sbin:$SPARK_HOME/bin:$PATH

Save the file, then reload /etc/profile: source /etc/profile
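
After reloading the profile, it may be worth confirming that the variables took effect in the current shell. A minimal sketch, where `check_spark_env` is a hypothetical helper name:

```shell
# Hypothetical helper: verify that SPARK_HOME is set and spark-submit is on PATH.
check_spark_env() {
    if [ -z "$SPARK_HOME" ]; then
        echo "SPARK_HOME is not set" >&2
        return 1
    fi
    if ! command -v spark-submit >/dev/null 2>&1; then
        echo "spark-submit not found on PATH" >&2
        return 1
    fi
    echo "SPARK_HOME=$SPARK_HOME"
    echo "spark-submit: $(command -v spark-submit)"
}
```

Calling `check_spark_env` prints both locations on success and returns a non-zero status otherwise.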

Copy spark-env.sh.template and rename the copy to spark-env.sh:

cd $SPARK_HOME/conf

cp spark-env.sh.template spark-env.sh

Add the following to spark-env.sh:

export JAVA_HOME=/software/jdk

export SCALA_HOME=/software/scala

export HADOOP_HOME=/software/hadoop

export SPARK_MASTER_HOST=localhost

export SPARK_WORKER_MEMORY=1G

export SPARK_DIST_CLASSPATH=$($HADOOP_HOME/bin/hadoop classpath)
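
The last line matters because the "without-hadoop" build does not bundle the Hadoop jars; SPARK_DIST_CLASSPATH tells Spark where to find them. If the `hadoop` binary is missing, the command substitution silently yields an empty classpath, so a guarded version can help when debugging. A minimal sketch, where `resolve_hadoop_classpath` is a hypothetical helper name and the fallback path is the HADOOP_HOME used in this guide:

```shell
# Hypothetical helper: print the Hadoop classpath, or fail loudly if hadoop is missing.
resolve_hadoop_classpath() {
    # Fall back to this guide's install location when HADOOP_HOME is unset.
    hadoop_bin="${HADOOP_HOME:-/software/hadoop}/bin/hadoop"
    if [ ! -x "$hadoop_bin" ]; then
        echo "hadoop is not executable at $hadoop_bin" >&2
        return 1
    fi
    "$hadoop_bin" classpath
}
```

In spark-env.sh this could be used as `export SPARK_DIST_CLASSPATH=$(resolve_hadoop_classpath)`.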

Start

$SPARK_HOME/sbin/start-all.sh

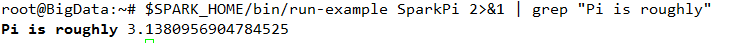
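
One way to confirm that the standalone daemons came up is to look for the `Master` and `Worker` processes in the output of the JDK's `jps` tool. A minimal sketch, where `has_spark_daemons` is a hypothetical helper that reads `jps` output on standard input:

```shell
# Hypothetical helper: succeed only if both Master and Worker appear in jps output.
# Each jps line has the form "<pid> <main class name>".
has_spark_daemons() {
    awk '$2 == "Master" { m = 1 }
         $2 == "Worker" { w = 1 }
         END { exit !(m && w) }'
}
```

For example, `jps | has_spark_daemons && echo "Spark standalone is running"`.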

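Test whether Spark was installed correctly. run-example prints a lot of log output, so pipe it through grep to keep only the result line:

$SPARK_HOME/bin/run-example SparkPi 2>&1 | grep "Pi is roughly"

The filtered output: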

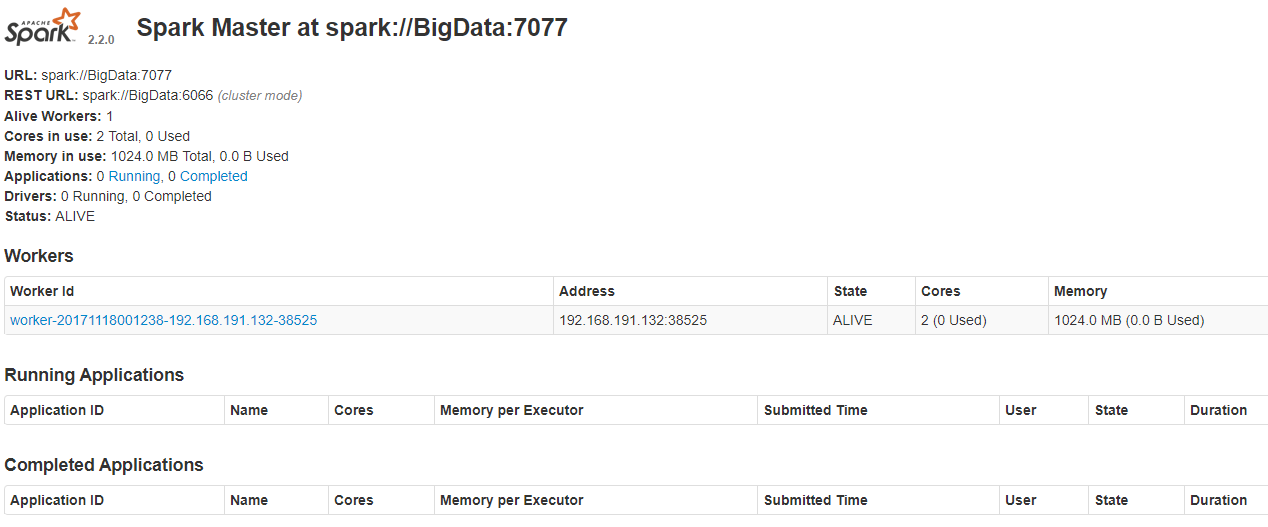
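
If the check is to be scripted, the numeric estimate can be pulled out of the result line. A minimal sketch, where `extract_pi` is a hypothetical helper that reads the example's output on standard input:

```shell
# Hypothetical helper: print only the number that follows "Pi is roughly".
extract_pi() {
    sed -n 's/.*Pi is roughly \([0-9.]*\).*/\1/p'
}
```

For example, `$SPARK_HOME/bin/run-example SparkPi 2>&1 | extract_pi` prints just the estimate, which varies slightly from run to run.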

Check the web UI by opening http://localhost:8080 in a browser.
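
On a machine without a browser, the same check can be made from the command line. A minimal sketch using `curl`, where `webui_is_up` is a hypothetical helper and the URL is the default master web UI address from above:

```shell
# Default master web UI address used in this guide; override it if the port was changed.
SPARK_WEBUI_URL="${SPARK_WEBUI_URL:-http://localhost:8080}"

# Hypothetical helper: succeed if the web UI answers with HTTP 200 within 5 seconds.
webui_is_up() {
    code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$SPARK_WEBUI_URL")
    [ "$code" = "200" ]
}
```

For example, `webui_is_up && echo "web UI reachable"`.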

Stop

$SPARK_HOME/sbin/stop-all.sh

Installation complete.